Training-Free Group Relative Policy Optimization

Youtu-Agent Team$^*$

Abstract

Recent advances in Large Language Model (LLM) agents have demonstrated their promising general capabilities. However, their performance in specialized real-world domains often degrades due to challenges in effectively integrating external tools and specific prompting strategies. While methods like agentic reinforcement learning have been proposed to address this, they typically rely on costly parameter updates, for example, through a process that uses Supervised Fine-Tuning (SFT) followed by a Reinforcement Learning (RL) phase with Group Relative Policy Optimization (GRPO) to alter the output distribution. We argue instead that LLMs can achieve a similar effect on the output distribution by learning experiential knowledge as a token prior, a far more lightweight approach that not only addresses practical data scarcity but also avoids the common issue of overfitting. To this end, we propose Training-Free Group Relative Policy Optimization (Training-Free GRPO), a cost-effective solution that enhances LLM agent performance without any parameter updates. Our method leverages a group relative semantic advantage, rather than a numerical one, within each group of rollouts, iteratively distilling high-quality experiential knowledge during multi-epoch learning on minimal ground-truth data. Such knowledge serves as the learned token prior, which is seamlessly integrated during LLM API calls to guide model behavior. Experiments on mathematical reasoning and web searching tasks demonstrate that Training-Free GRPO, when applied to DeepSeek-V3.1-Terminus, significantly improves out-of-domain performance. With just a few dozen training samples, Training-Free GRPO outperforms fine-tuned small LLMs while using only marginal training data and cost.

Date: October 9, 2025

Correspondence: [email protected]

Code: https://github.com/TencentCloudADP/youtu-agent/tree/training_free_GRPO

$^*$Full author list in contributions.

Executive Summary: Large language models (LLMs) show great promise as agents that can handle complex tasks like problem-solving and web research by integrating tools such as calculators or search engines. Yet, in specialized real-world settings, these agents often fall short because they lack familiarity with domain-specific tools and prompting techniques. Traditional solutions, like reinforcement learning methods that fine-tune model parameters, demand massive data and computing power, leading to high costs, overfitting with limited data, and reduced ability to generalize across tasks. This creates a dilemma: powerful general-purpose LLMs underperform in niches, while fine-tuning them is impractical for most organizations, especially with data scarcity and environmental concerns from energy use.

This paper introduces Training-Free Group Relative Policy Optimization (Training-Free GRPO), a method to boost LLM agent performance in specialized domains without any parameter updates or heavy training. The goal is to mimic the benefits of standard reinforcement learning—shifting outputs toward better strategies—through lightweight context adjustments, using just dozens of examples to make large, frozen LLMs more effective.

The approach draws from Group Relative Policy Optimization, a reinforcement technique, but adapts it for inference only. For each task in a small training set, the system generates multiple possible responses (called rollouts) using the base LLM, scores them with a simple reward system, and has the LLM itself analyze differences to extract key insights in natural language—termed semantic advantages. These insights build an evolving "experiential knowledge" library, which is then added to prompts for future queries, guiding the model like a learned prior without changing its core. Experiments used strong base models like DeepSeek-V3.1-Terminus on math reasoning tasks from the AIME 2024 and 2025 benchmarks (100 training examples from a math dataset) and web searching tasks from the WebWalkerQA benchmark (100 examples from a web interaction dataset). The process ran for three short epochs with groups of 3 to 5 rollouts each, relying on official LLM API pricing for costs.

Key results highlight strong gains with minimal effort. On math reasoning, Training-Free GRPO lifted performance on unseen AIME problems from 80% to 82.7% success rate on the 2024 set and from 67.9% to 73.3% on the 2025 set, using just 100 training samples—outpacing fine-tuned smaller models (under 32 billion parameters) that needed thousands of examples and cost over $10,000. It also cut unnecessary tool calls by encouraging efficient strategies. For web searching, success rates rose from 63.2% to 67.8% on complex queries requiring multi-step navigation. Ablation tests confirmed the value of comparing multiple rollouts and using ground-truth rewards: skipping these dropped gains by 2-5 percentage points. Across domains, the method preserved broad capabilities, unlike fine-tuned models that excel in one area but fail in others, such as a math-tuned agent scoring only 18% on web tasks.

These findings mean organizations can adapt powerful LLMs to specialized needs—like math tools or web agents—at a fraction of the cost and without deploying custom models, reducing risks of overfitting and maintenance burdens. It resolves the cost-performance trade-off by leveraging scalable API-based LLMs, cutting training expenses by over 500 times (to about $18) and enabling pay-per-use inference that suits variable demand, potentially lowering overall system complexity and environmental impact. Unexpectedly, it even works without ground truths in some cases, relying on the LLM's self-comparison, broadening its use where labeled data is hard to get.

Next steps should include piloting Training-Free GRPO in real applications, starting with low-volume specialized tasks to integrate experiential knowledge into existing LLM workflows. For broader rollout, test on more domains like code debugging or customer service, and explore hybrid setups combining it with light fine-tuning for edge cases. Trade-offs: It shines with capable base models but may underperform on weaker ones; options include scaling group sizes for harder tasks at slight cost increases.

While robust on benchmarks, limitations include dependence on high-quality base LLMs and potential gaps in domains without reliable rewards. Confidence is high for math and web tasks, with statistically reliable averages from 32 runs, but readers should verify in their contexts before full decisions, as real-world variability could affect outcomes.

1 Introduction

Section Summary: Large language models, or LLMs, are advanced AI systems that excel at tasks like problem-solving and web research but often struggle in specialized real-world settings that require tools like calculators or databases, along with tailored instructions. Traditional methods to improve them, such as fine-tuning with reinforcement learning, demand huge amounts of data and computing power, lead to poor adaptability across tasks, and are inefficient for most uses. To address this, the authors introduce Training-Free Group Relative Policy Optimization, a lightweight approach that boosts LLM performance without altering the model's core parameters, using minimal examples and AI self-reflection to guide better decision-making, as shown in tests on math and web tasks where it outperforms costlier tuned models.

Large Language Models (LLMs) are emerging as powerful general-purpose agents capable of interacting with complex, real-world environments. They have shown remarkable capabilities across a wide range of tasks, including complex problem-solving ([1, 2, 3]), advanced web research ([4, 5, 6, 7]), code generation and debugging ([8, 9]), and proficient computer use ([10, 11, 12]). Despite their impressive capabilities, LLM agents often underperform in specialized, real-world domains. These scenarios typically demand the integration of external tools (e.g., calculators, APIs, databases), along with domain-specific task definitions and prompting strategies. Deploying a general-purpose agent out-of-the-box in such settings often results in suboptimal performance due to limited familiarity with domain-specific requirements or insufficient exposure to necessary tools.

To bridge this gap, agentic training has emerged as a promising strategy to facilitate the adaptation of LLM agents to specific domains and their associated tools ([1, 4, 5, 13]). Recent advancements in agentic reinforcement learning (Agentic RL) approaches have employed Group Relative Policy Optimization (GRPO) ([14]) and its variants ([15, 16, 17]) to align model behaviors in the parameter space. Although these methods effectively enhance task-specific capabilities, their reliance on tuning LLM parameters poses several practical challenges:

- Computational Cost: Even for smaller models, fine-tuning demands substantial computational resources, making it both costly and environmentally unsustainable. For larger models, the costs become prohibitive. Furthermore, fine-tuned models require dedicated deployment and are often limited to specific applications, rendering them inefficient for low-frequency use cases compared to more versatile general-purpose models.

- Poor Generalization: Models optimized via parameter tuning often suffer from unsatisfactory cross-domain generalization, limiting their applicability to narrow tasks. Consequently, multiple specialized models must be deployed to handle a comprehensive set of tasks, significantly increasing system complexity and maintenance overhead.

- Data Scarcity: Fine-tuning LLMs typically necessitates large volumes of high-quality, carefully annotated data, which are often scarce and prohibitively expensive to obtain in specialized domains. Additionally, with limited samples, models are highly susceptible to overfitting, leading to poor generalization.

- Diminishing Returns: The prohibitive training costs usually compel existing approaches to fine-tune smaller LLMs with fewer than 32 billion parameters, due to resource constraints rather than optimal design choices. While larger models would be preferred, the computational expense of fine-tuning necessitates this compromise. Paradoxically, API-based or open-source larger LLMs often deliver better cost-performance ratios through scalability and continuous model updates. However, these general-purpose models underperform in specialized domains where fine-tuning is necessary, creating a cost-performance dilemma.

Such limitations inherent in parameter tuning motivate a fundamental research question: Is applying RL in parametric space the only viable approach? Can we enhance LLM agent performance in a non-parametric way with lower data and computational costs?

We answer this question affirmatively by proposing Training-Free Group Relative Policy Optimization (Training-Free GRPO), a novel and efficient method that improves LLM agent behavior in a manner similar to vanilla GRPO, while preserving the original model parameters unchanged. Our approach is motivated by the insight that LLMs already possess the fundamental capability to adapt to new scenarios, requiring only minimal practice through limited samples to achieve strong performance. Thus, instead of adapting their output distribution through parameter tuning, in-context learning ([18]) that leverages a lightweight token prior can also encapsulate experiential knowledge learned from a minimal training dataset.

Training-Free GRPO retains the multi-epoch learning mechanism of vanilla GRPO. In each epoch, multiple outputs are generated to deliver a group of rollouts for every query, which helps to explore the policy space and evaluate potential strategies. While vanilla GRPO relies on gradient-based parameter updates to iteratively improve policy performance, Training-Free GRPO eliminates this requirement by employing inference-only operations using LLMs. At each optimization step, rather than calculating a numerical advantage for gradient ascent within each group of rollouts, our method leverages LLMs to introspect on each group and distill a semantic advantage. This advantage refines the external experiential knowledge and guides policy outputs based on evolving contextual priors, thereby achieving policy optimization effects without modifying any model parameters.

By evaluating challenging mathematical reasoning and interactive web searching tasks, we demonstrate that our method significantly enhances the performance of frozen policy models such as DeepSeek-V3.1-Terminus ([19]) with only dozens of training samples. It surpasses fine-tuned 32B models in performance while requiring only a fraction of the computational resources, offering a simple and much more efficient alternative to traditional fine-tuning techniques.

Our principal contributions are fourfold:

- A New Training-Free RL Paradigm: We introduce Training-Free GRPO, which shifts policy optimization from the parameter space to the context space by leveraging evolving experiential knowledge as token priors without gradient updates.

- Semantic Group Advantage: We replace the numerical group advantage in vanilla GRPO with a semantic group advantage, enabling LLMs to introspect on their own rollouts and continuously update experiential knowledge over multiple optimization steps.

- Data and Computational Efficiency: Experiments confirm that Training-Free GRPO effectively enhances the performance of a frozen policy with minimal training samples, offering a practical and cost-effective alternative across different domains.

- Superior Generalization: By leaving model parameters frozen and plugging in different token priors, our approach fully preserves the generalization power, eliminating the cost and complexity of deploying multiple fine-tuned specialists.

2 Training-Free GRPO

Section Summary: Training-Free GRPO is a method that achieves the same alignment improvements as the standard GRPO algorithm without any training or updates to the language model's core parameters, instead relying on an external library of experiential knowledge to guide better outputs. It starts by generating multiple responses to a query using the frozen model, scores them with a reward system, and then creates natural language summaries of why some responses succeed or fail relative to the group, turning these into "semantic advantages" that capture key lessons. These advantages are used to update the knowledge library through simple operations like adding, deleting, or modifying entries, which subtly shifts the model's future responses toward higher-quality results by changing the input context rather than altering the model itself.

In this section, we introduce our Training-Free GRPO, a method designed to replicate the alignment benefits of the GRPO algorithm without performing any gradient-based updates to the policy model's parameters.

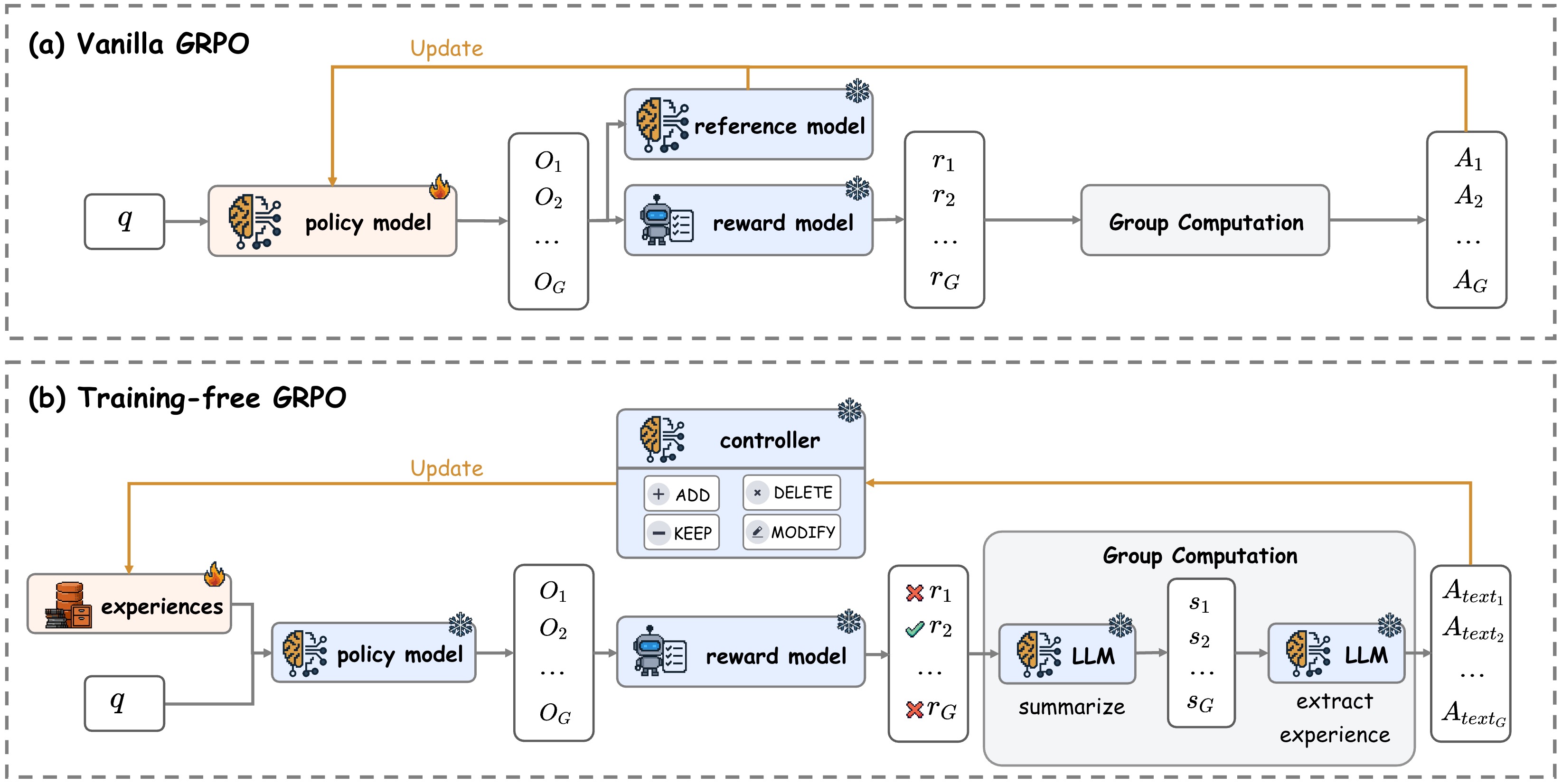

Vanilla GRPO. As shown in Figure 2, the vanilla GRPO procedure operates by first generating a group of $G$ outputs $\{o_1, o_2, \dots, o_G\}$ for a given query $q$ using the current policy LLM $\pi_\theta$, i.e., $\pi_\theta(o_i \mid q)$. Each output $o_i$ is then independently scored with a reward model $\mathcal{R}$. Subsequently, with rewards $\mathbf{r}=\{r_1, \dots, r_G\}$, it calculates a group-relative advantage $\hat{A}_i = \frac{r_i - \text{mean}(\mathbf{r})}{\text{std}(\mathbf{r})}$ for each output $o_i$. By combining a KL-divergence penalty against a reference model, it constructs a PPO-clipped objective function $\mathcal{J}_{\text{GRPO}}(\theta)$, which is then maximized to update the LLM parameters $\theta$.
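To make the group-relative advantage concrete, the following is a minimal sketch of the normalization step only, not of the full GRPO objective. The function name, the use of the population standard deviation, and the choice to return zeros for a zero-variance group are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Return (r_i - mean(r)) / std(r) for every reward in one group.

    Assumptions: population standard deviation, and an all-zero result
    when every reward in the group is identical (std(r) = 0).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    if sigma < eps:
        # No output stands out, so the group carries no learning signal.
        return [0.0 for _ in rewards]
    return [(r - mu) / sigma for r in rewards]


# Illustrative group of G = 5 binary rewards (made-up values).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0, 1.0])
flat_group = group_relative_advantages([1.0, 1.0, 1.0])
```

Correct outputs receive a positive advantage and incorrect ones a negative advantage, while a group with identical rewards contributes nothing.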

Training-Free GRPO repurposes the core logic of this group-based relative evaluation but translates it into a non-parametric, inference-time process. Instead of updating the parameters $\theta$, we leave $\theta$ permanently frozen and maintain an external experiential knowledge $\mathcal{E}$, which is initialized to $\emptyset$.

Rollout and Reward. As shown in Figure 2, our rollout and reward process mirrors that of GRPO exactly. Given a query $q$, we perform a parallel rollout to generate a group of $G$ outputs $\{o_1, o_2, \dots, o_G\}$ using the LLM. Notably, while GRPO uses the current trainable policy $\pi_\theta$, our policy conditions on the experiential knowledge, $\pi_\theta(o_i \mid q, \mathcal{E})$. Identical to the standard GRPO setup, we score each output $o_i$ by the reward model $\mathcal{R}$ to obtain a scalar reward $r_i = \mathcal{R}(q, o_i)$.
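The rollout step can be sketched as follows. This is an illustrative outline under stated assumptions: `build_prompt`, `rollout_group`, the prompt layout, the stub policy, and the exact-match reward are all hypothetical stand-ins, and a real setup would call an LLM API with sampling enabled instead of the stub.

```python
import itertools
from typing import Callable, List, Tuple


def build_prompt(query: str, experiences: List[str]) -> str:
    """Condition the frozen policy on E by prepending it to the query.

    The layout below is a hypothetical template, not the paper's prompt.
    """
    if not experiences:
        return query
    notes = "\n".join(f"- {e}" for e in experiences)
    return f"Useful experiences:\n{notes}\n\nProblem:\n{query}"


def rollout_group(
    query: str,
    experiences: List[str],
    policy: Callable[[str], str],
    reward_fn: Callable[[str, str], float],
    group_size: int = 5,
) -> List[Tuple[str, float]]:
    """Sample G outputs from pi_theta(. | q, E) and score each with R(q, o)."""
    prompt = build_prompt(query, experiences)
    outputs = [policy(prompt) for _ in range(group_size)]
    return [(o, reward_fn(query, o)) for o in outputs]


# Deterministic stand-ins so the sketch runs without any API access.
_answers = itertools.cycle(["42", "41", "42"])
stub_policy = lambda prompt: next(_answers)
exact_match = lambda q, o: 1.0 if o == "42" else 0.0

group = rollout_group(
    "What is 6 * 7?", ["Double-check arithmetic."],
    stub_policy, exact_match, group_size=3,
)
```

Only the prompt changes between optimization steps; the policy callable itself is never modified, which is the sense in which $\theta$ stays frozen.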

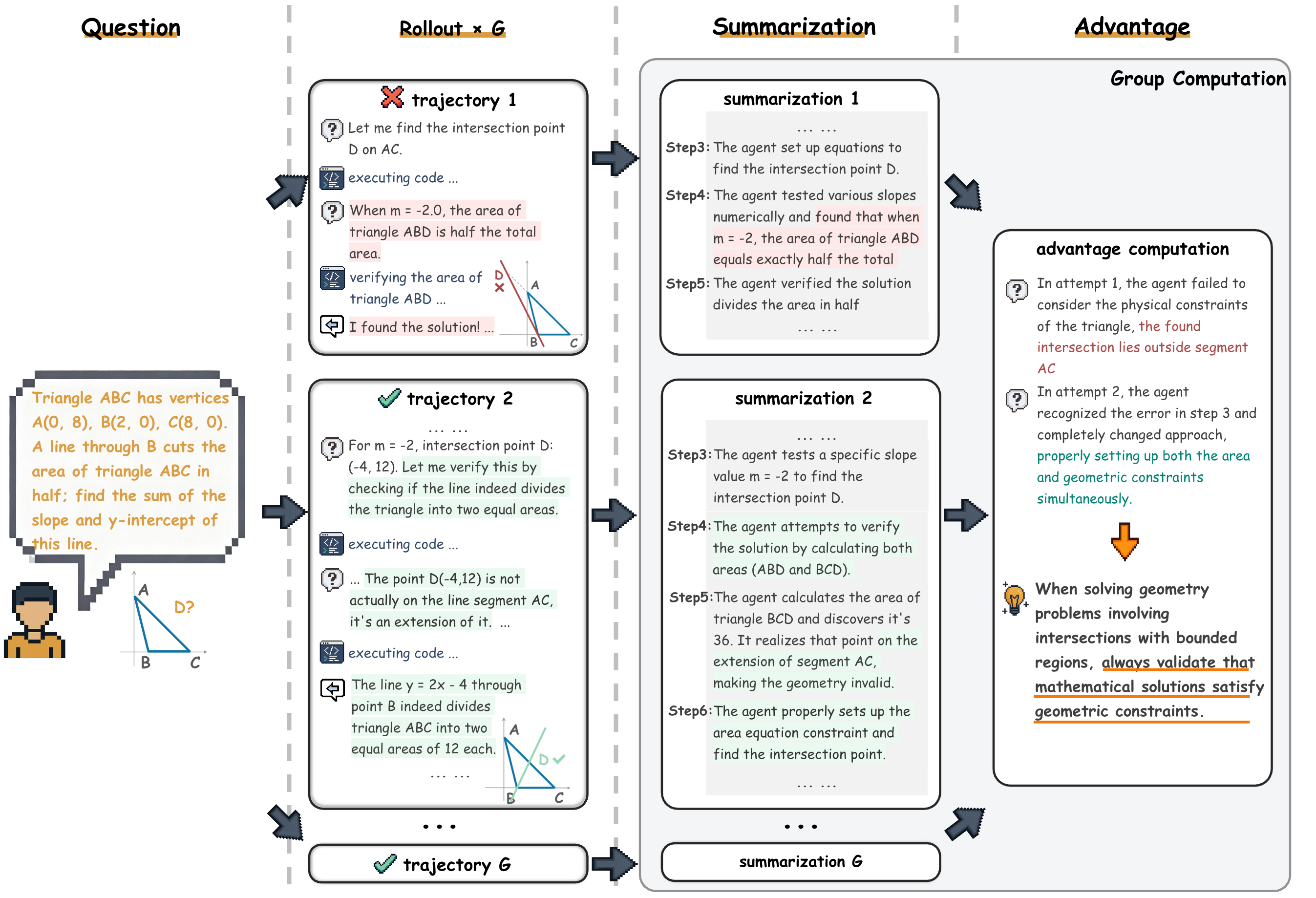

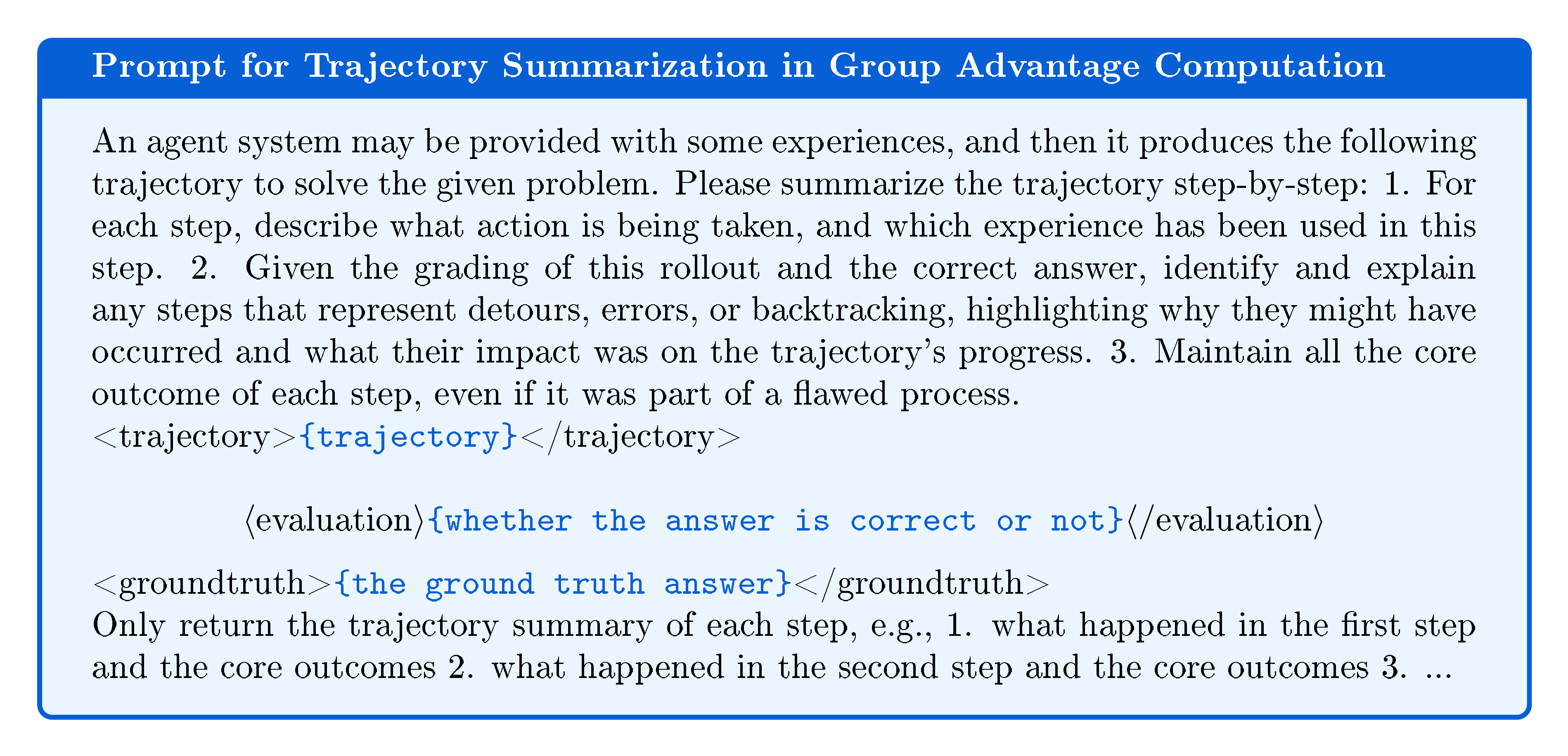

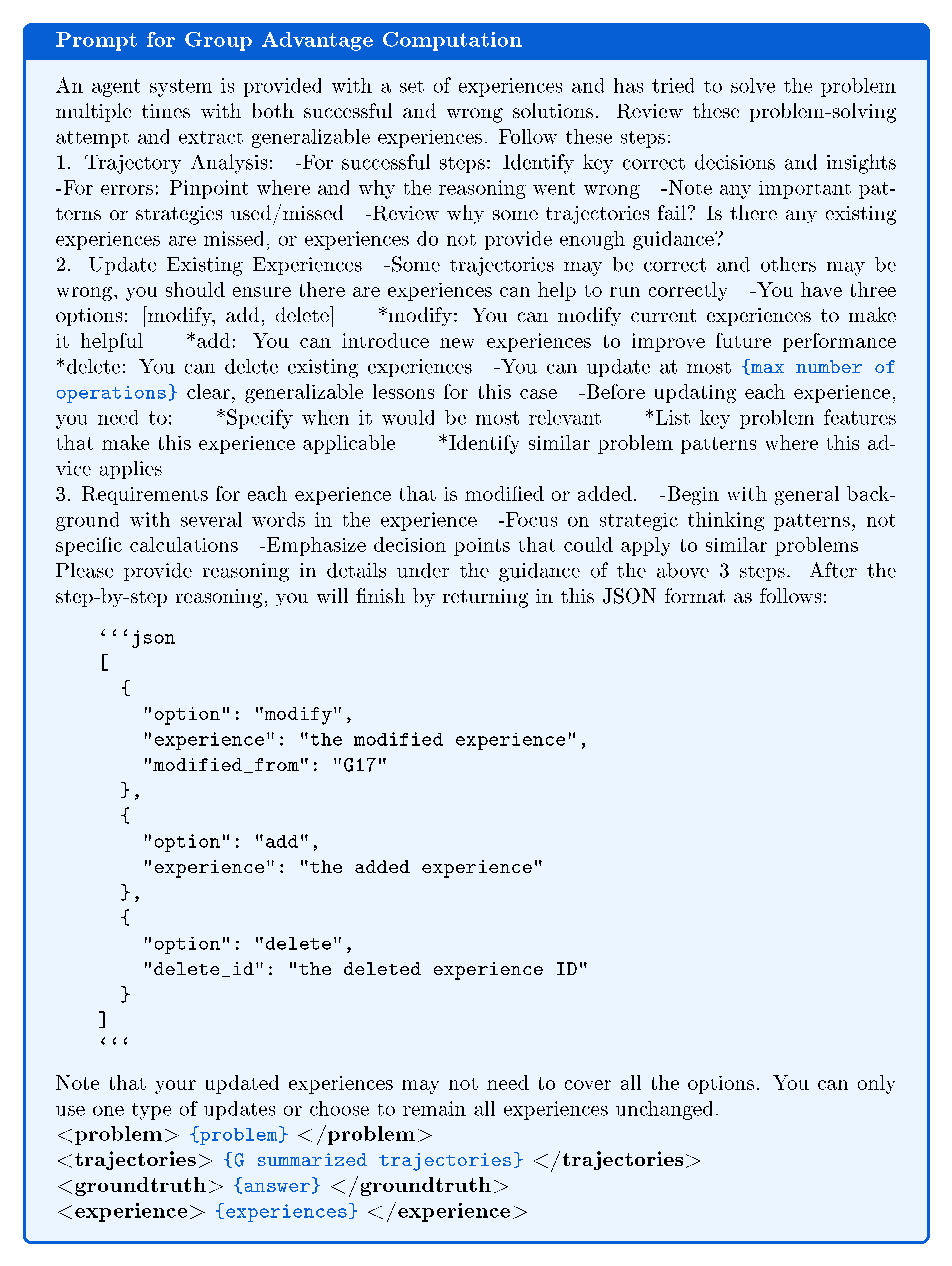

Group Advantage Computation. To provide an optimization direction for policy parameters, vanilla GRPO computes a numerical advantage $\hat{A}_i$ that quantifies each output $o_i$'s relative quality within its group. Similarly, Training-Free GRPO performs an analogous comparison within each group but produces a group relative semantic advantage in the form of natural language experience, as shown in Figure 3. Since $\hat{A}_i=0$ when all $G$ outputs receive identical rewards (i.e., $\text{std}(\mathbf{r})=0$) in vanilla GRPO, we generate such semantic advantages only for groups with both clear winners and losers. Specifically, for each output $o_i$, we first ask the same LLM $\mathcal{M}$ to provide a corresponding summary $s_i=\mathcal{M}(p_{\text{summary}}, q, o_i)$ separately, where $p_{\text{summary}}$ is a prompt template that incorporates the query $q$ and output $o_i$ to form a structured summarization request. Given the summaries $\{s_1, s_2, \dots, s_G\}$ and the current experiential knowledge $\mathcal{E}$, the LLM $\mathcal{M}$ articulates the reasons for the relative success or failure of the outputs, followed by extracting a concise natural language experience $A_{\text{text}}=\mathcal{M}(p_{\text{extract}}, q, s_i, \mathcal{E})$, where $p_{\text{extract}}$ is another prompt template for experience extraction. This natural language experience $A_{\text{text}}$ serves as our semantic advantage, functionally equivalent to vanilla GRPO's $\hat{A}_i$, encoding the critical experiential knowledge of what actions lead to high rewards.
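A rough sketch of this two-stage procedure is given below. The prompt templates `P_SUMMARY` and `P_EXTRACT`, the function name, and the decision to show each rollout's reward alongside its summary are assumptions for illustration; the paper's actual templates are not reproduced here. The `llm` argument is any callable that maps a prompt string to a completion.

```python
from typing import Callable, List, Optional

# Hypothetical templates standing in for p_summary and p_extract.
P_SUMMARY = "Summarize this attempt step by step.\nQuery: {q}\nAttempt: {o}"
P_EXTRACT = (
    "Query: {q}\nAttempt summaries:\n{summaries}\n"
    "Current experiences:\n{experiences}\n"
    "Explain why some attempts did better, then state one concise lesson."
)


def semantic_advantage(
    query: str,
    outputs: List[str],
    rewards: List[float],
    experiences: List[str],
    llm: Callable[[str], str],
) -> Optional[str]:
    """Return A_text for one group, or None if all rewards are identical."""
    if len(set(rewards)) <= 1:
        # Mirrors the std(r) = 0 case: no winners and losers to contrast.
        return None
    # Stage 1: one summary s_i per rollout.
    summaries = [llm(P_SUMMARY.format(q=query, o=o)) for o in outputs]
    listing = "\n".join(
        f"[{i}] reward={r}: {s}"
        for i, (s, r) in enumerate(zip(summaries, rewards), start=1)
    )
    known = "\n".join(f"- {e}" for e in experiences) or "(none)"
    # Stage 2: contrast the summaries and extract one experience.
    return llm(P_EXTRACT.format(q=query, summaries=listing, experiences=known))


# Stub LLM that records prompts, so the control flow can be inspected.
calls: List[str] = []


def stub_llm(prompt: str) -> str:
    calls.append(prompt)
    return f"response {len(calls)}"


skipped = semantic_advantage("q", ["a", "b"], [1.0, 1.0], [], stub_llm)
lesson = semantic_advantage("q", ["a", "b", "c"], [1.0, 0.0, 1.0], [], stub_llm)
```

A group of $G$ rollouts with mixed rewards costs $G + 1$ LLM calls in this sketch, while a uniform group costs none.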

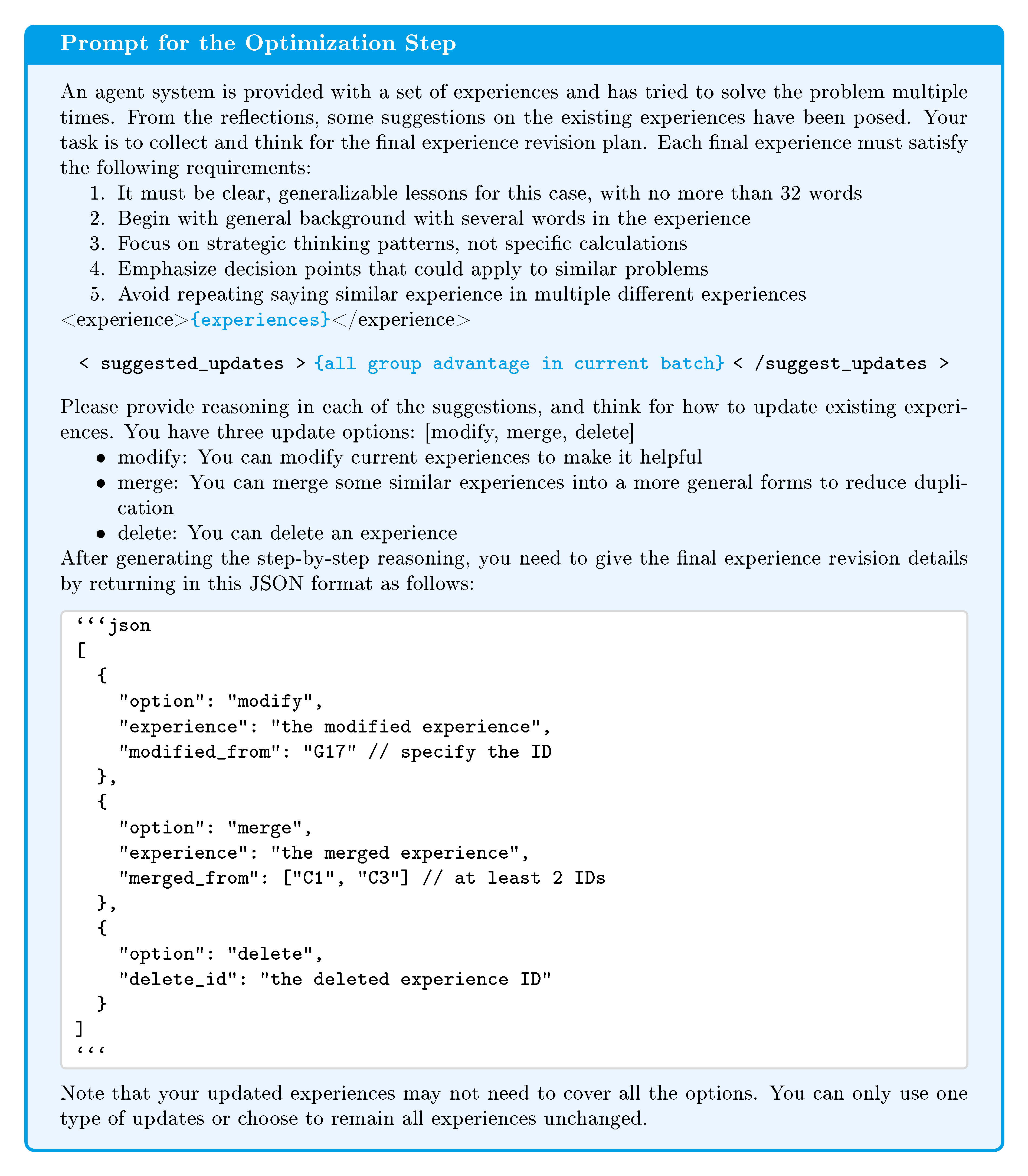

Optimization. Whereas vanilla GRPO updates its model parameters $\theta$ via gradient ascent on $\mathcal{J}_{\text{GRPO}}(\theta)$ computed by all advantages in a single batch, we update our experience library $\mathcal{E}$ using all semantic advantages $A_{\text{text}}$ from the current batch. Specifically, given the existing experience library $\mathcal{E}$, we prompt the LLM to generate a list of operations based on all these $A_{\text{text}}$, where each operation could be:

- Add: Directly append the experience described in $A_{\text{text}}$ to the experience library $\mathcal{E}$.

- Delete: Based on $A_{\text{text}}$, remove a low-quality experience from the experience library $\mathcal{E}$.

- Modify: Refine or improve an existing experience in the experience library $\mathcal{E}$ based on insights from $A_{\text{text}}$.

- Keep: The experience library $\mathcal{E}$ remains unchanged.

After updating the experience library $\mathcal{E}$, the conditioned policy $\pi_\theta(y|q, \mathcal{E})$ produces a shifted output distribution in subsequent batches or epochs. This mirrors the effect of a GRPO policy update by steering the model towards higher-reward outputs, but achieves this by altering the context rather than the model's fundamental parameters. The frozen base model $\pi_\theta$ acts as a strong prior, ensuring output coherence and providing a built-in stability analogous to the KL-divergence constraint in GRPO that prevents the policy from deviating excessively from $\pi_{\text{ref}}$.
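The library update described above can be sketched as a small interpreter over a list of operations. The dictionary schema (`op`, `index`, `text`) is a hypothetical encoding chosen for illustration, not the format the authors prompt for; in practice the operation list would be parsed from the LLM's response.

```python
from typing import Dict, List


def apply_operations(library: List[str], operations: List[Dict]) -> List[str]:
    """Apply Add / Delete / Modify / Keep operations to the experience library.

    Indices always refer to positions in the library as it was before
    this batch of operations, so earlier deletions do not shift later ones.
    """
    updated = dict(enumerate(library))
    next_id = len(library)
    for op in operations:
        kind = op["op"]
        if kind == "add":
            updated[next_id] = op["text"]
            next_id += 1
        elif kind == "delete":
            updated.pop(op["index"], None)
        elif kind == "modify":
            if op["index"] in updated:
                updated[op["index"]] = op["text"]
        elif kind == "keep":
            continue  # library left unchanged by this operation
        else:
            raise ValueError(f"unknown operation: {kind!r}")
    return [updated[k] for k in sorted(updated)]


# Made-up experiences and operations, purely illustrative.
library = ["Verify answers with code.", "Always brute force."]
operations = [
    {"op": "modify", "index": 0, "text": "Verify final answers with a short code check."},
    {"op": "delete", "index": 1},
    {"op": "add", "text": "Check boundary cases before answering."},
    {"op": "keep"},
]
new_library = apply_operations(library, operations)
```

The returned list is what would be placed in context for the next batch or epoch; the input list is left untouched.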

3 Evaluation

Section Summary: Researchers tested Training-Free GRPO, a method to improve large language models without traditional training, against standard approaches on tough math problems from the AIME24 and AIME25 benchmarks using powerful models like DeepSeek-V3.1-Terminus. The method boosted accuracy by 2.7% to 5.4% over baselines like ReAct, using just 100 sample problems and costing only about $18, far outperforming costlier techniques that need thousands of examples. It also showed steady progress during learning, fewer unnecessary tool uses, and strong results even without perfect answer keys or group comparisons, proving its efficiency and flexibility.

To compare Training-Free GRPO with competitive baselines, we conduct comprehensive experiments on both mathematical reasoning and web searching benchmarks.

3.1 Mathematical Reasoning

Benchmarks. We conduct our evaluation on the challenging AIME24 and AIME25 benchmarks ([20]), which are representative of complex, out-of-domain mathematical reasoning challenges. To ensure robust and statistically reliable results, we evaluate each problem with 32 independent runs and report the average Pass@1 score, which we denote as Mean@32.
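For concreteness, Mean@k as described here is the per-problem fraction of correct runs, averaged over problems. The sketch below uses made-up correctness flags and a hypothetical function name.

```python
from typing import List


def mean_at_k(results: List[List[bool]]) -> float:
    """Average Pass@1 over k independent runs per problem, in percent.

    `results[p][j]` is True when run j on problem p was correct.
    """
    per_problem = [sum(runs) / len(runs) for runs in results]
    return 100.0 * sum(per_problem) / len(per_problem)


# Two illustrative problems with k = 32 runs each (made-up outcomes).
toy_results = [
    [True] * 32,                  # solved in every run
    [True] * 16 + [False] * 16,   # solved in half of the runs
]
score = mean_at_k(toy_results)
```

Averaging over 32 runs reduces the sampling noise that a single run per problem would carry, which matters on benchmarks as small as AIME.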

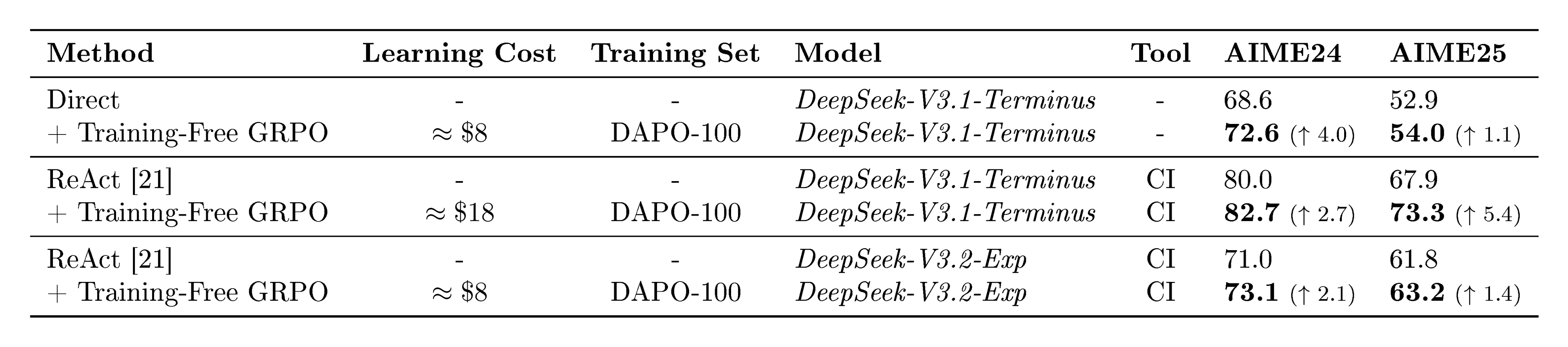

Setup. We primarily focus on large, powerful LLMs that are usually hard and expensive to fine-tune in real-world applications, such as DeepSeek-V3.1-Terminus ([19]). We include two basic configurations: (1) Direct Prompting without tool use (a text-only input/output process), and (2) ReAct ([21]) with a code interpreter (CI) tool. For the Training-Free GRPO experiments, we randomly sample 100 problems from the DAPO-Math-17K dataset ([16]), denoted as DAPO-100. We run the learning process for 3 epochs with a single batch per epoch (i.e., 3 steps), using a temperature of $0.7$ and a group size of $5$ during the learning phase. For out-of-domain evaluation on the AIME 2024 and 2025 benchmarks, we use a temperature of $0.3$.

:::

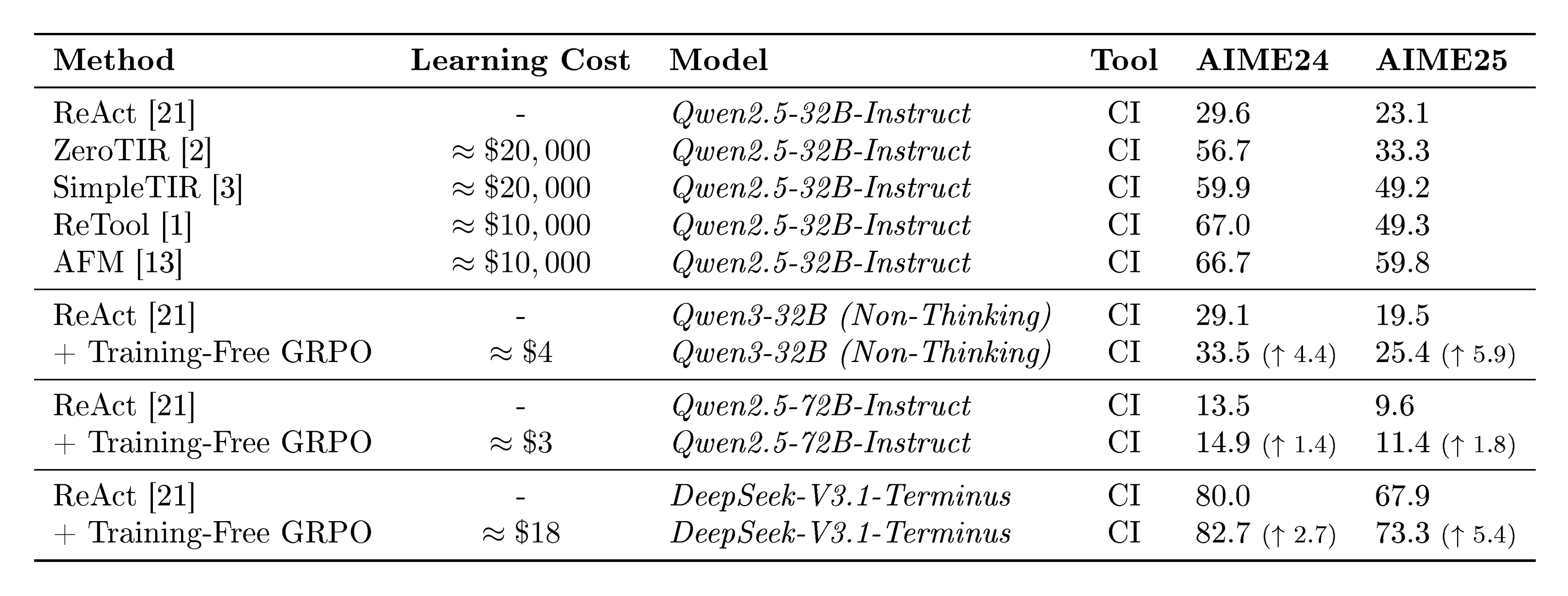

Table 1: Mean@32 on AIME 2024 and AIME 2025 benchmarks (%).

:::

Main Results. As illustrated in Table 1, Training-Free GRPO achieves substantial gains in mathematical reasoning, with a clear advantage in both the tool-use and non-tool-use scenarios. The strong baseline established by DeepSeek-V3.1-Terminus with ReAct ([21]) yields scores of $80.0\%$ on AIME24 and $67.9\%$ on AIME25. Applying Training-Free GRPO to the frozen DeepSeek-V3.1-Terminus raises these scores to $82.7\%$ on AIME24 and $73.3\%$ on AIME25, absolute gains of $+2.7$ and $+5.4$ percentage points, respectively, achieved with only $100$ out-of-domain training examples and zero gradient updates. This performance surpasses various state-of-the-art RL methods such as ReTool ([1]) and AFM ([13]) trained on 32B LLMs (see Table 3), which typically require thousands of training examples and incur costs exceeding \$10,000. In contrast, Training-Free GRPO uses only $100$ data points at an approximate cost of \$18. This outcome suggests that, in real-world applications, guiding a powerful but frozen model through context-space optimization is more effective and efficient than exhaustively fine-tuning a less capable model in parameter space. For quantitative evaluation and learned experiences of Training-Free GRPO, please refer to Appendix A and Appendix C, respectively.

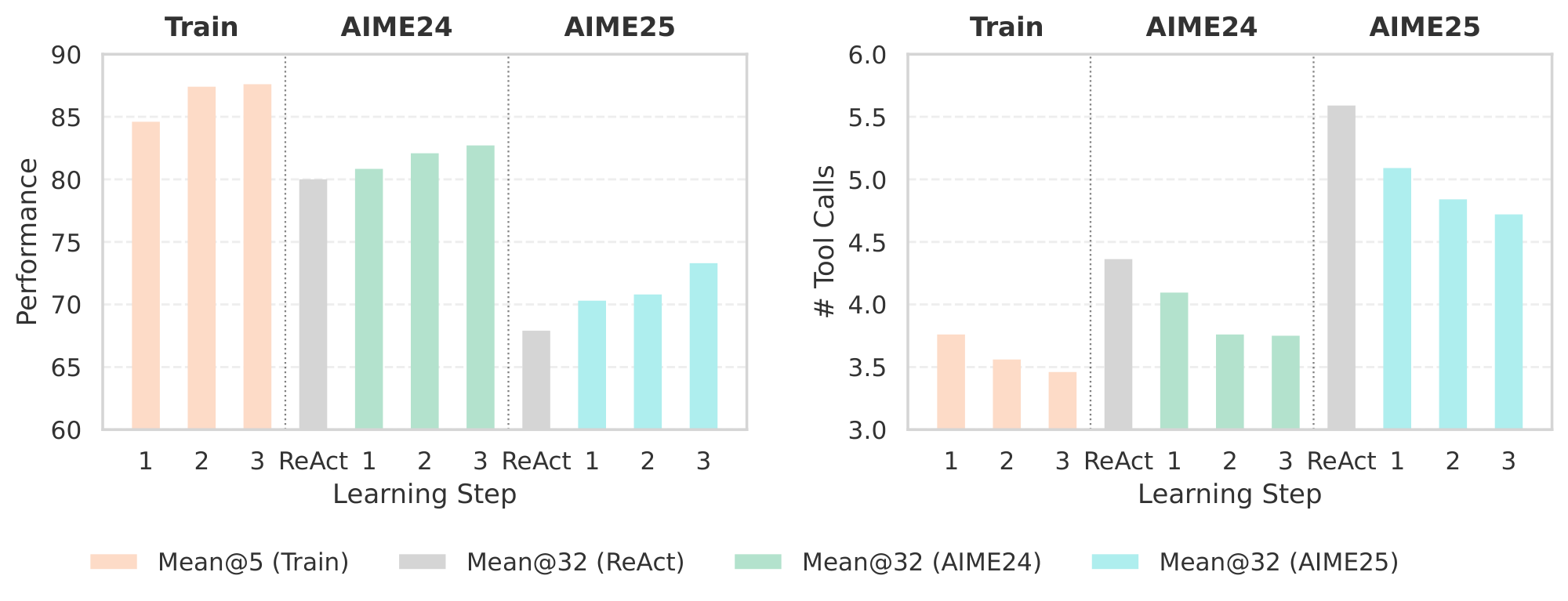

Learning Dynamics. As shown in Figure 4, during the 3-step learning process, we observe a steady and significant improvement in Mean@5 on the training set. Concurrently, the Mean@32 performance on both AIME24 and AIME25 also improves with each step, demonstrating that the experiences learned from only $100$ problems generalize effectively and that multi-step learning is necessary. In addition, the average number of tool calls decreases during both training and out-of-domain evaluation on the AIME benchmarks. This suggests that Training-Free GRPO not only encourages correct reasoning and action, but also teaches the agent to use tools more efficiently and judiciously. The learned experiential knowledge helps the agent discover shortcuts and avoid erroneous or redundant tool calls, validating the effectiveness of our semantic-advantage-guided optimization.

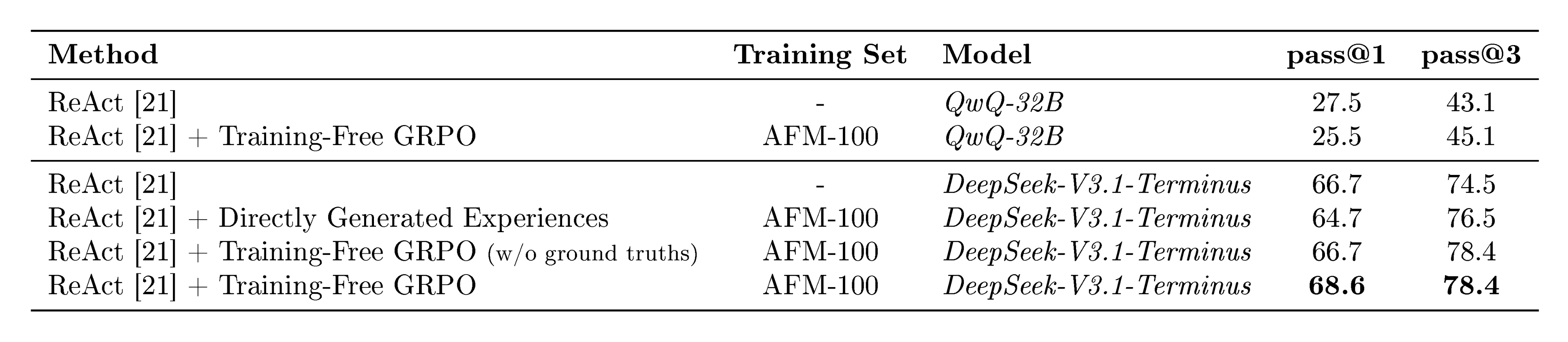

:Table 2: Ablation study on DeepSeek-V3.1-Terminus (Mean@32, %).

| Method | Training Set | AIME24 | AIME25 |
|---|---|---|---|
| ReAct ([21]) | - | 80.0 | 67.9 |
| ReAct ([21]) + Directly Generated Experiences | - | 79.8 | 67.3 |
| ReAct ([21]) + Training-Free GRPO (w/o ground truths) | DAPO-100 | 80.7 | 68.9 |
| ReAct ([21]) + Training-Free GRPO (w/o group computation) | DAPO-100 | 80.4 | 69.3 |
| ReAct ([21]) + Training-Free GRPO | DAPO-100 | 82.7 | 73.3 |

:::

Table 3: Mean@32 with smaller LLMs on AIME 2024 and AIME 2025 benchmarks (%).

:::

Effectiveness of Learned Experiences. In Table 2, we also include ReAct enhanced with experiences directly generated by DeepSeek-V3.1-Terminus, matching the quantity learned by Training-Free GRPO. However, such directly generated experiences fail to improve performance, highlighting the effectiveness of the experiential knowledge learned through Training-Free GRPO.

Robustness to Reward Signal. Table 2 also presents a variant of Training-Free GRPO in which the ground-truth answers are not provided during the learning process. In such cases, the semantic advantage is obtained directly by comparing the rollouts within each group, where the LLM can only rely on implicit majority voting, self-discrimination, and self-reflection to optimize the experiences. Although it does not surpass the default version with ground truths, this variant still achieves 80.7% on AIME24 and 68.9% on AIME25. This shows the method's robustness and applicability to domains where ground truths are scarce or unavailable, further broadening its practical utility.

Removing Group Computation. We also remove the group computation by setting the group size to $1$ in Training-Free GRPO, so that the LLM can only distill a semantic advantage from the single rollout of each query. The results in Table 2 show that this significantly harms performance compared with the default group size of $5$. This confirms the necessity of group relative computation, which enables the LLM to compare different trajectories within each group for better semantic advantage and experience optimization.

Applicability to Different Model Sizes. By applying Training-Free GRPO to smaller LLMs, specifically Qwen3-32B ([22]) and Qwen2.5-72B-Instruct ([23]), with the DAPO-100 dataset, we observe consistent improvements on the out-of-domain AIME benchmarks in Table 3. Training-Free GRPO requires significantly less data and a much lower learning cost, contrasting sharply with recent RL methods such as ZeroTIR ([2]), SimpleTIR ([3]), ReTool ([1]), and AFM ([13]), which often necessitate thousands of data points and substantial computational resources for parameter tuning. Furthermore, powered by larger models like DeepSeek-V3.1-Terminus, our approach achieves much higher Mean@32 on the AIME benchmarks than all RL-trained models, while incurring only about \$18 for the learning process.

3.2 Web Searching

:Table 4: Pass@1 on WebWalkerQA (%).

| Method | Training Set | Model | pass@1 |
|---|---|---|---|
| ReAct ([21]) | - | DeepSeek-V3.1-Terminus | 63.2 |
| + Training-Free GRPO | AFM-100 | DeepSeek-V3.1-Terminus | 67.8 ($\uparrow$ 4.6) |

In this section, we evaluate the effectiveness of Training-Free GRPO in addressing web searching tasks by leveraging minimal experiential data to enhance agent behavior.

Datasets. For training, we construct a minimal training set by randomly sampling 100 queries from the AFM (Chain-of-Agents) web interaction RL dataset ([13]), denoted as AFM-100. AFM provides high-quality, multi-turn interactions between agents and web environments, collected via reinforcement learning in realistic browsing scenarios. For evaluation, we employ the WebWalkerQA benchmark ([24]), a widely used dataset for assessing web agent performance. Its tasks require understanding both natural language instructions and complex web page structures, making it a rigorous evaluation framework for generalist agents.

Methods. Our proposed Training-Free GRPO is applied to DeepSeek-V3.1-Terminus without any gradient-based updates. We perform 3 epochs of training-free optimization with a group size of $G=3$. The temperature settings follow those used in prior mathematical experiments.

Main Results. We evaluate the effectiveness of our proposed Training-Free GRPO method on the WebWalkerQA benchmark. As shown in Table 4, our method achieves a pass@1 score of 67.8% using DeepSeek-V3.1-Terminus, a significant improvement over the baseline of 63.2%. This result indicates that our approach effectively steers model behavior by leveraging learned experiential knowledge, surpassing the capabilities of the static ReAct prompting strategy.

:::

Table 5: Ablation results on WebWalkerQA subset (%).

:::

Ablation. We conduct ablation studies on a stratified random sample of 51 instances from the WebWalkerQA test set, where the sampling is proportionally stratified by difficulty level to ensure balanced representation across different levels of complexity. All ablated models are evaluated after 2 epochs of experience optimization. The results are summarized in Table 5. Using directly generated experiences slightly degrades performance relative to ReAct (64.7% vs. 66.7% pass@1), confirming that mere in-context examples without proper optimization may not yield gains. Training-Free GRPO without ground truth maintains the same pass@1 as ReAct (66.7%) but improves pass@3 to 78.4%, demonstrating that relative reward evaluation can enhance consistency even without ground truth. The full Training-Free GRPO achieves the best performance (68.6% pass@1 and 78.4% pass@3), highlighting the importance of combining ground truth guidance with semantic advantage and experience optimization. Applying Training-Free GRPO to QwQ-32B ([25]) yields only 25.5% pass@1, significantly lower than the 66.7% achieved with DeepSeek-V3.1-Terminus, and even underperforming its own ReAct baseline (27.5%). This may suggest that the effectiveness of our method depends on the underlying model’s reasoning and tool-use capabilities in complex tool-use scenarios, indicating that model capability is a prerequisite for effective experience-based optimization.
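Because the ablation subset has only 51 instances, each instance is worth roughly 1.96 percentage points. The sketch below back-computes the instance counts implied by the percentages quoted in the text. The counts are an inference from those rounded percentages, not figures reported by the authors, and the dictionary labels are shorthand introduced here.

```python
N_INSTANCES = 51  # size of the stratified WebWalkerQA subset

# Percentages quoted in the ablation paragraph.
reported = {
    "ReAct pass@1": 66.7,
    "Directly generated experiences pass@1": 64.7,
    "Training-Free GRPO w/o ground truth pass@3": 78.4,
    "Training-Free GRPO pass@1": 68.6,
    "QwQ-32B + Training-Free GRPO pass@1": 25.5,
    "QwQ-32B ReAct pass@1": 27.5,
}


def implied_count(percent: float, n: int = N_INSTANCES) -> int:
    """Nearest whole number of solved instances for a reported percentage."""
    return round(percent / 100 * n)


implied = {name: implied_count(p) for name, p in reported.items()}

# Every quoted percentage is reproduced by a whole count out of 51.
consistent = all(
    round(100 * implied[name] / N_INSTANCES, 1) == p
    for name, p in reported.items()
)
points_per_instance = 100 / N_INSTANCES
```

Under this reading, the pass@1 differences between ReAct, the directly generated experiences, and the full method on this subset each correspond to a single instance, so the subset-level ablation is best read as directional.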

4 Comparing RL Learning on Context Space and Parameter Space

Section Summary: The section compares reinforcement learning approaches for AI models, highlighting how Training-Free GRPO excels in adapting to different tasks like math problems and web searches without losing general usefulness, unlike traditional methods that specialize too narrowly and perform poorly outside their training area. This method uses a fixed language model and adds domain-specific experiences on the fly, making it more versatile for real-world varied demands. Additionally, it drastically cuts costs, requiring only about $18 for training compared to $10,000 for similar tuned models, and offers flexible, usage-based inference expenses that avoid the high fixed costs of dedicated hardware.

4.1 Cross-domain Transfer Analysis

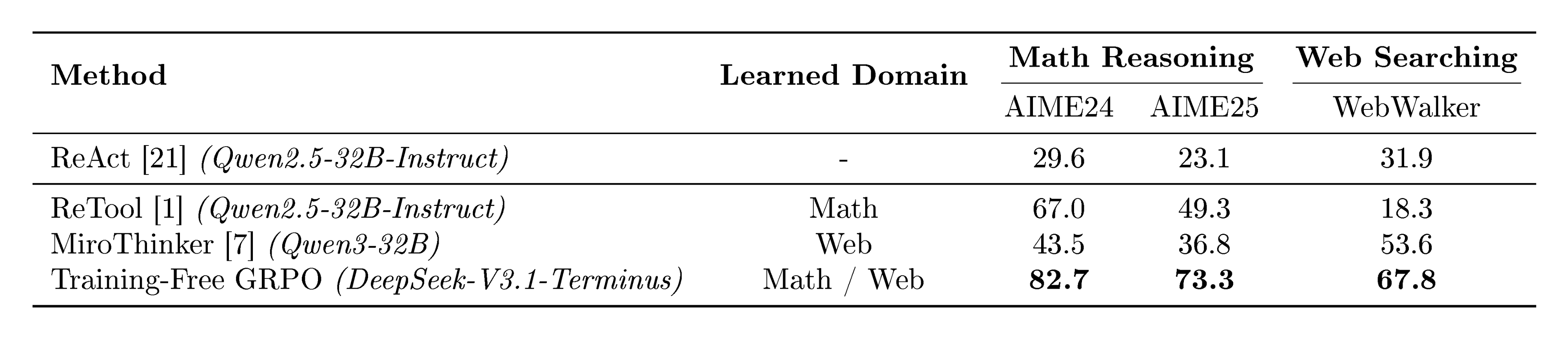

:::

Table 6: Cross-domain transferability (Averaged pass@1, %).

:::

A critical strength of Training-Free GRPO lies in its ability to achieve strong performance across diverse domains without suffering from the domain specialization trade-off observed in parameter-tuned methods. As demonstrated in Table 6, we observe unsatisfactory performance when domain-specialized models are transferred to different domains. For instance, ReTool ([1]), which is specifically trained on mathematical reasoning tasks, achieves competitive performance on AIME24 and AIME25 within its specialized domain. However, when transferred to web searching tasks on WebWalkerQA, its performance drops dramatically to only $18.3\%$, which is much lower than ReAct ([21]) without fine-tuning. Similarly, although optimized for web interactions, MiroThinker ([7]) significantly underperforms the math-trained ReTool on the AIME benchmarks. This phenomenon highlights that parameter-based specialization narrows the model's capabilities, excelling in the training domain at the expense of generalizability. In contrast, Training-Free GRPO applied to the frozen LLM achieves state-of-the-art performance in both domains simultaneously by simply plugging in domain-specific learned experiences. Such cross-domain robustness makes Training-Free GRPO particularly valuable for real-world applications where agents must operate in multifaceted environments with diverse requirements.

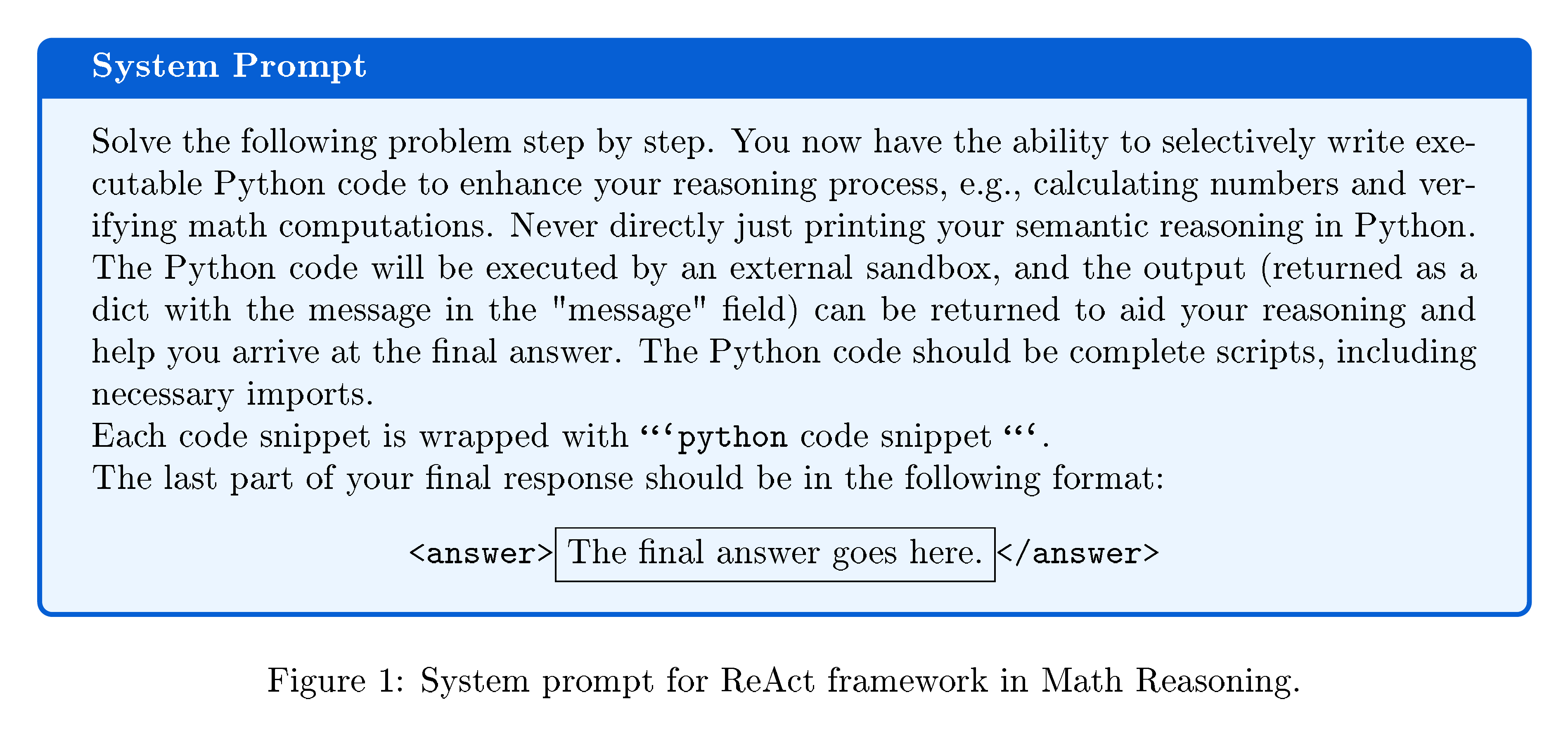

4.2 Computational Costs

As shown in Figure 1, we further analyze the economic benefits of Training-Free GRPO by comparing its computational costs with a vanilla RL training approach, specifically ReTool ([1]), on mathematical problem-solving tasks. This comparison highlights the practical benefits of our method in scenarios characterized by limited data, constrained budgets, or volatile inference demand.

Training Cost. By replicating the training process of ReTool ([1]) on Qwen2.5-32B-Instruct ([23]), we find that it requires approximately 20,000 GPU hours. At a rental price of $0.5 per GPU hour, the total training expense amounts to roughly $10,000. In contrast, Training-Free GRPO, when applied to DeepSeek-V3.1-Terminus, achieves superior performance on the AIME benchmarks (Table 3) at minimal cost. It requires only $3$ training steps over $100$ samples, completed within $6$ hours, which consume 38M input tokens and 6.6M output tokens, for a total cost of approximately $18 based on the official DeepSeek AI pricing[^1]. This reduction in training cost by more than two orders of magnitude makes our approach especially cost-effective.
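The arithmetic behind this comparison can be reproduced in a few lines. The sketch below uses only the figures quoted above; the variable names are illustrative, and the Training-Free GRPO total is taken as the reported value rather than recomputed from per-token prices, which are not listed here.

```python
import math

# Figures quoted in the text; names are illustrative.
RETOOL_GPU_HOURS = 20_000      # approximate GPU hours to replicate ReTool training
GPU_PRICE_PER_HOUR = 0.5       # USD per GPU hour (rental price)
TRAINING_FREE_COST = 18.0      # USD, reported total for Training-Free GRPO

retool_cost = RETOOL_GPU_HOURS * GPU_PRICE_PER_HOUR
cost_ratio = retool_cost / TRAINING_FREE_COST
orders_of_magnitude = math.log10(cost_ratio)

print(f"ReTool training cost: {retool_cost:,.0f} USD")
print(f"Cost ratio: {cost_ratio:.0f}x ({orders_of_magnitude:.2f} orders of magnitude)")
```

The ratio comes out at roughly 556, which is consistent with the "more than two orders of magnitude" statement.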

[^1]: https://api-docs.deepseek.com/quick_start/pricing. Most of the input tokens qualify for the lower cache hit pricing under the ReAct framework, as each processing step typically reuses extensive prior context.

Inference Cost. Deploying a trained model like ReTool-32B entails significant fixed infrastructure costs. In a typical serving setup with 4 GPUs at $0.5 per GPU hour, vLLM-based request batching can process about 400 problems per hour from the AIME benchmarks, so the inference cost averages $0.005 per problem. While this per-instance cost is relatively low, it presupposes continuous GPU availability, which becomes inefficient under fluctuating or low request volumes. In contrast, Training-Free GRPO incurs a token-based cost. On average, each request consumes 60K input tokens and 8K output tokens, totaling about $0.02 per problem with cache hit pricing for reused contexts. Although per-query inference with a large API-based model is more expensive than with a dedicated small model, many real-world applications, particularly specialized or low-traffic services, experience irregular and modest usage patterns. In such cases, maintaining a dedicated GPU cluster is economically unjustifiable. By leveraging the shared, on-demand infrastructure of large model services like DeepSeek, Training-Free GRPO eliminates fixed serving overhead and aligns costs directly with actual usage. This pay-as-you-go model is distinctly advantageous in settings where demand is unpredictable or sparse.
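A rough break-even estimate follows directly from these numbers. The sketch below assumes (our simplification, not a claim from the measurements) that the dedicated cluster is billed for every hour regardless of load; all names are illustrative.

```python
# Figures quoted in the text; names are illustrative.
NUM_GPUS = 4
GPU_PRICE_PER_HOUR = 0.5        # USD per GPU hour
PEAK_PROBLEMS_PER_HOUR = 400    # throughput of the dedicated deployment
API_COST_PER_PROBLEM = 0.02     # USD per problem for Training-Free GRPO

cluster_cost_per_hour = NUM_GPUS * GPU_PRICE_PER_HOUR


def dedicated_cost_per_problem(problems_per_hour: float) -> float:
    """Effective per-problem cost when the cluster is paid for whether or not it is busy.

    Assumes a positive request rate; rates above peak throughput are capped.
    """
    served = min(problems_per_hour, PEAK_PROBLEMS_PER_HOUR)
    return cluster_cost_per_hour / served


# Request rate at which both options cost the same per problem
break_even_rate = cluster_cost_per_hour / API_COST_PER_PROBLEM

for rate in (400, 100, 10):
    print(f"{rate:>3} problems/h: dedicated {dedicated_cost_per_problem(rate):.3f} USD, "
          f"API {API_COST_PER_PROBLEM:.3f} USD")
print(f"Break-even at about {break_even_rate:.0f} problems per hour")
```

Under this assumption, the dedicated deployment is cheaper per problem only when sustained traffic exceeds roughly 100 problems per hour; below that, the token-based pricing is the less expensive option.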

5 Related Work

Section Summary: This section reviews research on large language models (LLMs) that act as agents by using external tools to handle tasks like planning and computation, building on early frameworks such as ReAct and Toolformer, and advancing to multi-agent systems like MetaGPT. It then discusses reinforcement learning techniques, including algorithms like PPO and GRPO, which train LLMs to pursue long-term goals through interaction and feedback, though these often demand heavy computation and work best on smaller models, prompting the authors' training-free GRPO approach for efficiency on advanced LLMs. Finally, it explores training-free methods like in-context learning and iterative refinements such as Self-Refine and Reflexion, which improve outputs without altering model parameters, contrasting these with the authors' method that refines a shared experience library across multiple examples to boost performance on new queries.

LLM Agents. By leveraging external tools, Large Language Models (LLMs) can overcome inherent limitations, such as lacking real-time knowledge and precise computation. This has spurred the development of LLM agents that interleave reasoning with actions. Foundational frameworks like ReAct ([21]) prompt LLMs to generate explicit chain-of-thought (CoT) and actionable steps, enabling dynamic planning through tool use. Furthermore, Toolformer ([26]) demonstrates that LLMs can learn to self-supervise the invocation of APIs via parameter fine-tuning. Building on these principles, subsequent research has produced sophisticated single- and multi-agent systems, such as MetaGPT ([27]), CodeAct ([28]), and OWL ([29]), which significantly enhance the quality of planning, action execution, and tool integration.

Reinforcement Learning. Reinforcement learning (RL) has proven highly effective for aligning LLMs with complex and long-horizon goals. Foundational algorithms like Proximal Policy Optimization (PPO) ([30]) employ a policy model for generation and a separate critic model to estimate token-level value. Group Relative Policy Optimization (GRPO) ([14]) eliminates the need for a critic by estimating advantages directly from groups of responses. Recent research applies RL to transform LLMs from passive generators into autonomous agents that learn through environmental interaction. GiGPO ([31]) implements a two-level grouping mechanism for trajectories, enabling precise credit assignment at both the episode and individual step levels. ReTool ([1]) uses PPO to train an agent to interleave natural language with code execution for mathematical reasoning. Chain-of-Agents ([13]) facilitates multi-agent collaboration within a single model by using dynamic, context-aware activation of specialized tool and role-playing agents. Furthermore, Tongyi Deep Research ([4]) introduces a synthetic data generation pipeline and conducts on-policy agentic RL within a customized framework. However, such parameter-updating approaches incur prohibitive computational costs, which typically restricts their application to LLMs with fewer than 32B parameters. Moreover, they achieve only diminishing returns compared to simply using larger, more powerful frozen LLMs. In contrast, our proposed Training-Free GRPO method seeks to achieve comparable or even better performance on state-of-the-art LLMs without any parameter updates, drastically reducing both data and computational requirements.
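For reference, the critic-free advantage estimate of vanilla GRPO can be illustrated with a short sketch: each rollout's reward is standardized against the mean and standard deviation of its own group. The function name, the example rewards, the use of the population standard deviation, and the `eps` guard are our choices for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Standardize each rollout's reward against its own group (no critic needed)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std over the group (an illustrative choice)
    # eps guards against division by zero when every rollout gets the same reward
    return [(r - mu) / (sigma + eps) for r in rewards]


# One query, a group of four rollouts scored 1 (correct) or 0 (incorrect)
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

When all rollouts in a group receive the same reward, every advantage in this sketch is zero, so such a group carries no learning signal.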

Training-Free Methods. A parallel line of research aims to improve LLM behavior at inference time without updating model weights. The general approach is in-context learning (ICL) ([18]), which leverages external or self-generated demonstrations within a prompt to induce desired behaviors. More recent methods introduce iterative refinement mechanisms. Self-Refine ([32]) generates an initial output and then uses the same LLM to provide verbal feedback for subsequent revisions. Similarly, Reflexion ([33]) incorporates an external feedback signal to prompt the model for reflection and a new attempt. In-context reinforcement learning (ICRL) ([34, 35]) demonstrates that LLMs can learn from scalar reward signals by receiving prompts containing their past outputs and associated feedback. TextGrad ([12]) proposes a more general framework, treating optimization as a process of back-propagating textual feedback through a structured computation graph. A key characteristic of these methods is their focus on iterative, within-sample improvement for a single query. In contrast, our Training-Free GRPO more closely mirrors traditional RL by learning from a separate dataset across multiple epochs to iteratively refine a shared, high-quality experience library for out-of-domain queries. Furthermore, rather than relying on self-critique or context updates for a single trajectory, our method explicitly compares multiple rollouts per query to derive a semantic advantage across the trajectories in each group, which is confirmed to be effective in Section 3.1. In the specific context of optimizing agent systems, Agent KB ([36]) constructs a shared, hierarchical knowledge base to enable the reuse of problem-solving experiences across tasks. Unlike the complex reason-retrieve-refine process of Agent KB, Training-Free GRPO simply injects the learned experiences into the prompt. Moreover, Agent KB relies on hand-crafted examples and performs off-policy learning only once, collecting trajectories in a way that differs from online inference. In contrast, our Training-Free GRPO uses a consistent pipeline and more closely mirrors on-policy RL with multi-epoch learning.
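The contrast with within-sample refinement can be sketched as a loop over a dataset that updates one shared library. This is a simplified illustration under stated assumptions, not the released implementation: `rollout`, `reward`, and `distill` are hypothetical callables standing in for LLM calls, and skipping groups whose scores are all identical is our assumption by analogy with numerical GRPO.

```python
import itertools
from typing import Callable, Sequence

Rollout = Callable[[str, Sequence[str]], str]   # (query, experiences) -> trajectory
Reward = Callable[[str, str], float]            # (query, trajectory) -> score
Distill = Callable[[str, list[str], list[float], list[str]], list[str]]


def training_free_grpo(queries: Sequence[str], rollout: Rollout, reward: Reward,
                       distill: Distill, epochs: int = 3, group_size: int = 4) -> list[str]:
    """Refine a shared experience library; the model behind `rollout` stays frozen."""
    experiences: list[str] = []
    for _ in range(epochs):
        for query in queries:
            # Sample a group of rollouts conditioned on the current library
            group = [rollout(query, experiences) for _ in range(group_size)]
            scores = [reward(query, out) for out in group]
            # Assumption: a group with identical scores offers no contrast to learn from
            if len(set(scores)) < 2:
                continue
            # The "semantic advantage" is whatever text `distill` derives from the comparison
            experiences = distill(query, group, scores, experiences)
    return experiences


# Toy stand-ins so the sketch runs without an LLM
_counter = itertools.count()


def toy_rollout(query, experiences):
    # Careless on even calls, careful on odd calls; always careful once a lesson exists
    careful = bool(experiences) or next(_counter) % 2 == 1
    return f"{query}: checked" if careful else f"{query}: unchecked"


def toy_reward(query, output):
    return 1.0 if output.endswith(": checked") else 0.0


def toy_distill(query, group, scores, experiences):
    lesson = "Verify the result before answering."
    return experiences if lesson in experiences else experiences + [lesson]


library = training_free_grpo(["q1", "q2"], toy_rollout, toy_reward, toy_distill)
print(library)
```

In the toy run, the first query produces a mixed group, a single lesson is distilled, and later groups become uniformly successful, so the library stops changing.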

6 Conclusion

Section Summary: This paper presents Training-Free GRPO, a new approach that optimizes AI decision-making by adjusting the context rather than tweaking the model's internal parameters. It uses grouped trial runs to build up useful knowledge from experiences, which guides a fixed language model to produce better outputs in specific areas. The method beats traditional techniques by handling limited data and high computing demands, opening up easier ways to customize powerful AI for everyday uses.

In this paper, we introduced Training-Free GRPO, a novel paradigm that shifts RL policy optimization from the parameter space to the context space. By leveraging group-based rollouts to iteratively distill a semantic advantage into evolving experiential knowledge that serves as a token prior, our method successfully steers the output distribution of a frozen LLM agent, achieving significant performance gains in specialized domains. Experiments demonstrate that Training-Free GRPO not only surmounts the practical challenges of data scarcity and high computational cost but also outperforms traditional parameter-tuning methods. Our work establishes a new, highly efficient pathway for adapting powerful LLM agents, making advanced agentic capabilities more accessible and practical for real-world applications.

Contributions

Section Summary: The research paper on this topic was written by a team of authors primarily affiliated with Tencent Youtu Lab, including contributions from researchers at Fudan University and Xiamen University. Four authors—Yuzheng Cai, Siqi Cai, Yuchen Shi, and Zihan Xu—made equal contributions to the project. Ke Li served as the corresponding author for the group.

Authors: Yuzheng Cai$^{1,2*}$, Siqi Cai$^{1*}$, Yuchen Shi$^{1*}$, Zihan Xu$^{1*}$, Lichao Chen$^{1,3}$, Yulei Qin$^{1}$, Xiaoyu Tan$^{1}$, Gang Li$^{1}$, Zongyi Li$^{1}$, Haojia Lin$^{1}$, Yong Mao$^{1}$, Ke Li$^{1}$ ✉️, Xing Sun$^{1}$

Affiliations: $^{1}$Tencent Youtu Lab, $^{2}$Fudan University, $^{3}$Xiamen University

$^{*}$Equal contributions: Yuzheng Cai, Siqi Cai, Yuchen Shi, Zihan Xu

A Case Study

Section Summary: This case study examines how Training-Free GRPO improves an AI system's performance in math reasoning and web searching by incorporating guided experiences. In a geometry problem involving rectangles and collinear points, the unguided AI misplaces coordinates and skips verification, leading to an incorrect length calculation for segment CE, while the experience-guided version uses structured parameterization, correct orientations, and thorough checks to arrive at the precise value of 104. For a web search task quantifying rewards in a creator program and a play-to-airdrop event, the baseline AI relies on incomplete snippets and broad queries, missing key details, whereas the enhanced approach prioritizes official sources for more accurate and efficient results.

For the math reasoning and web searching scenarios, we provide examples illustrating how Training-Free GRPO enhances ReAct ([21]) with DeepSeek-V3.1-Terminus and the corresponding tools.

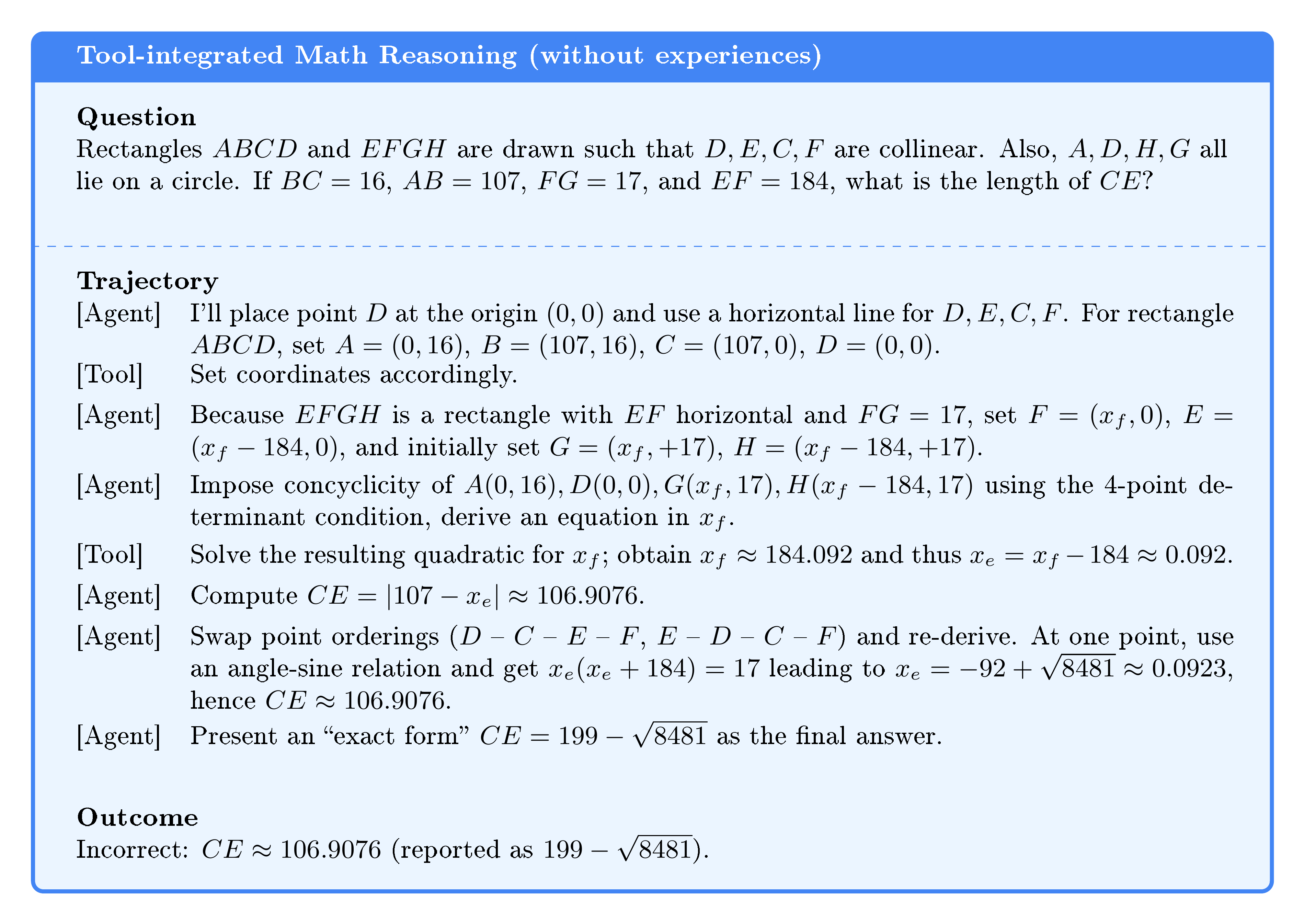

A.1 Experience-Guided Tool-Integrated Math Reasoning

We consider a geometric configuration with two rectangles $ABCD$ and $EFGH$ where $D, E, C, F$ are collinear in that order, and $A, D, H, G$ are concyclic. Given $BC=16$, $AB=107$, $FG=17$, $EF=184$, the task is to determine $CE$.

Baseline (without experiences). As shown in Figure 5, the unassisted agent initializes a coordinate system with $D=(0, 0)$ and models $ABCD$ as axis-aligned. For $EFGH$, it places $E=(x_f-184, 0)$, $F=(x_f, 0)$ and, critically, sets $G=(x_f, +17)$, $H=(x_f-184, +17)$, i.e., with a positive vertical orientation for the short side. It then enforces the four-point concyclicity of $A(0, 16)$, $D(0, 0)$, $G$, $H$ via a determinant condition and solves for $x_f$, yielding $x_f\approx 184.092$ and consequently $x_e=x_f-184\approx 0.092$. From this, it reports $CE\approx 106.9076$ and an "exact" expression $199-\sqrt{8481}$. This trajectory exhibits three systemic issues: (i) misinterpretation of the vertical orientation (wrong sign for the $y$-coordinates of $G, H$), (ii) inconsistent handling of the order $D$ – $E$ – $C$ – $F$ and the lack of a unified parameterization for segment relations, and (iii) absence of systematic, comprehensive post-solution verification, i.e., no integrated check that the final coordinates simultaneously satisfy the rectangle dimensions. These issues lead to an incorrect cyclic constraint (e.g., an intermediate relation of the form $x(x+184)=17$) and acceptance of a spurious solution without full geometric verification. Note that although $CE\approx 106.91$ lies within $0<CE<107$, this alone does not validate the solution; the critical failure was the lack of holistic consistency checks across all problem constraints.
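To see where the spurious relation comes from, the baseline's setup can be worked through by hand. The derivation below is our reconstruction from the coordinates stated above, writing $x_e$ for the $x$-coordinate of $E$ and $p$ for the unknown linear coefficient of the circle (both symbols are introduced here for illustration).

$$
\begin{aligned}
&\text{Circle through } A(0,16),\ D(0,0): && x^2 + y^2 + p\,x - 16y = 0,\\
&H=(x_e,\ 17): && x_e^2 + p\,x_e + 17 = 0,\\
&G=(x_e+184,\ 17): && (x_e+184)^2 + p\,(x_e+184) + 17 = 0,\\
&\text{product of the two roots}: && x_e\,(x_e+184) = 17,\\
&\text{positive root}: && x_e = -92 + \sqrt{8481} \approx 0.092,\\
&\text{hence}: && CE = 107 - x_e = 199 - \sqrt{8481} \approx 106.91.
\end{aligned}
$$

The constant $17$ arises from $17^2 - 16\cdot 17 = 289 - 272$. If the short side is instead oriented downward ($y=-17$), the constant becomes $289 + 272 = 561$, which changes the product-of-roots relation and therefore the value of $CE$.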

Enhanced (With Experiences). As shown in Figure 6, with a curated experience pool, the agent follows a structured pipeline:

- Directional ordering (experience [29]) and boundedness validation (experience [1]): It fixes the order $D$ – $E$ – $C$ – $F$ on a line and sets $CE=x$ with $0<x<107$, ensuring $E$ lies on segment $DC$ and $F$ lies beyond $C$.

- Segment-addition parameterization (experience [37]): It uses $DE+EC=DC=AB=107$ and $EC+CF=EF=184$ to obtain $DE=107-x$, $CF=184-x$, and places $D=(0, 0)$, $E=(107-x, 0)$, $C=(107, 0)$, $F=(291-x, 0)$.

- Consistent vertical orientation and cyclic modeling: Noting $A=(0, 16)$, $D=(0, 0)$, it orients the short side ($FG=17$) downward, so $H=(107-x, -17)$, $G=(291-x, -17)$. Using the circle equation $x^2+y^2+Dx+Ey+F=0$ (where the coefficients $D$, $E$, $F$ are distinct from the points of the same name) with $A$ and $D$ yields $F=0$, $E=-16$. Substituting $H$ and $G$ and subtracting the two equations gives $D=2x-398$; back-substitution reduces to the quadratic $x^2 - 398x + 30576 = 0$, with discriminant $398^2 - 4\cdot 30576 = 36100 = 190^2$ and roots $x=104$ and $x=294$.

- Root selection and full verification (experiences [1] and [7]): Applying $0<x<107$ filters out $x=294$, selecting $x=104$. The agent then verifies all constraints: $DE=107-104=3$, $CF=184-104=80$, $EF=184$, $FG=17$, and confirms that the circle $x^2+y^2-190x-16y=0$ passes through $A=(0, 16)$, $D=(0, 0)$, $H=(3, -17)$, $G=(187, -17)$.
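The steps above can be checked numerically. The following sketch is our own verification script, not the agent's tool call; it solves the quadratic in exact integer arithmetic, applies the boundedness filter, and confirms concyclicity. Variable names such as `d_coef` and `on_circle` are illustrative.

```python
import math

# Given side lengths
AB, BC, EF, FG = 107, 16, 184, 17

# Quadratic from the cyclic condition: x^2 - 398x + 30576 = 0, where x = CE
b, c = -398, 30576
disc = b * b - 4 * c
root = math.isqrt(disc)
assert root * root == disc  # the discriminant is a perfect square (190^2)
roots = sorted(((-b - root) // 2, (-b + root) // 2))

# Boundedness filter: E must lie strictly inside segment DC
ce = next(x for x in roots if 0 < x < AB)

# Rebuild the configuration with the short side oriented downward
A, D = (0, BC), (0, 0)
H, G = (AB - ce, -FG), (AB + EF - ce, -FG)
d_coef = 2 * ce - 398  # coefficient of x in x^2 + y^2 + d_coef*x - 16*y = 0


def on_circle(point):
    px, py = point
    # The y-coefficient is -BC = -16 because the circle passes through A and D
    return px * px + py * py + d_coef * px - BC * py == 0


print(roots, ce, all(on_circle(p) for p in (A, D, H, G)))
# prints: [104, 294] 104 True
```

Because every quantity is an integer, the concyclicity check is exact rather than subject to floating-point tolerance.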

Comparative Analysis. This case reveals a clear causal link between experience-guided behaviors and correctness. Experience [29] eliminates directional ambiguity and enforces the correct collinearity order, directly addressing the baseline’s misplacement of $G, H$. Experience [37] induces a clean single-variable parameterization ($DE=107-x$, $CF=184-x$), which simplifies the cyclic constraint to a solvable quadratic. Experience [1] imposes a necessary boundedness filter ($0<x<107$) to discard extraneous roots. Finally, experience [7] mandates comprehensive post-solution verification (rectangle dimensions, collinearity, concyclicity), preventing acceptance of spurious solutions. Compared to the unassisted trajectory, the experience-informed reasoning corrects the vertical orientation, resolves ordering and parameterization inconsistencies, and installs principled validation gates. This case demonstrates the positive impact of integrating domain-specific experiences on reliability and accuracy in tool-integrated mathematical reasoning.

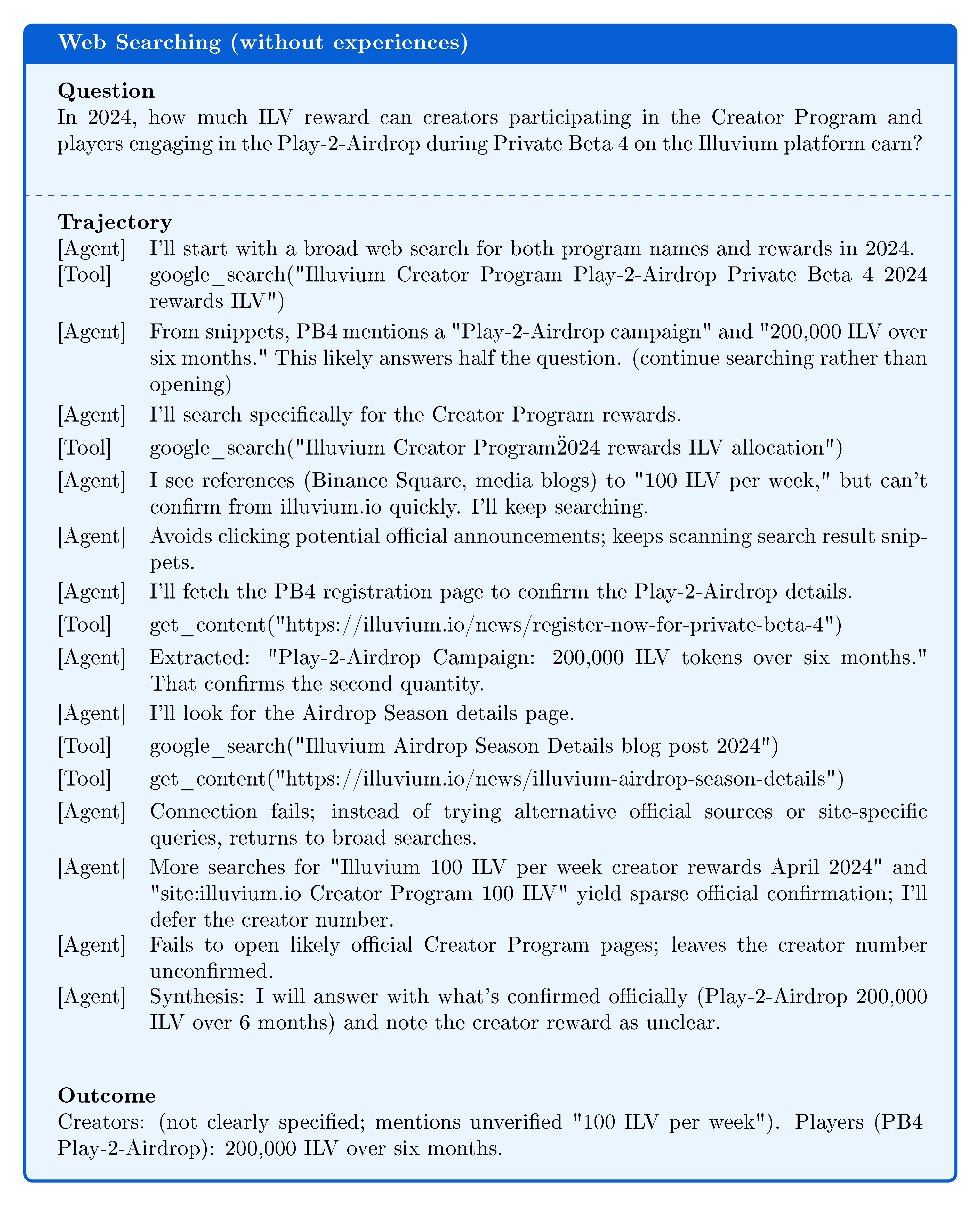

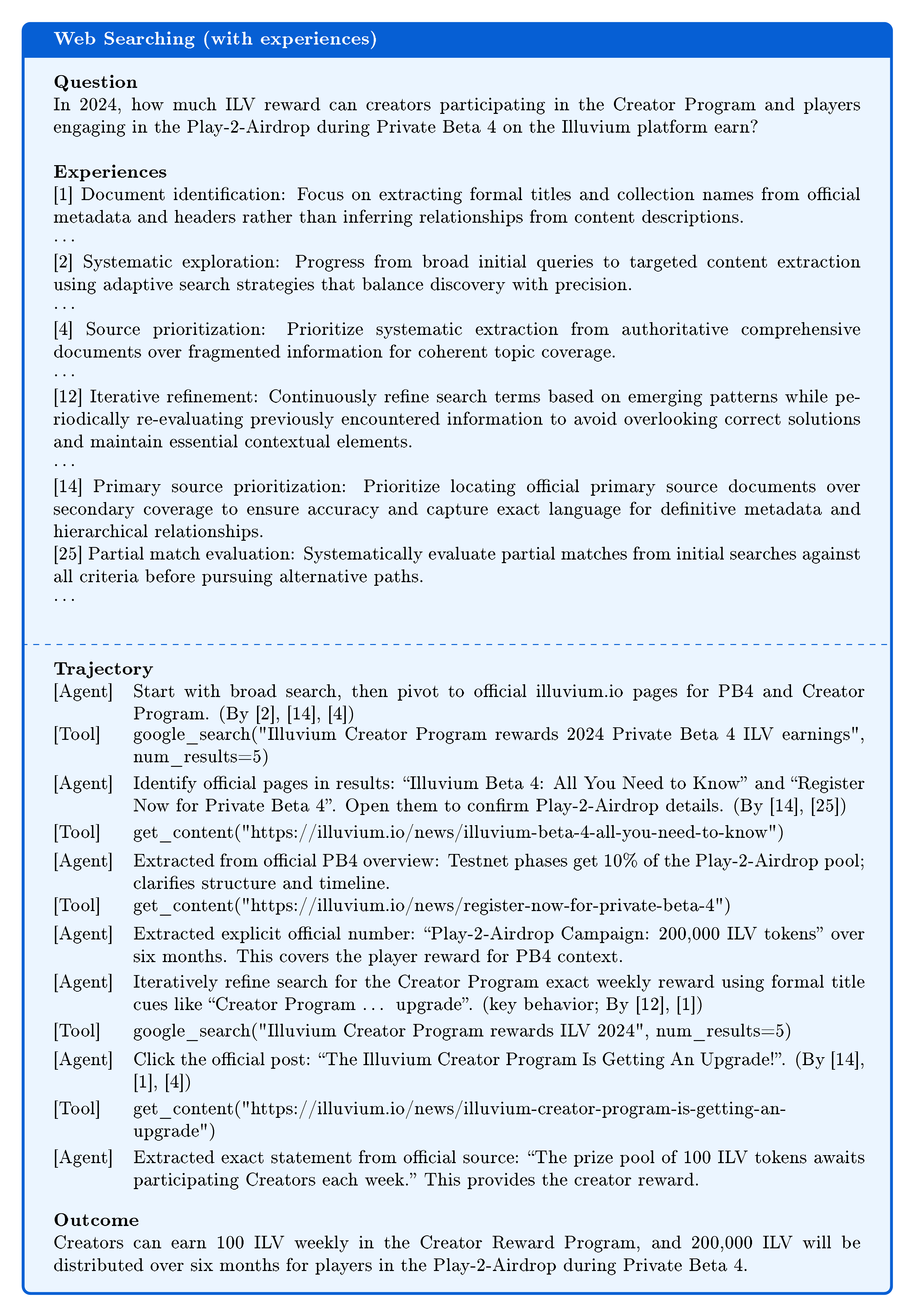

A.2 Experience-Guided Web Searching

We consider a web searching task from WebWalkerQA: quantify 2024 rewards for (i) creators in the Creator Program (weekly amount), and (ii) players in the Play-2-Airdrop during Private Beta 4 (total pool and duration).

Baseline (without experiences). As summarized in Figure 7, the unassisted agent issues multiple broad searches and relies heavily on result snippets and third-party summaries, delaying clicks into authoritative pages. It eventually opens the PB4 registration post to confirm “Play-2-Airdrop Campaign: 200,000 ILV over six months,” but continues to scan snippets for the Creator Program value without opening the relevant official post. Connection errors to one official page cause the agent to revert to broad searches rather than alternative primary-source strategies (e.g., site-specific queries or adjacent official posts). The trajectory remains incomplete: it reports the Play-2-Airdrop figure but fails to confirm the Creator Program’s “100 ILV weekly” from an official source, yielding an incorrect/incomplete answer.

Enhanced (With Experiences). As shown in Figure 8, with a curated experience pool, the agent follows a disciplined pipeline: (1) prioritize official sources (experiences [14] and [4]) and open the PB4 overview and registration posts to extract the “200,000 ILV over six months” and Testnet/Mainnet allocation structure; (2) refine search terms to target formal titles (experiences [2], [12], and [1]) and open “The Illuvium Creator Program Is Getting An Upgrade!”; (3) extract the exact line “The prize pool of 100 ILV tokens awaits participating Creators each week”; and (4) synthesize both verified statements into a complete answer aligned with the question requirements (experience [25]). This results in the correct, fully supported output: creators earn 100 ILV weekly; players have a 200,000 ILV pool distributed over six months in PB4’s Play-2-Airdrop.

Comparative Analysis. Experience-guided behaviors directly address baseline deficiencies: primary source prioritization (experiences [14] and [4]) removes reliance on snippets and third-party coverage; document identification (experience [1]) and iterative refinement (experiences [2] and [12]) ensure the agent locates and opens the exact Creator Program post; partial match evaluation (experience [25]) steers the agent to confirm numerical claims at their authoritative origin. In contrast, the baseline wastes context on searches without content acquisition, leaves critical values unverified, and produces an incomplete answer.

B Prompts

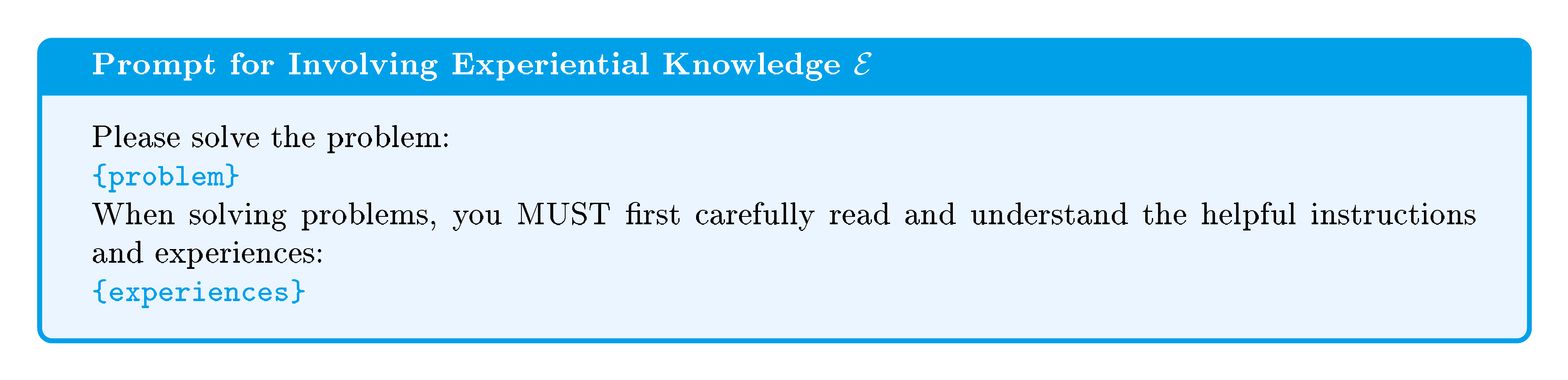

Section Summary: This appendix outlines the various prompts employed in math reasoning tasks to guide AI models. Figures 9 and 10 show the core prompts for tackling math problems, including one that incorporates prior experiential knowledge. Figures 11 through 13 detail additional prompts for summarizing reasoning paths, calculating group-based advantages in comparisons, and refining that experiential knowledge based on batch results.

In this appendix, we provide the prompts used in math reasoning tasks. Figures 9 and 10 present the prompts for solving math tasks. Figures 11 and 12 present the prompts used to compute the group relative semantic advantage. Figure 13 presents the prompt used to optimize the experiential knowledge $\mathcal{E}$.

C Examples of Learned Experiences

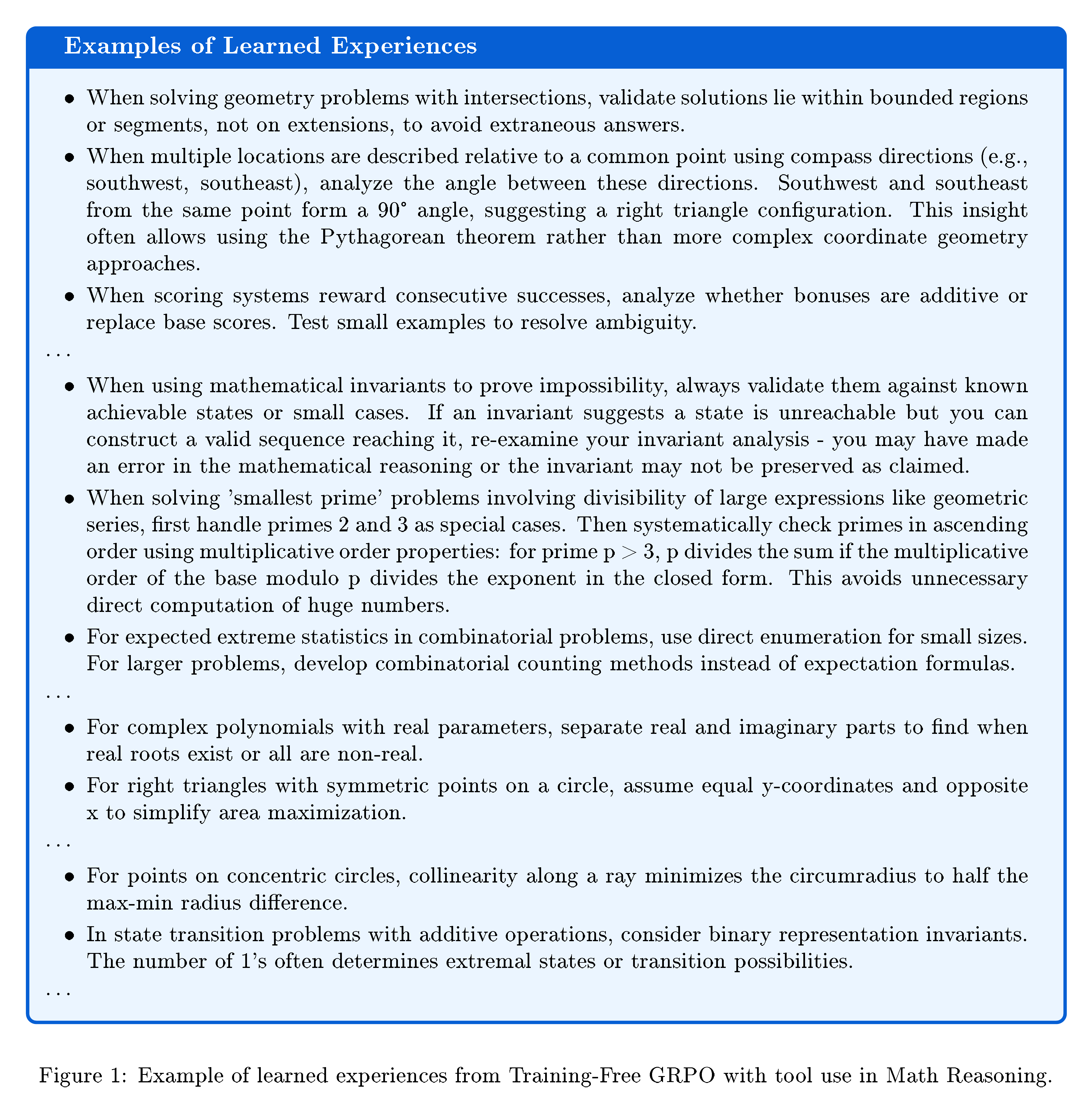

In this appendix, Figure 14 provides examples drawn from the 48 experiences learned by Training-Free GRPO with tool use in math reasoning.

References

Section Summary: This references section lists over two dozen sources, primarily recent academic papers and technical reports from 2023 to 2025, focusing on advancements in artificial intelligence, such as reinforcement learning for large language models, AI agents that use tools for reasoning and problem-solving, and code generation systems. It includes arXiv preprints on topics like strategic tool use in AI, multi-agent collaboration for tasks like math and web navigation, and open-source projects from teams at companies like Alibaba and MiroMind. Older foundational works, such as early papers on language models and surveys on AI interfaces, provide historical context alongside resources for math problems and model technical reports from projects like DeepSeek and Qwen.

[1] Jiazhan Feng, Shijue Huang, Xingwei Qu, Ge Zhang, Yujia Qin, Baoquan Zhong, Chengquan Jiang, Jinxin Chi, and Wanjun Zhong. Retool: Reinforcement learning for strategic tool use in llms. arXiv preprint arXiv:2504.11536, 2025.

[2] Xinji Mai, Haotian Xu, Weinong Wang, Jian Hu, Yingying Zhang, Wenqiang Zhang, et al. Agent rl scaling law: Agent rl with spontaneous code execution for mathematical problem solving. arXiv preprint arXiv:2505.07773, 2025.

[3] Zhenghai Xue, Longtao Zheng, Qian Liu, Yingru Li, Xiaosen Zheng, Zejun Ma, and Bo An. Simpletir: End-to-end reinforcement learning for multi-turn tool-integrated reasoning. arXiv preprint arXiv:2509.02479, 2025.

[4] Tongyi DeepResearch Team. Tongyi-deepresearch. https://github.com/Alibaba-NLP/DeepResearch, 2025.

[5] Zhengwei Tao, Jialong Wu, Wenbiao Yin, Junkai Zhang, Baixuan Li, Haiyang Shen, Kuan Li, Liwen Zhang, Xinyu Wang, Yong Jiang, et al. Webshaper: Agentically data synthesizing via information-seeking formalization. arXiv preprint arXiv:2507.15061, 2025.

[6] Bowen Jin, Hansi Zeng, Zhenrui Yue, Jinsung Yoon, Sercan Arik, Dong Wang, Hamed Zamani, and Jiawei Han. Search-r1: Training llms to reason and leverage search engines with reinforcement learning. arXiv preprint arXiv:2503.09516, 2025.

[7] MiroMind AI Team. Mirothinker: An open-source agentic model series trained for deep research and complex, long-horizon problem solving. https://github.com/MiroMindAI/MiroThinker, 2025.

[8] Kechi Zhang, Jia Li, Ge Li, Xianjie Shi, and Zhi Jin. Codeagent: Enhancing code generation with tool-integrated agent systems for real-world repo-level coding challenges. arXiv preprint arXiv:2401.07339, 2024.

[9] Dong Huang, Jie M Zhang, Michael Luck, Qingwen Bu, Yuhao Qing, and Heming Cui. Agentcoder: Multi-agent-based code generation with iterative testing and optimisation. arXiv preprint arXiv:2312.13010, 2023.

[10] Shuai Wang, Weiwen Liu, Jingxuan Chen, Yuqi Zhou, Weinan Gan, Xingshan Zeng, Yuhan Che, Shuai Yu, Xinlong Hao, Kun Shao, et al. Gui agents with foundation models: A comprehensive survey. arXiv preprint arXiv:2411.04890, 2024.

[11] Junyang Wang, Haiyang Xu, Haitao Jia, Xi Zhang, Ming Yan, Weizhou Shen, Ji Zhang, Fei Huang, and Jitao Sang. Mobile-agent-v2: Mobile device operation assistant with effective navigation via multi-agent collaboration. Advances in Neural Information Processing Systems, 37:2686–2710, 2024.

[12] Mert Yuksekgonul, Federico Bianchi, Joseph Boen, Sheng Liu, Pan Lu, Zhi Huang, Carlos Guestrin, and James Zou. Optimizing generative ai by backpropagating language model feedback. Nature, 639(8055):609–616, 2025.

[13] Weizhen Li, Jianbo Lin, Zhuosong Jiang, Jingyi Cao, Xinpeng Liu, Jiayu Zhang, Zhenqiang Huang, Qianben Chen, Weichen Sun, Qiexiang Wang, Hongxuan Lu, Tianrui Qin, Chenghao Zhu, Yi Yao, Shuying Fan, Xiaowan Li, Tiannan Wang, Pai Liu, King Zhu, He Zhu, Dingfeng Shi, Piaohong Wang, Yeyi Guan, Xiangru Tang, Minghao Liu, Yuchen Eleanor Jiang, Jian Yang, Jiaheng Liu, Ge Zhang, and Wangchunshu Zhou. Chain-of-agents: End-to-end agent foundation models via multi-agent distillation and agentic rl. 2025. URL https://arxiv.org/abs/2508.13167.

[14] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

[15] Zichen Liu, Changyu Chen, Wenjun Li, Penghui Qi, Tianyu Pang, Chao Du, Wee Sun Lee, and Min Lin. Understanding r1-zero-like training: A critical perspective. arXiv preprint arXiv:2503.20783, 2025.

[16] Qiying Yu, Zheng Zhang, Ruofei Zhu, Yufeng Yuan, Xiaochen Zuo, Yu Yue, Weinan Dai, Tiantian Fan, Gaohong Liu, Lingjun Liu, et al. Dapo: An open-source llm reinforcement learning system at scale. arXiv preprint arXiv:2503.14476, 2025.

[17] Chujie Zheng, Shixuan Liu, Mingze Li, Xiong-Hui Chen, Bowen Yu, Chang Gao, Kai Dang, Yuqiong Liu, Rui Men, An Yang, et al. Group sequence policy optimization. arXiv preprint arXiv:2507.18071, 2025.

[18] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

[19] DeepSeek-AI. Deepseek-v3 technical report, 2024. URL https://arxiv.org/abs/2412.19437.

[20] AIME. Aime problems and solutions, 2025. URL https://artofproblemsolving.com/wiki/index.php/AIME_Problems_and_Solutions.

[21] Shunyu Yao, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik Narasimhan, and Yuan Cao. React: Synergizing reasoning and acting in language models. In International Conference on Learning Representations (ICLR), 2023.

[22] An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. Qwen3 technical report. arXiv preprint arXiv:2505.09388, 2025.

[23] An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, Huan Lin, Jian Yang, Jianhong Tu, Jianwei Zhang, Jianxin Yang, Jiaxi Yang, Jingren Zhou, Junyang Lin, Kai Dang, Keming Lu, Keqin Bao, Kexin Yang, Le Yu, Mei Li, Mingfeng Xue, Pei Zhang, Qin Zhu, Rui Men, Runji Lin, Tianhao Li, Tianyi Tang, Tingyu Xia, Xingzhang Ren, Xuancheng Ren, Yang Fan, Yang Su, Yichang Zhang, Yu Wan, Yuqiong Liu, Zeyu Cui, Zhenru Zhang, and Zihan Qiu. Qwen2.5 technical report, 2025. URL https://arxiv.org/abs/2412.15115.

[24] Jialong Wu, Wenbiao Yin, Yong Jiang, Zhenglin Wang, Zekun Xi, Runnan Fang, Linhai Zhang, Yulan He, Deyu Zhou, Pengjun Xie, and Fei Huang. Webwalker: Benchmarking llms in web traversal. 2025. URL https://arxiv.org/abs/2501.07572.

[25] Qwen Team. Qwq-32b: Embracing the power of reinforcement learning, March 2025. URL https://qwenlm.github.io/blog/qwq-32b/.

[26] Timo Schick, Jane Dwivedi-Yu, Roberto Dessì, Roberta Raileanu, Maria Lomeli, Luke Zettlemoyer, Nicola Cancedda, and Thomas Scialom. Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761, 2023.

[27] Sirui Hong, Mingchen Zhuge, Jonathan Chen, Xiawu Zheng, Yuheng Cheng, Ceyao Zhang, Jinlin Wang, Zili Wang, Steven Ka Shing Yau, Zijuan Lin, et al. MetaGPT: Meta programming for a multi-agent collaborative framework. International Conference on Learning Representations, ICLR, 2024.

[28] Xingyao Wang, Yangyi Chen, Lifan Yuan, Yizhe Zhang, Yunzhu Li, Hao Peng, and Heng Ji. Executable code actions elicit better llm agents. In Forty-first International Conference on Machine Learning, 2024.

[29] Mengkang Hu, Yuhang Zhou, Wendong Fan, Yuzhou Nie, Bowei Xia, Tao Sun, Ziyu Ye, Zhaoxuan Jin, Yingru Li, Qiguang Chen, Zeyu Zhang, Yifeng Wang, Qianshuo Ye, Bernard Ghanem, Ping Luo, and Guohao Li. OWL: Optimized workforce learning for general multi-agent assistance in real-world task automation, 2025. URL https://arxiv.org/abs/2505.23885.

[30] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017.

[31] Lang Feng, Zhenghai Xue, Tingcong Liu, and Bo An. Group-in-group policy optimization for llm agent training. arXiv preprint arXiv:2505.10978, 2025.

[32] Aman Madaan, Niket Tandon, Prakhar Gupta, Skyler Hallinan, Luyu Gao, Sarah Wiegreffe, Uri Alon, Nouha Dziri, Shrimai Prabhumoye, Yiming Yang, et al. Self-refine: Iterative refinement with self-feedback. Advances in Neural Information Processing Systems, 36:46534–46594, 2023.

[33] Noah Shinn, Federico Cassano, Ashwin Gopinath, Karthik Narasimhan, and Shunyu Yao. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems, 36:8634–8652, 2023.

[34] Kefan Song, Amir Moeini, Peng Wang, Lei Gong, Rohan Chandra, Yanjun Qi, and Shangtong Zhang. Reward is enough: Llms are in-context reinforcement learners. arXiv preprint arXiv:2506.06303, 2025.

[35] Giovanni Monea, Antoine Bosselut, Kianté Brantley, and Yoav Artzi. Llms are in-context reinforcement learners. 2024.

[36] Xiangru Tang, Tianrui Qin, Tianhao Peng, Ziyang Zhou, Daniel Shao, Tingting Du, Xinming Wei, Peng Xia, Fang Wu, He Zhu, et al. Agent KB: Leveraging cross-domain experience for agentic problem solving. arXiv preprint arXiv:2507.06229, 2025.