Variational Flow Maps: Make Some Noise for One-Step Conditional Generation

Abbas Mammadov

Department of Statistics, University of Oxford

So Takao

California Institute of Technology

Bohan Chen

California Institute of Technology

Ricardo Baptista

University of Toronto

Morteza Mardani

NVIDIA

Yee Whye Teh

Department of Statistics, University of Oxford

Julius Berner

NVIDIA

Correspond to: Abbas Mammadov [email protected]

Keywords: Machine Learning, ICML

Abstract

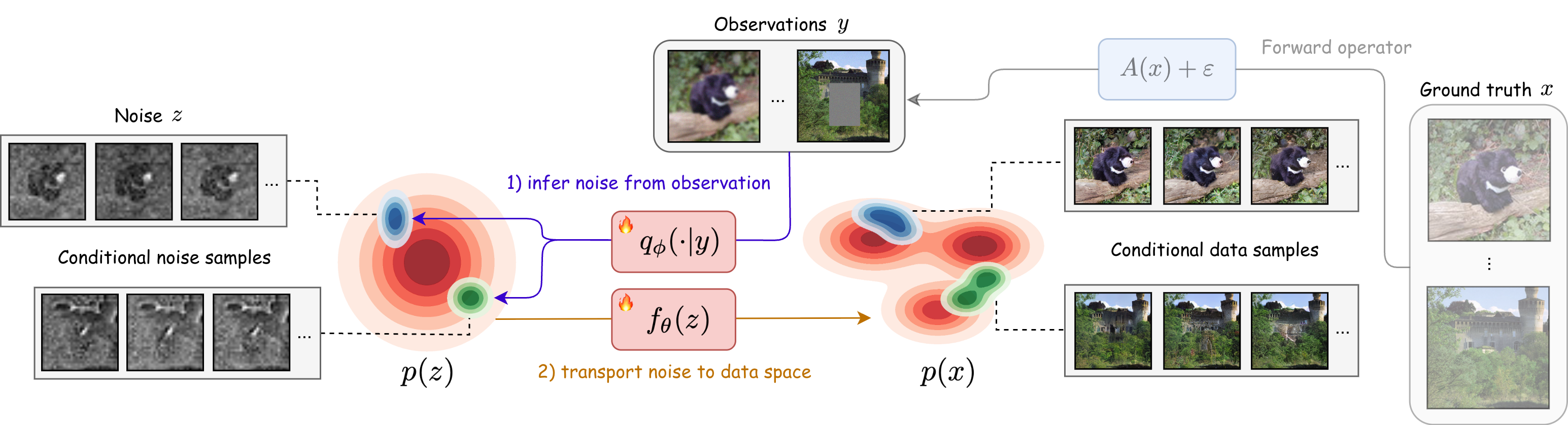

Flow maps enable high-quality image generation in a single forward pass. However, unlike iterative diffusion models, their lack of an explicit sampling trajectory impedes incorporating external constraints for conditional generation and solving inverse problems. We put forth Variational Flow Maps, a framework for conditional sampling that shifts the perspective of conditioning from "guiding a sampling path", to that of "learning the proper initial noise". Specifically, given an observation, we seek to learn a noise adapter model that outputs a noise distribution, so that after mapping to the data space via flow map, the samples respect the observation and data prior. To this end, we develop a principled variational objective that jointly trains the noise adapter and the flow map, improving noise-data alignment, such that sampling from complex data posterior is achieved with a simple adapter. Experiments on various inverse problems show that VFMs produce well-calibrated conditional samples in a single (or few) steps. For ImageNet, VFM attains competitive fidelity while accelerating the sampling by orders of magnitude compared to alternative iterative diffusion/flow models. Code is available at https://github.com/abbasmammadov/VFM .

Executive Summary: Generative models, such as diffusion-based systems, have revolutionized image creation by producing high-fidelity outputs like realistic photos from noise. However, they require dozens to hundreds of iterative steps for each sample, making them computationally expensive and impractical for real-time use in areas like medical imaging, video editing, or scientific simulations. This slowness becomes especially problematic for conditional generation tasks, where outputs must align with specific constraints, such as restoring a blurred or partially obscured image—an inverse problem common in fields like astronomy and diagnostics. As AI applications scale, reducing this computational burden without sacrificing quality is urgent to enable broader adoption and lower energy costs.

This paper introduces Variational Flow Maps (VFMs), a new framework designed to achieve high-quality conditional image generation in just one or a few steps. The core idea is to adapt fast "flow maps"—neural networks that directly transform random noise into images—by learning a simple "noise adapter" that selects appropriate starting noise based on an observation, like a degraded image. Unlike traditional methods that iteratively guide the generation process, VFMs reformulate the problem in the noise space, ensuring the output respects both the constraint and the underlying data distribution.

The authors developed VFMs through a principled training approach that jointly optimizes the flow map and noise adapter using a variational objective, similar to techniques in autoencoders but tailored for flows. They tested it on a 2D toy dataset and high-resolution ImageNet images (256x256 pixels), covering inverse problems like inpainting missing regions, deblurring, and super-resolution. Training involved datasets of up to 50,000 samples, with models based on established architectures fine-tuned over 100-180 epochs on GPU clusters. Key assumptions included Gaussian noise distributions and linear forward operators for observations, ensuring computational tractability.

The main findings highlight VFMs' efficiency and effectiveness. First, on ImageNet tasks, VFMs generated samples with superior perceptual quality—measured by metrics like Fréchet Inception Distance (FID) of 33 versus 63-76 for baselines—and better distribution matching, outperforming iterative diffusion methods by capturing multimodal posteriors (e.g., diverse plausible completions for masked images). Second, sampling speed improved by orders of magnitude: one neural evaluation (about 0.03 seconds) versus 250+ steps (up to a minute) for competitors, while maintaining sharpness and diversity. Third, joint training of the components proved essential; training the adapter alone led to artifacts and poor calibration. A 2D ablation confirmed that this coupling warps the noise space to better fit complex constraints, with metrics like maximum mean discrepancy (MMD) dropping by 20-50% compared to fixed-map baselines. Finally, VFMs extended to reward alignment, fine-tuning models to favor high-reward outputs (e.g., aligned with text prompts) in under 0.5 epochs, achieving top scores on human preference benchmarks.

These results matter because they bridge the gap between fast unconditional generation and constrained real-world applications, potentially cutting inference costs by 100x and enabling on-device AI for tasks like real-time photo editing or uncertainty-aware medical scans. Unlike expectations that one-step methods sacrifice quality, VFMs deliver competitive or better fidelity, especially in perceptual metrics, while preserving uncertainty estimates crucial for decision-making in high-stakes fields. This challenges prior work reliant on slow iterations and opens doors to energy-efficient AI deployment.

Next, organizations should pilot VFMs in targeted applications, such as image restoration pipelines, integrating the open-source code for custom fine-tuning. For broader impact, extend the framework to video or audio by incorporating temporal flows, or relax the Gaussian adapter assumption with more flexible distributions to handle non-linear problems. Trade-offs include balancing speed gains against minor setup complexity for joint training.

Confidence in these findings is high for image inverse problems, backed by rigorous comparisons on standard benchmarks, though results may vary with dataset shifts. Limitations include reliance on pre-trained backbones, sensitivity to hyperparameters like the relaxation parameter (τ), and untested scalability to ultra-high resolutions or non-vision domains; further validation on diverse real-world data would strengthen applicability.

1. Introduction

Section Summary: Diffusion and flow-based methods have become the leading approach for creating high-quality generated content like images and videos, but they are slow because generating even one sample requires many sequential steps, making them impractical for real-time use. Recent efforts, such as consistency models and flow maps, aim to speed this up by enabling generation in just a few steps, yet they struggle with conditional tasks where outputs must match specific constraints, like restoring a blurry image, due to a lack of ways to guide the process. To solve this, the authors introduce Variational Flow Maps, a new framework that learns to select the right starting noise based on the given constraint, allowing fast one- or few-step generation while jointly training the core model and an adapter for better alignment between noise, data, and observations, with extensions for tasks like reward-based sampling.

Diffusion and flow-based methods have emerged as the dominant paradigm for high-fidelity generative modeling, achieving state-of-the-art results across images, audio, and video ([1, 2, 3, 4, 5, 6]). These methods can be understood from the unified perspective of interpolating between two distributions; a simple noise distribution and a complex data distribution, and learning dynamics based on ordinary or stochastic differential equations (ODE/SDEs) that transport one to the other [7]. However, these share a fundamental limitation that generating a single sample requires dozens to hundreds of sequential function evaluations, creating high computational cost for real-time applications.

To address this issue, recent research have sought to dramatically reduce this sampling cost. Consistency models ([8]), for example, learn to map any point on the flow trajectory directly to the corresponding clean data, enabling few-step generation. Despite their promise, consistency models often suffer from training instabilities and frequently require re-noising steps for multi-step sampling to correct the drift trajectory, complicating the inference process [9]. Flow maps ([10, 11]) offer an alternative framework that seeks to learn ODE flows directly, by training on the mathematical structure of such flows. For example, the state-of-the-art Mean Flow model [12] presents a particular parameterisation of flow maps based on average velocities, and trained on the so-called Eulerian condition satisfied by ODE flows.

While flow maps excel at unconditional few-steps generation, many applications require conditional generation to produce samples that satisfy external constraints. Inverse problems provide a canonical example: given a degraded observation $y = A(x) + \varepsilon$ (e.g., a blurred, masked, or noisy image), we seek to recover plausible original signals $x$ consistent with both the observation and our learned prior $p(x)$. Iterative generative models naturally accommodate such conditioning through guidance mechanisms [13, 14, 15, 16], where the trajectory is iteratively nudged toward the conditional target. Flow maps, despite their efficiency, lack this iterative refinement mechanism: once the noise vector $z$ is chosen, the generated sample $z \mapsto x$ is fixed; there is no intermediate state to guide, nor a trajectory to steer, hence there is no opportunity to incorporate measurement information during generation. This "guidance gap" has limited flow maps to unconditional settings, leaving their potential for conditional generation largely unexplored.

To fill this "guidance gap", we introduce Variational Flow Maps (VFMs), a framework for conditional sampling that is compatible with one/few-step generation using flow maps. Our approach is based on the following perspective: rather than steer the generation process itself, we can find the noise $z$ to generate from, as each $z$ deterministically maps to a data $x = f_\theta(z)$ (see Figure 1). Specifically, given an observation $y$, we seek to produce a distribution of $z$ 's, such that each $x = f_\theta(z)$ is a candidate data that produced $y$. Formulating this as a Bayesian inverse problem, we can derive a principled variational training objective to jointly learn the flow map $f_\theta$ and a noise adapter model $q_\phi$ that produces appropriate noise $z$ from observations $y$.

We note the resemblance to variational autoencoders (VAEs) ([17]), where $q_\phi$ plays the role of an encoder that takes $y$ to a latent $z$, and $f_\theta$ acts as a decoder from $z$ to data $x$. Our key innovation is in learning the alignment of all three variables $(x, y, z)$ simultaneously, allowing updates to $q_\phi$ to reshape the noise-to-data coupling by $f_\theta$ and vice versa. Notably, we observe that joint training can compensate for limited adapter expressivity by learning a noise-to-data coupling that makes the conditional posterior easier to represent in latent space.

Altogether, our contributions can be summarized as follows:

- We introduce Variational Flow Maps (VFMs), a new paradigm enabling one and few-step conditional generation with flow maps by learning an observation-dependent noise sampler.

- We derive a principled variational objective for joint adapter/flow map training, linking the mean flow loss to likelihood bounds.

- We demonstrate empirically and theoretically that joint training yields better noise-data coupling to fit complex posteriors in data space using simple variational posteriors in noise space.

- We extend the framework to general reward alignment, introducing a fast and scalable method that fine-tunes pre-trained flow maps to sample from reward-tilted distributions in a single step.

2. Background

Section Summary: This section provides background on key concepts in generative modeling and inference. It first explains flow-based models, which transform simple noise into realistic data using mathematical flows, and introduces flow maps as a faster way to generate samples in just a few steps without lengthy computations. It then covers inverse problems, where noisy observations are used to recover original signals through Bayesian methods relying on generative priors, and discusses variational inference, which approximates complex probability distributions via neural networks, as seen in variational autoencoders that encode and decode data efficiently.

We review essential backgrounds on flow maps for few-step generation, the Bayesian formulation of inverse problems, and variational inference with amortization.

2.1 Flow-based Generative Models and Flow Maps

Flow-based generative models learn to transport samples from a prior distribution $p_1(z) = \mathcal{N}(0, I)$ to the data distribution $p_0(x) = p_{\text{data}}(x)$ via an ODE:

$ \frac{dx_t}{dt} = v_t(x_t), \quad t \in [0, 1], $

where $v_t$ is a time-dependent velocity field. Flow matching ([5, 6, 7]) provides a training objective to learn $v_t$: given $x_0 \sim p_{\text{data}}$ and $x_1 \sim \mathcal{N}(0, I)$, we construct a linear interpolant $x_t = (1-t)x_0 + tx_1$ with conditional velocity $v_t = x_1 - x_0$. Then, $v_\theta(x_t, t) \approx v_t(x_t)$ is trained via:

$ \mathcal{L}{\text{FM}}(\theta) = \mathbb{E}{x_0, x_1, t}\left[|v_\theta(x_t, t) - (x_1 - x_0)|^2 \right]. $

At inference time, samples are generated by integrating the ODE backwards from $t=1$ to $t=0$, typically requiring 50–250 function evaluations.

To accelerate sample generation, flow maps ([10, 11]) directly learn the solution operator of the ODE, instead of the instantaneous velocity $v_t$. Denoting by $\phi_{t, s}: x_t \mapsto x_s$ the backward flow of the ODE, the two-time flow map $f_\theta(x_t, s, t)$ learns to approximate $\phi_{t, s}(x_t)$ for any $0 \leq s < t \leq 1$. This enables generation with an arbitrary number of steps chosen post-training, e.g. a single evaluation $f_\theta(x_1, 0, 1)$ produces a one-step sample, while intermediate evaluations can be composed for multi-step refinement.

One such approach to learn flow maps is mean flows ([12]), which introduce the average velocity as an alternative characterization:

$ u(x_t, r, t) := \frac{1}{t - r} \int_r^t v_s(\phi_{t, s}(x_t)) , ds. $

The average velocity satisfies $x_r = x_t - (t-r) \cdot u(x_t, r, t)$, enabling one-step generation via $x_0 = x_1 - u(x_1, 0, 1)$. Thus the corresponding flow map is given by $f_\theta(x_t, r, t) = x_t - (t-r) \cdot u_\theta(x_t, r, t)$. For simplicity, we denote the one-step flow map as $f_\theta(z) := z - u_\theta(z, 0, 1)$, mapping noise $z \sim \mathcal{N}(0, I)$ directly to data $x = f_\theta(z)$.

2.2 Inverse Problems

Inverse problem seeks to recover an unknown signal $x \in \mathbb{R}^d$ from noisy observations, given by

$ y = A(x) + \varepsilon, \quad \varepsilon \sim \mathcal{N}(0, \sigma^2 I), $

where $A: \mathbb{R}^d \to \mathbb{R}^m$ is a known forward operator and $\sigma > 0$ is the noise level. Given a prior $p(x)$ over signals, the Bayesian formulation seeks the posterior distribution:

$ p(x|y) \propto \exp\left(-\frac{|y - A(x)|^2}{2\sigma^2} \right) p(x).\tag{1} $

When $p(x)$ is defined implicitly by a generative model, guidance-based methods ([14, 16]) approximate posterior sampling by incorporating likelihood gradients $\nabla_x \log p(y|x)$ at each denoising step. While effective, these methods inherently require iterative refinement and cannot be applied to one-step flow maps.

2.3 Variational Inference and Data Amortization

Variational inference seeks to approximate an intractable posterior $p(z|x)$ with a tractable disribution $q(z|x)$ by minimizing the Kullback-Leibler (KL) divergence:

$ \text{KL}(q(z|x) | p(z|x)) := \mathbb{E}{q\phi}[\log q(z|x) - \log p(z|x)]. $

Extending this, amortized inference uses a neural network to directly predict the variational distribution from the conditioning variable $x$, rather than optimizing separately for each instance. For example, if we choose the variational family to be Gaussians with diagonal covariance, then amortized inference learns a neural network $x \mapsto (\mu_\phi(x), \sigma_\phi(x))$ with parameter $\phi$, such that $q_\phi(z|x) = \mathcal{N}(z|\mu_\phi(x), \mathtt{diag}(\sigma^2_\phi(x)))$ is close to $p(z|x)$ under the KL divergence.

A prototypical example is the Variational Autoencoder (VAE) ([17]), which learns both an encoder $q_\phi(z|x)$ and a decoder $p_\theta(x|z)$ by optimizing the VAE objective $\mathcal{L}{\text{VAE}}(\theta, \phi) = \mathbb{E}{p(x)}[\ell(\theta, \phi; x)]$, where

$ \ell(\theta, \phi; x) := -\mathbb{E}{q\phi(z|x)}[\log p_\theta(x|z)] + \text{KL}(q_\phi(z|x) | p(z)), $

is the negative evidence lower bound (ELBO), yielding $q_\phi(z | x) \approx p_\theta(z | x) \propto p_\theta(x|z)p(z)$ for any $x \sim p(x)$. Probabilistically, the VAE objective can be derived from the KL divergence between two representations of the joint distribution of $(x, z)$, i.e., $\text{KL}(q_\phi(z, x) || p_\theta(z, x))$, where $q_\phi(z, x) = q_\phi(z | x)p(x)$ and $p_\theta(z, x) = p_\theta(x | z)p(z)$. This perspective will be useful in the derivation of our loss later.

3. Variational Flow Maps (VFMs)

Section Summary: Variational Flow Maps (VFMs) offer a method for generating data in one step based on observations by reworking a complex math problem into a simpler "noise space," using a flow map to connect random noise to data and approximating the likely noise patterns with a technique similar to variational autoencoders. While a basic version of this approach falls short because it ignores important flow map structures and uses a too-simple approximation for the noise patterns, the improved strategy trains the flow map and the noise approximator together, adding a term to ensure generated data stays true to the original dataset. This joint training, supported by math showing it better captures the average expected data for any observation, avoids biases from separate training and makes the process more reliable.

Our proposed method for one-step conditional generation, which we term Variational Flow Maps (VFMs), is based on reformulating the inverse problem Equation 1 in noise space. To motivate our methodology, we begin with a simple “strawman" approach that is intuitively sound but ultimately insufficient for our task: Let $x = f_\theta(z)$ denote a pretrained flow map. Then the posterior over latent noise variables induced by the inverse problem can be written as

$ p(z|y) \propto \exp\left(-\frac{|y - A(f_\theta(z))|^2}{2\sigma^2} \right) p(z).\tag{2} $

Although the posterior Equation 2 is intractable, we can approximate it in the same spirit as VAEs. In particular, introducing a variational posterior $q_\phi(z | y) \approx p(z | y)$, we minimize the objective $\mathcal{L}{\text{VAE}}(\theta, \phi) = \mathbb{E}{p(y)}[\ell(\theta, \phi ; y)]$, where,

$ \ell(\theta, \phi ; y) := -\mathbb{E}{q\phi(z|y)}[\log p_\theta(y|z)] + \text{KL}(q_\phi(z|y) | p(z)),\tag{3} $

and $p_\theta(y | z) := \mathcal{N}(y | A(f_\theta(z)), \sigma^2 I)$, the likelihood in noise space. A key advantage of working in the noise space rather than the original data space is that the noise prior $p(z)$ is simple and tractable (commonly $\mathcal{N}(0, I)$, which we assume hereafter). Thus, imposing a conjugate variational posterior, such as $q_\phi(z|y) = \mathcal{N}(z|\mu_\phi(y), \mathtt{diag}(\sigma^2_\phi(y)))$, makes the computation of the KL term in Equation 3 tractable.

However, the objective Equation 3 has two major limitations in our setting. First, it does not impose structural properties of flow maps, such as the semi-group property ([11]), known to be crucial for learning said maps. Second, when the flow map $f_\theta$ is pretrained and held fixed, a Gaussian variational posterior $q_\phi(z|y)$ may not be expressive enough to approximate the true posterior $p(z | y)$ accurately.

Motivated by this observation, we pursue training the parameters $\theta$ and $\phi$ jointly. By adapting the map $f_\theta : z \mapsto x$ alongside learning the variational posterior $q_\phi$, we can compensate for the limited expressibility of $q_\phi(z|y)$ by reshaping the correspondence between noise and data. In the next section, we formalize this idea by deriving a modified objective that enables joint training of $(\theta, \phi)$ while explicitly incorporating additional structural constraints to the flow.

3.1 Joint Training of the Flow Map and Noise Adapter

We now propose a joint training strategy that simultaneously aligns the data variable $x$, the observation $y$, and the latent noise variable $z$. Following the probabilistic perspective underlying VAEs (see Section 2.3), we achieve this by matching the following two factorizations of $p(x, y, z)$:

$ \begin{align} q_\phi(z | y) p(y | x) p(x) \approx p_\theta(x, y | z) p(z). \end{align}\tag{4} $

For simplicity, we assume a Gaussian decoder of the form

$ \begin{align} p_\theta(x, y | z) != !\mathcal{N}(x | f_\theta(z), \tau^2 I) , \mathcal{N}(y | A(f_\theta(z)), \sigma^2 I), \end{align}\tag{5} $

where we introduce a new hyperparameter $\tau > 0$ that relaxes the correspondence between $x$ and $z$. Taking the KL divergence between the two representations in Equation 4 yields

$ \begin{align} &\text{KL}(q_\phi(z | y) p(y | x) p(x) , ||, p_\theta(x, y | z) p(z)) \ &\leq \frac{1}{2\tau^2}\mathcal{L}{\text{data}}(\theta, \phi) + \frac{1}{2\sigma^2} \mathcal{L}{\text{obs}}(\theta, \phi) + \mathcal{L}_{\text{KL}}(\phi), \nonumber \end{align}\tag{6} $

(see Appendix A.1 for details), where

$ \begin{align} \mathcal{L}{\text{data}}(\theta, \phi) &= \mathbb{E}{q_\phi(z | y) p(y | x)p(x)}\left[|x - f_\theta(z)|^2\right], \ \mathcal{L}{\text{obs}}(\theta, \phi) &= \mathbb{E}{q_\phi(z | y) p(y)}\left[|y - A(f_\theta(z))|^2\right], \ \mathcal{L}{\text{KL}}(\phi) &= \mathbb{E}{p(y)}\left[\text{KL}\left(q_\phi(z|y) , ||, p(z)\right)\right]. \end{align}\tag{7} $

We note that relative to 3, this formulation gives rise to an additional term $\mathcal{L}{\text{data}}(\theta, \phi)$ that measures closeness of the reconstructed state $f\theta(z)$ and the ground-truth data $x$, where noise $z$ is drawn from the noise adapter $q_\phi(z|y)$, with observation $y$ taken from $x$. This term couples the adapter model and flow map more tightly, encouraging the samples ${f_\theta(z)}{z \sim q{\phi}(z|y)}$ to remain consistent with data manifold.

In the following result, we identify a concrete benefit of jointly learning $f_\theta$ and $q_\phi$ to target the true posterior $p(x|y)$, under a simple Gaussian setting. While this does not claim that the distribution of samples ${f_\theta(z)}{z \sim q{\phi}(z|y)}$ matches $p(x|y)$ exactly, it shows that joint training can at least match the posterior mean for every observation $y$. This sharply contrasts with separately training $f_\theta$ and $q_\phi$, which leads to bias almost surely, even at the level of the posterior mean.

Proposition 1

Assume that $p(z) = \mathcal{N}(z | 0, I)$, $p(x) = \mathcal{N}(x | m, C)$ for some $m\in \mathbb{R}^d$ and $C \in \mathbb{R}^{d \times d}$ symmetric positive definite, $f_\theta(z) = K_\theta z + b_\theta$ and $q_\phi(z|y) = \mathcal{N}(z|\mu_\phi(y), \mathtt{diag}(\sigma^2_\phi(y)))$. Then, for any linear observation $y = Ax + \varepsilon$, we have that

- Separate Training: Training $f_\theta$ first to match $p(x)$ and then training $q_\phi$ via loss Equation 6 with $\theta$ fixed almost surely fails to match the posterior mean, i.e., $\mathbb{E}{z \sim q{\phi}(z|y)} [f_\theta(z)] \neq \mathbb{E}_{p(x|y)}[x]$.

- Joint Training: Joint optimization of $f_\theta$ and $q_\phi$ via loss Equation 6 recovers the true posterior mean $\mathbb{E}{p(x|y)}[x]$ exactly via the procedure $\mathbb{E}{z \sim q_\phi(z|y)}[f_\theta(z)]$.

Proof: See Proposition 12 in Appendix A.2.

Next, we relate the new term $\mathcal{L}_{\text{data}}(\theta, \phi)$ in Equation 6 to the mean flow loss [12], which imposes structural constraints on the flow map.

Connection to mean flows.

We briefly recall the mean flow objective from [12]. Denoting

$ \begin{align} &\mathcal{E}\theta(x, z, r, t) := (t-r) \left[u\theta(\psi_t(x, z), r, t) - \dot{\psi}_t(x, z)\right], \nonumber \ &\text{where} \quad \psi_t(x, z) := (1 - t)x + t z, 0\le r\le t\le 1, \end{align} $

is the linear interpolant between data $x$ and noise $z$, the mean flow loss is given by

$ \begin{align} &\mathbb{E}{x, z, r, t}\left[|\partial_t \mathcal{E}\theta(x, z, r, t)|^2\right] \approx \mathcal{L}{\text{MF}}(\theta) \ &, := \mathbb{E}{x, z, r, t}\left[|u_\theta(\psi_t(x, z), r, t) - \mathtt{stopgrad}(u_{\text{tgt}})|^2\right], \nonumber \end{align}\tag{8} $

where $u_{\text{tgt}} := \dot{\psi}t(x, z) - (t-r)\frac{d}{dt} u\theta(\psi_t(x, z), r, t)$ is the effective regression target. Below, we establish a direct link between this objective and the term $\mathcal{L}_{\text{data}}(\theta, \phi)$ in Equation 6.

Proposition 2

Let the noise-to-data map $f_\theta$ be defined by $f_\theta(z) := z - u_\theta(z, 0, 1)$. Then we have

$ \begin{align} |x - f_\theta(z)|^2 \leq \int^1_0 |\partial_t \mathcal{E}_\theta(x, z, 0, t)|^2 dt. \end{align} $

Proof: See Appendix A.3.

This result shows that the mean flow loss in the anchored case $r = 0$ and $t \sim U([0, 1])$ acts as an upper bound proxy to the reconstruction error $|x - f_\theta(z)|^2$ in Equation 7. This specialized setting targets direct one-step transport to $r=0$. Motivated by this connection, we opt to use the general mean flow loss Equation 8, which distributes learning over $(r, t)$ to additionally learn intermediate flow maps $f_\theta(x_t, r, t)$. While this does not ensure optimality for the one-step transport $x = f_\theta(z, 0, 1)$, in practice, it yields strong empirical performance and furthermore provides functionality for multi-step sampling (Section 3.3). Summarizing, we propose to train $(\theta, \phi)$ using the following objective:

$ \begin{align} \mathcal{L}{\theta, \phi} := \frac{1}{2\tau^2} \mathcal{L}{\text{MF}}(\theta; \phi) + \frac{1}{2\sigma^2} \mathcal{L}{\text{obs}}(\theta, \phi) + \mathcal{L}{\text{KL}}(\phi), \end{align}\tag{9} $

where the mean flow term is evaluated using $(x, z)$-pairs sampled from the joint distribution $\pi_\phi(x, z) := \int q_\phi(z | y)p(y | x)p(x) dy$, in accordance with Equation 7. This dependence induces an implicit coupling between $\theta$ and $\phi$. To promote stable optimization, we further limit the interaction to this term by replacing $\theta$ in the observation loss $\mathcal{L}{\text{obs}}$ with its exponential moving average (EMA), yielding $\mathcal{L}{\text{obs}}(\theta^-, \phi)$, where $\theta^-$ denotes the EMA of $\theta$.

Remark

Our framework can also be related to consistency model training by Proposition 6.1 in [18]. In this case, the mean flow loss in Equation 9 is replaced by an appropriate consistency loss.

Input: Observation $y$, inverse problem class $c$, time partition $1=t_0 > \cdots > t_K=0$, adapter mean and standard deviation $\mu_\phi, \sigma_\phi$, mean flow model $u_\theta$

$\epsilon \sim \mathcal{N}(0, I)$

$z \gets \mu_\phi(y, c) + \sigma_\phi(y, c)\odot \epsilon$

$x \gets z$

**for** $k=1$ to $K$ **do**

$x \gets x + (t_k - t_k-1) u_\theta(x, t_k, t_k-1)$

**end for**

Output: $x$

Input: Inverse problem classes $\mathcal{A}_1, \ldots, \mathcal{A}_C$, observation noise standard deviation $\sigma$, data misfit tolerance $\tau$, conditional noise proportion $\alpha$, learning rates $\eta_1, \eta_2$, EMA rate $\mu$, adaptive loss constants $\gamma, p$

$\theta^- \leftarrow \mathtt{stopgrad}(\theta)$

**repeat**

Sample $c \sim p(c)$, $x \sim p(x)$

Sample forward operator $A_c^\omega \in \mathcal{A}_c$

$y \leftarrow A_c^\omega x + \varepsilon, \quad \varepsilon \sim \mathcal{N}(0, \sigma^2I)$

$z \gets \mu_\phi(y, c) + \sigma_\phi(y, c)\odot \epsilon, \quad \epsilon \sim \mathcal{N}(0, I)$

$\mathcal{L}_{\text{obs}}(\phi) \leftarrow \|y - A_c^\omega(f_{\theta^-}(z, 0, 1))\|^2$

$\mathcal{L}_{\text{KL}}(\phi) \leftarrow \text{KL}\!\left(\mathcal{N}(\mu_\phi(y, c), \sigma^2_\phi(y, c)I) \,||\,\mathcal{N}(0, I)\right)$

Sample $w \sim U([0, 1])$ and $(r, t) \sim p(r, t)$

**if** $w > \alpha$ **then**

$z \sim \mathcal{N}(0, I)$

**end if**

$\mathcal{L}_{\text{MF}}(\theta; \phi) \leftarrow \mathrm{MeanFlowLoss}(x, z, r, t)$

$\mathcal{L}(\theta, \phi) \leftarrow \frac{1}{2\tau^2} \mathcal{L}_{\text{MF}}(\theta; \phi) + \frac{1}{2\sigma^2} \mathcal{L}_{\text{obs}}(\theta) + \mathcal{L}_{\text{KL}}(\phi)$

$\mathcal{L}(\theta, \phi) \leftarrow \mathcal{L}(\theta, \phi) / \mathtt{stopgrad}(\|\mathcal{L}(\theta, \phi) + \gamma\|^p)$

$\theta \leftarrow \theta - \eta_1 \nabla_{\theta} \mathcal{L}(\theta, \phi)$

$\phi \leftarrow \phi - \eta_2 \nabla_{\phi} \mathcal{L}(\theta, \phi)$

$\theta^- \leftarrow \mathtt{stopgrad}(\mu \theta^- + (1 - \mu) \theta)$

**until** convergence

3.2 Amortizing Over Multiple Inverse Problems

In many applications, one is interested not in a single inverse problem defined by a fixed forward operator $A$, but rather a family of inverse problems. To accommodate this setting, we extend our framework by amortizing inference over multiple forward operators $A_1, \ldots, A_C$. This allows for a single model to handle multiple tasks, such as denoising, inpainting, and deblurring.

To achieve this, we consider a class-conditional noise adapter $q_\phi(z | y, c) !=! \mathcal{N}(z | \mu_\phi(y, c), \mathtt{diag}(\sigma^2_\phi(y, c)))$, where $c \in {1, \ldots, C}$ is a categorical variable indicating which forward operator $A_c$ was used to generate the observation $y$. Conditioning the adapter on $c$ enables the model to adapt its posterior approximation to the specific structure of each inverse problem. We may further extend this by amortizing over inverse problem classes, where $c$ now defines a collection of inverse problems $\mathcal{A}c = {A_c^\omega}{\omega \in \Omega}$. For example, these can define a family of random masks or a distribution of blurring kernels.

3.3 Single and Multi-Step Conditional Sampling

Given a trained noise adapter $q_\phi(z|y)$ and flow map $f_\theta(z)$, samples from the data-space posterior $p(x|y)$ can be approximately generated by first sampling $z \sim q_\phi(z|y)$ and then mapping $x = f_\theta(z)$. The validity of this procedure is justified by the following result.

Proposition 3

Let the joint distribuion of $(x, y, z)$ be given by $p(x, y, z) = p_\theta(x, y | z) p(z)$, for $p_\theta(x, y | z)$ in Equation 5. Then, for any fixed observation $y$, the data-space posterior $p(x | y)$ converges weakly to the pushforward of the noise-space posterior $p(z | y)$ under the map $f_\theta$, as $\tau \rightarrow 0$.

Proof: See Appendix A.4.

The proposition states that in the limiting case $\tau \rightarrow 0$, sampling from $p(x|y)$ is equivalent in distribution to first sampling $z \sim p(z|y)$ (approximated by $q_\phi(z|y)$) and then applying $x = f_\theta(z)$. While sound in theory, we find that when $\tau \ll \sigma$, joint optimization of $(\theta, \phi)$ becomes difficult. This is likely due to the RHS distribution in Equation 4 concentrating sharply around the submanifold ${(x, y, z) : x = f_\theta(z)}$, making it nearly impossible to match using the LHS representation of Equation 4, which remains a full distribution over $(x, y, z)$. In practice, we find that using $\tau$ larger than $\sigma$ yields stable optimization and the best empirical results.

Sample quality can also be improved by considering multi-step sampling instead of single-step sampling, as described in Algorithm 1. Empirically, high-quality samples can be obtained with only a small number of steps $K$, substantially fewer than the number of integration steps required for solving a full generative ODE or SDE.

3.4 Other Training Considerations

Mixing in the unconditional loss:

We observe that training solely using the objective Equation 9 can degrade the quality of unconditional samples $x = f_\theta(z)$, with $z \sim \mathcal{N}(0, I)$. This behaviour arises because latent samples drawn from $q_\phi(z | y)$ retain structural details of $y$, and therefore are not fully representative of pure noise drawn from $\mathcal{N}(0, I)$. Thus, during training, the mean flow loss is never evaluated on pure noise, imparining the model to generate unconditional samples. To address this, we modify the computation of the mean flow loss $\mathcal{L}{\text{MF}}(\theta; \phi)$, by sampling $(x, z) \sim \pi\phi(x, z)$ with probability $\alpha$ and with remaining probablility $1-\alpha$, we sample $z \sim \mathcal{N}(0, I)$ independently of $x$.

Adaptive loss:

Similar to the mean flow training procedure of [12], we consider an adaptive loss scaling to stabilize optimization. Specifically, we use the rescaled loss $w \cdot \mathcal{L}{\theta, \phi}$, where the weight $w$ is given by $w = 1/\mathtt{stopgrad}(|\mathcal{L}{\theta, \phi} + \gamma|^p)$ for constants $\gamma, p > 0$.

We summarize the full training procedure in Algorithm 2.

4. Experiments

Section Summary: In the experiments section, researchers test their Variational Flow Matching (VFM) method on a simple 2D toy problem using a checkerboard pattern of data points, where only one coordinate is observed with added noise, comparing it to baseline approaches that either fix the core model or train it without constraints. VFM outperforms these baselines by better capturing the two-peaked uncertainty in the data while keeping samples on the intended pattern, as shown through metrics measuring prediction accuracy, uncertainty, and structure preservation, with key improvements from joint training adjustments and stabilizing techniques. The method also demonstrates strong results on real-world image tasks like filling in missing parts or sharpening blurry photos from the ImageNet dataset, producing diverse and realistic outputs that surpass existing tools.

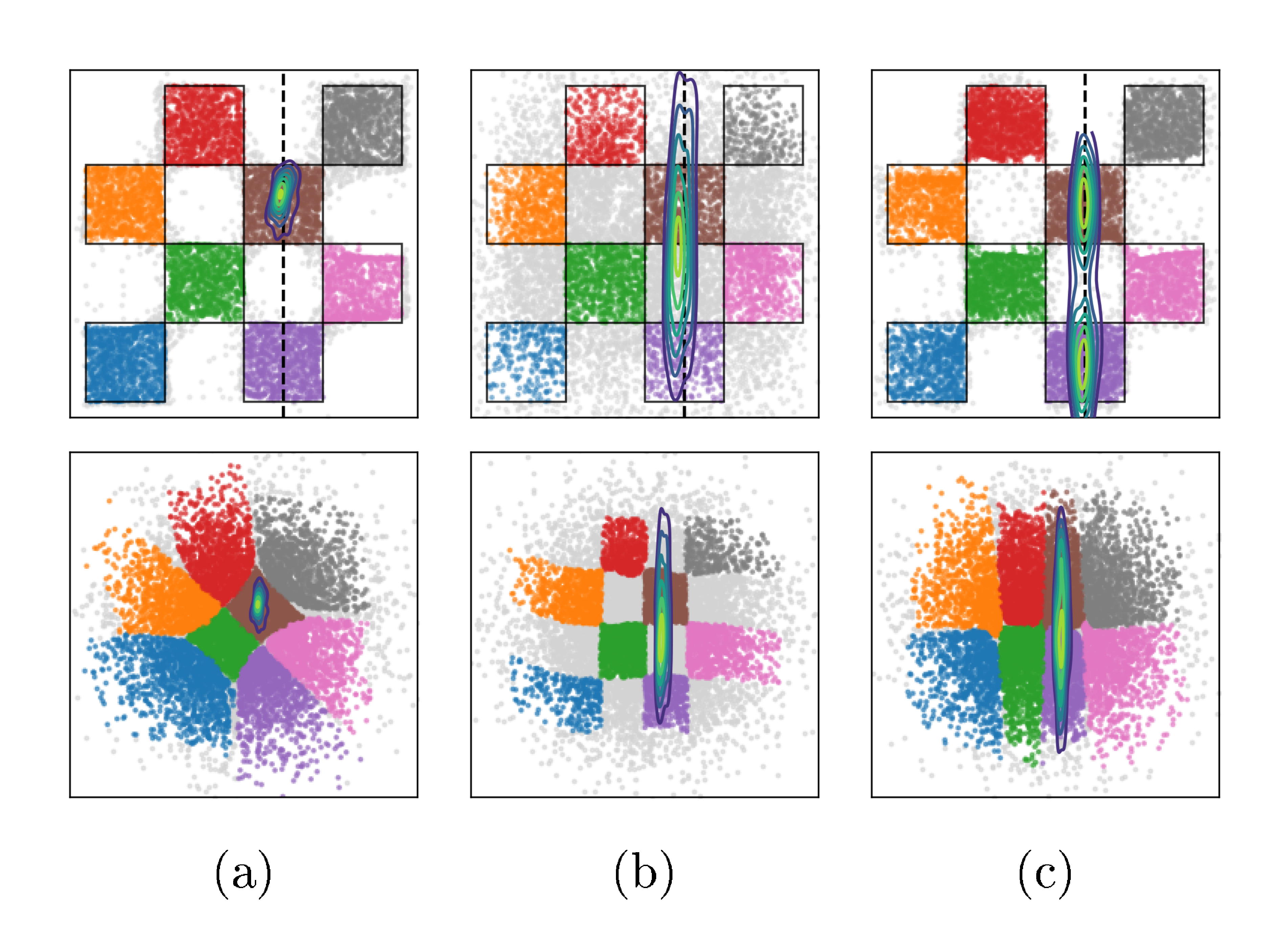

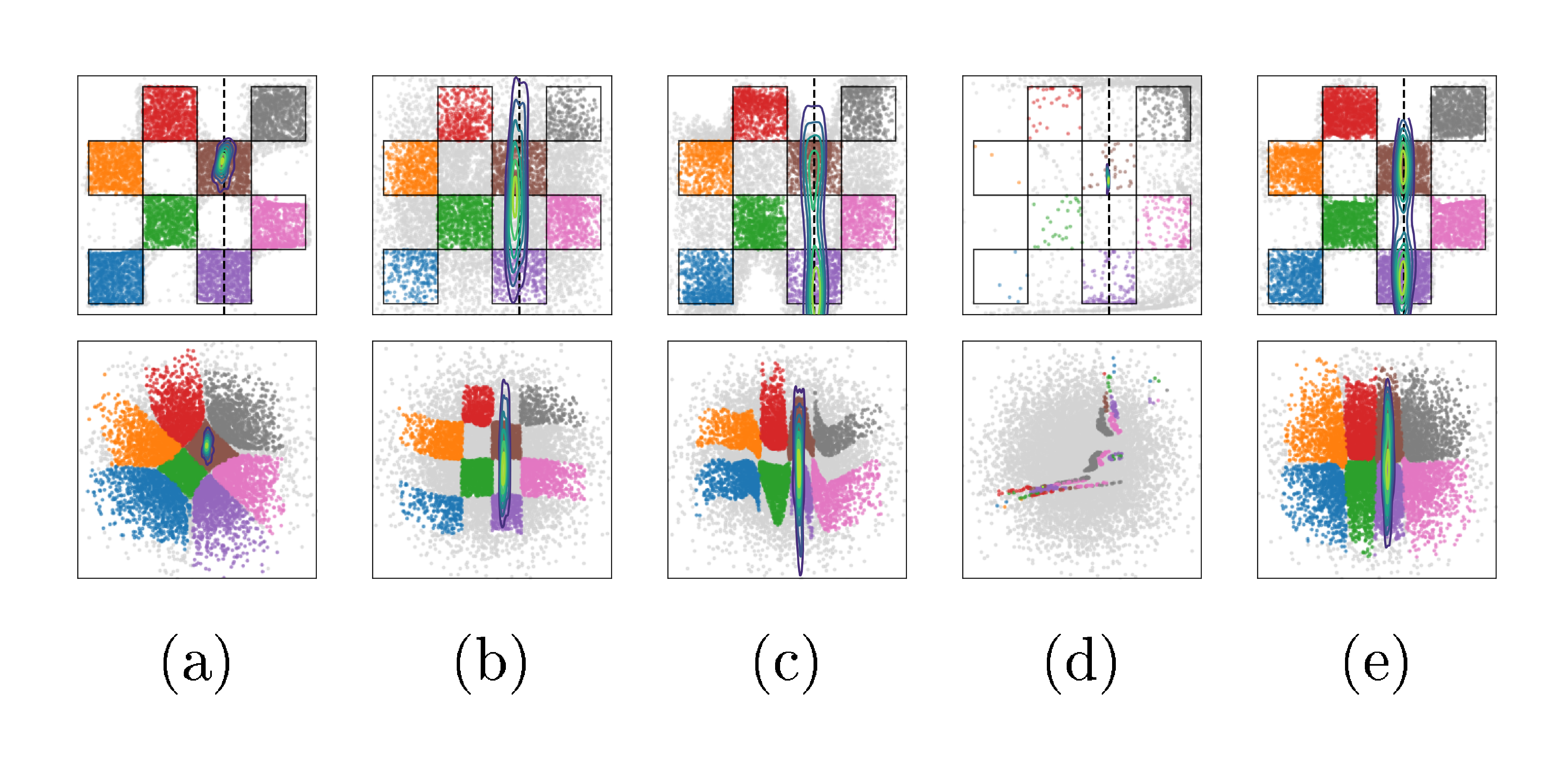

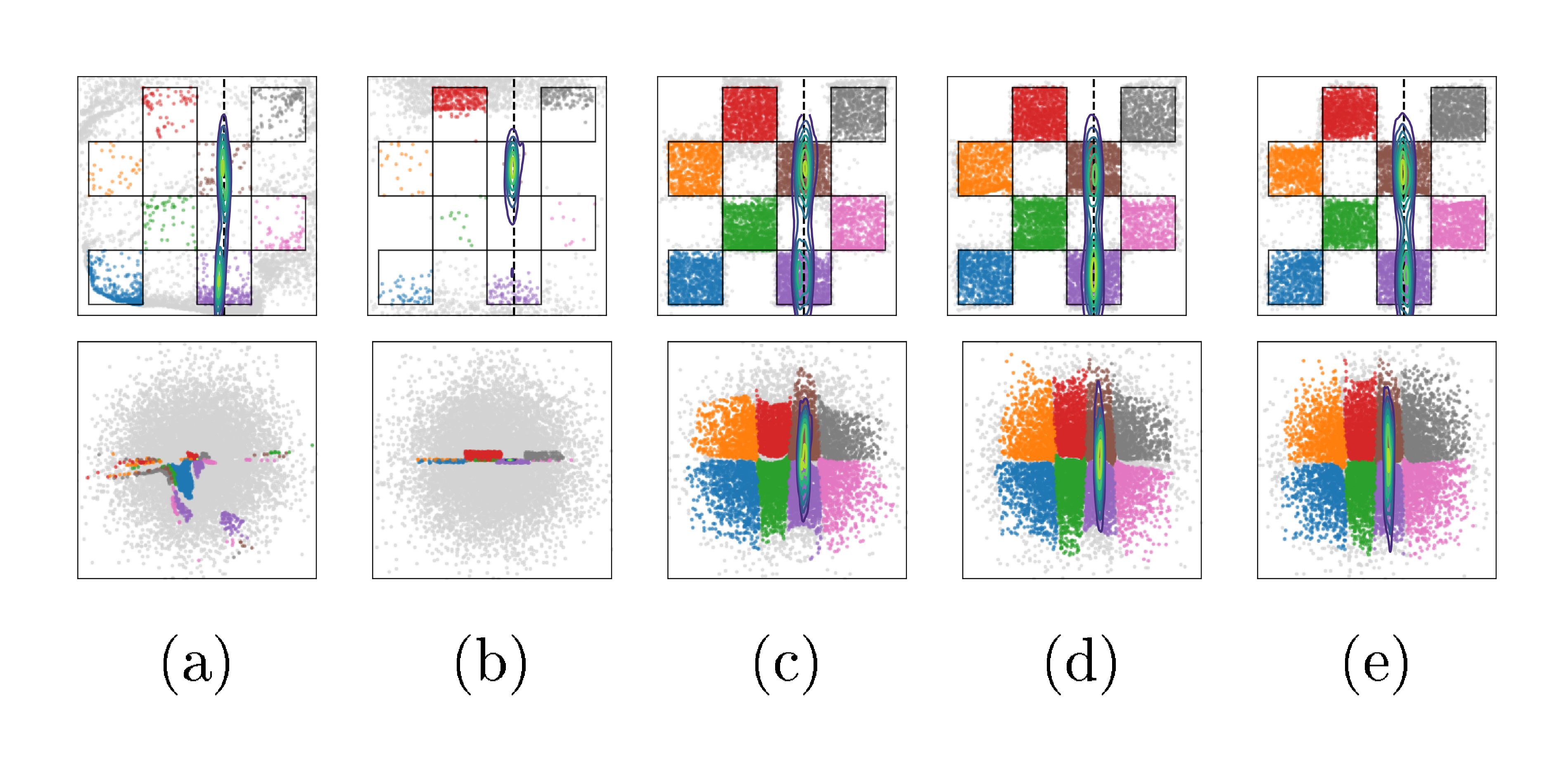

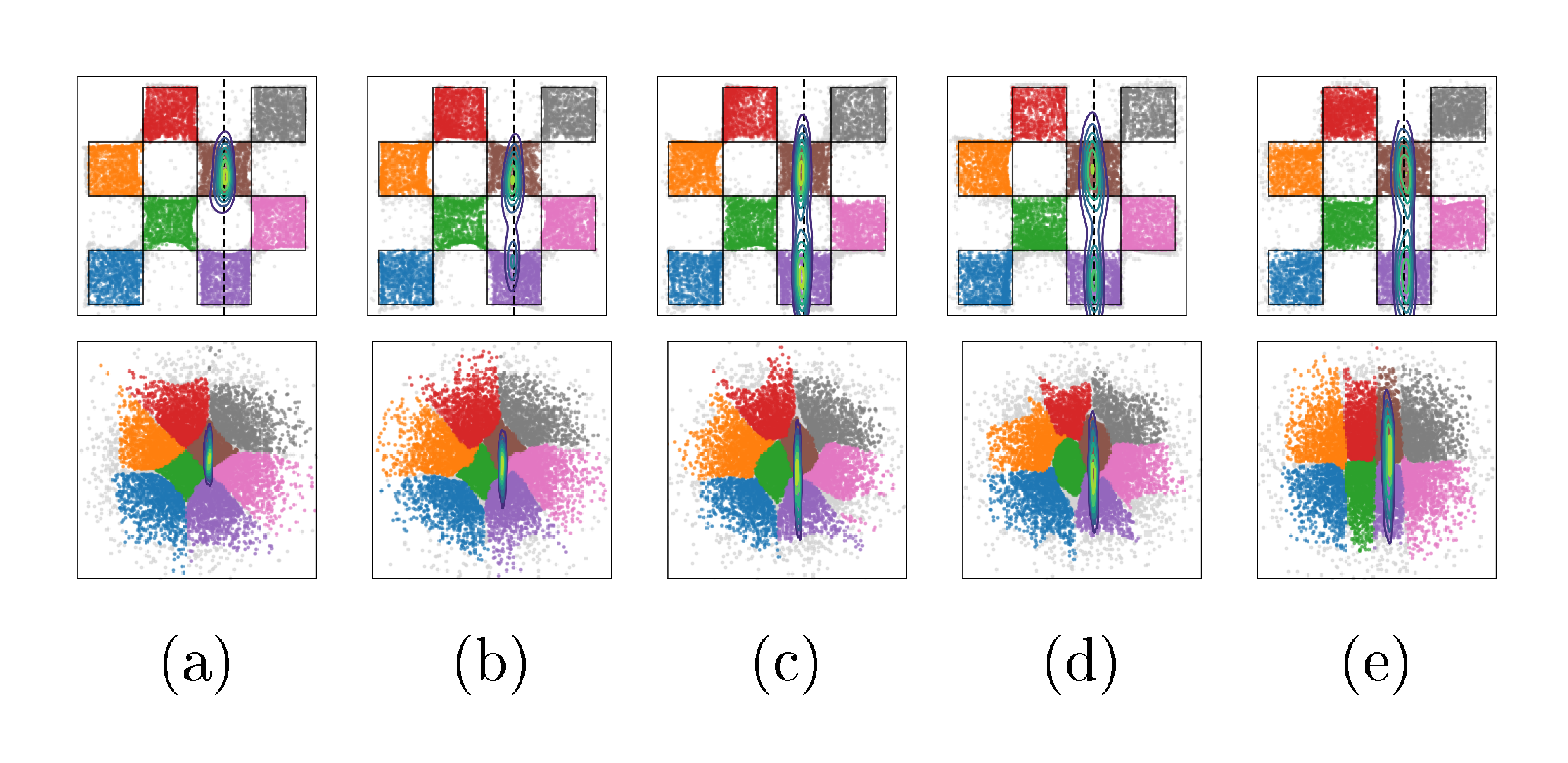

4.1 Illustration on a 2D Example

In this experiment, we illustrate the effects of jointly training $(\theta, \phi)$ on a toy 2D example, and perform ablations on key design choices in VFM. Specifically, we take $p(x)$ to be a $4\times4$ checkerboard distribution supported on $[-2, 2]\times[-2, 2]$. For the forward problem, we observe only the first coordinate, i.e. $y = Ax + \varepsilon$ with $A=\begin{pmatrix}1 & 0\end{pmatrix} $ and $ \varepsilon\sim\mathcal N(0, \sigma^2) $ with $ \sigma=0.1$. We refer the readers to Appendix B.1 for details on the experimental setup.

Baselines and evaluation metrics.

We consider two baselines: the first, frozen- $\theta$ trains only the noise adapter $q_\phi(z|y)$ via loss Equation 3 (amortized over $y$), while keeping $\theta$ fixed to a pretrained flow map. The second, unconstrained- $\theta$ , optimizes the same objective but learns $\theta$ jointly with $\phi$. These baselines are chosen to illustrate (i) the effect of joint optimization of $\theta$ and $\phi$, and (ii) the failure mode that can occur when $\theta$ is trained without the structural constraints imposed by the mean flow loss.

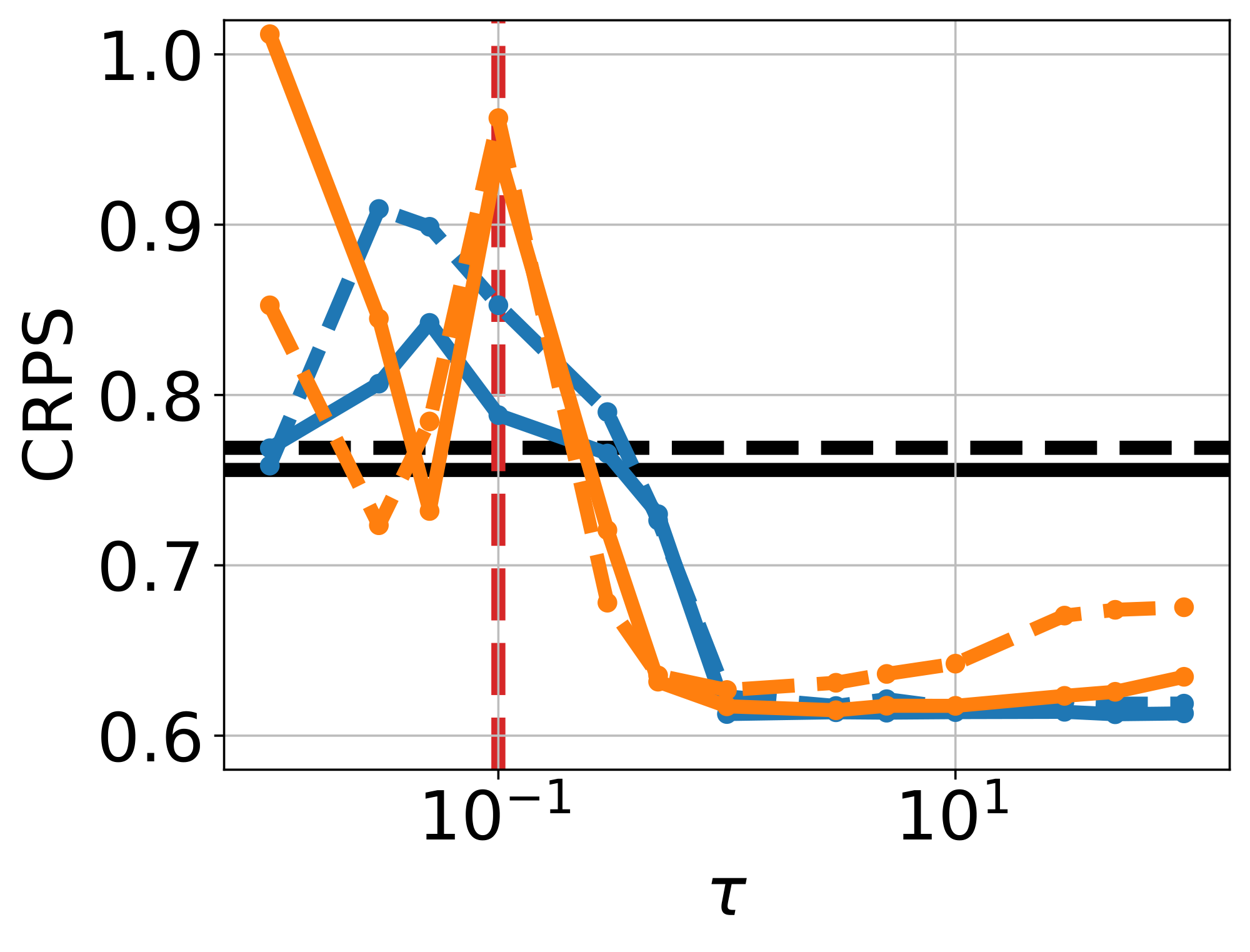

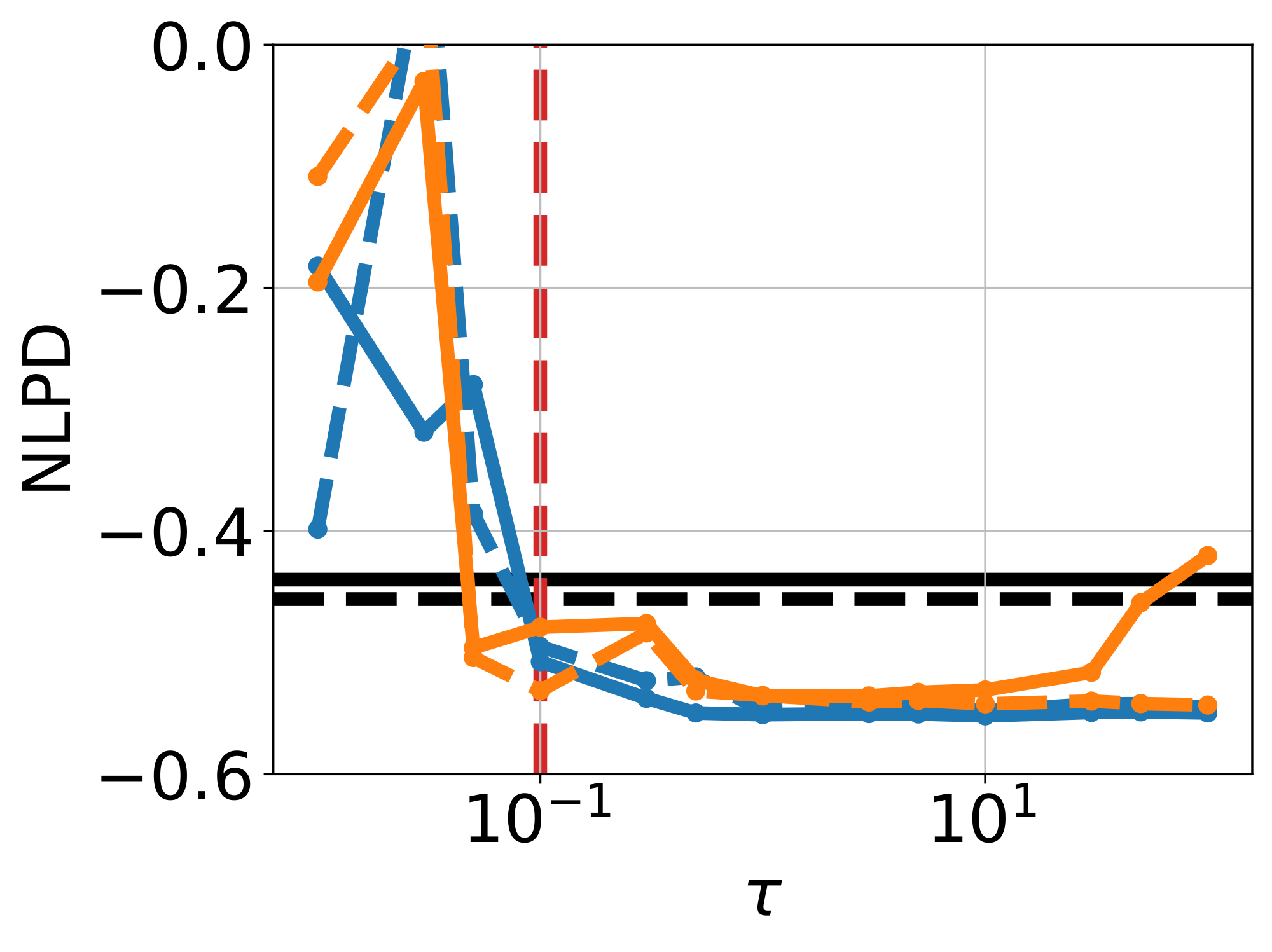

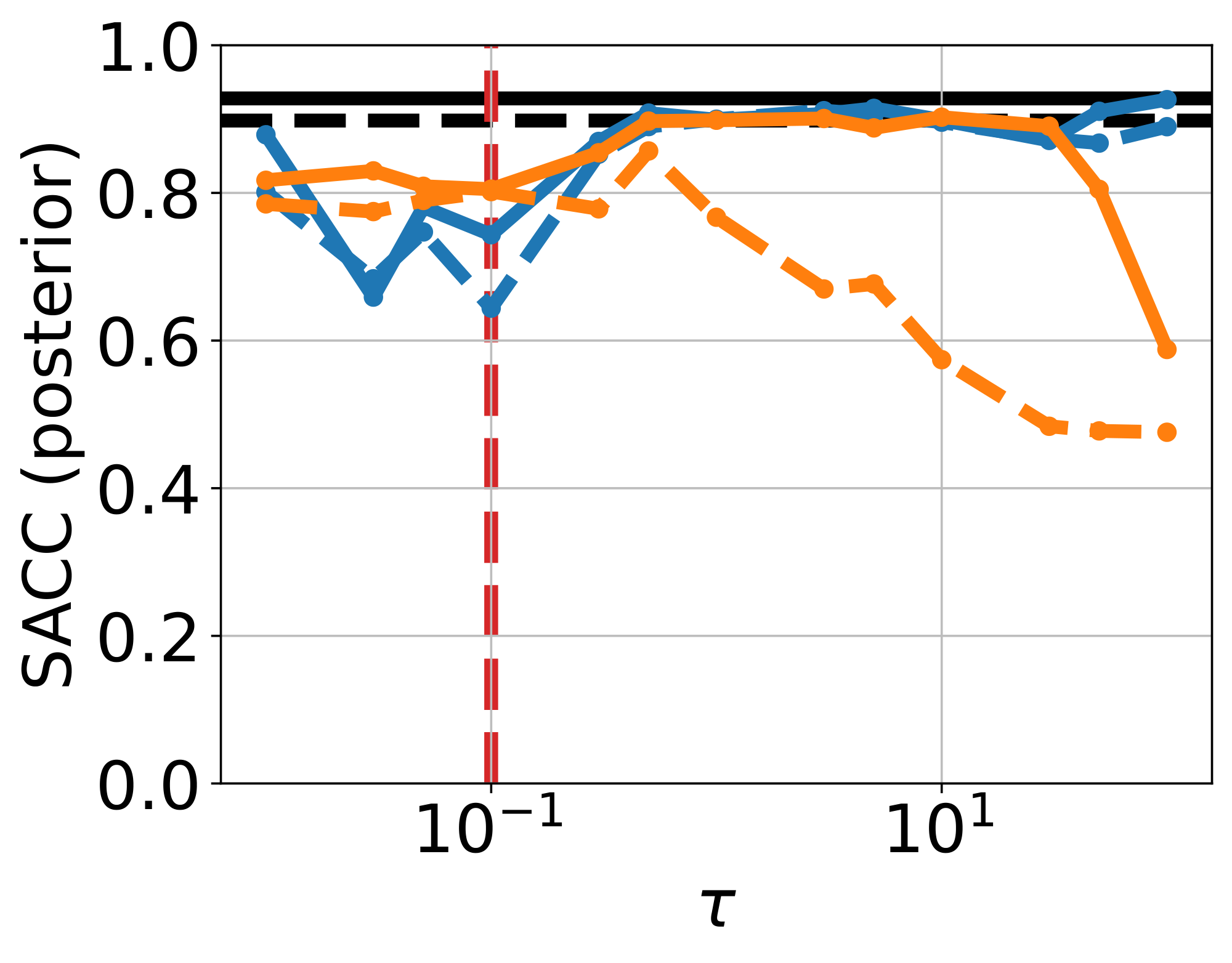

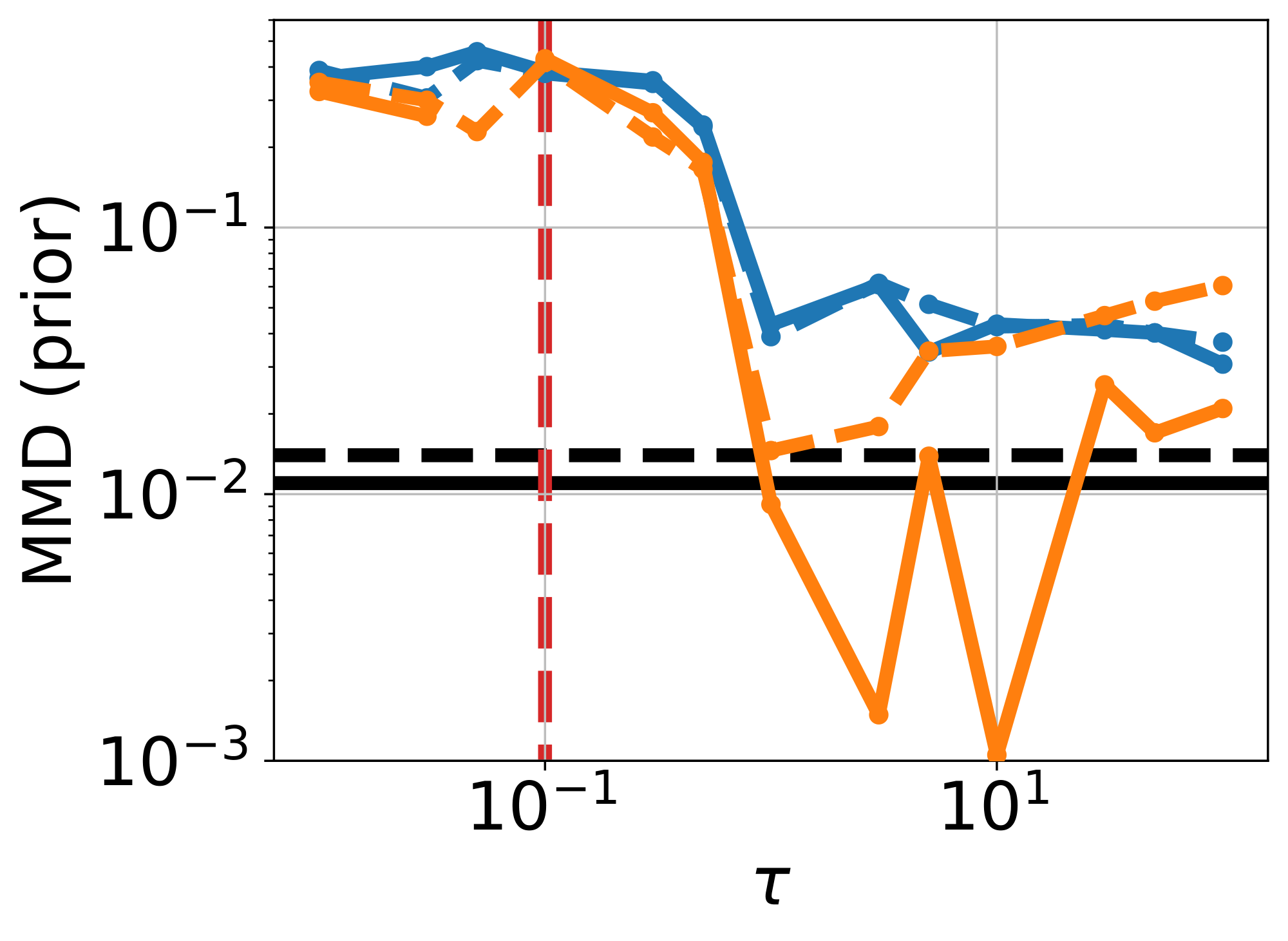

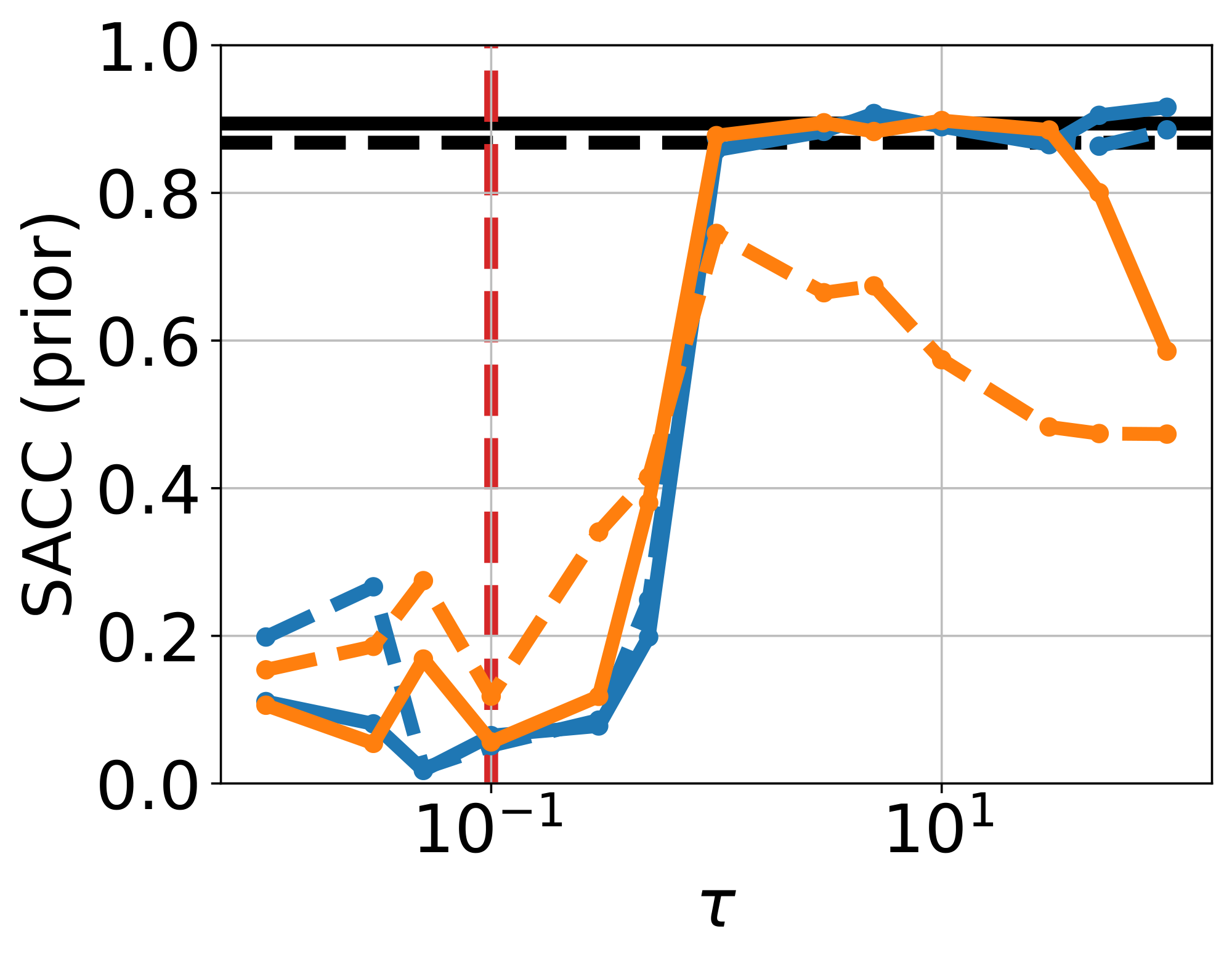

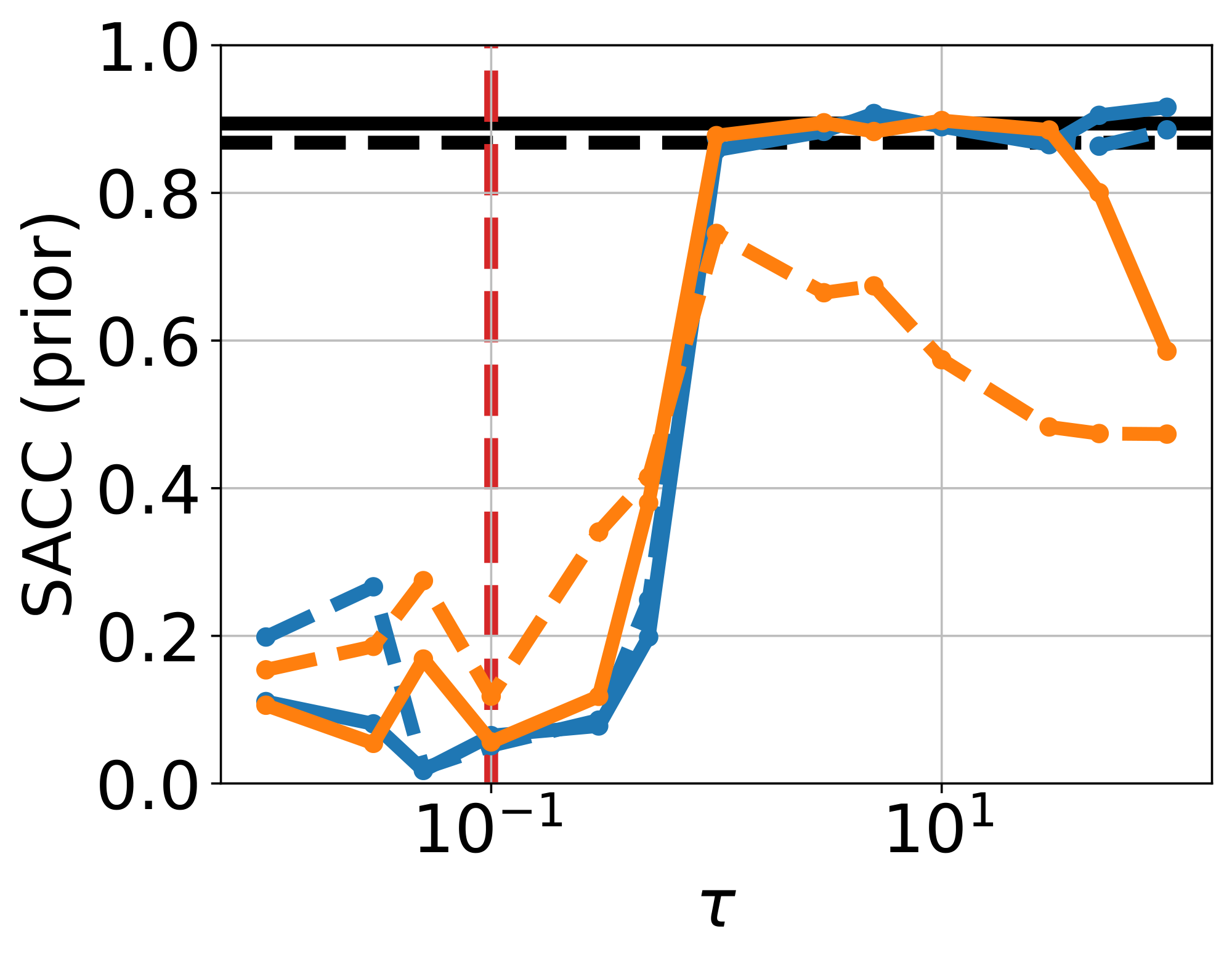

For model evaluation, we use the following metrics: (1) The negative log predictive density (NLPD), evaluates how well generated samples are consistent with observations $y$; (2) the continuous ranked probability score (CRPS) measures uncertainty calibration around the ground truth $x$ that generated $y$; (3) the maximum mean discrepancy (MMD) provides a sample-based distance between the true and approximate posteriors [19]; (4) the support accuracy (SACC) measures the proportion of samples $x = f_\theta(z)$ that lie on the checkerboard support. We compare MMD and SACC on both unconditional samples ${f_\theta(z)}{z \sim \mathcal{N}(0, I)}$ and conditional samples ${f\theta(z)}{z \sim q\phi(z | y)}$ to evaluate the quality of both prior and posterior approximations, respectively. For details, see Appendix B.1.3.

Ablation on the loss components.

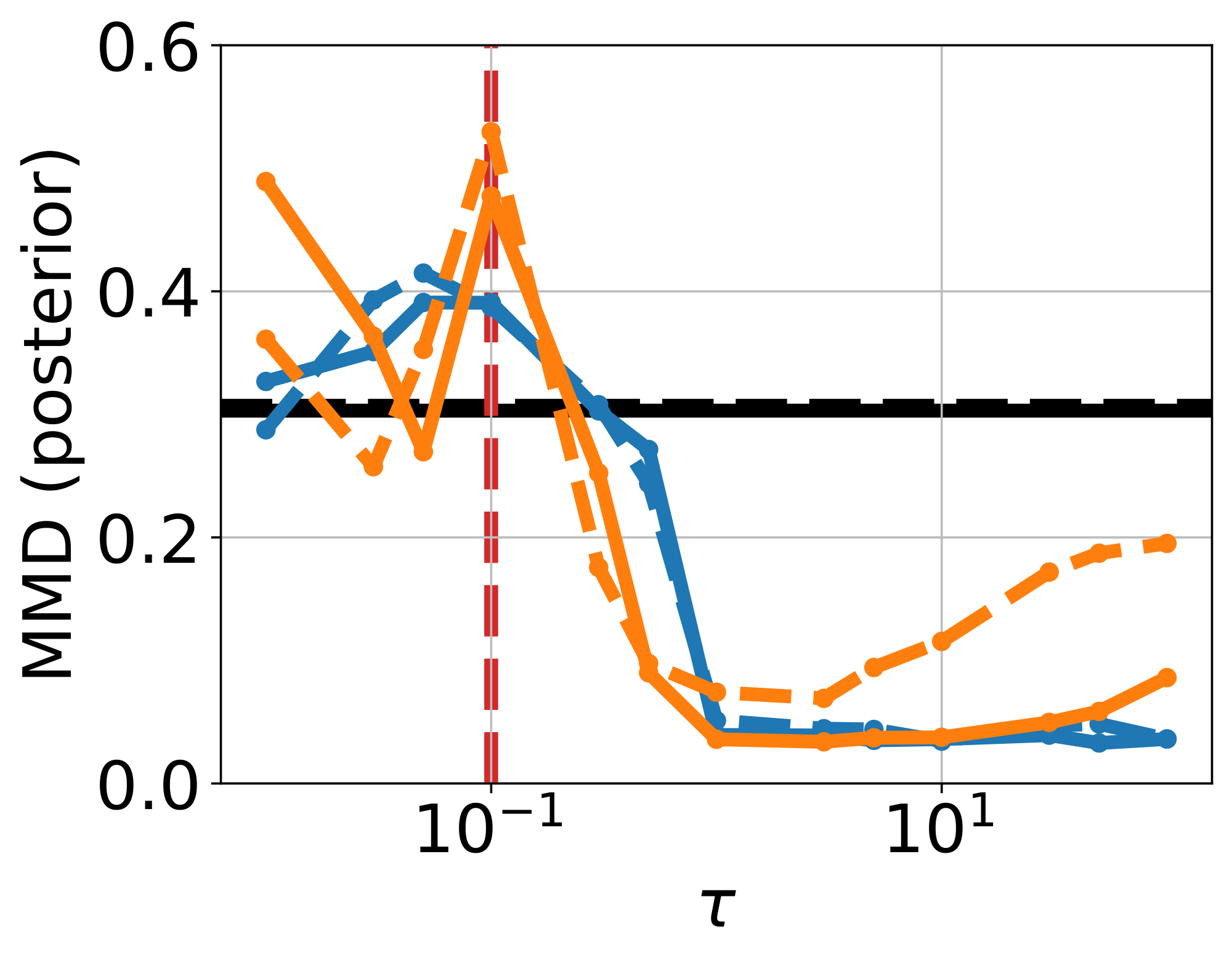

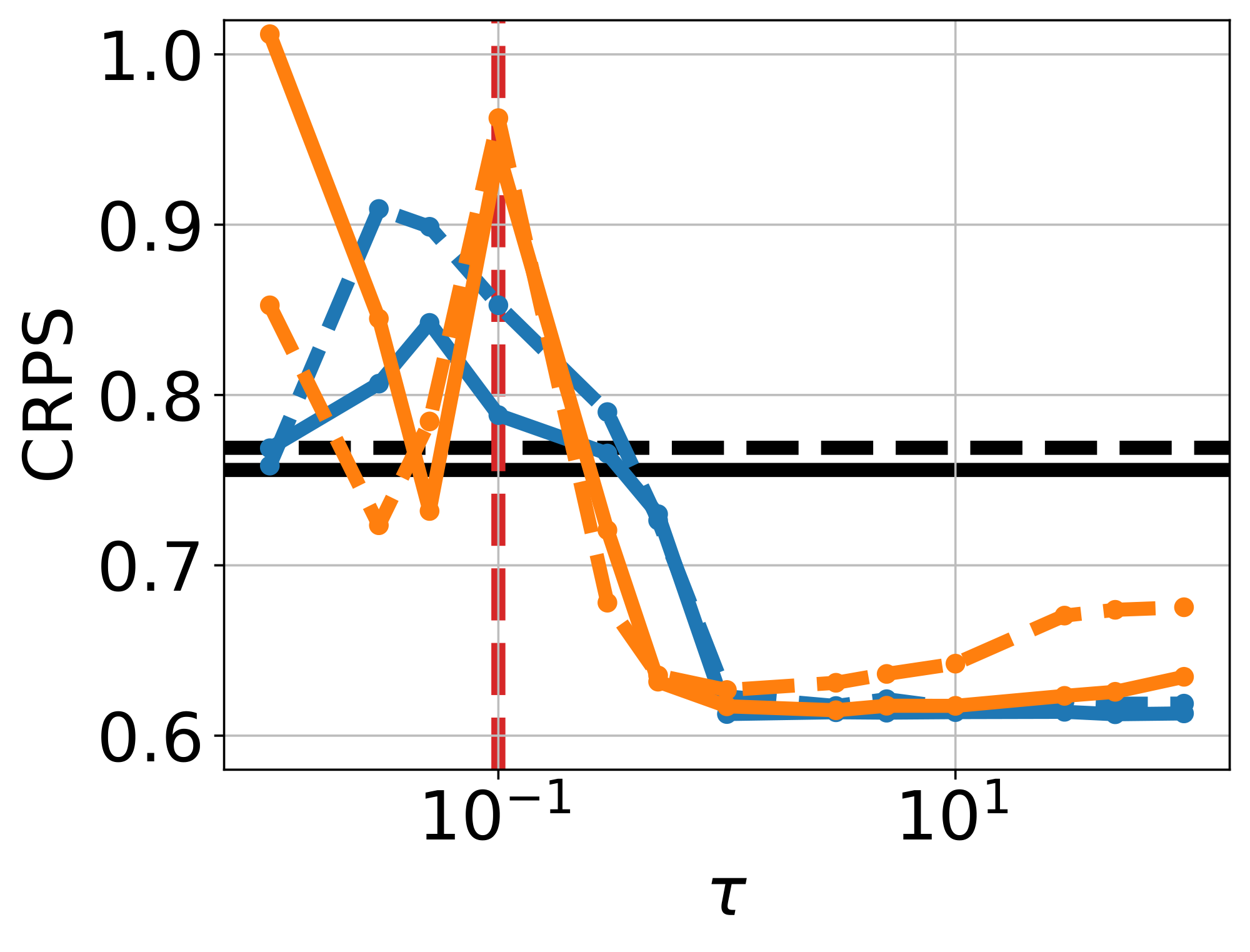

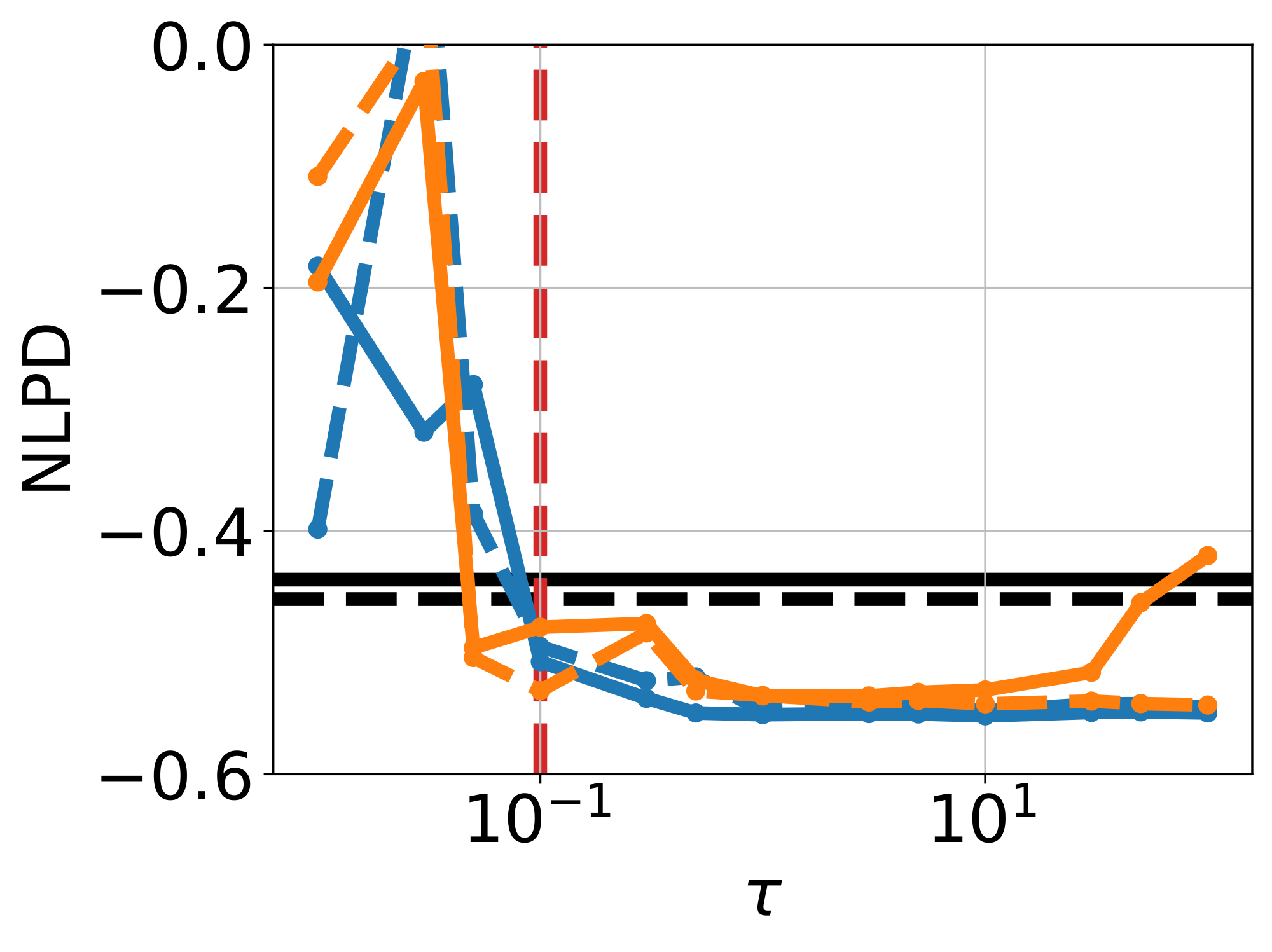

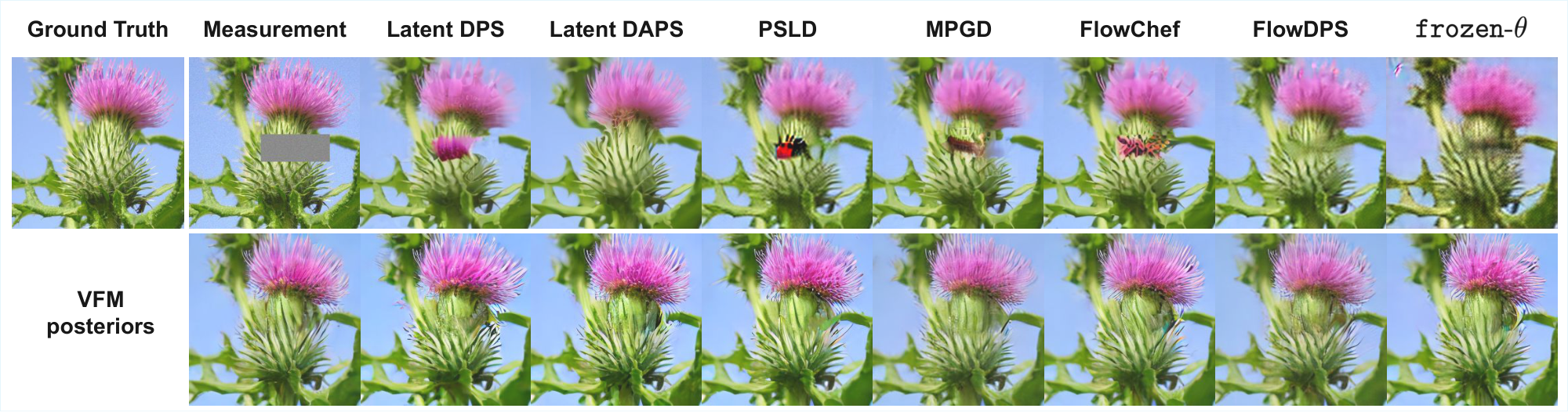

We compare VFM against frozen- $\theta$ and unconstrained- $\theta$ to isolate the effect of the mean flow term $\mathcal{L}{\text{MF}}(\theta; \phi)$ in Equation 9; results displayed in Figure 2. The frozen- $\theta$ baseline (Figure 2a) fails to capture the bimodality of the true posterior (support in the brown and purple cells), due to the limited flexibility of $q\phi$. On the other hand, unconstrained- $\theta$ (Figure 2b) is able to sample from both brown and purple cells, however, also produces many off-manifold samples. VFM (Figure 2c, $\tau=100$, $\alpha=1$) successfully captures both modes while preserving the checkerboard pattern; joint training improves the noise-to-data coupling, while $\mathcal{L}{\text{MF}}$ pull samples towards the structured data manifold. This observation is supported by the improvements in CRPS and posterior MMD (see Figure 17 & Figure 18, Appendix), and high support accuracy comparable to the pretrained flow map used in frozen- $\theta$ . Finally, removing $\mathcal{L}{\text{KL}}(\phi)$ from Equation 9 makes training unstable, owing to the ill-posedness of the inverse problem without prior regularization.

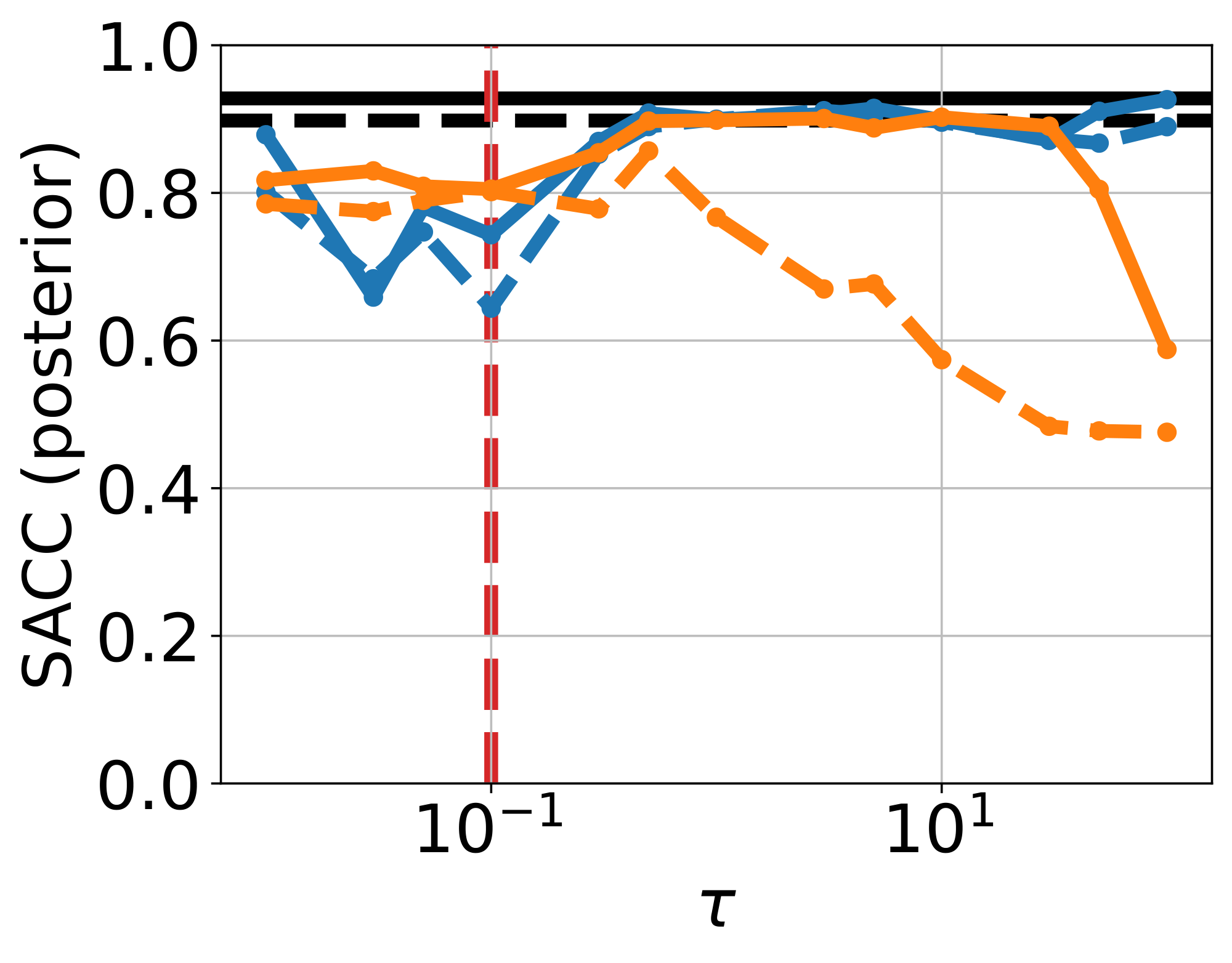

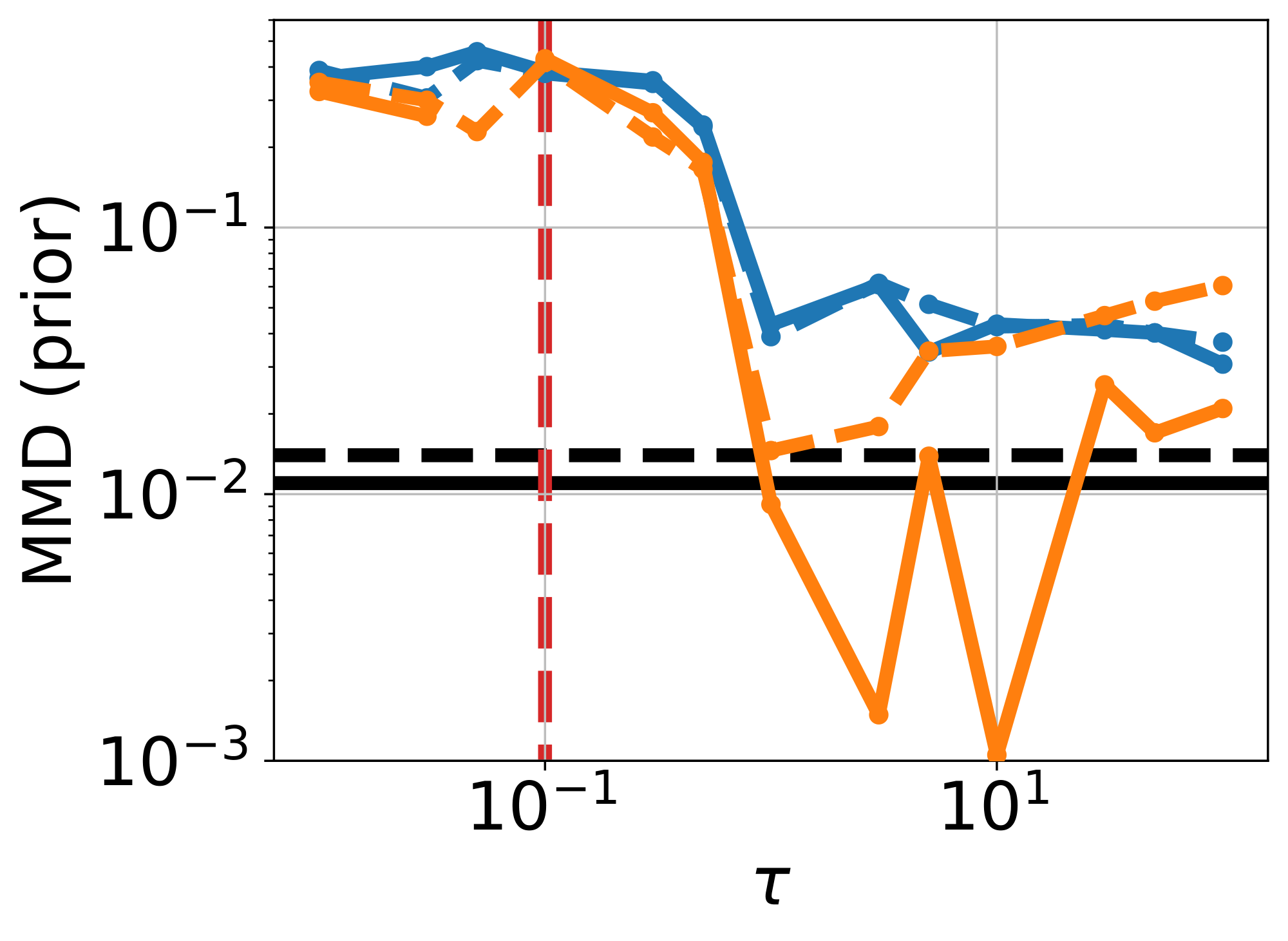

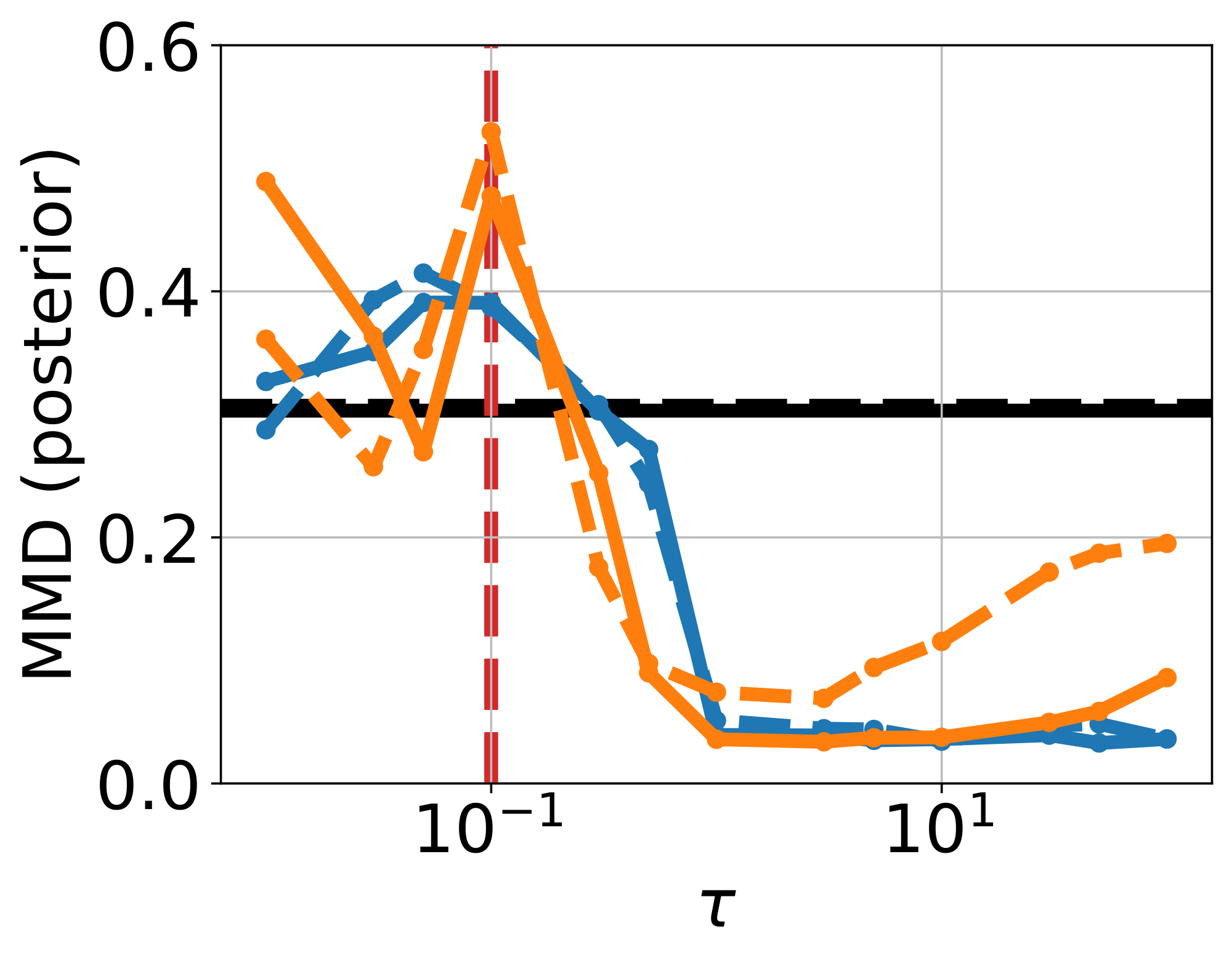

Ablation on $\tau$ and $\alpha$.

We sweep $\tau \in [10^{-2}, 10^2]$, and report metrics using a single-step and 4-step sampler (See Figure 17 & Figure 18, Appendix). When $\tau \lesssim \sigma$, performance across metrics is generally worse than frozen- $\theta$ (Figure 20a & Figure 20b, Appendix). For $\tau \geq 1$, results improve substantially, especially CRPS and posterior MMD, while SACC and prior MMD approach the strong values already achieved by the pretrained flow used in frozen- $\theta$ . We also ablate on $\alpha$, fixing $\tau = 100$ (Figure 21, Appendix). Setting $\alpha = 0$ decouples the training of mean flow and the adapter, yielding behaviour close to frozen- $\theta$ . Increasing $\alpha$ strengthens the coupling, inducing a more pronounced warping of the latent space. In practice, $\alpha < 1$ is more stable and yields better prior fit (lower prior MMD, compare Figure 16 and Figure 16), whereas $\alpha = 1$ gives the best posterior fit (lower posterior MMD, see Figure 16 vs Figure 16, Appendix).

To EMA or not to EMA.

Finally, we examine the role of using an EMA of $\theta$ in the observation loss $\mathcal{L}{\text{obs}}(\theta, \phi)$. Without EMA, i.e., allowing $\theta$-gradients to propagate through $\mathcal{L}{\text{obs}}$, both prior and posterior support accuracy deteriorate as $\tau$ increases (orange curves in Figure 17 and Figure 18). This can be explained by the fact that in the limit $\tau \rightarrow \infty$, this pushes training toward the unconstrained- $\theta$ failure mode, leading to unstructured sample generation. This can be seen in Figure 19c, where the no-EMA variant when $\tau=100$ yields results similar to unconstrained- $\theta$ .

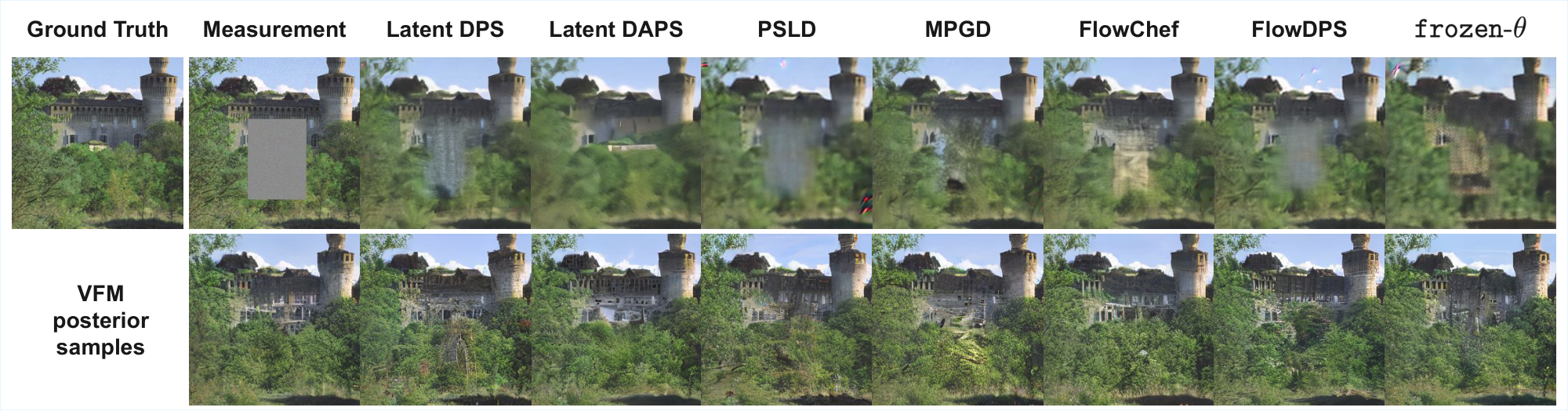

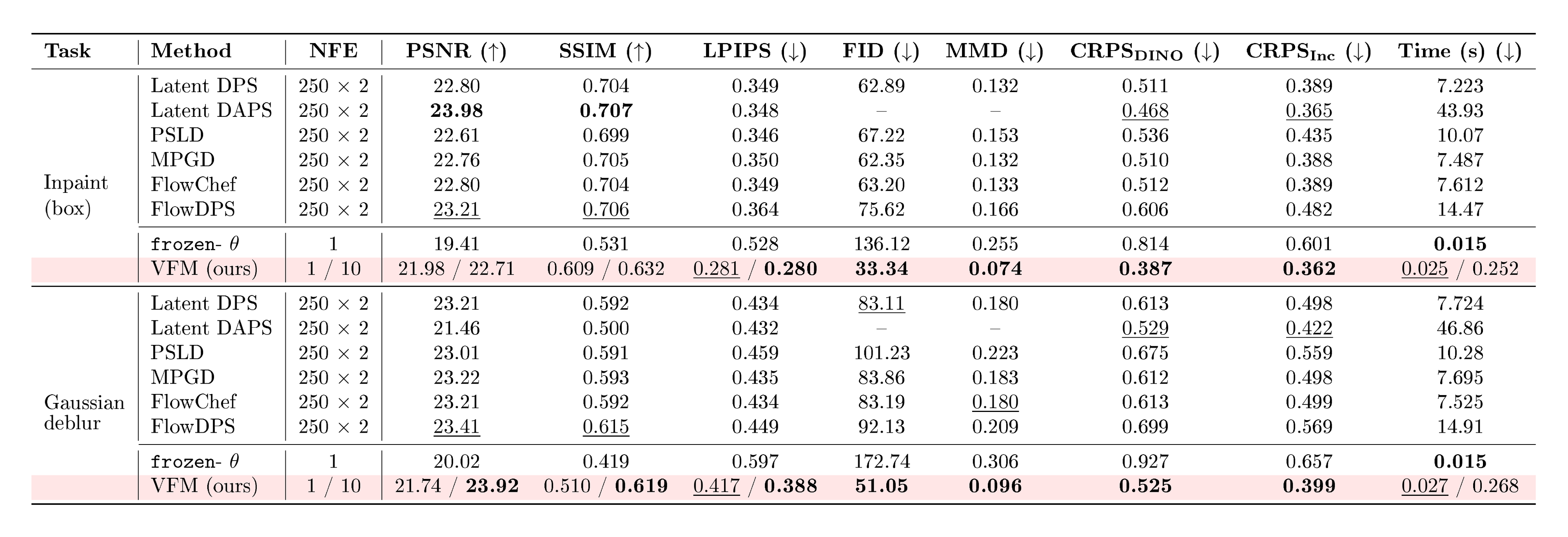

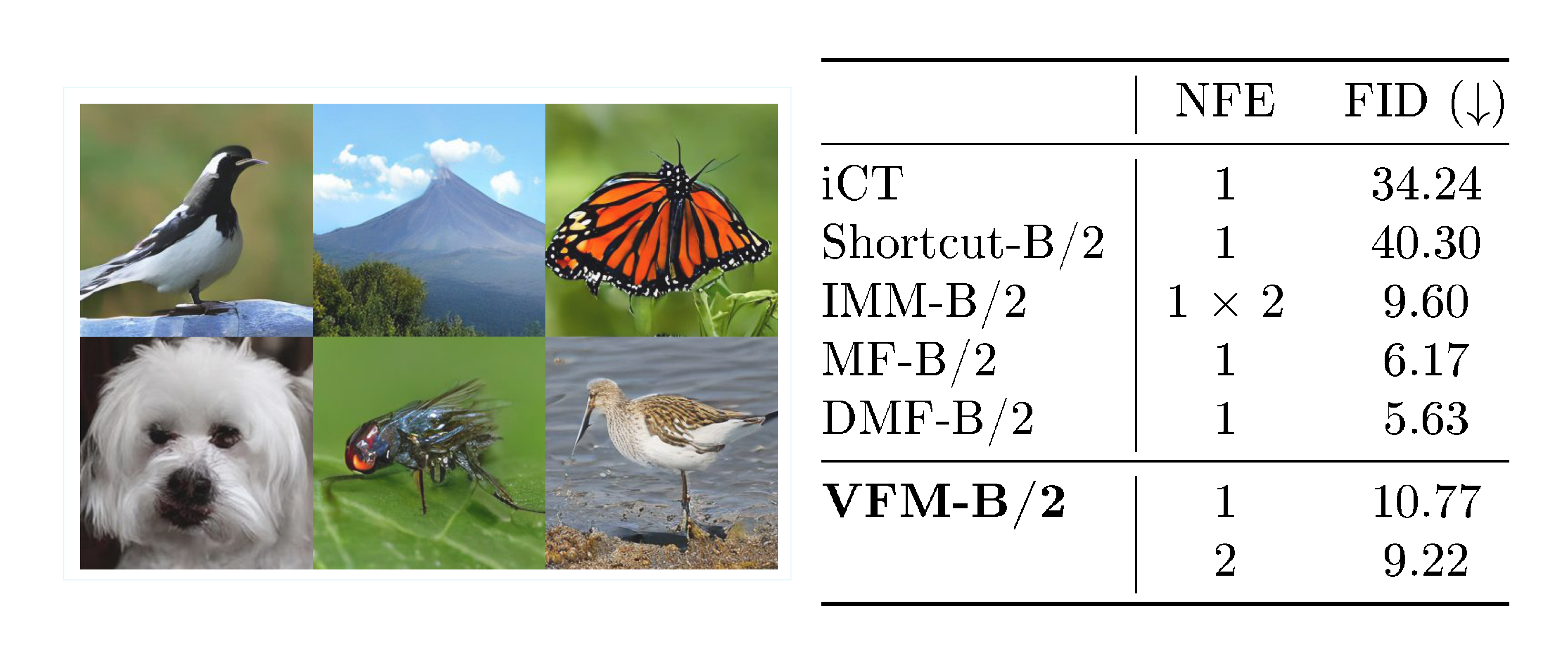

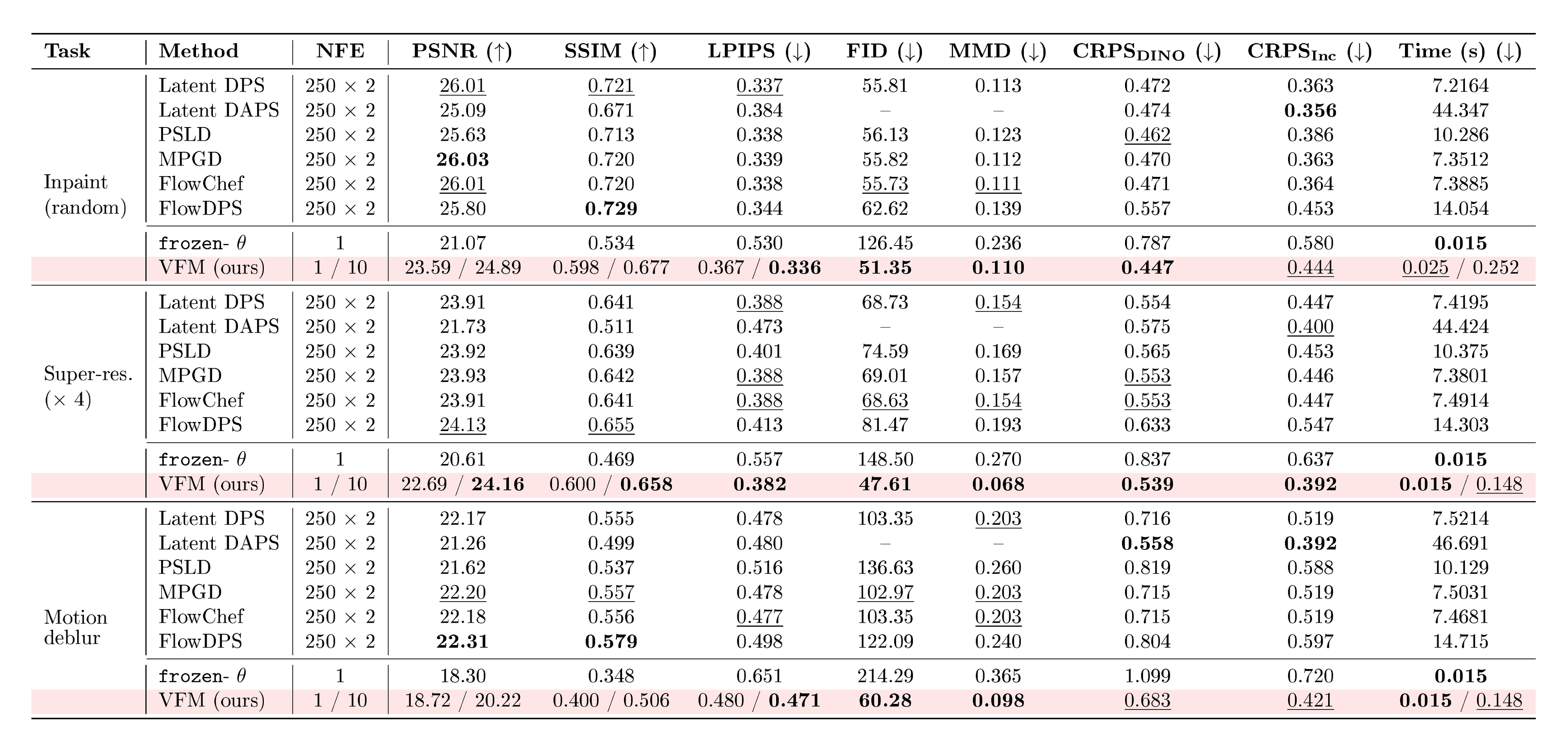

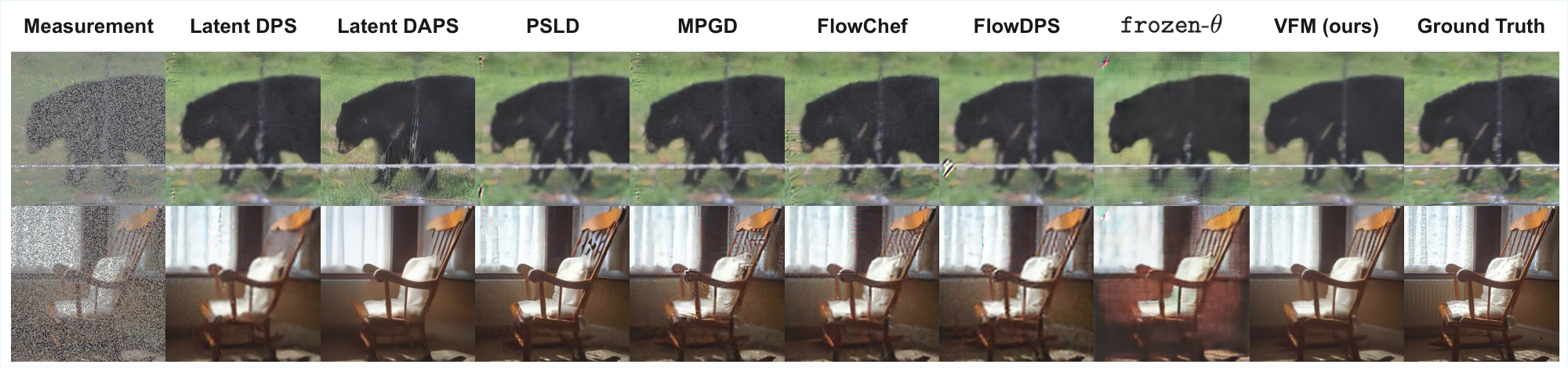

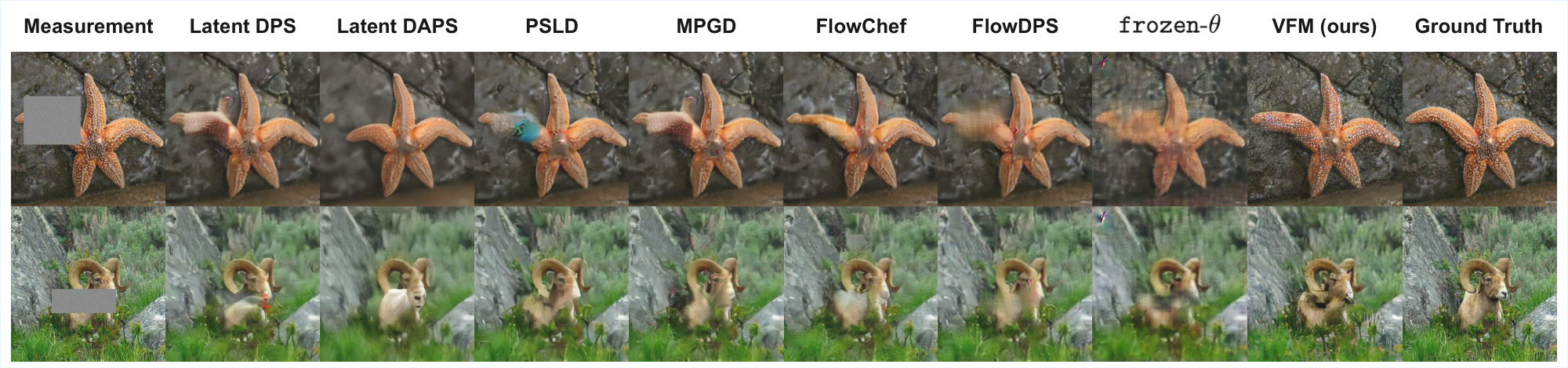

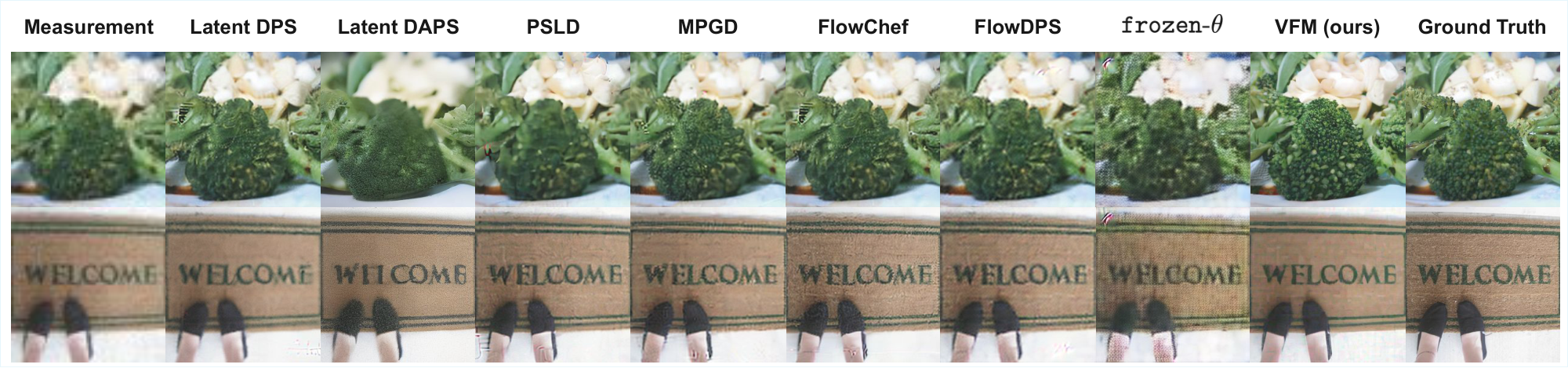

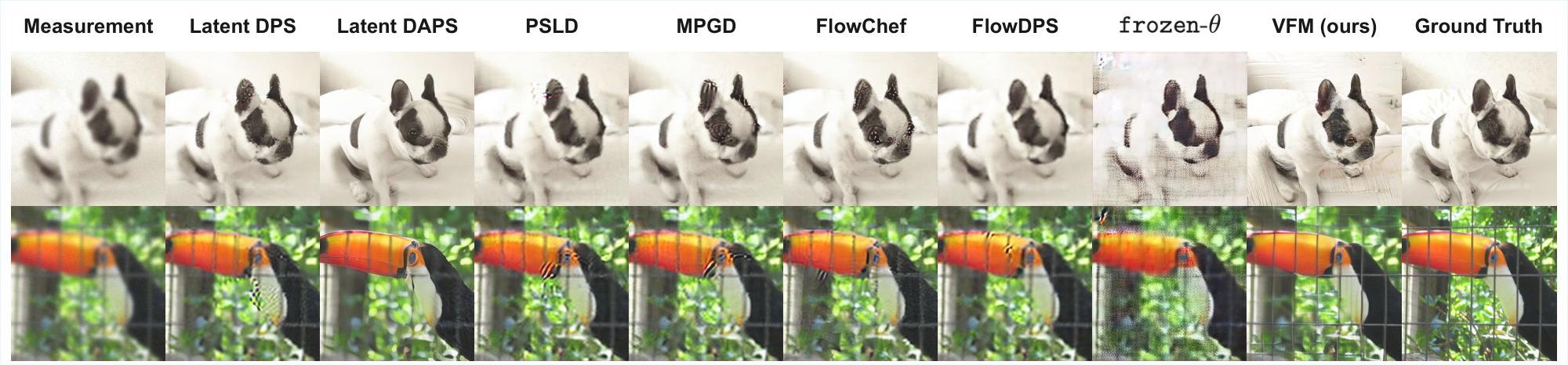

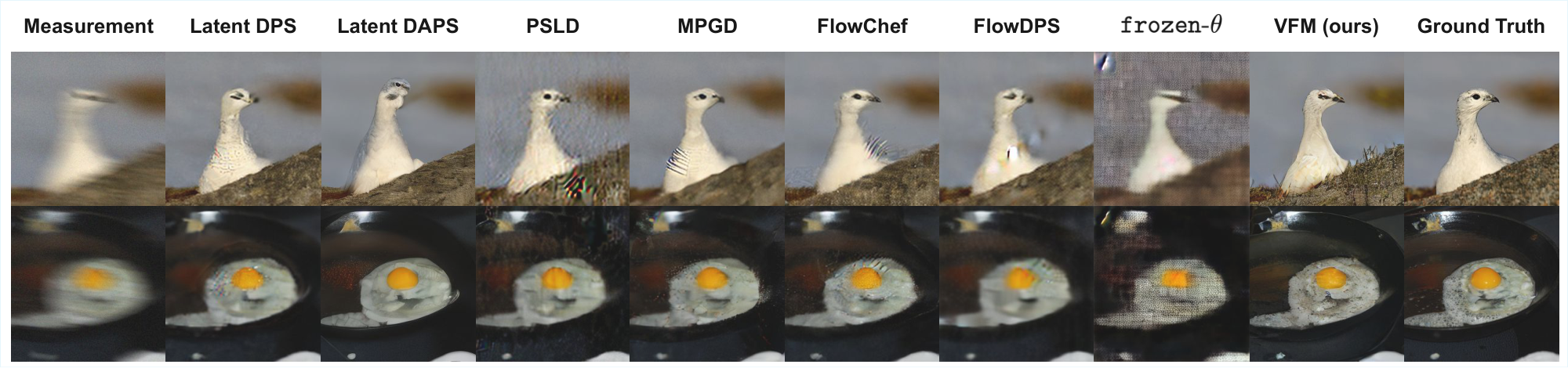

4.2 Image Inverse Problems

::: {caption="Table 1: Quantitative comparison on ImageNet for box inpainting and Gaussian deblurring. Best results are in bold, second best are $\underline{underlined}$. $\uparrow$ : higher is better, $\downarrow$ : lower is better. For VFM, we display results for single samples and average over 10 samples, displayed as {sample} / {average}. VFM achieves the best results on LPIPS, FID, MMD, CRPS, with a significantly reduced wall-clock time."}

:::

We evaluate VFM on standard image inverse problems using ImageNet 256 $\times$ 256, comparing against established guidance-based solvers, as well as the frozen- $\theta$ baseline considered in our earlier 2D experiment. For VFM, we amortize over the problems, as described in Section 3.2. All methods operate in the latent space of SD-VAE [20]. We provide further details of our experimental settings in Appendix B.2.

Comparison with guidance-based methods.

Table 1 reports quantitative results on box inpainting and Gaussian deblurring tasks (additional tasks are in Table 2, Appendix). For VFM, we report results for both single posterior samples and averaged estimates over 10 posterior samples, shown as {sample}/{average}. For all guidance-based baselines, we use the same flow-matching backbone (SiT-B/2) used to initialize our mean-flow model.

Across both tasks, we observe that VFM is consistently better than the baselines on distributional metrics (FID, MMD & CRPS), e.g., on box inpainting, the FIDs on the baselines range between 63–76, while we achieve an FID of 33.3. These improvements align with the qualitative results in Figure 3, where we observe that VFM exhibits notable diversity in the inpainted region, while maintaining visual sharpness. Guidance-based methods generally struggle with box-inpainting, especially when operating in latent space.

On pixel-space fidelity metrics (PSNR, SSIM), guidance methods consistently scores higher than a single VFM draw. However, both PSNR and SSIM typically reward mean behavior and thus prefer smoother results [21]. To confirm this, we observe that averaging multiple VFM samples narrows this gap and even exceeds the baselines in some instances, e.g., on Gaussian deblurring. On LPIPS, which is a feature-space perceptual similarity metric, we find that VFM is competitive even without averaging; this is consistent with LPIPS being more aligned with the perceptual quality than PSNR or SSIM [21].

We also note the significant speed advantage of VFM at inference time: we used 250 sampling steps for the guidance methods with an additional $\times 2$ cost for classifier-free guidance [22], while VFM requires only one step to achieve competitive results, as displayed. This results in around two orders of magnitude lower wall-clock time, e.g., DAPS [23] has an inference cost close to a minute; in comparison, the $\sim 0.03$ s cost of VFM is instantaneous.

Benefits of joint training.

The frozen- $\theta$ baseline, while fastest at inference time, performs poorly across all metrics, exhibiting visible artifacts and blurriness. This highlights the importance of jointly training the flow map $f_\theta$ and the adapter $q_\phi$, consistent with our observations from the 2D experiment that the flow map itself needs to adjust for the adapter to approximate the conditional distributions well. By training jointly, we observe surprisingly strong perceptual quality, despite the simple Gaussian structural assumption used in the variational posterior.

Unconditional generation.

To assess the robustness of VFM, we also evaluate unconditional generation from the trained flow map. In Figure 4, we compare the FID on 50, 000 unconditional samples generated from the flow map in VFM, against various baselines with similar architecture sizes [24, 25, 26, 27]. We fine-tune the SiT-B/2 model (trained for 80 epochs) for an additional 100 epochs. We note, however, that the baselines are trained for longer ($\sim ! 240$ epochs). Unconditional generation of VFM remains competitive, with 2-step sampling results achieving FID below 10 (see Figure 4 for visual results). To achieve this result, we emphasize the important role of the $\alpha$ parameter; we observe that the adapter's noise outputs retain some structure from the observations (see Figure 28, Appendix) and are therefore not representative of pure standard Gaussian noise. Thus, using $\alpha < 1$ is necessary to achieve good unconditional performance. In our experiments, we used $\alpha=0.5$.

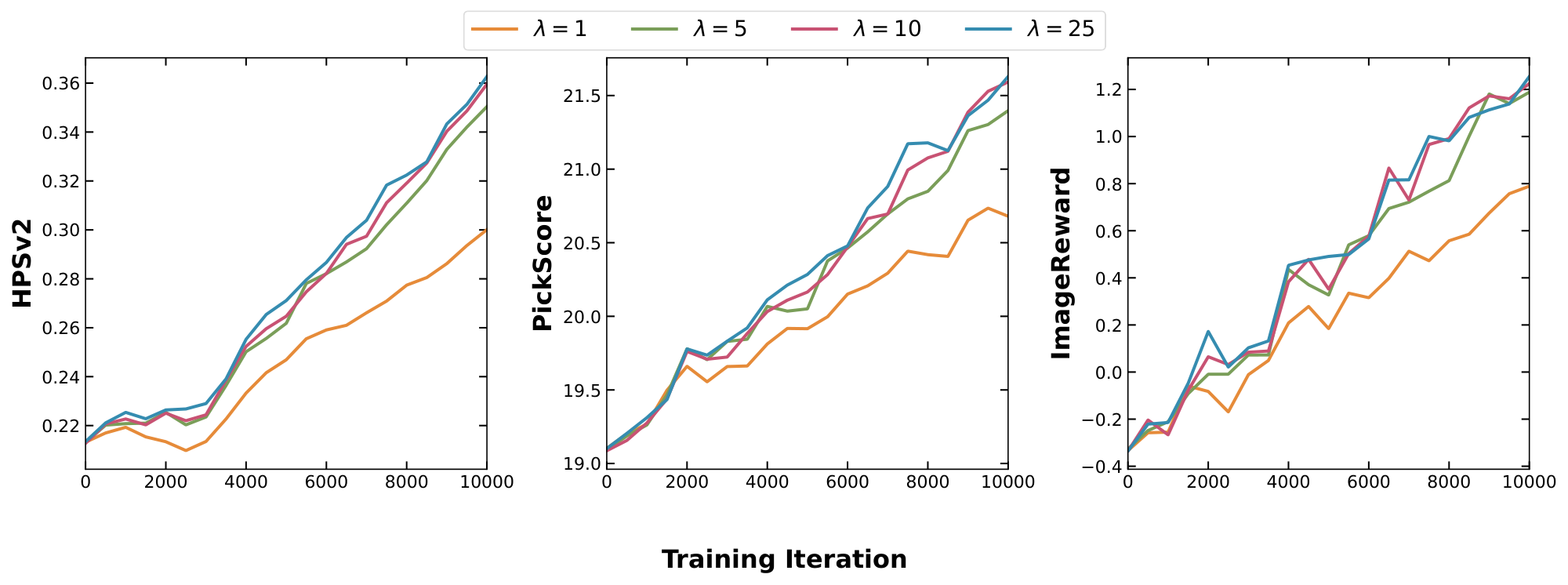

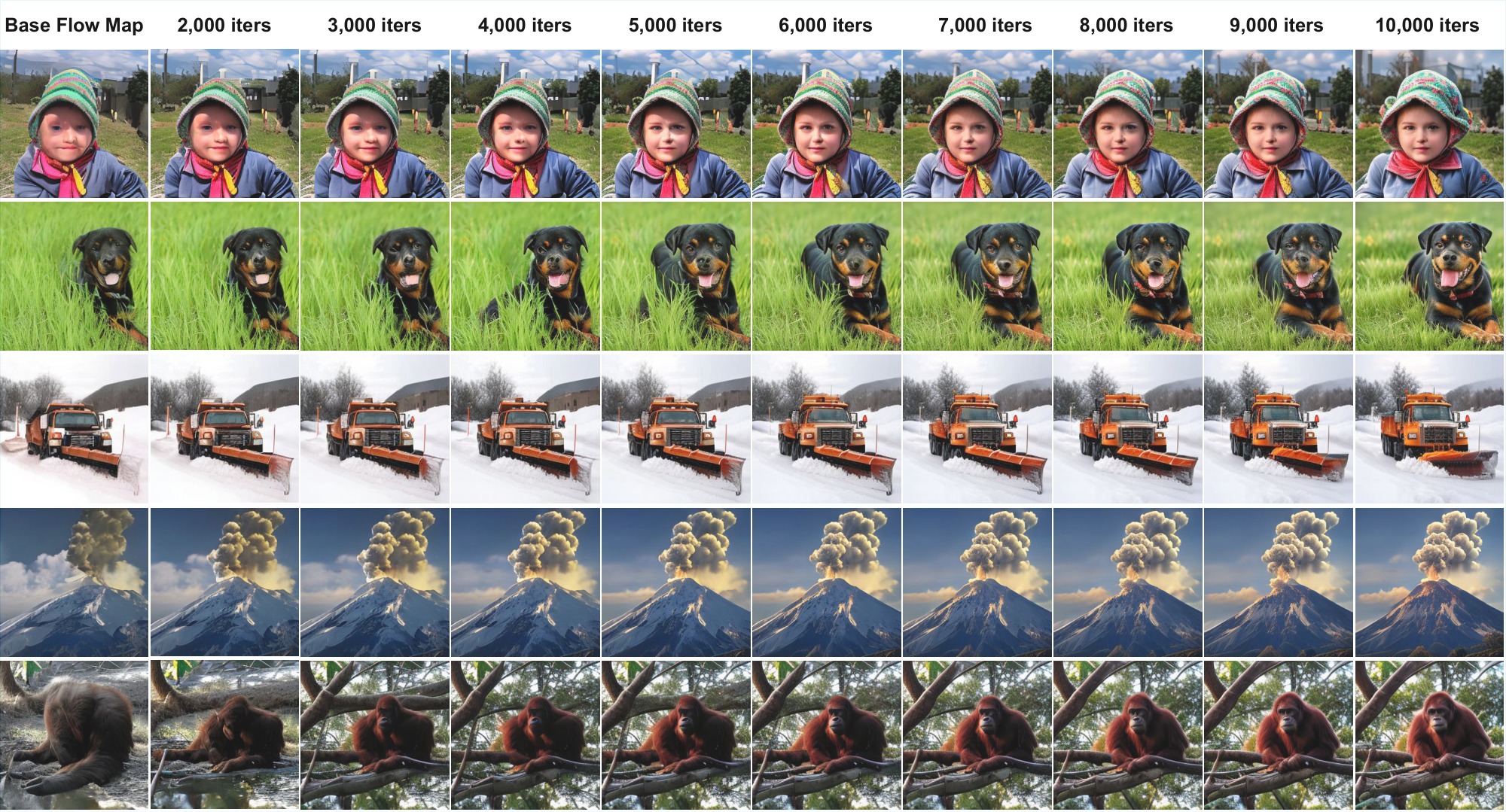

4.3 General Reward Alignment via VFM Fine-Tuning

Beyond solving standard inverse problems, the Variational Flow Map presents a highly efficient framework for general reward alignment. The goal is to fine-tune a pre-trained model such that its generated samples maximize a differentiable reward function $R(x, c)$ conditioned on a context $c$, while staying close to the original data distribution. This objective effectively corresponds to sampling from a reward-tilted distribution $p_{\text{reward}}(x|c) \propto p_{\text{data}}(x) \exp(\beta R(x, c))$.

Traditional flow and diffusion reward fine-tuning methods require expensive backpropagation through iterative sampling trajectories [28, 29, 30] or rely on approximations [31, 32]. In contrast, VFM achieves this by learning an amortized noise adapter $q_\phi(z|c)$ that directly maps the condition $c$ to a high-reward region of the latent space, while simultaneously fine-tuning the flow map $f_\theta$ to decode this noise into high-quality data. We formulate this by replacing the standard observation loss with a reward maximization objective:

$ \begin{align} \mathcal{L}{reward}(\theta, \phi) = -\lambda ;\mathbb{E}{c \sim p(c), z \sim q_\phi(z | c)}\left[R(f_\theta(z), c)\right] \end{align} $

where $\lambda$ controls the reward strength. In this context, the reward $R(x, c)$ can be viewed as the (unnormalized) log-likelihood of the context $c$ (e.g., a text prompt) given the generated sample.

To the best of our knowledge, this is the first rigorous, scalable framework for fine-tuning flow maps to arbitrary differentiable rewards. In particular, the fine-tuning process is very fast and stable. Starting from a pre-trained flow map, VFM achieves strong reward alignment in under $0.5$ epochs. The resulting model enables sampling from the reward-tilted distribution in a single neural function evaluation (1 NFE). We provide qualitative results in Figure 5 and present further training and generation details in Appendix B.3.

5. Related Works

Section Summary: Previous studies have explored using diffusion-based models for variational inference to approximate data distributions given observations, but they often depend on complex tools like normalizing flows that struggle with high-resolution images, or stick to simpler Gaussian approximations in the raw data space rather than more efficient noise spaces. Other approaches handle posterior inference in noise spaces with fixed generators and added complexity, or focus on stabilizing training for unconditional models through special noise mappings, differing from the current method's simpler setup that adapts the core model for conditional tasks. Finally, techniques for one-step conditional sampling tie closely to specific model types like consistency models, limiting their flexibility compared to broader flow-based approaches.

Variational/amortized inference with diffusion-based priors has been explored in previous works: [33] explores usage of score-based prior in variational inference to approximate posteriors $p(x|y)$ in data space and [34] extends this to the amortized inference setting. However, these approaches rely on normalizing flows to ensure sufficient flexibility for the variational posterior, making scaling to high-resolution settings difficult. The work [35] on the other hand, uses a Gaussian variational posterior similar to ours, but still performs variational inference in data space.

Noise space posterior inference for arbitrary generative models has been considered in ([36]). However, their method considers a frozen generator and compensates with a more flexible noise adapter based on neural SDEs, making training significantly more complex. In comparison, VFM uses a simpler adapter and instead unfreeze the generative flow map, so the model itself can adapt to the conditional task while keeping the objective simple.

We also note the work ([18]), which introduces Variational Consistency Training (VCT) to address instability issues in consistency model training by learning data-dependent noise couplings through a variational encoder that maps data into a better-behaved latent representation. While conceptually related to our work, the goal is different: VCT is aimed at improving stability of unconditional consistency training, whereas VFM is designed to amortize posterior sampling for conditional generation.

Finally, Noise Consistency Training (NCT) [37] also targets one-step conditional sampling, but via a different construction: they consider a diffusion process in $(z, y)$-space and learns a consistency map from intermediate states to $(x, y)$. This is strongly tied to consistency models and therefore do not generalize naturally to flow maps, considered state-of-the-art in one-step generative modeling.

6. Conclusion

Section Summary: Variational Flow Maps, or VFMs, offer a new way to quickly sample from complex data distributions and fine-tune AI models for rewards using just one or a few steps, making the process much more efficient. This approach trains a special mapping tool along with an adapter that smartly guesses the best starting noise based on imperfect inputs like blurry images, category labels, or text descriptions. Looking ahead, researchers plan to improve it by incorporating more flexible noise patterns and applying it to areas like video processing to ensure smoother transitions between frames.

We proposed Variational Flow Maps (VFMs) to enable highly efficient posterior sampling and reward fine-tuning with just a single (or few) sampling steps. VFM leverages a principled variational objective to jointly train a flow map alongside an amortized noise adapter, which infers optimal initial noise from noisy observations, class labels, or text prompts. A natural next step is to relax our current Gaussian adapter assumption by using more expressive noise models, such as normalizing flows or energy-transformers [38], which can capture richer, non-Gaussian conditional structures. Another exciting direction for future research is to extend the VFM framework to other distillation methods and modalities; for instance, one could tackle video inverse problems, where latent noise evolution could be leveraged to promote temporal coherence among frames.

Impact Statement

Section Summary: The main aim is to cut down the computing power needed for generating specific outputs and sampling from probability distributions, which could speed up real-world uses in science and engineering while also lowering the energy used for running these models. As AI tools that create data become more common in everyday life, reducing these costs is a growing priority in machine learning. Variational flow maps advance this by allowing efficient, targeted sampling without any drop in quality.

The overarching goal of reducing the computational cost for conditional generation and posterior sampling has the potential not only to drive practical applications in scientific and engineering workflows that rely on fast generation of posterior samples, but also to help reduce the high energy cost for inference. This is especially valuable as generative models see widespread use in today's society; thus, the problem of lowering inference costs is an increasingly important challenge for machine learning. Variational flow maps take a step in this direction by enabling low-cost conditional sampling without sacrificing performance.

Acknowledgments

Section Summary: The authors express thanks to the Isambard-AI National AI Research Resource for providing the computing power used in their work. This resource is managed by the University of Bristol and funded by the UK government's Department for Science, Innovation and Technology through UK Research and Innovation, along with the Science and Technology Facilities Council. Further support came from a US Department of Defense fellowship awarded to Professor Andrew Stuart and a grant from the Office of Naval Research to the SciAI Center.

The authors acknowledge the use of resources provided by the Isambard-AI National AI Research Resource (AIRR) [39]. Isambard-AI is operated by the University of Bristol and is funded by the UK Government’s Department for Science, Innovation and Technology (DSIT) via UK Research and Innovation; and the Science and Technology Facilities Council [ST/AIRR/I-A-I/1023]. ST is supported by a Department of Defense Vannevar Bush Faculty Fellowship held by Prof. Andrew Stuart, and by the SciAI Center, funded by the Office of Naval Research (ONR), under Grant Number N00014-23-1-2729.

Appendix

Section Summary: The appendix outlines the theoretical underpinnings of a machine learning model involving latent variables and observations. It first derives the model's loss function by using Kullback-Leibler divergence to approximate the joint probability distribution of data, observations, and hidden variables, resulting in three key terms: one for fitting data, one for matching observations, and a regularization term to align the inferred latent distribution with a standard prior. The second part proves a key proposition in a simplified linear-Gaussian setup, demonstrating that training the model's generation and inference components together is essential for accurately recovering the true posterior distribution, while separate training often fails.

A. Theory

A.1 Derivation of the loss

We recall that the VFM objective is obtained by matching the following two representations of $p(x, y, z)$ using the KL divergence:

$ \begin{align} q_\phi(z | y) p(y | x) p(x) \approx p_\theta(x, y | z) p(z), \end{align} $

where we assumed that

$ \begin{align} p_\theta(x, y | z) = \mathcal{N}(x | f_\theta(z), \sigma^2 I) \mathcal{N}(y | A f_\theta(z), \tau^2 I). \end{align}\tag{10} $

By direct computation, this yields

$ \begin{align} &KL(q_\phi(z | y) p(y | x) p(x) || p_\theta(x, y | z) p(z)) \ &= -\int \log \frac{p_\theta(x, y | z) p(z)}{q_\phi(z | y) p(y | x) p(x)} q_\phi(z | y) p(y | x) p(x) dx dy dz \ &= -\mathbb{E}{q\phi(z | y) p(y | x)p(x)}\left[\log p_\theta(x, y|z)\right] + \mathbb{E}{p(y | x)p(x)}\left[KL\left(q\phi(z|y) || p(z)\right)\right] + \underbrace{\mathbb{E}{p(y|x) p(x)}[\log (p(y|x) p(x))]}{\leq 0} \ &\leq -\mathbb{E}{q\phi(z | y) p(y | x)p(x)}\left[\log p_\theta(x, y|z)\right] + \mathbb{E}{p(y)}\left[KL\left(q\phi(z|y) || p(z)\right)\right] \ \begin{split} &\stackrel{Equation 10}{=} -\mathbb{E}{q\phi(z | y) p(y)}\left[\log \mathcal{N}(x | f_\theta(z), \sigma^2 I) + \log \mathcal{N}(y | \mathcal{A} f_\theta(z), \tau^2 I)\right] + \mathbb{E}{p(y | x)p(x)}\left[\mathcal{KL}\left(q\phi(z|y) || p(z)\right)\right], \end{split} \end{align}\tag{11} $

where we used that $\mathbb{E}{p(y|x) p(x)}[\log (p(y|x) p(x))] \leq 0$ since this is the negative Shannon entropy of the joint distribution $H(p(x, y)) := -\mathbb{E}{p(x, y)}[\log (p(x, y))] \geq 0$. This yields

$ \begin{align} &KL(q_\phi(z | y) p(y | x) p(x) || p_\theta(x, y | z) p(z)) \leq \frac{1}{2\tau^2}\mathcal{L}{data}(\theta, \phi) + \frac{1}{2\sigma^2} \mathcal{L}{obs}(\theta, \phi) + \mathcal{L}_{KL}(\phi), \nonumber \end{align} $

where

$ \begin{align} \mathcal{L}{data}(\theta, \phi) &= \mathbb{E}{q_\phi(z | y) p(y | x)p(x)}\left[|x - f_\theta(z)|^2\right], \ \mathcal{L}{obs}(\theta, \phi) &= \mathbb{E}{q_\phi(z | y) p(y)}\left[|y - Af_\theta(z)|^2\right], \ \mathcal{L}{KL}(\phi) &= \mathbb{E}{p(y)}\left[KL\left(q_\phi(z|y) || p(z)\right)\right]. \end{align}\tag{12} $

A.2 Proof of Proposition 1

This section provides the formal proofs for Proposition 1 within a Linear-Gaussian framework. We analyze the interaction between the generative map $f_\theta$ and the variational posterior $q_\phi$ to demonstrate that joint optimization is necessary for exact posterior mean recovery under diagonal constraints. The derivation proceeds from characterizing the optimal parameters to proving the almost sure failure of separate training in Proposition 12. We conclude with Remark 13, which discusses the extension of these results to non-linear cases through the lens of Jacobian alignment and symmetry restoration.

Data and Observation Model.

We assume the ground truth data $x \in \mathbb{R}^d$ follows a Gaussian distribution:

$ \begin{align} x \sim p_{data}(x) = \mathcal{N}(x|m, C), \end{align} $

where $m \in \mathbb{R}^d$ is the data mean and $C \in \mathbb{R}^{d\times d}$ is the symmetric positive definite (SPD) covariance matrix. The observation $y \in \mathbb{R}^{d_y}$ is obtained via a linear operator $A \in \mathbb{R}^{d_y \times d}$ with additive Gaussian noise:

$ \begin{align} y = Ax + \epsilon, \quad \epsilon \sim \mathcal{N}(0, \sigma^2 I), \end{align} $

where $\sigma > 0$ is the noise level. Consequently, the marginal distribution of observations is given by

$ \begin{align} p(y) = \mathcal{N}(y|\mu_y, \Sigma_y), \quad where \mu_y = Am, \quad \Sigma_y = ACA^\top + \sigma^2 I. \end{align} $

Generative Model.

We define the generative model $f_\theta: \mathbb{R}^d \to \mathbb{R}^d$ as a linear map acting on a standard Gaussian latent variable $z$:

$ \begin{align} &z \sim p(z) = \mathcal{N}(z|0, I), \ &x = f_\theta(z) = K_\theta z + b_\theta, \end{align} $

where $\theta = {K_\theta, b_\theta}$ are the learnable parameters with $K_\theta \in \mathbb{R}^{d \times d}$ and $b_\theta \in \mathbb{R}^d$. The induced model distribution is $p_\theta(x) = \mathcal{N}(b_\theta, K_\theta K_\theta^\top)$.

Amortized Inference (Adapter).

We parameterize the variational posterior (noise adapter) $q_\phi(z|y)$ as a multivariate Gaussian distribution:

$ \begin{align} q_\phi(z|y) = \mathcal{N}(\mu_\phi(y), \Sigma_\phi(y)), \end{align} $

where $\mu_\phi: \mathbb{R}^{d_y} \to \mathbb{R}^d$ and $\Sigma_\phi: \mathbb{R}^{d_y} \to \mathbb{R}^{d\times d}$ are generally parameterized by neural networks. While one may optionally restrict $\Sigma_\phi(y)$ to be a diagonal matrix for computational efficiency.

In the following sections, we will derive the optimal solutions for $\theta = {K_\theta, b_\theta}$ and $\phi$ under the separate training and joint training paradigms, respectively.

Training Objective.

Recall that in the general framework, we minimized a joint objective consisting of a data matching term, observation matching term, and a KL divergence term:

$ \begin{split} \mathcal{L}(\theta, \phi) = &\underbrace{\mathbb{E}{y \sim p{\mathrm{data}}(y)} \mathbb{E}{z \sim q\phi(z|y)} \left[\frac{1}{2\sigma^2} | y - A f_\theta(z) |^2 \right]}{\mathcal{L}{\text{obs}}: \text{ Observation Loss}} \ &+ \underbrace{\mathbb{E}{x \sim p{\mathrm{data}}(x)} \mathbb{E}{y \sim p(y|x)} \mathbb{E}{z \sim q_\phi(z|y)} \left[\frac{1}{2\tau^2} | x - f_\theta(z) |^2 \right]}{\mathcal{L}{\text{data}}: \text{ Data Fitting Loss}}\ &+ \underbrace{\mathbb{E}{y \sim p{\mathrm{data}}(y)} \left[\mathrm{KL}(q_\phi(z|y) , ||, p(z)) \right]}{\mathcal{L}{\mathrm{KL}}: \text{ KL Loss}}. \end{split}\tag{13} $

In the linear-Gaussian theoretical analysis, the generative map $f_\theta(z) = K_\theta z + b_\theta$ is explicitly parameterized as a single-step affine transformation. Note that $\mathcal{L}{\text{data}}$ corresponds to the negative expected log-likelihood term $-\mathbb{E} \left[\log \mathcal{N}(x|f{\theta}(z), \tau^2 I) \right]$.

Definition 4: Matrix Sets and Measure

We denote the set of $d \times d$ orthogonal matrices as the orthogonal group $\mathbb{O}(d) := { Q \in \mathbb{R}^{d \times d} \mid Q^\top Q = I }$. The space $\mathbb{O}(d)$ is equipped with the unique normalized Haar measure $\nu_{\mathbb{O}(d)}$, representing the uniform distribution over the group. Furthermore, let $\mathbb{S}^d$ represent the space of $d \times d$ real symmetric matrices. The subsets of symmetric positive semi-definite (SPSD) and symmetric positive definite (SPD) matrices are denoted by $\mathbb{S}+^d := { M \in \mathbb{S}^d \mid x^\top M x \ge 0, \forall x \in \mathbb{R}^d }$ and $\mathbb{S}{++}^d := { M \in \mathbb{S}^d \mid x^\top M x > 0, \forall x \in \mathbb{R}^d \setminus {0} }$, respectively. We denote the set of $d \times d$ real diagonal matrices as $\mathbb{D}(d) := { \mathrm{diag}(d_1, \dots, d_d) \mid d_i \in \mathbb{R} }$. We denote the determinant of a square matrix $M$ by $|M|$.

Lemma 5: Optimal Generative Parameters via KL Minimization

Consider the data distribution $p_{\mathrm{data}}(x) = \mathcal{N}(m, C)$ and the induced model distribution $p_\theta(x) = \mathcal{N}(b_\theta, \Sigma_\theta)$ with $\Sigma_\theta = K_\theta K_\theta^\top$. Let $C = U \Lambda^2 U^\top$ be the eigen-decomposition of the data distribution covariance, where $U \in \mathbb{O}(d)$ and $\Lambda \in \mathbb{D}(d)$ has positive entries. The set of optimal parameters $\Theta^* := \arg\min_{\theta} \mathrm{KL}(p_{\mathrm{data}}(x) , ||, p_\theta(x))$ is given by:

$ \Theta^* = { {K_\theta, b_\theta} \mid b_\theta = m, , K_\theta = U \Lambda Q, , \forall Q \in \mathbb{O}(d) }. $

Proof: The KL divergence between two multivariate Gaussians is minimized if and only if their first and second moments match, i.e., $b_\theta = m$ and $\Sigma_\theta = C$. Substituting the parameterization $\Sigma_\theta = K_\theta K_\theta^\top$ and the eigen-decomposition of $C$, the second condition becomes $K_\theta K_\theta^\top = U \Lambda^2 U^\top = (U\Lambda)(U\Lambda)^\top$. This equality holds if and only if $K_\theta = U \Lambda Q$ for some $Q \in \mathbb{R}^{d \times d}$ such that $Q Q^\top = I$, which implies $Q \in \mathbb{O}(d)$.

Definition: Optimal Loss Value

We define the optimal loss value for any $\theta\in\Theta^*$ and any $\phi$ as:

$ \mathcal{L}\mathrm{opt} = \min{\theta\in\Theta^*, \phi} \mathcal{L}(\theta, \phi). $

Lemma 6

Consider the joint training objective $\mathcal{L}(\theta, \phi)$ in the Linear-Gaussian setting. For fixed generative parameters $\theta = {K_\theta, b_\theta}$, the optimal variational posterior $q_{\phi^*}(z|y) = \mathcal{N}(\mu^*(y), \Sigma^*(y))$ that minimizes the loss Equation 13 (under the constratint that $\Sigma(y)\in\mathbb{S}_{++}^d$) is given by:

$ \begin{align} \mu^*(y) &:= K_\phi y + b_\phi, \ \Sigma^*(y) &:= \Sigma_\phi, \end{align} $

where

$ \begin{align} \Sigma_\phi &:= \left(I_d + \frac{1}{\tau^2} K_\theta^\top K_\theta + \frac{1}{\sigma^2} K_\theta^\top A^\top A K_\theta \right)^{-1}, \tag{a} \ K_\phi &:= \Sigma_\phi K_\theta^\top \left(\frac{1}{\sigma^2} A^\top + \frac{1}{\tau^2} K \right), \tag{b} \ b_\phi &:= \Sigma_\phi K_\theta^\top \left[-\frac{1}{\sigma^2} A^\top A b_\theta + \frac{1}{\tau^2} (I_d - K A) m - \frac{1}{\tau^2} b_\theta \right], \tag{c} \end{align}\tag{14} $

and $K = C A^\top (A C A^\top + \sigma^2 I_{d_y})^{-1}$ denotes the Kalman gain matrix associated with the data distribution. In particular, this shows that the optimal covariance $\Sigma^*$ is independent of $y$, and the optimal mean $\mu^*(y)$ is an affine function of $y$

Proof: The total loss is expressed as the expectation $\mathcal{L} = \mathbb{E}{y \sim p(y)} [J(y; \mu, \Sigma)]$, where $\mu := \mu\phi(y)$ and $\Sigma := \Sigma_\phi(y)$. The pointwise objective $J(y; \mu, \Sigma)$ is

$ \begin{align} J(y; \mu, \Sigma) &= \frac{1}{2\sigma^2} \left(| y - A b_\theta - A K_\theta \mu |^2 + \mathrm{Tr}(K_\theta^\top A^\top A K_\theta \Sigma) \right) \nonumber \ &\quad + \frac{1}{2\tau^2} \left(\mathbb{E}{x|y} [| x - b\theta - K_\theta \mu |^2] + \mathrm{Tr}(K_\theta^\top K_\theta \Sigma) \right) \nonumber \ &\quad + \frac{1}{2} \left(\mathrm{Tr}(\Sigma) + |\mu|^2 - \ln |\Sigma| \right). \end{align}\tag{15} $

Differentiating $J$ with respect to $\Sigma$ yields

$ \frac{\partial J}{\partial \Sigma} = \frac{1}{2} \left(\frac{1}{\sigma^2} K_\theta^\top A^\top A K_\theta + \frac{1}{\tau^2} K_\theta^\top K_\theta + I_d \right) - \frac{1}{2} \Sigma^{-1}. $

The stationary point of this gradient corresponds to the constant optimal covariance matrix $\Sigma_\phi$ defined in Equation 14a, naturally satisfying the SPD restriction. Similarly, the gradient with respect to the variational mean $\mu$ is given by

$ \nabla_\mu J = -\frac{1}{\sigma^2} K_\theta^\top A^\top (y - A b_\theta - A K_\theta \mu) - \frac{1}{\tau^2} K_\theta^\top (\mathbb{E}[x|y] - b_\theta - K_\theta \mu) + \mu. $

Rearranging the terms for the condition $\nabla_\mu J = 0$, it follows that

$ \left(I_d + \frac{1}{\sigma^2} K_\theta^\top A^\top A K_\theta + \frac{1}{\tau^2} K_\theta^\top K_\theta \right) \mu = \frac{1}{\sigma^2} K_\theta^\top A^\top (y - A b_\theta) + \frac{1}{\tau^2} K_\theta^\top (\mathbb{E}[x|y] - b_\theta).\tag{16} $

Observing that the coefficient matrix on the left-hand side is $\Sigma_\phi^{-1}$, we obtain the expression for the optimal mean

$ \mu^*(y) = \Sigma_\phi K_\theta^\top \left[\frac{1}{\sigma^2} A^\top y - \frac{1}{\sigma^2} A^\top A b_\theta + \frac{1}{\tau^2} \mathbb{E}[x|y] - \frac{1}{\tau^2} b_\theta \right].\tag{17} $

Substituting the conditional expectation of the data distribution $\mathbb{E}[x|y] = K y + (I_d - K A) m$ into Equation 17 results in

$ \mu^*(y) = \Sigma_\phi K_\theta^\top \left(\frac{1}{\sigma^2} A^\top + \frac{1}{\tau^2} K \right) y + \Sigma_\phi K_\theta^\top \left[-\frac{1}{\sigma^2} A^\top A b_\theta + \frac{1}{\tau^2} (I_d - K A) m - \frac{1}{\tau^2} b_\theta \right].\tag{18} $

This affine structure identifies $K_\phi$ and $b_\phi$ as defined in Equation 14b and 14c.

Corollary 7

We can optimize $\phi\in\Phi$ where

$ \Phi := { (K_\phi, b_\phi, \Sigma_\phi) \mid K_\phi \in \mathbb{R}^{d \times d_y}, b_\phi \in \mathbb{R}^d, \Sigma_\phi \in \mathbb{S}^d_{++} }.\tag{19} $

Proof: The functional forms derived in Lemma 6 show that any $q_\phi$ not belonging to this parametric family is strictly sub-optimal for the joint loss $\mathcal{L}(\theta, \phi)$, thus reducing the search space to the coefficients ${K_\phi, b_\phi, \Sigma_\phi}$.

Definition: Separate Training

The separate training paradigm consists of a two-stage sequential optimization. First, the generative parameters $\theta = {K_\theta, b_\theta}$ are obtained by minimizing the unconditional KL divergence

$ \begin{align} \theta^* = \operatorname*{argmin}\theta \mathrm{KL}\left((f\theta)\sharp \mathcal{N}(0, I) , ||, p{\mathrm{data}}(x) \right), \end{align} $

which, in the linear-Gaussian case, implies $b_{\theta^*} = m$ and $K_{\theta^*} K_{\theta^*}^\top = C$. Subsequently, the variational parameters are determined by fixing $\theta^*$ and minimizing the joint objective

$ \begin{align} \phi^* = \operatorname*{argmin}_\phi \mathcal{L}(\theta^*, \phi). \end{align} $

Definition: Joint Training

The joint training paradigm optimizes $\theta$ and $\phi$ simultaneously by minimizing the regularized objective with $\alpha > 0$,

$ \begin{split} &\min_{\theta, \phi} \mathcal{L}(\theta, \phi) \ &\quad \text{s.t. }(f_\theta)\sharp \mathcal{N}(0, I) = p{\mathrm{data}}. \end{split} $

For the linear-Gaussian framework, this constraint restricts the search space of $\theta$ to the manifold

$ \Theta^* = { {K_\theta, b_\theta} \mid b_\theta = m, K_\theta K_\theta^\top = C }. $

Definition: Solution Sets

Let $\Theta^*$ be the set of optimal generative parameters from Lemma 5, and the set $\Phi$ is defined in Equation 19. We define the diagonal parameter space by restricting the covariance matrix to be diagonal, yielding

$ \Phi_{\mathbb{D}} := { (K_\phi, b_\phi, \Sigma_\phi) \in \Phi \mid \Sigma_\phi \in \mathbb{S}_{++}^d \cap \mathbb{D}(d) }. $

The solution sets for the training paradigms are defined as

$ \begin{align} \mathcal{S}^{\mathrm{sep}} &:= { (\theta^*, \phi), \theta^* \in \Theta^*\mid \phi = \operatorname*{argmin}{\phi' \in \Phi} \mathcal{L}(\theta^*, \phi') }, \ \mathcal{S}^{\mathrm{sep}}{\mathrm{diag}} &:= { (\theta^*, \phi), \theta^* \in \Theta^*\mid \phi = \operatorname*{argmin}{\phi' \in \Phi{\mathbb{D}}} \mathcal{L}(\theta^*, \phi') }, \ \mathcal{S}^{\mathrm{joint}} &:= { (\theta, \phi) \mid (\theta, \phi) = \operatorname*{argmin}{\theta' \in \Theta^*, \phi' \in \Phi} \mathcal{L}(\theta', \phi') }, \ \mathcal{S}^{\mathrm{joint}}{\mathrm{diag}} &:= { (\theta, \phi) \mid (\theta, \phi) = \operatorname*{argmin}{\theta' \in \Theta^*, \phi' \in \Phi{\mathbb{D}}} \mathcal{L}(\theta', \phi') }. \end{align} $

Lemma 8

For $Q\in\mathbb{O}(d)$ and $\theta(Q) := (U \Lambda Q, m) \in \Theta^*$, there exists a corresponding optimal parameter $\phi(Q) := (K_\phi(Q), b_\phi(Q), \Sigma_\phi(Q)) \in\Phi$ such that the joint loss Equation 13 is invariant to the choice of $Q$, i.e., $\mathcal{L}(\theta(Q), \phi(Q)) = \mathcal{L}_{\mathrm{opt}}$. In particular, we have the explicit expressions

$ \begin{align} \Sigma_\phi(Q) &:= Q^\top \left(I_d + \frac{1}{\tau^2} \Lambda^2 + \frac{1}{\sigma^2} \Lambda U^\top A^\top A U \Lambda \right)^{-1} Q, \tag{a}\ K_\phi(Q) &:= \Sigma_\phi(Q) Q^\top \Lambda U^\top \left(\frac{1}{\sigma^2} A^\top + \frac{1}{\tau^2} K \right), \tag{b}\ b_\phi(Q) &:= \Sigma_\phi(Q) Q^\top \Lambda U^\top \left[-\frac{1}{\sigma^2} A^\top A m + \frac{1}{\tau^2} (I_d - K A) m - \frac{1}{\tau^2} m \right].\tag{c} \end{align}\tag{20} $

Consequently, the solution sets for separate and joint training are

$ \begin{align} \mathcal{S}^{\mathrm{sep}} &= { (\theta(Q_{\mathrm{sep}}), \phi(Q_{\mathrm{sep}})) \mid Q_{\mathrm{sep}} \in \mathbb{O}(d) , \text{is fixed}}, \ \mathcal{S}^{\mathrm{joint}} &= { (\theta(Q), \phi(Q)) \mid \forall Q \in \mathbb{O}(d) }. \end{align} $

Proof: For a fixed $Q \in \mathbb{O}(d)$, let $K_{\theta} = U \Lambda Q$ and $b_\theta = m$. Substituting these into the optimality conditions Equation 14a–Equation 14c yields the parameterized forms of $\Sigma_\phi(Q)$, $K_\phi(Q)$, and $b_\phi(Q)$. The optimal precision matrix $P(Q) := (\Sigma_\phi(Q))^{-1}$ satisfies

$ \begin{align} P(Q) &= I_d + \frac{1}{\tau^2} Q^\top \Lambda U^\top U \Lambda Q + \frac{1}{\sigma^2} Q^\top \Lambda U^\top A^\top A U \Lambda Q \nonumber \ &= Q^\top \left(I_d + \frac{1}{\tau^2} \Lambda^2 + \frac{1}{\sigma^2} \Lambda U^\top A^\top A U \Lambda \right) Q \coloneqq Q^\top H Q, \end{align} $

where $H$ is a SPD matrix independent of $Q$ defined by

$ H \coloneqq I_d + \frac{1}{\tau^2} \Lambda^2 + \frac{1}{\sigma^2} \Lambda U^\top A^\top A U \Lambda. $

According to Lemma 6, the optimal covariance is given by Equation 20a,

$ \Sigma_\phi(Q) = Q^\top H^{-1} Q.\tag{21} $

According to equations 17 and 18, we have the optimal mean of $q_\phi(z|y)$ as

$ \mu_Q(y) = \Sigma_\phi(Q) K_\theta^\top v(y),\tag{22} $

where $v(y)$ is independent of $Q$. Specifically, by plugging the equation 22 and $K_\theta = U\Lambda Q$ into Equation 22, we know the optimal solution $K_\phi(Q)$ and $b_\phi(Q)$ as equations 20b and 20c, respectively.

Then we plug Equation 21, Equation 22 and $K_\theta = U\Lambda Q$ into the pointwise objective $J(y;\mu_Q, \Sigma_Q)$ Equation 15. All terms related to $Q$ will be canceled out because $QQ^\top = Q^\top Q = I$, reducing $J(y;\mu_Q, \Sigma_Q)$ to an expression independent of $Q$. Therefore, for every $Q \in \mathbb{O}(d)$, the pair $(\theta(Q), \phi(Q))$ achieves the global minimum $\mathcal{L}{\mathrm{opt}}$, forming the manifold $\mathcal{S}^{\mathrm{joint}}$. The separate training paradigm uniquely determines $Q{\mathrm{sep}}$ during the pre-training of the generative map, restricting the solution to a singleton.

Lemma 9

For any $(\theta, \phi) \in \mathcal{S}^{\mathrm{joint}}$, the product of the generative and variational weight matrices equals the Kalman gain $K = C A^\top (A C A^\top + \sigma^2 I_{d_y})^{-1}$, i.e., $K_\theta K_\phi = K$. Furthermore, the expected output of the generative inference process recovers the exact Bayesian posterior mean,

$ \mathbb{E}{z \sim q\phi(z|y)} [f_\theta(z)] = \mathbb{E}{p{\mathrm{data}}(x|y)} [x]. $

Specifically, this holds for the separate training where $\mathcal{S}^{\mathrm{sep}} = { (\theta(Q_{\mathrm{sep}}), \phi(Q_{\mathrm{sep}})) }\subset \mathcal{S}^\mathrm{joint}$ for a fixed $Q_{\mathrm{sep}} \in \mathbb{O}(d)$.

Proof: Substituting $\Sigma_\phi$ from Equation 14a into the expression for $K_\phi$ in Equation 14b, and applying the push-through identity $K_\theta (I_d + K_\theta^\top \mathcal{M} K_\theta)^{-1} = (I_d + K_\theta K_\theta^\top \mathcal{M})^{-1} K_\theta$ with $\mathcal{M} := \sigma^{-2} A^\top A + \tau^{-2} I_d$, we have

$ \begin{align} K_\theta K_\phi &= (I_d + C (\sigma^{-2} A^\top A + \tau^{-2} I_d))^{-1} C \left(\sigma^{-2} A^\top + \tau^{-2} K \right) \nonumber \ &= (C^{-1} + \sigma^{-2} A^\top A + \tau^{-2} I_d)^{-1} \left(\sigma^{-2} A^\top + \tau^{-2} K \right). \end{align}\tag{23} $

Using the identity of the Kalman gain, $(C^{-1} + \sigma^{-2} A^\top A) K = \sigma^{-2} A^\top$, and adding $\tau^{-2} K$ to both sides, we have

$ (C^{-1} + \sigma^{-2} A^\top A + \tau^{-2} I_d) K = \sigma^{-2} A^\top + \tau^{-2} K. $

Left-multiplying by $(C^{-1} + \sigma^{-2} A^\top A + \tau^{-2} I_d)^{-1}$ and comparing with Equation 23, we obtain $K_\theta K_\phi = K$.

Consider $\mathbb{E}{z \sim q\phi(z|y)} [f_\theta(z)] = K_\theta (K_\phi y + b_\phi) + b_\theta$. Since $b_\theta = m$ and $K_\theta K_\phi = K$, expanding $K_\theta b_\phi$ via Equation 14c yields

$ \begin{align} K_\theta b_\phi &= K_\theta \Sigma_\phi K_\theta^\top \left[-\sigma^{-2} A^\top A m + \tau^{-2} (I_d - KA) m - \tau^{-2} m \right] \nonumber \ &= -(C^{-1} + \sigma^{-2} A^\top A + \tau^{-2} I_d)^{-1} (\sigma^{-2} A^\top A + \tau^{-2} KA) m \nonumber \ &= -(C^{-1} + \sigma^{-2} A^\top A + \tau^{-2} I_d)^{-1} (\sigma^{-2} A^\top + \tau^{-2} K) Am = -KAm. \end{align} $

Therefore

$ \mathbb{E}{z \sim q\phi(z|y)} [f_\theta(z)] = Ky - KAm + m = m + K(y - Am) = \mathbb{E}{p{\mathrm{data}}(x|y)} [x]. $

Proposition 10

Assume that the observation operator $A$ and data covariance $C$ are in general position such that they are not simultaneously diagonalizable in the canonical basis. For separate training with $Q_\mathrm{sep}$ uniformly randomly sampled from $\mathbb{O}(d)$, the following properties hold:

- Sub-optimality of Separate Training: $\mathcal{S}^{\mathrm{sep}} \cap \mathcal{S}^{\mathrm{sep}}{\mathrm{diag}} = \emptyset$ a.s. w.r.t. $\nu{\mathbb{O}(d)}$ (Definition 4).

- Optimality of Joint Training: $\mathcal{S}^{\mathrm{joint}} \cap \mathcal{S}^{\mathrm{joint}}{\mathrm{diag}} \neq \emptyset$ and $\mathcal{S}^{\mathrm{joint}}{\mathrm{diag}} \subset \mathcal{S}^{\mathrm{joint}}$

Proof: For $Q\in\mathbb{O}(d)$ and $\theta(Q) \in \Theta^*$, recall from the proof in Lemma 8 by

$ P(Q) := (\Sigma_\phi^*(Q))^{-1} = Q^\top H Q, \quad \text{where} \quad H := I_d + \frac{1}{\tau^2} \Lambda^2 + \frac{1}{\sigma^2} \Lambda U^\top A^\top A U \Lambda. $

Let $\Sigma_\phi = \mathrm{diag}(\sigma_1^2, \sigma_2^2, \ldots, \sigma_d^2) \in \mathbb{D}(d)$. The covariance-dependent objective $J(\Sigma_\phi)$ according to 15 and its minimizer $\Sigma^*_{\mathrm{diag}, \phi}$ are:

$ \begin{align} J(\Sigma_\phi) &= \frac{1}{2} \sum_{i=1}^d \left(P_{ii}(Q) \sigma_{i}^2 - \ln \sigma_i^2 \right) + \text{const}, \ ^{-1} &= \mathrm{diag}(P(Q)) = \mathrm{diag}(\Sigma^*_\phi(Q)^{-1}), \end{align} $

where $\Sigma^*_\phi(Q) = P(Q)^{-1}$. The optimality gap $\Delta J(Q)$ between $\Sigma^*_{\mathrm{diag}, \phi}(Q)$ and the unconstrained $\Sigma^*_\phi(Q) = P(Q)^{-1}$ is:

$ \begin{split} \Delta J(Q) &:= J(\Sigma^*_{\mathrm{diag}, \phi}(Q)) - J(\Sigma^*_\phi(Q)) \ &= \frac{1}{2} \left(\mathrm{Tr}(P(Q) \Sigma^*_{\mathrm{diag}, \phi}(Q)) - \ln |\Sigma^*_{\mathrm{diag}, \phi}(Q)| \right) - \frac{1}{2} \left(\mathrm{Tr}(P(Q) \Sigma^*_\phi(Q)) - \ln |\Sigma^*_\phi(Q)| \right) \ &= \frac{1}{2} \left(\sum_{i=1}^d P_{ii}(Q) P_{ii}(Q)^{-1} + \ln \prod_{i=1}^d P_{ii}(Q) \right) - \frac{1}{2} \left(\mathrm{Tr}(I_d) + \ln |P(Q)| \right) \ &= \frac{1}{2} \left(d + \ln \prod_{i=1}^d P_{ii}(Q) \right) - \frac{1}{2} \left(d + \ln |P(Q)| \right) \ &= \frac{1}{2} \ln \left(\frac{\prod_{i=1}^d P_{ii}(Q)}{|P(Q)|} \right). \end{split} $

By Hadamard's inequality, $\Delta J(Q) \geq 0$ and the equality holds if and only if $P(Q) \in \mathbb{D}(d)$.

In separate training, $Q_{\mathrm{sep}}$ is fixed during pre-training and global optimality requires $P(Q_{\mathrm{sep}}) = Q_{\mathrm{sep}}^\top H Q_{\mathrm{sep}} \in \mathbb{D}(d)$. By the general position assumption of $A$ and $C$, $H = I_d + \tau^{-2} \Lambda^2 + \sigma^{-2} \Lambda U^\top A^\top A U \Lambda$ is not a diagonal matrix. The set of matrices ${Q \in \mathbb{O}(d) \mid Q^\top H Q \in \mathbb{D}(d)}$ corresponds exclusively to the orthogonal matrices whose columns are the eigenvectors of $H$. Because this forms a finite set of permutation and sign-flip matrices, it holds a measure of zero with respect to the normalized Haar measure $\nu_{\mathbb{O}(d)}$ on the continuous manifold $\mathbb{O}(d)$ (refer to the Definition 4).

According to the equality condition of the Hadamard's inequality, $P(Q_{\mathrm{sep}}) \notin \mathbb{D}(d)$ a.s., leading to $\Delta J(Q) > 0$, which implies that the optimal loss value reached by the diagonal constrained $\Sigma^*_{\mathrm{diag}, \phi}(Q)$ is bigger than $\mathcal{L}\mathrm{opt}$. According to Lemma 8, $\mathcal{S}^\mathrm{sep}$ has the optimal loss $\mathcal{L}\mathrm{opt}$. Therefore $\mathcal{S}^{\mathrm{sep}} \cap \mathcal{S}^{\mathrm{sep}}_{\mathrm{diag}} = \emptyset$.

In joint training, $Q$ is a learnable parameter optimized over $\mathbb{O}(d)$. The global minimum $\mathcal{L}{\mathrm{opt}}$ under the diagonal constraint is achieved if and only if the optimality gap vanishes, $\Delta J(Q) = 0$. By Hadamard's inequality, this condition holds if and only if $P(Q) = Q^\top H Q \in \mathbb{D}(d)$, which restricts $Q$ to the set of eigen-bases $\mathcal{V}(H) := { Q \in \mathbb{O}(d) \mid Q^\top H Q \in \mathbb{D}(d) }$. For any $Q \in \mathcal{V}(H)$, the optimal variational covariance $\Sigma^*{\phi}(Q) = P(Q)^{-1}$ inherently belongs to $\mathbb{D}(d)$. Because these specific configurations satisfy the diagonal constraint while simultaneously achieving the unconstrained global minimum, it follows that $\mathcal{S}^{\mathrm{joint}}{\mathrm{diag}} = { (\theta(Q), \phi(Q)) \mid Q \in \mathcal{V}(H) } \subset \mathcal{S}^{\mathrm{joint}}$, thereby confirming $\mathcal{S}^{\mathrm{joint}} \cap \mathcal{S}^{\mathrm{joint}}{\mathrm{diag}} \neq \emptyset$.

Lemma 11