Omnilingual MT: Machine Translation for {1,600} Languages

Omnilingual MT Team, Belen Alastruey$^{\dagger}$, Niyati Bafna$^{\dagger}$, Andrea Caciolai$^{\dagger}$, Kevin Heffernan$^{\dagger}$, Artyom Kozhevnikov$^{\dagger}$, Christophe Ropers$^{\dagger}$, Eduardo Sánchez$^{\dagger}$, Charles-Eric Saint-James$^{\dagger}$, Ioannis Tsiamas$^{\dagger}$, Chierh Cheng$^{\S}$, Joe Chuang$^{\S}$, Paul-Ambroise Duquenne$^{\S}$, Mark Duppenthaler$^{\S}$, Nate Ekberg$^{\S}$, Cynthia Gao$^{\S}$, Pere Lluís Huguet Cabot$^{\S}$, João Maria Janeiro$^{\S}$, Jean Maillard$^{\S}$, Gabriel Mejia Gonzalez$^{\S}$, Holger Schwenk$^{\S}$, Edan Toledo$^{\S}$, Arina Turkatenko$^{\S}$, Albert Ventayol-Boada$^{\S}$, Rashel Moritz$^{\ddagger}$, Alexandre Mourachko$^{\ddagger}$, Surya Parimi$^{\ddagger}$, Mary Williamson$^{\ddagger}$, Shireen Yates$^{\ddagger}$, David Dale$^{\perp}$, Marta R. Costa-jussà$^{\perp}$

FAIR at Meta

$^{\dagger}$ Core Contributors, alphabetical order

$^{\S}$ Other Contributors, alphabetical order

$^{\ddagger}$ Project Management, alphabetical order

$^{\perp}$ Technical Leadership, alphabetical order

Abstract

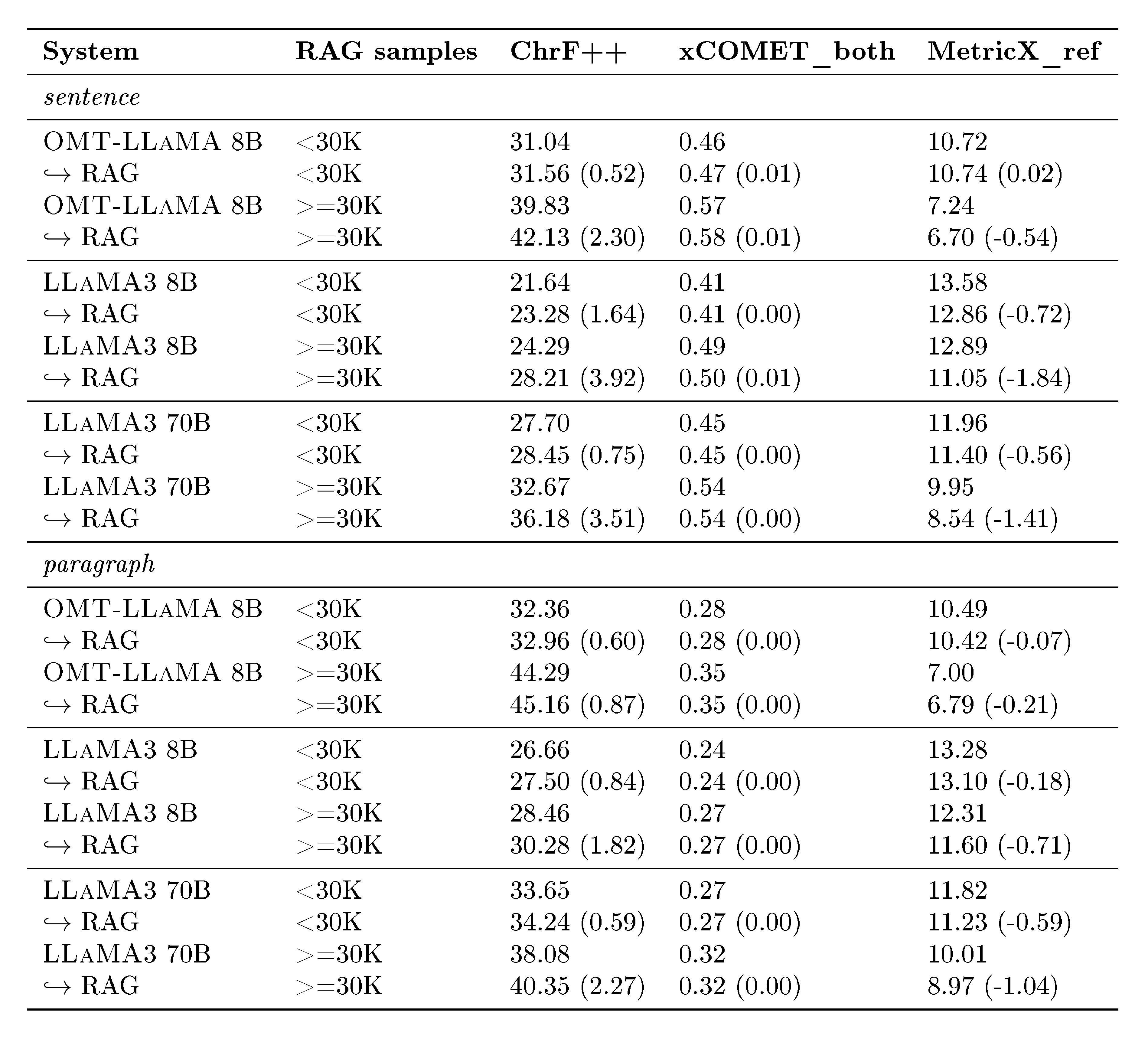

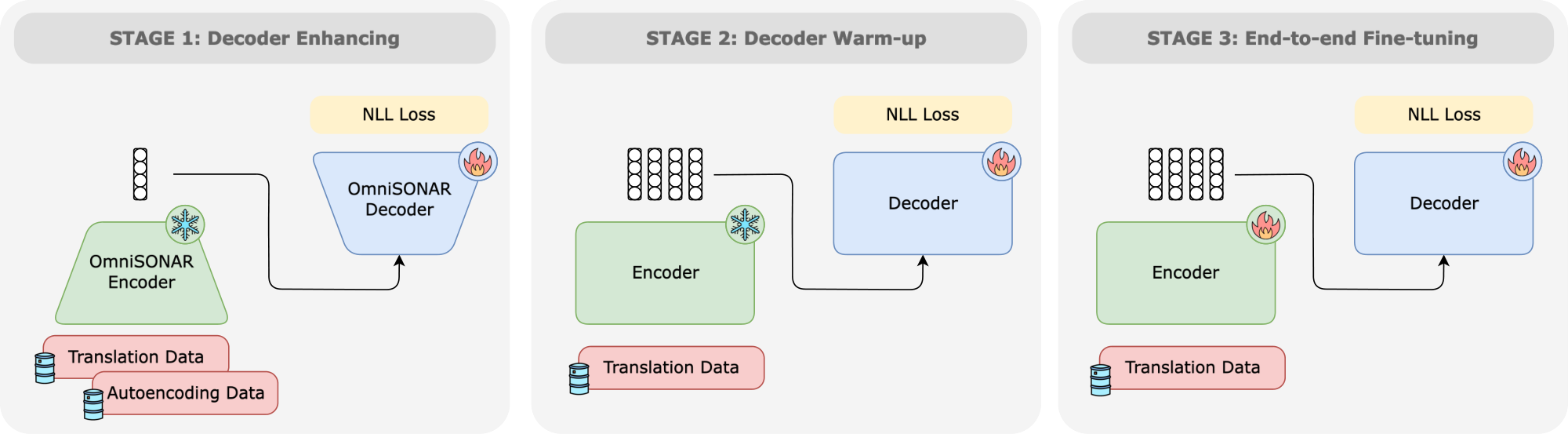

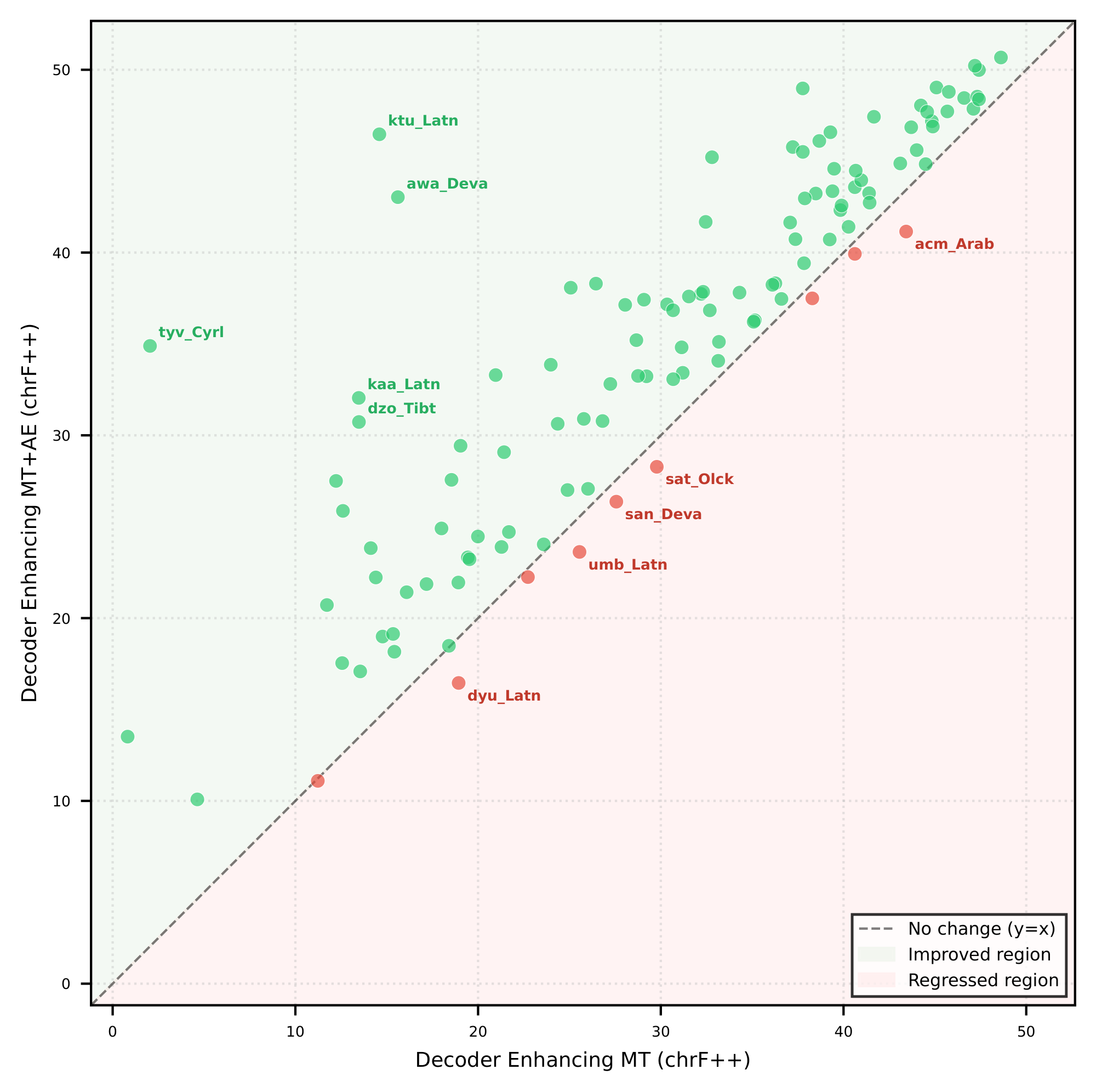

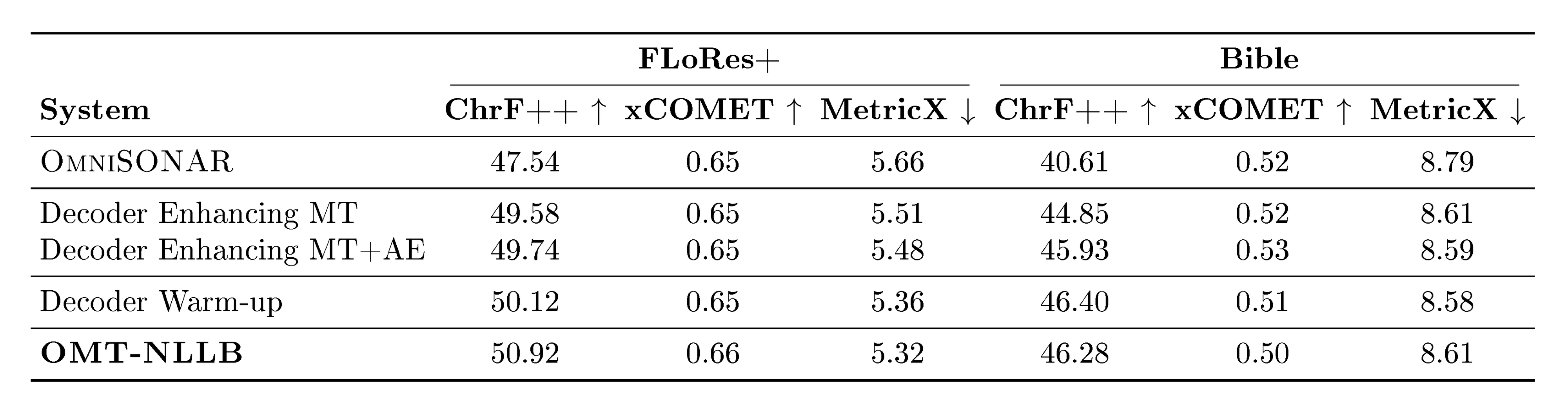

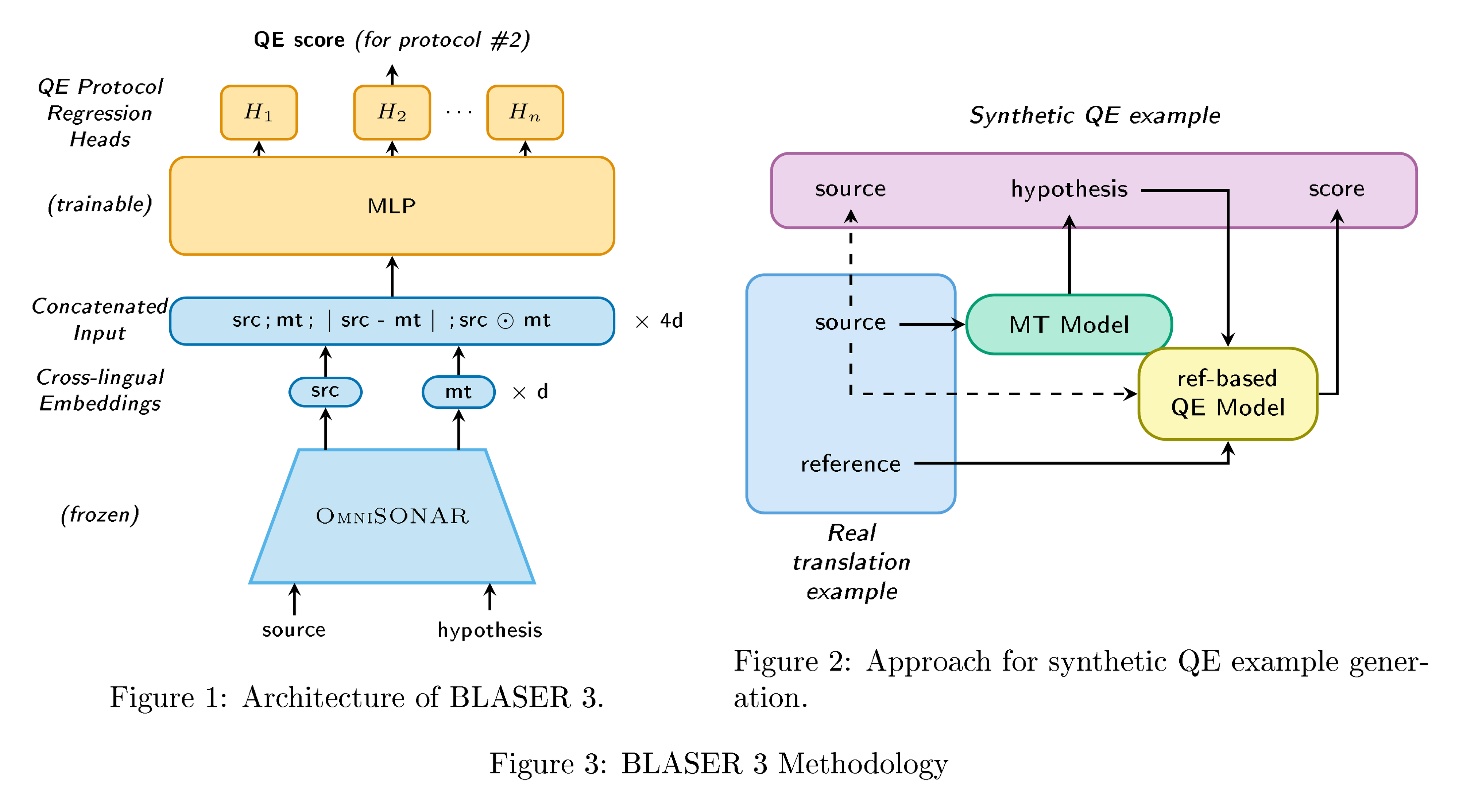

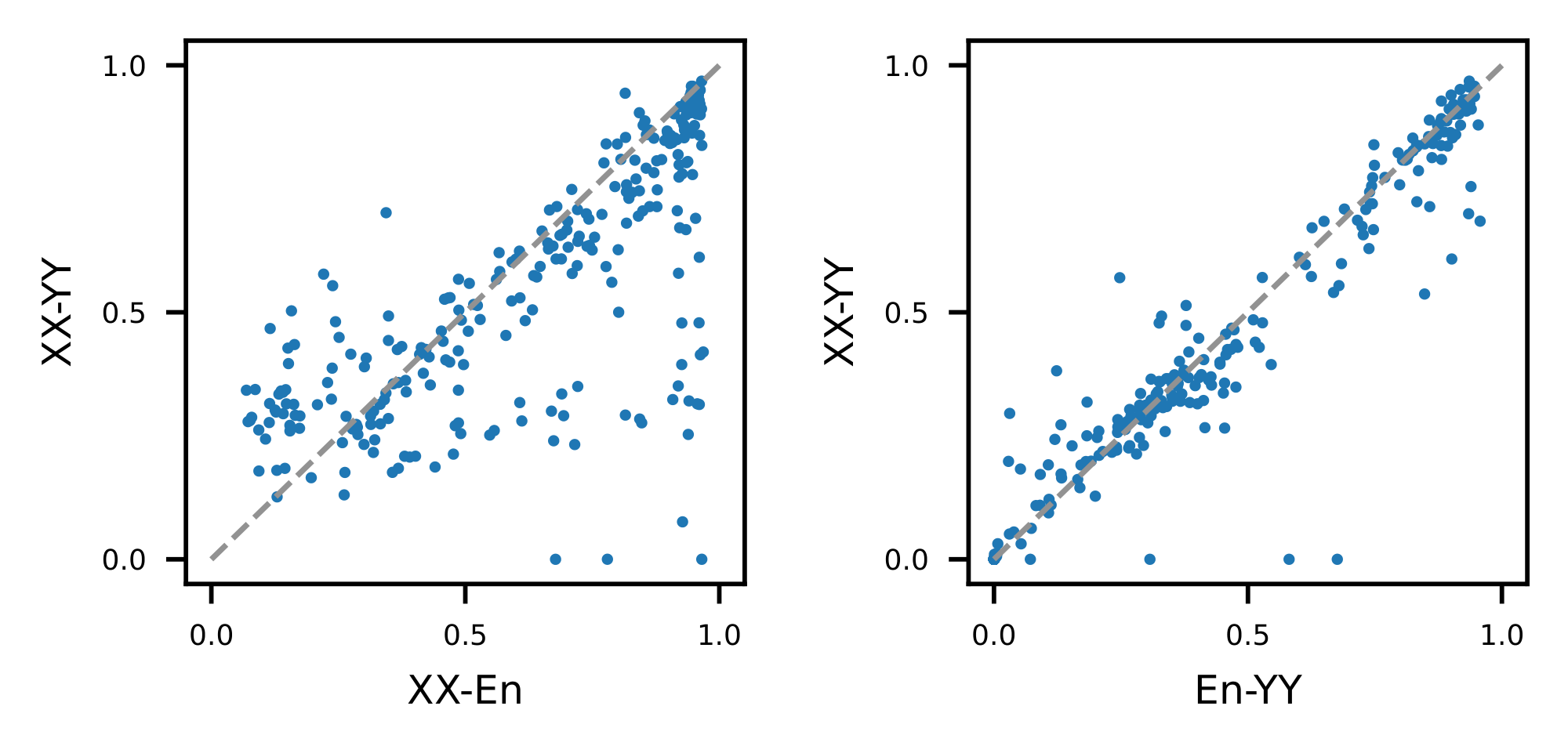

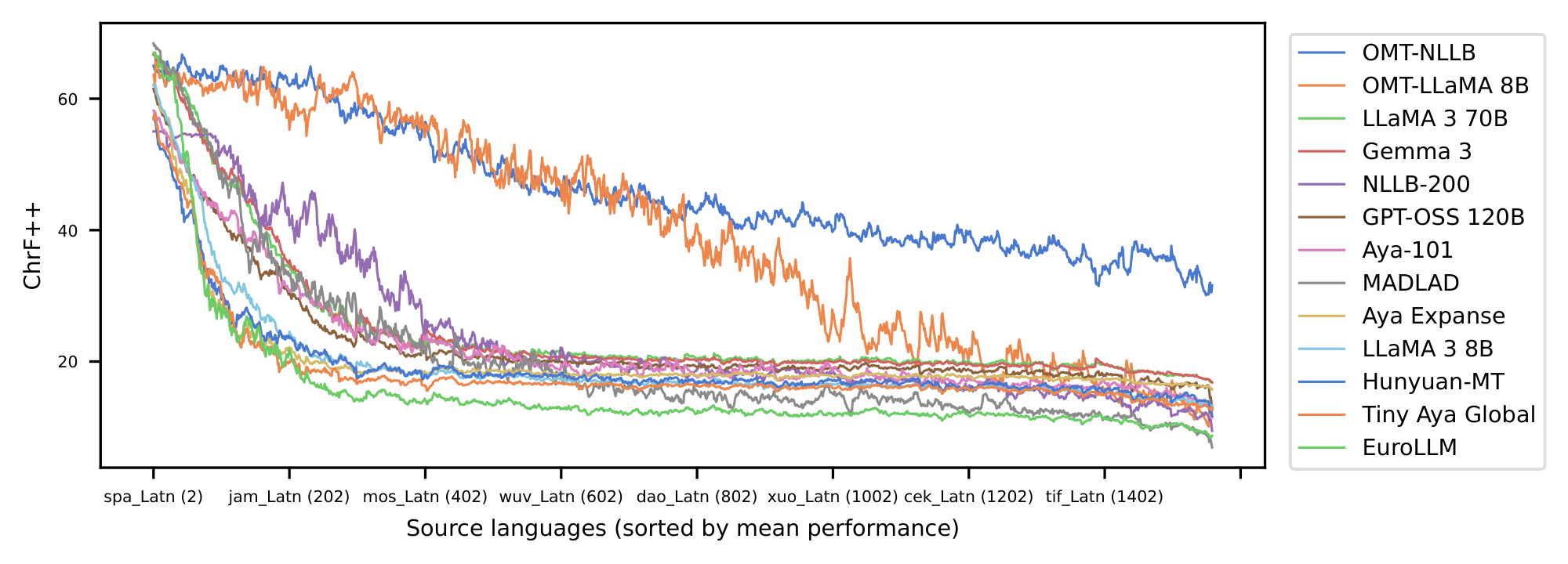

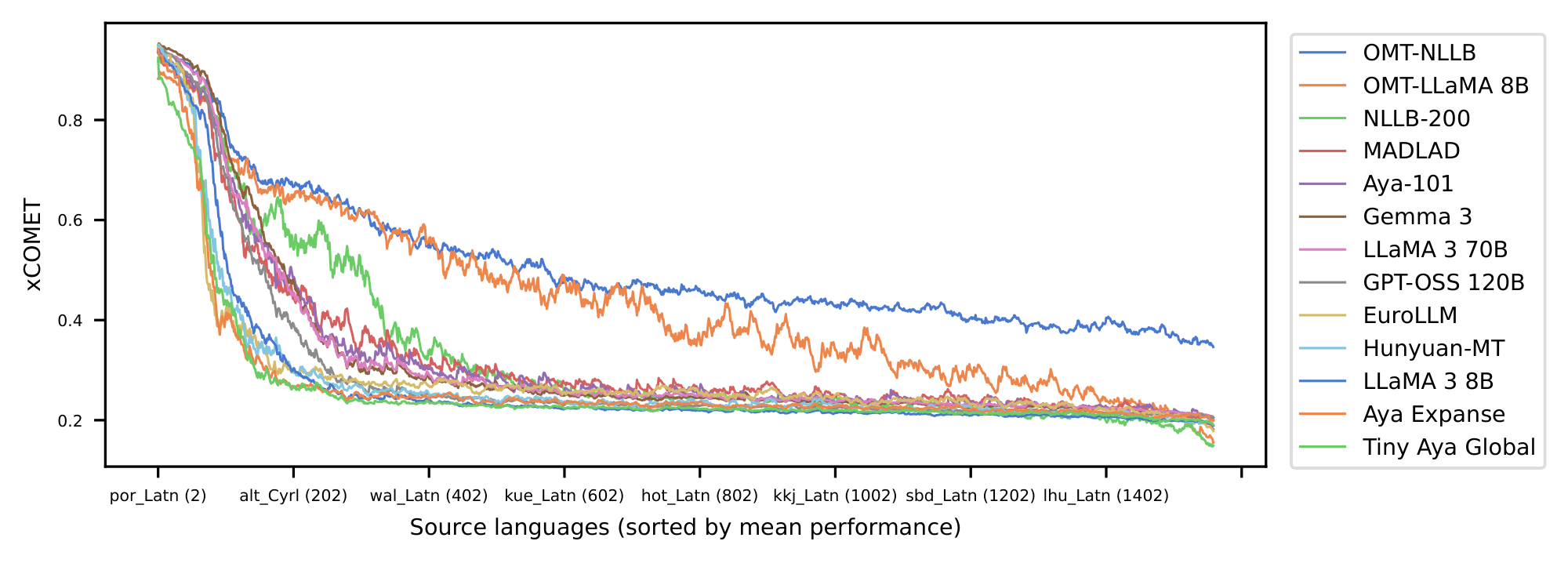

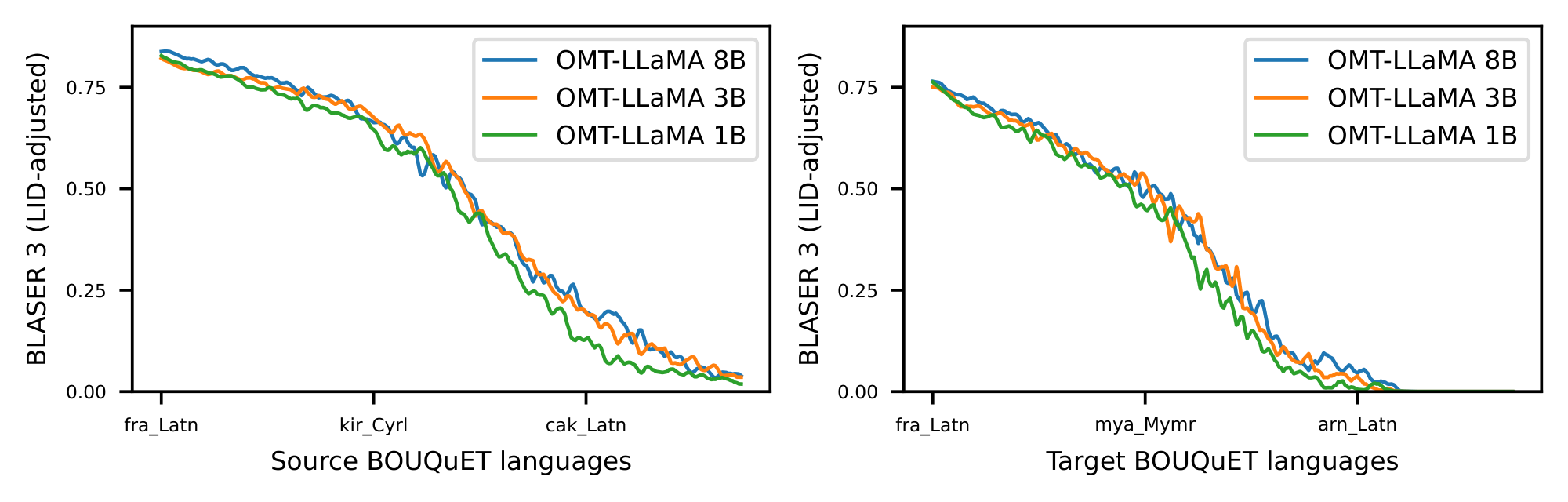

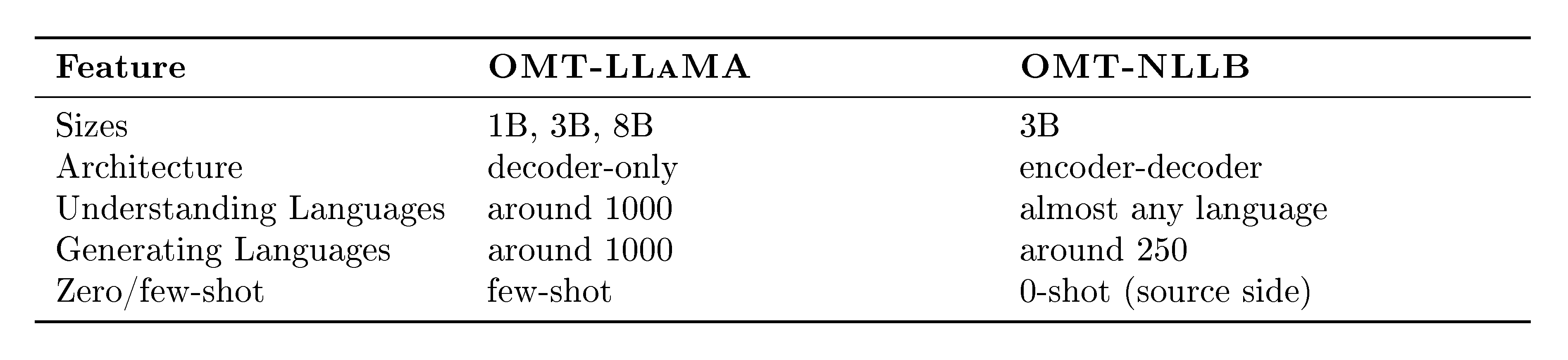

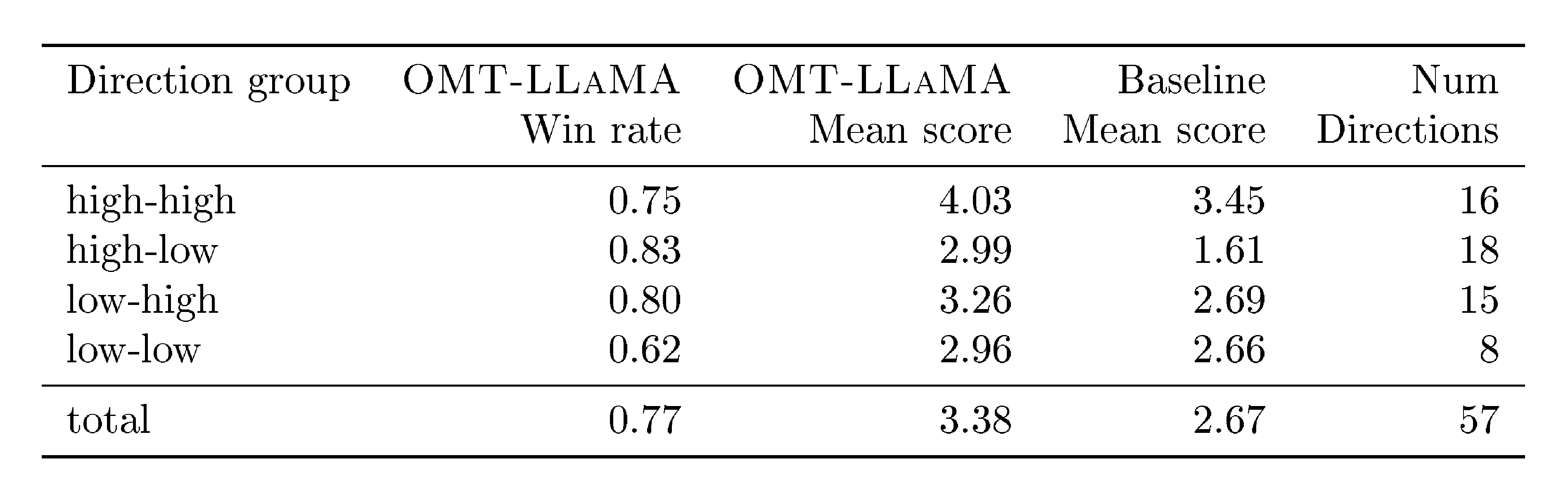

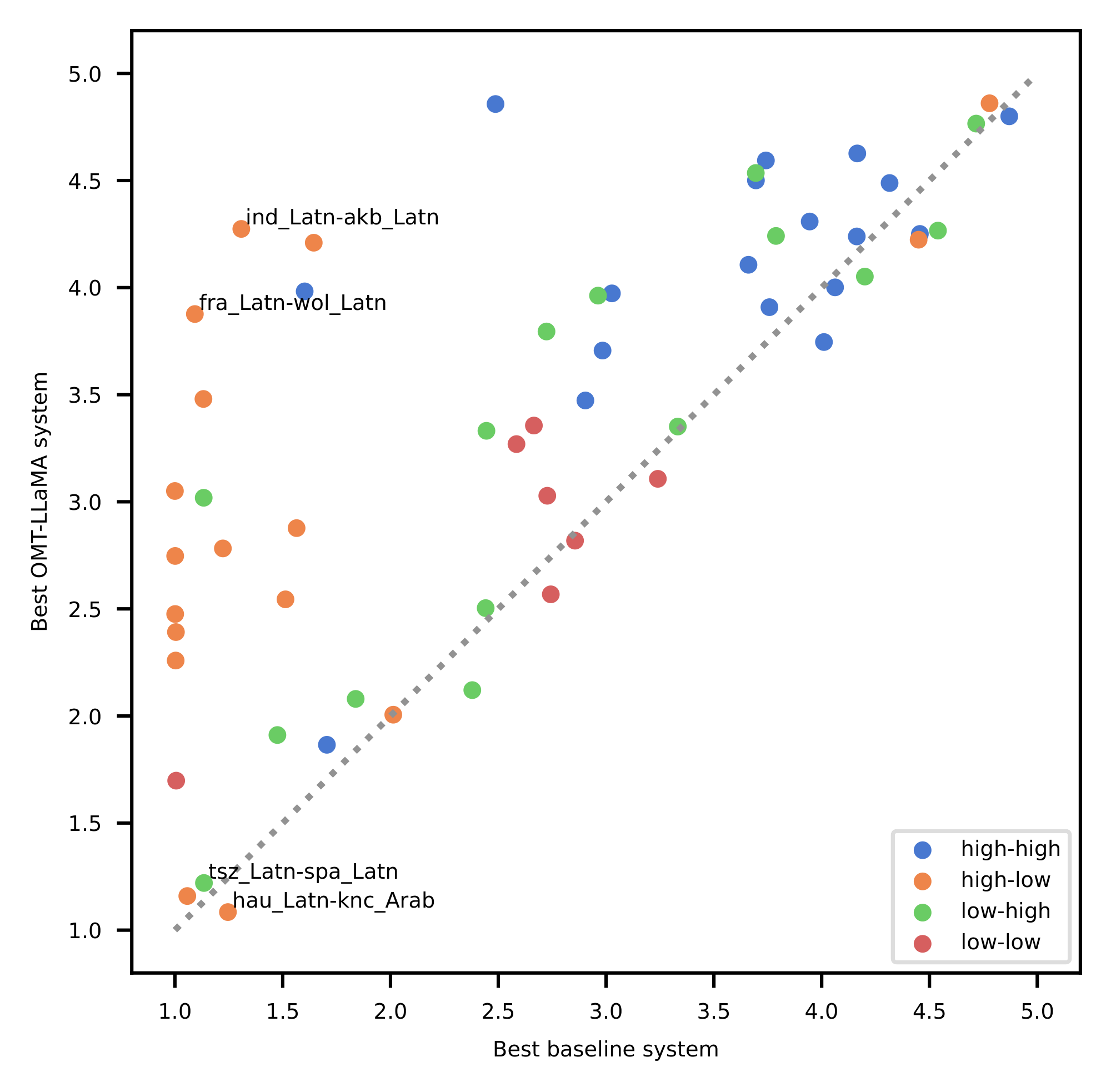

Advances made through No Language Left Behind (NLLB) have demonstrated that high-quality machine translation (MT) scale to 200 languages. Later Large Language Models (LLMs) have been adopted for MT, increasing in quality but not necessarily extending language coverage. Current systems remain constrained by limited coverage and a persistent generation bottleneck: while cross-lingual transfer enables models to somehow understand many undersupported languages, they often cannot generate them reliably, leaving most of the world’s 7,000 languages—especially endangered and marginalized ones—outside the reach of modern MT. Early explorations in extreme scaling offered promising proofs of concept but did not yield sustained solutions. We present Omnilingual Machine Translation (OMT), the first MT system supporting more than 1,600 languages. This scale is enabled by a comprehensive data strategy that integrates large public multilingual corpora with newly created datasets, including manually curated MeDLEY bitext, synthetic backtranslation, and mining, substantially expanding coverage across long-tail languages, domains, and registers. To ensure both reliable and expansive evaluation, we combined standard metrics with a suite of evaluation artifacts: BLASER 3 quality estimation model (reference-free), OmniTOX toxicity classifier, BOUQuET dataset (a newly created, largest-to-date multilingual evaluation collection built from scratch and manually extended across a wide range of linguistic families), and Met- BOUQuET dataset (faithful multilingual quality estimation at scale). We explore two ways of specializing an LLM for machine translation: as a decoder-only model ($\textsc{OMT-LLaMA}$) or as a module in an encoder–decoder architecture ($\textsc{OMT-NLLB}$). The former consists of a model built on $\textsc{LLaMA3}$, with multilingual continual pretraining and retrieval-augmented translation for inference-time adaptation. The latter is a model built on top of a multilingual aligned space ($\textsc{OmniSONAR}$, itself also based on $\textsc{LLaMA3}$), and introduces a training methodology that can exploit non-parallel data, allowing us to incorporate the decoder-only continuous pretraining data into the training of an encoder–decoder architecture. Notably, all our 1B to 8B parameter models match or exceed the MT performance of a 70B LLM baseline, revealing a clear specialization advantage and enabling strong translation quality in low-compute settings. Moreover, our evaluation of English-to- 1,600 translations further shows that while baseline models can interpret undersupported languages, they frequently fail to generate them with meaningful fidelity; $\textsc{OMT-LLaMA}$ models substantially expand the set of languages for which coherent generation is feasible. Additionally, OMT models improve in cross-lingual transfer, being close to solving the “understanding” part of the puzzle in MT for the 1,600 evaluated. Beyond strong out-of-the-box performance, we find that finetuning and retrieval-augmented generation offer additional pathways to improve quality for the given subset of languages when targeted data or domain knowledge is available. Our leaderboard and main humanly created evaluation datasets (BOUQuET and Met- BOUQuET) are dynamically evolving towards Omnilinguality and freely available.

Leaderboard and Available Evaluation: https://huggingface.co/spaces/facebook/bouquet

Correspondence: Marta R. Costa-jussà at mailto:[email protected], David Dale at mailto:[email protected]

Executive Summary: The rapid growth of machine translation (MT) has improved communication across languages, but current systems cover only about 200 languages effectively, leaving the vast majority of the world's 7,000 languages—particularly endangered and marginalized ones—out of reach. This gap persists despite advances in large language models (LLMs), which boost quality for major languages but fail to expand coverage reliably. A key issue is the "generation bottleneck": models can often understand low-resource languages through cross-lingual transfer but struggle to produce coherent output in them. With global diversity under threat from language loss, addressing this now is crucial to promote inclusion, support cultural preservation, and enable equitable access to technology in education, policy, and everyday communication.

This document introduces Omnilingual Machine Translation (OMT), a family of MT systems designed to support more than 1,600 languages—the broadest coverage to date. The work evaluates two approaches to specialize LLMs for translation: a decoder-only model (OMT-LLaMA) and an encoder-decoder model (OMT-NLLB), aiming to achieve high quality across diverse languages while overcoming data scarcity and evaluation challenges.

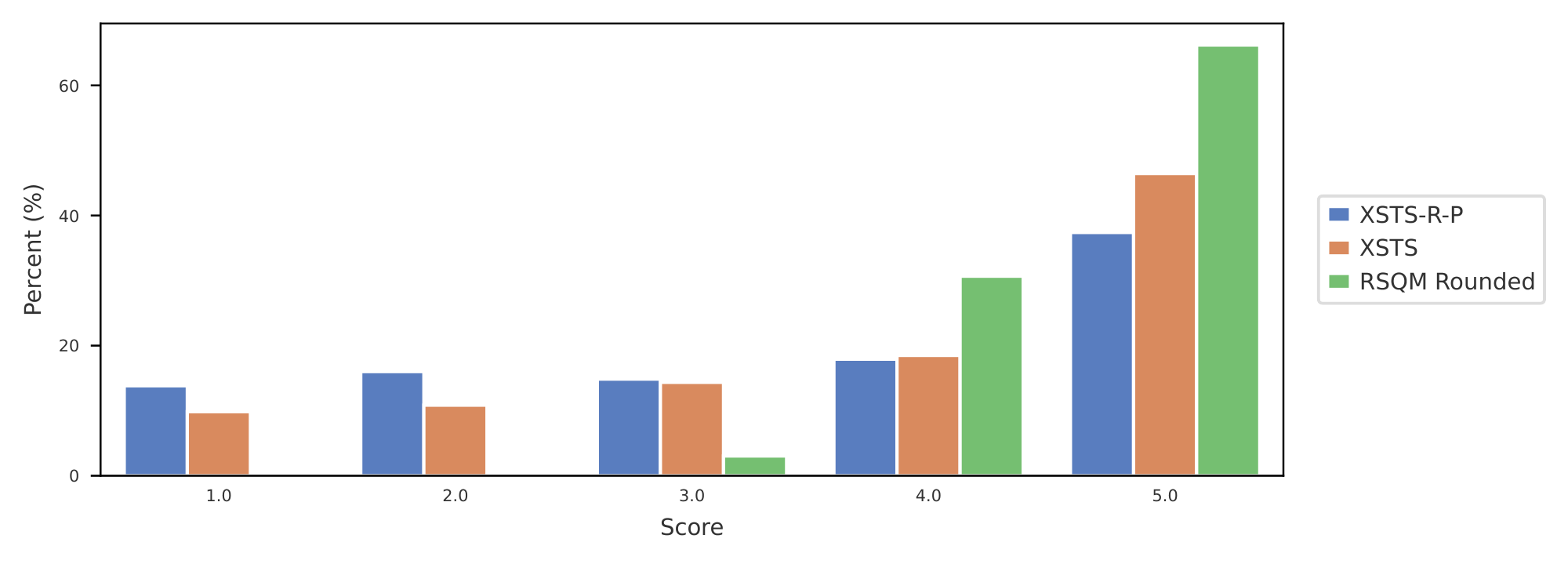

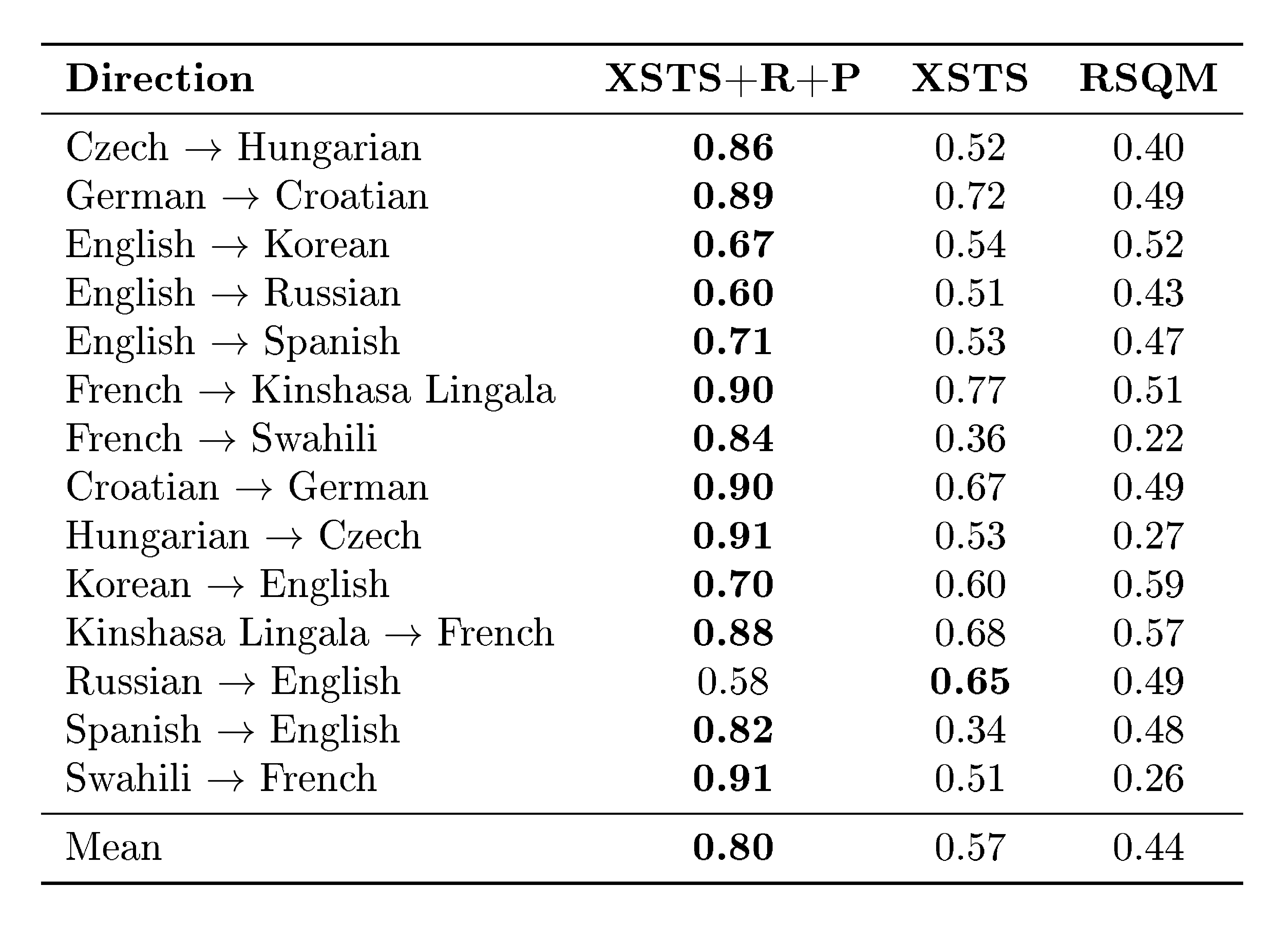

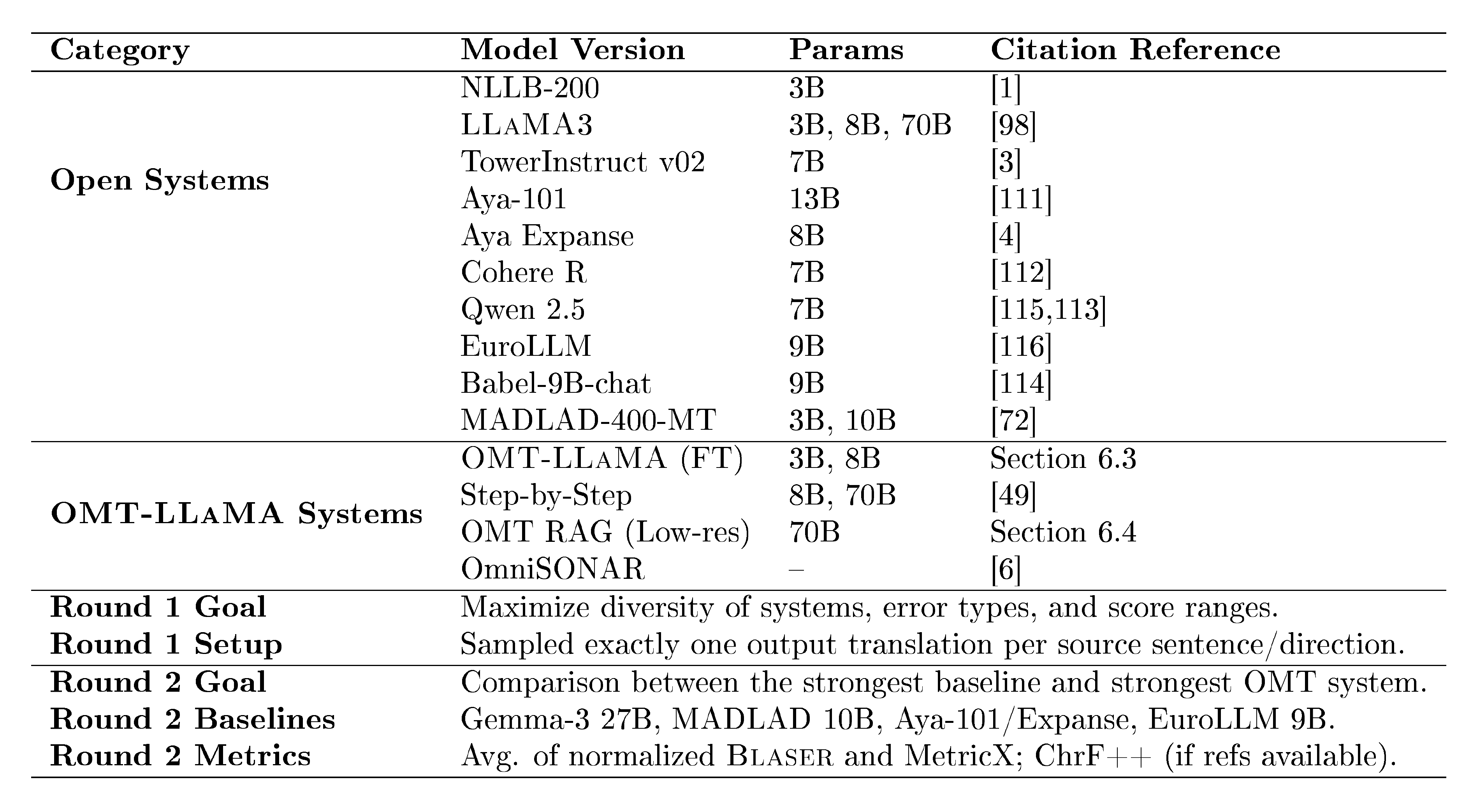

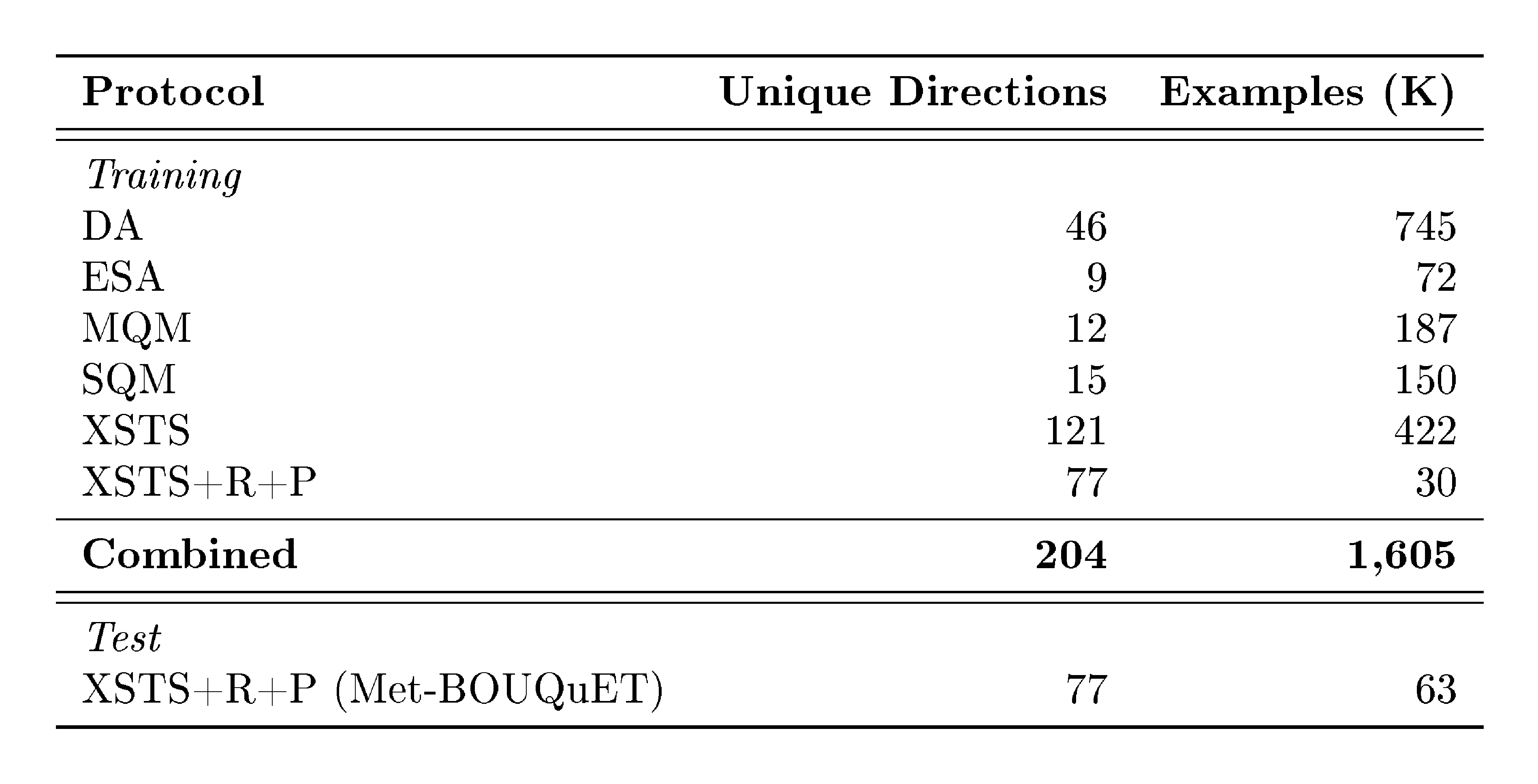

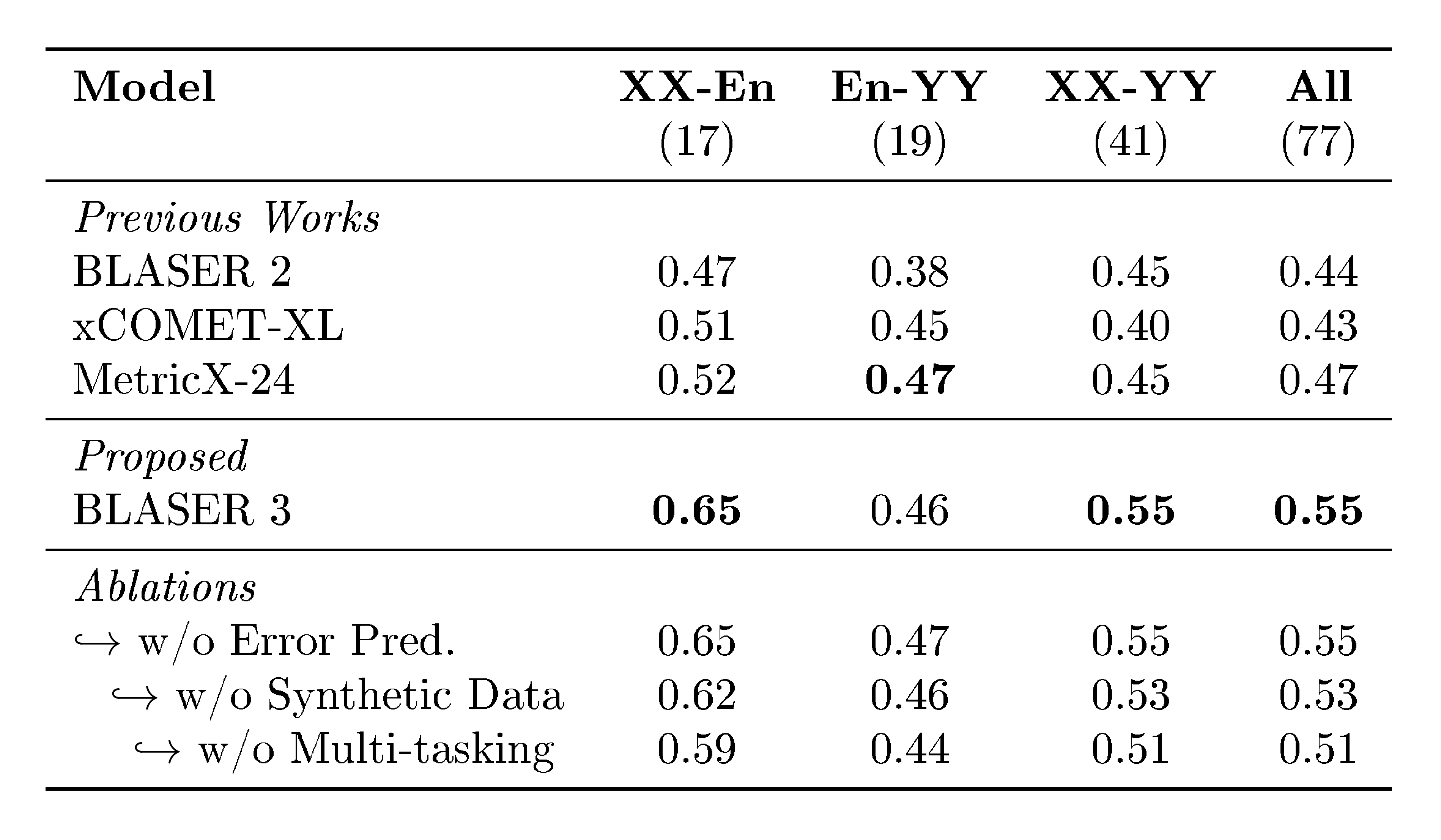

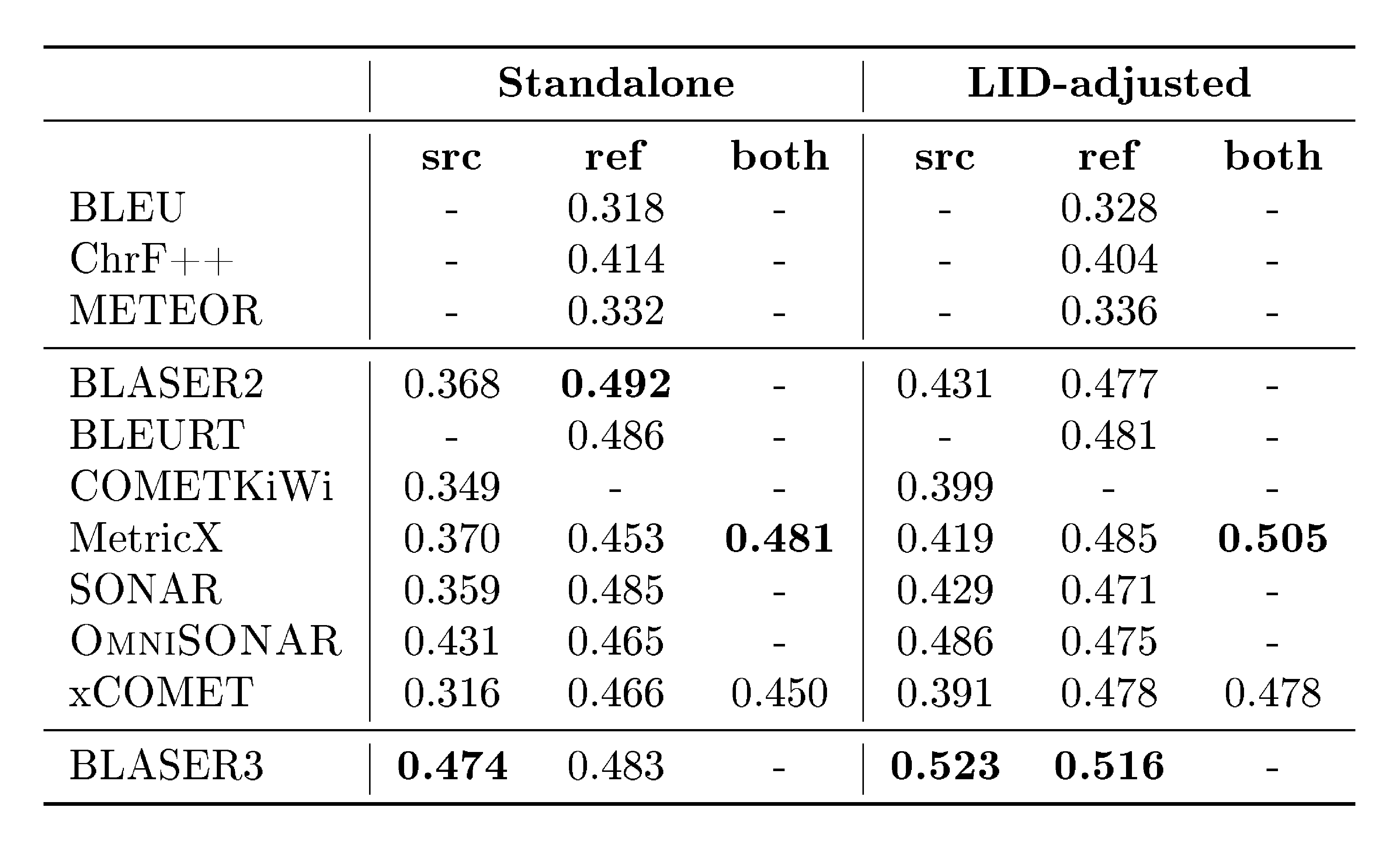

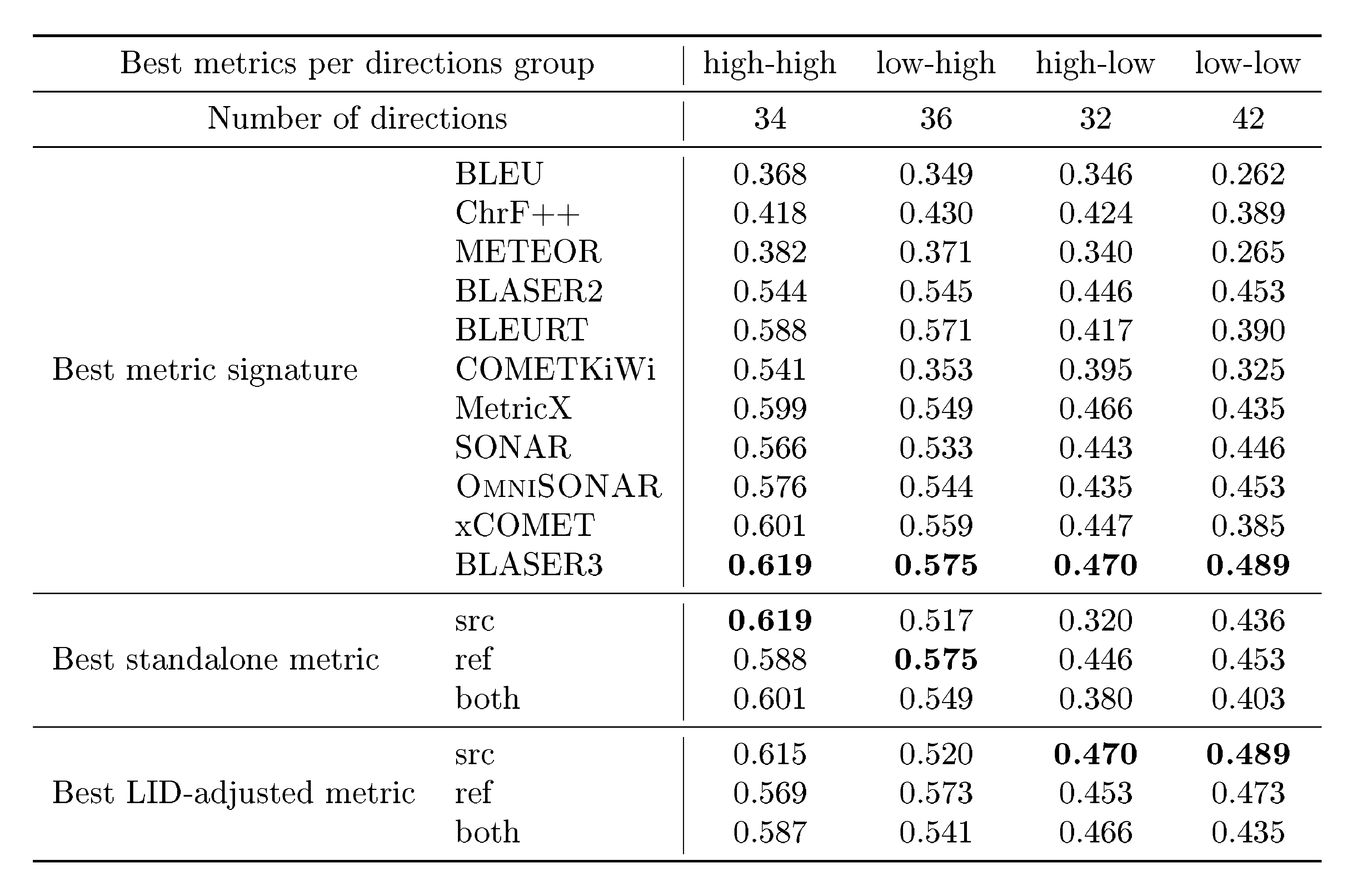

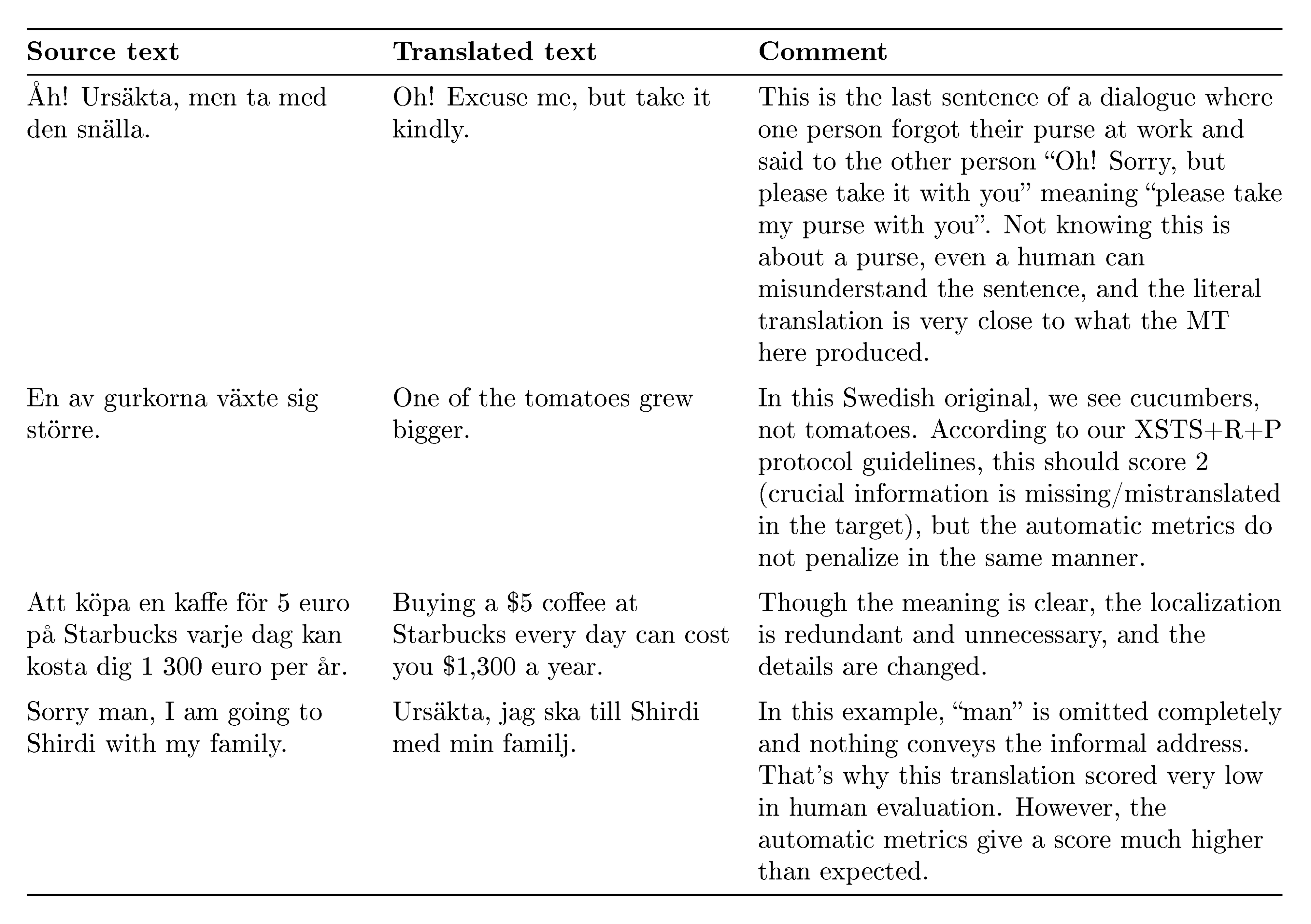

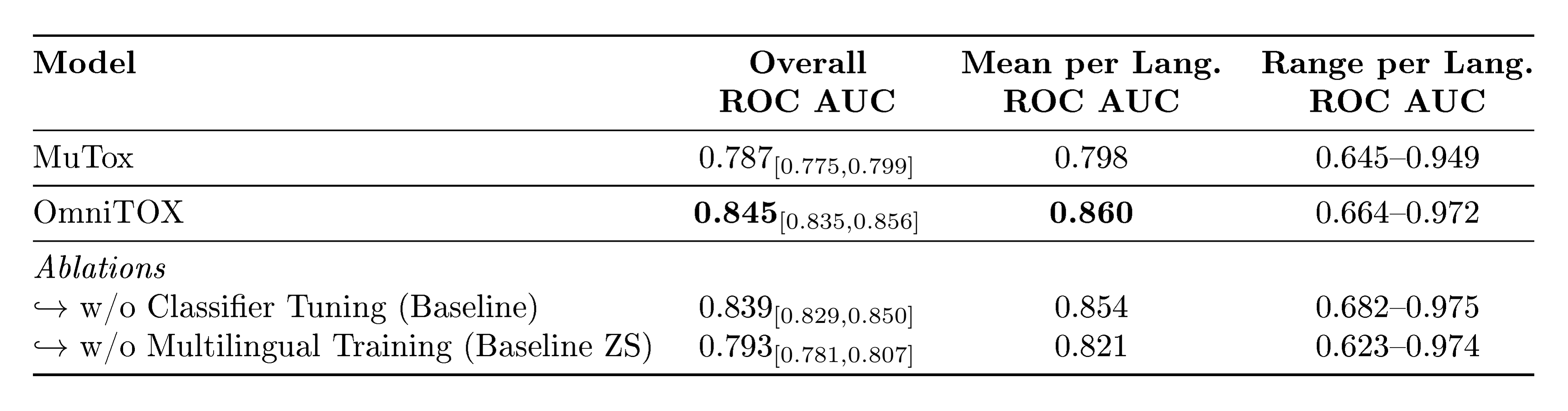

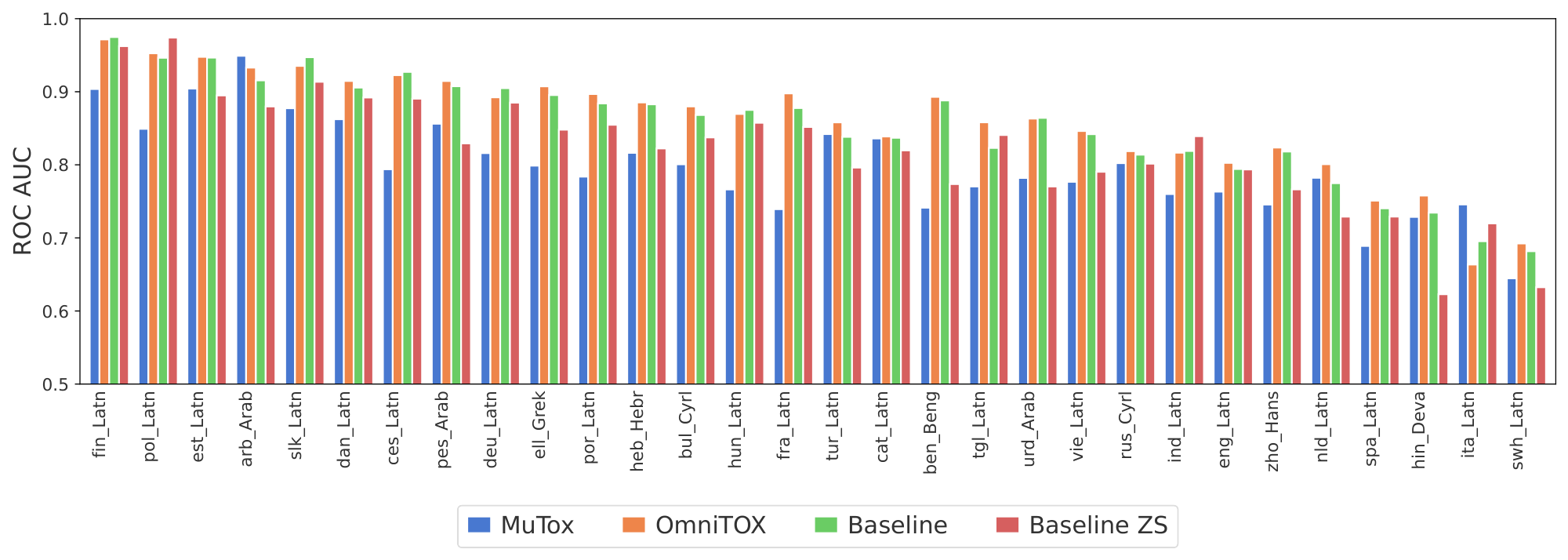

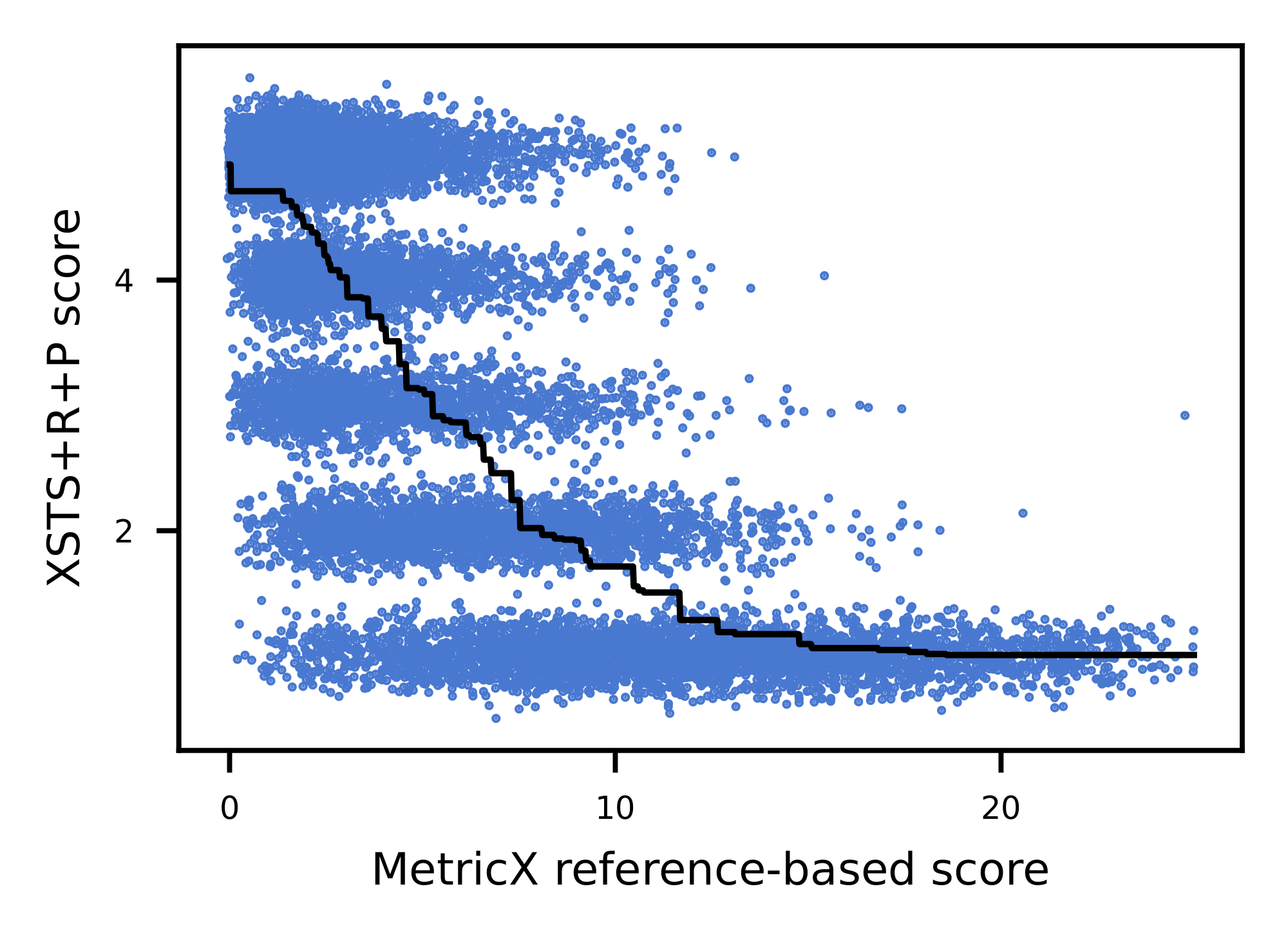

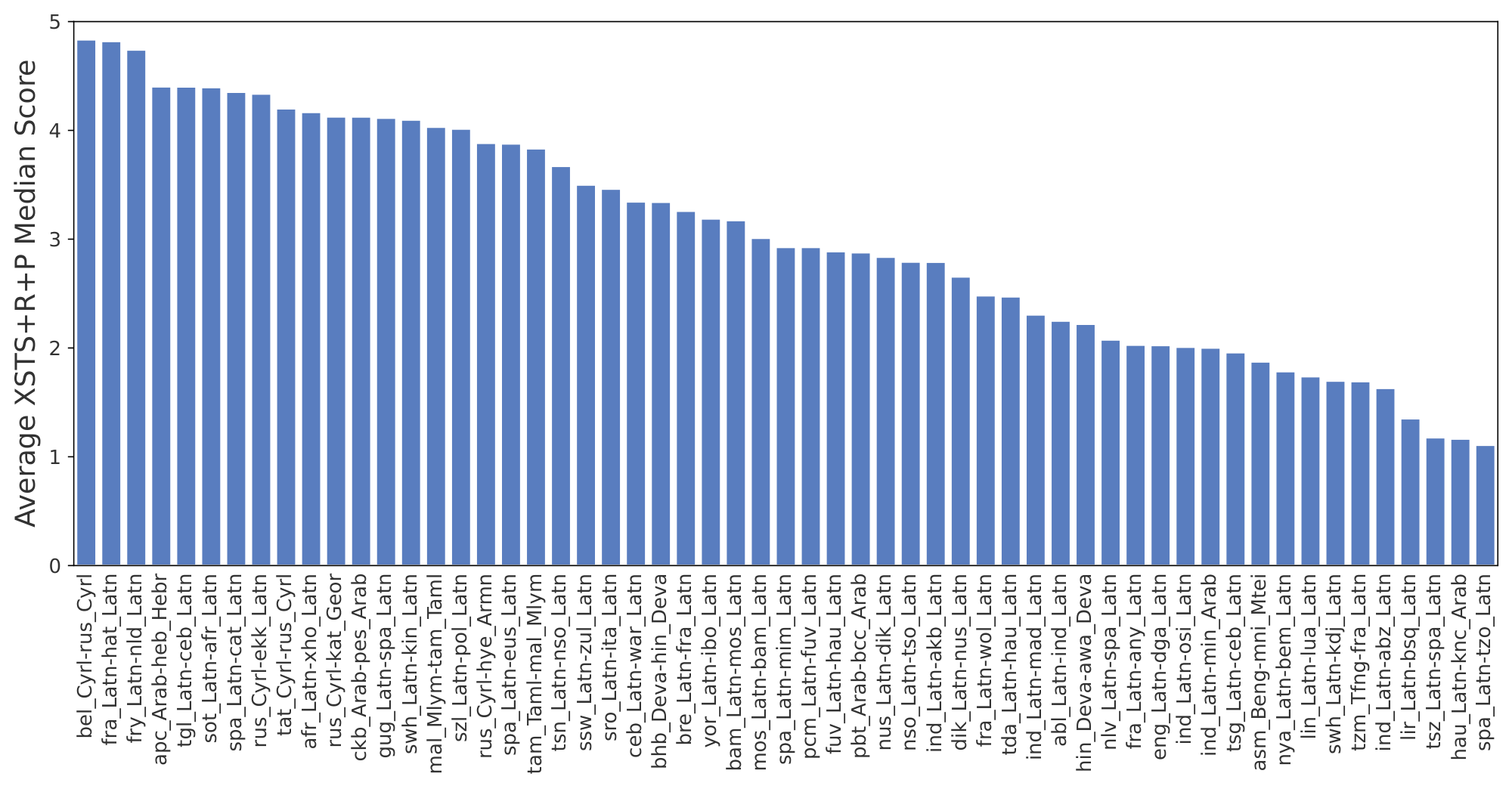

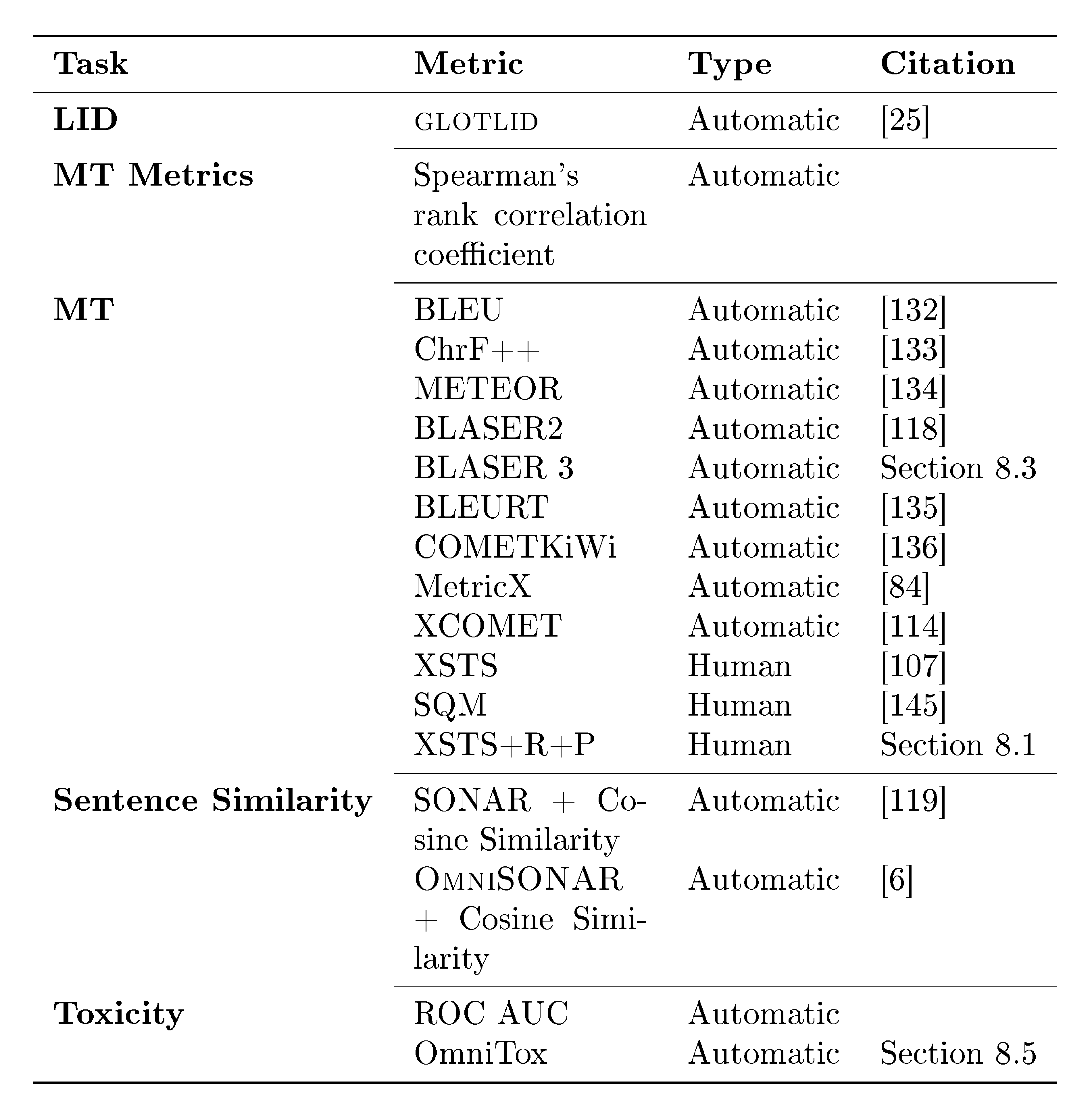

The team assembled one of the largest multilingual corpora by integrating public sources like Common Crawl and Bible translations with new datasets, including manually curated parallel texts (MeDLEY for 109 low-resource languages) and synthetic data from backtranslation and mining to fill gaps in underrepresented domains and registers. They extended model vocabularies to 256,000 tokens for better handling of rare scripts. For OMT-LLaMA, based on LLaMA3 (1B to 8B parameters), they applied continual pretraining on mixed monolingual and parallel data, followed by supervised fine-tuning, reinforcement learning, and retrieval-augmented generation for adaptation. OMT-NLLB, a 3B-parameter model, used a cross-lingually aligned encoder (OmniSONAR) with a novel training method to incorporate non-parallel data via autoencoding, then transitioned to full encoder-decoder attention on parallel data. Evaluation combined standard metrics like ChrF++ with new tools: a reference-free quality estimator (BLASER 3), toxicity detector (OmniTOX), and datasets (BOUQuET for diverse domains in 275+ languages; Met-BOUQuET for metric benchmarking across 161 directions), plus a human protocol (XSTS+R+P) assessing semantics, register, and context. Data spanned 2023–2025, with samples up to millions of sentences per source, assuming cross-lingual transfer aids low-resource cases.

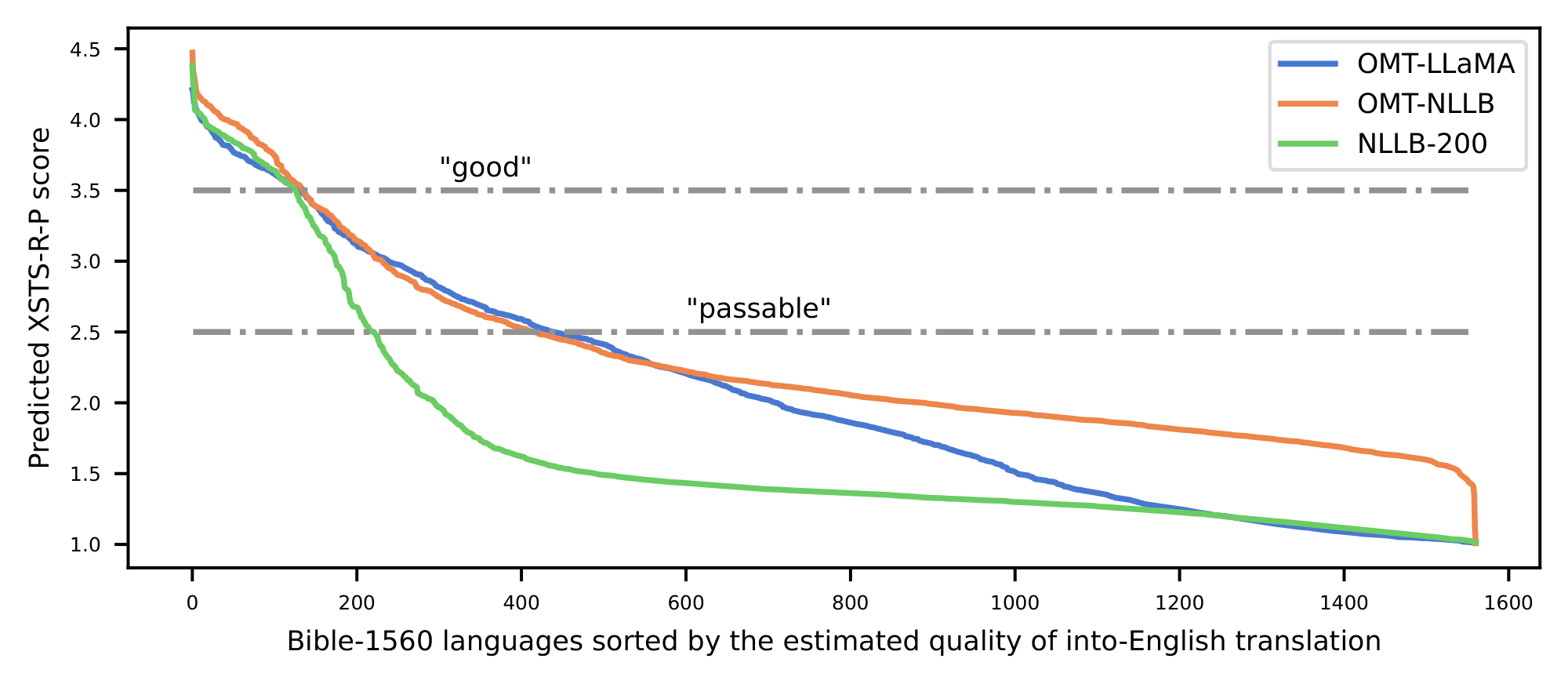

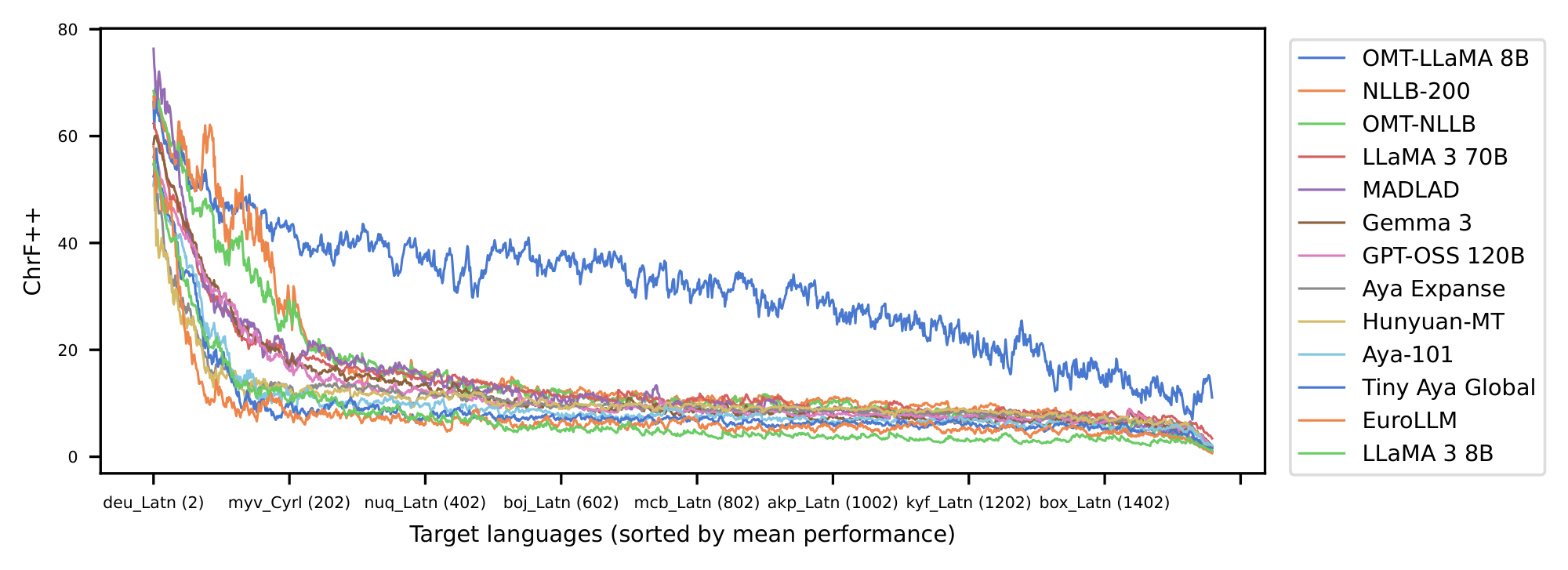

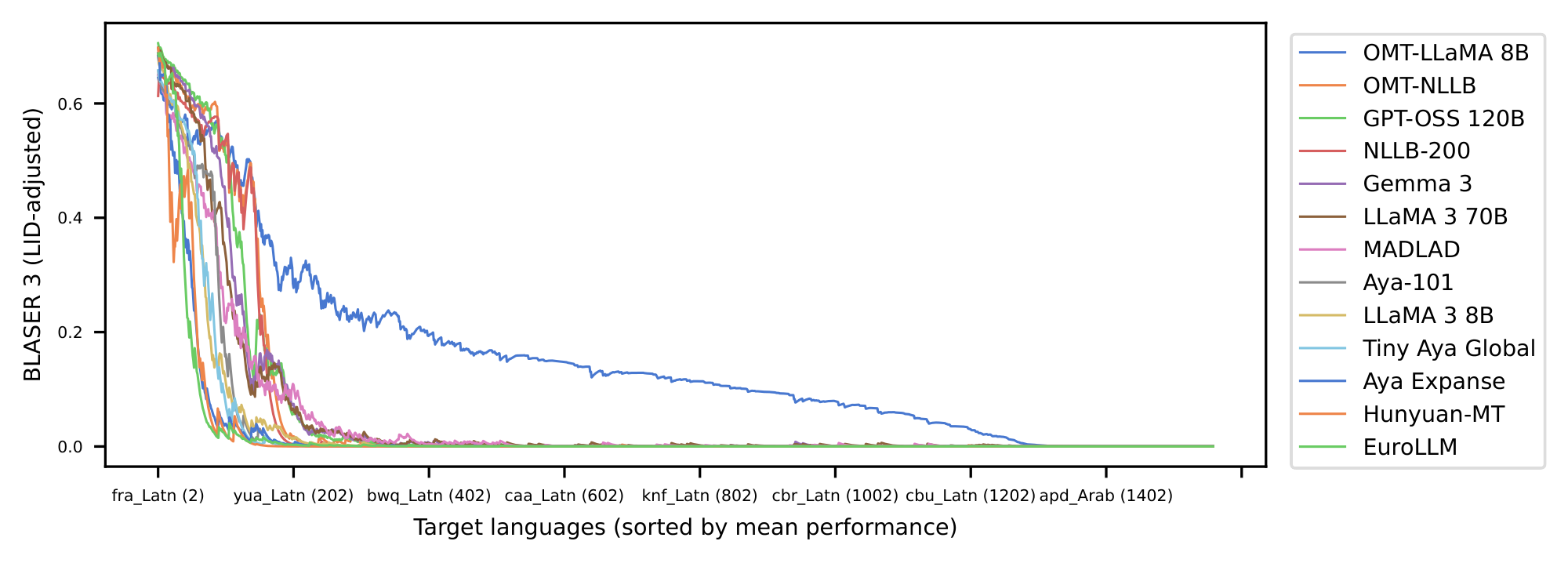

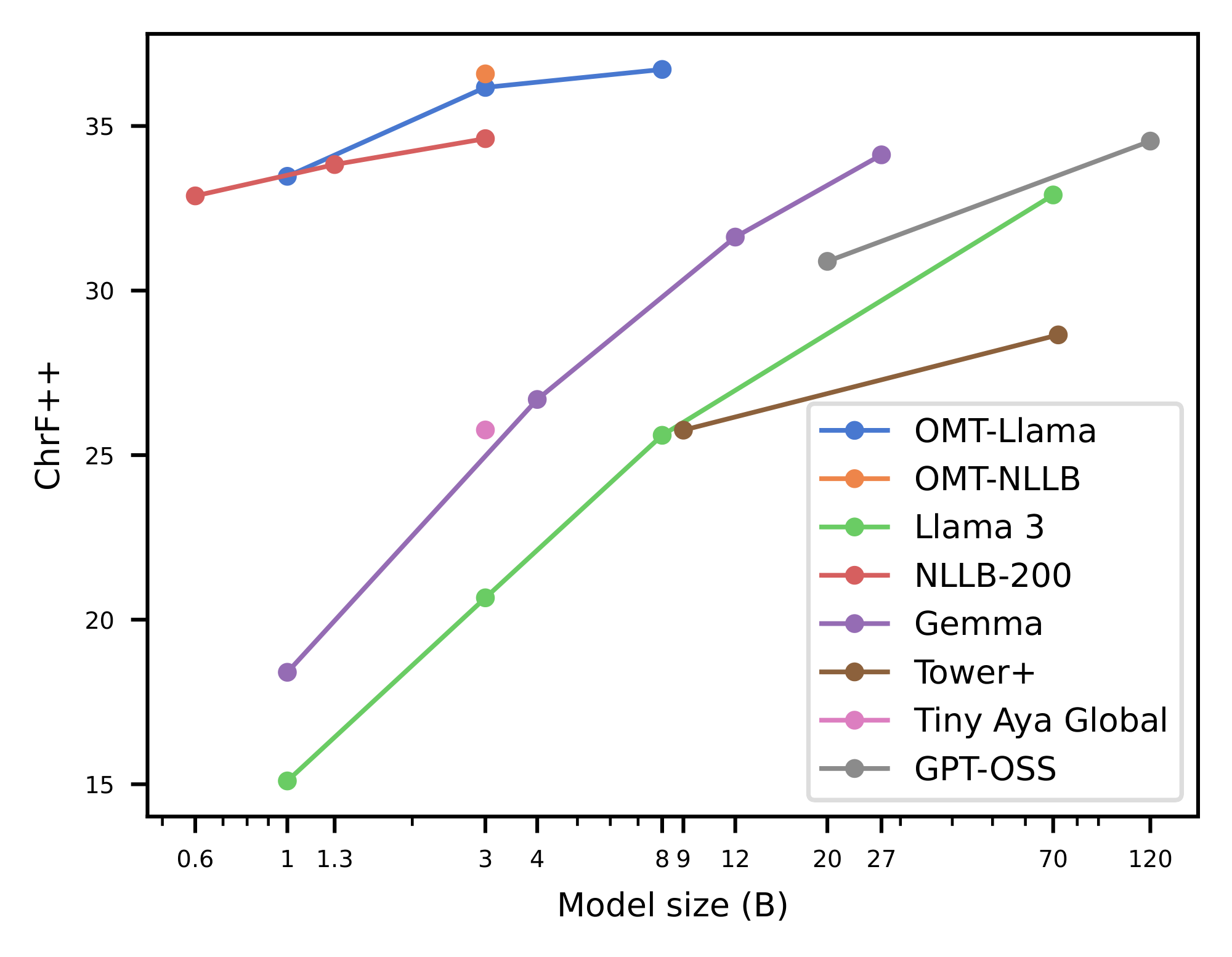

The analysis reveals five key findings. First, OMT expands effective coverage: models now "understand" over 400 languages (doubling prior benchmarks of 200) and provide non-trivial performance from/to 1,600 and 1,200 languages, respectively, outperforming baselines like NLLB and LLMs by wide margins on new state-of-the-art results for most. Second, smaller specialized models (1B–8B parameters) match or exceed a 70B LLM baseline, showing that targeted design yields better efficiency-performance trade-offs than sheer scale. Third, OMT overcomes the generation bottleneck, enabling coherent output for many previously unsupported languages—e.g., English-to-1,560 Bible translations show baselines interpret but fail to generate meaningfully, while OMT succeeds for far more. Fourth, new evaluation tools like BLASER 3 correlate strongly (Spearman ρ up to 0.70) with human judgments across 119 languages, outperforming prior metrics by 8–12% on low-resource pairs, with adjustments for language detection boosting reliability. Fifth, techniques like finetuning and retrieval-augmented generation improve targeted subsets by 5–10% when extra data is available.

These results mean OMT makes high-quality translation feasible for billions in low-resource communities, reducing risks of cultural erasure and enhancing safety by detecting toxicity across languages. Unlike expectations of endless scaling, it highlights specialization's edge, cutting compute costs by 90% for comparable performance and aiding low-resource deployment. This shifts MT from elite languages to global equity, impacting compliance in international policy and performance in cross-cultural tools, though it differs from past work by prioritizing generation over mere understanding.

Leaders should prioritize adopting OMT models—freely released with datasets and a leaderboard—for applications like global chat or content localization, opting for smaller variants in resource-limited settings. Trade-offs include decoder-only for flexibility (e.g., integrating reasoning) versus encoder-decoder for efficiency in pure translation. Next steps: Cascade with speech recognition for omnilingual speech-to-text; finetune for specific domains; invest in pilots for endangered languages. Further analysis on zero-resource cases and more human evaluations are needed before full-scale decisions.

While robust across 1,600+ languages, limitations include Bible data contamination in evaluations and gaps in monolingual corpora for ultra-rare languages, potentially inflating scores by 5–10%. Assumptions like cross-lingual transfer hold for most but falter in isolated families. Confidence is high in coverage gains (validated on diverse benchmarks) but moderate for zero-resource output—use cautiously there, relying on human checks.

1. Introduction

Section Summary: The No Language Left Behind project advanced machine translation to cover 200 languages effectively, but it highlighted major gaps for the world's 7,000 languages, especially rare and low-resource ones, where models can understand but struggle to generate text reliably due to limited data. This work introduces Omnilingual Machine Translation, a new system that supports over 1,600 languages—the widest coverage yet—by building massive, diverse datasets with human and synthetic inputs, and using specialized large language models in two architectures that expand vocabulary and improve performance. These models double the number of well-handled languages to over 400, outperform rivals on thousands more, show that targeted designs beat massive general-purpose models for efficiency, and pave the way for broader multilingual AI tools beyond just translation.

The recent success of No Language Left Behind (NLLB) ([1]) marked a turning point in multilingual translation. By demonstrating that high-quality MT could be extended to 200 languages, NLLB reshaped the research landscape and set a new standard for linguistic inclusion. It catalyzed new data pipelines, evaluation frameworks, and community partnerships that continue to benefit the entire field—including the work we present here. But NLLB also revealed a deeper asymmetry in multilingual MT. Modern models can often recognize or interpret long-tail languages through cross-lingual transfer, yet they struggle to produce them reliably. This generation bottleneck is compounded by a static, training-time definition of coverage: languages with little or no data simply never enter the system. Together, these constraints leave most of the world’s 7, 000 languages—especially endangered and underdocumented ones—effectively outside the reach of current MT technology. Early attempts to explore extreme scaling, such as Google’s Massively Multilingual Translation project ([2]), demonstrated the feasibility of reaching toward 1, 000 languages, but these efforts did not evolve into sustained work, and progress toward broader global coverage has stalled. However, notable progress has been made towards improving quality for top priority languages with decoder-only architectures (Large Language Models, LLMs) e.g. ([3, 4, 5]).

In this work, we introduce Omnilingual Machine Translation (Omnilingual MT), a family of multilingual translation systems that extend support to more than 1, 600 languages, the broadest coverage of any benchmarked MT system to date. To start, our data efforts included assembling and curating one of the largest and most diverse multilingual corpora to date, drawing from prior massive collections while substantially expanding coverage through new human-curated and synthetic data pipelines. More specifically, we integrated material from large-scale public sources and augmented them with newly created resources—including manually curated seed datasets and synthetic backtranslation—to address long-tail gaps in domains, registers, and under-documented languages.

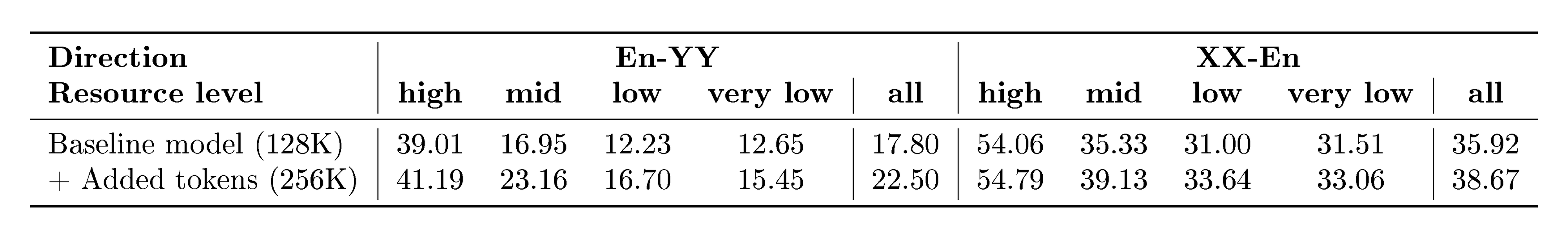

Omnilingual MT explores two complementary ways of specializing LLMs for translation: as a standalone decoder-only model and as a block within an encoder–decoder architecture. In the first approach, we extend $\textsc{LLaMA3}$ –based decoder-only models with a multilingual continual-pretraining recipe and retrieval-augmented translation for inference-time adaptation. In the second approach, we employ a cross-lingually aligned encoder ($\textsc{OmniSONAR}$ ([6]) built itself on top of $\textsc{LLaMA3}$) to build an encoder–decoder architecture that maintains the size of the original NLLB model while expanding its language coverage through a novel training methodology that exploits non-parallel data, reusing the continual-pretraining data from the decoder-only model. Both approaches share an expanded 256K-token vocabulary and improved pre-tokenization for underserved scripts, enabling large-scale language expansion to cover over 1, 000 languages.

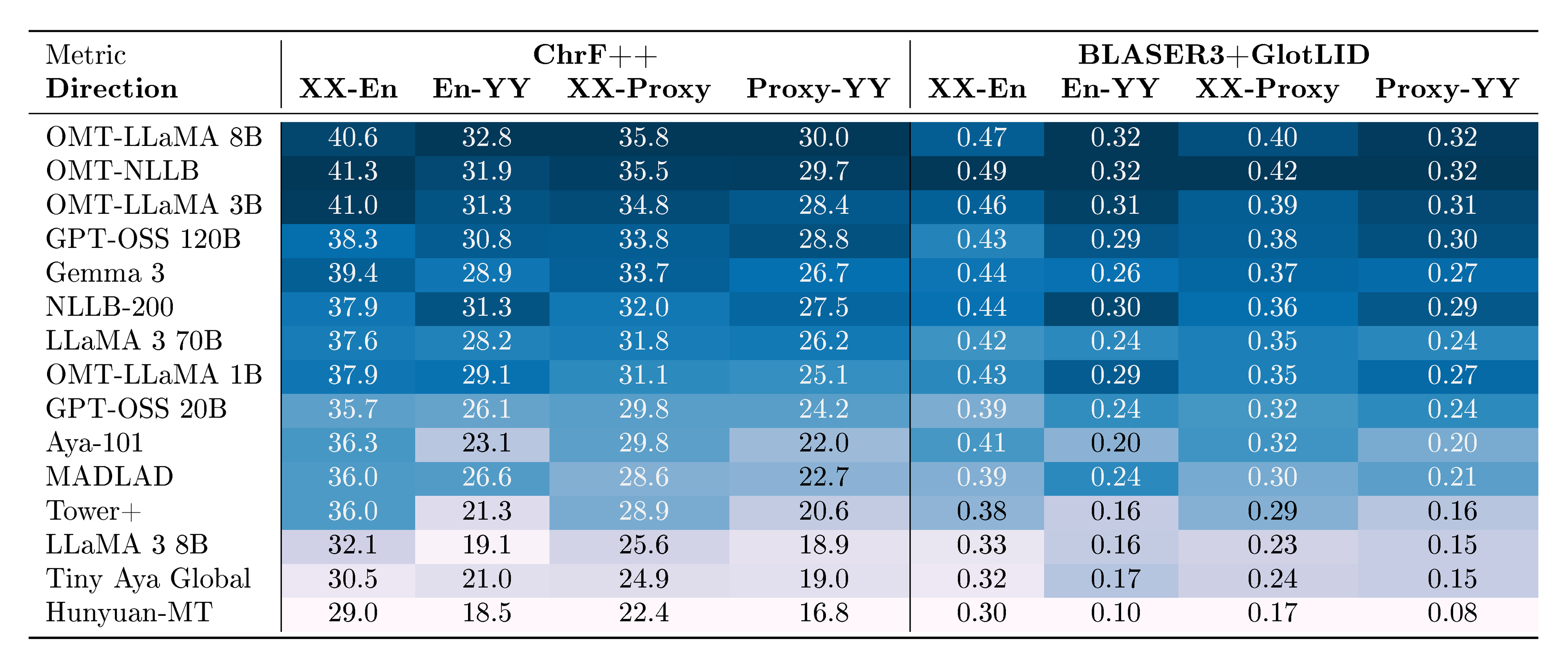

To ensure both reliable and expansive evaluation, we combined standard metrics such as MetricX and ChrF with a suite of evaluation artifacts developed for this effort. These include BLASER 3 quality estimation model (reference-free), OmniTOX toxicity classier, BOUQuET dataset (a newly created, largest-to-date multilingual evaluation collection built from scratch and manually extended across a wide range of linguistic families), and Met- BOUQuET dataset (which provides faithful multilingual quality estimation at scale).

Omnilingual MT expands the number of languages that modern models "understand sufficiently well" twofold, from about 200 to over 400 languages. Moreover, it offers non-trivial performance when translating from 1, 600 and into about 1, 200 languages, outperforming all competitive translation systems by a large margin and establishing new (and often first) state-of-the-art (SOTA) results for the majority of these 1, 600 languages.

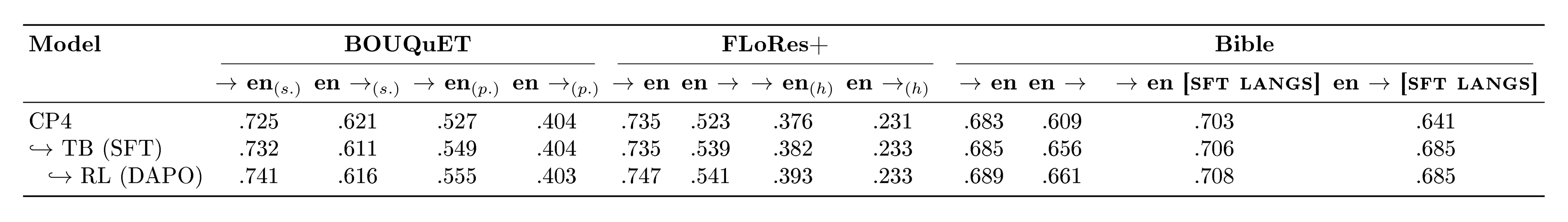

Notably, we show that specialized MT models offer superior efficiency–performance tradeoffs compared to general-purpose LLMs. More specifically, our 1B to 8B parameter models match or exceed the MT performance of a 70B-parameter LLM baseline, revealing a clear Pareto advantage: specialization, not scale, is perhaps a more reliable path to high-quality multilingual translation. This efficiency extends the practical reach of the model, enabling strong MT performance in real-world, low-compute contexts. In addition, our systematic evaluation of Omnilingual MT on English-to- 1, 560 Bible translations reveals a striking pattern: many baseline models can interpret undersupported languages, yet they often fail to generate them with even remote similarity to the target. Omnilingual MT substantially widens the set of languages for which coherent generation is possible, reinforcing the central claim of this work—that large-scale MT coverage requires not only cross-lingual understanding but robust language generation, which current baselines do not reliably provide. Beyond strong out-of-the-box performance, we analyze how targeted techniques, such as finetuning and retrieval-augmented generation, can further boost translation quality for individual languages. With this, Omnilingual MT not only provides broad coverage but also offers flexible pathways for further improving performance when additional data or domain knowledge is available.

Although Omnilingual MT is primarily designed for translation, we consider it as a general-purpose multilingual base model. Its architecture can be further trained to build multilingual LLMs, enabling future research that integrates translation, reasoning, dialog, and multimodal capabilities in thousands of languages. Moreover, with the recent release of Omnilingual ASR ([7]), Omnilingual MT can be cascaded with large-scale speech recognition to produce speech-to-text translation systems operating at a scale previously unattainable. The Omnilingual MT recipes for building models with unprecedented language support can in principle be reproduced on top of diverse base language models, and we hope that they will inspire communities, researchers, and practitioners to build systems that evolve alongside the world’s languages.

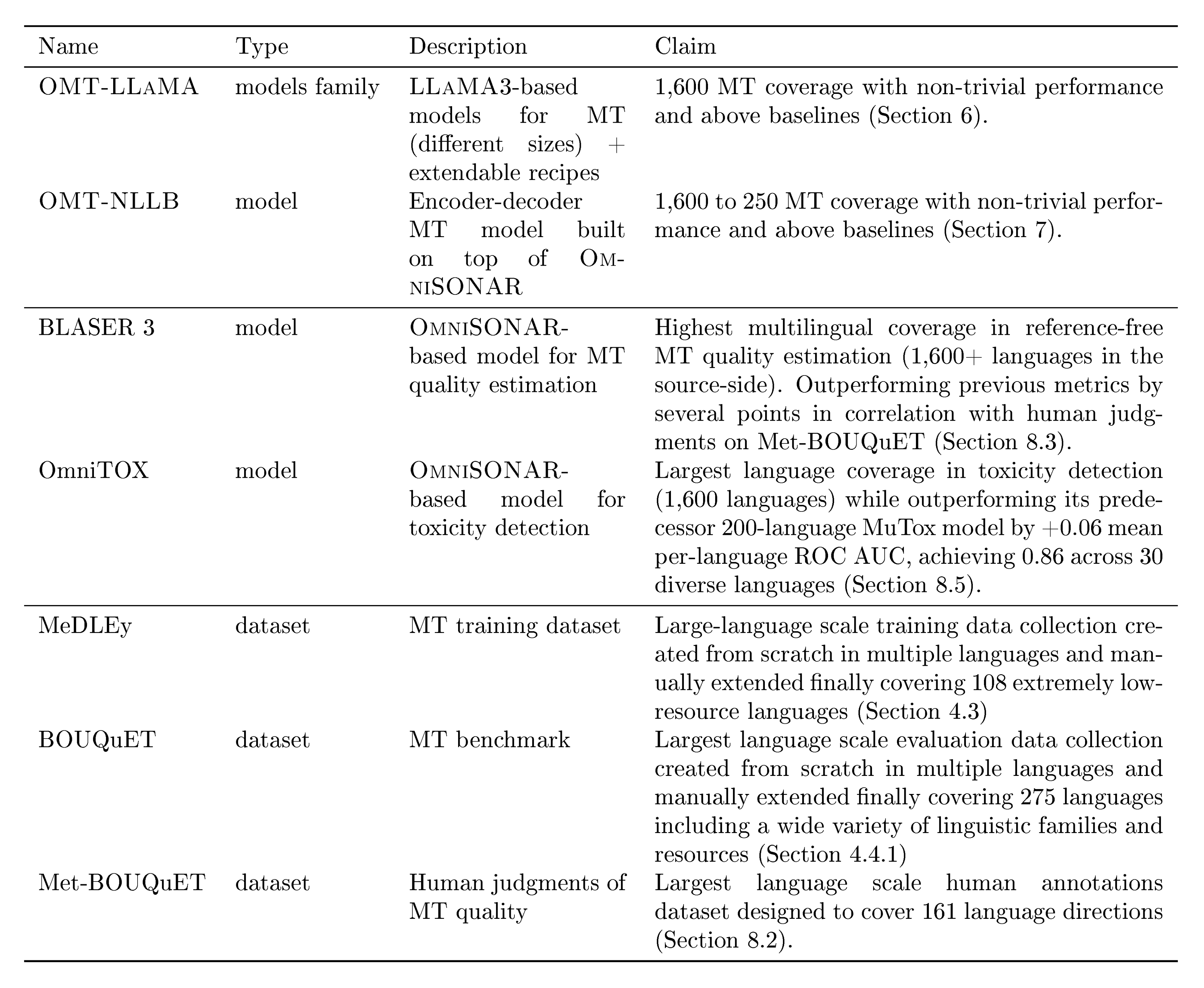

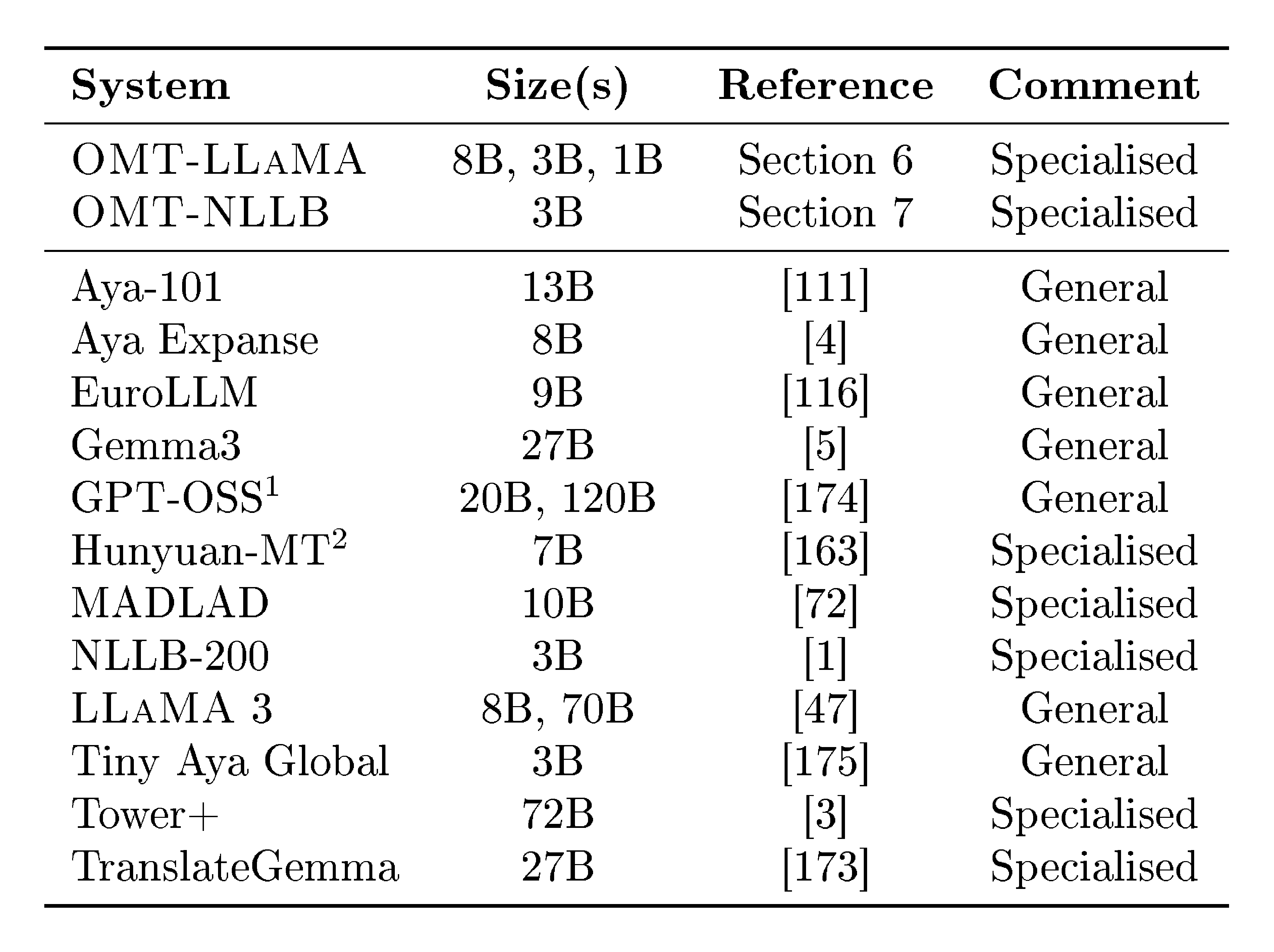

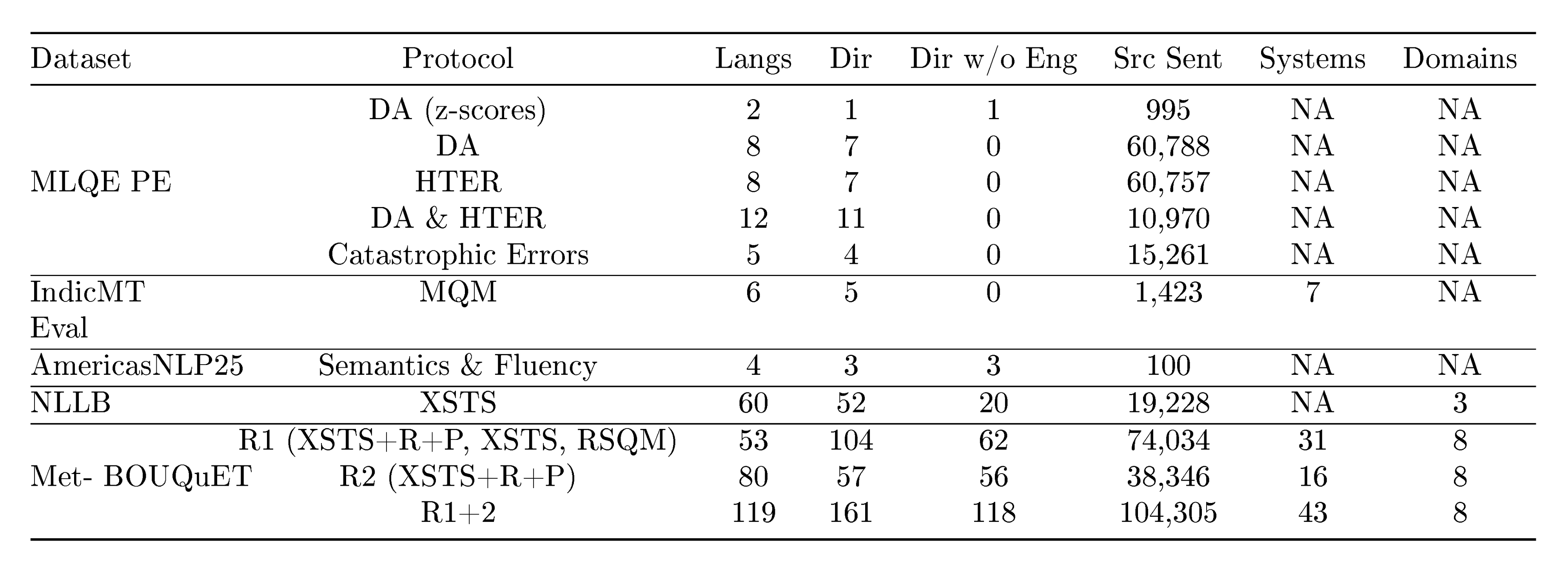

The main claims of our line of work are outlined in Table 1. Our translation models are built on top of freely available models. BOUQuET and Met-BOUQuET and the adjacent leaderboard are freely available^1.

::: {caption="Table 1: A summary of the corresponding claims of our line of work."}

:::

2. Expanding Machine Translation

Section Summary: Recent advances in machine translation, such as the NLLB model, have made high-quality translations possible for around 200 languages, challenging the old idea that adding more languages lowers overall performance and inspiring new data tools and standards. However, these systems still leave most of the world's 7,000 languages out, especially endangered ones, with models often understanding low-resource languages but struggling to produce accurate outputs in them. Efforts to expand coverage to 1,000 or more languages face hurdles like linguistic diversity, uneven data availability, and unreliable evaluation methods, calling for smarter ways to build adaptable, efficient systems that better represent global diversity.

Recent advances in multilingual MT have demonstrated that high-quality translation can extend far beyond high-resource languages. Most notably, the trajectory is best represented by NLLB ([1]), which demonstrated that it is possible to deliver strong translation quality for 200 languages, setting a new standard for linguistic inclusion. More specifically, NLLB illustrated that the long-standing curse of multilinguality-the tendency for quality to degrade as the number of languages increases—was not an insurmountable barrier. Through large-scale data curation, targeted architecture choices, and multilingual optimization strategies, NLLB achieved both breadth and quality, overturning the assumption that scaling coverage inevitably sacrifices performance. Since the release of NLLB, the work has reshaped the multilingual MT ecosystem in several ways, including establishing FLoRes-200 as the de facto evaluation standard, catalyzing new data pipelines across academia and industry, and enabling dozens of downstream models, fine-tuning efforts, and community adaptation projects that continue to rely on its multilingual backbone.

Despite the impact of this effort, NLLB and other projects in this current landscape continue to leave the vast majority of the world’s 7, 000 languages, especially endangered or marginalized, largely absent from technological representation. As a result, coverage plateaus at roughly the same frontier across systems. Compounding this issue, many models exhibit cross-lingual understanding of underserved languages through transfer, yet consistently fail to generate them with meaningful fidelity, revealing a generation bottleneck that further limits practical support for long-tail languages.

That said, several projects have started to explore scaling MT beyond the 200-language ceiling. Google’s Massively Multilingual Translation work investigated models covering up to 1, 000 languages, offering an early proof of concept that extreme multilingual scaling was technically possible ([2]). These efforts demonstrated that multilingual transfer can be leveraged even in very low-resource conditions. However, they did not yield sustained, or extensible systems, and none produced a practical path for continual expansion. Subsequent multilingual MT systems, including large decoder-only LLMs, mostly reporting MT quality improvements on top priority languages, have also increased language coverage indirectly. Their big-scale pretraining exposes them to a broader, if uneven, range of languages than purpose-built MT systems, allowing them to exhibit surprising zero-shot and few-shot translation abilities. Improvements in reasoning, instruction-following, and cross-lingual representations have also provided new avenues for multilingual transfer. Yet these gains remain dominated by high-resource languages, and large LLMs remain inefficient MT systems—often requiring tens of billions of parameters to match the MT performance of much smaller specialized models (see Section 9).

The expansion of MT is compounded by other problems. Long-tail languages, for one, bring substantial linguistic diversity ranging from rich morphological systems and agglutinative patterns to unique orthographic and script traditions—with available written data often distributed across different formats and community contexts ([8]). These linguistic and sociocultural features expose the brittleness of closed-coverage systems: adding a language requires far more than acquiring data; it requires modeling choices that account for typological diversity and social context. Furthermore, while large-scale multilingual corpora—including Bible-based datasets, Gatitos ([9]) and SMOL ([10]) with parallel texts, and large-scale web datasets like FineWeb2 ([11]) or HPLT 3.0 ([12])—have expanded the availability of multilingual training text, these corpora exhibit systematic gaps. They disproportionately represent formal registers, religious domains, and well-documented language families while underrepresenting dialect variation, colloquial styles, and many of the world’s marginalized languages. As a result, increasing dataset size does not reliably translate into broader or more equitable coverage. However, recent work using synthetic data ([13]), bitext mining, and multilingual transfer ([12]) has proven to be helpful in extending coverage.

In addition, large-scale evaluation remains a major bottleneck for multilingual MT. FLoRes+ ([14]), and the Aya benchmark ([15]) provide high-quality evaluation for hundreds of languages, but none provide coverage beyond 200–300 languages (there are few recent exceptions to this ([16])) . Reference-based metrics also struggle at scale: BLEU and ChrF++ fail to capture meaning adequacy, while reference-free metrics such as COMET, BLASER 3 and MetricX require careful calibration and validation in typologically diverse languages (see metric cards in Appendix C). Without reliable evaluation for long-tail languages, progress becomes difficult to measure and even harder to compare across systems. This problem becomes acute when scaling to 1, 000+ languages, where many systems can produce outputs that appear fluent yet remain unintelligible or unrelated to the target language, making generation accuracy particularly challenging to assess. The field requires multilingual quality-estimation frameworks that scale to thousands of languages while preserving metric fidelity.

Taken together, these limitations highlight that progress in massively multilingual MT now depends less on marginal model improvements than on rethinking how systems can grow, adapt, and represent the world’s linguistic diversity. What is needed is not only broader coverage but deeper support—models that can generate underserved languages robustly, operate efficiently at smaller scales, and provide reliable evaluation mechanisms for long-tail settings. Our goal is to operationalize this shift. Rather than building another large model centered on high-resource performance, we design Omnilingual MT to address the structural challenges of extreme coverage: data scarcity, typological diversity, long-tailed language generation, efficiency–performance tradeoffs, and the absence of evaluation frameworks for 1, 600+ languages.

This perspective also motivates how we organize the remainder of the paper. In Section 3, we move from the structural challenges outlined above to the linguistic realities of scaling to 1, 600+ languages. This section is specially relevant to inform about the concept of language; and related language features such as what does it take to qualify as pivot language, relevance of context, how to determine resource-levels.

Section 4 presents the data contributions in this work with special focus on under-represented languages. This section reports several well-known directions to expand data for pretraining MT models. Additionally, it reports more innovative diverse and representative post-training and evaluation datasets.

The three subsequent sections—Section 5, Section 6, and Section 7—describe the translation model architectures that we propose. We report several ablations to motivate our modeling decisions.

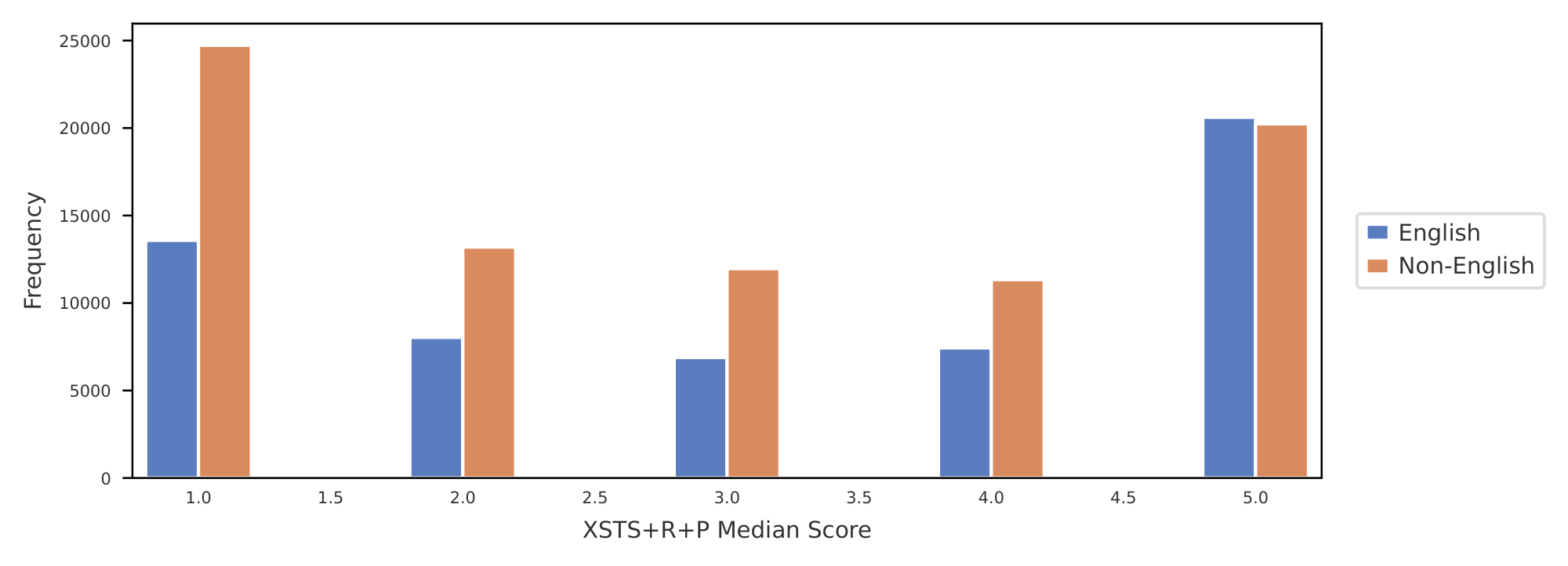

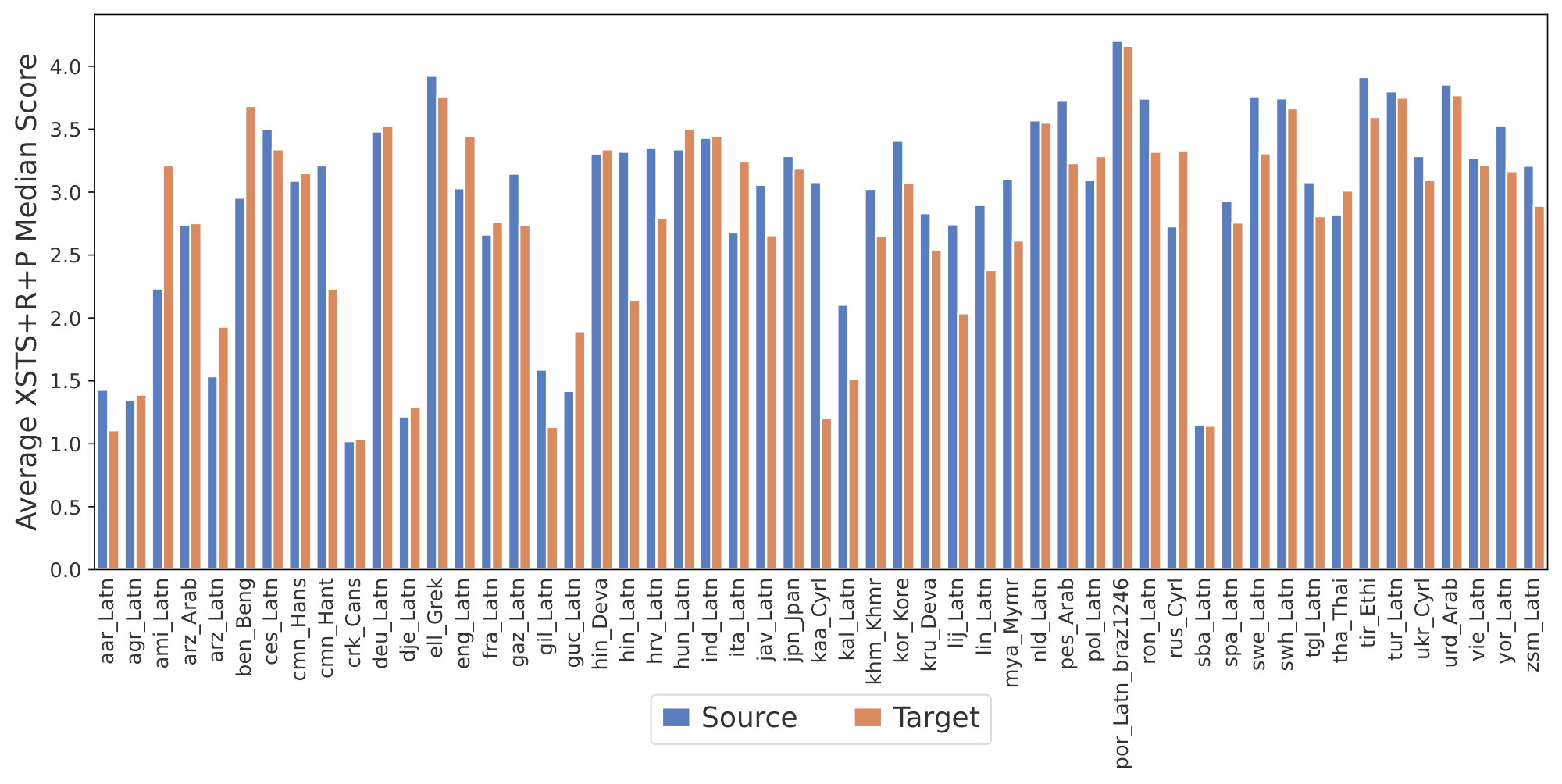

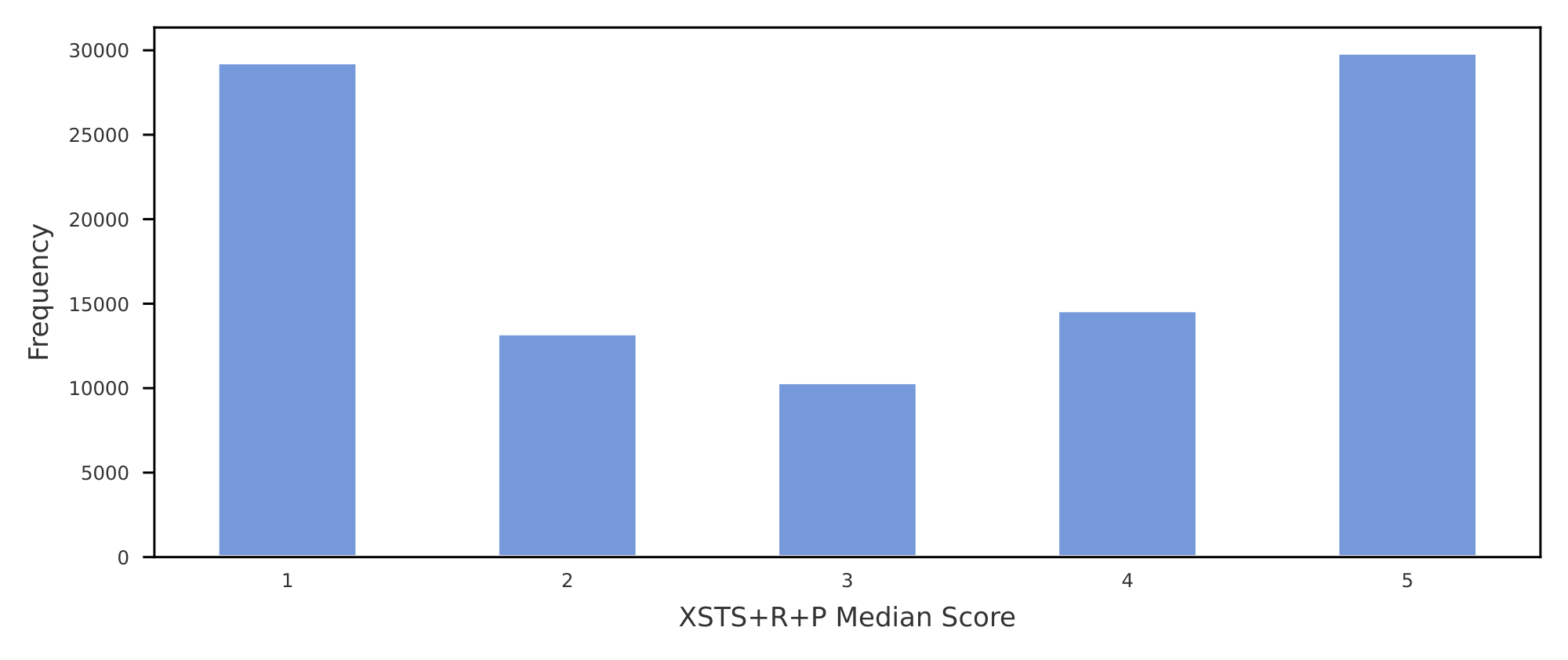

Next, Section 8 is dedicated to the contributions that we make towards expanding the MT metrics to Omnilinguality. We propose a variation of human evaluation protocol (XSTS+R+P) to better represent languages outside of English, build the largest human annotations collection on language coverage on MT quality (Met- BOUQuET), propose the largest multilingual MT quality metric (BLASER 3), and the largest multilingual toxicity detector (OmniTOX).

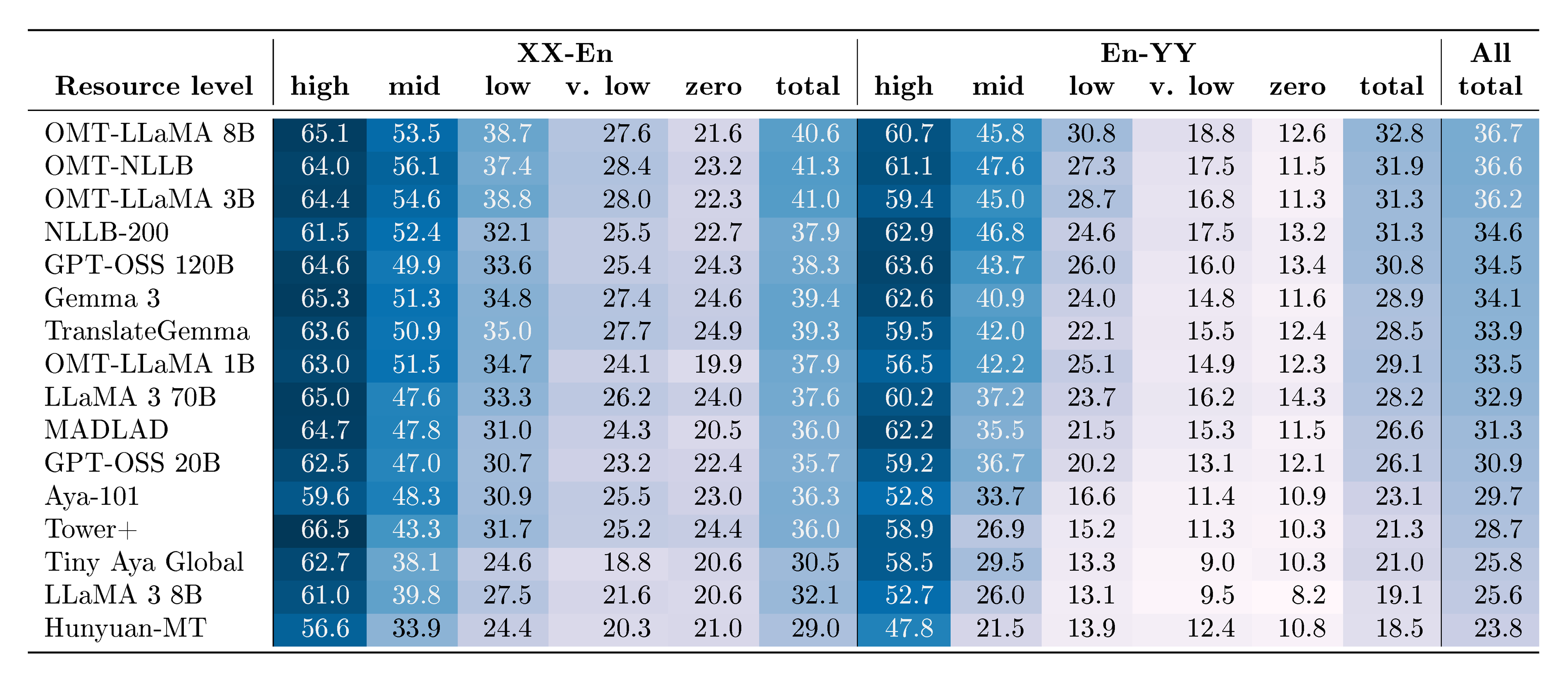

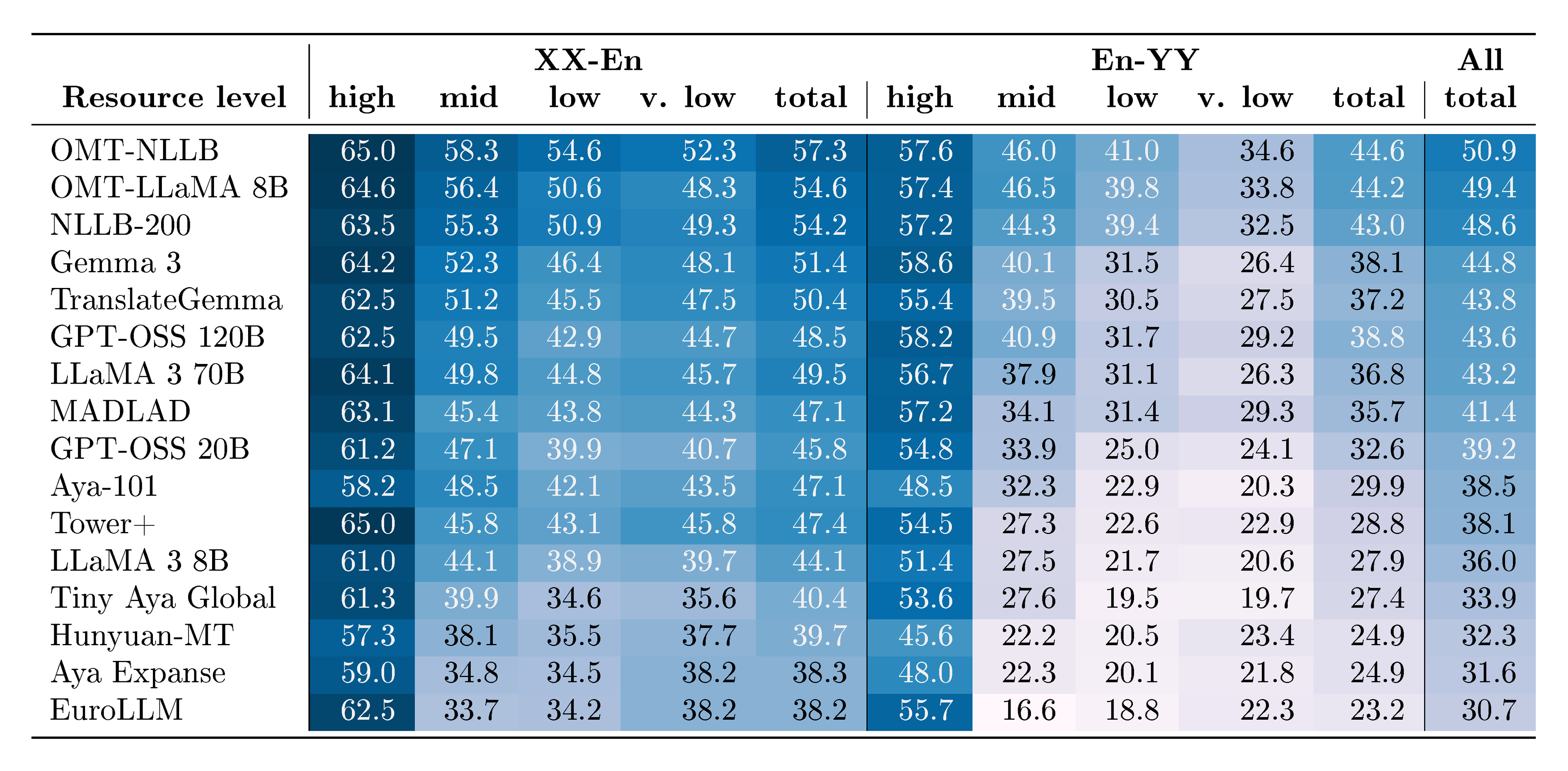

Section 9 reports the final results of our MT models evaluation focusing on answering questions such as language coverage and relative performance to external baselines.

The final sections focus on key features of the MT adoption problem space. Section 9.1.4 demonstrates how our smaller models achieve performance improvements over, or parity with, larger models. Section 10 addresses the growing trend of researchers fine-tuning NLLB for machine translation in their languages and adapting smaller $\textsc{LLaMA}$ models to various language-specific tasks, including translation. Building on this momentum, we demonstrate that our models are architecturally designed to facilitate such extensions and adaptations. The findings presented in this paper underscore the importance of continued investment in specialized models to enhance translation quality and expand language coverage in MT. Finally, Section 11 summarizes the conclusions and discusses the social impact of our work.

3. Languages

Section Summary: The section explains how languages are identified and referenced using standardized codes like ISO 639-3 for languages and ISO 15924 for writing systems, allowing for precise distinctions such as different scripts for Mandarin Chinese, while noting that classifications can be debated and that counts often include unique language-script combinations. It highlights the uneven global distribution of language speakers, with over half the world's population relying on the top 20 languages and the rest spread across thousands of others in a long-tail pattern, many of which are underserved due to limited access to technologies like machine translation. To address quality issues in training data and evaluations for these underserved languages, the authors discuss challenges like finding proficient translators amid generational language shifts and propose using pivot languages—high-resource ones familiar to speakers, such as Spanish for certain Indigenous languages—along with providing contextual details to improve translation workflows.

3.1 Referring to languages

In the absence of a strict scientific definition of what constitutes a language, we arbitrarily started considering as language candidates, and referring to those candidates as languages, those linguistic entities—or languoids, following [17]—that have been assigned their own ISO 639-3 codes.

We acknowledge that language classification in general, and the attribution of ISO 639-3 codes in particular, is a complex process, subject to limitations and disagreements, and not always aligned with how native speakers themselves conceptualize their languages. To allow for greater granularity when warranted, ISO 639-3 codes can be complemented with Glottolog languoid codes ([18]).

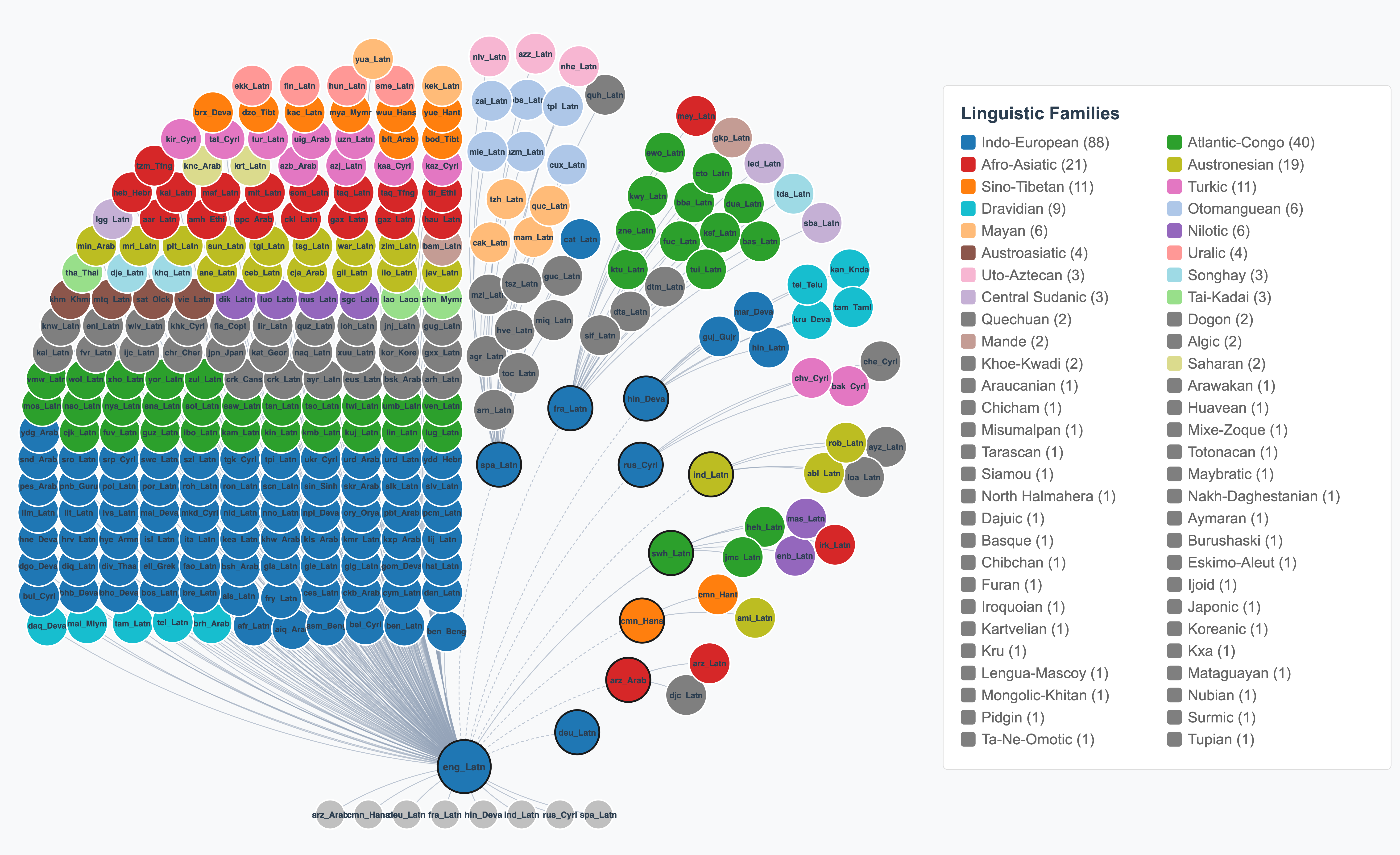

Additionally, as some languages can typically be written using more than a single writing system, all languages supported by our model are associated with the relevant ISO 15924 script code. For example, we use cmn_Hant to denote Mandarin Chinese written in traditional Han script and cmn_Hans for the same language written in simplified Han script. When counting languages throughout this paper, we typically count the distinct combinations of the language and the writing system, identified by the pair of ISO 639-3 and ISO 15924 codes.

Finally, the use of the phrases long-tail languages and underserved languages also needs further defining. There are over 7, 000 languages used in the world today used by over 8 billion human beings. The number of users is not evenly distributed among those languages, far from it. It is estimated ([19]) that slightly less than half of the world's population uses as their native languages (or L1) one of the 20 most used languages, which means that the other half uses as their L1 one of the remaining 7, 000+ languages. The same authors[^2] estimate that 88% of the world's population use as their L1 or L2 one of the 200 most used languages. Overall, we can see that the distribution of L1 users per language is quasi-zipfian, and therefore displays a conspicuous long tail (hence, our use of the phrase long-tail languages). It is not uncommon for many of the long-tail languages to be considered underserved, as defined in the following section.

[^2]: https://www.ethnologue.com/insights/ethnologue200/, last accessed 2026-02-18

3.2 Quality translations from or into underserved languages

In this section we discuss the main impediments to the creation of high-quality training or evaluation data that could partially offset the lack of existing data for underserved languages, and present non-English-centric solutions as an alternative to existing translation workflows. We use the phrase underserved languages as a synecdoche referring to communities of language users who do not have access to the full gamut of language technologies—and more specifically here to machine translation—in their respective native languages. The language technology industry often refers to those languages as low-resource languages because of the small amount of available data. We discuss resource-level classification at greater length in the next section, as this kind of classification carries some degree of arbitrariness that warrants further explanations.

The problem of low-quality translations into or out of underserved languages can be approached from at least two angles: training data and evaluation. On the training data front, mitigation strategies for observed quality issues entail creating additional parallel data; this is most often done by commissioning translations into underserved languages. From the standpoint of evaluation, quality issues can stem from the lack of evaluation datasets or the lack of useful human evaluation annotations. The common denominator to training data and evaluation shortcomings is the difficulty faced by the research community to commission high-quality work from proficient translators or bilingual speakers.

Determining pivot languages

Receiving high-quality work products from proficient translators or bilingual speakers implies, firstly, having access to said speakers and, secondly, creating optimal conditions for quality work. When it comes to underserved languages, it is important to consider that the vast majority of those languages score high on the intergenerational disruption scale [^3]. High disruption typically occurs when different generations of speakers become geographically estranged due to drastic changes in labor and macroeconomic settings (e.g., when a country's economy shifts its primary source of production from the primary sector to the secondary or tertiary sector). Corollary to this shift is a massive displacement of younger generations from rural areas to urban business and higher education centers. As a result, the linguistic profiles of those generations become differentiated. The older generations are proficient native speakers of the underserved language and, in most cases, proficient second-language speakers of an official language of the country where they reside. The younger generations are native or near-native proficient speakers of the official language and of a business or research lingua franca (more often than not, English) but they are not as proficient in the underserved language. For the above reasons, pairing underserved languages with English in human translation work is not always the optimal solution. Alternatively, we also need to provide for the pairing of underserved languages with high-resource languages at which native speakers are proficient. In this project, we refer to those alternate high-resource languages as pivot languages (e.g., Spanish used as a pivot language for translations into or out of Mískito [miq], or K'iche' [quc]).

[^3]: For additional information on language disruption and disruption scoring, please see [20].

Providing contextual information

Even when English is a possible—or the only available—pivot option, its lack of explicit grammatical markings is a constant reminder that sentences rarely speak for themselves, and that translators need a good amount of contextual information to produce quality translations, especially when moving away from the formal textual domain and closer to the conversational domain. For example, one of the many differences between those two domains is a shift from a predominance of unspecified third grammatical persons to first and second grammatical persons (often in the singular). In English, the pronouns I and you do not provide any intrinsic information about grammatical gender; in fact, you does not even provide any distinctive information about grammatical number, which is not complemented either by any form of verbal or adjectival inflection. This causes ambiguities that translators cannot resolve on their own, which in turn may lead to mistranslations that are not due to lack of proficiency but rather lack of relevant information. The same is true of information about language register and formality. In the conversational domain, English provides very few formality markers, and identifying language register markers may require a very high level of proficiency, which is only accessible to translators with extensive cultural experience. To mitigate these problems, we first ensured that all sentences to be translated be included in a paragraph (or what would be the equivalent of a paragraph in speech). We also provided translators with additional information about the following: the overall domain in which the paragraphs are most likely to be found, the protagonists depicted or referred to in the paragraphs, the language register most likely to be used in such situations, and the overall tone of the paragraphs (if any specific tones were to be conveyed).

3.3 Resource levels

Historically, languages in MT have typically been classified as either high-resource or low-resource (e.g., see WMT evaluations ([21, 22])). This classification facilitates the analysis of MT performance in highly multilingual and massively multilingual settings, among other applications.

The definition of low-resource languages is somewhat arbitrary, or at the very least, highly dynamic, as additional resources may be created at any time. More broadly in NLP, this classification is based on the availability of corpora, dictionaries, grammars, and overall research attention. One widely used definition in the field of MT originates from the NLLB work ([1]), in which the authors differentiate between high- and low-resource languages based on the amount of parallel data available for each language (with "documents" predominantly consisting of single sentences). Specifically, the threshold is set at 1 million parallel documents, above which a language is considered high-resource.

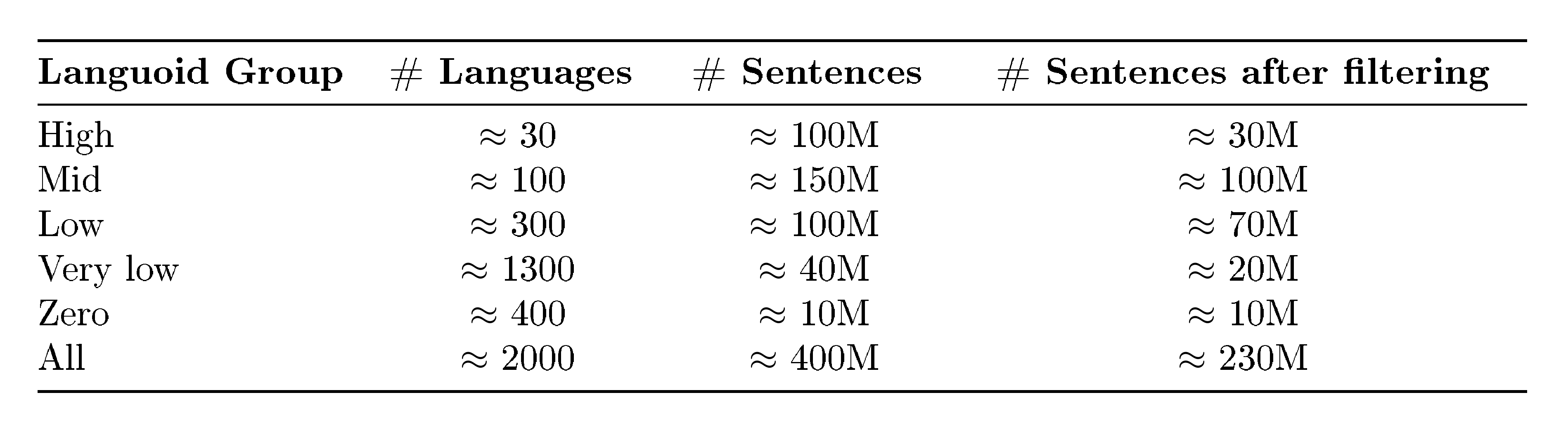

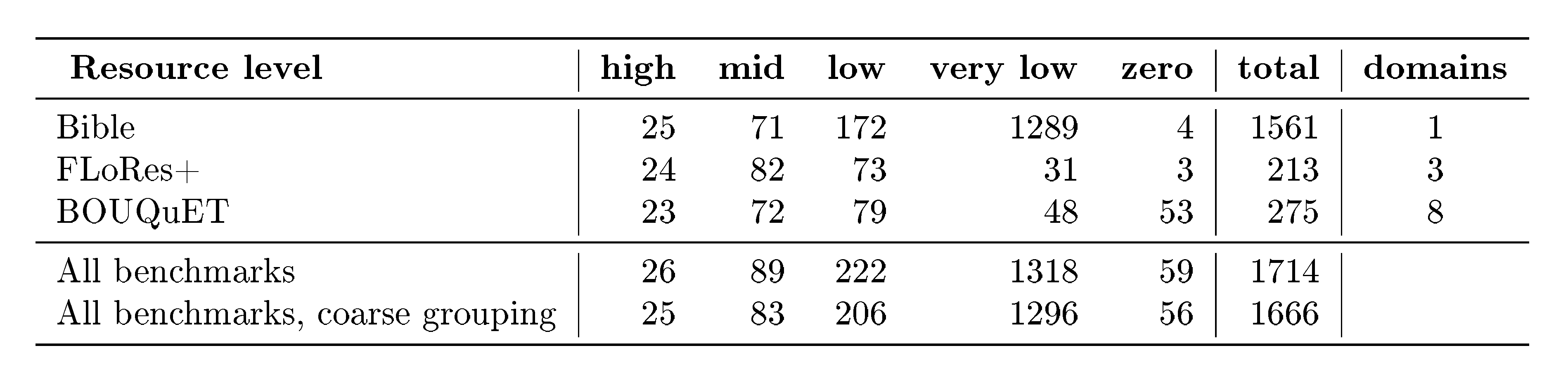

We want to revisit this definition because we are dealing with a much larger amount of languages than NLLB; and works with similar amount of languages ([23]) do not provide an explicit definition; we want to optimize for this definition to correlate with MT quality; and given the large amount of languages that we are covering, we want to further fine-grain our language resource classification by splitting low resource languages into low and extremely low resource, and by distinguishing high- and medium-resourced languages.

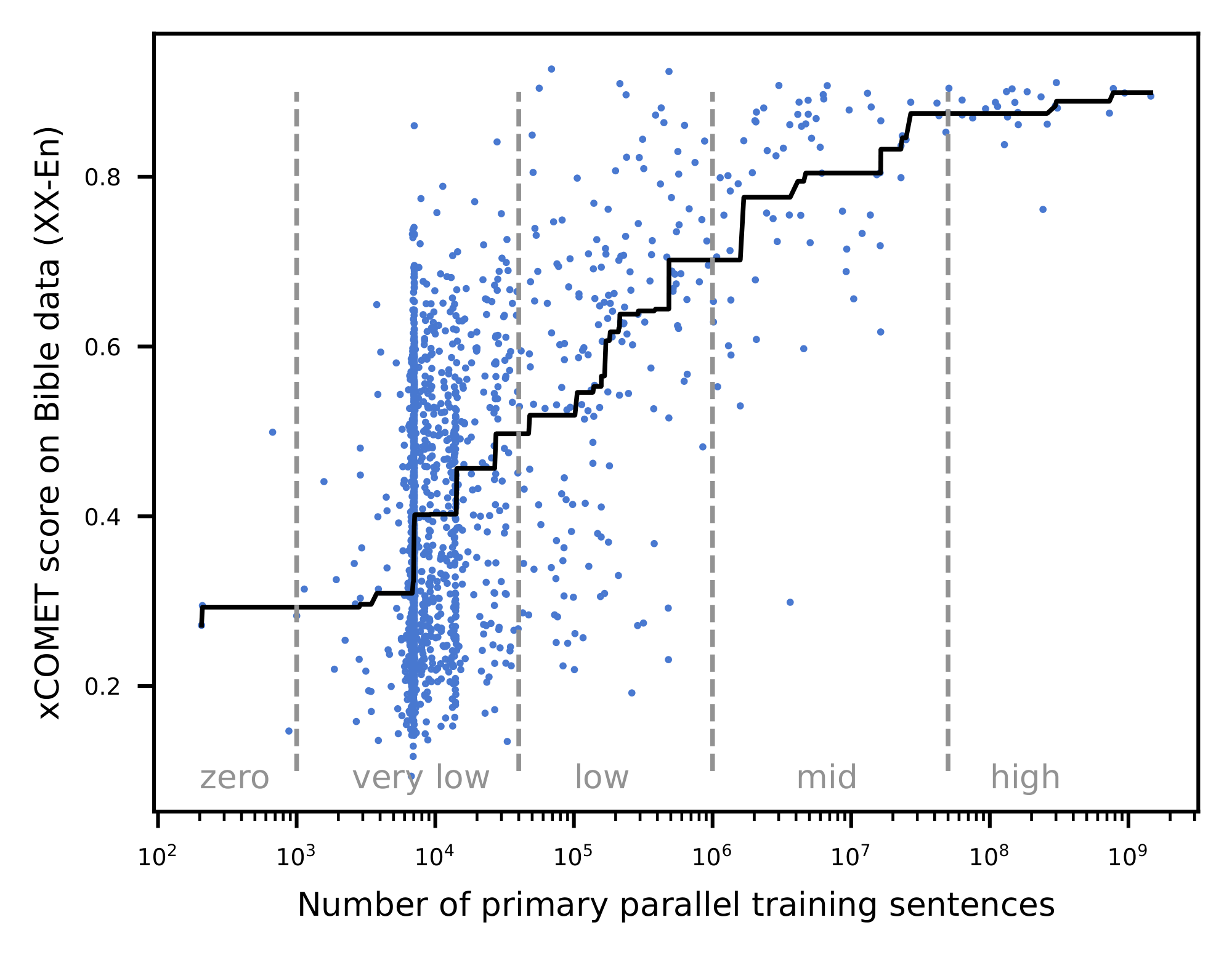

Based on our experiments (see Figure 1), we confirm a correlation between translation quality and the amount of parallel documents available. A clear shift in translation quality is observed for languages with more than 1 million parallel documents, which, following the NLLB convention, we establish as the threshold for the "low-resource" designation. An additional qualitative change is observed at approximately 40K parallel documents: this corresponds to a corpus size comparable to that of the Bible, supplemented by at least one additional source of parallel training data.

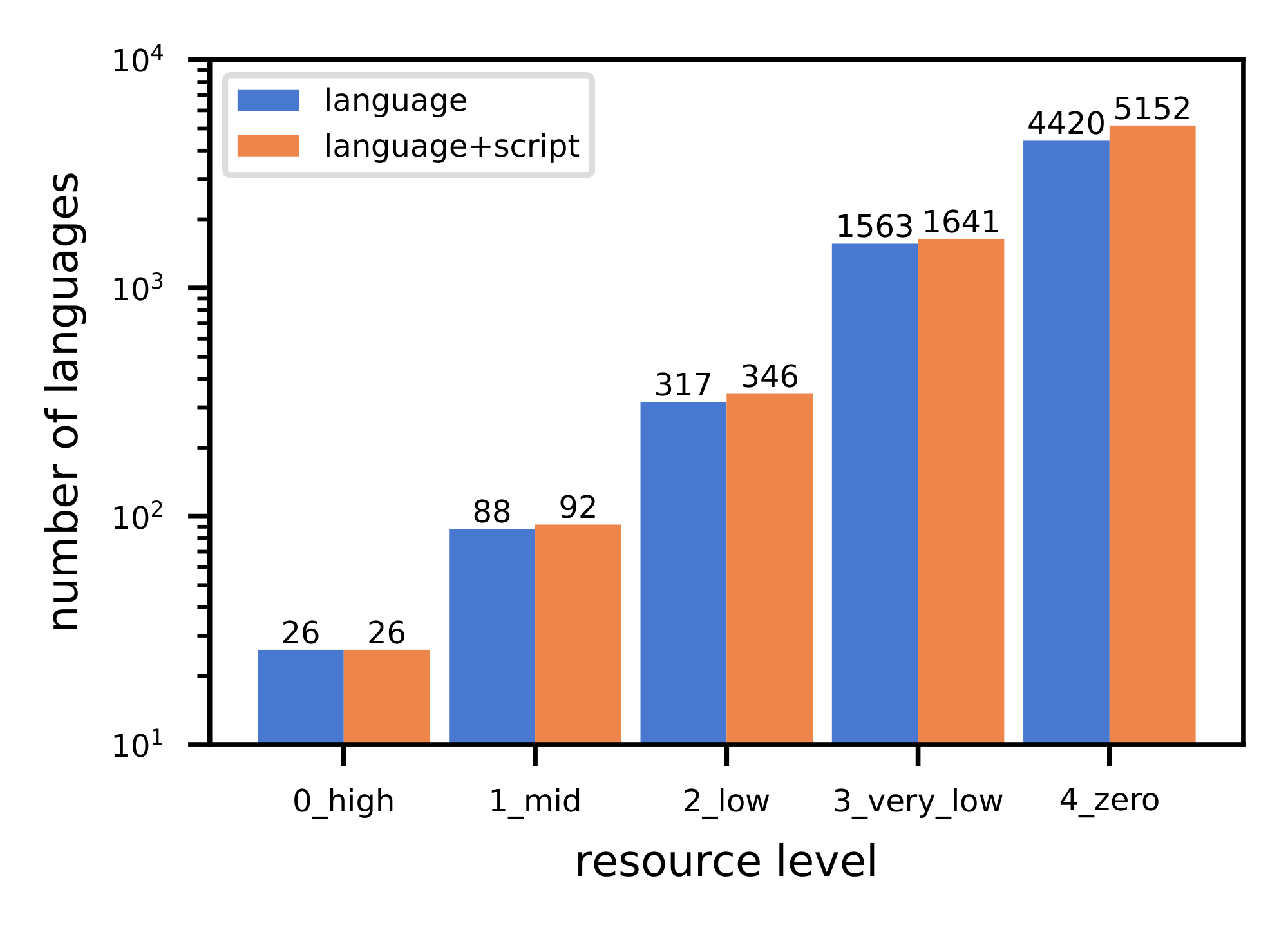

Therefore, the final classification relies on parallel documents available, with a language considered high resource if we have more than 50M document pairs (for such languages, MT quality of most systems is predictably high), mid resource above 1M, low resource if we have parallel documents between 40K and 1M, extremely low resource if we have between 40K and 1K parallel documents, and zero-resource below 1K (mostly to indicate that their training data size is much lower than even a typical Bible translation or a seed corpus, often represented only by a few sentences in a multilingual resource like Tatoeba). See the graph with distribution of languages per resource bucket in Figure 2.

This definition comes with some limitations. Most anomalies come from languages with less than 1k parallel documents on which we observe equally high translation quality. By doing a manual inspection on those languages, we could hypothesize that this bucket contains languages which are highly similar to other high resource languages and benefit from positive knowledge transfer. Another anomaly, but much more rare, is low-performing languages in the bucket of languages with more than 1M documents. In this case, again, by manual analysis we could hypothesize that there are languages for which we have extremely low quality data or very narrow domain distribution of it. Or, complimentary, rare scripts that are not well represented by the tokenizer and as a consequence low quality MT performance. Finally, sometimes we misattribute the available training data to other languages, due to loosely defined language boundaries (e.g. some data for a dialectal Arabic language could be identified simply with the ara_Arab code, pointing to the Arabic macro-language without specifying the language).

3.4 Describing languages in prompts

When prompting both $\textsc{OMT-LLaMA}$ models and instruction-following external baselines to translate, the precise format of describing the target language may affect the generation results. Different organizations prefer different language code formats, and to ensure interoperability of our prompts between diverse models, we opted for natural-language descriptions of the language varieties.

Our template for language names includes the language name itself, followed by optional brackets with the script, locale, or a dialect: for example, spa_Latn becomes "Spanish", cmn_Hans becomes "Mandarin Chinese (Simplified script)", eng_Latn-GB turns into "English (a variety from United Kingdom)", and twi_Latn_akua1239, into "Twi (Akuapem dialect)". We omit the script description for the languages that are "well-known" (using inclusion into FLORES-200 as a criterion) and that are expected to be using one single script in an overwhelming majority of scenarios.

For mapping the codes of languages, scripts and locales into their English names, we mostly rely on the Langcodes package, ^4, which in turn relies on the IANA language tag registry. For referring to dialects, we use their names from the Glottolog database[^5], but employ them only for disambiguating otherwise identical language varieties in FLoRes+.

[^5]: From the languoids table in https://glottolog.org/meta/downloads; currently we are using version 4.8.

4. Creating High-Quality Datasets

Section Summary: High-quality data is essential for building effective translation systems across thousands of languages, so this section emphasizes curating and selecting reliable sources for training and evaluation. For training through continual pretraining, the team uses diverse multilingual resources like filtered Common Crawl datasets covering over 2,000 languages, Bible translations for alignment, dictionaries such as Panlex, and sentence collections like Tatoeba, along with specialized parallel texts. To address gaps in underrepresented languages and topics, they also create new monolingual and aligned datasets from web sources, synthetic generations, and manual efforts, while relying on Bible subsets and benchmarks like FLoRes+ for evaluation.

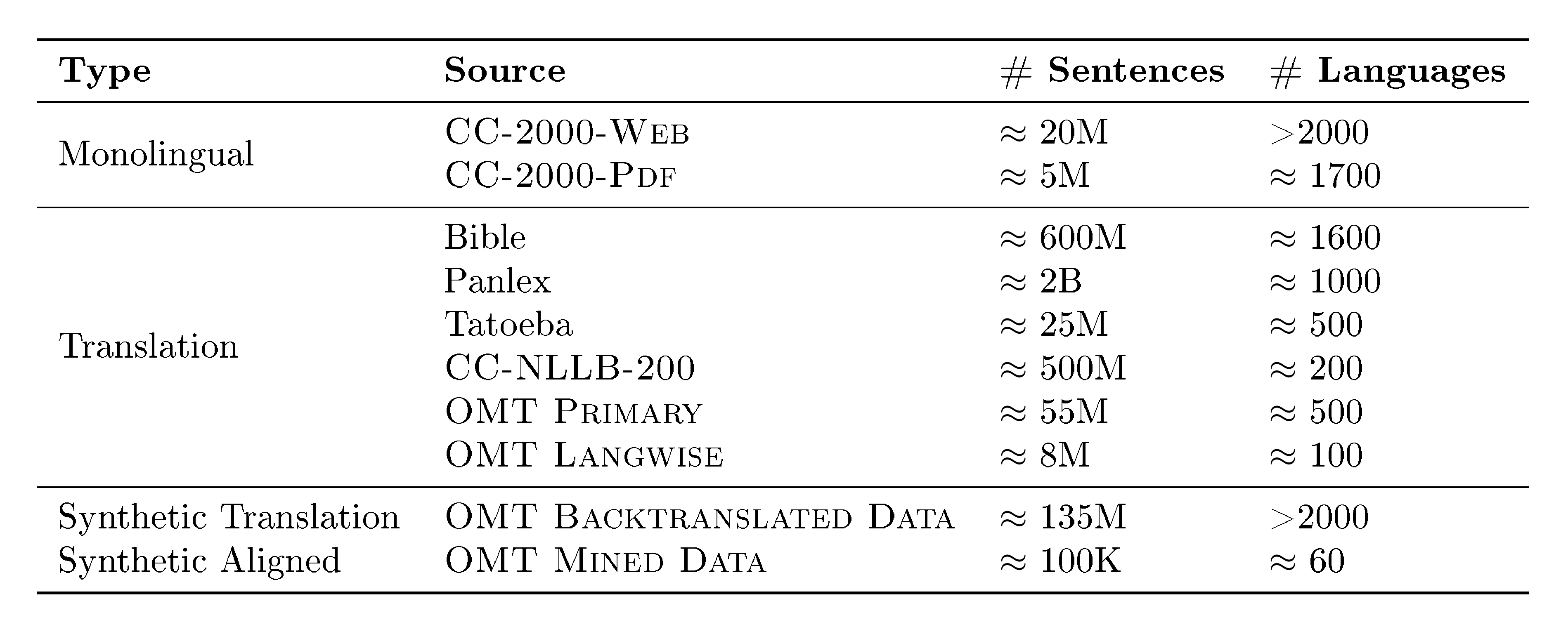

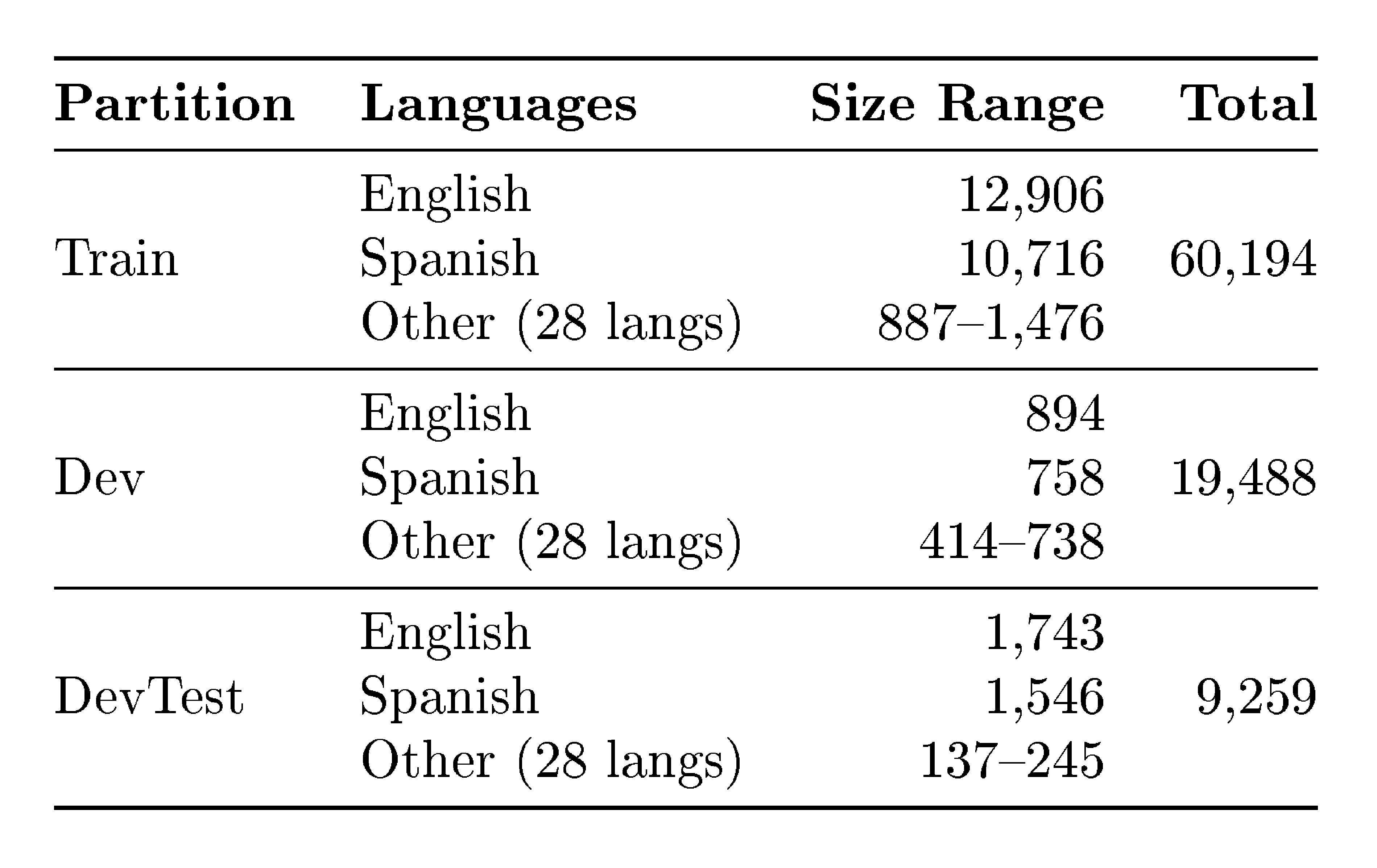

Access to high quality data is crucial to develop a high quality translation system. We give special focus to creating, selecting, and curating high-quality data for thousands of languages both for training and evaluation. In this section, regarding training, we mainly discuss continual pretraining (CPT) data, while main data for postraining is directly discussed in the corresponding section (Section 6.3). For CPT, we leverage mainly datasets in Table 2 and presented in Section 4.1. For evaluation, we rely on a subset of the Bible (Section 4.4.2) and several standard ones—e.g., FLoRes+ ([1]).

Beyond this, and to compensate for existing limitations in the existing data such as lack of long-tail languages, domains, registers and others, we curate new training datasets, both monolingual (inspired by [11] and [24]) and aligned (manual MeDLEy, parallel data inspired by [1], Section 4.3, and synthetic data, Section 4.2) as well as the BOUQuET evaluation dataset (Section 4.4.1).

4.1 Main CPT Training Data Collection

As follows, we mention and briefly categorize some highly multilingual text resources of various kinds: parallel and non-parallel, word-, sentence-, and document-level. Additionally, Table 2 summarises the main sources and volumes used to train our systems.

::: {caption="Table 2: A summary of the main sources and volumes (in number of sentences) used for CPT as detailed in Section 6.2."}

:::

Monolingual Datasets

We collect and curate two massively monolingual corpora, starting from snapshots of Common Crawl, ^6 inspired by the methodology and motivated by the results of [11] and [24]. We apply filters based on original URLs to discard low-quality pages, resulting in a URL-filtered version of Common Crawl. From this we create two datasets, that we refer to as $\textsc{CC-2000-Web}$ and $\textsc{CC-2000-Pdf}$, that collectively contain monolingual textual data sourced from web-pages or PDF documents spanning more than 2000 identifiable languages, as per the GlotLID model ([25]). Since our scope is continual pretraining (not full pretraining) and gathering more data for lower resource languages, we assign a fixed budget of at most 50 thousands documents per language, randomly sampling from the upstream corpora.

Bible texts

are used as one of the main parallel dataset for language analysis, for training and evaluation of MT systems. The Bible has the large language coverage (over 2000 languages) and many Bible translations are publicly available under permissive licenses. Finally, the Bible books are explicitly segmented into chapters and verses which are always preserved during translation, so aligning the translated text across the languages is trivial. Due to these reasons, Bible has already been used as the primary training set in several research works ([26, 7, 27, 28]). Additionally, we use the Bible to do part of our evaluation in order to have a reference-based benchmarking with large language coverage. We suggest using the Gospel by John as the test set, because the Gospels are the most translated from the Bible books, and John is considered to be the most different from the other Gospels. Training the Bible data has its caveats: its domain coverage is very limited, and language is often very old and formal. While training a model to understand such language might be alright, generating it would result in very unnatural style and various translation errors. Evaluating with the Bible shares the caviat of narrow domain and adds the contamination issue. We accept these risks and still use Bible both for training and evaluating, but we mitigate them using other sources (as explained in this section). We compile our Bible dataset from multiple sources[^7]

[^7]: With the prevailing majority of texts being downloaded using the eBible tool: https://github.com/BibleNLP/ebible.

Panlex

is a project collecting various dictionaries in a unified format^8. A processed dump of its database has 1012 languages containing at least 1, 000 entries, as well as over 6000 languages with at least one entry. This makes it probably the most multilingual publicly available dictionary.

Tatoeba

([29]) is a dataset of aprox 400 languages and 11M sententences. Overall, it is a large, open‑source collection of example sentences and their translations, built collaboratively by volunteers around the world. Its main goals are to provide a freely available multilingual resource for language learning, research, and the development of natural‑language‑processing tools.

$\textsc{CC-NLLB-200}$

We aim at building a system that improves upon NLLB-200 ([1]), at least retaining the performance on the 202 language varieties it covered. As a consequence, we apply the same URL filtering as we used to create $\textsc{CC-2000-Web}$ and $\textsc{CC-2000-Pdf}$ to a mixture of primary and mined datasets roughly reproducing the original data composition used to train NLLB-200 models, which we refer to as $\textsc{CC-NLLB-200}$.

$\textsc{OMT Primary}$

is a group of several massively multilingual datasets, some of which we describe as follows. SMOL ([10]) dataset includes sentences and small documents manually translated from English into 100+ languages. The docs are present both fully and by individual sentence pairs 2.4M rows. This dataset includes Gatitos ([9]), which is a dataset of 4000 words and short phrases translated from English into 173 low-resourced languages. BPCC ([30]) is a collection of various human-translated and mined texts in Indic languages, parallel with English. KreyolMT ([31]) contains bitexts for 41 Creole languages from all over the world. The dataset from the AmericasNLP shared task ([32]) represents 14 diverse Indigenous languages of the Americas. AfroLingu-MT ([33]) covers 46 African languages.

$\textsc{OMT Langwise}$

This dataset groups a set of less multilingual datasets, usually focused on a single low-resourced language or a group of related languages. A few examples of this compilation include ZenaMT ([34]) focused on Ligurian language, the Feriji dataset ([35]) for Zarma, and a dataset from [36] covering 6 low-resourced Finno-Ugric languages.

LTPP

Part of our training data mix constituted an extremely valuable parallel data from the Language Technology Partnership Program^9 which was launched with the purpose of expanding the support of underserved languages in AI models. Specifically, the compilation of parallel data from LTPP that we were able to use comprises 18 sources of various sizes and about 1.4M sentence pairs in total.

Limitations

Although we did a relevant effort to collect data, we are not exhaustive and detailed on its description and this inhibits replicability of our training, which is mitigated by the fact that we are sharing the model. More importantly, our current version of the model still misses many relevant sources.

4.2 Synthetic Data for CPT

For a significant portion of the languages we aim to support with our MT systems, there simply is no parallel data available, beside the Bible, and for some of them, not even the Bible has been translated yet[^10] or is not available for MT use. However, for several of them, publicly available monolingual corpora do exist and can be leveraged to generate synthetic parallel data via backtranslation and bitext mining, resulting in $\textsc{OMT Backtranslated Data}$ and $\textsc{OMT Mined Data}$.

[^10]: Bible translations statistics

4.2.1 Backtranslation

Motivation and related work

Backtranslation has become a standard technique to do data‑augmentation strategies for MT by translating monolingual target‑language data back into the source language ([37]). Since then, there have been several works exploring variations of this strategy. Edunov et al. [38] showed that iterative back‑translation, where the augmented data are repeatedly re‑translated, yields further gains and helps the model learn more robust representations. Subsequent work has extended the technique to low‑resource settings. [39] proposed copying monolingual sentences directly into the training data. [40] demonstrated that multilingual back‑translation can simultaneously improve translation across many language pairs by sharing a single encoder‑decoder architecture. [1] focused on efficiently in massively multilingual settings and they used a combination of neural and statistical MT translated data similarly to ([41]). More recently, ([13]) propose to use LLM‑based technique that generates topic‑diverse data in multiple low‑resource languages (LRLs) and back‑translate the resulting data. Several studies have investigated how to best filter ([42]) back‑translated sentences. Recently, the approach has even been proved useful in speech translation ([43]).

Methodology

To produce backtranslation data we mainly rely on the two massively monolingual datasets obtained from Common Crawl: $\textsc{CC-2000-Web}$ and $\textsc{CC-2000-Pdf}$. Furthermore, to increase domain diversity of our backtranslation data mix, we also rely on $\textsc{DCLM-Edu}$ ([44]) for educational-level forward-translated (out of English) data.

The backtranslation pipeline we build extracts clean monolingual texts from the monolingual corpora above, produces source- or target-side translations, and estimates the translation quality of the resulting synthetic bitext. Several of these steps are model-based, including but not limited to the translation step itself.

The first step consists of text segmentation, for which we use a fine-tuned version ([6]) of the $\textsc{sat-12l-sm}$ model ([45]), trained to predict the probability of a newline occurring at a given point in the text. Both sentence and paragraph boundaries can be obtained directly by tweaking the decision threshold. However, we find that resorting to heuristics to further refine these splits, e.g. re-splitting sentences deemed too long into smaller units, is beneficial.

After extracting textual units, the following step aims at removing noisy monolingual samples, i.e. units that are either too short or too long, and those for which we struggle to identify the language with enough certainty. For the language identification task we resort to $\textsc{GlotLID}$ ([46]), supporting 1, 880 languages at the time of writing. Empirically we find that $\textsc{GlotLID}$ top-1 score aligns well with human judgement on sample quality, with texts falling below certain thresholds either containing artifacts (e.g. HTML tags) or otherwise appearing as nonsensical text. We also find that this threshold is language-dependent, with a negative correlation between resourcefulness of the language and the average $\textsc{GlotLID}$ score of positive samples, when tested on annotated data. This suggests that, in line with intuition, texts in lower-resource languages are not just harder to translate but also to identify. We generalize this by calibrating $\textsc{GlotLID}$ scores on the aligned Bible, and define language-dependent thresholds for rejecting samples. This helps balance the competing objectives of keeping more data and rejecting lower quality samples.

For the translation step, we rely on two base MT systems: $\textsc{NLLB}$ ([1]) and $\textsc{LLaMA}$ 3 ([47]). The former is used as-is with no further fine-tuning to translate out of (or into) the 200 languages it supports, while the latter is used with no restriction, taking the best CPT and FT 8b model we were able to produce thus far. Notably, this model has been trained on both monolingual texts sampled from $\textsc{CC-2000-Web}$ itself and bitext from the Bible belonging to more than 1, 700 languages. This is crucial since the base model has not been explicitly optimized for tasks (e.g. translation) in languages, present in the original pre-training corpora, but beyond 8 high resource languages. Given the more demanding nature of producing translations with $\textsc{LLaMA}$ compared to $\textsc{NLLB}$, and that we already have data for languages covered by $\textsc{NLLB}$, we only run the former on a stratified sample of the monolingual corpora, down-sampling languages already supported by $\textsc{NLLB}$.

Finally, we estimate translation quality of the produced synthetic bitext with a mixture of model-based and model-free signals. For the model-based signals we rely on omnilingual latent space ($\textsc{OmniSONAR}$) similarity of source and target text ([6]). We find that other model-free signals such as unique character ratio are helpful to complement the model-based ones, as they are strong predictors of particular failure cases, e.g. repetition issues or MT systems producing translations that are just a copy of the source text.

Ablations

We run a series of ablations to understand how to effectively filter the produced data and incorporate it along other non-synthetic pre-existing corpora during continual pre-training.

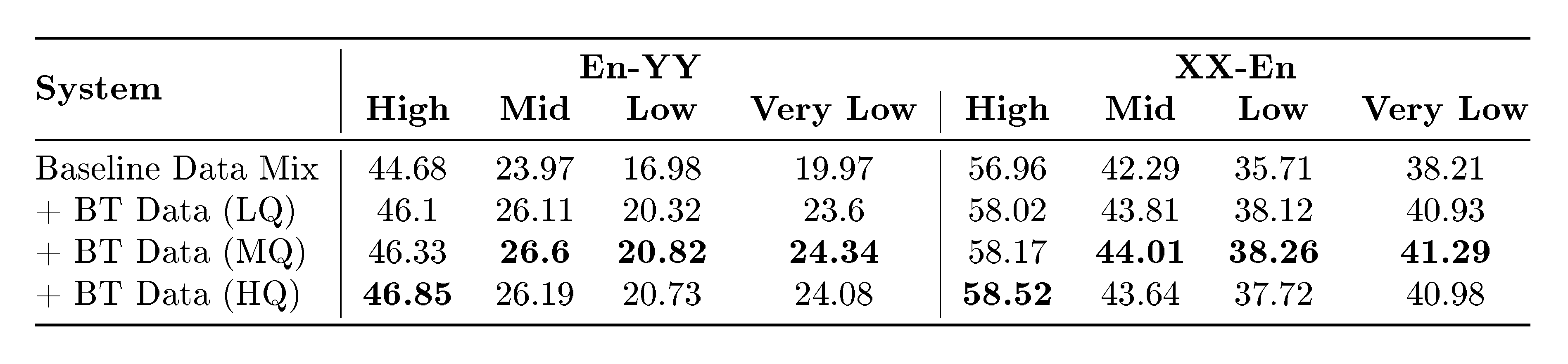

First, we study the downstream effect of filtering the backtranslation data according to cosine similarity of the translation in $\textsc{OmniSONAR}$ space. We first naively calibrate $\textsc{OmniSONAR}$ scores on the Bible development set, assuming perfectly uniform similarity estimation across languages, and find the mean latent space cosine similarity between aligned sentences: $\mu_{sim}$. Then, we define three thresholds, one standard deviation below ($LQ := \mu_{sim} - \sigma_{sim}$), at the mean ($MQ:= \mu_{sim}$) and above the mean ($HQ:= \mu_{sim} + \sigma_{sim}$). Then, we run an ablation training $\textsc{LLaMA}$ 3.2 3B Instruct on a stratified sample of $\textsc{CC-2000-Web}$, producing backtranslation data and then filtering according to these thresholds. We evaluate on FLoRes+, measuring translation quality over different language buckets (see Section 4.4) and comparing against a baseline fine-tuned on the same data mix but without backtranslation data. The results, summarized in Table 3, indicate that a uniform filtering strategy across language groups yield the best results, although filtering more aggressively on high-resource languages can yield even better results.

::: {caption="Table 3: ChrF++ when evaluating MT systems trained with different backtranslation data mixes."}

:::

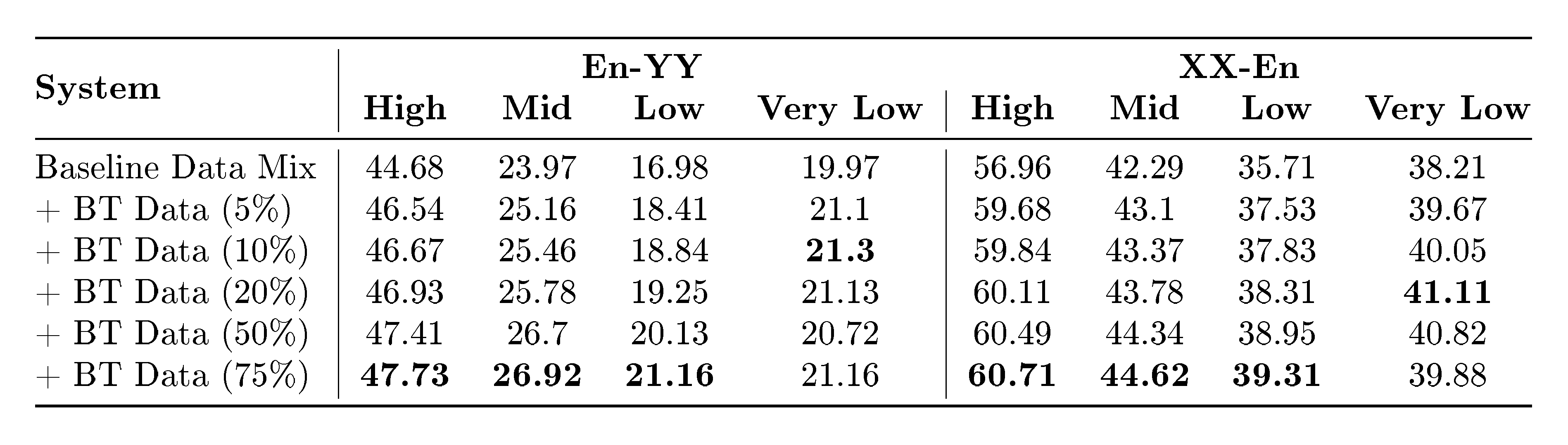

Second, after establishing a filtering strategy, we investigate the effect that mixing backtranslation data in different proportions along with pre-existing training corpora has on the downstream MT system performance. Given a fixed token budget for a training batch, we allocate $x%$ of those tokens to examples sampled from backtranslation data, exploring a range of $5%$ (ratio of 1:19 with respect to natural bitext) up to $75%$ (ratio of 3:1 with respect to natural bitext). The results reported in Table 4 show how the optimal performance for lower-resource languages is achieved when maintaining a ratio of 1:9 or 1:4 with natural bitext, as performance increases up to that point and then starts decreasing again. On the other hand, all the other buckets see increased performance as we increase the amount of backtranslation data.

::: {caption="Table 4: ChrF++ when evaluating MT systems trained with different backtranslation data mixes."}

:::

Dataset statistics

In Table 5 we summarize the resulting dataset obtained with the methodology outlined above. The dataset contains roughly 270 million sentences spanning more than 2, 000 languoids, that we divide in three buckets: high resource and low resource indicate languoids that were described as such in [1], while very low resource indicate any languoid not included among the ones supported by $\textsc{NLLB}$. The stratified sampling by languoid group at the source results in an artificially balanced distribution, with high resource languoids taking up 38% of the unfiltered data, low resource languoids taking up 35% and very low resource languoids the remaining 23%. The progressively more relaxed filtering strategy leads to a final distribution where 51% of the data is taken up by sentences belonging to low resource languoids, 26% belonging to very low resource languoids, and 23% belonging to high resource languoids.

::: {caption="Table 5: Statistics about resulting backtranslation data."}

:::

4.2.2 Bitext Mining

Motivation and related work

Complementary to backtranslation, bitext mining is another method for data augmentation which expands parallel corpora by automatically aligning pairs of text spans with semantic equivalence from collections of monolingual text. In order to find semantic equivalence, early works such as [48] attempted to find parallel text at the document level by examining an article's macro information such as the metadata and overall structure. Later works have applied more focus on the textual content within articles, leveraging methods such as bag-of-words ([49]) or Jaccard similarity ([50]). With the advances of representation learning, more recent approaches have begun to employ the use of embedding spaces by encoding texts and applying distance metrics within the space to determine similarity, moving beyond the surface-form structure. Works such as [51] and [52] used bilingual embedding spaces. However, a drawback to this approach is that custom embedding spaces are needed for each possible language pair, limiting the permissibility to scale. Alternatively, encoding texts with a massively multilingual embedding space allows for any possible pair to be encoded and subsequently mined, and has become the adopted backbone for many large-scale mining approaches ([53, 54, 55]). Generally, within this setting there are two main approaches: global mining and hierarchical mining. The latter focuses on first finding potential document pairs using methods such as URL matching, and then limiting the mining scope within each document pair only. Examples of such approaches are the European ParaCrawl project ([56, 57]). Alternatively global mining disregards any potential document pair as a first filtering step, and instead considers all possible text pairs across available sources of monolingual corpora ([58, 59]). This approach has yielded considerable success in supplementing existing parallel data for translation systems ([1, 42]).

Methodology

We adopt the global mining approach in this work. For our source of non-English monolingual corpora, we used $\textsc{CC-2000-Web}$ and FineWeb-Edu ([60]) as our source of English articles. We also considered $\textsc{DCLM-Edu}$ as an option for English texts. However, as $\textsc{DCLM-Edu}$ contains less articles than Fineweb-Edu, and given that the likelihood of a possible alignment increases as a function of the dataset size, we opted for the latter. We begin by first pre-processing our monoglingual data using the same sentence segmentation and LID methods as our backtranslation pipeline (see Section 4.2.1). Subsequently, we encode the resulting data into the massively multilingual $\textsc{OmniSONAR}$ embedding space. In order to help accelerate our approach, we use the FAISS library to perform quantization over our representations, and enable fast KNN search ([61]). We first train our quantizers on a sample of 50M embeddings for each language using product quantization ([62]), and then populate each FAISS index with all available quantized data. For our KNN search we set the number of neighbours fixed to $k = 3$, and to apply our approach at scale we leverage the stopes mining library^11 ([63]).

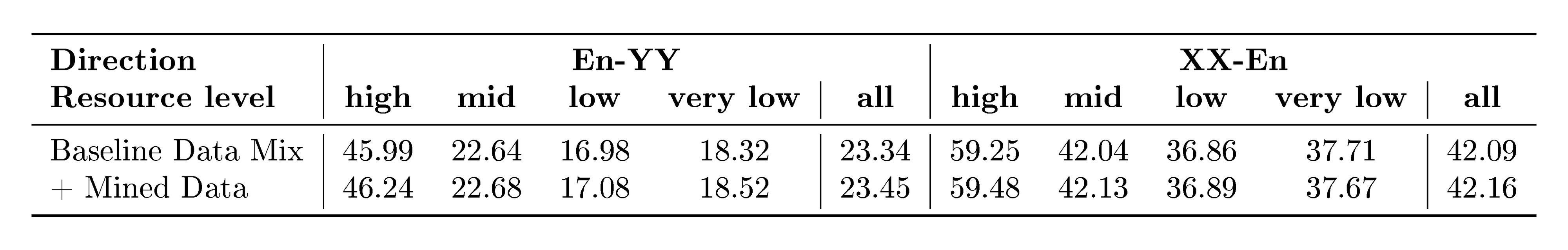

Ablation

In order to measure the effect of our resulting mined data, we performed a controlled ablation experiment. We choose the LLaMA3.2 3B Instruct model, and continuously pretrain it with two different data mixtures: one without the mined alignments, and a second supplemented with the mined data. In order to control for possible confounding variables, we fix the effective batch size, number of training steps, and all other hyperparameters for both models. Similar to our backtranslation ablations, we evaluate performance with the FLoRes+ benchmark using the metric ChrF++. Results are shown below in Table 6. Overall, we see improvements when adding in mined alignments to the data mixture showing the effectiveness across both high and low-resource settings. For example, langoids such as Greek and Turkish both see good relative improvements of 2.95% (47.4 $\rightarrow$ 48.8) and 2.74% (43.7 $\rightarrow$ 44.9) respectively. Similarly, we observe a 5.12% relative increase for the low-resource langoid N'Ko (13.00 $\rightarrow$ 13.67).

::: {caption="Table 6: ChrF++ on FLoRes+ when evaluating MT systems continuously pretrained with and without mined data, split by whether English is the target or the source language and by the resource level of the other language."}

:::

4.2.3 Conclusions and limitations

The synthetic data we produce plays an important role in boosting MT system performance for lower-resource languages. Here, we briefly discuss some limitations and potential future work to further improve the impact of synthetic data.

We work from a limited collection of Common Crawl snapshots, that cover only a portion of the human spoken languages. Furthermore, since we rely on resource-hungry models and algorithms for both backtranslation and mining, scaling up the approaches is expensive and we limit the production of synthetic data to stratified samples of those snapshots. A more thorough investigation of the relationship between synthetic data quantity and downstream MT performance might reveal scaling laws that can be used to take more informed sampling decisions.

The backtranslation approach we employ could be improved both in the generation and filtering phase. In the generation phase, previous work (e.g. [64, 65]) often employ backtranslation in an iterative fashion, using a base system to backtranslate monolingual data, using the synthetic bitext to build a system better than the base one, then using the new system to produce higher quality synthetic data, and repeating the cycle for a number of steps. In the filtering phase, we could complement latent space similarity metrics with LLM-as-a-judge approaches similar to [66]; if the base model is itself a LLM, we could investigate the ability of the model to effectively score its own translations, and the interference between this ability and translation ability as CPT on new backtranslated data progresses.

The mining approach could be significantly scaled up by considering alignment with pivot languages beyond English. For instance, aligning languages within the same family or group such as Spanish and Portuguese can enhance cross-lingual transfer by leveraging their structural and lexical similarities. This strategy not only facilitates more effective knowledge transfer between related languages but also helps to reduce the model's bias toward English-centric data, promoting greater linguistic diversity and inclusivity in multilingual applications.

4.3 Seed Data for Post-Training: MeDLEy

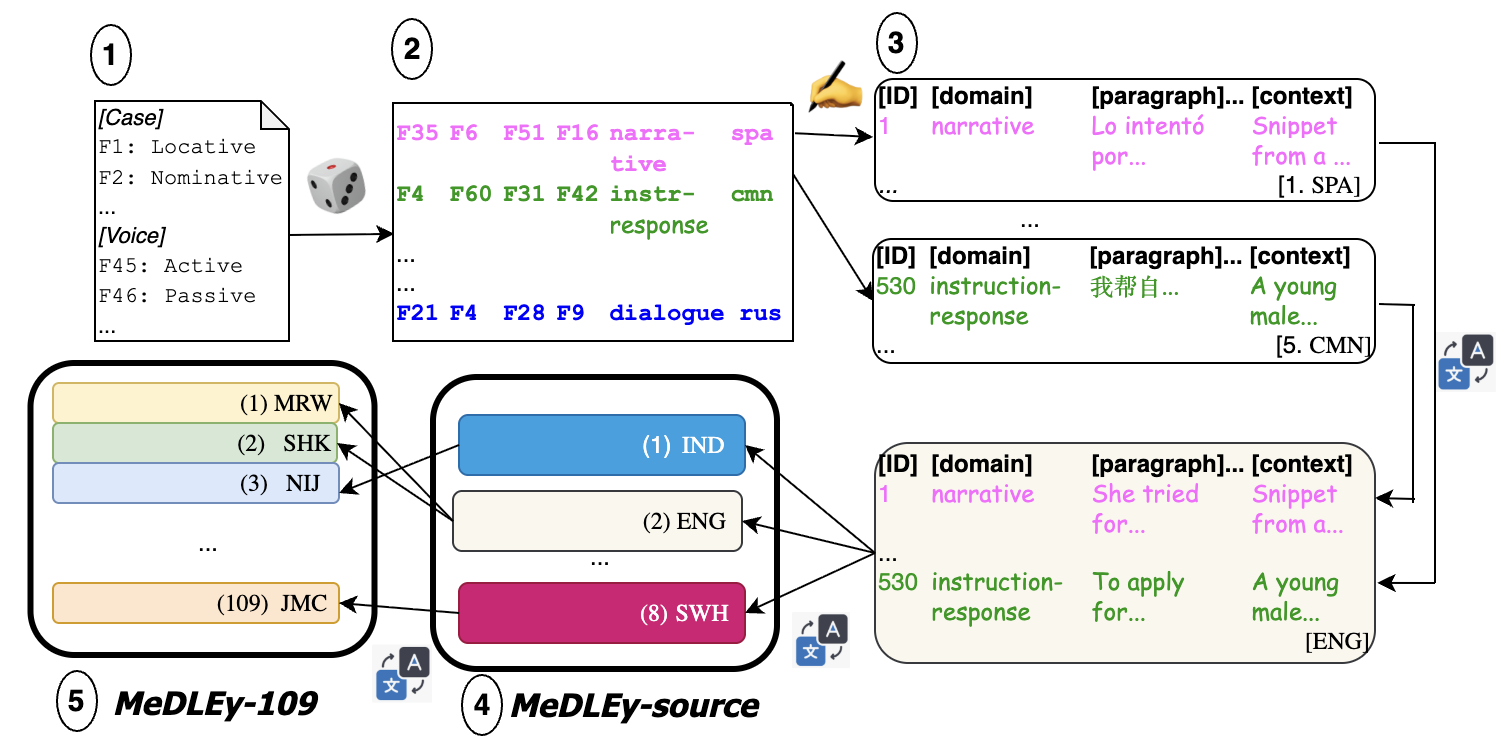

In this section we present MeDLEy, a multicentric, multiway, domain-diverse, linguistically-diverse, and easy-to-translate seed dataset. MeDLEy is a large scale data collection effort covering 109 LRLs. It MeDLEy-source and MeDLEy- 109. MeDLEy-source consists of $605$ manually constructed paragraphs with roughly $2200$ sentences and $34K$ words (counted in English). It is multicentric: source paragraphs are written in five source languages, thus including styles and cultural perspectives as well as topical subjects from a few different cultures. Each paragraph is accompanied with notes on any additional context required for its translation. It is then manually multiway parallelized across 8 pivot languages, increasing its accessibility to bilingual communities around the world. It is domain-diverse and grammatically diverse: it covers 5 domains and provides coverage to 61 cross-linguistic functional grammatical features that aim to cover a broad range of grammatical features in any arbitrary language that the dataset may be translated to. Further, we ensure that it is easy to translate: i.e., that it uses accessible, jargon-free language for lay community translators. MeDLEy- 109 provides professional translations of the dataset into 109 low-resource languages, as can be seen in Table 50. More details can be found in Appendix A, with examples from the dataset in Appendix A.7.

4.3.1 Motivation and related work

It is infeasible to manually curate data for LRLs at a large scale. Previous work emphasizes quality over quantity in the context of data collection for low-resource languages ([67, 68, 69]), and previous efforts seek to curate a small high-quality set of examples in these languages ([1, 10]). Such a "seed" dataset has various uses, such as training LID systems that can be used for data mining ([46]), or providing high-quality examples for few-shot learning strategies ([70, 71]). Importantly, while high-quality MT systems in both directions typically require training data at a much larger scale, seed datasets can be used to train models to translate into English with reasonable quality, which can then be used for bootstrapping synthetic bitext and better MT systems using monolingual data in LRLs ([37, 1]).

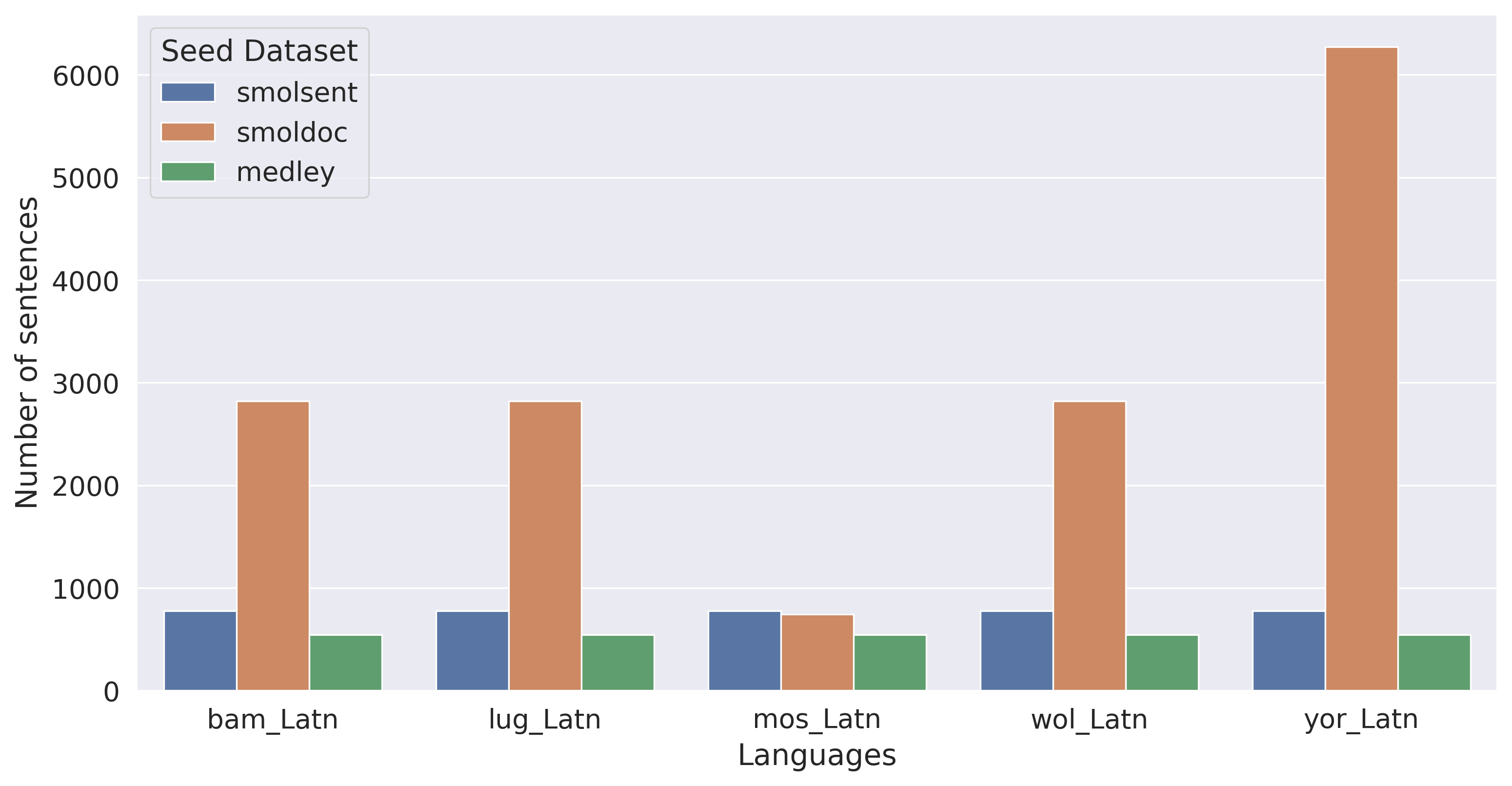

While there exist web-crawled monolingual and parallel datasets with low-resource languages such as $\textsc{MADLAD}$ ([72]), $\textsc{Glot500}$ ([73]), and $\textsc{NLLB}$ ([1]), these may be noisy and of unclear quality due to the scarcity of high-quality LRL content on the web ([74]) as well as LID quality issues for LRLs ([46]). There have been manual data collection efforts focusing on particular language groups, such as Masakhane ([75]), Turkish Interlingua ([76]), Kreyol-MT ([31]), HinDialect ([77]), as well as efforts for particular languages, such as Bhojpuri ([78]), Yoruba ([79, 80]), Quechua ([81]), among many others. $\textsc{NLLB}$-Seed is a highly-multilingual, professionally-translated parallel dataset, containing 6000 sentences from the Wikipedia domain translated into 44 languages ([1]). However, the most comparable effort to MeDLEy, in terms of scale, in collecting high-quality, professionally-translated parallel datasets is $\textsc{SMOL}$ suite ([10]). It consists of the $\textsc{SmolSent}$ and $\textsc{SmolDoc}$ datasets. The former consists of sentence-level source samples selected from web-crawled data translated into 88 language pairs, focusing on covering common English words. The latter consists of automatically generated source documents designed to cover a diverse range of topics and then translated into 109 languages. MeDLEy covers 92 languages not present in $\textsc{SMOL}$ or $\textsc{NLLB}$-Seed, contributing to the language coverage of existing datasets. MeDLEy also differs significantly in design considerations from the above, and it is the first such effort to focus on the coverage of grammatical phenomena in an arbitrary target language.

4.3.2 Approach

The goal of MeDLEy is to provide a bitext corpus that is domain-diverse and grammatically diverse in a large number of included languages. Given that a seed dataset is limited in size, it becomes crucial to include diverse and representative examples in it, so as to gain as much information as possible about the language. In this work, we focus on grammatical and domain diversity. The knowledge of a language's grammar is crucial to navigating the translation of basic situations into or out of that language. In order for an MT system to be flexible across various registers, domains, and sociopragmatic situations, it needs to be exposed to a variety of grammatical mechanisms used in those conditions.

What is grammar?

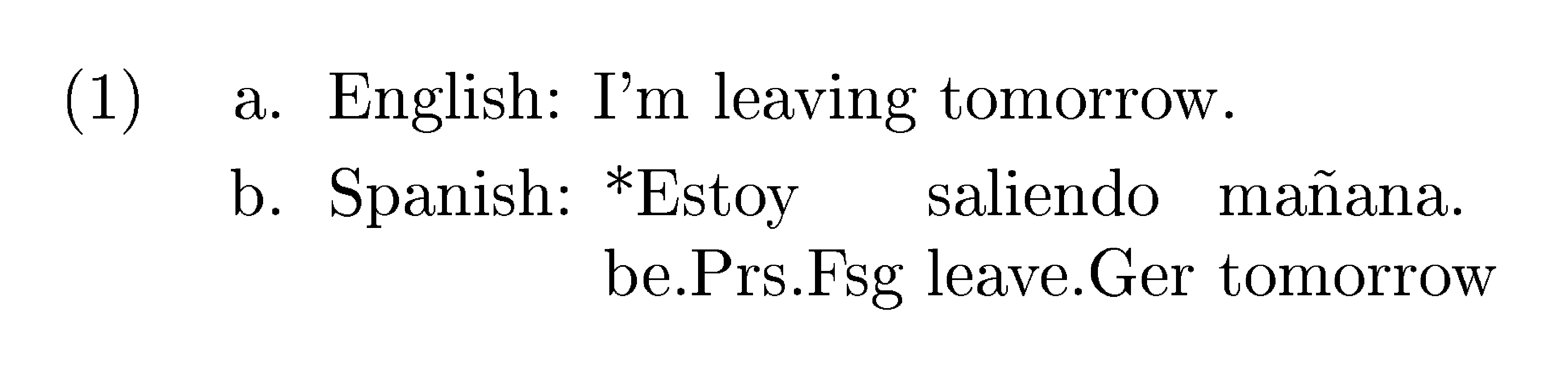

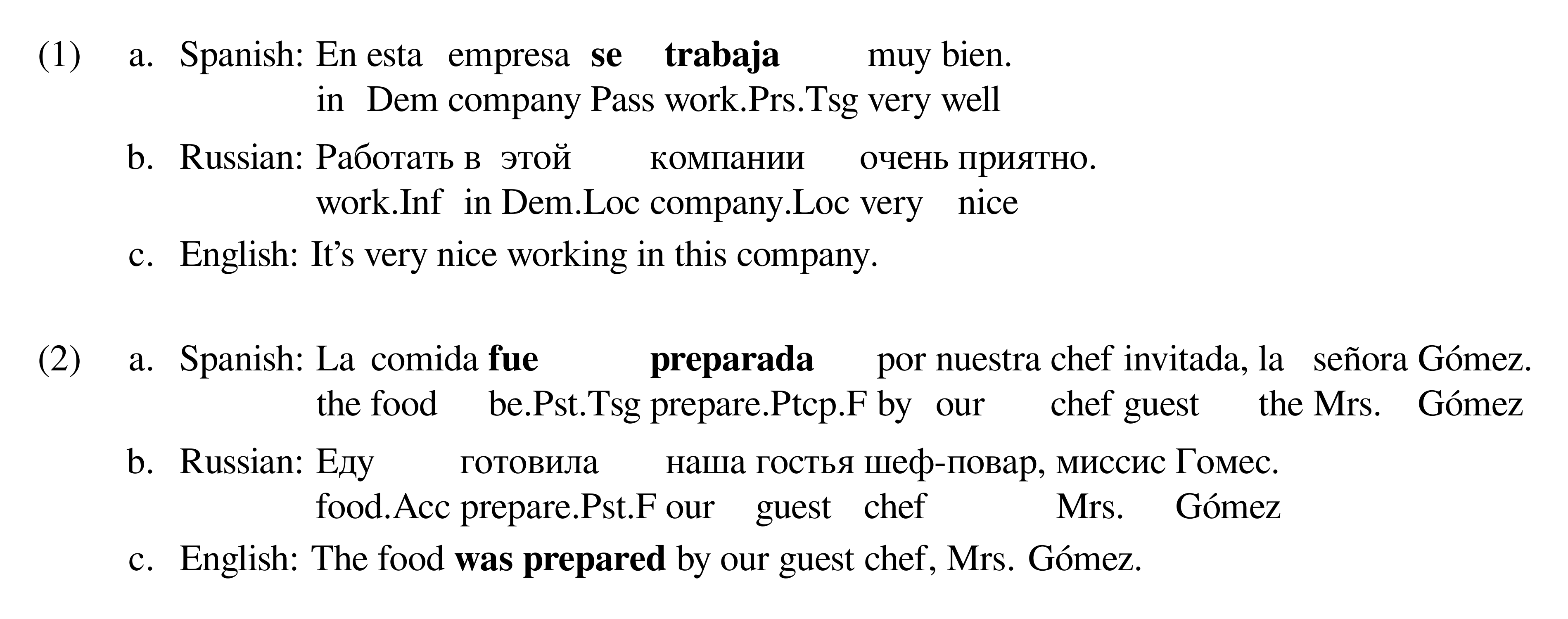

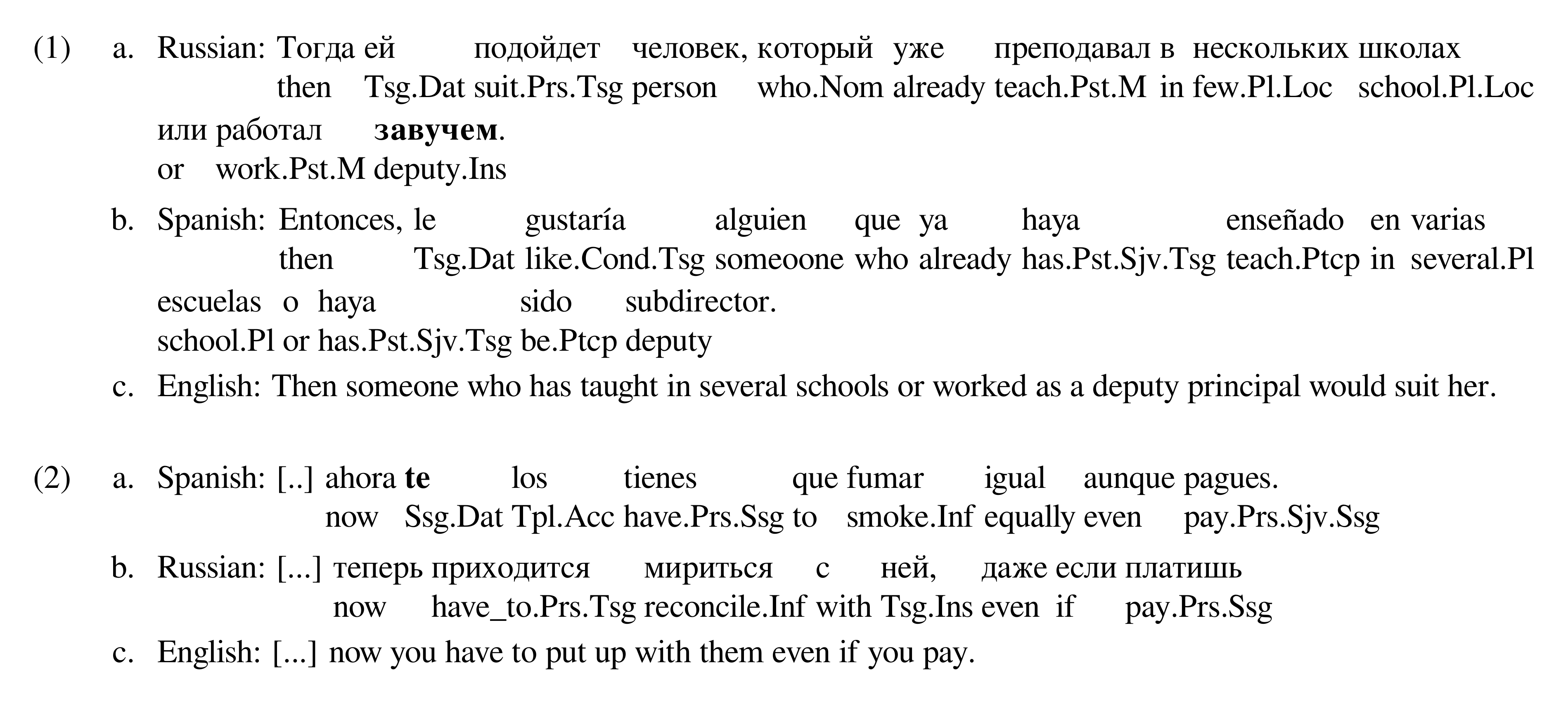

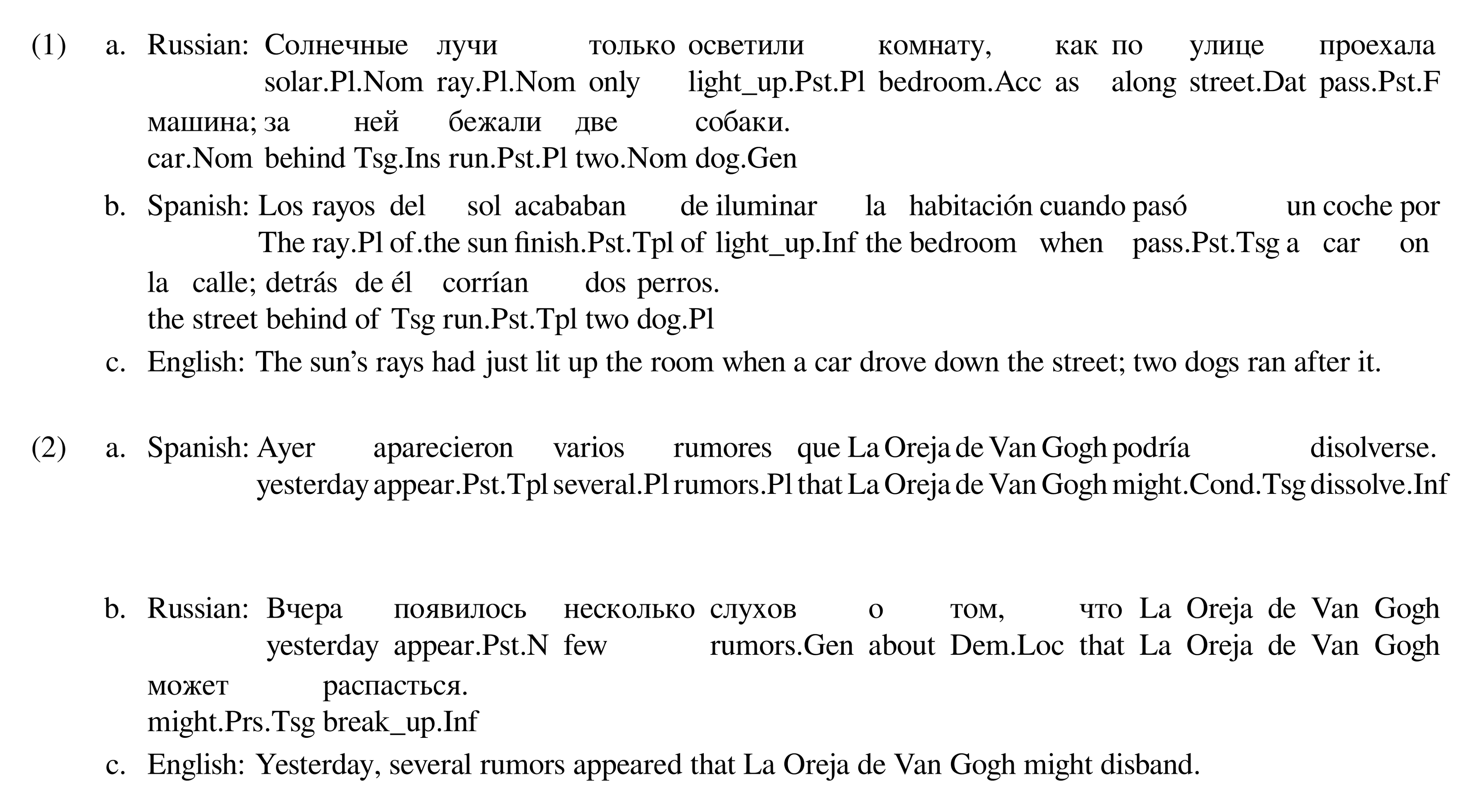

A language uses its grammar to systematically express certain kinds of information (for example, case is a grammatical mechanism used to express information about the role of a noun). In this work, we call the underlying meaning of a grammatical mechanism a grammatical function, and the actual shape of the grammatical mechanism used in the language the grammatical form. We show examples of functions and their forms in various languages in Table 7. Note that, as these examples show, these function-form pairs may be at all levels of linguistic structure, including morphology, syntax, and information structure. To refer to particular functions in this paper, we use canonical names associated with them for convenience. We refer to these as grammatical features. We construct our grammar schema in terms of these features.

Cross-linguistic variation

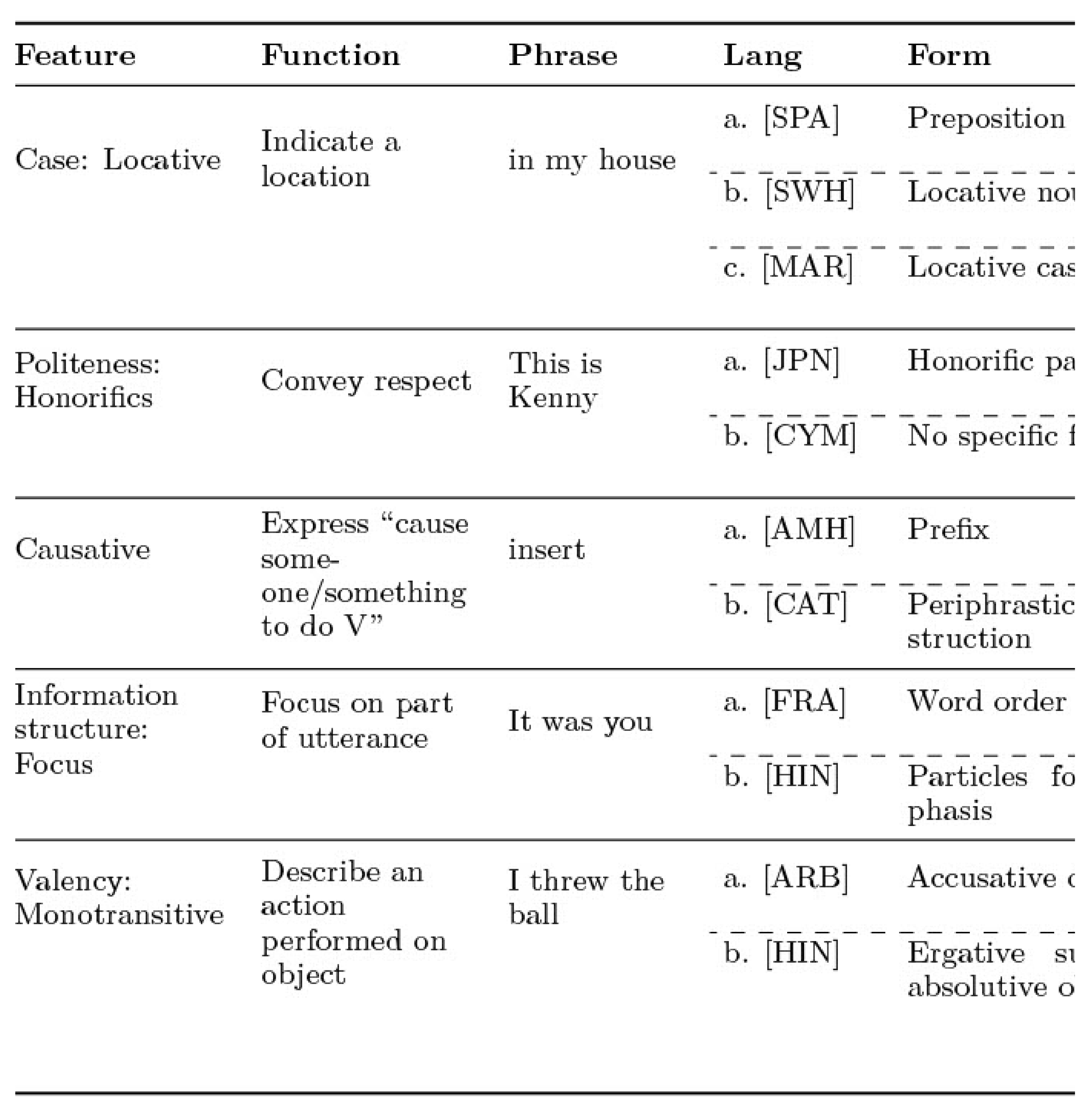

It is important to stress that languages vary extensively in terms of a) the set of forms they use to codify grammatical functions, b) in the manner of codification of a function (i.e. what form a particular function takes), and c) the mapping between form and function. First, the set of grammatical forms found in each language are not the same. For example, while some languages have honorifics to convey esteem or respect to address their interlocutors, other languages may not use any grammatical mechanism for this at all. Secondly, the same grammatical function may be codified into different grammatical mechanisms depending on the language, as in the example of the locative case (see Table 7). Finally, forms and functions often follow many-to-many relationships across languages. For example, the same feature can cover slightly different functions in two languages despite each having forms that share a core meaning with the other: English allows the so-called present continuous to express future events, while Spanish does not (1).

Building a grammatically-diverse corpus

::: {caption="Table 7: Examples of grammatical features and their associated functions or meanings, with various language dependent forms (specific mechanisms used to express that feature)."}

:::

Despite these differences, many grammatical functions codified in grammars tend to be shared across languages. For example, most languages have ways to differentiate which participants perform the action of an event and which ones experience it, the time at which an event occurred, how many referents there are, or whether an event is conditional upon another event taking place, to name a few.

Thus, achieving coverage over grammatical functions is a reasonable proxy for achieving coverage of grammatical phenomena at different levels of linguistic structure in a particular language. These grammatical functions can be enumerated up to a required degree of fine-grainedness at all linguistic levels with broad cross-linguistic coverage. Also note that, broadly speaking, most functions can be expressed in any language, regardless of the grammar of that language. For example, even though Spanish and English don't have a case system, it is certainly possible to express location in these languages akin to the Marathi locative case (see Table 7). Since the function is likely to be retained across translation, we can construct a source corpus that has high coverage over our grammatical features (in any source language), and expect that when it is translated into an arbitrary language, it will cover many grammatical phenomena in that language. For example, when we translate the English phrase "in my house" to Marathi, we gain coverage of the locative case in Marathi. We do not expect that each grammatical feature will be realized in the same manner across languages. However, we do expect that in many cases, the function associated with a feature will be manifested in some manner in a text or its context regardless of the language of the text. This allows us to build a grammatically-diverse source corpus that achieves broad grammatical coverage when translated into arbitrary target languages. This forms our cross-linguistic framework of grammatical diversity.

Dataset construction

Here we summarize the dataset construction process. The creation process involves: (1) curating a list of cross-linguistic grammatical features, as described above; (2) selecting domains (informative, dialogue, casual, narrative, and instruction-response) and source languages (English, Mandarin, Russian, Spanish, and German); (3) having expert native-speaker linguists craft natural, accessible source paragraphs based on templates combining grammatical features and domains, along with contextual notes; (4) translating these source paragraphs into eight pivot languages (English, Mandarin, Hindi, Indonesian, Modern Standard Arabic, Swahili, Spanish, and French) chosen to represent common L2 languages of low-resource language communities; and (5) commissioning professional translations from these pivots into numerous low-resource target languages selected for translator availability, prior coverage gaps, and language family diversity, resulting in grammatically diverse parallel text for underrepresented languages. See the list of grammatical features used, annotator guidelines, and more details about our approach in Appendix A.1 and Appendix A.2.

Grammatical features transfer and retention

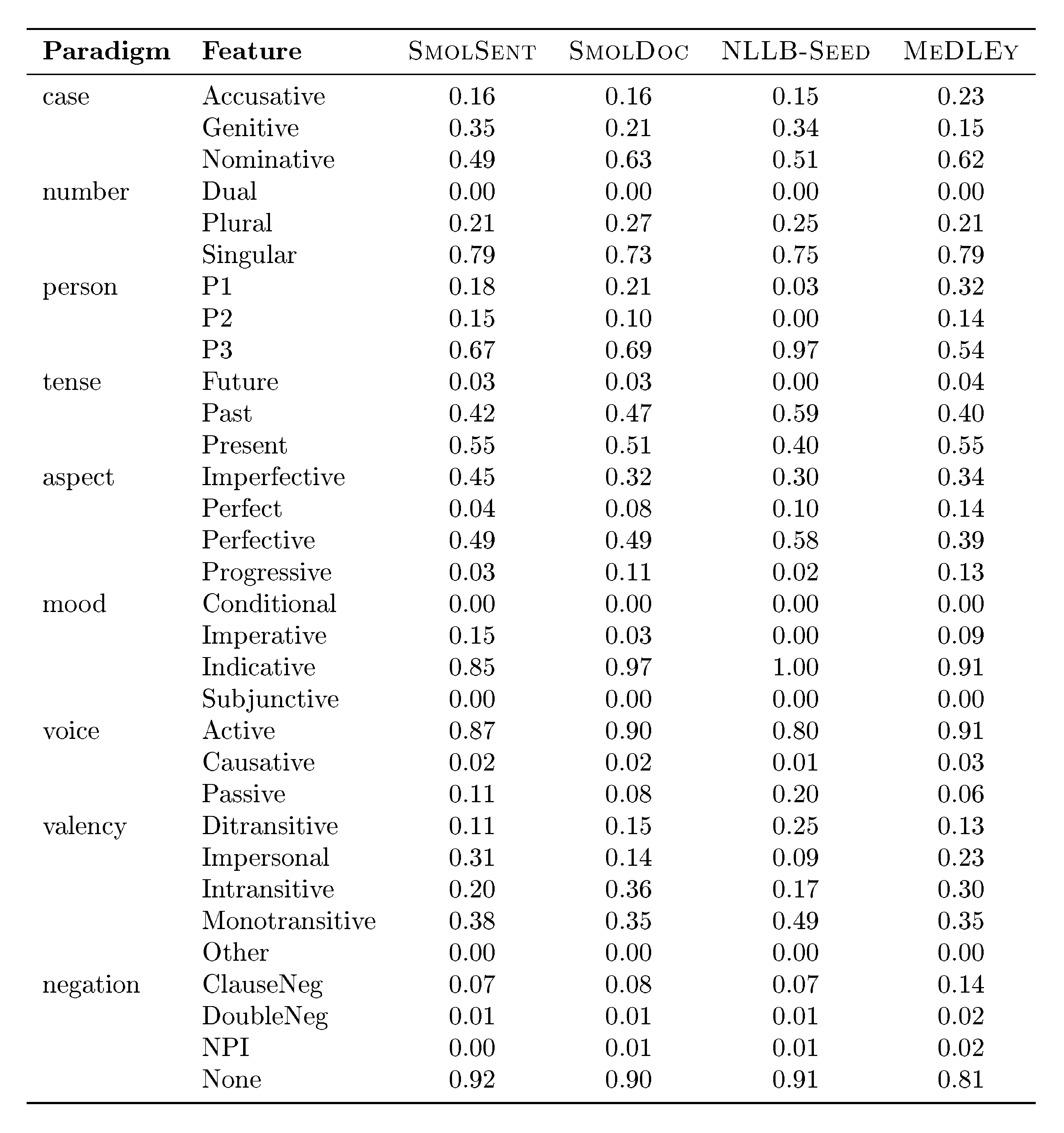

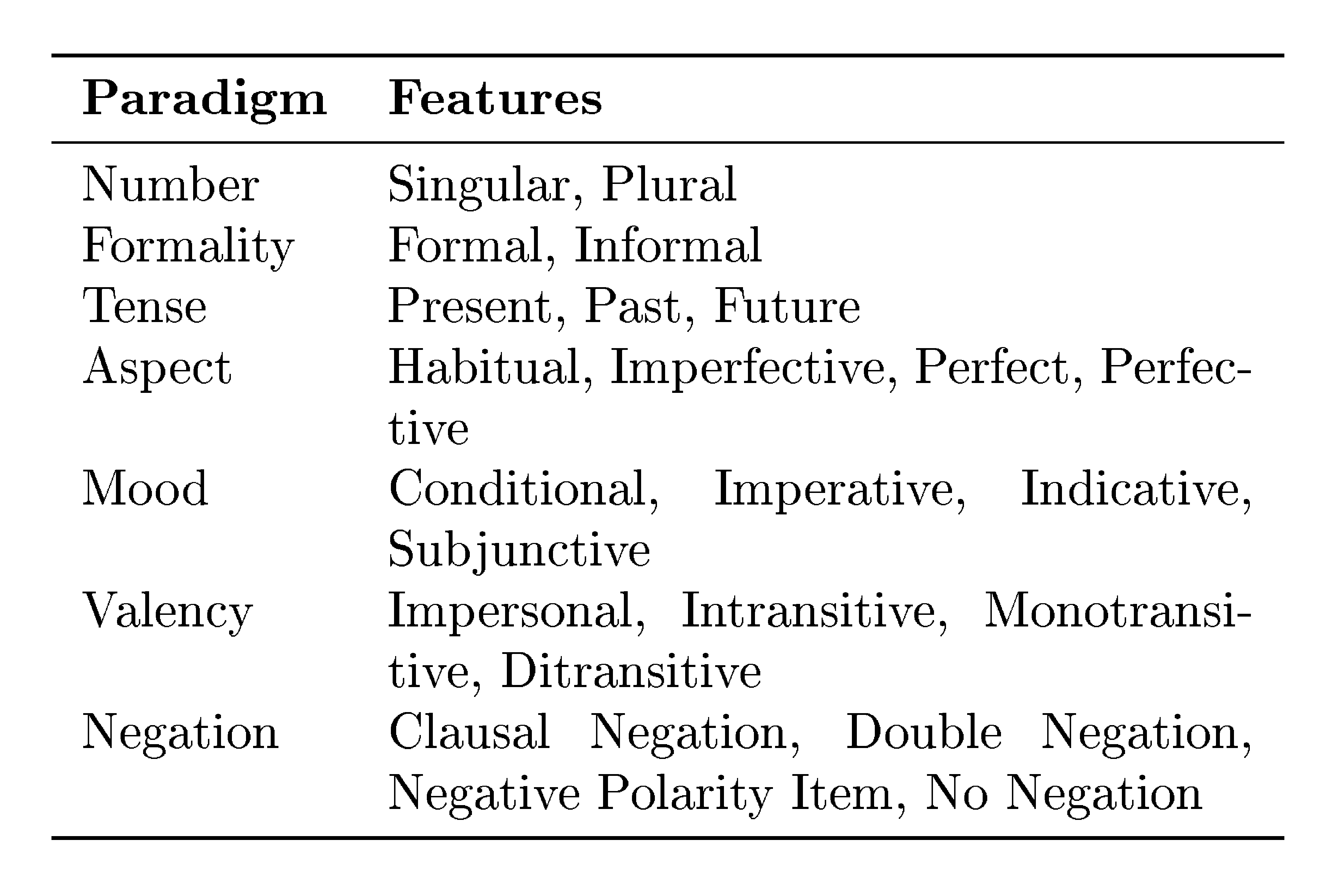

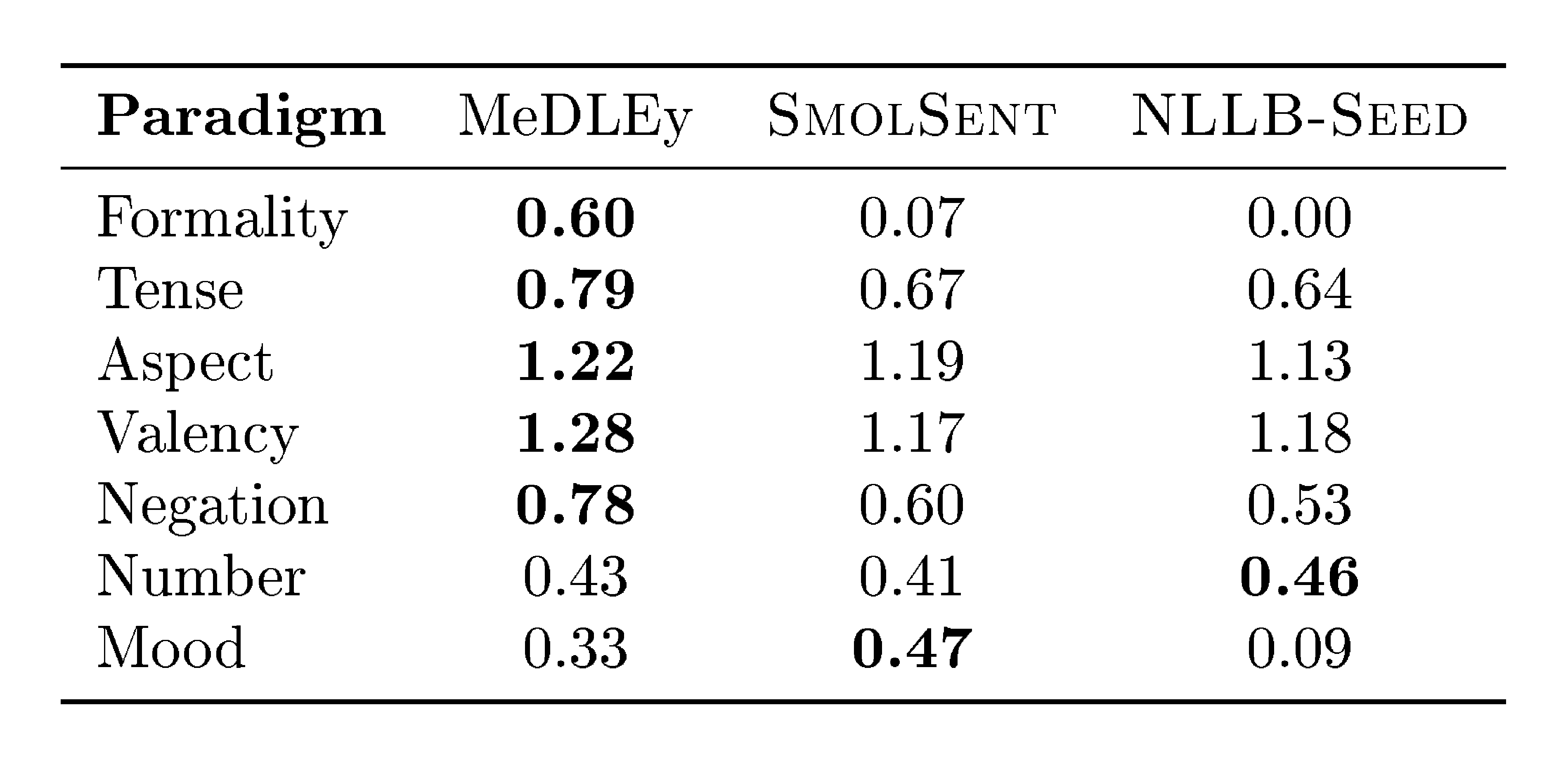

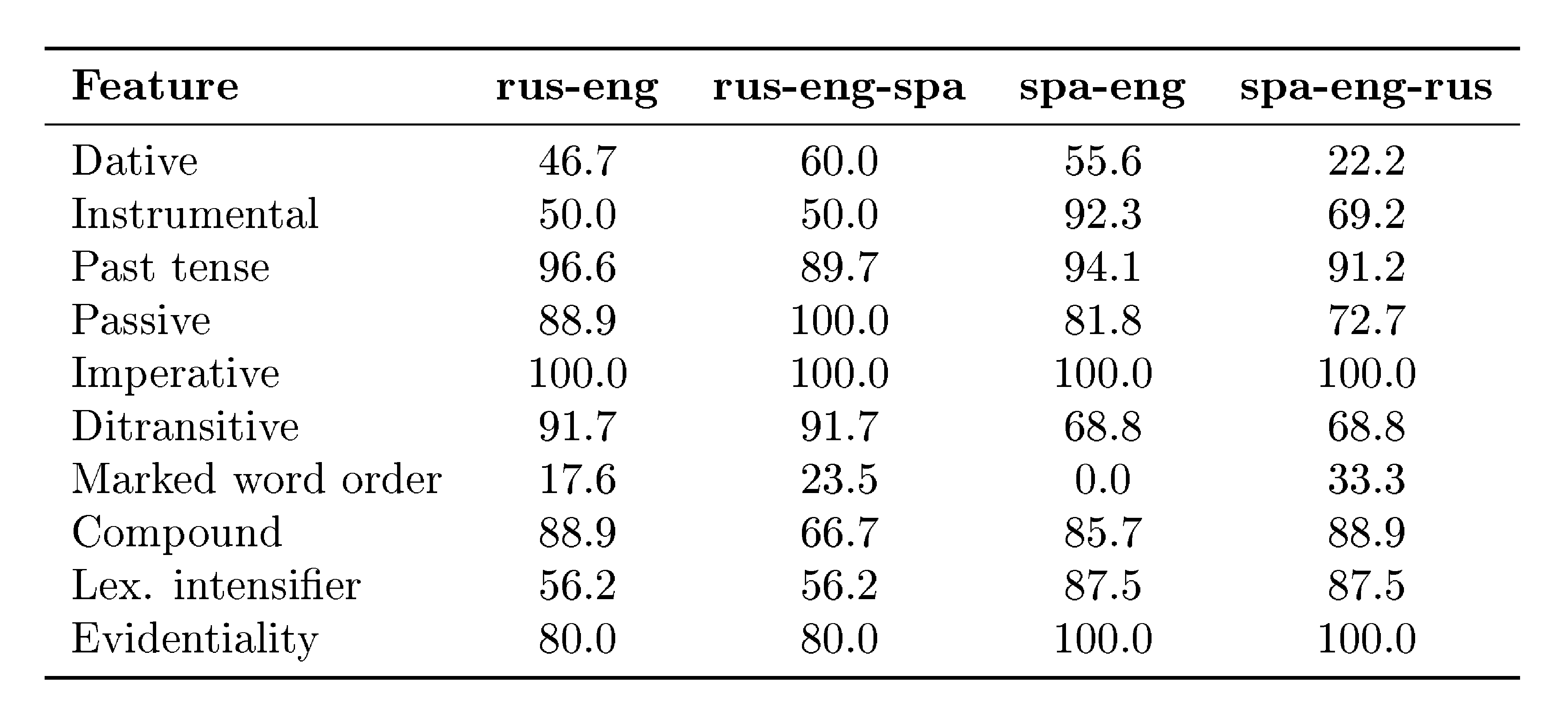

Given the aim of covering naturally rarer features in our dataset, we compare the entropy of grammatical feature distributions in our dataset versus other seed datasets, across 9 categories (e.g. tense, formality). We find that MeDLEy shows the highest entropy in 5 out of 9 categories, indicating that MeDLEy often has higher proportions of rarer features in a paradigm. Furthermore, given our assumptions of feature transfer via translation during the creation of MeDLEy, we measure the extent of feature transfer and the extent to which features are preserved across translation hops. We thus conduct a qualitative feature transfer analysis looking both at single-hop and 2-hop translations. We find that most morphosyntactic features have transfer rates above 50%, and interestingly, forms that do not surface in a target translation can resurface in next hop from that language, indicating that grammatical diversity is preserved in a language-dependent manner via translation. The grammatical feature distribution as well as feature retention analyses are detailed in Appendix A.8.

4.3.3 Experiments

MeDLEy may have several uses given its grammatical feature coverage and n-way parallel nature. We demonstrate its general utility for fine-tuning MT models for LRLs.

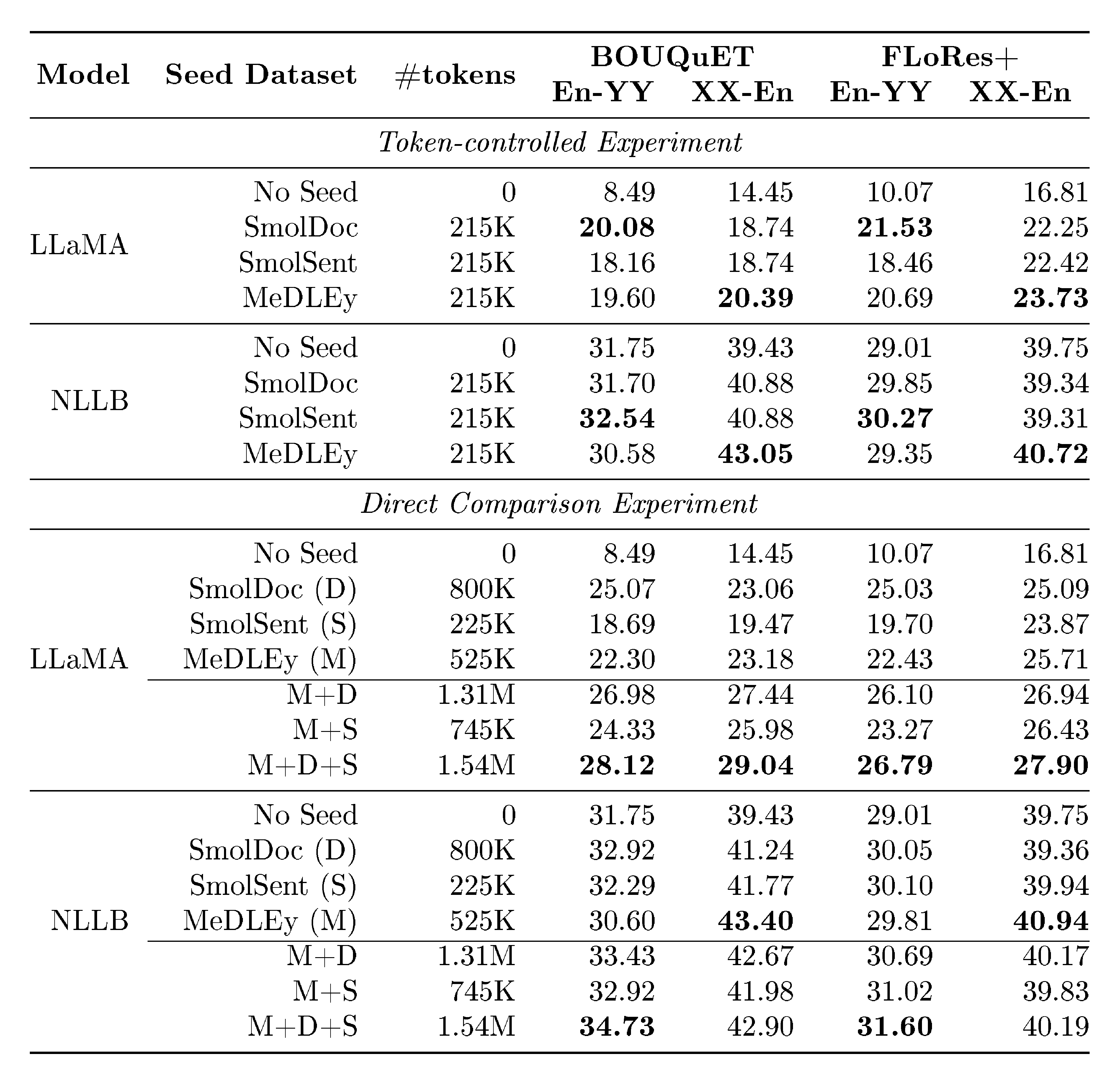

Experiment Setup

In particular, we perform a Token-controlled comparison and also measure Absolute and combined gains for models fine-tuned on MeDLEy versus other datasets. On the former, given that larger datasets are more expensive to annotate, we compare randomly sampled equally-sized training subsets of MeDLEy and baseline datasets in terms of number of tokens for a fair comparison, using the size of the smallest dataset as the token budget. For the latter, we report absolute gains from training on the entire dataset. We also look at additive gains from combining seed datasets, which may help inform decisions about language coverage in future seed datasets.

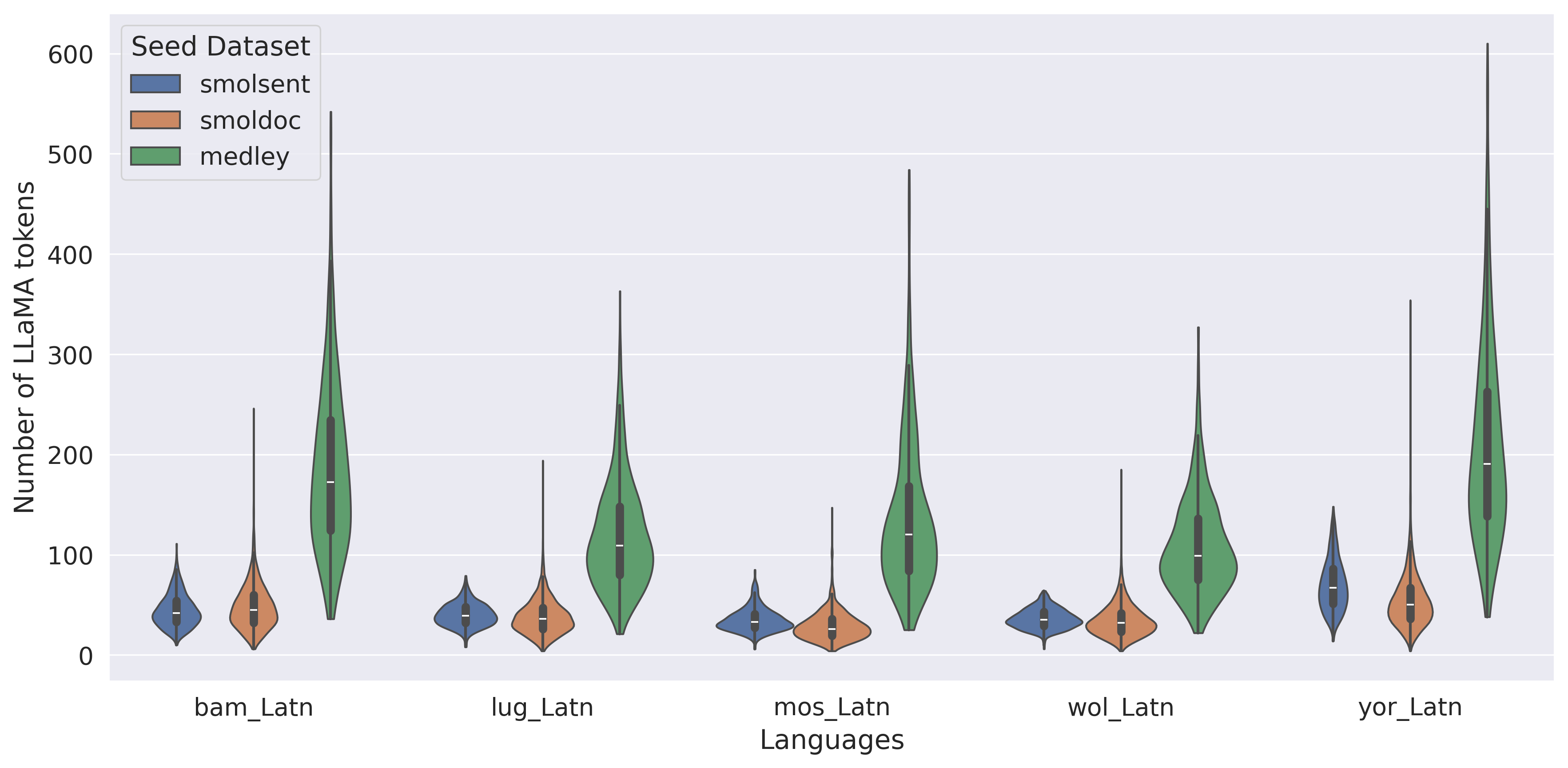

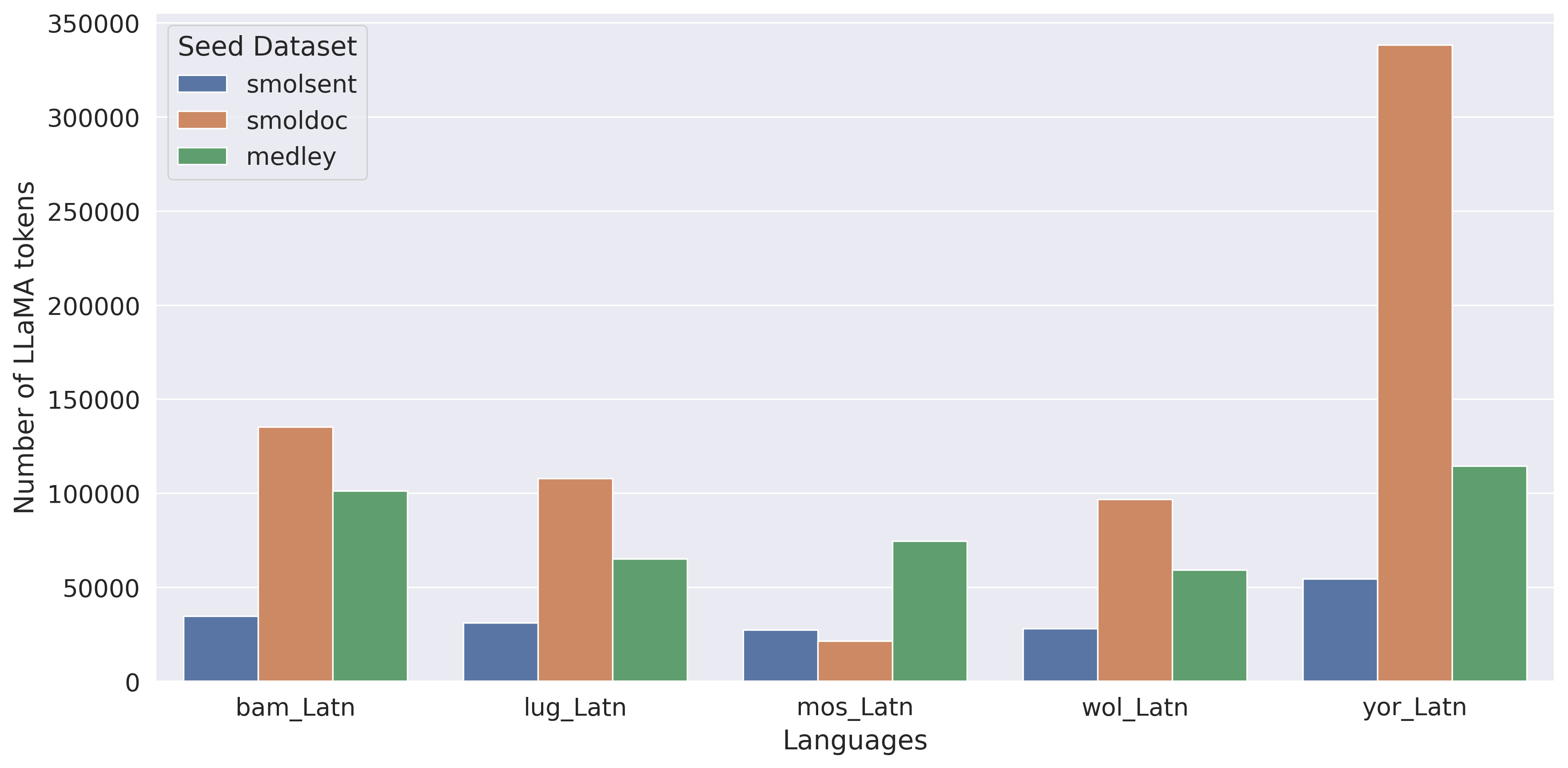

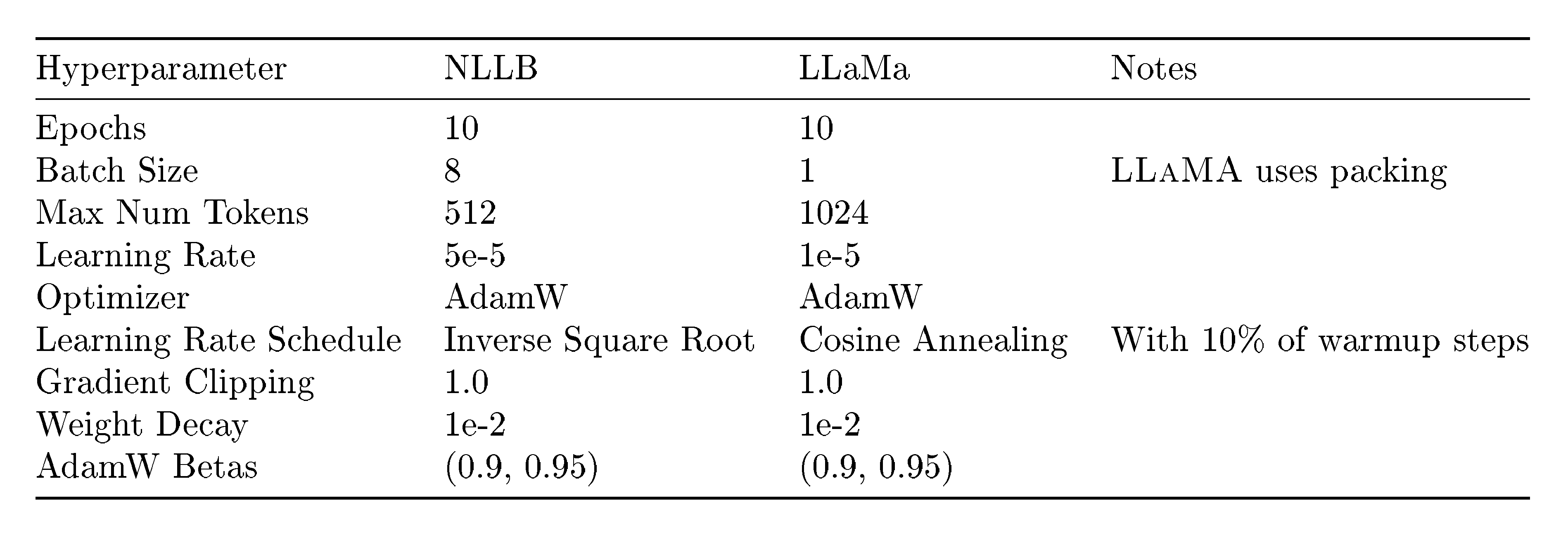

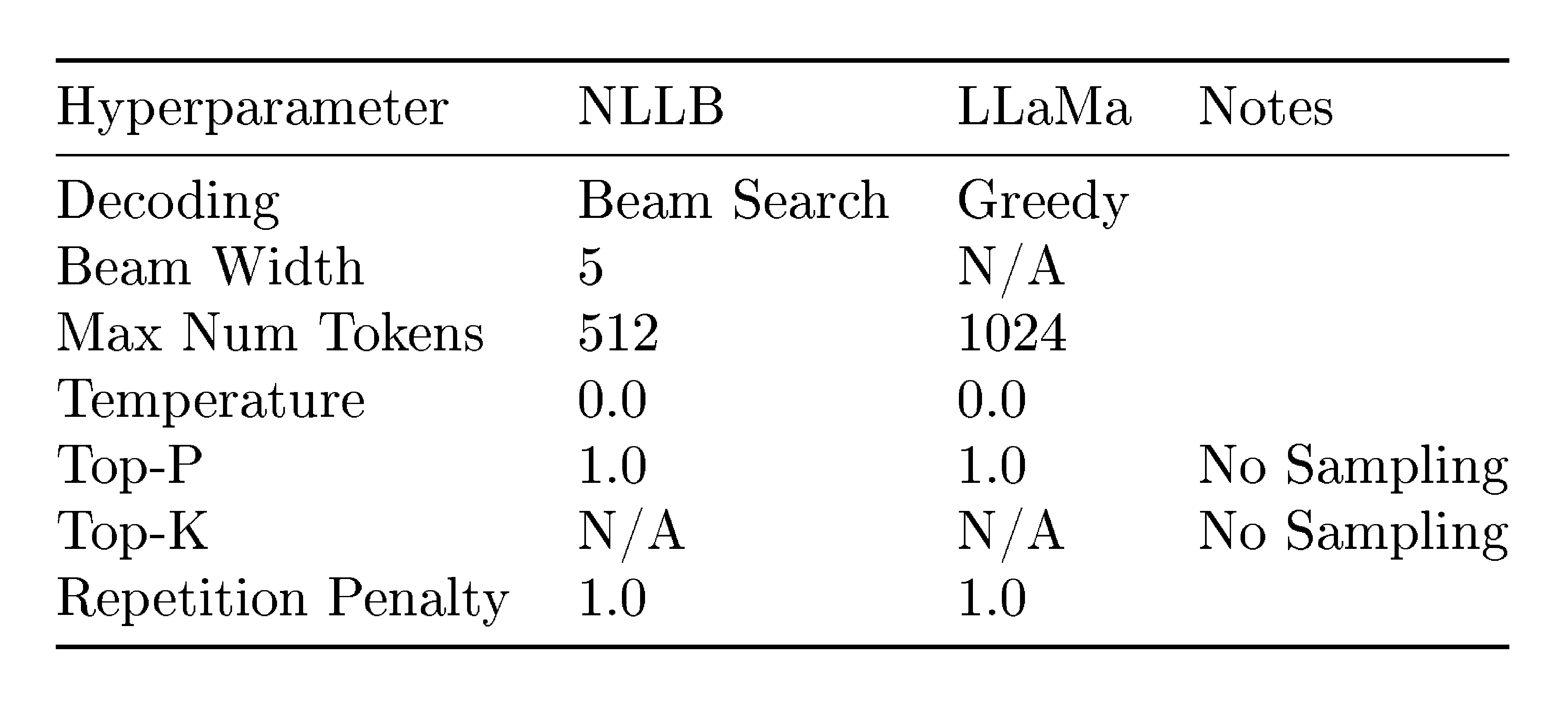

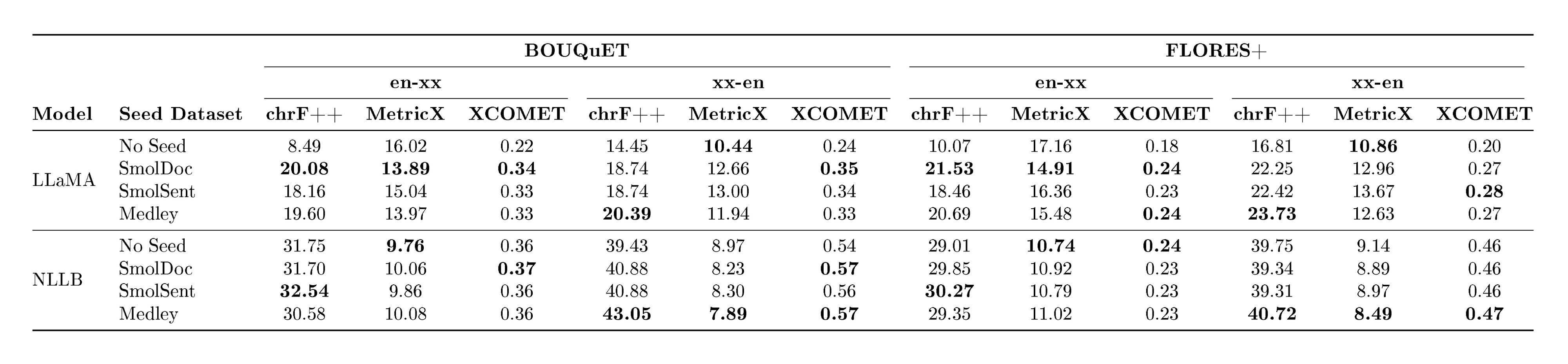

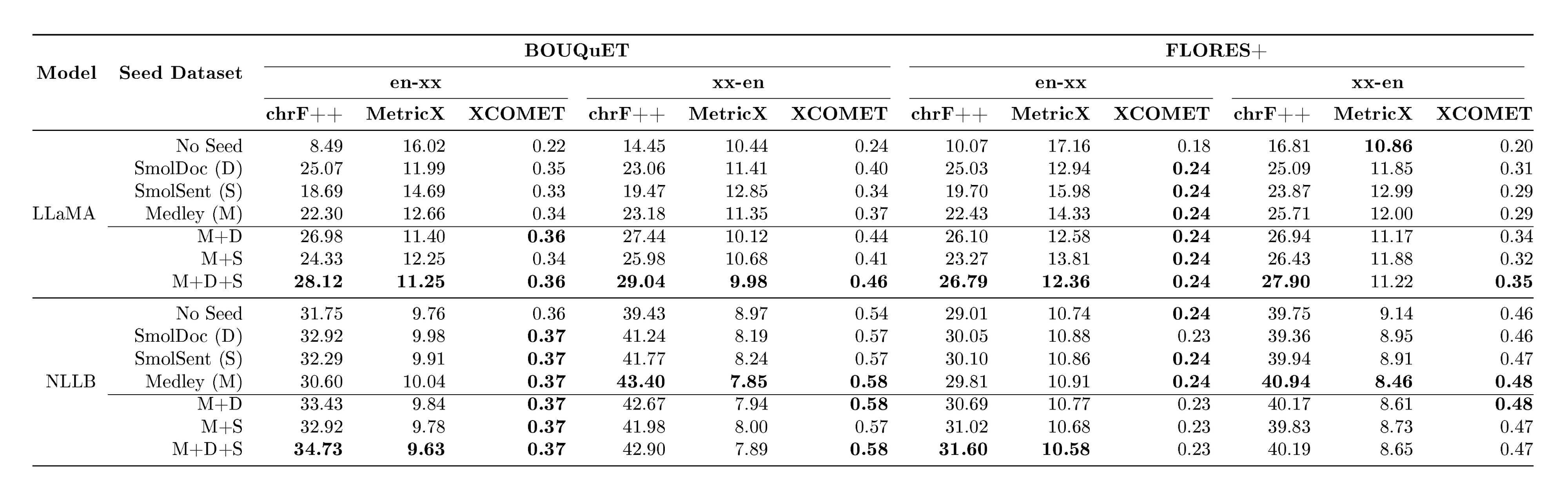

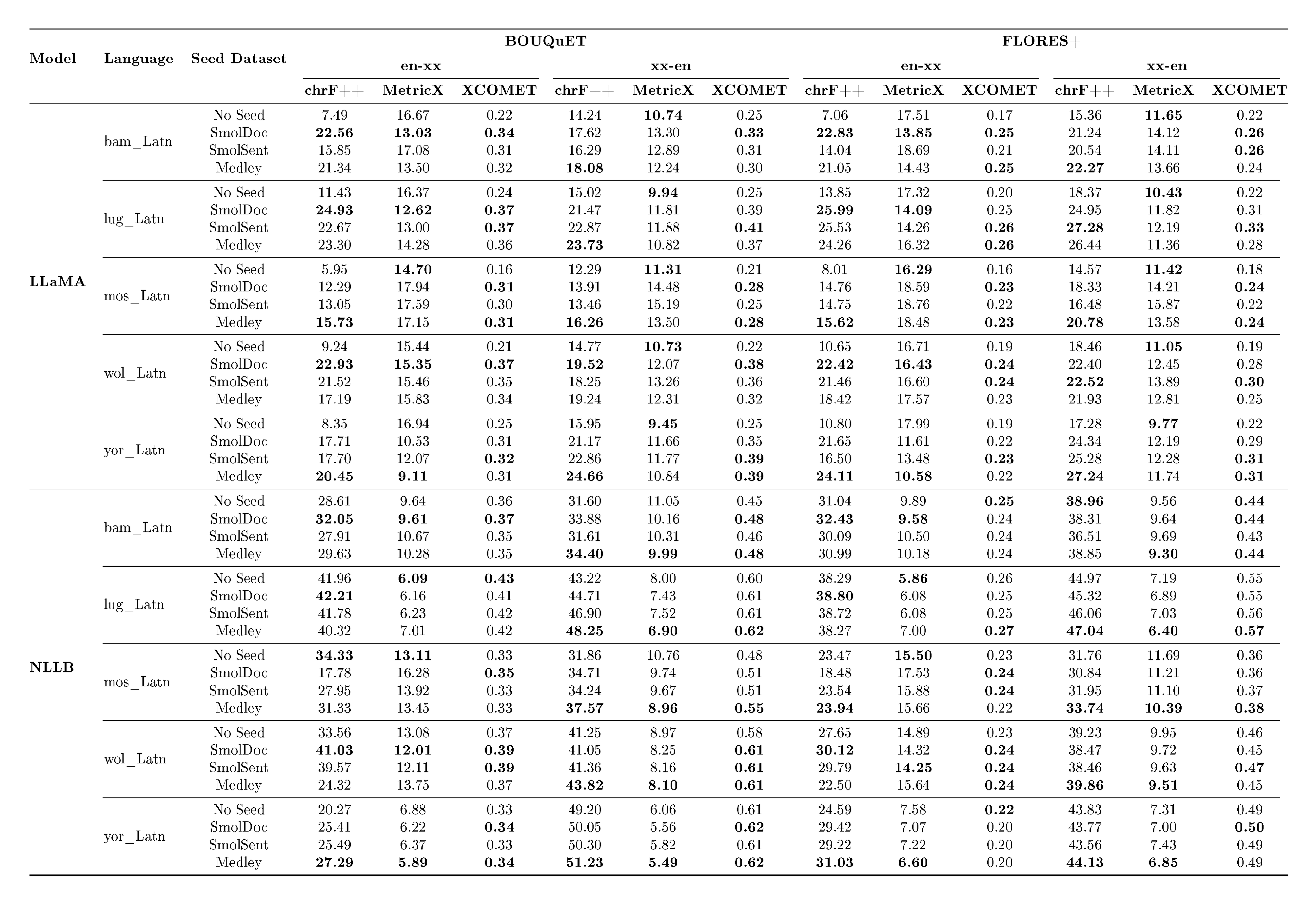

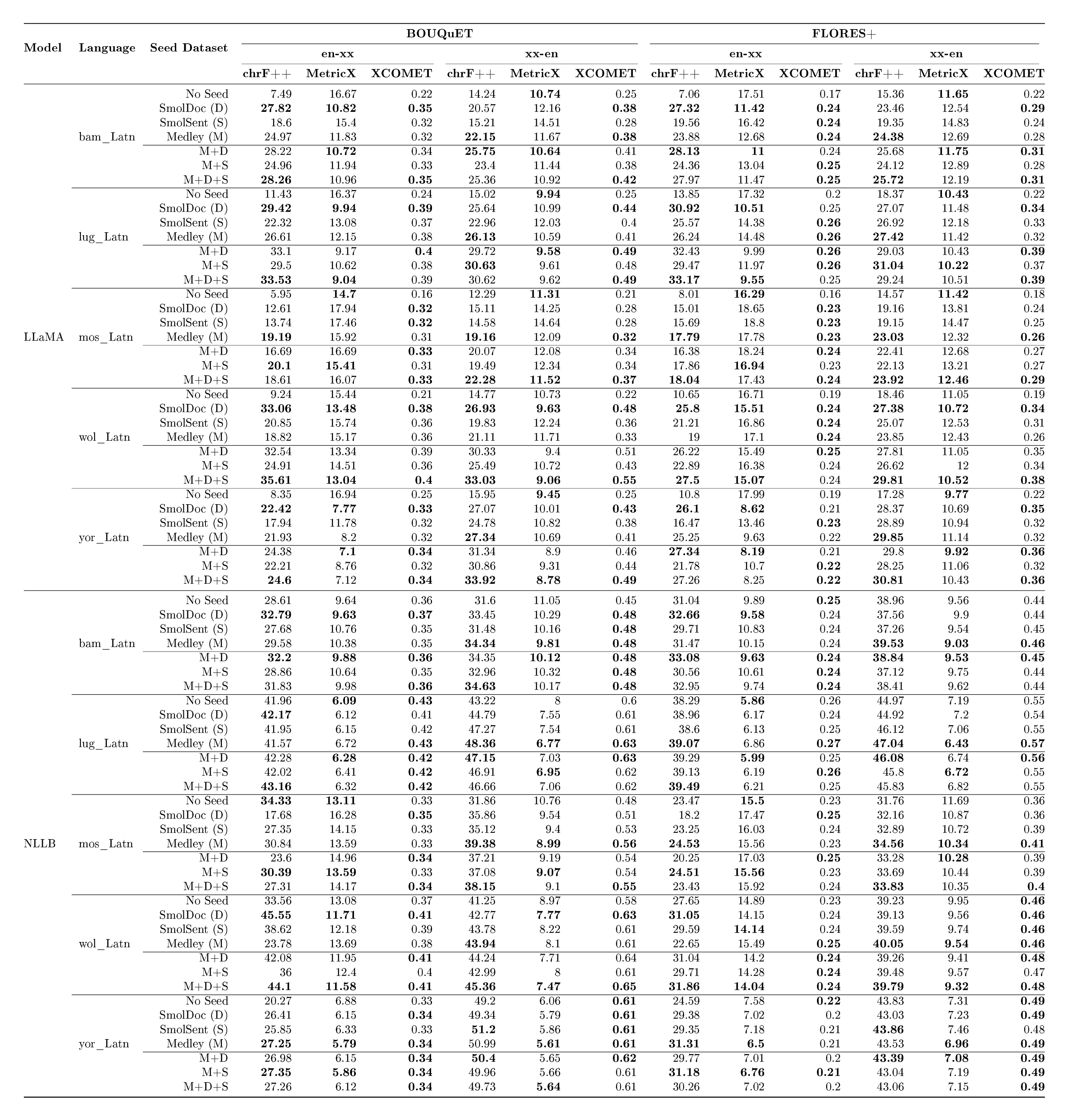

We evaluate the performance of the fine-tuned models on FLoRes+([1]) and BOUQuET ([82]), considering 5 languages that are in the intersection of all the datasets. In particular, we experiment with $\textsc{NLLB-200-3.3B}$ ^12 as a representative of sequence-to-sequence (seq2seq) models ([1]), and $\textsc{LLaMA-3.1-8B-Instruct}$ ^13 representing LLM-based MT, and fine-tune them to obtain language-specific checkpoints, considering into- and out-of-English separately. More precise details about the experiments setup can be found in Appendix A.10.

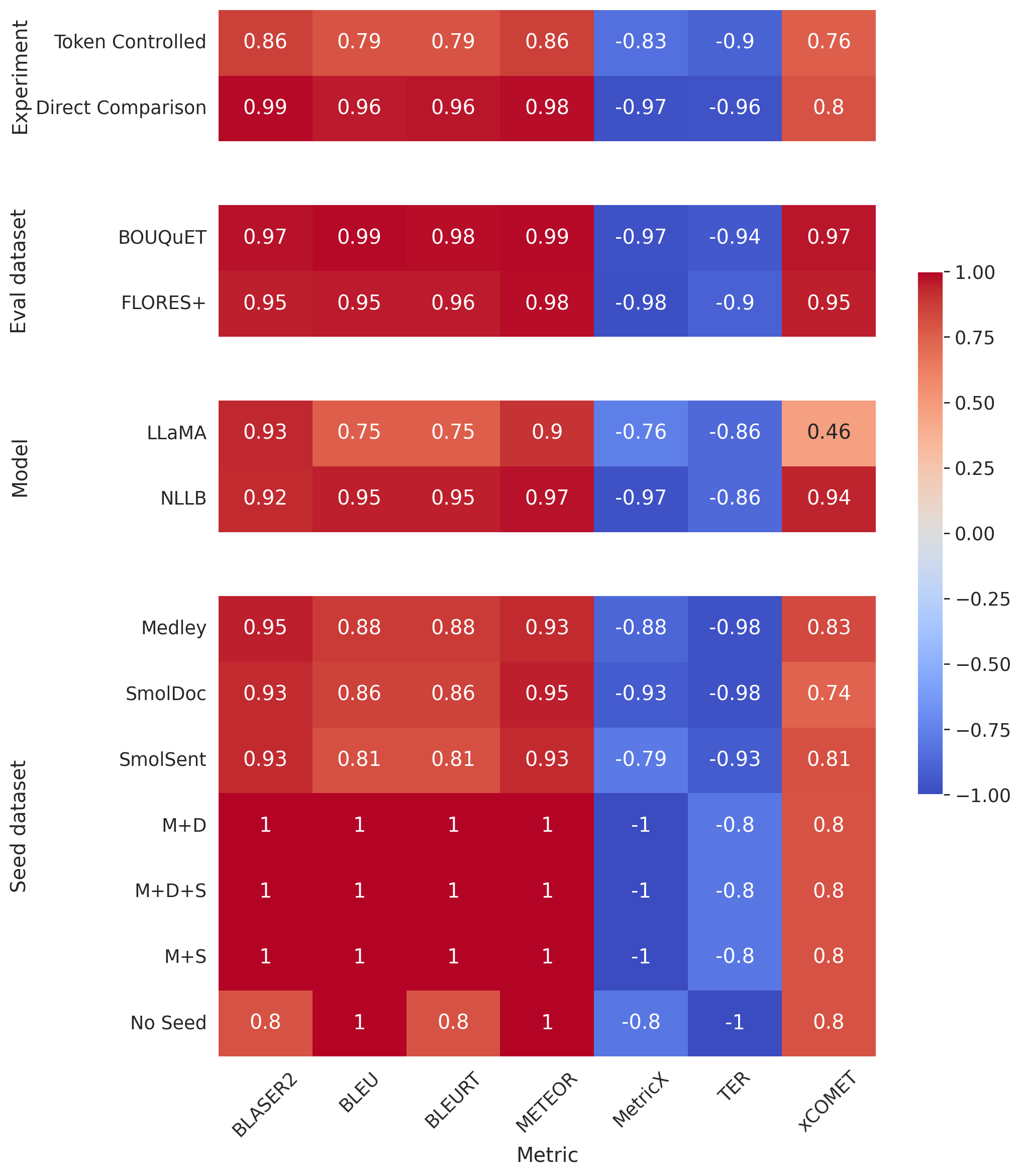

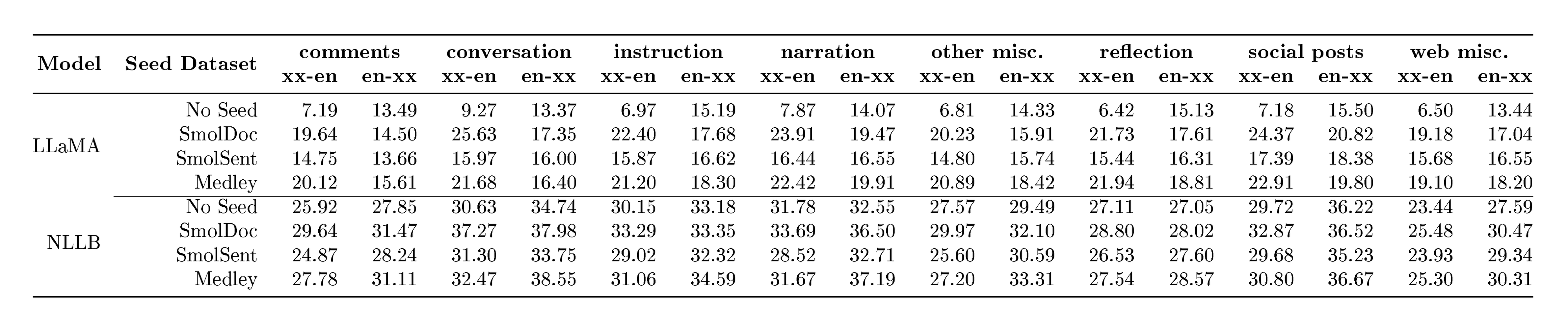

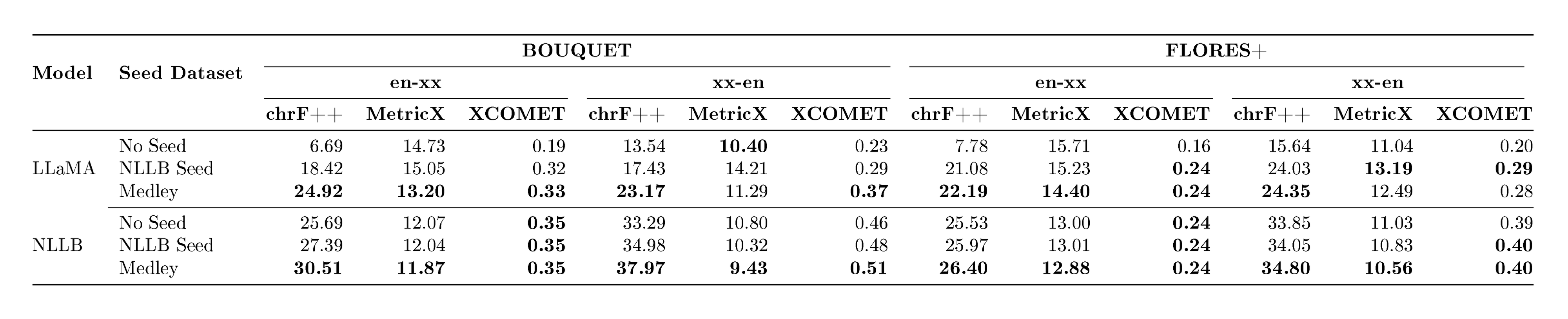

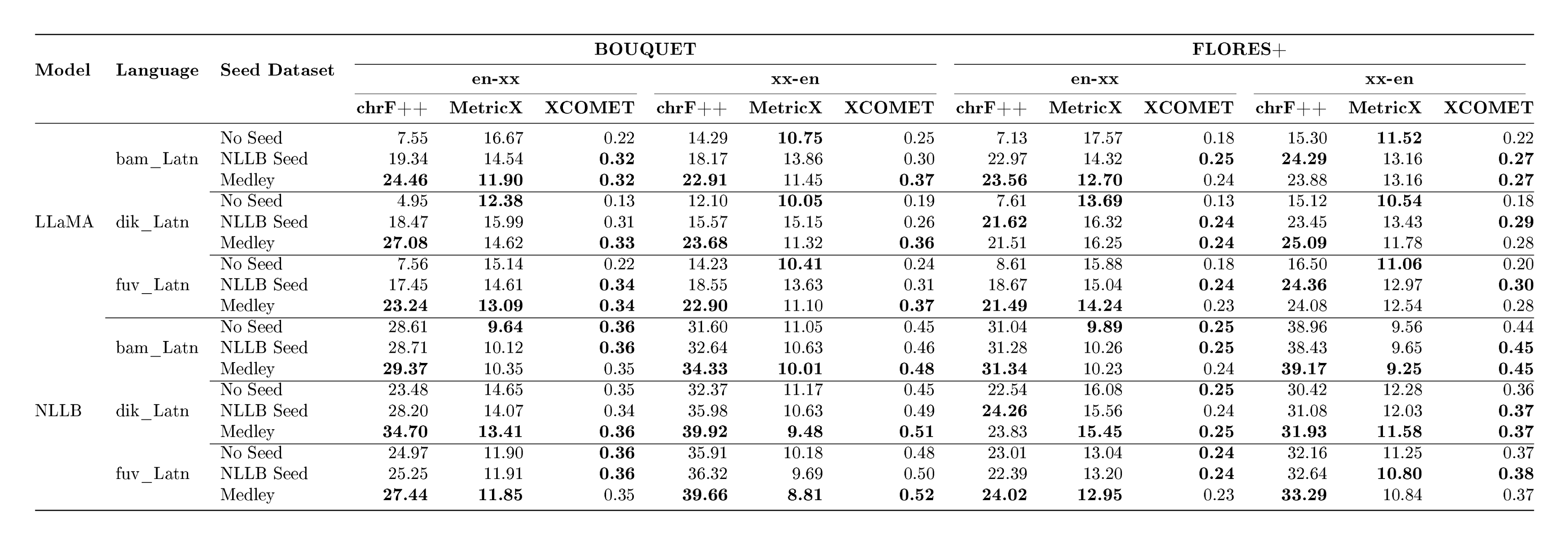

Experiment Results

::: {caption="Table 8: Token-controlled and Direct comparison: reporting number of $\textsc{LLaMA}$ tokens on involved languages (Bambara, Mossi, Wolof, Yoruba, and Ganda, for both evaluation datasets) and ChrF++ numbers."}

:::

The overall results of our experiments are reported in Table 8. A more detailed breakdown of these results can be found in Appendix A.11. We see that MeDLEy matches or outperforms baseline datasets in the token-controlled setting, and shows gains in the into-English direction, while adding MeDLEy to existing datasets yield generally modest gains. We also show similar findings on a comparison on $\textsc{NLLB}$-Seed on a separate set of intersection languages[^14], see Table 46. We confirm these trends over various other MT evaluation metrics such as xCOMET and MetricX ([83, 84]), see Figure 25. This supports a major application of seed datasets, i.e., synthetic data generation from monolingual LRL data via better xx-en systems as discussed in Section 4.3.1.

[^14]: In addition, we also show that $\textsc{NLLB}$-Seed contains a high proportion of difficult-to-translate texts potentially due to technical or obscure terminology (54% as compared to 10.41%), which may hinder lay community translators.

4.3.4 Conclusions and limitations