Dive into Claude Code: The Design Space of Today's and Future AI Agent Systems

Jiacheng Liu$^{1}$, Xiaohan Zhao$^{1}$, Xinyi Shang$^{1, 2}$, Zhiqiang Shen$^{1, \dagger}$

$^{1}$ VILA Lab, Mohamed bin Zayed University of Artificial Intelligence

$^{2}$ University College London

$^{\dagger}$ Corresponding author

Abstract

Claude Code is an agentic coding tool that can run shell commands, edit files, and call external services on behalf of the user. This study describes its comprehensive architecture by analyzing the publicly available TypeScript source code$^{a}$ and further comparing it with OpenClaw, an independent open-source AI agent system that answers many of the same design questions from a different deployment context. Our analysis identifies five human values, philosophies, and needs that motivate the architecture (human decision authority, safety and security, reliable execution, capability amplification, and contextual adaptability) and traces them through thirteen design principles to specific implementation choices. The core of the system is a simple while-loop that calls the model, runs tools, and repeats. Most of the code, however, lives in the systems around this loop: a permission system with seven modes and an ML-based classifier, a five-layer compaction pipeline for context management, four extensibility mechanisms (MCP, plugins, skills, and hooks), a subagent delegation and orchestration mechanism, and append-oriented session storage. A comparison with OpenClaw, a multi-channel personal assistant gateway, shows that the same recurring design questions produce different architectural answers when the deployment context changes: from per-action safety evaluation to perimeter-level access control, from a single CLI loop to an embedded runtime within a gateway control plane, and from context-window extensions to gateway-wide capability registration. We finally identify six open design directions for future agent systems, grounded in recent empirical, architectural, and policy literature. Our GitHub is available at: https://github.com/VILA-Lab/Dive-into-Claude-Code.

[^1]: v2.1.88, link. Disclaimer: All materials used in this work are obtained from publicly available online sources. We have not used any private, confidential, or unauthorized materials, and we do not intend to infringe any copyright or intellectual property rights. The original intellectual property rights to the source code belong to Anthropic.

Correspondence: Zhiqiang Shen (mailto:[email protected])

Executive Summary: The rise of AI-assisted software development has shifted from simple code suggestions to fully agentic systems that autonomously plan, execute, and iterate on tasks like debugging or refactoring. Tools like Claude Code, developed by Anthropic, represent this evolution by allowing AI to run commands, edit files, and interact with external services on a user's behalf. However, while such systems promise to boost productivity, their internal architectures remain opaque, limiting developers' ability to build safer, more effective agents. This lack of insight is especially pressing now, as AI agents enter production workflows, raising questions about safety, reliability, and long-term impacts on human skills amid growing adoption in engineering teams.

This document sets out to map the architecture of Claude Code through a detailed analysis of its source code, identify the human-centered values and principles driving its design, compare it with an open-source alternative called OpenClaw, and highlight open challenges for future AI agent systems. The goal is to provide a blueprint for how production-grade agents balance autonomy with control, informed by real implementation choices.

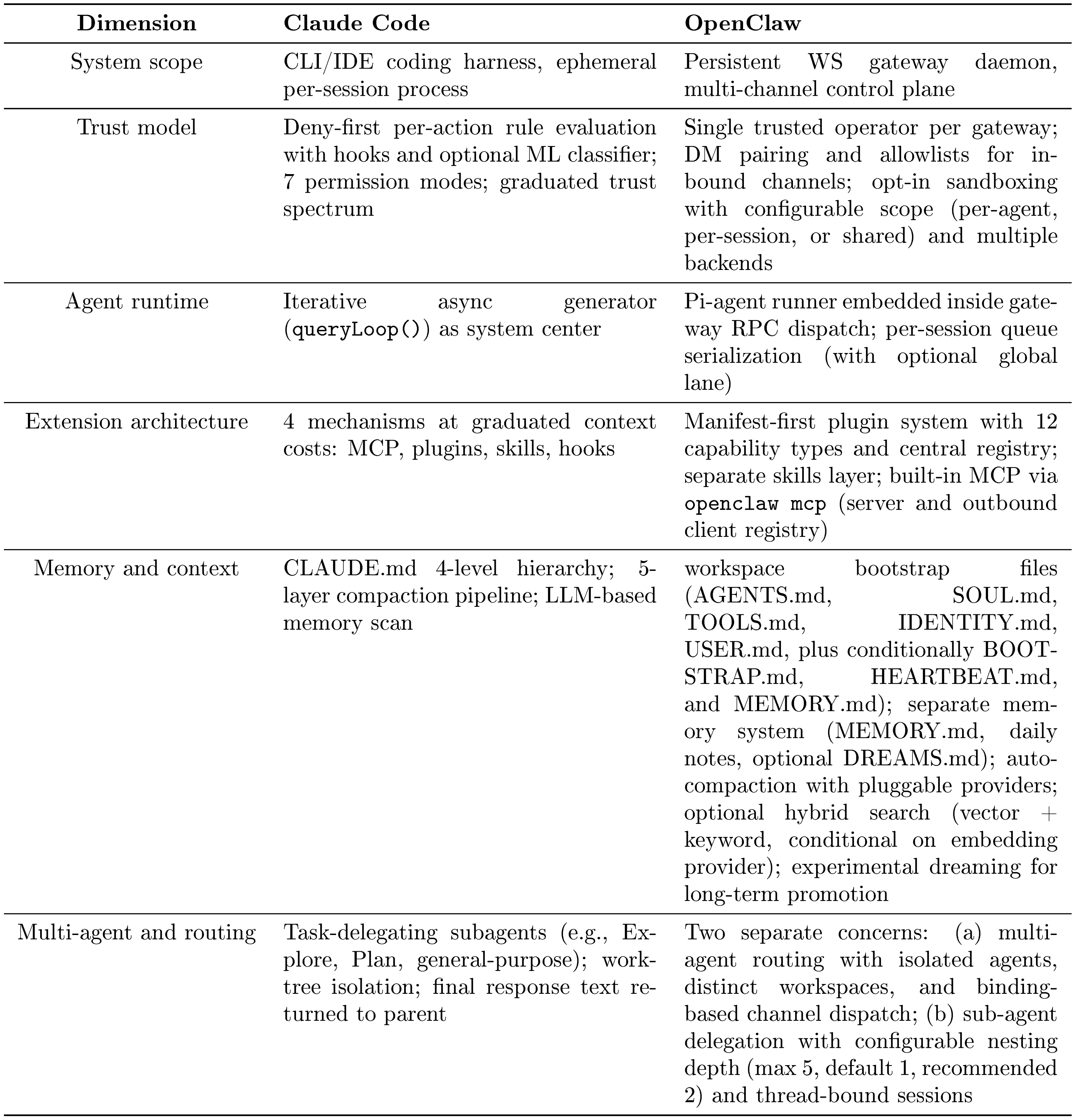

The analysis draws from a reverse-engineering of Claude Code's publicly available TypeScript source code (version 2.1.88), covering about 500,000 lines across 1,800 files, supplemented by Anthropic's official documentation and community insights. It traces key subsystems without running the code, focusing on high-level structures like the agent loop and safety layers. A side-by-side comparison with OpenClaw, a multi-channel personal AI gateway, examines six design dimensions such as trust models and extensibility, using its open-source code for contrast. The study assumes a deployment context of developer machines with bounded computational resources, emphasizing credibility through direct file references and avoidance of unverified inferences.

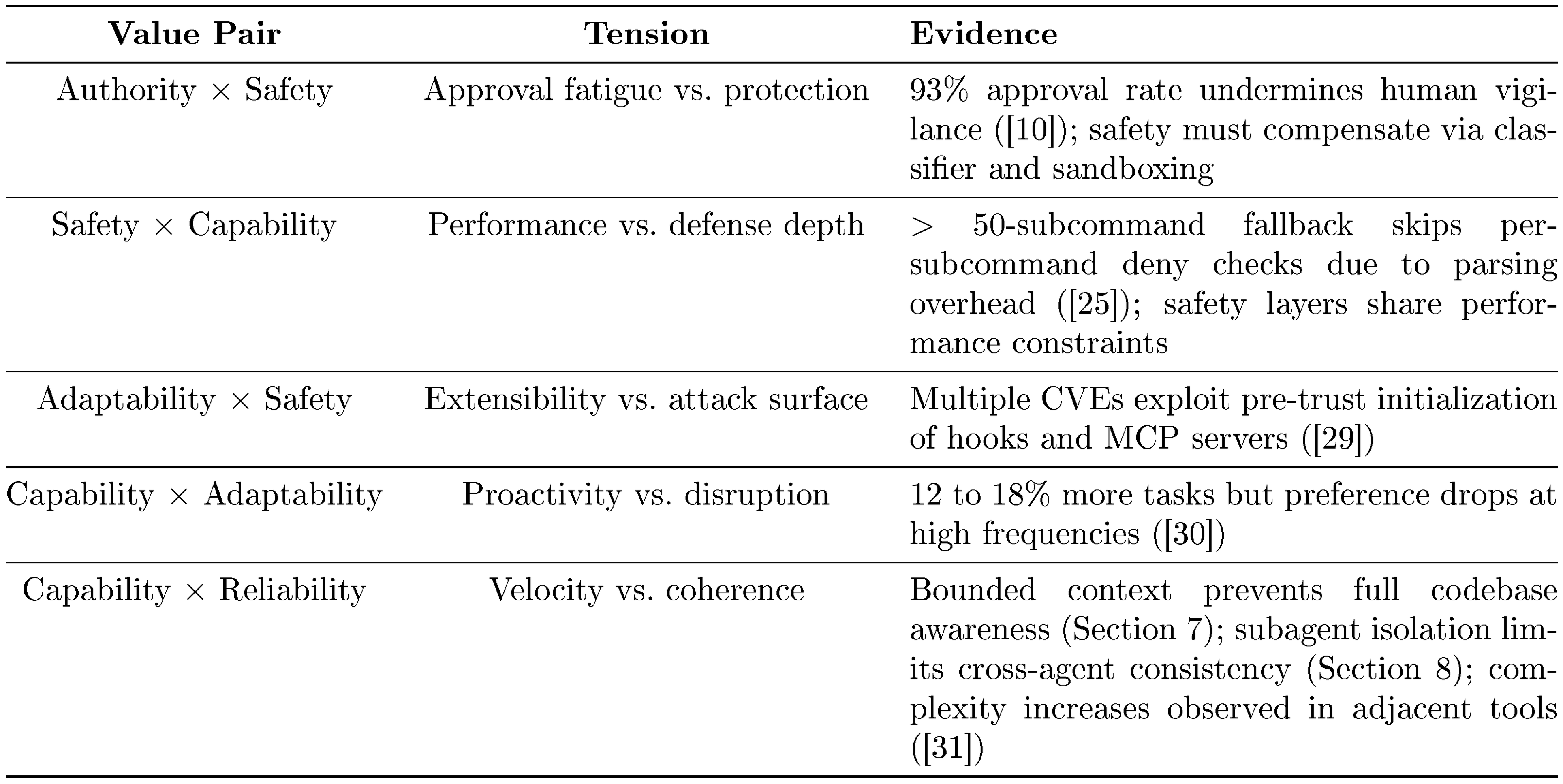

The most important findings center on Claude Code's core design: a simple iterative loop where the AI model proposes actions and the surrounding "harness" executes them, comprising just 1.6% decision logic and 98.4% operational infrastructure. This reflects five guiding human values—prioritizing user control, protecting against harm, ensuring dependable outputs, amplifying what users can achieve, and adapting to specific contexts—which translate into 13 principles like deny-first safety and progressive context management. Key implementations include a seven-layer permission system that blocks risky actions by default (with users approving 93% of prompts, prompting automated classifiers to reduce fatigue), a five-stage pipeline that compresses conversation history to fit the AI's limited memory window (up to 1 million tokens, but often pressured by verbose outputs), four extensibility options (from low-cost hooks to high-integration external servers) that let users customize without bloating the system, isolated subagents for task delegation (e.g., one explores code while another verifies), and append-only storage for auditable session histories. In contrast, OpenClaw, designed for persistent multi-app assistance, favors gateway-wide controls over per-action checks, shared memory across channels, and plugin registries that extend the entire system rather than single sessions—showing how context shapes choices, with Claude Code enabling 27% more ambitious tasks per internal surveys.

These findings mean AI agents like Claude Code excel at short-term task acceleration, enabling new workflows without heavy planning frameworks, but they trade off global awareness for local efficiency—potentially leading to duplicated code or overlooked conventions due to memory limits. Safety layers reduce risks like unauthorized file changes, yet shared performance constraints (e.g., token costs) could weaken them under load, as seen in documented vulnerabilities. Compared to expectations of rigid orchestration in other tools, Claude Code's model-trusting approach amplifies capabilities cost-effectively but highlights a gap: it boosts immediate output (e.g., 20-40% longer sessions over time) at possible risk to long-term developer comprehension, with studies showing AI users scoring 17% lower on code understanding tests and contributing to rising code complexity by 40%.

Leaders should prioritize investments in harness infrastructure—such as layered safety and compaction tools—over complex decision scaffolds, as these yield reliable gains with capable models. For next steps, conduct pilot evaluations of Claude Code in teams to measure outcomes like error rates and skill retention, then prototype enhancements like cross-session memory for better continuity and evaluation hooks to catch "silent" failures (78% of AI issues per industry reports). Explore governance integrations for compliance with emerging rules like the EU AI Act, weighing trade-offs between proactive agents (boosting completion by 12-18%) and user control. If results confirm comprehension risks, design features that promote human oversight, such as comprehension checks during delegation.

While the analysis confidently maps the architecture (verified against code), uncertainties include build variations from feature flags and untested runtime behaviors, plus a focus on one system that may not generalize. Readers should verify with live deployments before decisions, treating this as a strong foundation rather than exhaustive proof.

1. Introduction

Section Summary: AI-assisted software development has progressed from simple autocomplete tools like GitHub Copilot to advanced agentic systems like Anthropic's Claude Code, which can independently plan, execute tasks, and iterate on code changes using an "agentic loop." This study examines Claude Code's architecture through its source code to reveal how it handles key challenges in safety, context management, extensibility, and more, drawing on design principles inspired by human values and highlighting new workflows that enable tasks engineers might not otherwise attempt. By contrasting it with the open-source OpenClaw system and identifying open questions for future agents, the work aims to guide the creation of more capable and principled AI tools.

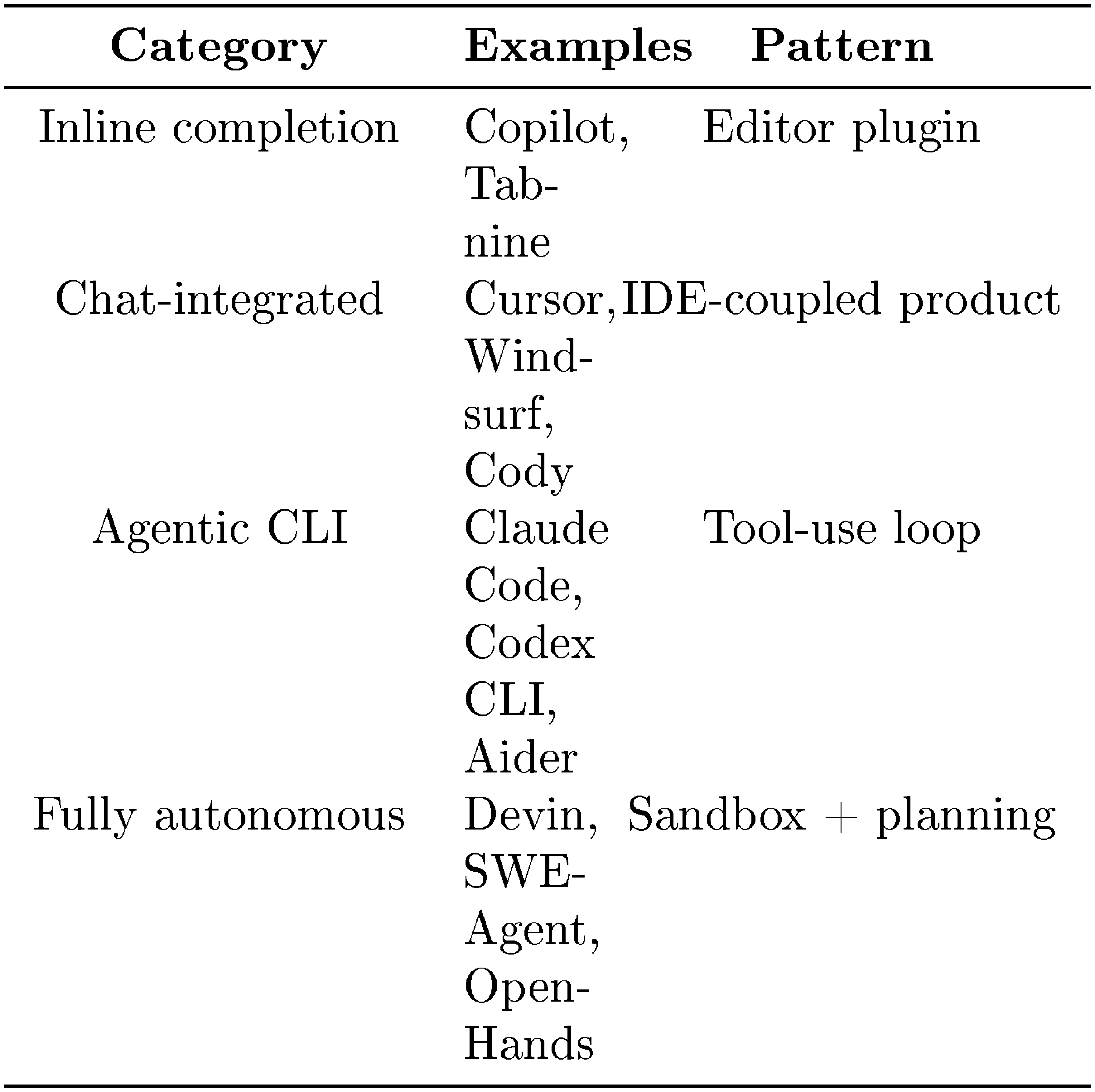

AI-assisted software development has evolved from autocomplete-style tools such as GitHub Copilot ([1]), through IDE-integrated assistants like Cursor ([2]), to fully agentic systems that autonomously plan multi-step modifications, execute shell commands, read and write files, and iterate on their own outputs. Claude Code ([3]) is an agentic coding tool released by Anthropic ([4]). Its official documentation describes an "agentic loop" that plans and executes actions toward accomplishing a goal and can call tools, evaluate results, and continue until the task is done ^2. This shift from suggestion to autonomous action introduces architectural requirements that have no counterpart in completion-based tools. These requirements define a design space, a set of recurring questions spanning topics such as safety, context management, extensibility, and delegation that every coding agent must navigate. This study uses source-level analysis of Claude Code to show how one production system answers these questions.

Despite growing adoption, Anthropic publishes user-facing documentation for Claude Code but not detailed architectural descriptions. This study uses source code analysis to describe architectural design decisions. Anthropic's internal survey of 132 engineers and researchers ([5]) reports that about 27% of Claude Code-assisted tasks were work that would not have been attempted without the tool, suggesting that the architecture enables qualitatively new workflows rather than merely accelerating existing ones.

In this work, we first identify five human values/philosophies and thirteen design principles that motivate the architecture (Section 2), then organize the analysis in three parts:

- Design-space analysis. We identify recurring design questions (where reasoning lives, how the iteration loop is structured, what safety posture to adopt, how the extension surface is partitioned, how context is managed, how work is delegated across subagents, and how sessions persist) and analyze Claude Code's answers through a 7-component high-level structure and a 5-layer subsystem architecture, tracing each choice to specific source files (Section 3). The analysis aims to build a deep understanding of the system mechanism, with the goal of informing the design of better and more powerful agent systems.

- Architectural contrast with OpenClaw. Beyond analyzing Claude Code itself, we also compare its design philosophy with that of open-source agent system OpenClaw ([6]), a multi-channel personal assistant gateway, across six design dimensions to show how the same recurring questions produce different answers under different deployment contexts (Section 10), in order to highlight both the common principles and the key differences between commercial and open-source software. This comparison helps reveal how deployment setting, product goals, safety requirements, and user assumptions shape architectural choices in different ways. By examining where these systems converge and where they diverge, our study aims to provide useful guidance and practical insights for the design of future, more capable agent systems.

- Open directions for future agent systems. Building on the design-space analysis and the OpenClaw contrast, Section 12 identifies six open directions spanning the observability-evaluation gap, cross-session persistence, harness boundary evolution, horizon scaling, governance, and the evaluative lens, each drawing on empirical, architectural, and policy literature. Used as an evaluative lens, our study also reveals an open question: while the Claude Code agent system substantially amplifies the short-term capabilities of programmers and end users, it offers limited mechanisms that explicitly support long-term human improvement, deeper understanding, and sustained codebase coherence.

The core agent loop is a while-true cycle with state management. The surrounding subsystems for safety, extensibility, context management, delegation, and persistence make up the bulk of the implementation. Source-level analysis[^3] allows us to identify design choices, subsystem boundaries, and implementation trade-offs directly from the system itself rather than inferring them solely from product descriptions.

[^3]: Our analysis is grounded primarily in the source code, supplemented by official Anthropic documentation and selected community analysis, Appendix B details the evidence base and methodology.

Running example.

To keep the architecture concrete, we trace the task "Fix the failing test in auth.test.ts" through Section 3, Section 4, Section 5, Section 6, Figure 6, Section 8, and Section 9. This example illustrates how a seemingly simple user request activates multiple architectural layers, including tool invocation, permission checks, context selection, iterative repair, delegation, and session persistence.

Paper organization.

Section 2 identifies the human values and design principles that motivate the architecture. Section 3 introduces the high-level architecture and the design questions it answers. Section 4, Section 5, Section 6, Figure 6, Section 8, and Section 9 each analyze a major subsystem's design choices. Section 10 contrasts the analysis with OpenClaw, Section 11 provides discussion, and Section 12 surveys open questions for future agent systems. Section 13 and Section 14 then cover related work and conclusions. Appendix B describes the evidence base and methodology.

2. Design Philosophies, Design Principles and Architectural Motivations

Section Summary: This section explores the human-centered motivations behind Claude Code's architecture, highlighting five core values: keeping ultimate decision-making in human hands for informed control, ensuring safety and privacy to protect against risks even during lapses, delivering reliable and verifiable execution that aligns with user intent over time, amplifying human capabilities to enable new kinds of work with minimal effort, and adapting flexibly to individual user contexts as trust builds. These values guide thirteen design principles that address common challenges in building coding agents, such as balancing autonomy with oversight. The principles contrast with alternatives like rigid rule-based systems or isolated execution environments, setting the stage for deeper analysis of specific features in later sections.

Production coding agents are built by humans, for humans, and the architectural decisions they embed reflect what their creators believe matters. This section identifies the human values that motivate Claude Code's design, traces them through recurring design principles, and frames the design-space questions that organize the analysis in Section 3, Section 4, Section 5, Section 6, Figure 6, Section 8, and Section 9.

Anthropic's framework for safe agents states a central tension: "Agents must be able to work autonomously; their independent operation is exactly what makes them valuable. But humans should retain control over how their goals are pursued" ([7]). Claude's Constitution resolves this not through rigid decision procedures but by cultivating "good judgment and sound values that can be applied contextually" ([8]). These commitments, together with empirical findings about how developers actually use the tool ([5, 9]), point to five human values that shape the architecture.

2.1 Five Values and Philosophies

Human Decision Authority.

The human retains ultimate decision authority over what the system does, organized through a principal hierarchy (Anthropic, then operators, then users) that formalizes who holds authority over what ([8]). The system is designed so that humans can exercise informed control: they can observe actions in real time, approve or reject proposed operations, interrupt compatible in-progress operations, and audit after the fact. When Anthropic found that users approve 93% of permission prompts ([10]), the response was not to add more warnings but to restructure the problem: defined boundaries (sandboxing, auto-mode classifiers) within which the agent can work freely, rather than per-action approvals that users stop reviewing once habituated ([11]).

Safety, Security, and Privacy.

The system protects humans, their code, their data, and their infrastructure from harm, even when the human is inattentive or makes mistakes. This is distinct from Human Decision Authority: where authority is about the human's power to choose, safety is about the system's obligation to protect even when that power lapses. Anthropic's safe-agents framework separately identifies securing agent interactions and protecting privacy across extended interactions as core commitments ([7]). The auto-mode threat model ([10]) explicitly targets four risk categories: overeager behavior, honest mistakes, prompt injection, and model misalignment.

Reliable Execution.

The agent does what the human actually meant, stays coherent over time, and supports verifying its work before declaring success. This value spans both single-turn correctness (did it interpret the request faithfully?) and long-horizon dependability (does it remain coherent across context window boundaries, session resumption, and multi-agent delegation?). Anthropic's product documentation ([12]) describes a three-phase loop that the agent repeats until the task is complete: gather context, take action, and verify results. The agent design guidance ([13]) further emphasizes that "ground truth from the environment" at each step assesses progress. The harness-design guidance ([14]) likewise notes that "agents tend to respond by confidently praising the work, " even when quality is mediocre, motivating separation of generation from evaluation.

Capability Amplification.

The system materially increases what the human can accomplish per unit of effort and cost. Anthropic's internal survey ([5]), discussed in Section 1, suggests that the architecture enables qualitatively new workflows, not merely faster existing ones: approximately 27% of tasks represented work that would not otherwise have been attempted. The system is described by its creators as "a Unix utility rather than a traditional product, " built from the smallest building blocks that are "useful, understandable, and extensible" ([15]). The architecture invests in deterministic infrastructure (context management, tool routing, recovery) rather than decision scaffolding (explicit planners or state graphs), on the premise that increasingly capable models benefit more from a rich operational environment than from frameworks that constrain their choices.

Contextual Adaptability.

The system fits the user's specific context (their project, tools, conventions, and skill level) and the relationship improves over time. The extension architecture (CLAUDE.md, skills, MCP, hooks, plugins) provides configurability at multiple levels of context cost (Section 6 and Figure 6). Longitudinal data ([9]) shows that the human-agent relationship evolves: auto-approve rates increase from approximately 20% at fewer than 50 sessions to over 40% by 750 sessions. This pattern, described as autonomy that is "co-constructed by the model, the user, and the product, " means the system is designed for trust trajectories rather than fixed trust states. MCP's donation to the Linux Foundation's Agentic AI Foundation ([16]) reflects the ecosystem dimension of this value.

2.2 Design Principles

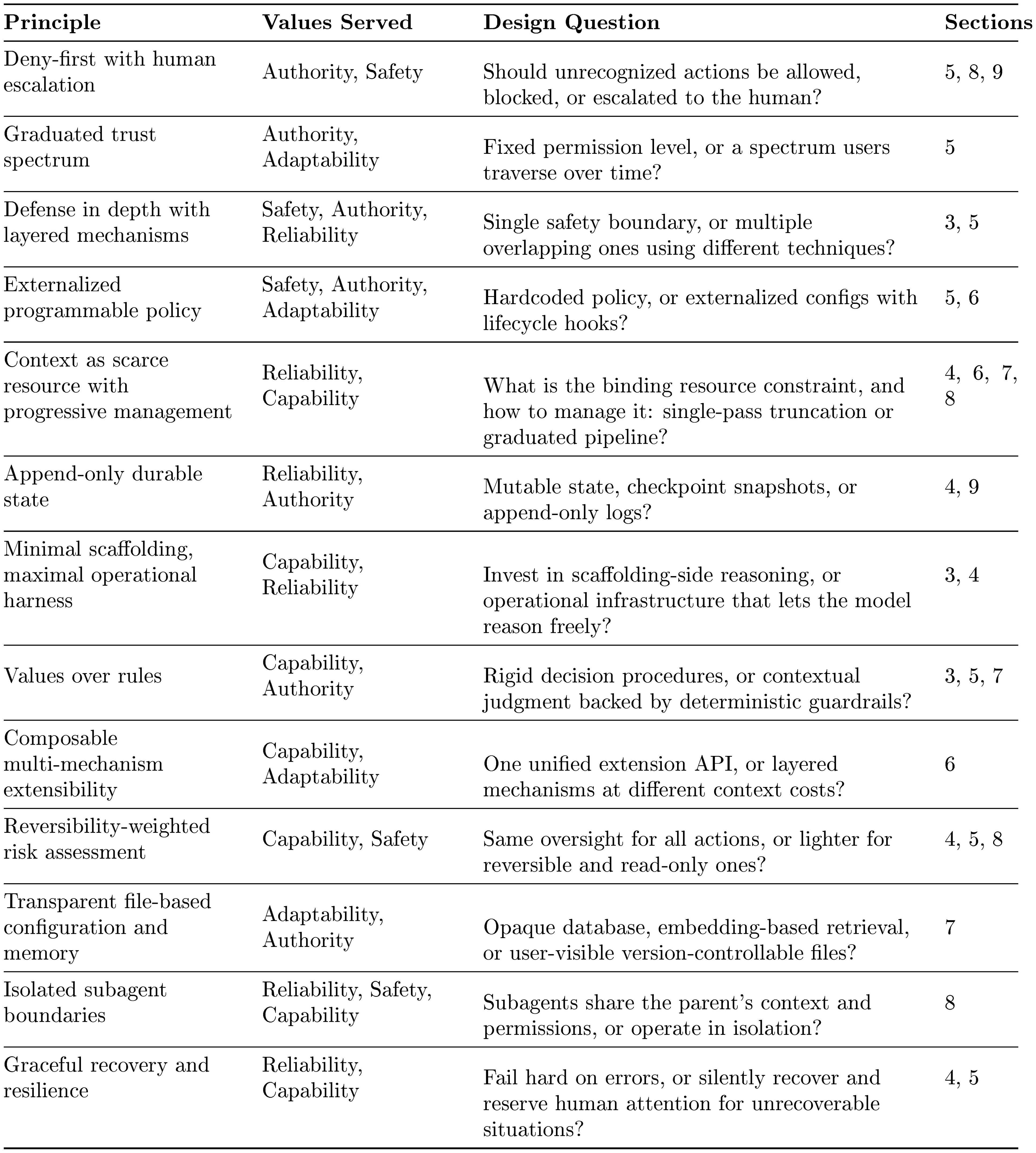

These values are operationalized through thirteen design principles, each answering a recurring question that production coding agents must resolve. Table 1 summarizes the principles; subsequent sections (Section 3–Section 9) trace each through specific implementation choices.

::: {caption="Table 1: Design principles, the values they serve, and the design-space question each answers. Principles map to multiple values; implementations appear in the sections indicated."}

:::

These principles can be read against three major alternative design families. First, rule-based orchestration: frameworks such as LangGraph ([17]) encode decision logic as explicit state graphs with typed edges, choosing scaffolding over minimal harness. Second, container-isolated execution: SWE-Agent and OpenHands ([18, 19]) rely on Docker isolation rather than layered policy enforcement. Third, version-control-as-safety: tools like Aider ([20]) use Git rollback as the primary safety mechanism rather than deny-first evaluation. Claude Code's principle set is distinctive in combining minimal decision scaffolding with layered policy enforcement, values-based judgment with deny-first defaults, and progressive context management with composable extensibility.

2.3 From Values to Architecture

Each value traces through its principles to specific architectural decisions:

- Human Decision Authority motivates deny-first evaluation, the graduated trust spectrum, append-only state (auditable history), externalized programmable policy, and values-over-rules (Section 5, Section 9, Section 6, and Figure 6).

- Safety, Security, and Privacy motivates defense in depth, deny-first defaults, reversibility-weighted assessment, externalized policy, and isolated subagent boundaries (Section 5 and Section 8).

- Reliable Execution motivates context-as-scarce-resource, append-only durable state, graceful recovery, isolated subagent boundaries, and defense in depth (Section 4, Figure 6, Section 8, and Section 9).

- Capability Amplification motivates minimal scaffolding, composable extensibility, reversibility-weighted risk, context management, and graceful recovery (Section 4, Section 6, and Section 5).

- Contextual Adaptability motivates transparent file-based memory, composable extensibility, the graduated trust spectrum, and externalized programmable policy (Figure 6, Section 6, and Section 5).

These mappings also reveal what the architecture does not do: it does not impose explicit planning graphs on the model's reasoning, does not provide a single unified extension mechanism, and does not restore all session-scoped trust-related state across resume. These absences are consistent with the principle set above.

2.4 An Evaluative Lens: Long-term Capability Preservation

The five values above describe what the architecture is designed to serve. This paper also applies a sixth concern, whether the architecture preserves long-term human capability, as an evaluative lens. This concern is real: Anthropic's own study of 132 engineers and researchers ([5]) documents a "paradox of supervision" in which overreliance on AI risks atrophying the skills needed to supervise it, and independent research ([21]) finds that developers in AI-assisted conditions score 17% lower on comprehension tests. However, this concern is not prominently reflected as a design driver in the architecture or in Anthropic's stated design values. We therefore treat it not as a co-equal value but as a cross-cutting concern: a question applied across all five values in Section 11, asking whether short-term amplification comes at the cost of long-term human understanding, codebase coherence, and the developer pipeline.

3. Architecture Overview

Section Summary: Claude Code's architecture addresses key design challenges in building production coding agents, such as where reasoning occurs, how many execution systems to use, safety approaches, and primary resource limits. In this setup, the AI model handles reasoning and suggests actions through structured requests, while a secure harness executes them after permission checks, using a single unified loop for all interfaces to ensure consistency and security. The system emphasizes a deny-first safety model with multiple protective layers, treats the AI's context window as the main bottleneck managed by various compression techniques, and illustrates these choices through a running example of fixing a failing test file.

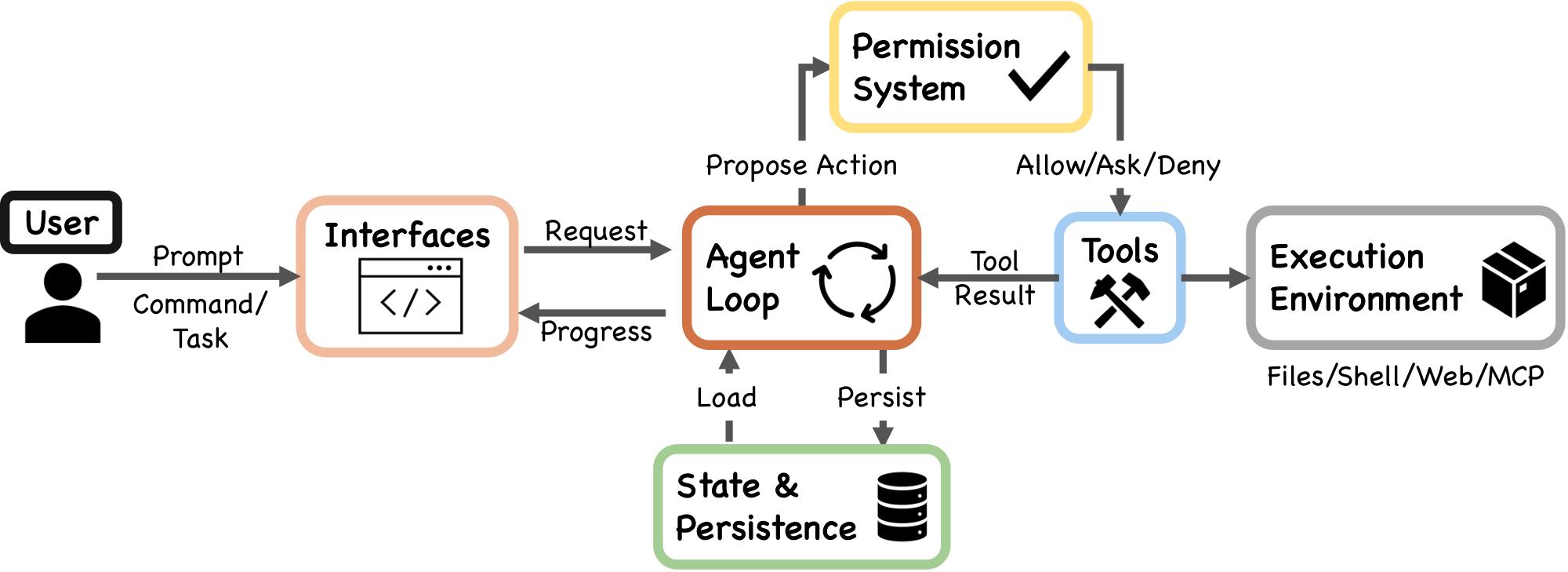

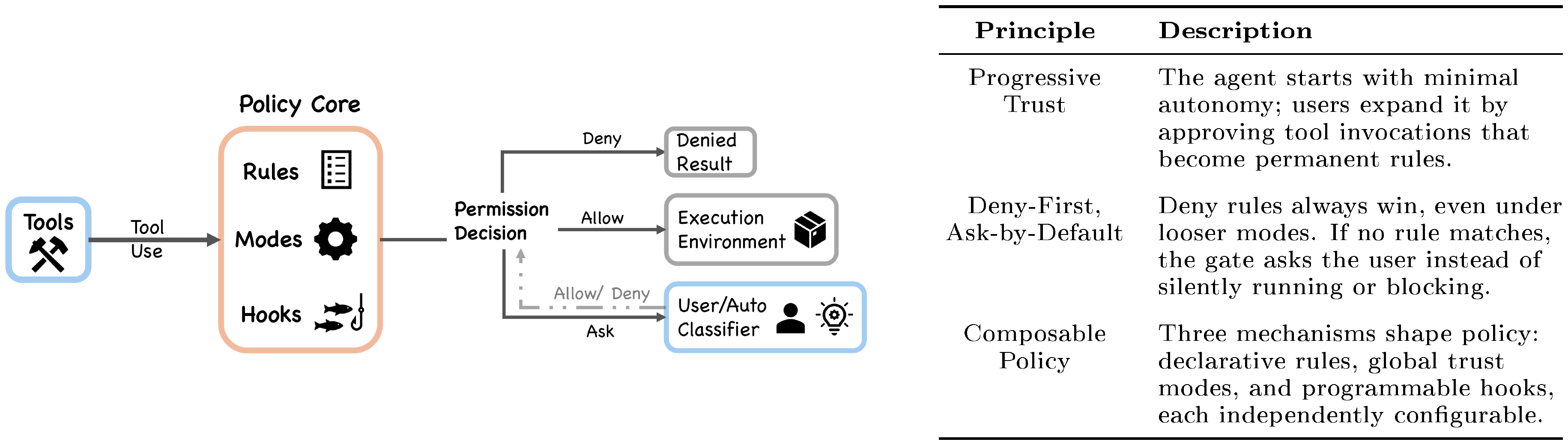

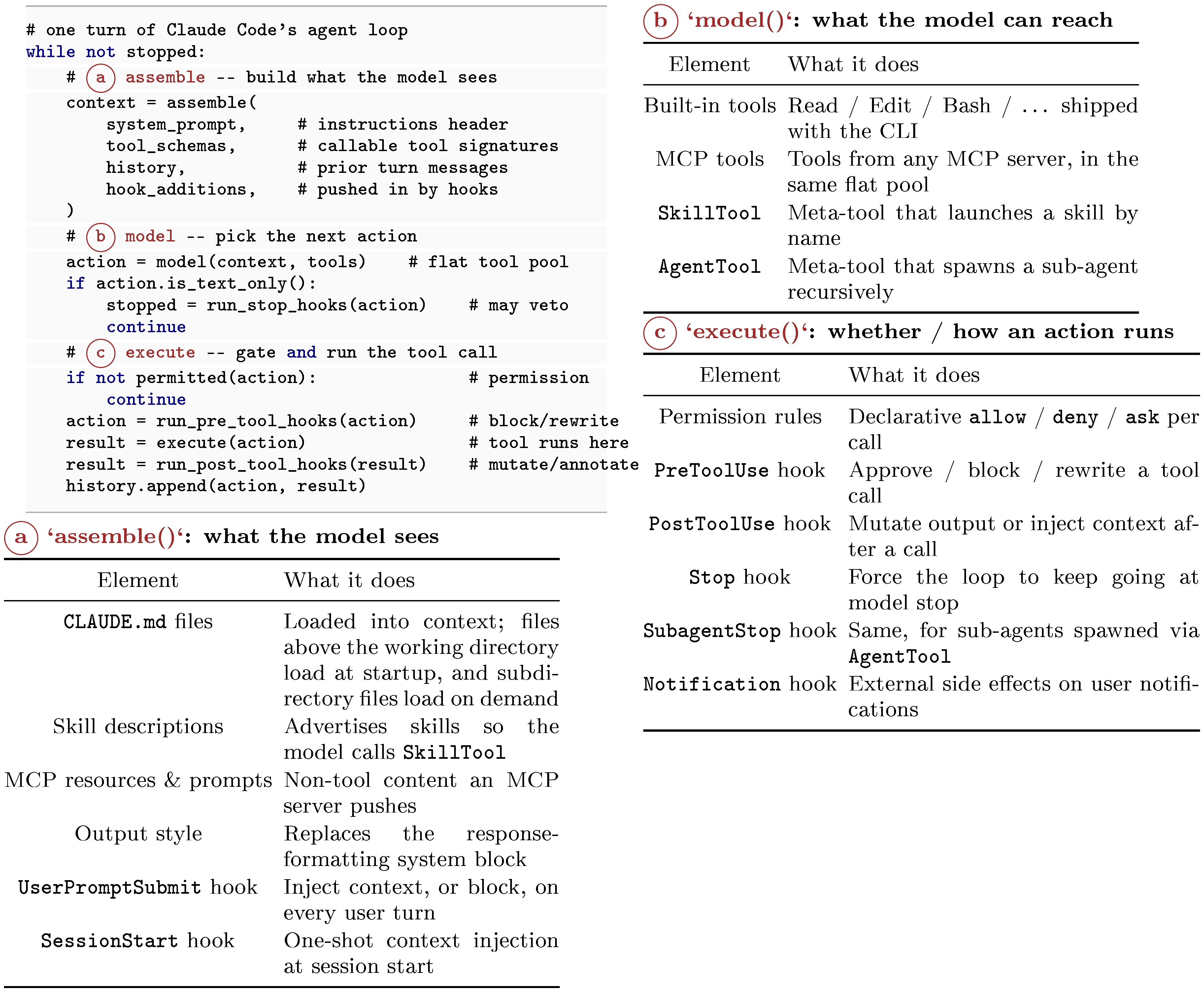

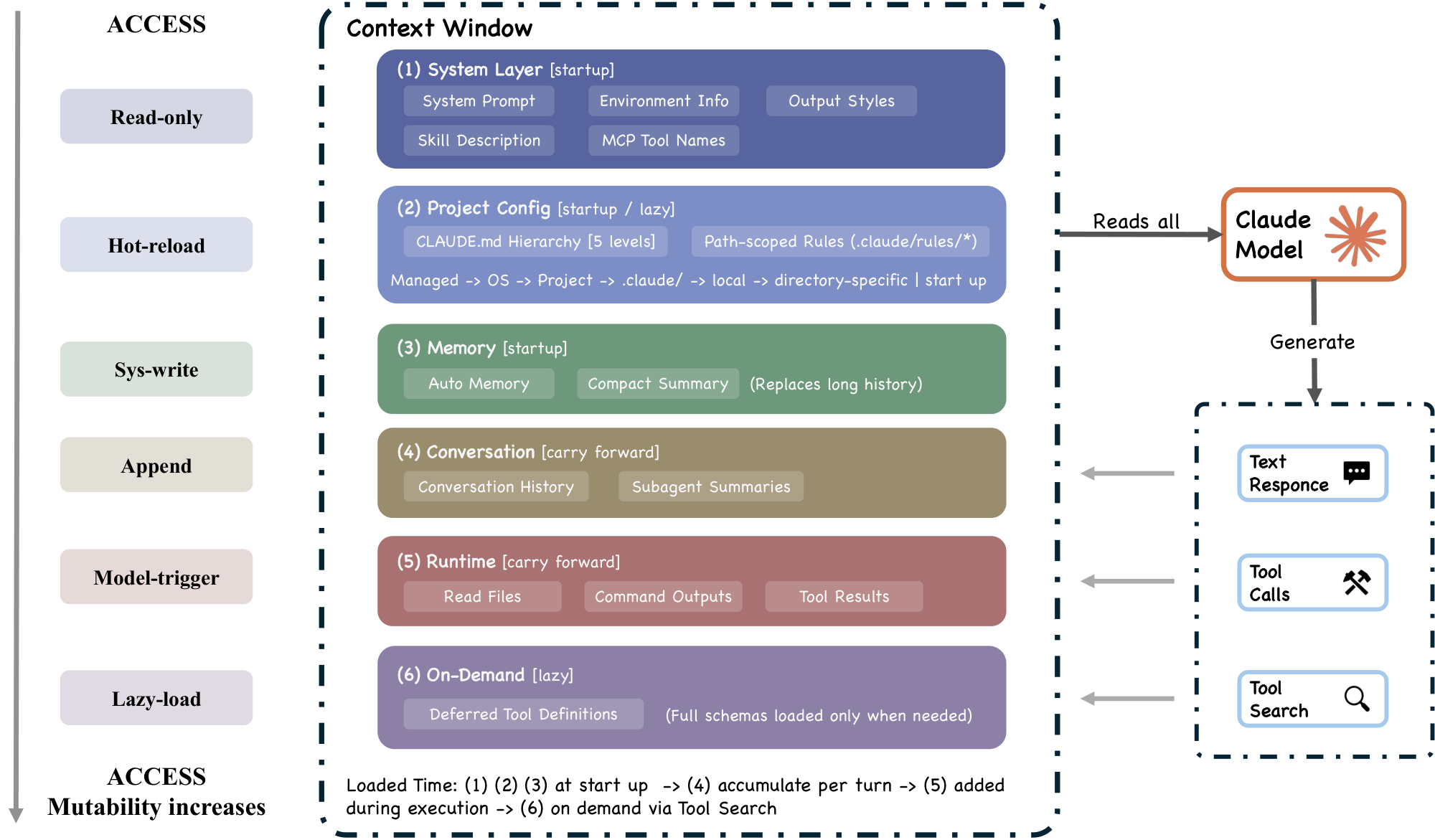

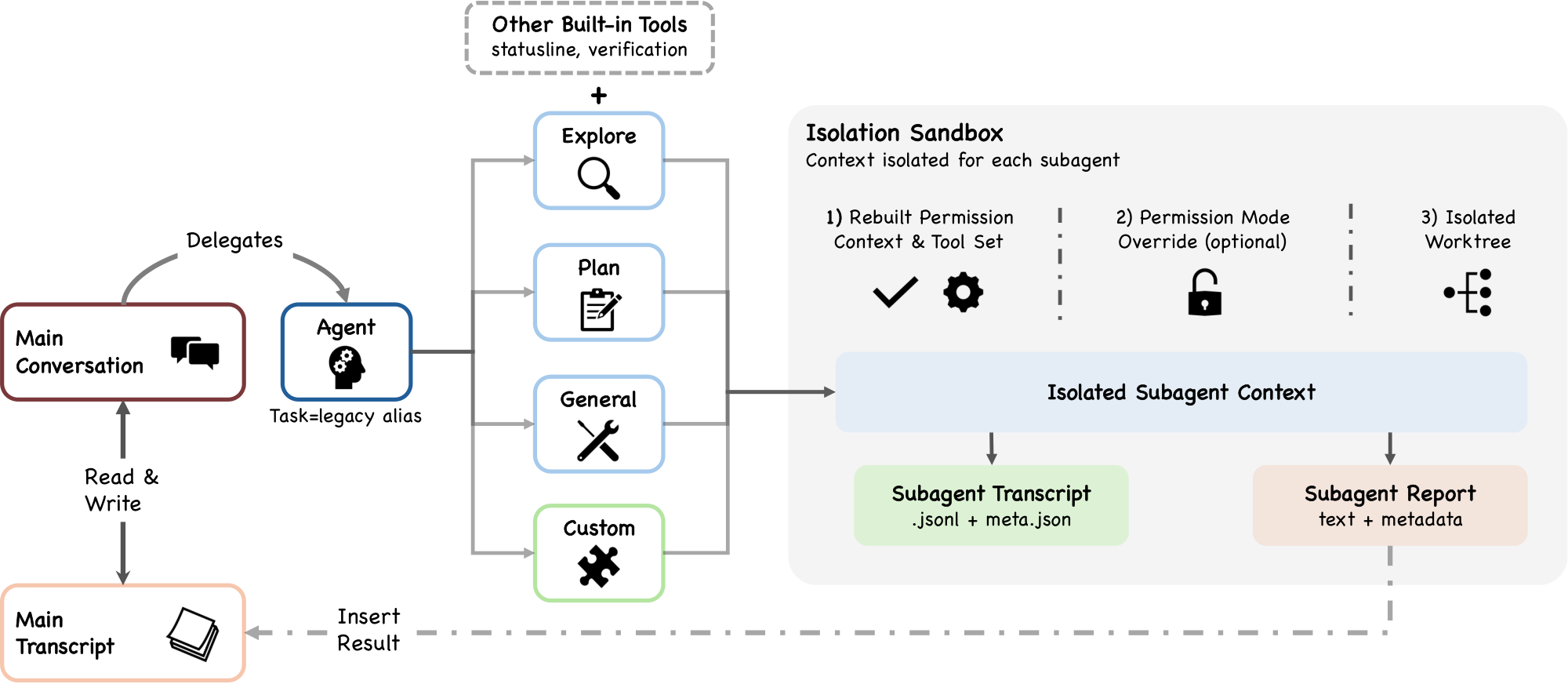

Building a production coding agent requires answering several recurring design questions: where should reasoning live, how many execution engines are needed, what safety posture to adopt, and what resource to treat as the binding constraint. Claude Code's architecture can be read as one set of answers to these questions. At the implementation level, the system has seven components connected by a main data flow: a user submits a prompt through one of several interfaces, which feeds into a shared agent loop. The agent loop assembles context, calls the Claude model, receives responses that may include tool-use requests, routes those requests through a permission system, and dispatches approved actions to concrete tools that interact with the execution environment. Throughout this process, state and persistence mechanisms record the conversation transcript, manage session identity, and support resume, fork, and rewind operations.

3.1 Design Questions and Running Example

The description is organized around four design questions that recur across production coding agents, each grounding one or more of the design principles identified in Table 1. Each question is introduced here with Claude Code's answer, a note on plausible alternatives, and then demonstrated progressively through Section 4, Section 5, Section 6, Figure 6, Section 8, and Section 9.

Where does reasoning live?

In Claude Code, the model reasons about what to do; the harness is responsible for executing actions. The model emits tool_use blocks as part of its response, and the harness parses them, checks permissions, dispatches them to tool implementations, and collects results (query.ts). The model never directly accesses the filesystem, runs shell commands, or makes network requests. This separation has a security consequence: because reasoning and enforcement occupy separate code paths, a compromised or adversarially manipulated model cannot override the sandboxing, permission checks, or deny-first rules implemented in the harness. The model's only interface to the outside world is the structured tool_use protocol, which the harness validates before execution. Community analysis of the extracted source estimates that only about 1.6% of Claude Code's codebase constitutes AI decision logic, with the remaining 98.4% being operational infrastructure, a ratio that illustrates how thin the core agent reasoning layer is. Alternative designs invest more heavily in scaffolding-side reasoning: Devin maintains explicit planning and task-tracking structures, while LangGraph ([17]) routes control flow through developer-defined state graphs.

How many execution engines?

Claude Code uses a single queryLoop() function that executes regardless of whether the user is interacting through an interactive terminal, a headless CLI invocation, the Agent SDK, or an IDE integration (query.ts). Only the rendering and user-interaction layer varies. Other systems use mode-specific engines: for example, an IDE integration may follow a different code path than a CLI tool, trading uniformity for surface-specific optimization.

What is the default safety posture?

Claude Code's default safety posture is deny-first with human escalation: deny rules override ask rules override allow rules, and unrecognized actions are escalated to the user rather than allowed silently (permissions.ts). Multiple independent safety layers (permission rules, PreToolUse hooks, the auto-mode classifier when enabled, and optional shell sandboxing) apply in parallel, so any one can block an action (Section 5). This combines the deny-first with human escalation and defense in depth with layered mechanisms principles from Table 1. Alternative approaches shift the trust boundary elsewhere: SWE-Agent and OpenHands ([18, 19]) rely on container-based isolation to contain arbitrary execution, while Aider ([20]) uses git-based rollback as its primary safety net.

What is the binding resource constraint?

In Claude Code, the context window (200K for older models, 1M for the Claude 4.6 series) is the binding resource constraint. Five distinct context-reduction strategies execute before every model call (query.ts), and several other subsystem decisions (lazy loading of instructions, deferred tool schemas, summary-only subagent returns) exist to limit context consumption (Figure 6). The five-layer pipeline exists because no single compaction strategy addresses all types of context pressure. Budget reduction targets individual tool outputs that overflow size limits. Snip handles temporal depth. Microcompact reacts to cache overhead. Context collapse manages very long histories. Auto-compact performs semantic compression as a last resort. Each layer operates at a different cost-benefit tradeoff, and earlier, cheaper layers run before costlier ones. Alternative architectures treat other resources as the primary bottleneck, for instance compute budget (limiting the number of model calls or tool invocations) or working memory (maintaining an explicit scratchpad rather than relying on the conversation history).

Running example.

To ground these principles, we thread a single task through Section 3, Section 4, Section 5, Section 6, Figure 6, Section 8, and Section 9: "Fix the failing test in auth.test.ts." In this section the user submits the prompt through one of Claude Code's interfaces. Subsequent sections trace the request through the query loop, permission gate, tool pool, context window, subagent delegation, and session persistence.

3.2 High-Level System Structure

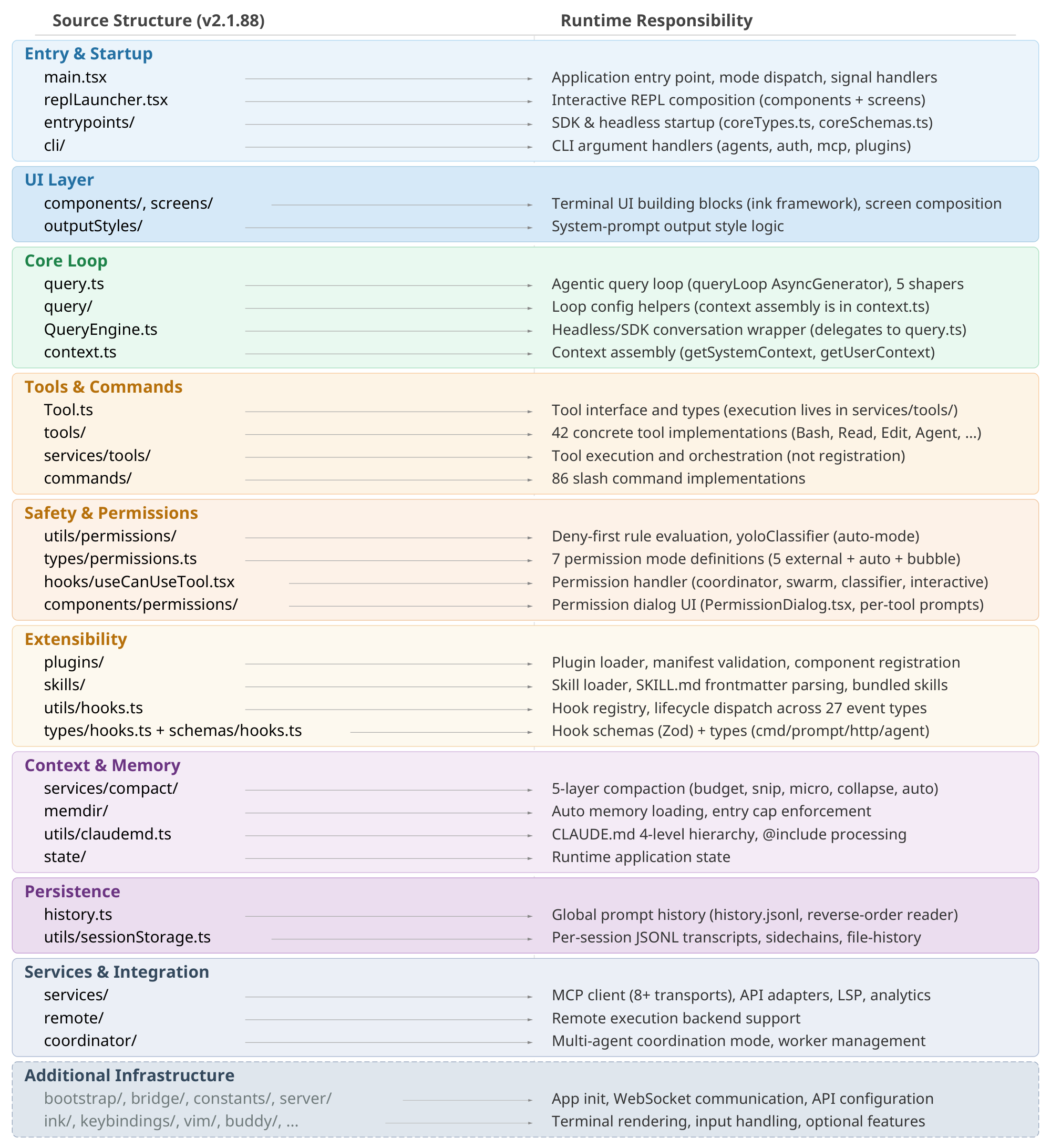

The seven-component model (Figure 1) maps directly to source files:

- User: Submits prompts, approves permissions, reviews output.

- Interfaces: Interactive CLI, headless CLI (

claude -p), Agent SDK, and IDE/Desktop/Browser. All surfaces feed the same loop. - Agent loop: The iterative cycle of model call, tool dispatch, and result collection, implemented as the

queryLoop()async generator inquery.ts. - Permission system: Deny-first rule evaluation (

permissions.ts), the auto-mode ML classifier, and hook-based interception (types/hooks.ts). - Tools: Up to 54 built-in tools (19 unconditional, 35 conditional on feature flags and user type) assembled via

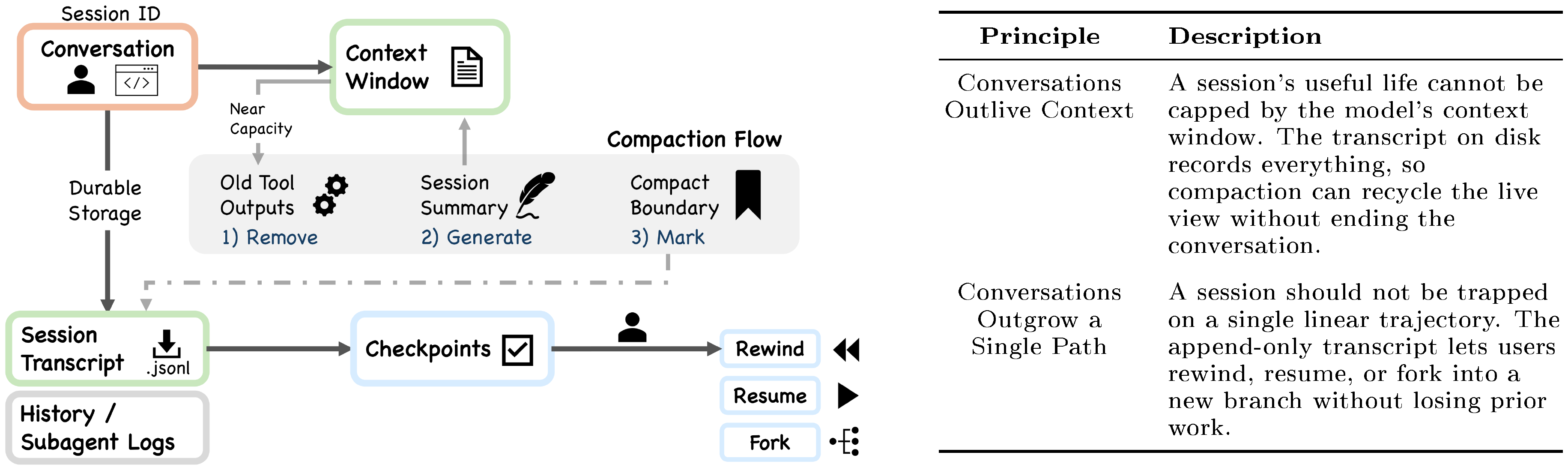

assembleToolPool()(tools.ts), merged with MCP-provided tools. Plugins contribute indirectly through MCP servers and the skill/command registry. - State & persistence: Mostly append-only JSONL session transcripts (

sessionStorage.ts), global prompt history (history.ts), and subagent sidechain files. - Execution environment: Shell execution with optional sandboxing (

shouldUseSandbox.ts), filesystem operations, web fetching, MCP server connections, and remote execution.

The data flow follows a left-to-right spine: the user submits a request through an interface, which enters the agent loop. The loop proposes actions to the permission system; approved actions reach tools, which interact with the execution environment and return tool_result messages back to the loop. State and persistence sit alongside the loop, recording transcripts and loading prior session data.

The application entry point main() in main.tsx initializes security settings (including NoDefaultCurrentDirectoryInExePath to prevent Windows PATH hijacking), registers signal handlers for graceful shutdown, and dispatches to the appropriate execution mode.

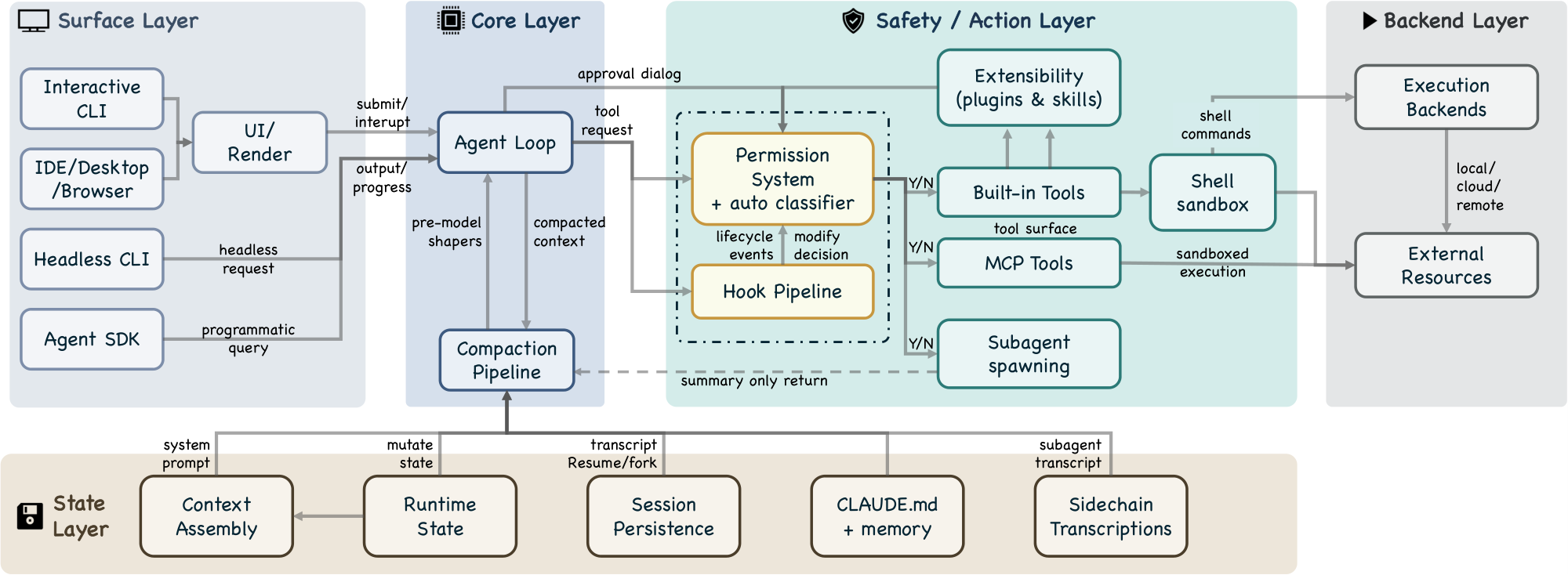

3.3 Layered Subsystem Decomposition

The five-layer decomposition (Figure 3) expands the seven-component model into a finer-grained view, mapping each layer to specific source directories.

Surface layer (entry points and rendering).

The src/entrypoints/ directory contains startup paths, including the SDK entry with coreTypes.ts, controlSchemas.ts, and coreSchemas.ts. The src/screens/ directory composes full-screen layouts, and src/components/ provides terminal UI building blocks via the ink framework. The interactive CLI launches a terminal UI with real-time streaming, permission dialogs, and progress indicators. The headless CLI (claude -p) creates a QueryEngine instance for single-shot processing. The Agent SDK emits typed events via async generators.

Core layer (agent loop, compaction pipeline).

The queryLoop() async generator (query.ts) implements the iterative agent loop, consuming assembled context from the state layer and dispatching tool requests to the safety/action layer. Before every model call, a compaction pipeline of five sequential shapers (query.ts:365–453) manages context pressure: budget reduction, snip, microcompact, context collapse, and auto-compact (Section 4.3 and Section 7.3).

Safety/action layer (permission system, hooks, extensibility, tools, sandbox, subagents).

The permission system (permissions.ts) implements deny-first rule evaluation with up to seven permission modes (if also counting internal-only bubble and feature-gated auto) (types/permissions.ts) and an integrated auto-mode ML classifier (yoloClassifier.ts) that provides a two-stage fast-filter and chain-of-thought evaluation of tool safety (Section 5). A hook pipeline spanning 27 event types (coreTypes.ts; output schemas in types/hooks.ts) can block, rewrite, or annotate tool requests; of these, 5 are safety-related while the remaining 22 serve lifecycle and orchestration purposes (Section 6). An extensibility subsystem allows plugins and skills to register tools and hooks into the runtime. Tool pool assembly via assembleToolPool() (tools.ts) merges built-in and MCP-provided tools. Approved shell commands pass through a shell sandbox (shouldUseSandbox.ts) that restricts filesystem and network access independently of the permission system. Subagent spawning via AgentTool (AgentTool.tsx, runAgent.ts) is dispatched through the same buildTool() factory as all other tools, re-entering the queryLoop() with an isolated context window and returning only a summary to the parent (Section 8).

State layer (context assembly, runtime state, persistence, memory, sidechains).

Context assembly is a memoized state loader, not a routing hub: getSystemContext() (context.ts) computes session-level system context including git status, and getUserContext() (context.ts) loads the CLAUDE.md hierarchy and current date. Both are cached for reuse: system context is appended to the system prompt, while user context is added as a user-context message. The src/state/ directory manages runtime application state. Session transcripts are stored as mostly append-only JSONL files at project-specific paths (sessionStorage.ts). The CLAUDE.md + memory subsystem provides a four-level instruction hierarchy (claudemd.ts) from managed settings to directory-specific files, plus auto-memory entries that Claude writes during conversations (Section 7.2). Sidechain transcripts (sessionStorage.ts:247) store each subagent's conversation in a separate file, preventing subagent content from inflating the parent context (Section 8.3). Global prompt history is maintained in history.jsonl (history.ts). Resume and fork operations reconstruct session state from transcripts (conversationRecovery.ts).

Backend layer (execution backends, external resources).

Shell command execution with optional sandboxing (BashTool.tsx, PowerShellTool.tsx), remote execution support (src/remote/), MCP server connections across multiple transport variants including stdio, SSE, HTTP, WebSocket, SDK, and IDE-specific adapters (services/mcp/client.ts), and 42 tool subdirectories in src/tools/ implement concrete tool logic.

3.4 QueryEngine: A Clarification

The class documentation at QueryEngine.ts states: "QueryEngine owns the query lifecycle and session state for a conversation. It extracts the core logic from ask() into a standalone class that can be used by both the headless/SDK path and (in a future phase) the REPL." The class is a conversation wrapper for non-interactive surfaces, not the engine itself. Its constructor accepts a QueryEngineConfig with initial messages, an abort controller, a file-state cache, and other per-conversation state. Its submitMessage() method is an async generator that orchestrates a single turn. The shared query path lives in query() (query.ts), which wraps an internal queryLoop(); QueryEngine delegates to query().

This distinction matters architecturally: the interactive CLI also calls query(), bypassing QueryEngine entirely. The shared code path is the loop function, not the engine class.

3.5 Permission and Safety Layers

The safety-by-default principle is implemented through seven independent layers. A request must pass through all applicable layers, and any single layer can block it:

- Tool pre-filtering (

tools.ts): Blanket-denied tools are removed from the model's view before any call, preventing the model from attempting to invoke them. - Deny-first rule evaluation (

permissions.ts): Deny rules always take precedence over allow rules, even when the allow rule is more specific. - Permission mode constraints (

types/permissions.ts): The active mode determines baseline handling for requests matching no explicit rule. - Auto-mode classifier: An ML-based classifier evaluates tool safety, potentially denying requests the rule system would allow.

- Shell sandboxing (

shouldUseSandbox.ts): Approved shell commands may still execute inside a sandbox restricting filesystem and network access. - Not restoring permissions on resume (

conversationRecovery.ts): Session-scoped permissions are not restored on resume or fork. - Hook-based interception (

types/hooks.ts): PreToolUse hooks can modify permission decisions; PermissionRequest hooks can resolve decisions asynchronously alongside the user dialog (or before it, in coordinator mode).

These layers are described in detail in Section 5.

3.6 Context as Bottleneck: Beyond Compaction

Beyond the five-layer compaction pipeline (detailed in Figure 6), several other subsystem decisions reflect the context-as-bottleneck constraint:

- CLAUDE.md lazy loading: The base CLAUDE.md hierarchy is loaded at session start, but additional nested-directory instruction files and conditional rules are loaded only when the agent reads files in those directories, preventing unused instructions from consuming context.

- Deferred tool schemas: When ToolSearch is enabled, some tools include only their names in the initial context; full schemas are loaded on demand.

- Subagent summary-only return: Subagents return only summary text to the parent, not their full conversation history (Section 8).

- Per-tool-result budget: Individual tool results are capped at a configurable size, preventing a single verbose output from consuming disproportionate context.

4. Turn Execution: The Agentic Query Loop

Section Summary: When a user submits a coding task like fixing a failing test, Claude Code processes it through a reactive loop that mimics a simple while-loop, allowing the AI to reason, call tools, and iterate until the task is resolved. Each loop turn follows a fixed sequence: it assembles conversation history and context, queries the model for responses that may include tool requests, executes those tools in a streaming manner for efficiency while ensuring safe concurrency, and adds results back into the loop until no more tools are needed. This design emphasizes keeping context lean through compaction and summaries, enabling quick recovery from errors while prioritizing simplicity over complex branching paths.

When the user submits "Fix the failing test in auth.test.ts, " the input enters a reactive loop, one of several possible orchestration patterns for coding agents. This section examines Claude Code's choice of a simple while-loop architecture and traces one turn of that loop end-to-end, illustrating three design principles from Table 1: minimal scaffolding with maximal operational harness, context as scarce resource with progressive management, and graceful recovery and resilience.

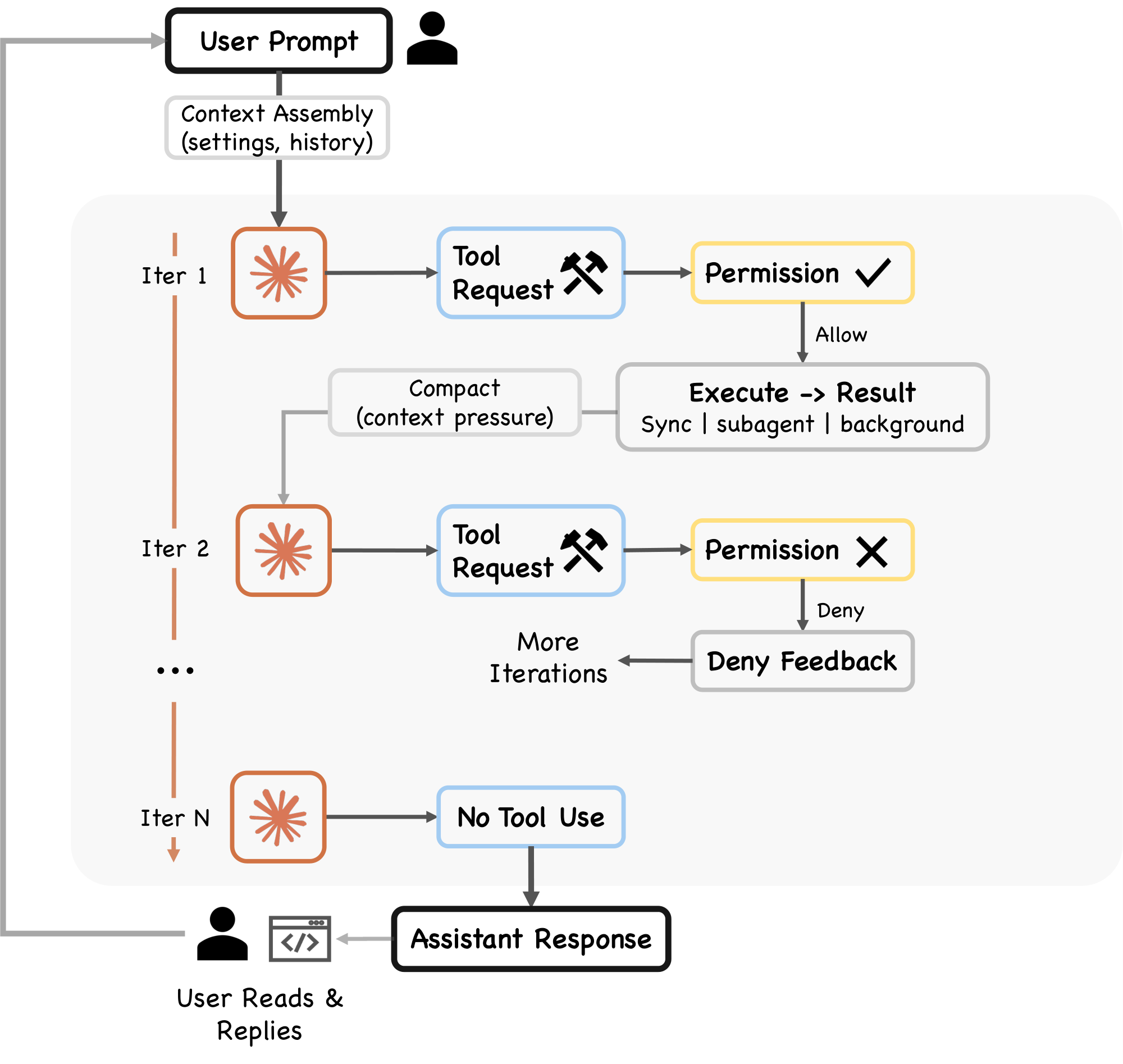

4.1 The Query Pipeline

Each turn follows a fixed sequence (Figure 2, query.ts):

- Settings resolution. The

queryLoop()function destructures immutable parameters including the system prompt, user context, permission callback, and model configuration. - Mutable state initialization. A single

Stateobject stores all mutable state across iterations, including messages, tool context, compaction tracking, and recovery counters. The loop's sevencontinuepoints (the "continue sites") each overwrite this object in one whole-object assignment rather than mutating fields individually. - Context assembly. The function

getMessagesAfterCompactBoundary()retrieves messages from the last compact boundary forward, ensuring that compacted content is represented by its summary rather than the original messages. - Pre-model context shapers. Five shapers execute sequentially (Section 4.3).

- Model call. A

for awaitloop overdeps.callModel()streams the model's response, passing assembled messages (with user context prepended), the full system prompt, thinking configuration, the available tool set, an abort signal, the current model specification, and additional options including fast-mode settings, effort value, and fallback model. - Tool-use dispatch. If the response contains

tool_useblocks, they flow to the tool orchestration layer (Section 4.2). - Permission gate. Each tool request passes through the permission system (Section 5).

- Tool execution and result collection. Tool results are added to the conversation as

tool_resultmessages, and the loop continues. - Stop condition. If the response contains no

tool_useblocks (text only), the turn is complete.

The queryLoop() function is defined as an AsyncGenerator, yielding StreamEvent, RequestStartEvent, Message, TombstoneMessage, and ToolUseSummaryMessage events as it progresses. This generator-based design enables streaming output to the UI layer while maintaining a single synchronous control flow within the loop.

Claude Code's reactive loop follows the ReAct pattern ([22]): the model generates reasoning and tool invocations, the harness executes actions, and results feed the next iteration. Alternative orchestration patterns include explicit graph-based routing ([17]), where control flow is defined as a state machine with typed edges, and tree-search methods ([23]) that explore multiple action trajectories before committing. Anthropic's own documentation ([13]) identifies five composable workflow patterns (prompt chaining, routing, parallelization, orchestrator-workers, and evaluator-optimizer) of which Claude Code primarily uses the orchestrator-workers pattern for subagent delegation (Section 8) while keeping the core loop reactive. The reactive design trades search completeness for simplicity and latency: each turn commits to one action sequence without backtracking.

4.2 Tool Dispatch and Streaming Execution

When the model response contains tool_use blocks, the system chooses between two execution paths. The primary path uses StreamingToolExecutor, which begins executing tools as they stream in from the model response, reducing latency for multi-tool responses. The fallback path uses runTools() in toolOrchestration.ts, which iterates over partitions produced by partitionToolCalls(). Both paths classify tools as concurrent-safe or exclusive. Read-only operations can execute in parallel, while state-modifying operations like shell commands are serialized.

The StreamingToolExecutor (StreamingToolExecutor.ts) manages concurrent execution with two coordination mechanisms:

- Sibling abort controller. Fires when any Bash tool errors, immediately terminating other in-flight subprocesses rather than letting them run to completion.

- Progress-available signal. Wakes up the

getRemainingResults()consumer when new output is ready.

Results are buffered and emitted in the order tools were received, so output order stays the same even when tools run in parallel. This is important because the model expects tool results in the same order as its tool-use requests. This concurrent-read, serial-write execution model occupies a middle ground between fully serial dispatch and more aggressive speculative approaches such as PASTE ([24]), which speculatively pre-executes predicted future tool calls while the model is still generating, hiding tool latency through speculation.

The tool result collection phase iterates over updates from either the streaming executor or the synchronous runTools() generator. Each update may carry a tool result, an attachment, or a progress event. A special check detects hook_stopped_continuation attachments: if a PostToolUse hook signals that the turn should not continue, a shouldPreventContinuation flag is set. Results are normalized for the Anthropic API via normalizeMessagesForAPI(), filtering to keep only user-type messages.

4.3 Pre-Model Context Shapers

Five context shapers execute sequentially in query.ts before every model call, each operating on the messagesForQuery array. The five shapers run in sequence, with earlier steps applying lighter reductions before later steps apply broader compaction.

Budget reduction.

(applyToolResultBudget()). Enforces per-message size limits on tool results, replacing oversized outputs with content references. Exempt tools (those where maxResultSizeChars is not finite) retain their full output. Content replacements are persisted for agent and session query sources to enable reconstruction on resume. Budget reduction runs before microcompact because microcompact operates purely by tool_use_id and never inspects content; the two compose cleanly.

Snip.

(snipCompactIfNeeded(), gated by HISTORY_SNIP). A lightweight trim that removes older history segments, returning {messages, tokensFreed, boundaryMessage}. The snipTokensFreed value is plumbed to auto-compact because the main token counter derives context size from the usage field on the most recent assistant message, and that message survives snip with its pre-snip input_tokens still attached; snip's savings are therefore invisible to the counter unless passed through explicitly.

Microcompact.

Fine-grained compression that always runs a time-based path and optionally a cache-aware path (gated by CACHED_MICROCOMPACT). When the cached path is enabled, boundary messages are deferred until after the API response so they can use actual cache_deleted_input_tokens rather than estimates. Returns {messages, compactionInfo} where compactionInfo may include pendingCacheEdits.

Context collapse.

Gated by CONTEXT_COLLAPSE. A read-time projection over the conversation history. The source comments explain: "Nothing is yielded; the collapsed view is a read-time projection over the REPL's full history. Summary messages live in the collapse store, not the REPL array. This is what makes collapses persist across turns." Unlike the other shapers, context collapse does not mutate the REPL's stored history; it replaces the messagesForQuery array with a projected view via applyCollapsesIfNeeded(), so the model sees the collapsed version while the full history remains available for reconstruction.

Auto-compact.

The fifth shaper, triggering a full model-generated summary via compactConversation() in compact.ts. This function executes PreCompact hooks, creates a summary request using getCompactPrompt(), and calls the model to produce a compressed summary. The result feeds into buildPostCompactMessages() (compact.ts). Auto-compact fires only when the context still exceeds the pressure threshold after all four previous shapers have run.

4.4 Recovery Mechanisms

The query loop implements several recovery mechanisms for edge cases:

- Max output tokens escalation: When the response hits the output token cap, the system can retry with an escalated limit, subject to a GrowthBook flag and the absence of an existing override or environment-variable cap. Up to three recovery attempts are allowed per turn (

MAX_OUTPUT_TOKENS_RECOVERY_LIMIT = 3). - Reactive compaction (gated by

REACTIVE_COMPACT): When the context is near capacity, reactive compact summarizes just enough to free space. ThehasAttemptedReactiveCompactflag ensures this fires at most once per turn. - Prompt-too-long handling: If the API returns a

prompt_too_longerror, the loop first attempts context-collapse overflow recovery and reactive compaction. Only after these fail does it terminate withreason: 'prompt_too_long'. - Streaming fallback: The

onStreamingFallbackcallback handles streaming API issues, allowing the loop to retry with a different strategy. - Fallback model: The

fallbackModelparameter enables switching to an alternative model if the primary model fails.

4.5 Stop Conditions

Multiple conditions can terminate the loop:

- No tool use: The model produces only text content (the primary stop condition).

- Max turns: The configurable

maxTurnslimit is reached. - Context overflow: The API returns

prompt_too_long. - Hook intervention: A PostToolUse hook sets

hook_stopped_continuation. - Explicit abort: The

abortControllersignal fires.

The turn pipeline determines how tool requests are orchestrated and recovered. The next section examines the gate that determines whether each request is permitted to execute at all.

5. Tool Authorization and Control Boundaries

Section Summary: Claude Code ensures safe tool use in coding tasks by following a deny-first approach, where tools like running code commands must pass multiple safety checks and often require user approval rather than being allowed automatically. This system includes seven permission modes that range from strict planning with full human oversight to more autonomous options with automated evaluations, all built on layered defenses like pre-filtering denied tools, rule checks, and hooks to prevent misuse even if users approve requests without close attention. The design addresses the problem of users habitually clicking yes to prompts, maintaining independent safeguards through sandboxing and reversible actions to minimize risks.

Production coding agents adopt different safety architectures: layered policy enforcement, OS-level sandboxing, or version-control-based rollback. Claude Code combines the first two, implementing four design principles from Table 1: deny-first with human escalation, graduated trust spectrum, defense in depth with layered mechanisms, and reversibility-weighted risk assessment.

When Claude decides to execute a tool (for example, running npm test via BashTool to reproduce the auth test failure), the request enters the permission pipeline shown in Figure 4. Every tool invocation passes through the permission system, and the default behavior is to deny or ask rather than allow silently. This default is motivated by a documented behavioral pattern: Anthropic's auto-mode analysis ([10]) found that users approve approximately 93% of permission prompts, indicating that approval fatigue renders interactive confirmation behaviorally unreliable as a sole safety mechanism. Because users habitually approve without careful review, the system must maintain safety independently of human vigilance. This motivates the architectural commitment to deny-first evaluation, blanket-deny pre-filtering, and sandboxing as independent layers that operate regardless of user attentiveness.

5.1 Permission Modes and Rule Evaluation

Seven permission modes exist across the type definitions (5 external modes at types/permissions.ts; auto added conditionally; bubble in the type union):

plan: The model must create a plan; execution proceeds only after user approval.default: Standard interactive use. Most operations require user approval.acceptEdits: Edits within the working directory and certain filesystem shell commands (mkdir, rmdir, touch, rm, mv, cp, sed) are auto-approved; other shell commands require approval.auto: An ML-based classifier evaluates requests that do not pass fast-path checks (gated byTRANSCRIPT_CLASSIFIER).dontAsk: No prompting, but deny rules are still enforced.bypassPermissions: Skips most permission prompts, but safety-critical checks and bypass-immune rules still apply.bubble: Internal-only mode for subagent permission escalation to the parent terminal.

The five externally visible modes (acceptEdits, bypassPermissions, default, dontAsk, plan) are defined in the EXTERNAL_PERMISSION_MODES array. The auto mode is conditionally included only when the TRANSCRIPT_CLASSIFIER feature flag is active. The bubble mode exists in the type union but not in either mode array; it is used internally for subagent permission escalation (Section 8).

Permission rules are evaluated in deny-first order (permissions.ts). The toolMatchesRule() function checks deny rules first: a deny rule always takes precedence over an allow rule, even when the allow rule is more specific. A broad deny ("deny all shell commands") cannot be overridden by a narrow allow ("allow npm test"). The rule system supports tool-level matching (by tool name) and content-level matching (matching specific tool input patterns, such as Bash(prefix:npm)).

The seven modes span a graduated autonomy spectrum, from plan (user approves all plans before execution) through default and acceptEdits to bypassPermissions (minimal prompting). This gradient reflects a recurring design tension: as autonomy increases, the system must shift from interactive approval to automated safety checks. Other agent systems resolve this tension differently. SWE-Agent and OpenHands ([18, 19]) use Docker container isolation, sandboxing the agent's entire execution environment rather than evaluating individual tool invocations. Aider ([20]) relies on Git as a safety net, making all changes reversible through version control. Claude Code's approach layers multiple policy-enforcement mechanisms on top of optional container sandboxing, trading simplicity for fine-grained control over individual actions.

5.2 The Authorization Pipeline

The full authorization pipeline proceeds through several stages:

Pre-filtering.

Before any tool request reaches runtime evaluation, filterToolsByDenyRules() (tools.ts) strips blanket-denied tools from the model's view entirely at tool pool assembly time. The documentation states: "Uses the same matcher as the runtime permission check, so MCP server-prefix rules like mcp__server strip all tools from that server before the model sees them." This prevents the model from attempting to invoke forbidden tools, so the model does not waste calls on them.

PreToolUse hook.

Registered hooks fire as part of the permission pipeline. A PreToolUse hook can return a permissionDecision to deny or ask, or an updatedInput that modifies the tool's input parameters (types/hooks.ts). A hook allow does not bypass subsequent rule-based denies or safety checks. In the interactive path, the user dialog is queued first and hooks run asynchronously; coordinator and similar background-agent paths await automated checks before showing the dialog.

Rule evaluation.

The deny-first rule engine evaluates the request. MCP tools are matched by their fully qualified mcp__server__tool name, and server-level rules match all tools from that server.

Permission handler.

The handler in useCanUseTool.tsx branches into one of four paths based on runtime context:

- Coordinator: For multi-agent coordination mode. Attempts automated resolution (classifier, hooks, rules) before falling back to user interaction.

- Swarm worker: Handles worker agents in a multi-agent swarm with their own resolution logic.

- Speculative classifier: When

BASH_CLASSIFIERis enabled and the tool is BashTool, a speculative classifier races a pre-started classification result against a timeout. If the classifier returns with high confidence, the tool is approved instantly without user interaction. - Interactive: The fallback path. Presents the standard user approval dialog through the terminal UI.

In coordinator and some background paths, automated resolution is attempted before user interaction. In the standard interactive path, the dialog can appear first while hooks or classifier checks continue in parallel. When the classifier or a deny rule blocks an action, the system treats the denial as a routing signal rather than a hard stop: the model receives the denial reason, revises its approach, and attempts a safer alternative in the next loop iteration. The PermissionDenied hook event (Section 6) enables external code to observe and respond to these denials programmatically. This recovery-oriented design means that permission enforcement shapes the agent's behavior rather than simply halting it.

5.3 Auto-Mode Classifier and Hook Lifecycle

The auto-mode classifier (yoloClassifier.ts) participates in permission decisions when enabled. When TRANSCRIPT_CLASSIFIER is enabled, the classifier loads three prompt resources:

- A base system prompt.

- An external permissions template.

- For Anthropic-internal users, a separate internal template.

The classifier evaluates the proposed tool invocation against the conversation transcript and the permission template, producing an allow, deny, or request for manual approval. The function isUsingExternalPermissions() checks USER_TYPE and a forceExternalPermissions config flag to select the appropriate template.

Of the 27 hook events defined in the source (coreTypes.ts), five participate directly in the permission flow, each with a specific Zod-validated output schema (types/hooks.ts):

- PreToolUse: Can return

permissionDecision(deny or ask, but allow does not bypass subsequent checks),permissionDecisionReason, andupdatedInput(modify parameters). - PostToolUse: Can inject

additionalContextand, for MCP tools, returnupdatedMCPToolOutputto modify results before they enter the context. - PostToolUseFailure: Can inject

additionalContextfor error-specific guidance. - PermissionDenied: Can provide

retryguidance after auto-mode denials. - PermissionRequest: Can return a

decisionofallowordeny. In coordinator and similar paths, this can resolve before the user dialog. In the standard interactive path, it can also run alongside the dialog.

For non-MCP tools, the tool_result is emitted before the PostToolUse hook fires. For MCP tools, the result is delayed until after post hooks have run, enabling updatedMCPToolOutput to take effect.

5.4 Shell Sandboxing

Shell sandboxing provides an additional layer of protection for Bash and PowerShell commands (shouldUseSandbox.ts). The shouldUseSandbox() function checks whether sandboxing is globally enabled, whether the invocation has opted out, and whether the command matches any exclusion patterns.

When active, the sandbox provides filesystem and network isolation independent of the application-level permission model. A command can be permission-approved but still sandboxed, or permission-denied and never reach the sandbox check. The two systems operate on different axes: authorization versus isolation.

The layered safety architecture rests on an independence assumption: if one layer fails, others catch the violation. However, several layers share common performance constraints. Security researchers ([25]) have documented that commands with more than 50 subcommands fall back to a single generic approval prompt instead of running per-subcommand deny-rule checks, because per-subcommand parsing caused UI freezes. This example demonstrates that defense-in-depth can degrade when its layers share failure modes, a structural tension between safety and performance analyzed further in Section 11.3.

The permission pipeline governs whether a tool request executes. The next section examines what determines which tools exist in the first place: the extensibility architecture that assembles the model's action surface.

6. Extensibility: MCP, Plugins, Skills, and Hooks

Section Summary: Claude Code extends its functionality through four main mechanisms—MCP servers, plugins, skills, and hooks—that allow users to add custom tools, instructions, and behaviors at different stages of the agent's operation. MCP servers connect external resources like databases, plugins bundle and distribute various components for broad enhancements, skills provide domain-specific guidance such as custom code linting, and hooks intercept events to modify actions like tool execution or permissions. These approaches follow design principles of flexible, layered extensibility and programmable policies, balancing power with the limits of the AI's context window.

A recurring design question for coding agents is how to structure the extension surface: a single unified mechanism, a small number of specialized mechanisms, or a layered stack with different context costs. The analysis here illustrates two design principles from Table 1: composable multi-mechanism extensibility and externalized programmable policy. Returning to the running example, once Claude is trying to repair auth.test.ts and the earlier npm test request has been mediated by the permission system (Section 5), the next question is what extension-enabled action surface is available for the repair. When a turn begins in Claude Code, the model sees not just built-in tools like BashTool and FileReadTool, but also database query tools from an MCP server, a custom lint skill from .claude/skills/, and tools contributed by an installed plugin. These arrive through four mechanisms that extend the agent at different points of the loop: MCP servers provide external tool integration, plugins package and distribute bundles of components, skills inject domain-specific instructions, and hooks intercept the tool execution lifecycle. Anthropic's documentation ([12]) presents a broader view that includes CLAUDE.md (Figure 6) and subagents (Section 8) alongside the four mechanisms analyzed here. We treat CLAUDE.md and subagents in their own sections because they operate in different subsystems (context construction and delegation, respectively), but the context-cost ordering is architecturally significant: it reveals how each extension point trades off expressiveness against the bounded context window.

6.1 Four Extension Mechanisms

The mechanisms are implemented in distinct source directories (Figure 5) and serve different integration patterns:

MCP servers.

The Model Context Protocol is the primary external tool integration path. MCP servers are configured from multiple scopes: project, user, local, and enterprise, with additional plugin and claude.ai servers merged at runtime (services/mcp/config.ts). The MCP client (services/mcp/client.ts) supports multiple transport types: stdio, SSE, HTTP, WebSocket, SDK, plus IDE-specific variants (sse-ide, ws-ide) and an internal claudeai-proxy. Each connected server contributes tool definitions as MCPTool objects. Dedicated built-in tools ListMcpResourcesTool and ReadMcpResourceTool provide access to MCP resources.

Plugins.

Plugins serve a dual role: they are both a packaging format and a distribution mechanism. The PluginManifestSchema (utils/plugins/schemas.ts) accepts ten component types: commands, agents, skills, hooks, MCP servers, LSP servers, output styles, channels, settings, and user configuration. The plugin loader (utils/plugins/pluginLoader.ts) validates manifests and routes each component to its respective registry: commands and skills surface through the SkillTool meta-tool, agents appear in definitions consumed by AgentTool, hooks merge into the hook registry, MCP and LSP servers fold into their standard configurations, and output styles modify response formatting. A single plugin package can therefore extend Claude Code across multiple component types simultaneously, making plugins the primary distribution vehicle for third-party extensions.

Skills.

Each skill is defined by a SKILL.md file with YAML frontmatter. The parseSkillFrontmatterFields() function (loadSkillsDir.ts) parses 15+ fields including display name, description, allowed tools (granting the skill access to additional tools), argument hints, model overrides, execution context ('fork' for isolated execution), associated agent definitions, effort levels, and shell configuration. Skills can define their own hooks, which register dynamically on invocation. Bundled skills are registered in-memory at startup. When invoked, the SkillTool meta-tool injects the skill's instructions into the context.

Hooks.

The source code defines 27 hook events spanning tool authorization (PreToolUse, PostToolUse, PostToolUseFailure, PermissionRequest, PermissionDenied), session lifecycle (SessionStart, SessionEnd, Setup, Stop, StopFailure), user interaction (UserPromptSubmit, Elicitation, ElicitationResult), subagent coordination (SubagentStart, SubagentStop, TeammateIdle, TaskCreated, TaskCompleted), context management (PreCompact, PostCompact, InstructionsLoaded, ConfigChange), workspace events (CwdChanged, FileChanged, WorktreeCreate, WorktreeRemove), and notifications (coreTypes.ts, coreSchemas.ts). Of these, 15 have event-specific output schemas with rich fields supporting permission decisions, context injection, input modification, MCP result transformation, and retry control (types/hooks.ts). Persisted hook commands configured via settings and plugins use four command types: shell commands (type: command), LLM prompt hooks (type: prompt), HTTP hooks (type: http), and agentic verifier hooks (type: agent) (schemas/hooks.ts). The runtime additionally supports non-persistable callback hooks (type: callback) used by the SDK and internal instrumentation (types/hooks.ts). Hook sources include settings.json, plugins, and managed policy at startup; skill hooks register dynamically on invocation (utils/hooks.ts). The five tool-authorization events are detailed in Section 5.3.

6.2 Tool Pool Assembly

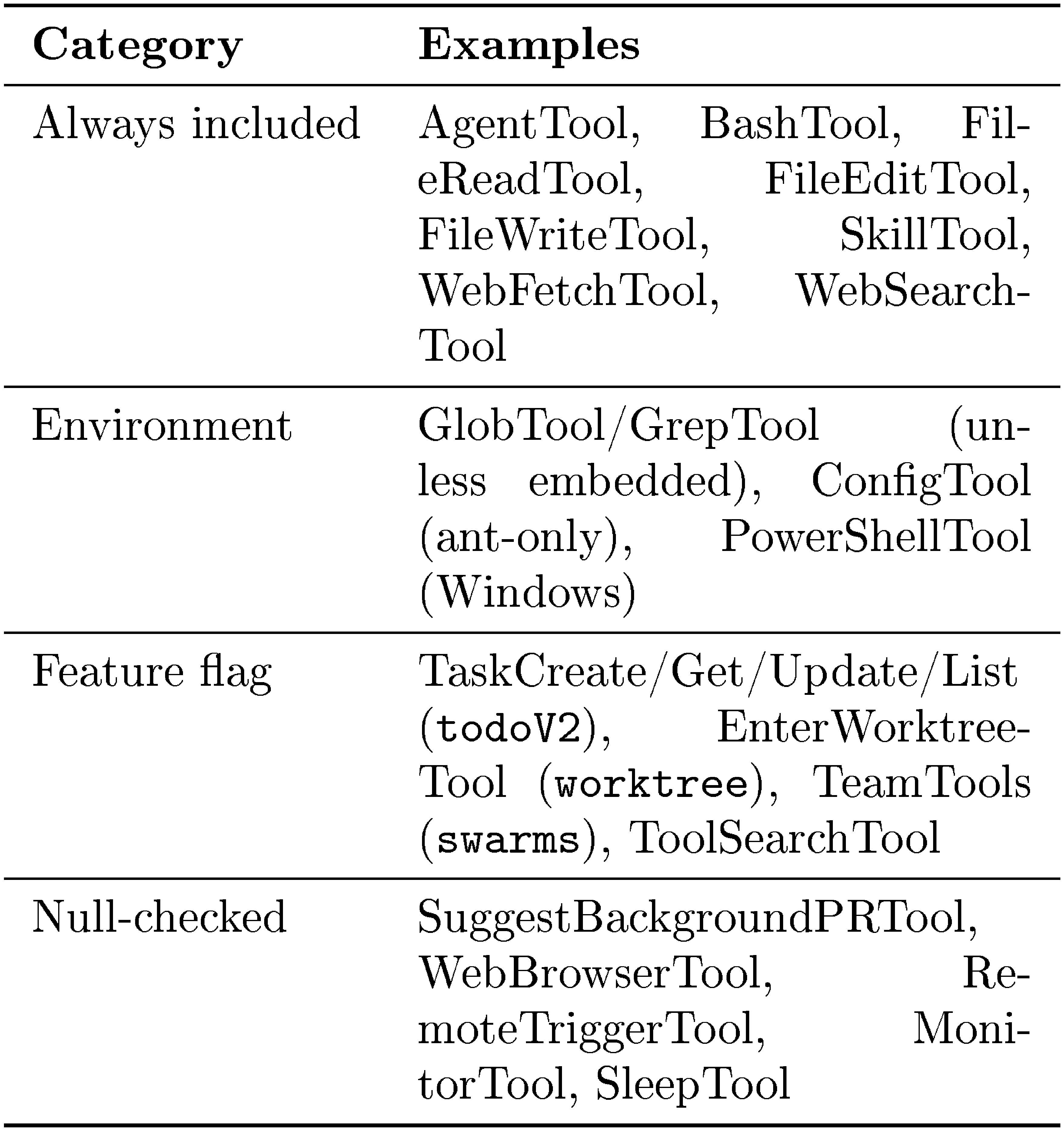

The assembleToolPool() function at tools.ts is documented as "the single source of truth for combining built-in tools with MCP tools." The assembly follows a five-step pipeline:

- Base tool enumeration.

getAllBaseTools()(tools.ts) returns an array of up to 54 tools: 19 are always included (such asBashTool,FileReadTool,AgentTool,SkillTool), and 35 more are conditionally included based on feature flags, environment variables, and user type. Anthropic-internal users get additional internal tools. Worktree mode enablesEnterWorktreeToolandExitWorktreeTool. Agent swarms enable team tools. When embedded search tools are available in the Bun binary, dedicatedGlobToolandGrepToolare omitted. - Mode filtering.

getTools()(tools.ts) applies mode-specific filtering. InCLAUDE_CODE_SIMPLEmode, only Bash, Read, and Edit are available (orREPLToolin the REPL branch; plus coordinator tools if applicable). Each tool'sisEnabled()method is called for runtime availability checks. - Deny rule pre-filtering.

filterToolsByDenyRules()(tools.ts) strips blanket-denied tools from the model's view before any call. - MCP tool integration. MCP tools from

appState.mcp.toolsare filtered by deny rules and merged with built-in tools. - Deduplication. Tools are deduplicated by name, with built-in tools taking precedence over MCP tools.

Both REPL.tsx (via the useMergedTools hook) and AgentTool.tsx (when building the worker tool set) invoke this function, ensuring consistent assembly across all execution paths. At request time, deferred tools may be hidden from the model's context until explicitly queried via ToolSearch (tools.ts).

Agent-based extension (custom agent definitions via .claude/agents/*.md and plugin-contributed agents) is covered in Section 8, because agents differ fundamentally from the four mechanisms above: they create new, isolated context windows rather than extending the current one.

6.3 Why Four Mechanisms?

Given that each additional extension mechanism increases the surface area developers must learn, a natural question is why Claude Code uses four distinct mechanisms rather than consolidating into one or two. The answer lies in the observation that different kinds of extensibility impose different costs on the context window, and a single mechanism cannot span the full range from zero-context lifecycle hooks to schema-heavy tool servers without forcing unnecessary trade-offs on extension authors.

:Table 2: What each extension mechanism uniquely provides. Context cost refers to how much of the bounded context window the mechanism consumes when active.

| Mechanism | Unique Capability | Context Cost | Insertion Point |

|---|---|---|---|

| MCP servers | External service integration (multi-transport) | High (tool schemas) | model():tool pool |

| Plugins | Multi-component packaging + distribution | Medium (varies) | All three points |

| Skills | Domain-specific instructions + meta-tool invocation | Low (descriptions only) | assemble():context injection |

| Hooks | Lifecycle interception + event-driven automation | Zero by default | execute():pre/post tool |

As Table 2 summarizes, each mechanism trades deployment complexity for a different kind of extensibility. MCP servers provide runtime tool integration (the model gains new callable tools) at the cost of server management overhead and context budget consumed by tool schemas. Skills shape how the agent thinks (not just what tools it has) at minimal context cost, since only frontmatter descriptions (not full content) stay in the prompt. Hooks provide cross-cutting lifecycle control (blocking, rewriting, or annotating tool calls) with no context footprint by default, though hooks can opt into injecting additional context. Plugins bundle any combination of the other three into distributable packages, acting as the packaging and distribution layer rather than a distinct runtime primitive. The graduated context-cost ordering (zero for hooks, low for skills, medium for plugins, high for MCP) means that cheap extensions can scale widely without exhausting the context window, while expensive ones are reserved for cases that genuinely require new tool surfaces.

Some agent frameworks provide a single extension mechanism, typically a tool-only API where all customization arrives as additional callable tools. Others use two tiers, separating tools from configuration or instruction injection. Claude Code's four-mechanism approach can accommodate a broader range of extension patterns, from zero-context event handlers to full external service integrations, but it increases the learning curve developers face when deciding which mechanism to use for a given integration task.

7. Context Construction and Memory

Section Summary: This section explains how the AI agent, called Claude Code, builds and manages its limited context window to handle ongoing tasks, treating context as a precious resource that needs careful organization and summarization to avoid overload. It draws from various sources like system prompts, environment details, conversation history, tool results, and special instruction files called CLAUDE.md, which are organized in a clear hierarchy from global user settings to project-specific rules for easy reading and editing. Unlike more complex systems using databases or hidden searches, this approach prioritizes transparency with plain text files, allowing users to inspect, change, and version-control what the agent remembers and follows.

How an agent manages its context window and persists user instructions is a central design choice, with different systems choosing between file-based transparency, database-backed retrieval, and opaque learned representations. The design choices here implement two principles from Table 1: context as scarce resource with progressive management and transparent file-based configuration and memory.

By this point in the running example, the task has accumulated state: the original request, the npm test permission outcome, the tool pool assembled in Section 6, and any file reads or command outputs gathered so far. This section asks how that growing state is packed into Claude Code's bounded context window before the next model call.

Before the model is called, the agent loop assembles a context window from the tool pool (Section 6), CLAUDE.md files, auto memory, and conversation history. The following subsections cover the assembly order, the CLAUDE.md hierarchy, and the multi-step compaction pipeline.

7.1 Context Window Assembly

The context window (Figure 6) is assembled from the following sources, some at initial assembly and others injected late during the turn:

- System prompt, incorporating output style modifications and any

–append-system-promptflag content. - Environment info via

getSystemContext()(context.ts): git status (skipped in remote mode or when git instructions are disabled) and an optional cache-breaking injection for internal builds (gated byBREAK_CACHE_COMMAND). Memoized once per session. - CLAUDE.md hierarchy via

getUserContext()(context.ts): four-level instruction file hierarchy (Section 7.2). Also memoized. - Path-scoped rules: conditional and directory-matched rules that load lazily when the agent reads files in matching directories.

- Auto memory: contextually relevant memory entries prefetched asynchronously.

- Tool metadata: skill descriptions, MCP tool names, and deferred tool definitions (via ToolSearch, on demand).

- Conversation history: carried forward, subject to compaction.

- Tool results: file reads, command outputs, subagent summaries.

- Compact summaries: replacing older history segments.

The system prompt assembly at query.ts combines system context with the base prompt via asSystemPrompt(appendSystemContext(systemPrompt, systemContext))(). User context (CLAUDE.md and date) is prepended to the message array via prependUserContext(). This separation means CLAUDE.md content occupies a different structural position in the API request than the system prompt, potentially affecting model attention patterns.

Several context sources are injected late, after the main window is constructed: relevant-memory prefetch (query.ts), MCP instructions deltas (only new or changed server instructions), agent listing deltas, and background agent task notifications. The context window is therefore not static at assembly time but can grow during the turn.

7.2 CLAUDE.md Hierarchy and Auto Memory

A design principle shapes the memory system: stored context should be inspectable and editable by the user. CLAUDE.md files are plain-text Markdown rather than structured configuration or opaque database entries. This transparency choice trades expressiveness for auditability: users can read, edit, version-control, and delete any instruction the agent sees ([26]). Alternative memory architectures illustrate the trade-off. Retrieval-augmented approaches use embedding-based lookup to surface relevant prior context, gaining flexibility at the cost of inspectability: the user cannot easily see or edit what the retrieval system considers relevant. Database-backed memory offers structured querying but requires additional infrastructure and is opaque to version control. Claude Code's file-based approach makes every instruction the agent sees directly readable, editable, and committable alongside the codebase. The system does not use embeddings or a vector similarity index for memory retrieval; instead it uses an LLM-based scan of memory-file headers to select up to five relevant files on demand, surfacing them at file granularity rather than entry granularity. Embedding-based systems can retrieve individual entries more selectively, at the cost of inspectability and the infrastructure needed to maintain an index.

CLAUDE.md files follow a multi-level loading hierarchy. The source header (claudemd.ts) defines four memory types:

- Managed memory (e.g.

/etc/claude-code/CLAUDE.mdon Linux): OS-level policy for all users. - User memory (

/.claude/CLAUDE.md): private global instructions. - Project memory (

CLAUDE.md,.claude/CLAUDE.md, and.claude/rules/*.mdin project roots): instructions checked into the codebase. - Local memory (

CLAUDE.local.mdin project roots): gitignored, for private project-specific instructions.

File discovery traverses from the current directory up to root, checking for all project and local memory files in each directory. Files closer to the current directory have higher priority (loaded later).

Files load in "reverse order of priority": later-loaded files receive more model attention. For root-to-CWD directories, unconditional rules from .claude/rules/*.md load eagerly at startup. For nested directories below CWD, even unconditional rules are loaded lazily when the agent reads files in matching directories. This means the model's instruction set can evolve during a conversation as new parts of the codebase are explored.

CLAUDE.md content is delivered as user context (a user message), not as system prompt content (context.ts). This architectural choice has a significant implication: because CLAUDE.md content is delivered as conversational context rather than system-level instructions, model compliance with these instructions is probabilistic rather than guaranteed. Permission rules evaluated in deny-first order (Section 5) provide the deterministic enforcement layer. This creates a deliberate separation between guidance (CLAUDE.md, probabilistic) and enforcement (permission rules, deterministic). The function calls setCachedClaudeMdContent() to cache the loaded content for the auto-mode classifier, to avoid an import cycle between the CLAUDE.md loader and the permission system.

Memory files support an nclude directive for modular instruction sets (processMemoryFile() at claudemd.ts). Syntax variants include @path, @./relative, @ /home, and @/absolute. The directive works in leaf text nodes only (not inside code blocks). In the implementation, the including file is pushed first and included files are appended after it, circular references are prevented by tracking processed paths, and non-existent files are silently ignored.

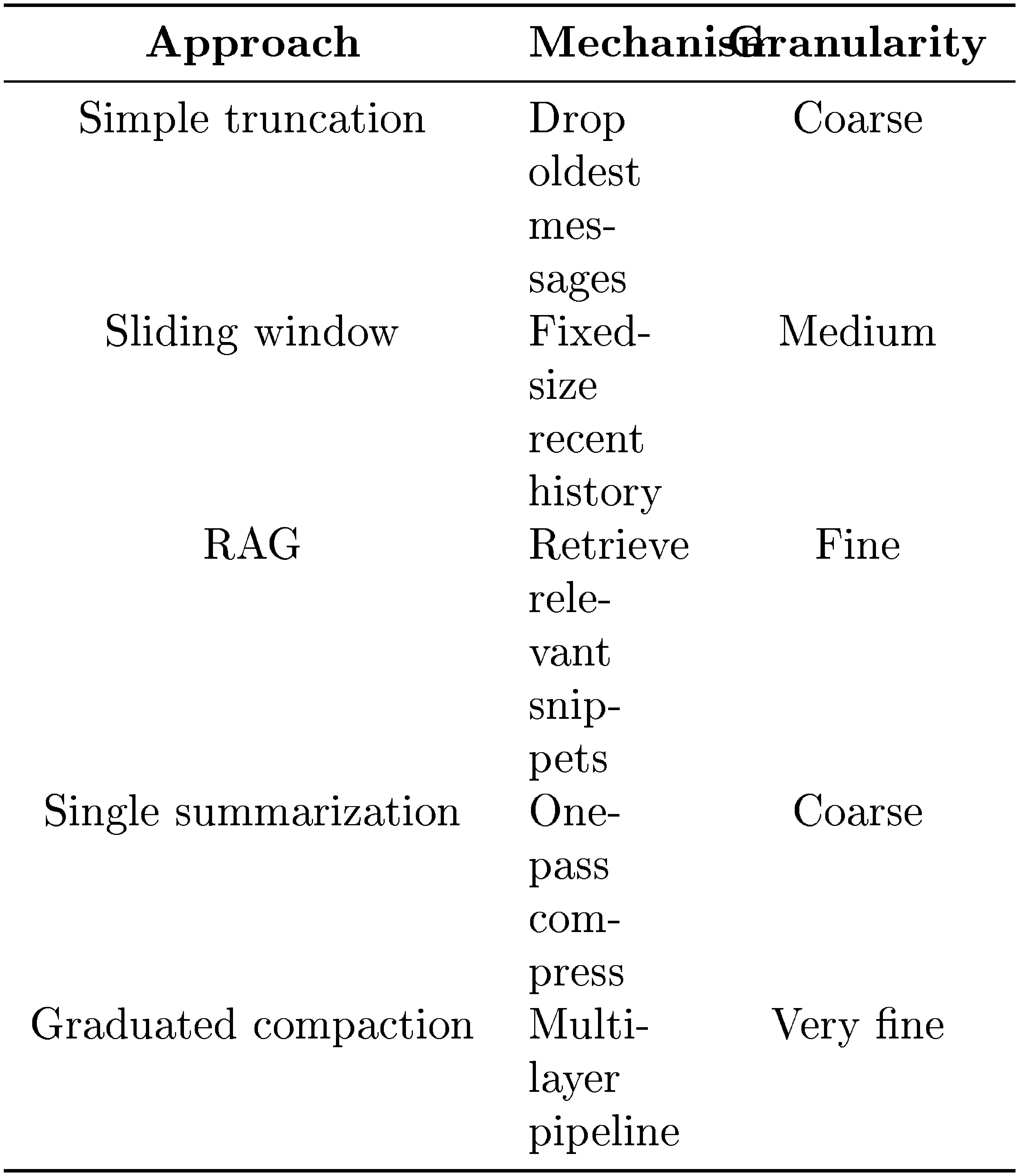

7.3 Compaction Pipeline