Towards System 2 Reasoning in LLMs: Learning How to Think With Meta Chain-of-Thought

Violet Xiang$^{2}$, Charlie Snell$^{3}$, Kanishk Gandhi$^{2}$, Alon Albalak$^{1}$, Anikait Singh$^{2}$, Chase Blagden$^{1}$, Duy Phung$^{1}$, Rafael Rafailov$^{2,1}$, Nathan Lile$^{1}$, Dakota Mahan$^{1}$, Louis Castricato$^{1}$, Jan-Philipp Fränken$^{2}$, Nick Haber$^{2}$, Chelsea Finn$^{2}$

$^{1}$ SynthLabs.ai

$^{2}$ Stanford University

$^{3}$ UC Berkeley

Abstract

We propose a novel framework, Meta Chain-of-Thought (Meta-CoT), which extends traditional Chain-of-Thought (CoT) by explicitly modeling the underlying reasoning required to arrive at a particular CoT. We present empirical evidence from state-of-the-art models exhibiting behaviors consistent with in-context search, and explore methods for producing Meta-CoT via process supervision, synthetic data generation, and search algorithms. Finally, we outline a concrete pipeline for training a model to produce Meta-CoTs, incorporating instruction tuning with linearized search traces and reinforcement learning post-training. Finally, we discuss open research questions, including scaling laws, verifier roles, and the potential for discovering novel reasoning algorithms. This work provides a theoretical and practical roadmap to enable Meta-CoT in LLMs, paving the way for more powerful and human-like reasoning in artificial intelligence.

Authors are ordered randomly. Correspondence to [email protected].

Give a man a fish and you feed him for a day; teach a man to fish and you feed him for a lifetime. -Proverb

Executive Summary: Large language models (LLMs) have transformed fields like natural language processing by predicting the next word in a sequence, enabling tasks from translation to content generation. However, they often falter on complex reasoning problems, such as advanced mathematics or puzzles requiring deep exploration, where even top models like GPT-4o produce errors on problems that simplify to basic algebra. This limitation stems from training data that captures only the final solutions, not the iterative, trial-and-error thinking humans use for hard problems. As AI applications expand into science, engineering, and decision-making, improving reasoning is critical to make LLMs more reliable and versatile, especially now with growing demands for AI in high-stakes areas like research and policy.

This document proposes Meta Chain-of-Thought (Meta-CoT), a framework to enhance LLMs' reasoning by modeling the hidden "thinking" process behind solutions, drawing from cognitive science's idea of deliberate, effortful thought (System 2 reasoning). It aims to evaluate why current methods fall short and demonstrate how to train models that internalize this process for better performance on tough tasks.

The authors combine theory, empirical analysis, and practical methods. They analyze behaviors in leading models like OpenAI's o1 and DeepSeek-R1 using math benchmarks with thousands of problems from high-school to Olympiad levels, spanning recent years. Key techniques include generating synthetic data through search algorithms like Monte Carlo Tree Search and A* on verifiable math problems, then using instruction tuning to teach models these search patterns and reinforcement learning (RL) to refine them. They assume access to outcome verifiers (e.g., correct answers) and focus on domains like math for reliability, without delving into code or tools.

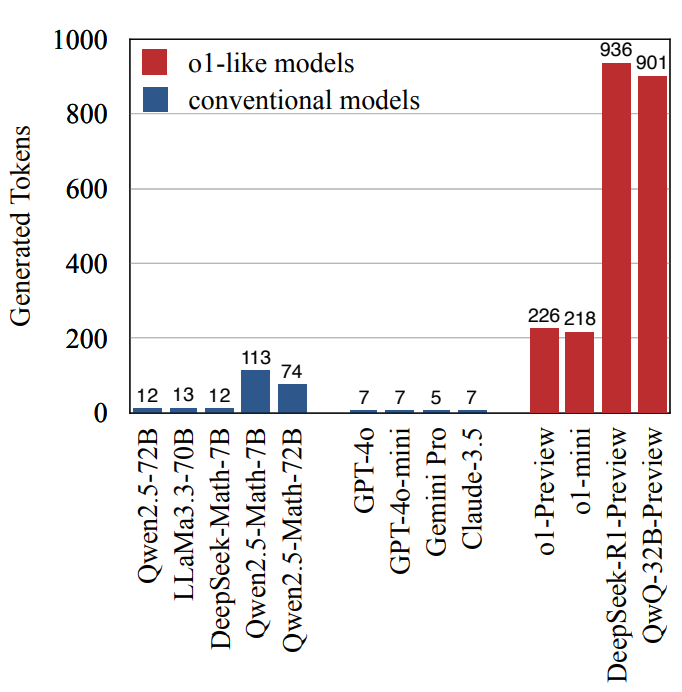

The core findings highlight three main insights. First, traditional Chain-of-Thought prompting boosts simple reasoning but fails on complex tasks because training data lacks the non-linear exploration—such as backtracking or verifying dead ends—that real solutions require; advanced models like o1 generate 2-5 times more tokens on hard problems, suggesting they simulate this internally. Second, inference-time search (sampling multiple paths and selecting the best) lifts accuracy by 20-40% on benchmarks, with tree-based methods like A* being 3-4 times more efficient than random sampling, but verifiers (models scoring paths) lag oracles by 20-30%, creating a persistent gap. Third, training models on linearized search traces via instruction tuning and RL induces in-context exploration: models learn to generate 2-6 attempts per problem, adapting more on difficult ones, with RL outperforming tuning alone by 5-10% in self-correction, though prompting elicits fake behaviors without real gains.

These results mean LLMs can approach human-like reasoning more closely, reducing errors in areas like scientific modeling or financial analysis by enabling deliberate exploration without external tools. Unlike expectations that scaling model size alone suffices, the work shows search internalization shifts performance curves leftward (higher accuracy at lower compute), cutting risks from hallucinations and timelines for AI deployment. It challenges prior views by revealing that RL, not just data volume, drives meta-reasoning, potentially unlocking novel problem-solving strategies.

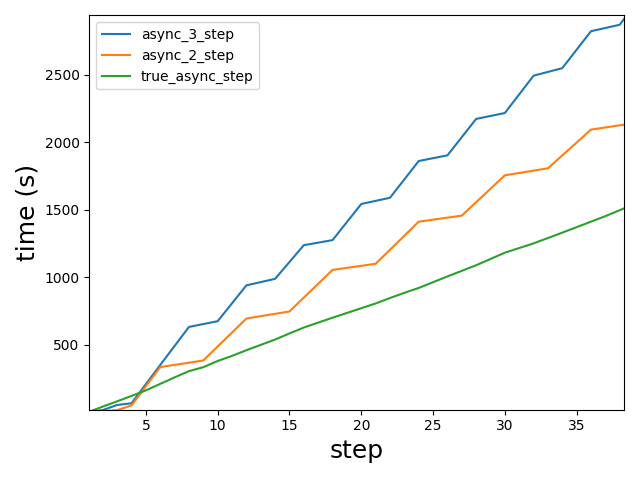

Next, organizations should pursue the outlined pipeline: start with instruction tuning on synthetic search data from the new "Big MATH" dataset (over 1 million cleaned, verifiable math problems), followed by RL using discounted rewards to balance compute and accuracy. For decisions, prioritize verifiable domains like math or code; pilot on internal tasks to test efficiency gains. If resources allow, explore options like tool integration (e.g., calculators) for 20-50% faster scaling or meta-search (search over model outputs) to handle longer contexts.

Uncertainties include data quality gaps in non-math domains and the verifier gap, which could limit open-ended tasks like proofs without human annotation. Compute constraints mean results are preliminary for models beyond 70 billion parameters; confidence is strong in the framework's validity (supported by multiple studies) but moderate for full-scale deployment, warranting caution on unverified applications until more pilots confirm benefits.

1. Introduction

Section Summary: Large language models, which predict the next word in a sequence to understand and generate text, excel at simple tasks like basic math but often fail at more complex problems, such as simplifying algebraic expressions, unless guided to think step by step through a method called chain-of-thought. Even then, these models have limits in capturing the deeper, iterative reasoning needed for advanced tasks. This paper explores those limitations and introduces Meta Chain-of-Thought, a new approach inspired by how humans think, which models hidden thinking processes, provides evidence from top AI models, and outlines ways to train systems for better reasoning using techniques like search algorithms and reinforcement learning.

1.1 Motivation

A key aspect of the current era of Large Language Models has been the foundational principle of next-token prediction ([1, 2]). That is, tokenizing text (or other continuous modalities) into a discrete sequence in the following way:

$ \text{"The quick brown fox jumps over the lazy dog."}\to \mathbf{y}_1, \mathbf{y}_2, \ldots, \mathbf{y}_n, $

where $\mathbf{y}i$ are elements of some finite vocabulary and, subsequently, train a large parameterized neural network $p{\theta}$ (transformer) model with the following maximum likelihood objective:

$ \mathcal{L}{\theta} =\mathbb{E}{\mathcal{D}{\text{train}}}\left[-\sum_t \log p{\theta}(\mathbf{y}{t+1}|\mathbf{y}{\leq t})\right]. $

Behind this approach is a simple principle often abbreviated as "compression is intelligence", or the model must approximate the distribution of data and perform implicit reasoning in its activations in order to predict the next token (see Solomonoff Induction; [3]). That is, language models learn the implicit meaning in text, as opposed to the early belief some researchers held that sequence-to-sequence models (including transformers) simply fit correlations between sequential words.

A fundamental question, however, is: What is the relationship between the complexity of the underlying data stream and the model's capability to learn the data-generating algorithm? While natural language has some irreducible entropy, this question holds even in deterministic scenarios (as demonstrated below). Mathematical reasoning is a good domain to demonstrate and evaluate these questions. One can ask an LLM to generate a response to questions like:

"What is 1+2?"

to which most immediately answer " $3''$. We can increase the complexity of the question by asking, for example:

"What is the value of

$

\frac{(x^2 - 1)(x + 1)}{x^3 - x} - \frac{1}{x}

$

evaluated at $x=\pi$?"

This is actually a simple question since the expression is canonically equal to 1, yet even powerful LLMs such as GPT-4o and Claude fail to answer it correctly even once. That is, the true conditional generative process $p(\mathbf{y}_{t+1}|\mathbf{y}_t)$ could have arbitrarily high computational complexity even when it is deterministic as in the example above. In contrast, if we instruct models to "think step by step" and produce a chain-of-thought we can significantly improve their capabilities producing traces like the following:

We are tasked with evaluating

$ \frac{(x^2 - 1)(x + 1)}{x^3 - x} - \frac{1}{x} $

at $x = \pi$ . Let us simplify the expression before substituting $x = \pi$ .

Step 1: Simplify $(x^2 - 1)(x + 1)$

The numerator of the first term can be rewritten:

$ x^2 - 1 = (x - 1)(x + 1), $

so

$ (x^2 - 1)(x + 1) = (x - 1)(x + 1)^2. $

Step 2: Simplify $x^3 - x$ in the denominator Factorize $x^3 - x$ :

$ x^3 - x = x(x^2 - 1) = x(x - 1)(x + 1). $

Thus, the first term becomes:

$ \frac{(x^2 - 1)(x + 1)}{x^3 - x} = \frac{(x - 1)(x + 1)^2}{x(x - 1)(x + 1)}. $

Cancel $(x - 1)$ and one factor of $(x + 1)$ :

$ \frac{(x^2 - 1)(x + 1)}{x^3 - x} = \frac{x + 1}{x}. $

Step 3: Subtract $\frac{1}{x}$ Now substitute this simplified result into the full expression:

$ \frac{x + 1}{x} - \frac{1}{x}. $

Combine the terms over the common denominator $x$ :

$ \frac{x + 1}{x} - \frac{1}{x} = \frac{x + 1 - 1}{x} = \frac{x}{x} = 1. $

Step 4: Evaluate at $x = \pi$ The expression simplifies to $1$, which is independent of $x$ . Thus, the value at $x = \pi$ is:

$ \boxed{1}. $

There have been multiple explanations for this phenomenon ([4, 5, 6, 7]), however we focus on the complexity hypothesis ([4]). Specifically, a CoT expansion allows for (in-theory) arbitrarily large amounts of compute to be deployed towards the prediction of answer tokens. A large theoretical literature has been developed arguing for the representational complexity of transformers with and without CoT ([4, 8, 9]). The literature says that endowing LLMs with CoT allows them to represent new orders of complexity and (with assumptions, such as infinite memory) may even make them Turing complete. However, in practice, today's LLMs can only reliably solve problems of limited complexity ([10]).

1.2 Outline

In this paper, we investigate the limitations of current LLMs in handling complex reasoning tasks and propose a novel framework, Meta Chain-of-Thought (Meta-CoT), to address these shortcomings. We argue that traditional Chain-of-Thought (CoT) methods, while effective for simpler problems, fail to capture the true data-generating process of complex reasoning which often involves a non-linear, iterative, and latent process of exploration and verification. Meta-CoT extends CoT by explicitly modeling this latent "thinking" process, which we hypothesize is essential for solving problems that require advanced reasoning capabilities.

We draw inspiration from Cognitive Science's dual-process theory, framing Meta-CoT as a form of System 2 reasoning. We establish the theoretical foundations of Meta-CoT, demonstrating how it can be realized through systematic search processes, and how these processes can be internalized within a single auto-regressive model. We then present empirical evidence supporting our claims, including analyses on state-of-the-art models like OpenAI's o1 ([11]) and DeepSeek-R1 ([12]), which exhibit behaviors consistent with internalized (in-context) search. We further explore methods for training models on Meta-CoT through process supervision, and synthetic data generation via search algorithms like Monte Carlo Tree Search (MCTS) and A*.

Finally, we outline a concrete pipeline for achieving Meta-CoT in a single end-to-end system, incorporating instruction tuning with linearized search traces and reinforcement learning (RL) post-training. We discuss open research questions, including the scaling laws of reasoning and search, the role of verifiers, and the potential for discovering novel reasoning algorithms through meta-RL. We also present the "Big MATH" project, an effort to aggregate over 1, 000, 000 high-quality, verifiable math problems to facilitate further research in this area. Our work provides both theoretical insights and a practical road map to enable Meta-CoT in LLMs, paving the way for more powerful and human-like reasoning in artificial intelligence.

2. Meta Chain-Of-Thought

Section Summary: This section introduces the meta chain-of-thought process as a way to better capture how humans solve complex reasoning problems, like advanced math puzzles, by accounting for hidden mental steps that aren't laid out in a simple step-by-step sequence. It explains that while standard chain-of-thought prompting works well for straightforward tasks, such as basic algebra, it falls short for tougher problems because their solutions aren't generated linearly from left to right; instead, they involve interconnected ideas that require understanding the whole approach upfront. Using a notoriously difficult math olympiad problem about rotating lines and points as an example, the section argues that real reasoning often relies on latent thoughts and exploration, which current language models struggle to replicate through autoregressive training.

In this section, we first formulate the meta chain-of-thought process and discuss how it can describe the problem solving process for complex reasoning problems. Then, we describe and demonstrate why classical chain-of-thought fails under certain circumstances.

2.1 Deriving The Meta-CoT Process

A question to ask ourselves is: Should language models with Chain-Of-Thought prompting really be able to express any function, and thus solve arbitrarily complex problems, which was the theoretical point of the previous section? We will stick with the mathematical reasoning domain for the purpose of the discussion. Today, the capabilities of frontier models are enough for a large class of mathematical reasoning problems. Current state-of-the art systems such as GPT-4o and Claude largely solve the Hendrycks MATH Levels 1-3 Benchmark ([13]), however, they still struggle with advanced problems such as those in Levels 4 and 5, HARP ([14]) and Omni-MATH ([15]) (as well as other advanced reasoning tasks). We put forward the following theory to explain these empirical observations.

Reasoning data present in pre-training corpuses does not represent the true data generation process, especially for complex problems, which is a product of extensive latent reasoning. Moreover, this process generally does *not* occur in a left-to-right, auto-regressive, fashion.

In more details, the CoT reasoning data prevalent in the pre-training corpus and post-training instruction tuning follows the true data-generating process for solutions of simple problems such as algebraic computations, counting, basic geometry etc.. That is, for example, the textbook solutions for solving high-school algebra present the general process of generating those solutions. If we follow some set of steps or approaches present in existing textbooks, we can eventually arrive at the solution. Hence, these are learnable with a constant-depth transformers that can express the complexity of each individual step in the process. In contrast, complex reasoning problems do not follow that pattern. We may have a set of triples $(\mathbf{q}, \mathbf{S}, \mathbf{a})$ of questions $\mathbf{q}$, solution steps $\mathbf{S}=(\mathbf{s}_1, \ldots, \mathbf{s}_n)$ and (optionally) answers $\mathbf{a}$, but the true data generation process is not auto-regressive:

$ \mathbf{q}\to\mathbf{z}_1\to\ldots\to\mathbf{z}_K\to(\mathbf{s_1}, \ldots, \mathbf{s}_n, \mathbf{a}),\tag{1} $

where $\mathbf{z}_i$ are the latent "thoughts" left out of the solutions steps, which can be represented fully with left-to-right generation, while the dataset solution $\mathbf{S}=(\mathbf{s}_1, \ldots, \mathbf{s}_n)$ is generated jointly. Let us illustrate this with an example from the International Mathematics Olympiad 2011. This is the (in)famous "windmill" problem:

"Let $\mathcal{S}$ be a finite set of at least two points in the plane. Assume that no three points of $\mathcal{S}$ are collinear. A windmill is a process that starts with a line $\ell$ going through a single point $P \in \mathcal{S}$. The line rotates clockwise about the pivot $P$ until the first time that the line meets some other point belonging to $\mathcal{S}$. This point, $Q$, takes over as the new pivot, and the line now rotates clockwise about $Q$, until it next meets a point of $\mathcal{S}$. This process continues indefinitely. Can we choose a point $P$ in $\mathcal{S}$ and a line $\ell$ going through $P$ such that the resulting windmill uses each point of $\mathcal{S}$ as a pivot infinitely many times."

which has the following solution:

"Let $|S|=n$. Now consider an arbitrary point $P$ in $S$ and choose a line $l$ through $P$ which splits the points in the plane into roughly equal chunks. Next notice that as the line rotates it will sweep a full $2\pi$ angle against some fixed reference frame. Now take another random point $P'$ and similarly constructed stationary line $l'$. At some point in the windmill process we will have $l \parallel l'$. However notice that $l$ and $l'$ split the points into the same two sets and are parallel. Therefore we must have that $l \equiv l'$ and thus $l$ passes through $P'$. This of course holds for any $P' \in S$. Applying the same argument recursively yields the final proof that it is in fact possible to make such a construction for any set $S$ with these properties."

The solution above does not use any prior knowledge and fits within a few sentences. Yet, this problem was considered among the most difficult in the competition (there were only a handful of solutions among the 600+ participants). What makes the problem difficult is the highly non-linear structure of the solution. Most participants would follow the standard "generative" solution process and investigate approaches based on the convex hull construction or use tools from Hamiltonian graph theory, none of these leading to a solution. Instead, participants who solved the problem followed an experimental approach with a lot of geometric exploration and inductive reasoning. Moreover, the solution itself is not linear. It's hard to see the utility of the proposed construction in the beginning without the analysis of the dynamics of $l$. Essentially, to start generating the solution requires that we already know the full approach. The underlying generative process of the solution is not auto-regressive from left-to-right.

We can formalize this argument through the interpretation of reasoning as a latent variable process ([16]). In particular, classical CoT can be viewed as

$ p_{\text{data}}(\mathbf{a}|\mathbf{q}) \propto \int \underbrace{p_{\text{data}}(\mathbf{a}|\mathbf{s}1, \ldots, \mathbf{s}n, \mathbf{q})}{\text{Answer Generation}} \underbrace{\prod{t=1}^n p_{\text{data}}(\mathbf{s}t | \mathbf{s}{< t}, \mathbf{q})}_{\text{CoT}} d\mathbf{S}, $

i.e., the probability of the final answer being produced by a marginalization over latent reasoning chains. We claim that for complex problems, the true solution generating process should be viewed as

$ p_{\text{data}}(\mathbf{a}, \mathbf{s}_1, \ldots, \mathbf{s}n|\mathbf{q}) \propto \int \underbrace{p{\text{data}}(\mathbf{a}, \mathbf{s}1, \ldots, \mathbf{s}n|\mathbf{z}1, \ldots, \mathbf{z}k, \mathbf{q})}{\text{Joint Answer+CoT}} \underbrace{\prod{t=1}^K p{\text{data}}(\mathbf{z}t | \mathbf{z}{< t}, \mathbf{q})}{\text{Meta-CoT}} d\mathbf{Z}, $

i.e., the joint probability distribution of the solution $(\mathbf{a}, \mathbf{s}_1, \ldots, \mathbf{s}_n)$ is conditioned on the latent generative process. Notice that this argument is a meta-generalization of the prior CoT argument, hence why we will refer to the process $\mathbf{q}\to\mathbf{z}_1\to\ldots\to\mathbf{z}_K$ as Meta-CoT.

2.2 Why Does (Classical) CoT Fail?

:::: {cols="1"}

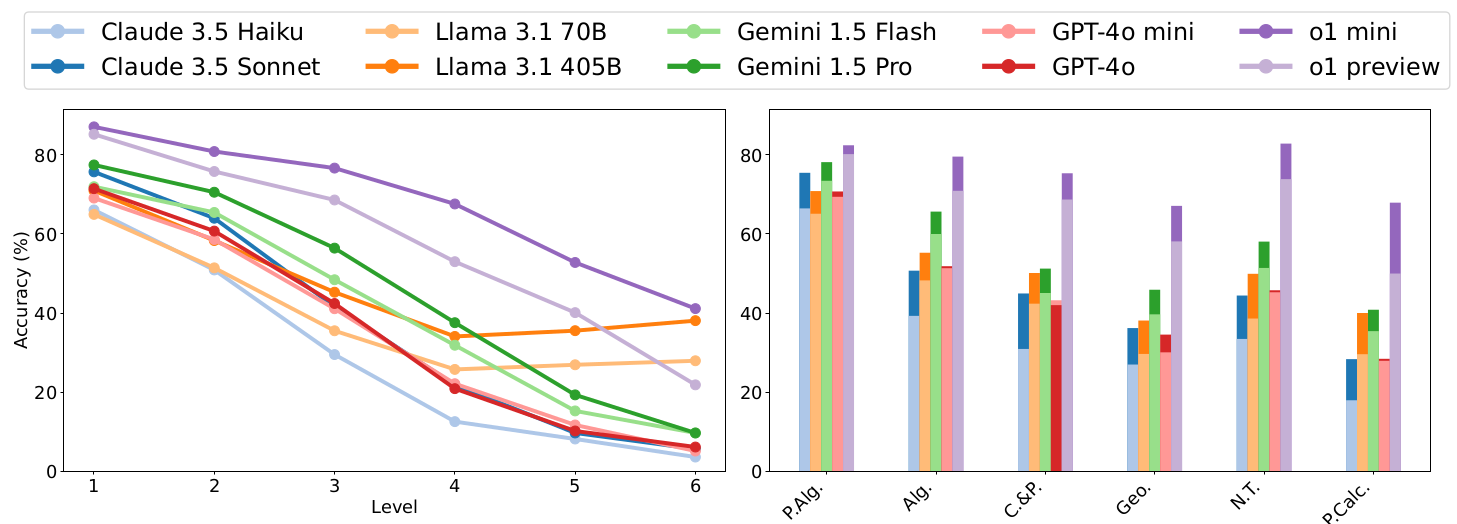

Figure 1: Top: Performance of current frontier models by size on the HARP mathematics benchmark ([14]) by difficulty level and topic. The OpenAI O1 series significantly out-performs prior generation models across the board. Source: Figure 3 in ([14]). Bottom Average number of tokens generated by each model grouped by difficulty level, as well as average number of tokens in human-generated solutions (using GPT4 tokenizer). Source: Figure 4 in ([14]). ::::

Based on the previous discussion, a natural question follows: Why do LLMs fail at these advanced reasoning tasks? Above we proposed that the pre-training and instruction-tuning corpora consist of data of the type $(\mathbf{q}, \mathbf{s}1, \ldots, \mathbf{s_n, \mathbf{a}})$, which do not contain the true data generating process as shown in Equation 1. Indeed, the solution to the windmill problem above is widely available on the internet, but there is little to no discussion about the ways in which commonly used convex hull or planar graph arguments fail. This is true in general - textbooks contain advanced proofs but not the full thought process of deriving these proofs. We can then apply the same general meta-argument of why CoT is necessary to the Meta-CoT case: simply because the conditional solution-level distribution $p{\text{data}}(\mathbf{a}, \mathbf{s}_1, \ldots, \mathbf{s}n|\mathbf{q})$ (without the intermediate Meta-CoT) on hard reasoning questions can have arbitrarily high complexity in the same way that $p{\text{data}}(\mathbf{a}|\mathbf{q})$ can have arbitrarily high complexity in the standard CoT setting. We will examine some empirical evidence for our stance in the following sections.

We will argue in the following chapters that the OpenAI o1 model series performs full Meta-CoT reasoning in an auto-regressive fashion at inference time. A useful analysis is presented in a new mathematics benchmark with challenging high-school Olympiad-level problems ([14]). Figure 1 sourced from that work shows the relevant results. First, we see that the o1 family of models significantly outperforms "standard" reasoning models across the board. However, the gap between o1 and other models' performance increases on higher difficulty problems (with the interesting exception of the LLaMa 3.1 models), that is, problems which have higher solution complexity.

Furthermore, the bottom half of Figure 1 shows the average number of tokens generated grouped by problem difficulty level. First, we see that outside of the o1 series of models, LLMs generate solutions of comparable lengths to humans. While this may initially appear quite intriguing, suggesting that models are learning to approximate or replicate human reasoning, the simple explanation is that models are learning solutions to match the training data - i.e. $p_{\text{data}}(\mathbf{a}, \mathbf{s}_1, \ldots, \mathbf{s}_n|\mathbf{q})$. Much more intriguingly, the o1 series of models exhibits significantly different token behavior. We see that:

- On level 1 problems the o1 series generates a comparable number of tokens to human-written solutions. These are the types of problems where the training solutions likely match the true data generation process and each individual logical step can be internalized in a constant-depth transformer.

- At higher difficulty, the o1 series of models generates significantly more tokens per problem and also widens the performance gap over the classical reasoning models. In fact the gap between the inference compute used by the o1 model and prior series of models seems to scale with the complexity of the problems. We hypothesize that in those more challenging problems the solutions do NOT in fact represent the true data generative process, which is instead better approximated by the more extensive Meta-CoT generated by the o1 family of models.

Of course, in practice the distinction between these two is not so clear cut, and in fact the constant-depth transformer can likely internalize part of the Meta-CoT generative process as evidenced by the gradation of (Meta-)CoT lengths from Levels 2-6 in Figure 1. In the next chapter we will discuss in greater detail what the Meta-CoT process actually represents.

3. Towards Deliberate Reasoning With Language Models - Search

Section Summary: The section explains why language models struggle with advanced reasoning tasks, arguing that their training data lacks representation of the true problem-solving process, which involves a significant gap between generating solutions and verifying them, often requiring extensive search efforts not captured in typical text corpora. Through experiments fine-tuning a Llama 3.1 model on math problems, it shows that allowing more computation during inference—such as generating multiple solutions and selecting the best via voting or an oracle—dramatically boosts performance, even with limited training, highlighting a persistent divide between generation and verification capabilities. This suggests that scaling up inference-time search could help models tackle complex problems more effectively, though challenges remain in verifying solutions without perfect feedback.

In the previous section we introduced the Meta-CoT process and argued that LLMs fail on advanced reasoning tasks because the training data does not adequately represent the true data generation process, i.e. text corpora do not include (or only include limited amounts of) Meta-CoT data. So the remaining question is: what does the true data generating process look like?

- First, we argue that for many advanced reasoning or goal-oriented problems there exist meaningful gaps between the complexity of generation and verification. This is of course one of the fundamental open problems of theoretical computer science and any attempt to prove this is significantly beyond the scope of the current writing, but we will review what we believe to be compelling empirical evidence from the literature.

- Second, assuming a non-trivial generator-verifier gap, we argue that the solutions to challenging problems present in text corpora are the outcomes of an extended search process, which itself is not represented in the data.

We will dive deeper into these two points in the remainder of this section.

3.1 Inference-Time Compute: Search

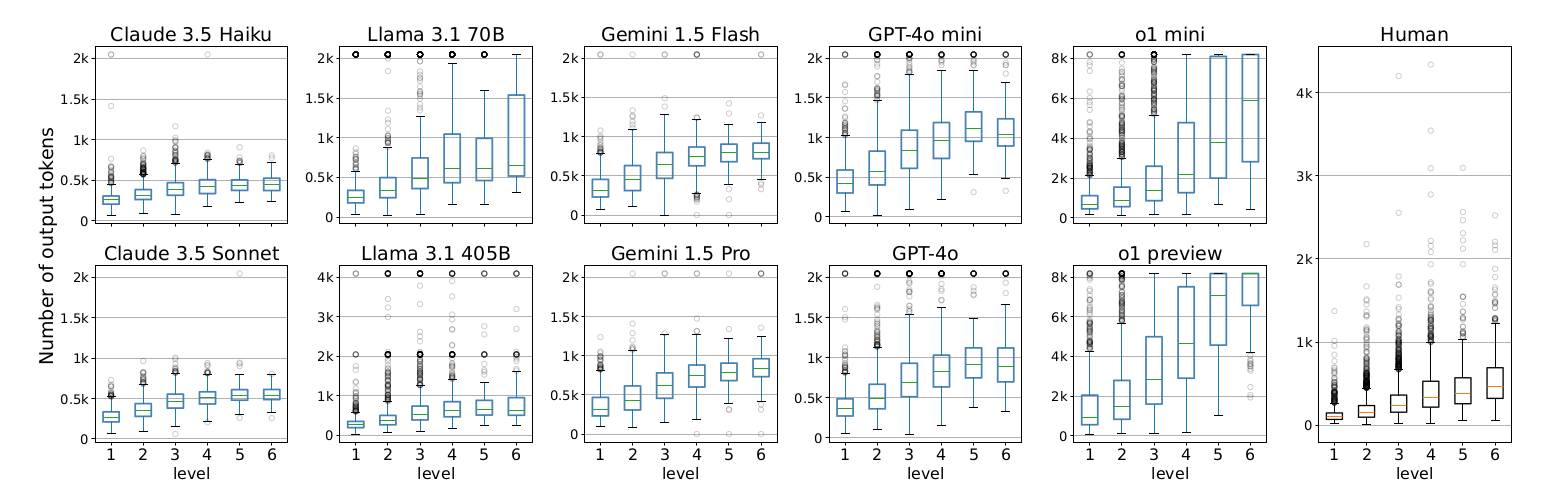

The first point above (generation-verification gap) has recently become a popular research and discussion direction under the framework of "deploying inference-time compute" and we explore this in our first experiment. We start with a LLaMa 3.1 8B base model ([17]) and carry out extensive supervised fine-tuning on the Numina MATH dataset ([18]). Refer to Figure 5 for results and Section 8.1 for dataset details. For each intermediate checkpoint we evaluate performance on the Hendrycks MATH ([13]) 500 problems evaluation dataset ([19]). Based on the results, we make a few observations here:

- We evaluate pass@ $k$ (i.e. using an oracle verifier) on intermediate checkpoints and see a significant jump in performance for increasing $k$. While zero-shot performance with greedy decoding improves from about 20% to 40% (see the base filter on the left side of Figure 5), even the first model checkpoint outperforms these results at pass@ $4$ (right side of Figure 5). Moreover, the pass@64 for the final checkpoint of an 8B model achieves accuracy close to 85%, outperforming the zero-shot performance of many current frontier models.

- We also evaluate performance under majority voting with $k=8$ and $k=64$. There is continuous improvement for both increased training and samples, with maj@64 outperforming the greedy model performance with only 15% of the training compute without access to a ground-truth verifier.

These results demonstrate that even as we directly optimize for answer generation ability by finetuning on increasing amounts of SFT data, there remains a consistent verifier-generator gap, as evidenced by the improved performance in botht eh pass@k and majority voting settings. Recent literature has observed similar results on post-training sampling ([19, 20, 10]). However, most of these studies do not systematically evaluate the effects of varying amounts of training data, compute, and model size which we believe is a fruitful direction for additional empirical work. These questions are important as the observed gains from additional inference might disappear at larger scales and training - i.e. the model may be able to fully internalize the reasoning process. This definitely seems to be the case for advanced models and simpler benchmarks like GSM8k ([21]). While we observe the opposite result in our experiments, we admit that our results are the outcomes of preliminary study and additional work is required, but we will argue from a theoretical point in Section 6 that a persistent search gap remains in domains with high enough epistemic uncertainty. Besides this point, the question remains whether the improvement from increased inference can be effectively achieved without oracle verifiers or environment feedback. In theory, it is possible to generate correct solutions under an increased inference budget, but we may not be able to verify them effectively, as verification complexity may be just as high as, or even higher than, generation complexity. We will address this issue next.

3.2 Inference-Time Compute: Verification

![**Figure 6:** **Scaling trends for verifier models** on algorithmic reasoning, grade-school math (GSM8k), and transfer from GSM8k to MATH. The performance of all verifiers improves in the best-of-N setting, as N increases. Figure sourced from ([22]).](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/verifierscaling.png)

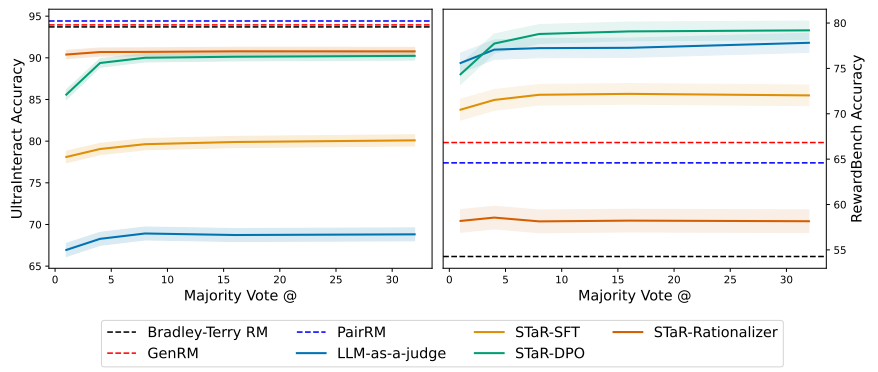

Several works focus on training verifier models, which explicitly evaluate the correctness of reasoning steps and solutions. Verifiers can be trained either using explicit binary classification ([21, 19, 10, 23, 24]) or modeling evaluation directly in natural language, using the LLM-as-a-judge prior ([22, 25]). The unifying formulation of these approaches is the model $v_{\theta}$ which evaluates a reasoning process $v_{\theta}(\mathbf{q}, \mathbf{S})\to[0, 1]$. Under this framework, $K$ candidate solutions $(\mathbf{S}^1, \ldots, \mathbf{S}^K)$ can be generated from a fixed generator $\pi_{\theta}(\cdot|\mathbf{q})$ and ranked based on their evaluation score.

$ \mathbf{S}^*=\arg\max {v_{\theta}(\mathbf{q}, \mathbf{S}^1), \ldots, v_{\theta}(\mathbf{q}, \mathbf{S}^K)} $

For empirical results, we refer the reader to Figure 6 sourced from ([22]) which evaluates a number of verifier models $v_{\theta}$. Regardless of the efficiency of the verifier, there is a significant improvement in performance with additional online sampling. Moreover using explicitly trained verifier models outperforms naive inference-compute scaling strategies such as self-consistency or majority voting.

A question remains regarding the effect of using a fixed generation model (policy): Could this model be under-trained, and if it were further trained, could its zero-shot performance improve to the point where additional online search no longer provides meaningful improvement? We will address this in Section 3.4.

3.3 From Best-of-N To General Search

![**Figure 7:** Reasoning via Planning (RAP) demonstrates the search procedure described here. If we have access to a state evaluator, we can truncate branches with low values and backtrack to promising nodes, without resampling the same steps again. Source: Figure 2 in ([26]).](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/RAP.png)

So far, we empirically explored best-of-N approaches, generating multiple full solutions independently and selecting the most promising one based on scores. However, this approach is inefficient because it requires exploring full solution paths, even if a mistake occurs early on, and may repeatedly sample the same correct steps. Instead, we can model reasoning as a Markov Decision Process (MDP), defined by the tuple $ \mathcal{M} = (\mathcal{S}, \mathcal{A}, P, R, \gamma) $, where:

- $ \mathcal{S} $ : the set of states, where each state $ \mathbf{S} \in \mathcal{S} $, consists of the prompt and generations so far, i.e. $\mathbf{S}_t = (\mathbf{q}, \mathbf{s}_1, \ldots, \mathbf{s}_t)$.

- $ \mathcal{A} $ : the set of actions, where each action $ \mathbf{a} \in \mathcal{A} $ will be represented as the next reasoning step $\mathbf{a}{t+1}=\mathbf{s}{t+1}$.

- $ P(\mathbf{s}' \mid \mathbf{s}, \mathbf{a}) $ : the transition probability function, representing the probability of transitioning to state $ \mathbf{s}' $ when taking action $ \mathbf{a} $ in state $ \mathbf{s} $ . For simplicity, we will mostly consider the deterministic transition function $P(\cdot|\mathbf{s}_{t+1}, (\mathbf{q}, \mathbf{s}_1, \ldots, \mathbf{s}_t))\to (\mathbf{q}, \mathbf{s}_1, \ldots, \mathbf{s}t, \mathbf{s}{t+1})$ that appends the next reasoning step to the context. In general, the environment dynamics can be more complex. For example, models with tool access have to call the actual tool and receive the environment feedback in context or even modify their environment such as the cases of SWE and Web agents.

- $ R(\mathbf{s}, \mathbf{a}) $ : the reward function, which provides a scalar reward for taking action $ \mathbf{a} $ in state $ \mathbf{s} $ . We will assume zero intermediate rewards and final reward of 1 for a correct solution and zero otherwise, although this is not strictly necessary in the presence of a good process reward model ([27]).

- $ \gamma \in [0, 1] $ : the discount factor, balancing the trade-off between further computation and rewards.

We refer to the LLM generating the reasoning steps as the policy $\mathbf{s}{t+1}\sim\pi{\theta}(\cdot|\mathbf{S}_t)$. In addition we refer to a solution starting from $\mathbf{s}_0=\mathbf{q}$ as an episode or a trajectory. We will also use the notation $\mathbf{z}_t$ to represent individual reasoning steps that are part of the Meta-CoT and correspondingly denote $\mathbf{Z}_t=(\mathbf{q}, \mathbf{z}_1, \ldots, \mathbf{z}_t)$.

In the prior section we considered generating and ranking full solutions, which may be inefficient. We can extend the concept of a solution verifier from the prior section, to estimating the probability that a particular intermediate state will lead to a solution: $v_{\theta}(\mathbf{q}, \mathbf{S}_t) \to [0, 1]$. These models have become more widely known as Process Reward Models (PRMs) ([19]). If we have access to such a model, we can improve the efficiency of the search process with the following steps:

- Terminate a solution attempt that is not making progress, or is incorrect prior to reaching the final answer.

- Reset the agent to any intermediate, previously visited, state that has a high likelihood of success.

Notice that with these two operations, and the general structure of language, we can implement any tree search procedure. This is the premise of several approaches ([28, 26, 29]) with the RAP method ([26]) illustrated in Figure 7.

![**Figure 8:** ToT efficiency on the game of 24 shown as accuracy (y-axis) vs. # nodes visited (x-axis). Source: Figure 3 in [28].](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/ToTScaling.png)

These approaches use differing search strategies (DFS/BFS vs. MCTS) and process guidance evaluation (generative self-evaluation vs. Monte-Carlo rollouts), but they all share the same core idea: formulate the reasoning problem as tree search guided by an intermediate heuristic function. As noted above, in theory, tree search does not induce a fundamental capability shift over parallel sampling, however, it may induce significant efficiency gains as demonstrated by [28]. In particular, Figure 8 (sourced from [28]) shows nearly 4 times increased efficiency, in terms of inference budget, on a toy reasoning problem (Game of 24) when using a tree-structured search approach compared to parallel sampling. While these earlier works focus on zero-shot (or close to zero-shot) performance on simple reasoning tasks, it is important to note that tree-search methods have been successfully scaled and deployed to a number of realistic agentic applications ([30, 31, 20, 32]).

3.4 Is Search (Inference Time Compute) A Fundamental Capability Shift?

:::: {cols="2"}

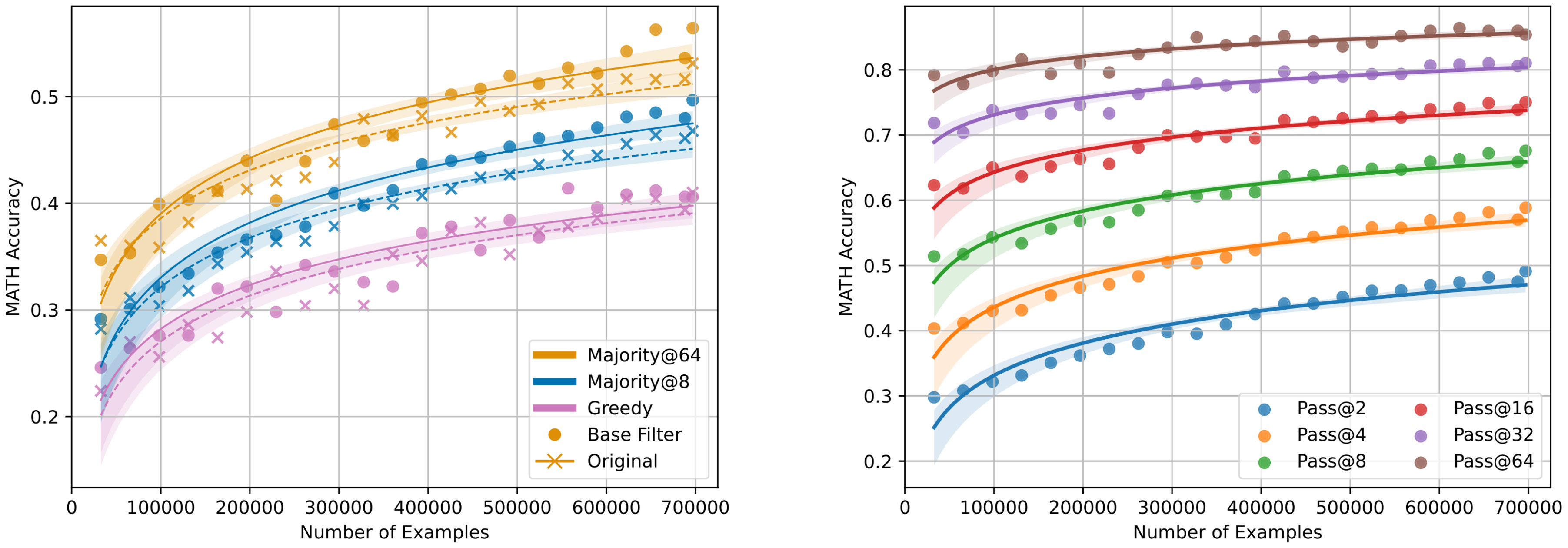

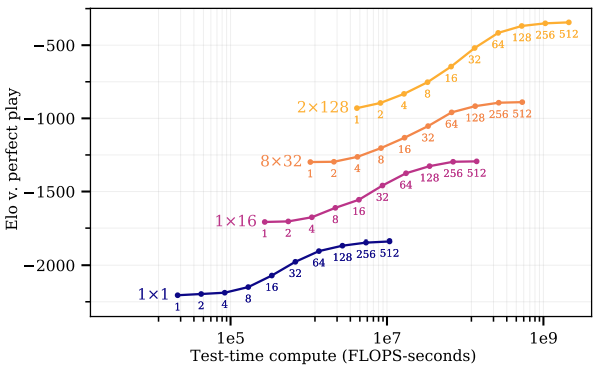

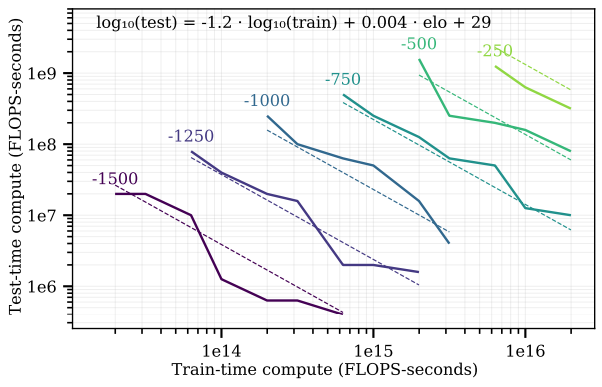

Figure 9: Scaling trends for MCTS at training and inference-time on board games. Left: Elo scores of models trained with different architectures (depth $\times$ width) where each point represents the Elo score of that model evaluated with the labeled tree size (between 1 to 512 nodes). The curves demonstrate that the performance of each model snapshot follows a sigmoid pattern with respect to the test-time compute budget. Source: Figure 8 in ([33]). Right: The trade-off between train-time and test-time compute, with progressively improving Elo (from bottom-left to top-right). Source: Figure 9 in ([33]). ::::

As pointed out earlier, the question remains whether inference-time search is a fundamental new capability or whether it is accessible with additional training. Results from classical RLHF tuning ([34]) suggest that this is a learnable capability, where zero-shot performance of post-trained models matches or outperforms the best-of-N paradigm.

We stipulate that performance on complex reasoning tasks is governed by a scaling law, which involves model size, training data (compute) and inference time compute.

This is indeed consistent with the theoretical results of [9] and the intuition presented in Section 2. Larger models are more capable of internalizing the Meta-CoT process in their activations, and are also capable of using longer inference-time Meta-CoT to approximate solutions with significantly higher computational complexity. Empirically, we have limited (but promising) evidence towards this hypothesis. A major prior work to study these questions is [33] which carries

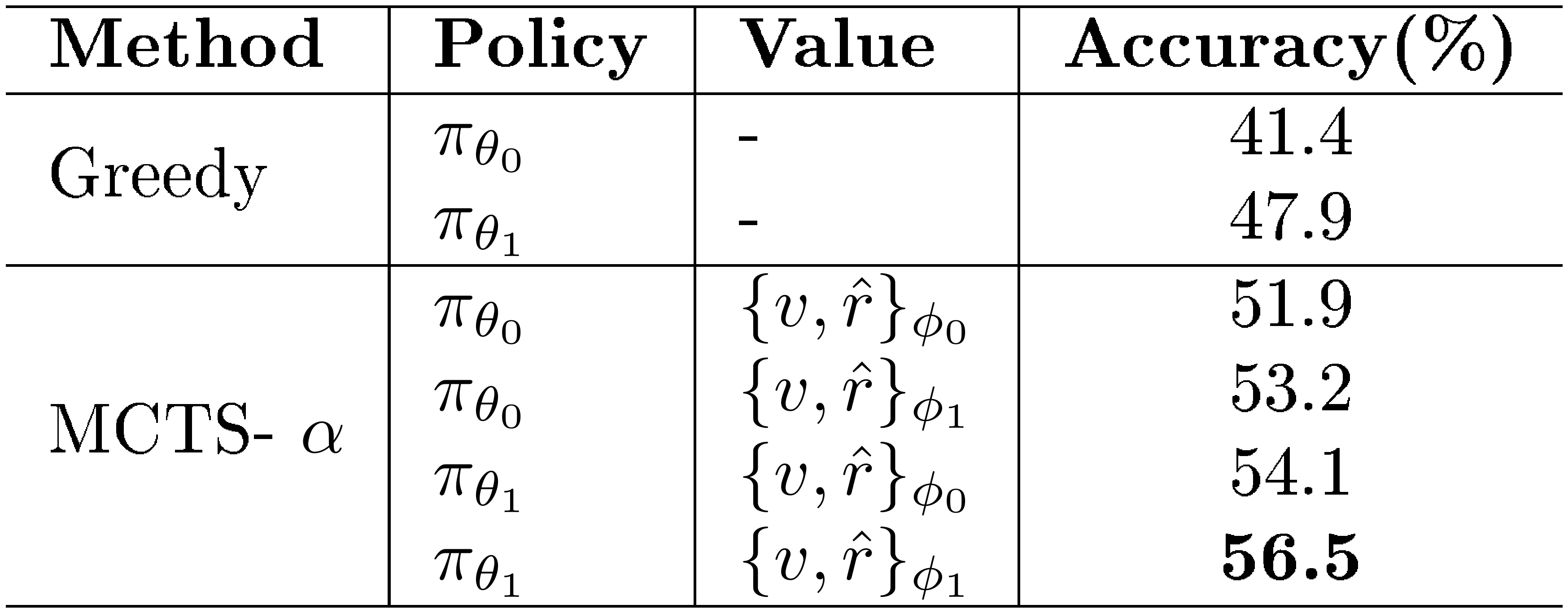

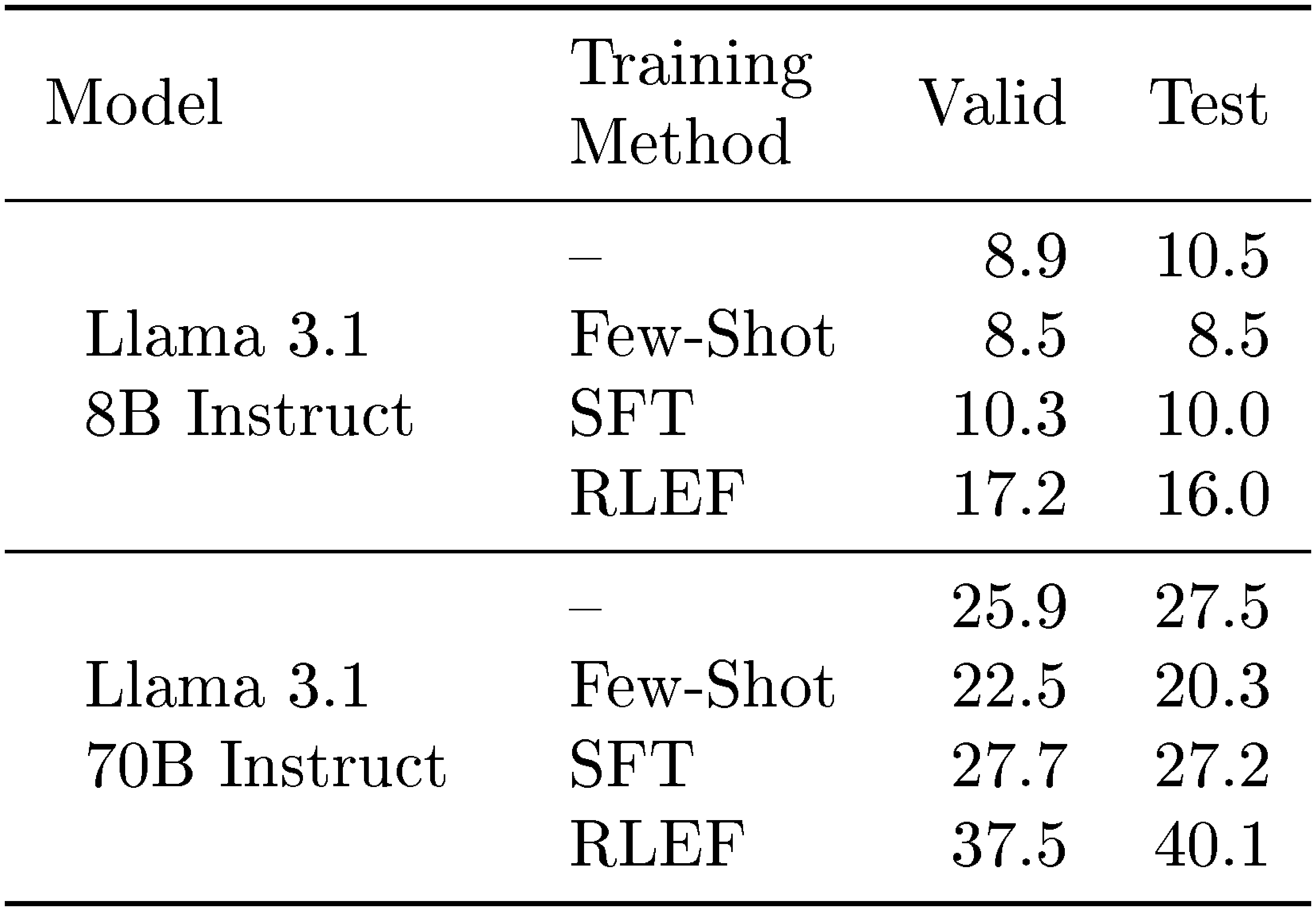

::: {caption="Table 1: Iterative update results on GSM8k. $\theta_0, \phi_0$ are the old parameters while $\theta_1, \phi_1$ are the new ones. TS-LLM can boost performance by training LLM policy, value, or both. Source: Table 4 in ([35])."}

:::

out studies using the AlphaZero algorithm ([36]) on board games. This approach fits our desiderata very well as the underlying MCTS algorithm jointly scales the policy and value (verifier) models' training in conjunction with search. Moreover, this family of board games have a clear generator-verifier gap as generating optimal strategies at intermediate steps can be quite computationally complex, while verifying a winning condition is trivial. The major empirical results on scaling are shown in Figure 9. On the right side we see that performance increases both with increased training compute and model size, as outlined earlier. Interestingly, on the left we see the performance of using different quantities of compute (i.e., search with a value function) during inference. There is also a clear scaling trend, showing improved performance with additional online search at each intermediate checkpoint of training. In fact, the results in this domain indicate there is a clear log-log scaling trade-off between train-time and test-time compute deployment. Currently, we have limited evidence of similar scaling laws in LLMs because such a training pipeline requires significant resources. One major work towards that goal is [35] which carries out two iterations of MCTS fine-tuning using a LLaMa 7B on the GSM8k dataset ([21]). They show improved performance in zero-shot evaluations of the policy, as well as significant gains from using additional inference-time search, at both iterations 1 and 2 (full results are shown in Table 1). However, their work does not ablate the model size, data scaling, or inference-time search scaling, which remain under-explored in the literature for LLM reasoning.

4. Towards Meta-CoT Reasoning

Section Summary: This section explores how to build advanced reasoning, called Meta-CoT or System 2 thinking, directly into a single AI model by incorporating search mechanisms, driven by the need for efficiency in handling repeated or similar ideas and the potential to unlock super-intelligent problem-solving through novel strategies. It argues that internalizing this reasoning avoids redundant steps common in current models and could enable breakthroughs in tackling previously unsolvable problems. The discussion introduces bootstrapping techniques like the Self-Taught Reasoner (STaR), which generates and refines synthetic reasoning steps iteratively without needing perfect examples, and extends it to Meta-STaR for training models on search-based traces to simulate deliberate thinking in sequence.

In prior sections we: introduced the concept of Meta-CoT and argued that it is necessary for advanced reasoning, discussed the generator-verifier gap as a fundamental limitation, argued for search as a fundamental building block of the Meta-CoT, and discussed the utility of approaches integrating generator, verifier, and search components. However, the question remains on how to integrate these into a model to perform Meta-CoT or "System $2''$ reasoning. The first question we need to answer is: why do we actually need to internalize deliberate reasoning inside a single model? We propose two main reasons:

- Efficiency: By incorporating search within the context of an auto-regressive model, exploration can be done efficiently since the model has access to all previously visited nodes, in context. Unique to the case of reasoning in natural language, many branches may contain semantically similar content, unlike other domains (e.g., board games), motivating the need for improved efficiency. In fact, even advanced reasoning models carry out many repeated steps of semantically identical reasoning as we show in Figure 20 and Figure 21.

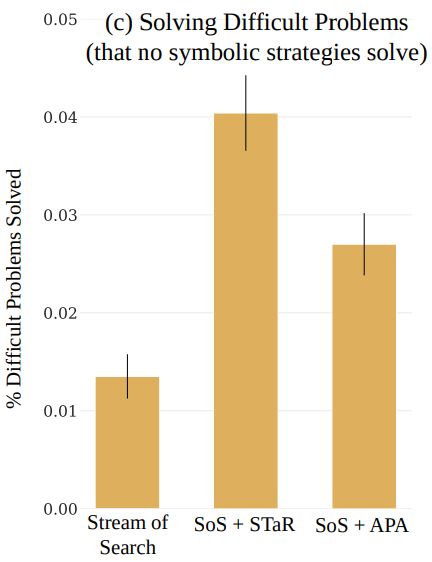

- Super-Intelligence: If an auto-regressive model can learn to implement search algorithms in-context, then additional RL training may enable the model to discover novel reasoning approaches. Essentially, we propose that training a model capable of internal System 2 reasoning (e.g. Meta-CoT) and search is an optimization over algorithms rather than specific outputs, possibly yielding novel modes of problem solving. This will potentially allow the model to solve classes of problems previously unsolvable under symbolic-bases tree-search approaches as we've outlined in Section 3.3 and Section 3.4.

In the remainder of this section, we explore how to train a model to internalize such a reasoning system.

4.1 Bootstrapping Meta-CoT

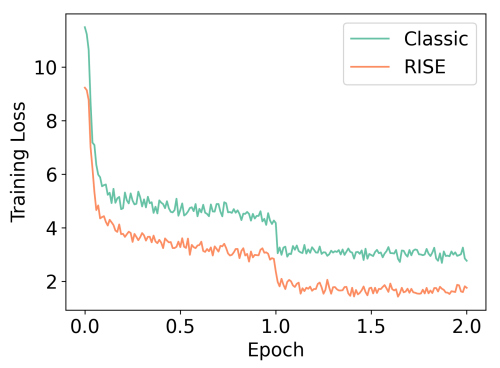

In this subsection, we overview the core idea behind the Self-Taught Reasoner (STaR) approach ([37, 38, 39]) to bootstrapping intermediate CoT steps and how to use a similar concept to generalize to meta-reasoning strategies.

4.1.1 Self-Taught Reasoner

The STaR method introduces an iterative bootstrapping approach designed to improve the reasoning capability of LLMs ([37]). STaR focuses on training models to generate and refine rationales, particularly for tasks requiring complex reasoning in a reinforcement learning-based manner. In this formulation we assume we have access to a dataset $\mathcal{D}={\mathbf{q}^{(i)}, \mathbf{a}^{(i)}}{i=1}^N$ of questions $\mathbf{q}$ that require reasoning along with corresponding answers $\mathbf{a}$. Notice that we do not require access to ground-truth rationales for these problems. We begin by prompting a model $\hat{\mathbf{a}}^{(i)}, \hat{\mathbf{S}}^{(i)}\sim\pi(\mathbf{a}, \mathbf{S}|\mathbf{q}^{(i)})$ to provide CoT rationale $\hat{\mathbf{S}}^{(i)}=\mathbf{s}1^{(i)}, \ldots, \mathbf{s}{N_i}^{(i)}$ and final answer $\hat{\mathbf{a}}^{(i)}$. We then filter the generated data, keeping only rationales that lead to a correct final answer (i.e., $\hat{\mathbf{a}}^{(i)}=\mathbf{a}^{(i)}$) to create a dataset of questions, (bootstrapped) rationales and answers $\mathcal{D}{\text{STaR}}={\mathbf{q}^{(i)}, \hat{\mathbf{S}}^{(i)}, \mathbf{a}^{(i)}}{i=1}^N$. $\mathcal{D}{\text{STaR}}$ is then used to train a model with the standard supervised fine-tuning objective:

$ \mathcal{L}{\mathrm{STaR}}(\pi{\phi}) = -\mathbb{E}{(\mathbf{q}, \hat{\mathbf{S}}, \mathbf{a}) \sim \mathcal{D}{\text{STaR}}} \left[-\log \pi_{\phi}(\mathbf{a}, \hat{\mathbf{S}}|\mathbf{q}) \right].\tag{2} $

The above procedure is repeated over several iterations. The core idea behind STaR is to generate a training dataset of synthetic rationales through sampling and verification. We will extend that idea to the the concept of Meta-CoT below.

4.1.2 Meta-STaR

We can generalize the above idea to Meta-CoT in a straightforward way. Consider a base policy $\pi_{\theta}$ combined with some general search procedure over intermediate steps. Given a question $\mathbf{q}$ we perform the search procedure repeatedly to generate search traces $\hat{\mathbf{z}}_1, \ldots, \hat{\mathbf{z}}_K$ until we find a final solution $(\mathbf{s}1, \ldots, \mathbf{s}n)$. If we can verify the final produced solution $v(\mathbf{S})\to{0, 1}$, for example by using a formalization and verification approach (as in AlphaProof^3) or some other outcome verification, we can then apply a similar approach to STaR. For example, we can construct a dataset $\mathcal{D}{\text{STaR}}={\mathbf{q}^{(i)}, \hat{\mathbf{Z}}^{(i)}, \hat{\mathbf{S}}^{(i)}}{i=1}^N$ and use a similar training objective as before:

$ \mathcal{L}{\mathrm{Meta-STaR}}(\pi{\phi}) = -\mathbb{E}{(\mathbf{q}, \hat{\mathbf{Z}}, \hat{\mathbf{S}}) \sim \mathcal{D}{\text{STaR}}} \left[-\log \pi_{\phi}(\hat{\mathbf{S}}, \hat{\mathbf{Z}}|\mathbf{q}) \right].\tag{3} $

Essentially, we can use a base policy and search procedure to generate synthetic search data and then train the model to implement these in-context through the Meta-CoT concept. We are effectively proposing to linearize the search approaches described in Section 3 and teach an auto-regressive model to run them sequentially. So far we have deliberately been vague about how these search procedures and datasets look. We will now provide examples and proof of concept from the literature on practical approaches to this problem as well as synthetic examples of realistic training data.

4.2 Empirical Examples Of Internalizing Search

When we formulate search in a sequential fashion we can explicitly parameterize each component in language, or choose leave it implicit ([40]). Note that models trained with standard next token prediction still need to implicitly internalize all of these components anyway in order to accurately model the search sequence, even if they are not explicitly verbalized. However, allowing the model to vocalize it's certainty or estimated progress could allow for additional modeling capacity or be useful for interpretability purposes. We will present some examples of auto-regressive search procedures from the literature in the following section.

4.2.1 Small-Scale Empirical Results on Internalizing Search

![**Figure 13:** **A$^*$ planning algorithm outline** for a simple maze navigation task, along with a state and action tokenization scheme. The search representation explicitly models nodes and queue state, the search procedure and the cost and heuristic evaluation. Source: Figure 1 in ([41]).](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/searcformer.png)

![**Figure 14:** **Model performance vs. training compute** when using the A$^*$ planning algorithm (Search Augmented) vs. no search (Solution Only). We see that the search augmented models perform much better across all training scales (charts a and b). In particular performance is consistent with the search formulation of Section 3.4. Figure c) shows performance in terms of task complexity as maze size increases. Results are consistent with the Meta-CoT complexity argument presented in Section 2 and results on the HARP benchmark in Figure 1. Source: Figure 2 in ([41]).](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/searchformer_results.png)

Two particular prior works that explore the idea of in-context search are [42] and [41] which focus on mazes and other classical RL environments. The formulation from [41] is shown in Figure 13, which illustrates linearizing A* search. In our framework the "Trace" corresponds to the Meta-CoT $\mathbf{Z}$, and the "Plan" is the CoT output $\mathbf{S}$. In this setting the search procedure is stated explicitly as it shows node states, actions, costs and heuristic values. In this "stream" format we can then use standard auto-regressive language models with a next token-prediction objective to train a model to internalize the search process. Evaluation results are shown in Figure 14 sourced from the same paper. We observe empirical effects consistent with the scaling law hypothesis presented in Section 3.4; there is consistent improvement with additional training data and model size (train-time compute) across the board. A particularly interesting observation is the complexity scaling relationship in part (c) of the figure. At smaller mazes (lower complexity) the model directly producing the Plan (CoT) and performs comparably to smaller search (Meta-CoT) augmented models, however as maze size (complexity) increases we see a widening gap in performance between the search-augmented and zero-shot models. This is essentially identical to the results shown in Figure 1 on the challenging HARP benchmark ([14]) between the prior frontier models and the o1 series. These empirical observations are well aligned with the intuition we presented in Section 2. For small mazes (low complexity problems) models are capable of internalizing the reasoning process, but as problem complexity (maze size) increases this becomes more challenging and model performance falls off compared to models which explicitly carries out a search procedure. Unfortunately, [41] did not publish inference compute scaling laws, but given the algorithmic structure of the training data we can presume that inference-time tokens scale with the same complexity as the A* search algorithm, which can be exponential in the branching factor, while the plan length is linear in $n$. These results would also be consistent with the inference costs on advanced math reasoning tasks reported in Figure 1.

![**Figure 15:** Inference compute scaling relationships for the o1 model (Left, sourced from ([11]) on AIME, Stream-of-Search on the Game of 24 (Middle) and MAV-MCTS on Chess (Right, sourced from ([43])). These figures show performance of a single model under different token sampling budgets.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/complex_fig_e5d9cd41c8ad.png)

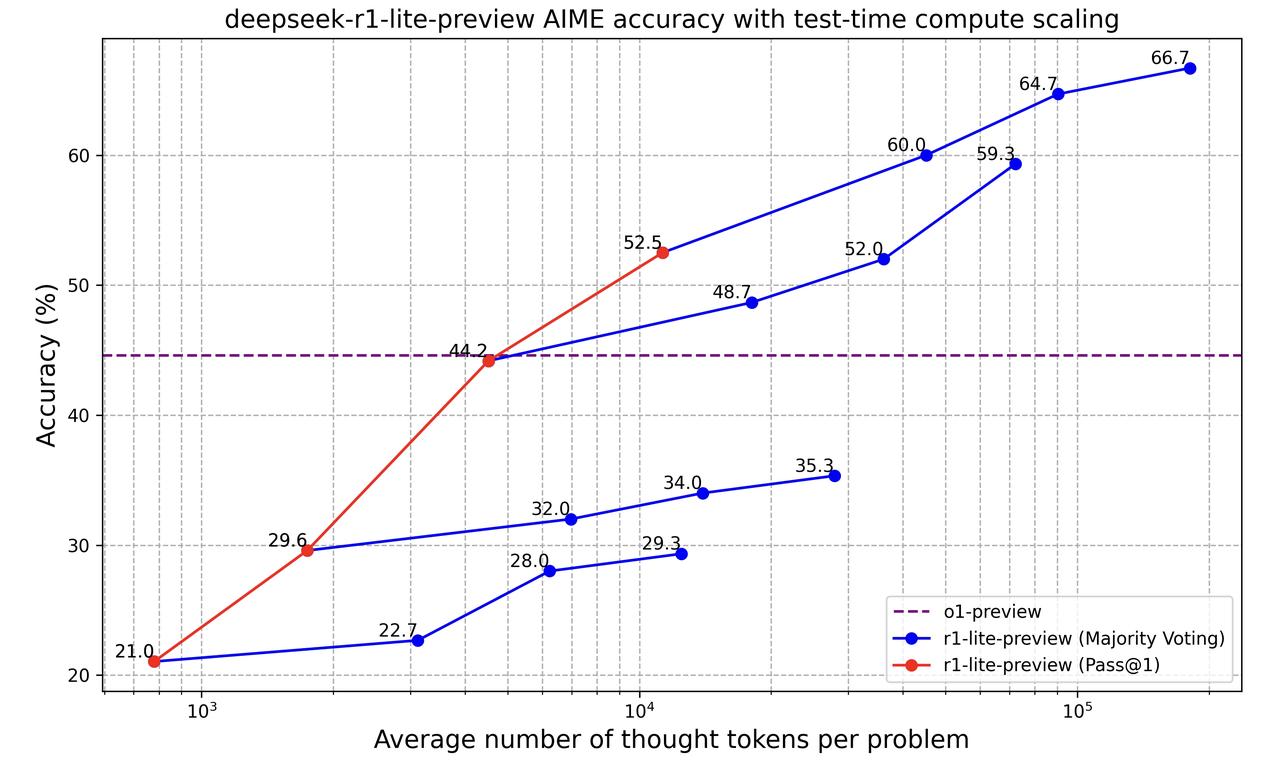

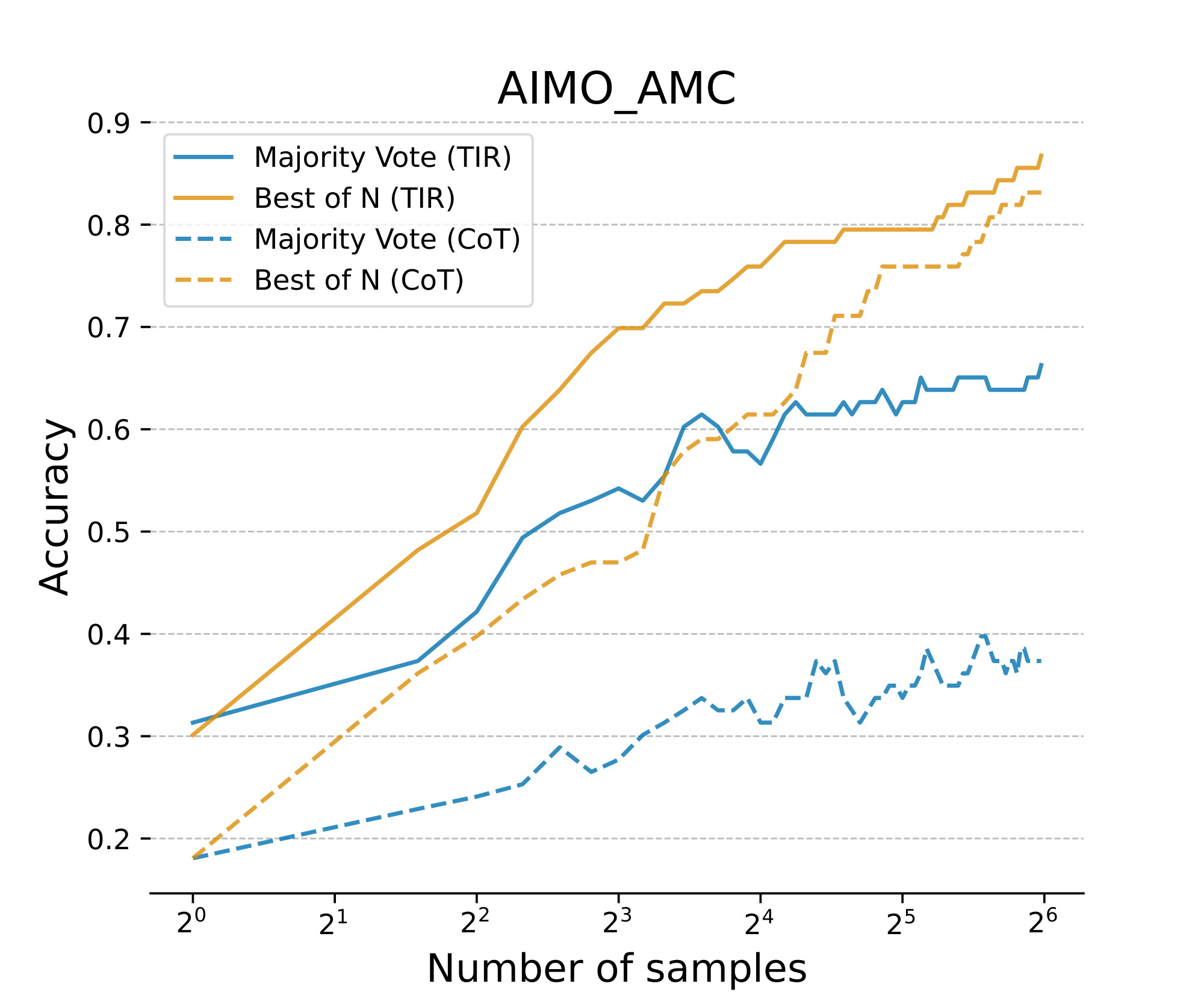

[40] extend the linearized search idea to a more realistic reasoning task - the Countdown game - which requires the model to predict a sequence of mathematical operations on a given set of numbers to match a target value. While [40] use a fixed 250M parameter transformer model and do not explore or discuss the role of model size, training data, and complexity in terms of scaling performance, we obtain additional results in terms of inference-time scaling, shown in Figure 15. Our findings demonstrate a consistent log-linear relationship between tokens spent and success rate. Similar results were also observed in recent work by [43], who train language models on linearized search traces obtained from MCTS on board game environments. Similar to the work of [40], they find consistent improvements in performance as the model is given additional search budget at test-time (Figure 15 right). Note that these models demonstrate an inference-time scaling law with the same functional form as the o1 model on difficult mathematics problems ([11]).

4.2.2 In-context Exploration For LLMs

While the prior section showed promise in teaching auto-regressive language models to internalize complex search strategies involving exploration and backtracking, it remains unclear whether these results can generalize to realistic language domains. In this section we will overview several recent works, which show promise in internalizing episode-level search. Both [44] and [10] evaluate results using open-source LLMs in the 7B and larger range on problems from the MATH dataset ([13]). They pose the problem as sequential sampling - i.e. given a problem $\mathbf{q}$, generating full solutions from the same model auto-regressively as

$ \mathbf{S}^j\sim \pi_{\theta}(\cdot|\mathbf{S}^{j-1}, \ldots, \mathbf{S}^1, \mathbf{q})\tag{4} $

where $\mathbf{S}^i$ are full solutions to the problem $\mathbf{q}$. Both works formulate the problem as self-correction, or revisions, during training. The approach generates training data by concatenating a number of incorrect solutions with the correct revision and training on a linearized sequence (although the exact training objective use a particular weighting grounded in RL ([45])). The general objective follows the form

$ \min_{\theta}\ \mathbb{E}{\mathbf{S}^{i}\sim\pi{\text{ref}}(\cdot|\mathbf{q}), \mathbf{q}\sim\mathcal{D}{\text{train}}}\left[-\log \pi{\theta}(\mathbf{S}^*|\mathbf{S}^{j-1}, \ldots, \mathbf{S}^1, \mathbf{q})\right]\tag{5} $

where $j$ is a fixed number of in-context exploration episodes sampled from a fixed distribution $\pi_{\text{ref}}$ (i.e. $\pi_0$) and $\mathbf{S}^*$ is some optimal solution. Essentially, this can be considered a linearization of the Best-Of-N search strategy presented in Section 3.1 with rejection sampling. In this setting, the Meta-CoT represents search in full episodes $\mathbf{Z}=\mathbf{S}^1, \ldots, \mathbf{S}^{j-1}$ and $\mathbf{S}=\mathbf{S}^j$. At test time we can further control the quantity of compute by iteratively sampling from

$ \mathbf{S}^i\sim \pi_{\theta}(\cdot|\mathbf{S}^{i-1}, \ldots, \mathbf{S}^{i-j}, \mathbf{q}). $

Representative results for this approach are are shown in Figure 16, sourced from ([10]). We see clear improvement in the pass@1 metric with additional amounts of in-context exploration episodes with nearly 6-7% gain from zero-shot to the level of saturation. At the same time, auto-regressive generation shows clearly better scaling properties than independent parallel sampling (Figure 16 right). These results indicate that the model learns some degree of in-context exploration and self-correction.

![**Figure 16:** **Left**: Pass@1 accuracy of a revision model after the specified number of generations (revisions). **Right**: Scaling performance of the best-of-N strategy under parallel and auto-regressive (in-context) sampling. The performance gap indicates that the model learns some degree of in-context exploration and self-correction. Source: Figure 6 from ([10]).](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/pass1_and_comparing_seq_parallel_old_corrected.png)

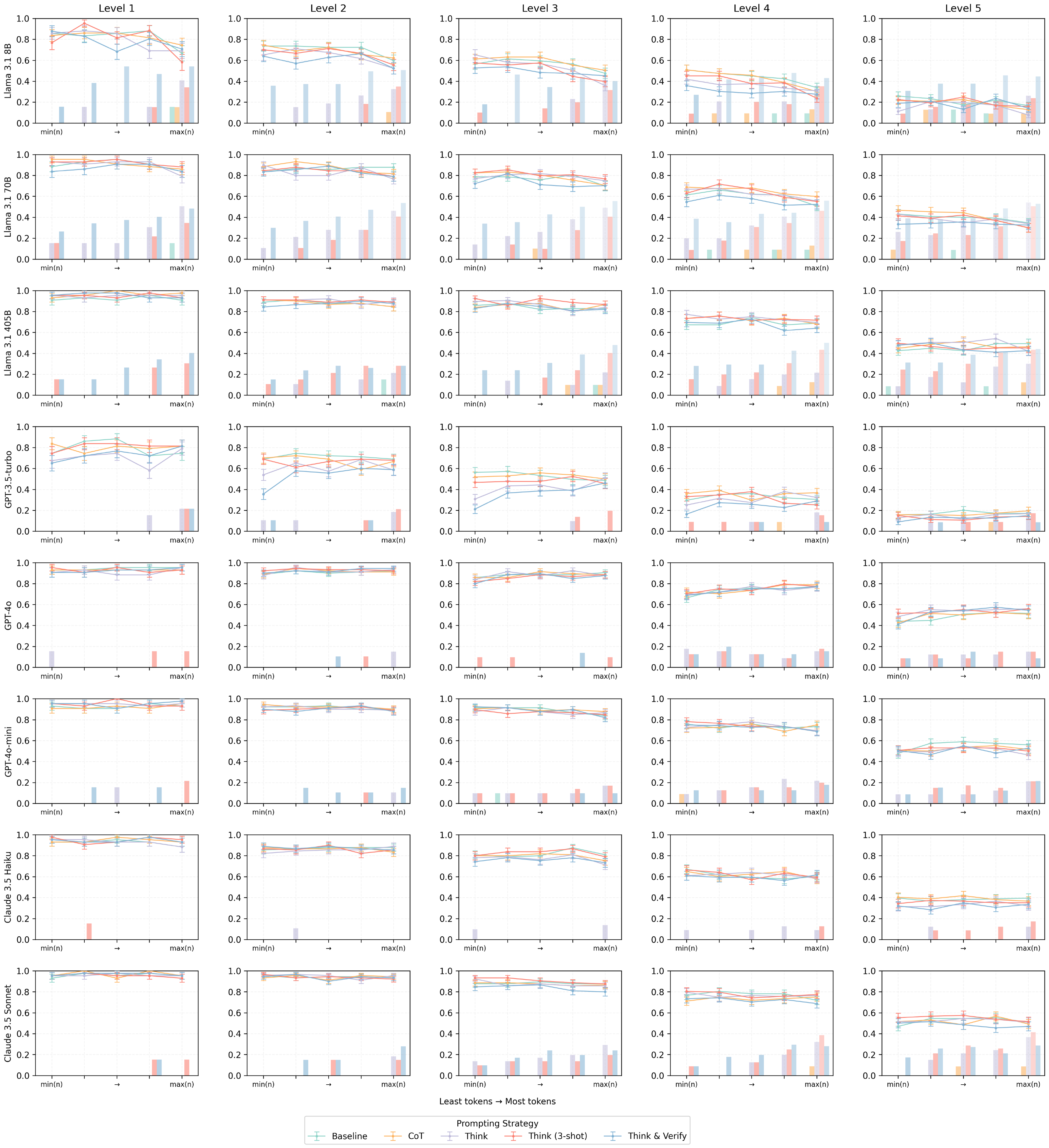

4.2.3 Using variable Compute

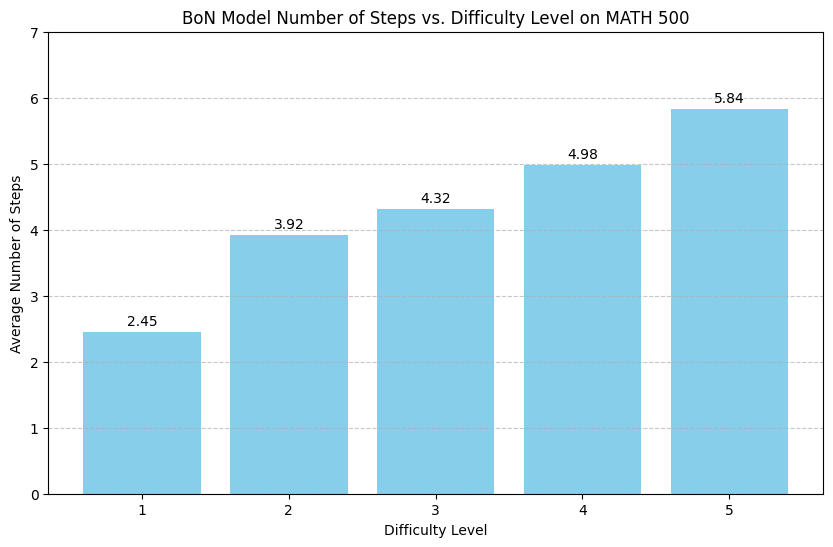

While the above approaches demonstrate promise for the model's capability to carry-out in-context search, they are trained with a fixed number of revisions and use a pre-determined number of revisions at test time. This is not ideal, as ideally the model would be able to use arbitrary amounts of compute until it arrives at a solution with high enough confidence. We repeat the above experiment using a uniform number of in-context solutions during training (ranging between 0-7), allowing the model to generate up to 8 solutions at inference time by optimizing

$ \min_{\theta}\ \mathbb{E}{\mathbf{S}^{i}\sim\pi{\text{ref}}(\cdot|\mathbf{q}), \mathbf{q}\sim\mathcal{D}{\text{train}}}\left[-\log \pi{\theta}(\mathbf{S}^*, \textbf{EOS}|\mathbf{S}^{j-1}, \ldots, \mathbf{S}^1, \mathbf{q})\right], j\sim\text{Unif}(1, 8) $

This formulation encourages the model to continue revising it's solution until it reaches a solution with high confidence of correctness. Interestingly, our model generates an increasing number of solutions based on question difficulty. Summary statistics by problem difficulty are shown in Figure 17 (right), where the model generates an average of 2.45 solutions for Level 1 problems and an average of 5.84 for Level 5 problems, consistent with the behavior shown in Figure 1 and Figure 14 (c). Specifically, this demonstrates that the model has internalized the need for extended exploration on complex reasoning tasks.

Our best performing run achieved an improvement of 2% over the LLaMa 3.1 8B Instruct model that we initialize our models from. We attribute this to a mismatch between the instruct model's RL post-training, the off-policy SFT fine-tuning we utilize, and the limited amount of training data in the MATH train dataset. Indeed, any regular SFT training we performed on the RL post-trained model actually worsened performance.

We are currently exploring post-training on pre-RL base models with extended datasets.

4.2.4 Backtracking in LLMs

In the prior sections, we reviewed evidence that auto-regressive models can internalize complex search strategies in simple domains. We also showed that LLMs can learn in-context exploration at the episode-level. However, whether models can implement complex search strategies (e.g. those outlined in Section 3) auto-regressively remains an open question in public research. Specifically, we refer to the ability to terminate a reasoning chain prior to completion, and the ability to reset (semantically) to an arbitrary previously visited state in-context. These two steps can be unified under the concept of backtracking. Here we will review some recent works demonstrating that LLMs can learn to backtrack.

Recent works have demonstrated that training on data with backtracking can improve language models on simple reasoning tasks ([46, 23]) find that language models can sometimes "recognize" their errors internally, but do not have the required mechanisms to self-correct. Similar to our motivation with Meta-CoT, their aim is for a single model to both recognize errors and self-correct in-context. In their approach they create training data with incorrect steps directly followed by the correction. The backtracking is signified by a special token, [BACK], at the end of an incorrect step to allow the model to explicitly state it's belief that an error has occurred. That is: given a dataset $\mathcal{D}_{\text{train}}$ of questions $\mathbf{q}$ and correct reasoning CoT solutions $\mathbf{S}=\mathbf{s}_1, \ldots, \mathbf{s}_n$ the training objective becomes

$ \mathcal{L}{\text{backtrack}}(\theta) = -\mathbb{E}{\mathbf{s}1, \ldots, \mathbf{s}n\sim \mathcal{D}{\text{train}}, t\sim\text{Unif}(1, n)}\left[\log \pi{\theta}(\mathbf{s}_1, \ldots, \mathbf{s}_t^-, \text{[BACK]}, \mathbf{s}_t, \ldots, \mathbf{s}_n|\mathbf{q})\right]\tag{6} $

where $t$ is a randomly sampled time step in the solution and $\mathbf{s}_t^-$ is a single incorrect reasoning step. This is in contrast to the standard approach, which only trains on the correct solution chains:

$ \mathcal{L}{\text{standard}}(\theta) = -\mathbb{E}{\mathbf{S}\sim \mathcal{D}{\text{train}}}\left[\log \pi{\theta}(\mathbf{S}|\mathbf{q})\right].\tag{7} $

[46] explore inserting incorrect steps at varying rates (between 1% and 50%) and find that high rates of incorrect steps actually leads to improved downstream performance. In particular, they find that a 50% rate of incorrect steps (objective in Equation 6) leads to an increase from 78% to 94% accuracy on hard math problems as compared to training on only correct solutions Equation (7, CoT). While promising, these results are only verified on small models (124M parameters).

In contrast, [47] teach LLMs to backtrack based on safety considerations using the larger Gemma 2B and LLaMa 3 8B models. In particular, following the above notation, given a prompt $\mathbf{q}$ and two possible answers - a safe option $\mathbf{S}^+ = \mathbf{s}_1^+, \ldots, \mathbf{s}_n^+$ and an unsafe option $\mathbf{S}^-=\mathbf{s}1^-, \ldots, \mathbf{s}{n'}^-$, where $\mathbf{s}$ here represent individual tokens (unlike before where they stood for logical steps), they optimize the objective:

$ \begin{align} \begin{split} \mathcal{L}(\theta) = -\mathbb{E}{(\mathbf{q}, \mathbf{S}^+, \mathbf{S}^-)\sim \mathcal{D}{\text{train}}, t\sim\text{Unif}(1, n')}[& \log \pi_{\theta}(\text{[BACK]}, \mathbf{S}^+|\mathbf{S}t^-, \mathbf{q}) \quad + \ & \log \pi{\theta}(\mathbf{S}^+|\mathbf{q})]. \end{split} \end{align}\tag{8} $

That is a combination of the Meta-CoT and regular CoT objectives as outlined above. Additionally, notice that this objective masks out the unsafe completion, while the prior work trains on all tokens including the incorrect logical steps. While the approach of [46] backtracks for a single logical step (correction) this work always resets the agent to the initial state. SFT training is successful in teaching the model to backtrack and improves the safety characteristics over supervised fine-tuning on just the safe answer (only the second term of Equation 8). However, these effects appear weak in regular SFT models, but are significantly improved through further downstream RL training, which we will discuss later on.

4.3 Synthetic Meta-CoT Via Search

In the prior sections we argued for an approach to reasoning that teaches an LLM to internalize an auto-regressive search procedure in-context. We also reviewed several recent works showing that small auto-regressive models can carry out in-context exploration at the episode level, and larger models can learn individual step backtracking. In this section, we explore how to construct synthetic data for realistic Meta-CoT that involves full-scale in-context tree search.

Setup.

For demonstrative purposes, we use the math problem presented by [11] as our benchmark task, where Gemini 1.5 Pro ([48]) achieves a Pass@128 score of 6.25% (8/128 correct) – notably being the only frontier model (without advanced reasoning) to demonstrate non-zero performance at the time of our experiments. We use the same RL formulations for state and actions as presented in Section 3.3. We explore two principal search algorithms for generating synthetic training data: Monte Carlo Tree Search (MCTS) and A* variants. Both approaches necessitate a heuristic state estimation function, for which we employ pure Monte-Carlo rollouts following the methodology of [36]. Specifically, we estimate the value of a partial solution trajectory as

$ v(\mathbf{S}t, \mathbf{q}) = \mathbf{E}{\mathbf{S}{\geq t+1}^j\sim \pi{\theta}(\mathbf{S}{\geq t+1}|\mathbf{S}t, \mathbf{q})}\frac{1}{K}\sum{j=1}^K r^*([\mathbf{S}^j{\geq t+1}, \mathbf{S}_t], \mathbf{q})\tag{9} $

where $r^*$ is the verifiable ground-truth outcome reward. In our experiments, we sample 128 completions from the partial solution and evaluate the mean success rate under ground-truth outcome supervision. In Appendix E, the numerical values of the states are listed after each step.

4.3.1 Monte-Carlo Tree Search

![**Figure 18:** MCTS tree for the math problem presented by [11]. The red node indicates the solution.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/MCTSTree.png)

We conduct an example based on Monte-Carlo Tree Search (MCTS), which seeks to balance exploration and exploitation. The MCTS implementation of [36] has been widely applied to the reasoning domain ([49, 35]), and we mostly follow their implementation with some modifications to account for the structure of our search problem (see Appendix D).

We present the search trace for our example problem - all the actions taken during the search (i.e., the Meta-CoT in a linear format) - in Appendix E.2. The numbers following each reasoning step represent the value estimates. In our initial MCTS attempt we obtained a trace with an excessive number of backtracks and repetitions, including from high-value states (as high as 1.0) with the resulting exploration tree is shown in Figure 18. We believe these effects are due to the exploration bonus in MCTS search. We did not carry out extensive ablations on the search parameters due to speed and costs. Since we use pure MC rollouts ("simulations") for state value estimation, a single tree uses up to 20 million tokens inference (a cost of $\sim$ $100 per tree). Moreover the process can take up to half an hour due to API limits. Because of these issues we also evaluate a more efficient best-first exploration strategy, which we present below.

4.3.2 A* search

We begin with an exploration of a type of best-first search based on the work by [30], which itself loosely follows an A* approach. The search procedure maintains a frontier $\mathcal{F}$ of states, which is implemented as a max priority queue. Similarly to the MCTS approach, each state $\mathbf{S}_t$ consists of the question $\mathbf{q}$ and a partial solution consisting of generated reasoning steps $(\mathbf{s}_1, \ldots, \mathbf{s}_t)$. At each iteration, the state $\mathbf{S}_p \leftarrow \text{pop}(\mathcal{F})$ with the highest value $v_p = v(\mathbf{S}_p, \mathbf{q})$ is selected, where $v_p\in [0, 1]$ is the value of the partial solution $\mathbf{S}p$ including current and previous reasoning steps. At each node the policy $\pi{\phi}$ proposes $b$ candidate next steps, each of which is evaluated by $v$ and added to $\mathcal{F}$ if the depth of the tree $|(\mathbf{s}_0, \ldots, \mathbf{s}_p)|$ has not reached the maximum depth search limit $d$. For the purpose of generating synthetic data, we run the search until we find a solution that is correct using the ground-truth verifier. The resulting tree is shown in Figure 19. It shows more consistent flow of the

![**Figure 19:** Resulting A* search tree on the math problem from [11]. This trace presents more of a best-first approach with fewer backtracks, concentrated around key steps, as compared to the one produced by MCTS in Figure 18.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/ASSearch.png)

reasoning steps, with less backtracking concentrated around a few key steps.

4.4 Do Advanced Reasoning Systems Implement In-Context Search?

In this section we will investigate whether advanced reasoning systems, such as OpenAI's O1 ([11]), DeepSeek R1 ([12]) and Gemini 2.0 Flash Thinking Mode ^4 and the Qwen QwQ [50] implement in-context search. We provide successful reasoning traces for the same math problem in Appendix E.2.

Starting with OpenAI's o1 model, by carefully examining the provided mathematical reasoning trace, we observe:

- Inconsistent flow of thought - consecutive steps do not logically continue the prior state.

- Backtracking - the model carries out "semantic backtracking" - frequently returning to the same logical points.

- Repetition - the model often repeats logical steps.

The qualitative behaviors observed in o1 (Figure 20 left) are similar to those in the example synthetic trace (Figure 21) generated by Gemini 1.5 with and MCTS-like search processes. In particular, there are abrupt changes in logical flow of the (Meta) CoT, which is natural as the model backtracks between branches of the tree. Moreover, the model may explore multiple child nodes of the same parent which are different strings, but can also be very semantically similar leading to repetitive logic. This is clear in the provided trace, as the model repeats logical statement and goes over the same derivations multiple times. Note also that we do not claim the model is implementing tree search at test time, but rather that as much as the model's output are expected to resemble it's training data, we hypothesize that examples of search were used during training (likely model initialization). We will specifically address the need and effects of RL training in Section 6.

![**Figure 20:** Examples of intermediate traces from o1 ([11]), DeepSeek-R1 ([12]), and Gemini 2.0 Flash Thinking Mode. We highlight two types of steps: Backtracking, where the model visits a bad state and returns to a previously visited step, and Verification, where the model assesses the correctness of the previous output. Inconsistent logical flow and repetition are present in all three traces. DeepSeek-R1 and Gemini 2.0 Flash Thinking Mode both exhibit generative verification before reaching an answer, while Gemini makes an incorrect verification and returns to the initial state. Full search traces can be found in Appendix E.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/q643mhbq/complex_fig_d4ff2b62fb63.png)

The DeepSeek R1 model [12] also exhibits similar behaviors, as shown in Figure 20, however, it also carries out a significant amount of self-evaluation steps. This could be achieved by integrating a form of self-criticism ([51, 52]) or a generative verifier ([22]) in the search trace. The LATS framework ([29]) uses a similar approach, combining MCTS search with self-criticism and shows empirical improvements from self-reflection. Another alternative for synthetic data generation is the "Iteration-Of-Thought" approach [53] which also interleaves generation with inner dialogue. This would explain the rather smooth logical flow of the R1 model, which does not exhibit as much abrupt back-tracking, as compared to O1. As mentioned earlier, in order to adequately model the search process the model must internalize an evaluation mechanism. However, providing an explicit CoT verification may be able to expand the model computational capacity and improve self-verification. This is an empirical question, which is currently unclear in open research.

Gemini 2.0 Flash Thinking Mode appears to implement a somewhat different structure. Specifically, the flow of reasoning qualitatively appears smoother with fewer logically inconsistent steps. Moreover, it backtracks less frequently and often returns to the initial state. In fact in the provided example the model solves the problem correctly and then fully re-generates a new solution from scratch (backtracks from the final state to the initial one). It's behavior seems to be to attempt a full solution, which may be terminated early based on some search heuristic. In cases where the solution attempt is unsuccessful, the model attempts a different solution approach, rather than branch at the step-level in a tree search structure. This seems more consistent with a revision-based strategy as reflected in past works ([44, 23, 54]). The Qwen QwQ model [50] shows similar behavior, generating multiple solutions in-context, as also pointed out by [55].

5. Process Supervision

Section Summary: Process supervision enhances AI reasoning searches by using Process Reward Models (PRMs) to score intermediate steps in a solution chain, allowing the system to backtrack from poor paths and explore better ones more effectively. These PRMs are trained on pre-trained language models with datasets of partial solutions labeled either by human experts or through efficient Monte Carlo simulations that estimate values based on final outcomes, avoiding the high costs of direct human annotation for complex problems. Experiments show that PRM accuracy improves with more training data, reducing prediction errors and boosting search performance, though they still lag behind perfect verifiers.

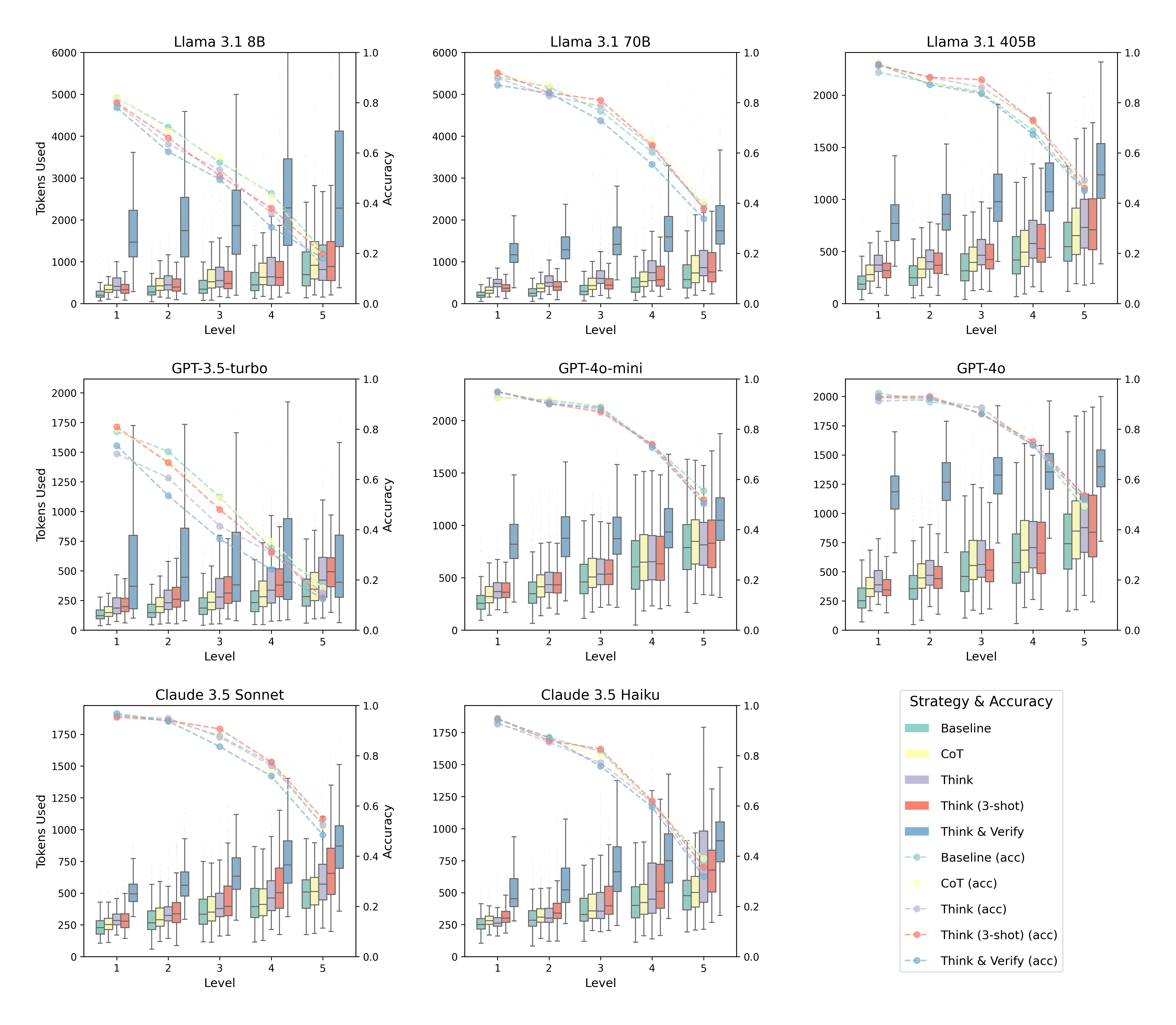

A key component of the search approaches presented in prior sections is the evaluation function $v(\mathbf{q}, \mathbf{S}_t)$, which scores intermediate states in a reasoning chain. These evaluation functions have become widely known as Process Reward Models (PRM). By incorporating process supervision, the search mechanism gains the flexibility to backtrack to earlier promising states when suboptimal paths are encountered, thereby enabling more effective exploration. However, the question of how to efficiently access such capabilities remains an open question. In Section 4.3 we showed examples of using outcome-based verification with MCTS in combination with Monte-Carlo rollouts. However, this approach can only be used during training due to the necessity for ground-truth answers, and moreover it is extremely computationally inefficient. As mentioned earlier, a single training example requires up to 20 million inference tokens, costing up to hundreds of dollars. It is significantly more efficient to amortize the evaluation procedure into a single parameterized model, and we will outline strategies for building such process guidance models below.

5.1 Learning Process Reward Models

Parameterized PRMs are built on top of pre-trained models, either using a linear head or the logits of specific tokens. The model takes the question $\mathbf{q}$ and a partial solution $\mathbf{S}t$ as input and outputs a single scalar value $v{\theta}(\mathbf{q}, \mathbf{S}t)\to[0, 1]$. Given a dataset $\mathcal{D}{\text{train}}$ of partial solutions $\mathbf{S}t$ and corresponding value targets $y{\mathbf{S}t}$ the model is generally optimized with a standard cross-entropy classification loss. A central question for training PRMs is: where do the supervision labels $y{\mathbf{S}t}$ come from? One approach is to have human annotators provide step-by-step level evaluation of reasoning problems, as done by [19]. While their work showed promise in terms of empirical results, this method is challenging to scale due to the high annotation time and cost, especially as evaluating hard reasoning problems requires high-caliber experts. An alternative approach presented by [56] only relies on access to outcome verification - i.e. problems with a ground truth answer. The proposed approach is to amortize the Monte Carlo state-value estimation into a parameterized function. Essentially, this method fits an empirical value function of the reference rollout policy where the targets $y{\mathbf{S}_t}$ are represented by Equation 9. This idea has been widely adopted in follow-up works ([10, 23]) and further extended ([27]).

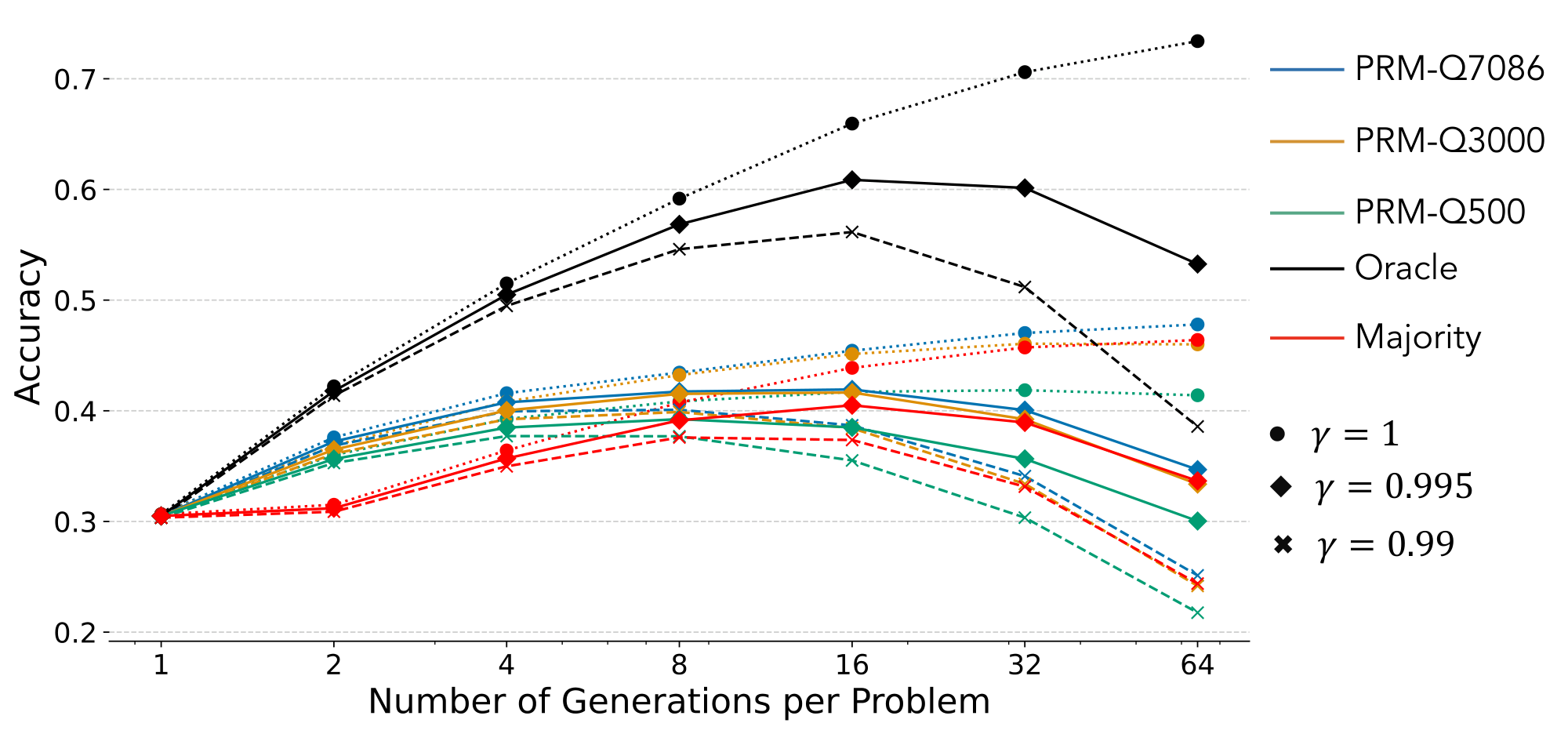

5.2 PRM Quality And Its Effect On Search

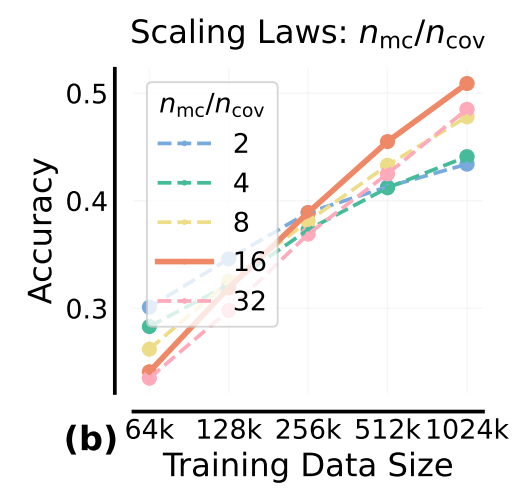

The performance and efficiency of search at test-time depends on the quality of the PRM ([24, 23]). [24] demonstrate effective scaling (in both training data size and label quality) of a specific variant of PRMs that estimate values based on the improvement in likelihood of the correct answer after a step. The accuracy of test-time search improves log-linearly with training data size, and the quality of learned value labels improve with more Monte Carlo estimates. [23] show that oracle verifier-enabled search is orders of magnitude more efficient than a learned PRM with noisy value estimates.

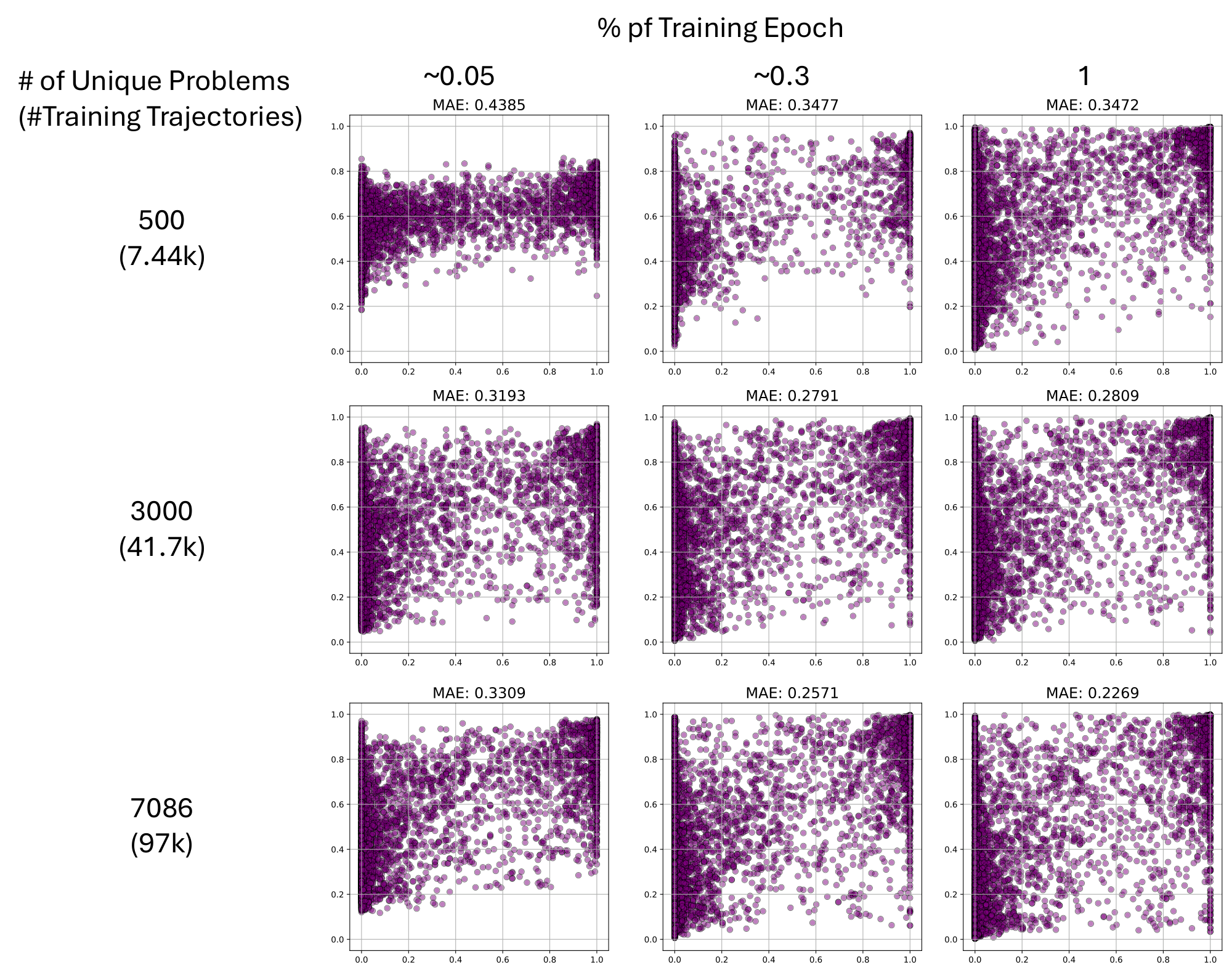

In this section we conduct an experiment demonstrating the scaling characteristics of a PRM. To train our PRM, we first need to generate diverse solution trajectories where each solution step is annotated with a ground truth value. To do so, we use the method from [56] to obtain ground truth values, performing 16 Monte Carlo (MC) rollouts for each step of a seed solution. We generate the seed solutions and step-level MC rollouts from a supervised finetuned (SFT) Llama3.1-8B using the PRM800K ([19]) dataset. The PRM training data uses 7, 086 unique questions - each with seed solutions - and after removing duplicate seed solutions results in 97, 000 trajectories in the training data. To evaluate the scaling performance with increasing data, we split the small set of data into three subsets: one with 500 unique questions, one with 3, 000 unique questions, and one with all 7, 086 unique questions. We create an evaluation set using the MATH-500 dataset ([13, 19]) by generating step-by-step solutions from the SFTed model and step-level ground truth values from 128 MC rollouts.

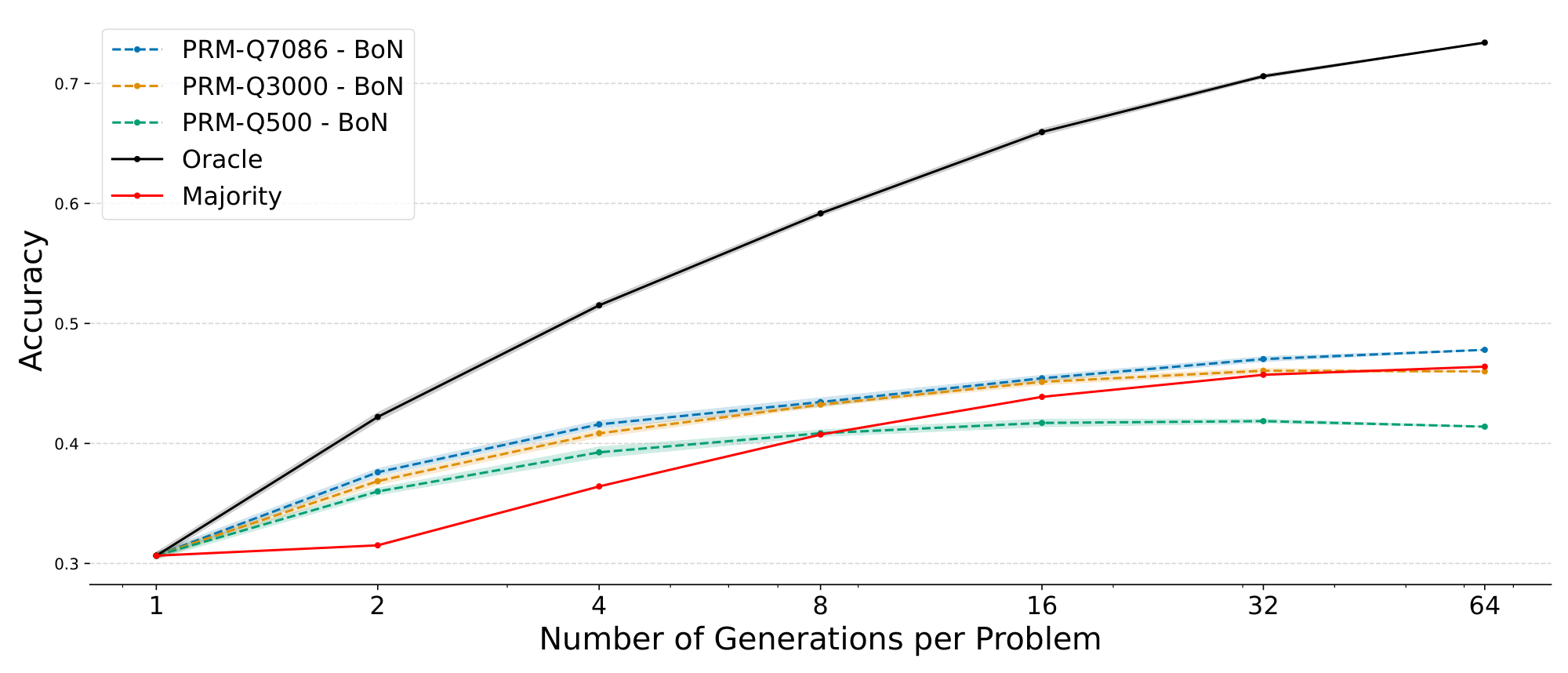

With this trained PRM, we find a reduction in the absolute error of predicted values when comparing PRMs that are trained across datasets of different sizes, as well as a selection of intermediate checkpoints in Figure 22. We observe that: 1) the prediction error decreases as the size of the training data increases, and 2) when the size of the dataset is small, improvement converges early during training (around 30% of an epoch for Qs=500 and Qs=3000). Although these findings are based on small-scale experiments, we anticipate continued improvement in prediction errors with larger datasets and more extensive training, suggesting significant potential in further refining and scaling PRMs. Additionally, we evaluate the performance of the three fully-trained PRMs as outcome verifiers when performing a Best-of-N search during inference time. Figure 23 left shows that the PRM's ability to verify full solutions improves as they are trained with more data, yet there exists a remarkable gap between the trained PRMs and an oracle PRM. Additionally, we observe that the PRM's ability to guide the search process towards the correct answer with a more efficient path also improves as the increased accuracy and reduced number of tokens used in the search process are both observed in Figure 23 right. One interesting remaining question is: what is the scaling law for these process supervision models?

:::: {cols="2"}

Figure 23: Left: Scaling curves for Best-of-N (BoN) using PRMs trained with different number of questions with oracle and majority vote. Right: Beam search (N=5, beam width=4) accuracy and number of tokens used during search with the same PRMs. With more training data, the PRM's ability to verify at outcome-level and process-level improves. ::::

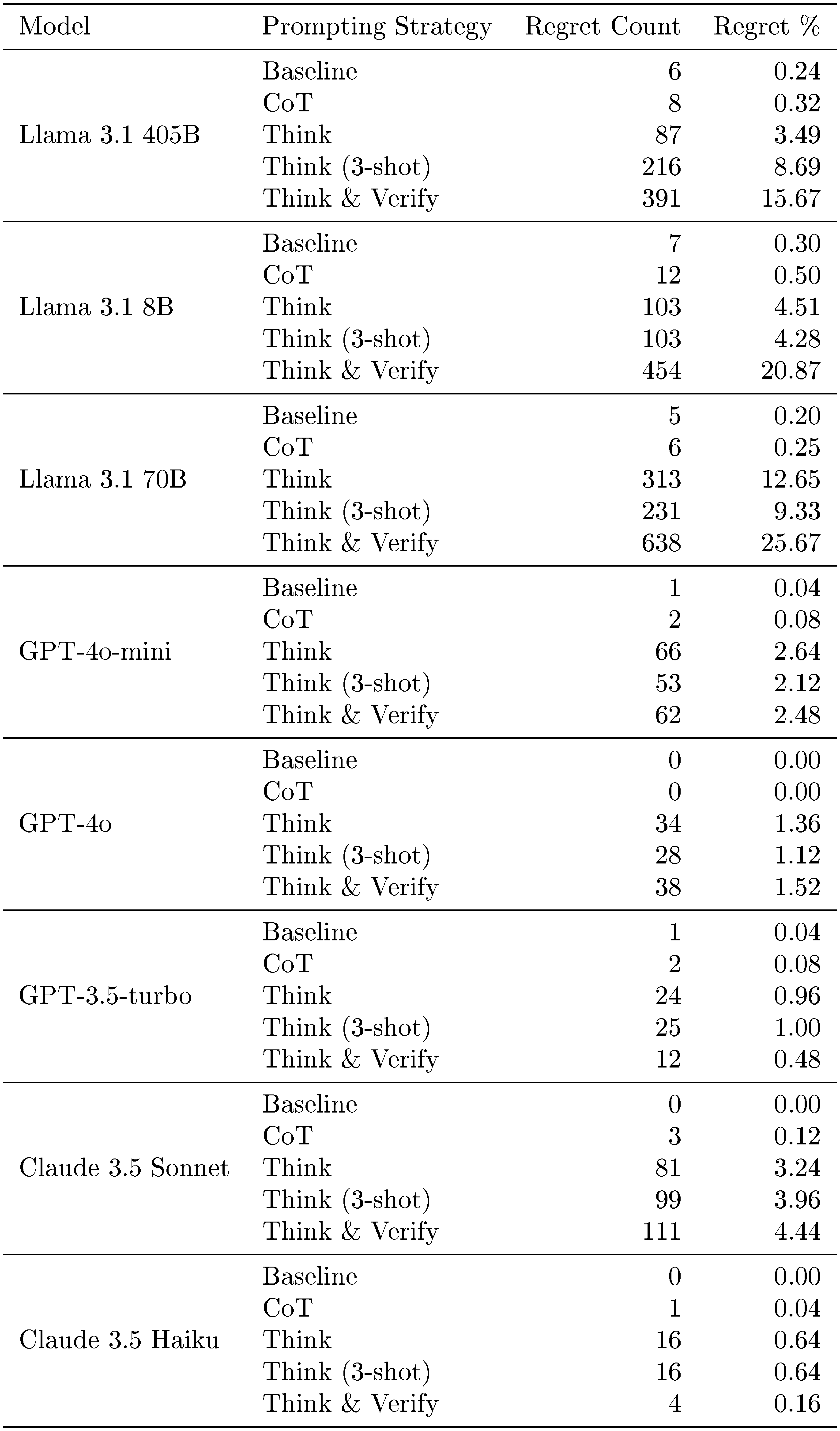

5.3 Verifiable Versus Open-Ended Problems