GLM-5: from Vibe Coding to Agentic Engineering

GLM-5 Team Zhipu AI & Tsinghua University (For the complete list of authors, please refer to the Contribution section)

Abstract

We present GLM-5, a next-generation foundation model designed to transition the paradigm of vibe coding to agentic engineering. Building upon the agentic, reasoning, and coding (ARC) capabilities of its predecessor, GLM-5 adopts DSA to significantly reduce training and inference costs while maintaining long-context fidelity. To advance model alignment and autonomy, we implement a new asynchronous reinforcement learning infrastructure that drastically improves post-training efficiency by decoupling generation from training. Furthermore, we propose novel asynchronous agent RL algorithms that further improve RL quality, enabling the model to learn from complex, long-horizon interactions more effectively. Through these innovations, GLM-5 achieves state-of-the-art performance on major open benchmarks. Most critically, GLM-5 demonstrates unprecedented capability in real-world coding tasks, surpassing previous baselines in handling end-to-end software engineering challenges. Code, models, and more information are available at https://github.com/zai-org/GLM-5.

Executive Summary: The rapid evolution of large language models (LLMs) has shifted their role from simple tools for generating text to active problem solvers, particularly in software engineering and complex decision-making. However, high computational costs, limited efficiency in handling long interactions, and challenges in adapting to real-world tasks like autonomous coding remain major barriers. These issues are especially pressing now, as businesses and developers demand AI that can autonomously plan, implement, and iterate on projects—reducing human oversight while managing resources effectively amid growing demands for scalable AI in industries like tech and finance.

This document introduces GLM-5, a new open-source foundation model developed by Zhipu AI and Tsinghua University, aimed at demonstrating a shift from casual "vibe coding" (human-guided prompting) to "agentic engineering" (AI-driven autonomy). It evaluates GLM-5's capabilities in reasoning, coding, and agentic tasks, while showcasing innovations to cut costs and boost performance.

To build GLM-5, the team started with pre-training on a 28.5 trillion token dataset focused on code, reasoning, and diverse web content, scaling the model to 744 billion parameters using a mixture-of-experts architecture. A mid-training phase extended context handling to 200,000 tokens with agent-specific data from real software repositories. Post-training involved supervised fine-tuning on chat, reasoning, and coding examples, followed by multi-stage reinforcement learning (RL) for reasoning, agentic behaviors, and general alignment—using asynchronous RL to handle long interactions efficiently. Key efficiencies came from DeepSeek Sparse Attention (DSA), which cuts computation by 1.5 to 2 times for long sequences, and optimizations for Chinese hardware platforms like Huawei Ascend. The process drew on data from 2024–2025, including over 10,000 verifiable software environments, ensuring credibility through large-scale, diverse sources and comparisons to models like Claude Opus 4.5.

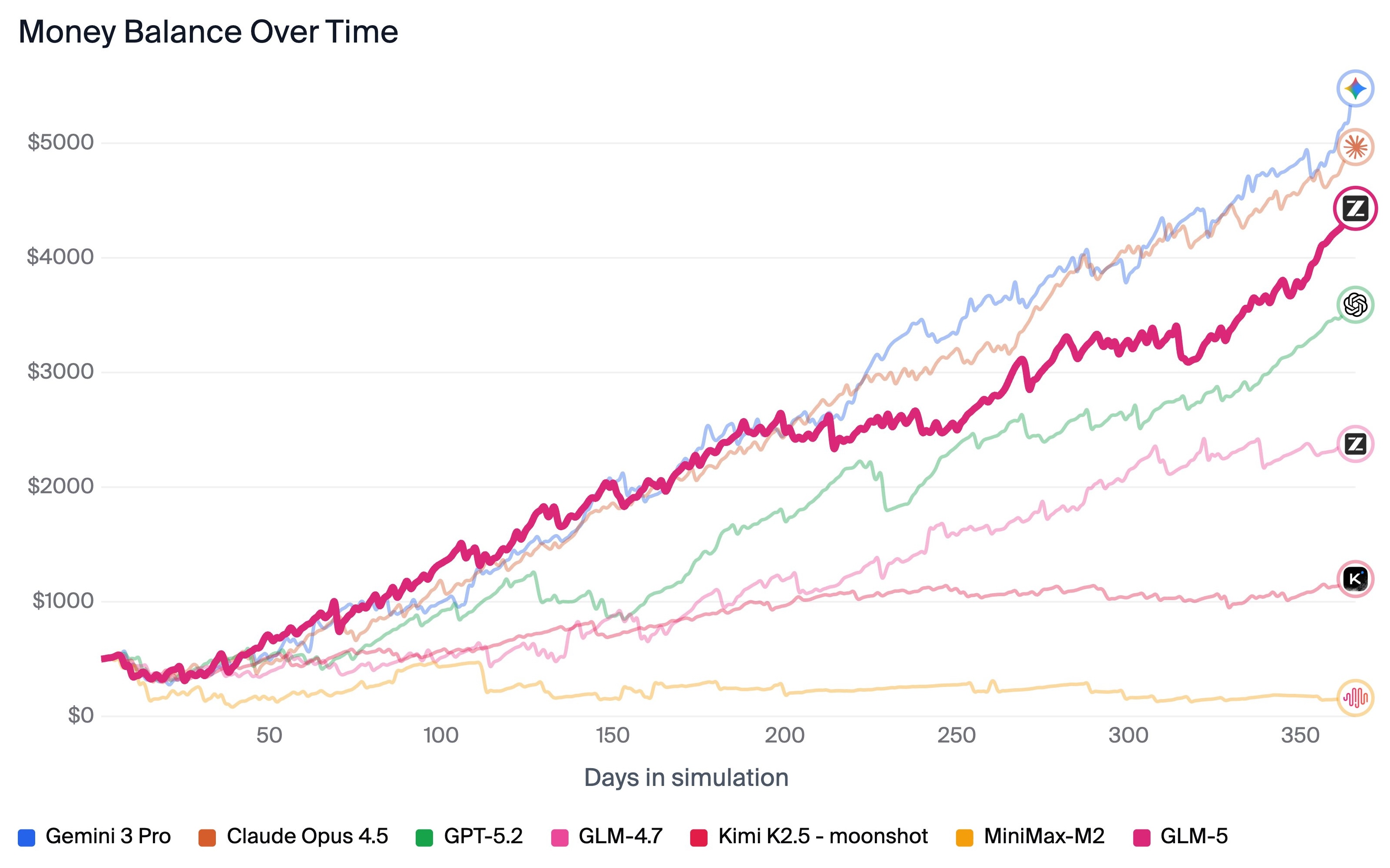

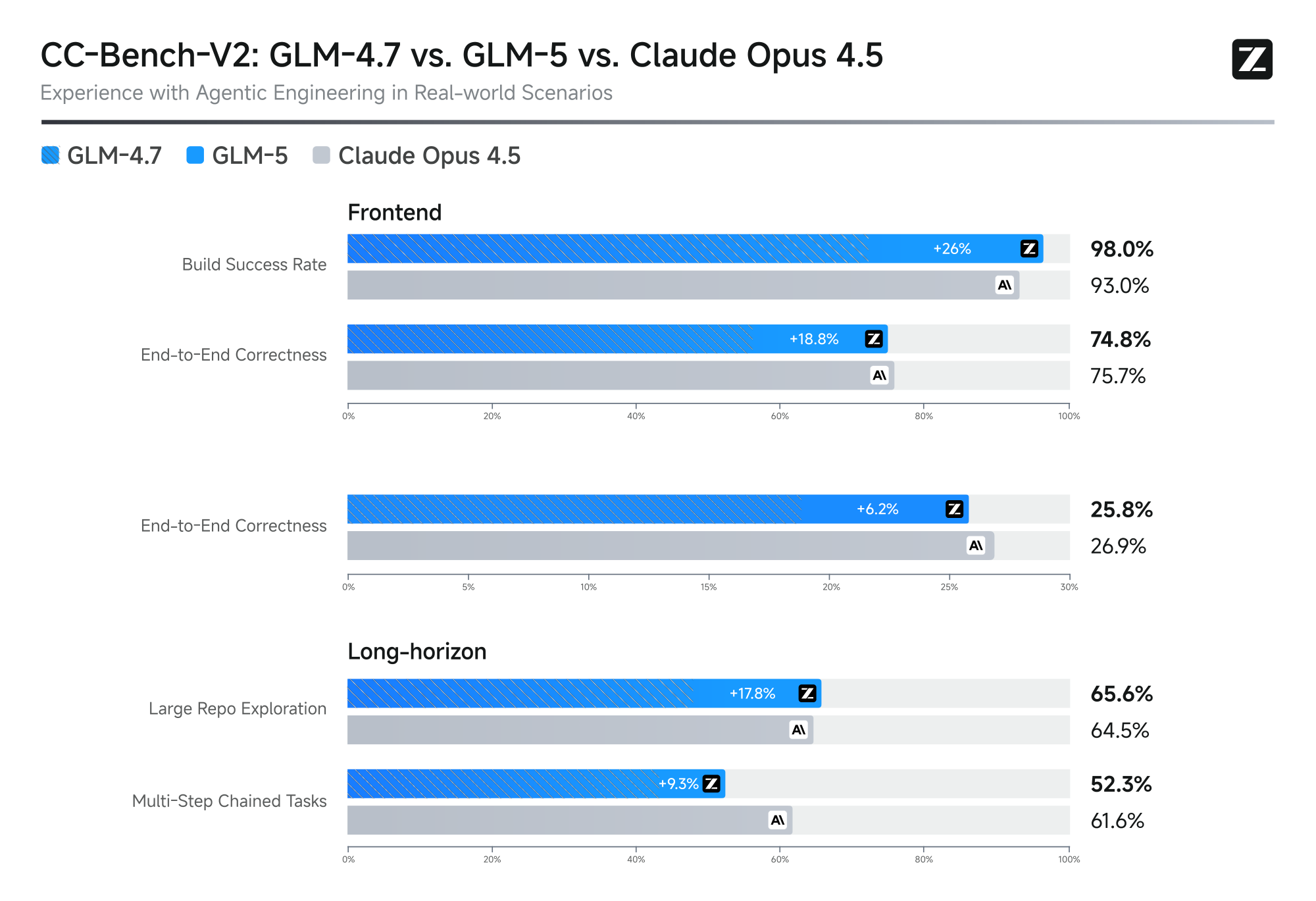

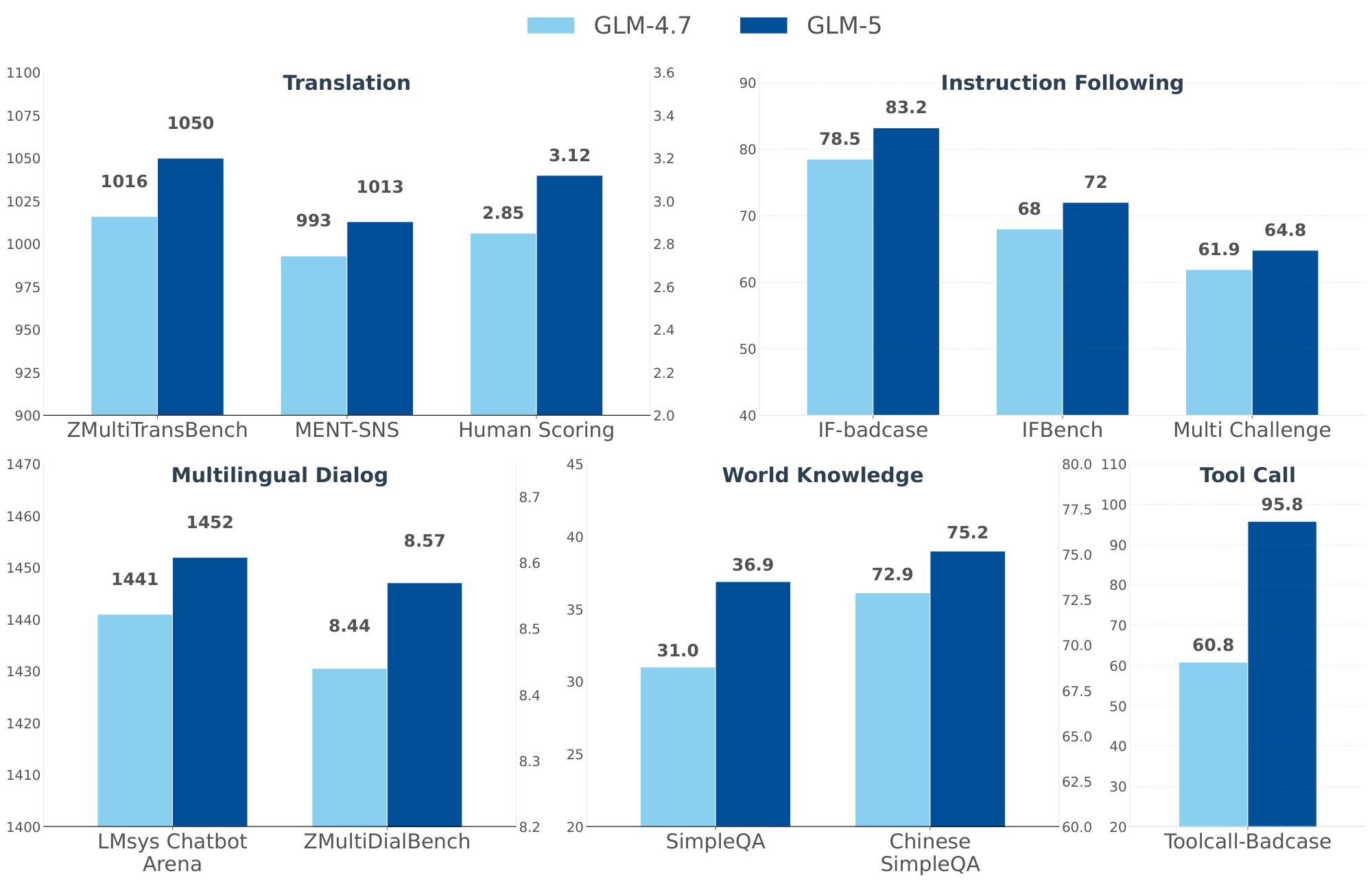

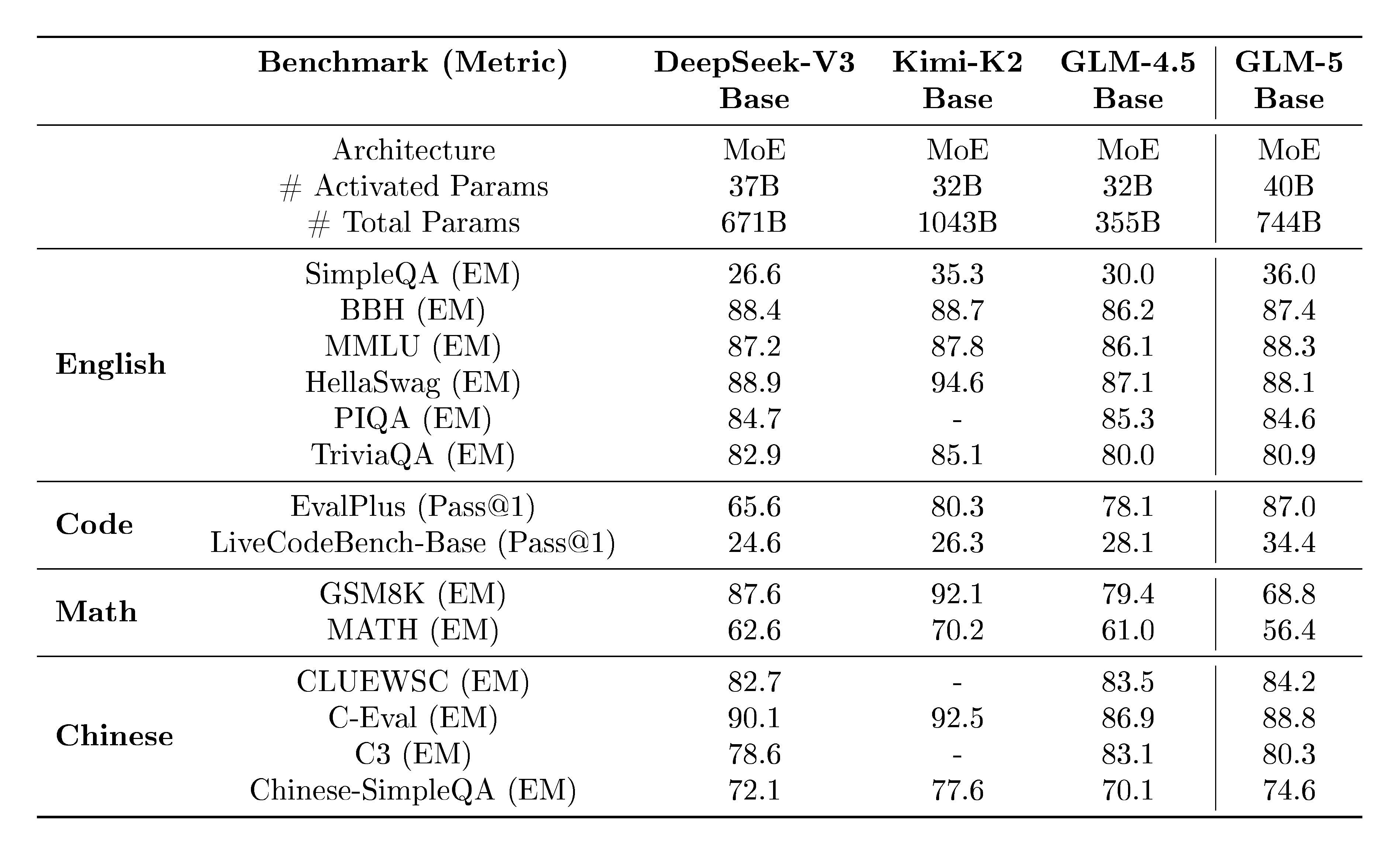

GLM-5 delivers top performance among open models on key benchmarks, with an average 20% gain over its predecessor GLM-4.7 and scores matching or exceeding proprietary leaders like Claude Opus 4.5 and GPT-5.2 on eight agentic, reasoning, and coding tests. It ranks first on LMArena for text and code tasks based on millions of human judgments, and achieves an Intelligence Index score of 50—the first for any open model, up 8 points from GLM-4.7, driven by better agentic skills and reduced errors. In long-horizon simulations, it excels, ending with a $4,432 bank balance on Vending-Bench 2 (top open model, nearing Claude's level) and outperforming priors on multi-step coding chains. Real-world coding evaluations on CC-Bench-V2 show 98% build success for frontend tasks and comparable pass rates to Claude on backend unit tests, though end-to-end completion lags slightly.

These results mean GLM-5 can handle complex, hours-long tasks like running a simulated business or fixing software bugs across languages, cutting training and inference costs while maintaining accuracy—potentially halving GPU needs for long contexts. This advances safety and performance in AI agents by enabling self-correction and tool use, narrowing the open-proprietary gap and supporting compliance in regulated sectors. Unlike expectations of efficiency trade-offs, GLM-5 shows no quality loss from DSA, making it more deployable on domestic hardware and reducing reliance on foreign tech.

Decision-makers should prioritize integrating GLM-5 into development workflows for cost savings and faster prototyping, especially in multilingual or code-heavy applications; options include fine-tuning for specific domains versus using the base model for broad tasks, with the former offering 10-20% gains but higher setup costs. Further work is needed, such as piloting in production environments and gathering more diverse real-world data. Limitations include benchmark sensitivities (e.g., judge prompts) and gaps in ultra-long consistency, leading to moderate confidence in absolute real-world rankings—readers should validate via custom tests before full adoption.

1. Introduction

Section Summary: The introduction discusses the need to rethink efficiency and architecture in pursuing artificial general intelligence, beyond just scaling up models, as large language models evolve from knowledge stores to active problem-solvers facing challenges in computational cost and real-world adaptability, especially in software engineering. It presents GLM-5 as a major advancement, achieving top performance on key benchmarks like those from ArtificialAnalysis.ai and LMArena for text and coding tasks, with about 20% improvement over its predecessor GLM-4.7 and leading scores among open-weight models, including strong results in long-term planning simulations. This progress stems from an innovative training pipeline involving massive data, extended context handling, reinforcement learning, and a new sparse attention mechanism to cut costs while boosting capabilities.

The pursuit of Artificial General Intelligence (AGI) requires not only scaling model parameters but also fundamentally rethinking the efficiency of intelligence and the architecture of autonomous improvement. With the release of GLM-4.5, we demonstrated that uniting Agentic, Reasoning, and Coding (ARC) capabilities into a single Model-of-Experts (MoE) architecture could yield state-of-the-art results across diverse benchmarks. However, as Large Language Models (LLMs) transition from passive knowledge repositories to active problem solvers, the dual challenges of computational cost and real-world adaptability—particularly in complex software engineering—have become the primary bottlenecks.

We present GLM-5, our next-generation flagship model designed to overcome these barriers. GLM-5 represents a paradigm shift in both performance and efficiency, achieving state-of-the-art status on major open leaderboards, including ArtificialAnalysis.ai, the LMArena Text, and the LMArena Code. More significantly, GLM-5 redefines the standard for real-world coding, demonstrating an unprecedented ability to handle complex, end-to-end software development tasks that go far beyond the scope of traditional static benchmarks like SWE-bench.

Results.

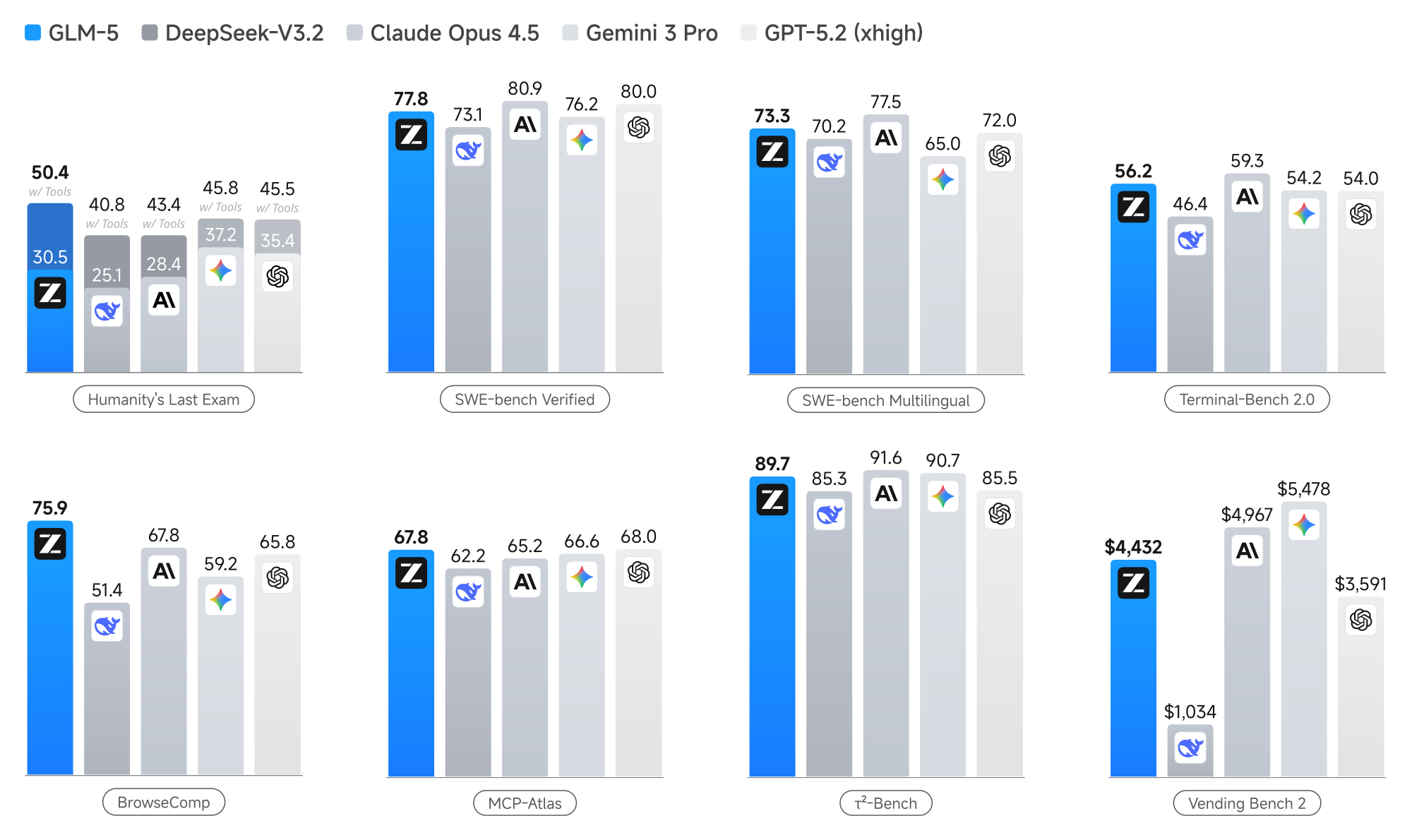

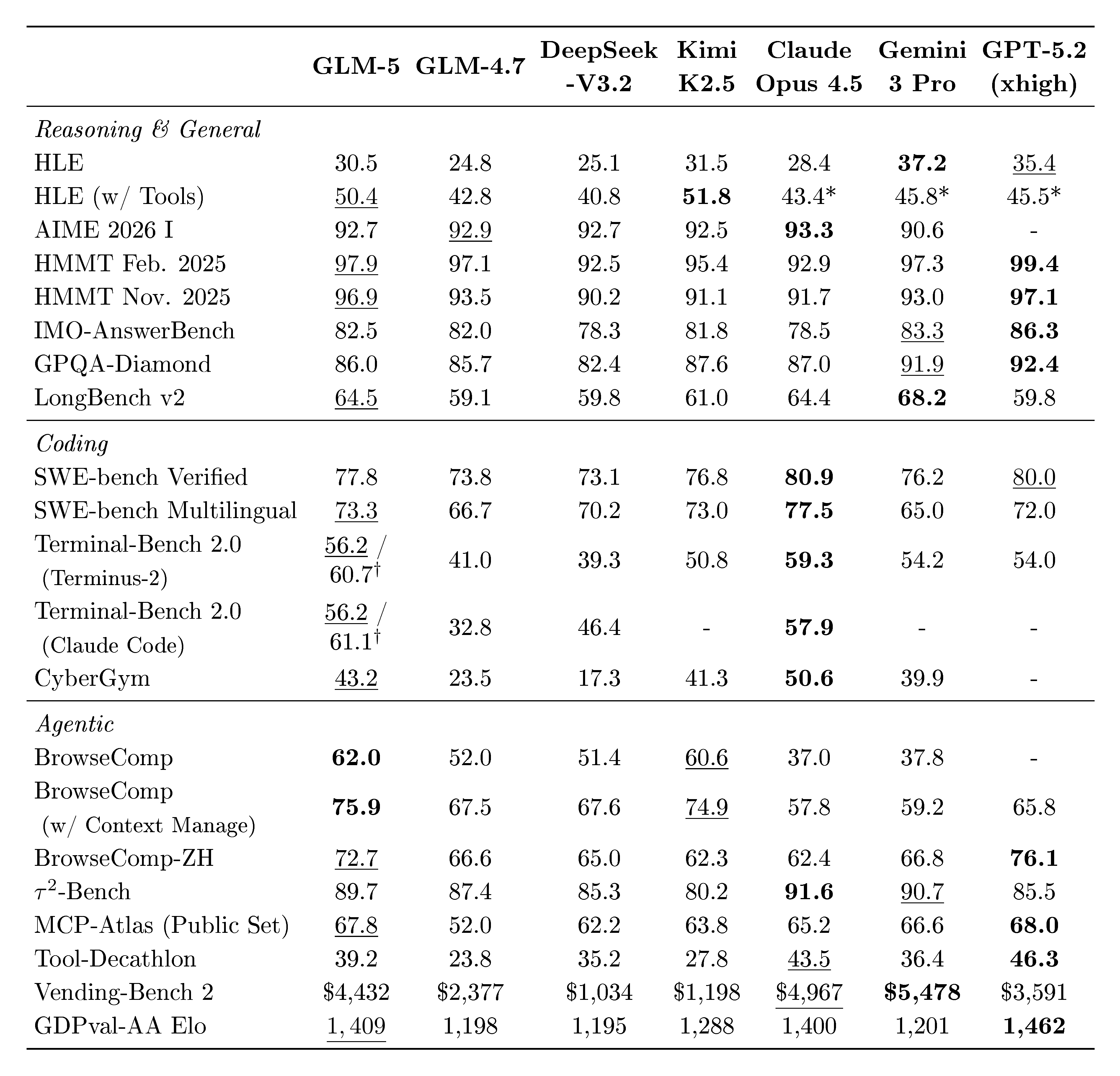

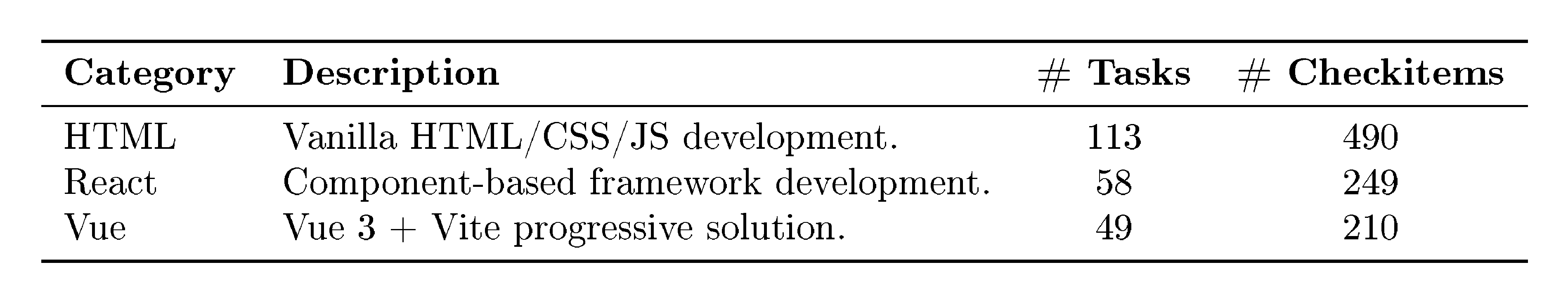

Figure 1 shows the results of GLM-5, GLM-4.7, Claude Opus 4.5, Gemini 3 Pro, and GPT-5.2 (xhigh) on 8 agentic, reasoning, and coding benchmarks: Humanity's Last Exam [1], SWE-bench Verified [2], SWE-bench Multilingual [3], Terminal-Bench 2.0 [4], BrowseComp [5], MCP-Atlas [6], $\tau^2$-Bench [7, 8], Vending Bench 2 [9]. On average, GLM-5 achieves about 20% improvement over our last version GLM-4.7, and is comparable to Claude Opus 4.5 and GPT-5.2 (xhigh), and better than Gemini 3 Pro.

GLM-5 scores 50 on the Intelligence Index v4.0 and is the new open weights leader (Cf. Figure 2), up from GLM-4.7's score of 42 - an 8 point jump driven by improvements across agentic performance and knowledge/hallucination. This is the first time an open weights model has achieved a score of 50 on the Artificial Analysis Intelligence Index v4.0.

:::: {cols="2"}

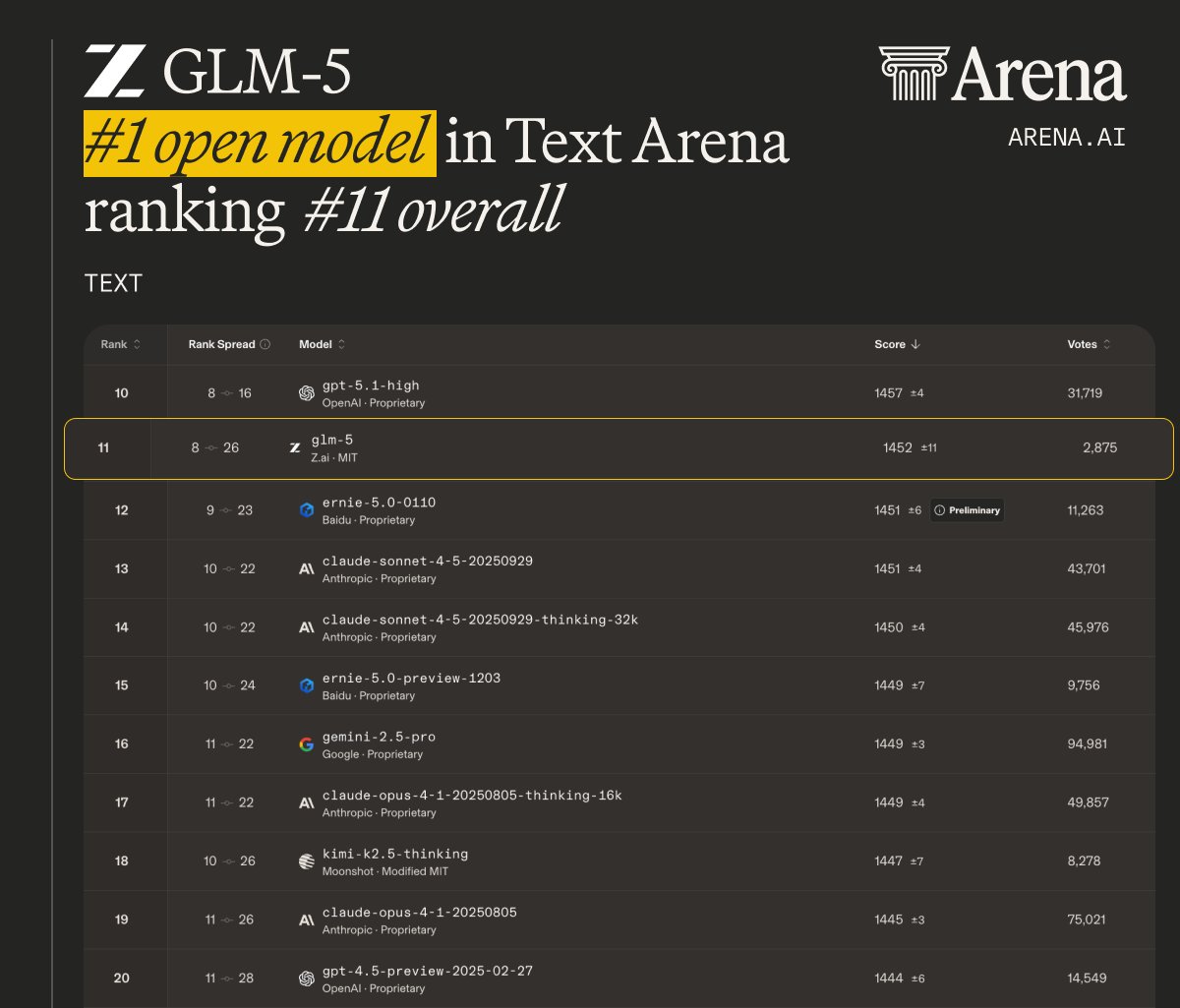

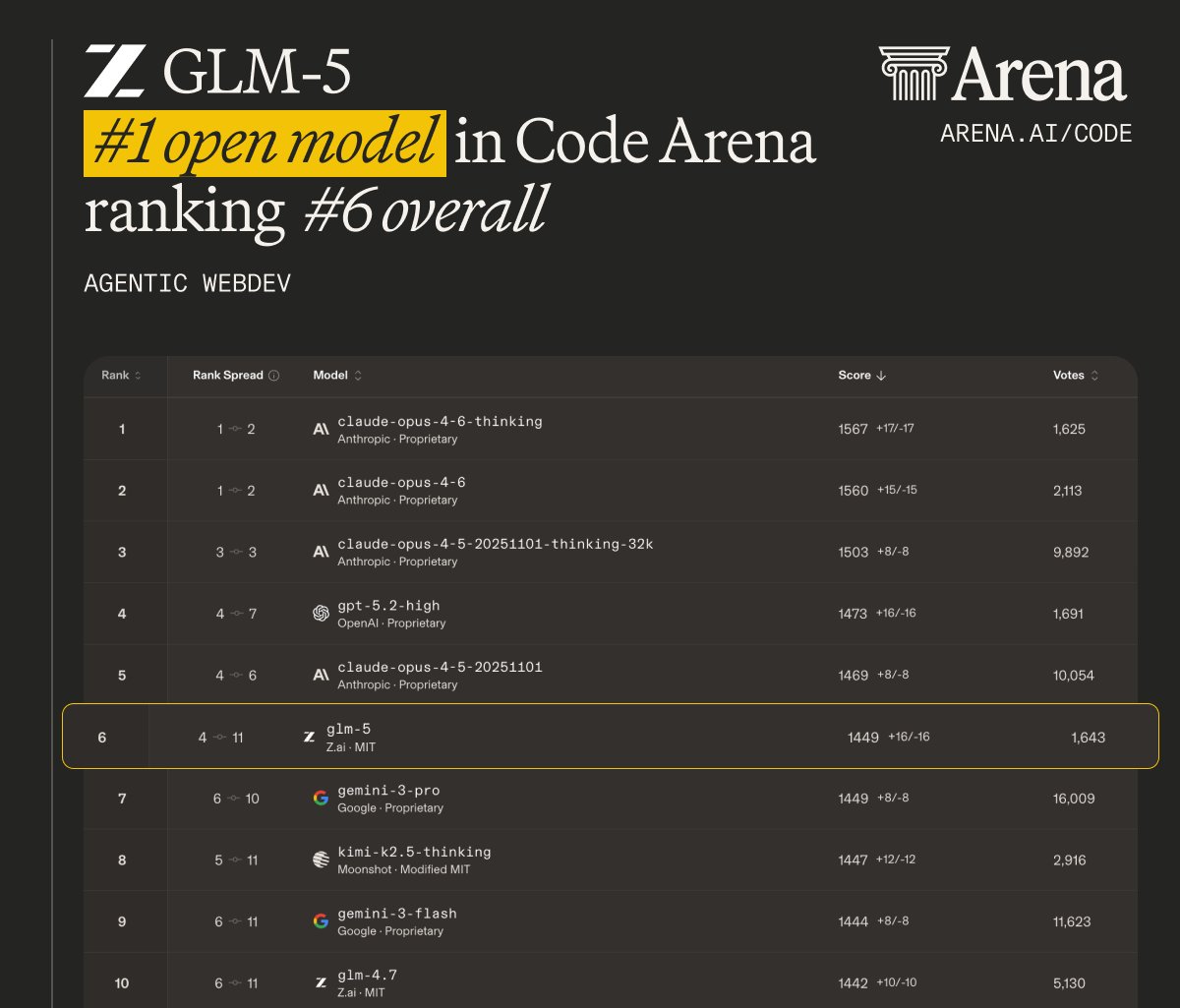

Figure 3: On LMArena, GLM-5 is the #1 open model in both Text Arena and Code Arena. ::::

:::: {cols="2"}

Figure 7: Results on several long-horizon tasks. Left: Vending-Bench 2; Right: CC-Bench-V2. ::::

LMArena, initiated by UC Berkeley, is a transparent, shared space to evaluate and compare frontier AI capabilities by human judgment with millions of real tasks, including writing, coding, reasoning, designing, searching, and creating. The large volume of human interactions generates signals of real-world utility, making it different from the other static benchmarks. Figure 3 shows that GLM-5 again is the #1 open model in both Text Arena and Code Arena, and overall on par with Claude-Opus-4.5 and Gemini-3-pro.

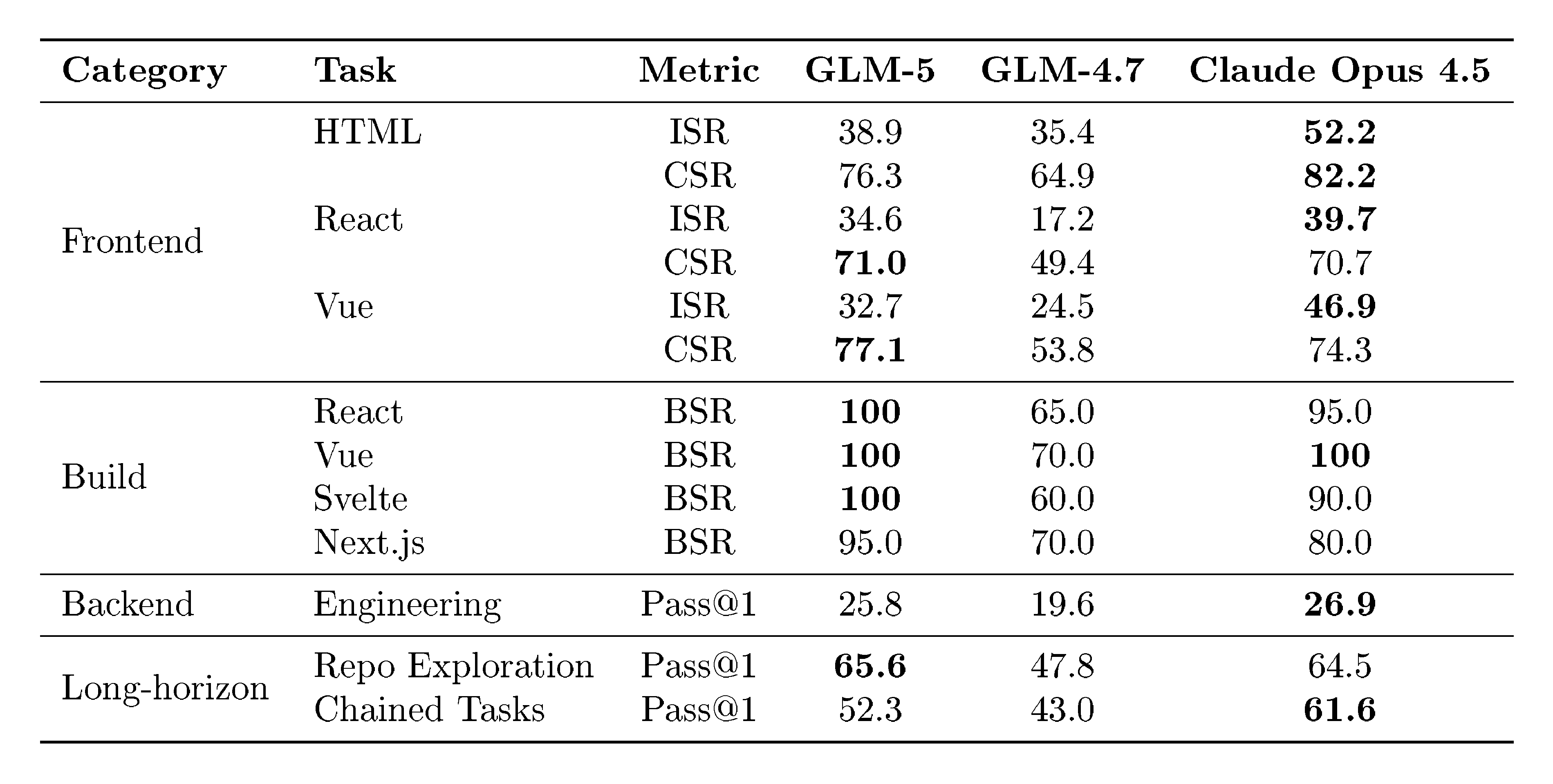

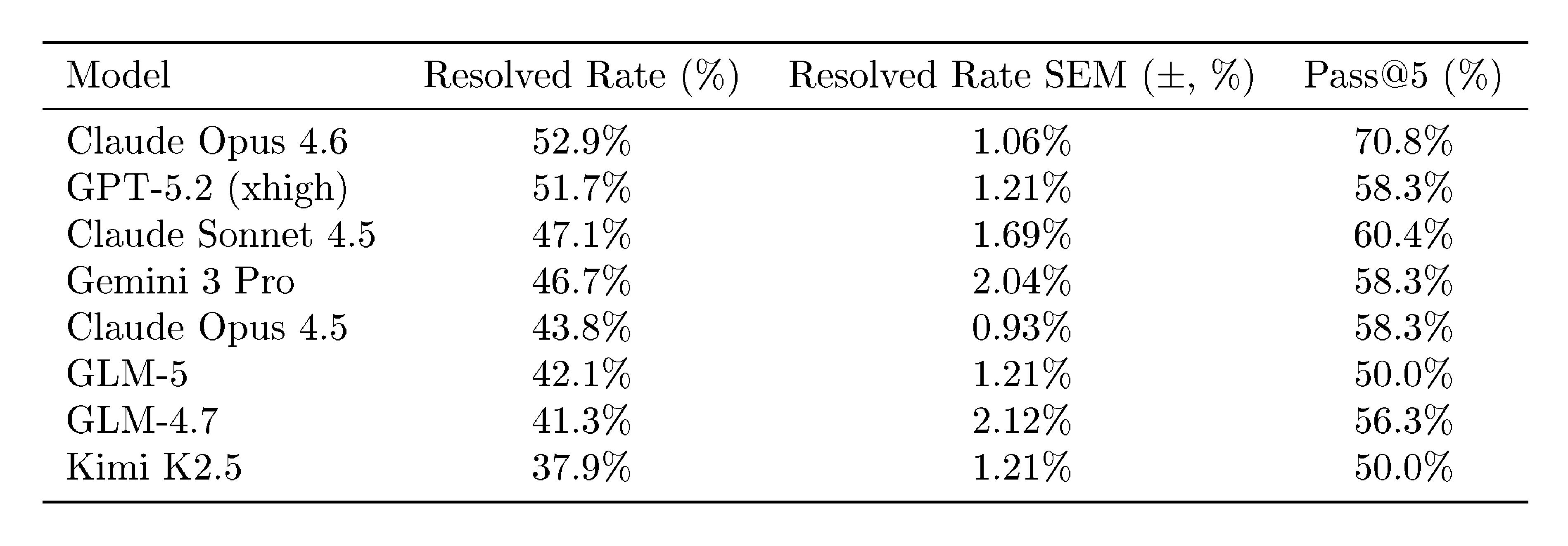

Long-term coherence in agents becomes more and more important. Coding agents can now write code autonomously for hours, and the length and breadth of tasks AI models are able to complete are likely to increase. We use two benchmarks, Vending-Bench 2 and CC-Bench-V2, to evaluate how GLM-5 is able to complete long-horizon tasks. Vending-Bench 2 is a benchmark for measuring AI model performance in running a business over long time horizons. Models are tasked with running a simulated vending machine business over a year and are scored on their bank account balance at the end. Figure 7 (left) shows that GLM-5 ranks #1 among all open-source models, finishing with a final account balance of $4, 432. It approaches Claude Opus 4.5, demonstrating strong long-term planning and resource management. Figure 7 (right) further shows results on our internal evaluation suite CC-Bench-V2. GLM-5 significantly outperforms GLM-4.7 across frontend, backend, and long-horizon tasks, narrowing the gap with Claude Opus 4.5.

Methods.

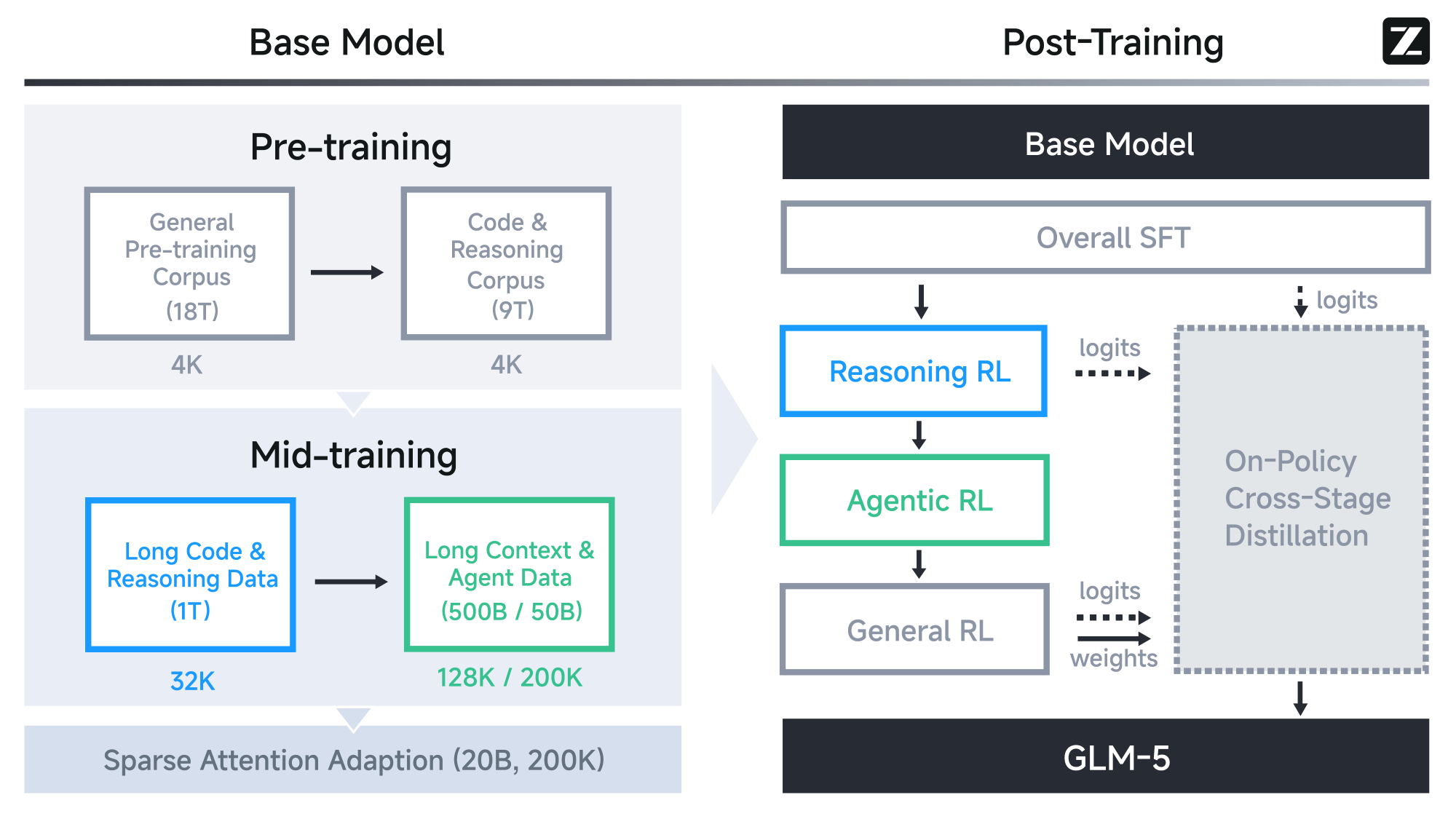

Figure 11 shows the overall training pipeline of GLM-5. Our Base Model training began with a massive 27 trillion token corpus, prioritizing code and reasoning early on. We then employed a distinct Mid-training phase to progressively extend context length from 4K to 200K, focusing specifically on long-context agentic data to ensure stability in complex workflows. In Post-Training, we moved beyond standard SFT. We implemented a sequential Reinforcement Learning pipeline—starting with Reasoning RL, followed by Agentic RL, and finishing with General RL. Crucially, we utilized On-Policy Cross-Stage Distillation throughout this process to prevent catastrophic forgetting, ensuring the model retains its sharp reasoning edge while becoming a robust generalist. In summary, the leap in GLM-5’s performance is driven by the following technical contributions:

First, we adopt DSA (DeepSeek Sparse Attention) [10], a novel architectural innovation that significantly reduces both training and inference costs. While GLM-4.5 improved efficiency through a standard MoE architecture, DSA allows GLM-5 to dynamically allocate attention resources based on token importance, drastically lowering the computational overhead without compromising long-context understanding or reasoning depth. With DSA, we scale the model parameters up to 744B and extend the training token budget to 28.5T tokens.

Second, we have engineered a new asynchronous reinforcement learning infrastructure. Building on the "slime" framework and the decoupled rollout engines initialized in GLM-4.5, our new infrastructure further decouples generation from training to maximize GPU utilization. This system allows for massive-scale exploration of agent trajectories without the synchronization bottlenecks that previously hampered iteration speed, significantly improving the efficiency of our RL post-training pipeline.

Third, we present novel asynchronous Agent RL algorithms designed to enhance the quality of autonomous decision-making. In GLM-4.5, we utilized iterative self-distillation and outcome supervision to train agents. For GLM-5, we have developed asynchronous algorithms that allow the model to learn from diverse, long-horizon interactions continuously. These algorithms are specifically optimized to improve the model's planning and self-correction capabilities in dynamic environments, directly contributing to our dominance in real-world coding scenarios.

Last, one more technical contribution lies in the fact that, from the first day, GLM-5 is full-stack adapted to Chinese GPU ecosystems. We have successfully completed deep optimization—spanning from underlying kernels to upper-level inference frameworks—across seven mainstream domestic chip platforms, including Huawei Ascend, Moore Threads, Hygon, Cambricon, Kunlunxin, MetaX, and Enflame.

With these advancements, GLM-5 stands not just as a more powerful model but as a more efficient and practical foundation for the next generation of AI agents. We release GLM-5 to the community to further advance the frontier of efficient, agentic general intelligence.

2. Pre-Training

Section Summary: The pre-training stage of GLM-5 builds a base language model by processing a massive 28.5 trillion tokens to enhance general language understanding and coding abilities, followed by mid-training for advanced agent and long-context handling. The model scales up to 744 billion parameters across 256 experts and 80 layers, roughly doubling the size of its predecessor, while introducing innovations like Multi-latent Attention to save memory and speed up long sequences, and Multi-token Prediction with shared parameters to boost efficiency during inference. Additionally, it incorporates DeepSeek Sparse Attention through continued training from a dense model, enabling cost-effective handling of 128,000-token contexts by focusing only on relevant information.

Similar to GLM-4.5, the base model of GLM-5 goes through two stages: pre-training for general language and coding capacity, and mid-training for agentic and long-context capacity. We extend the training token budget for all the training stages of GLM-5, totaling 28.5 trillion tokens for the base model.

2.1 Architecture

Model size scaling.

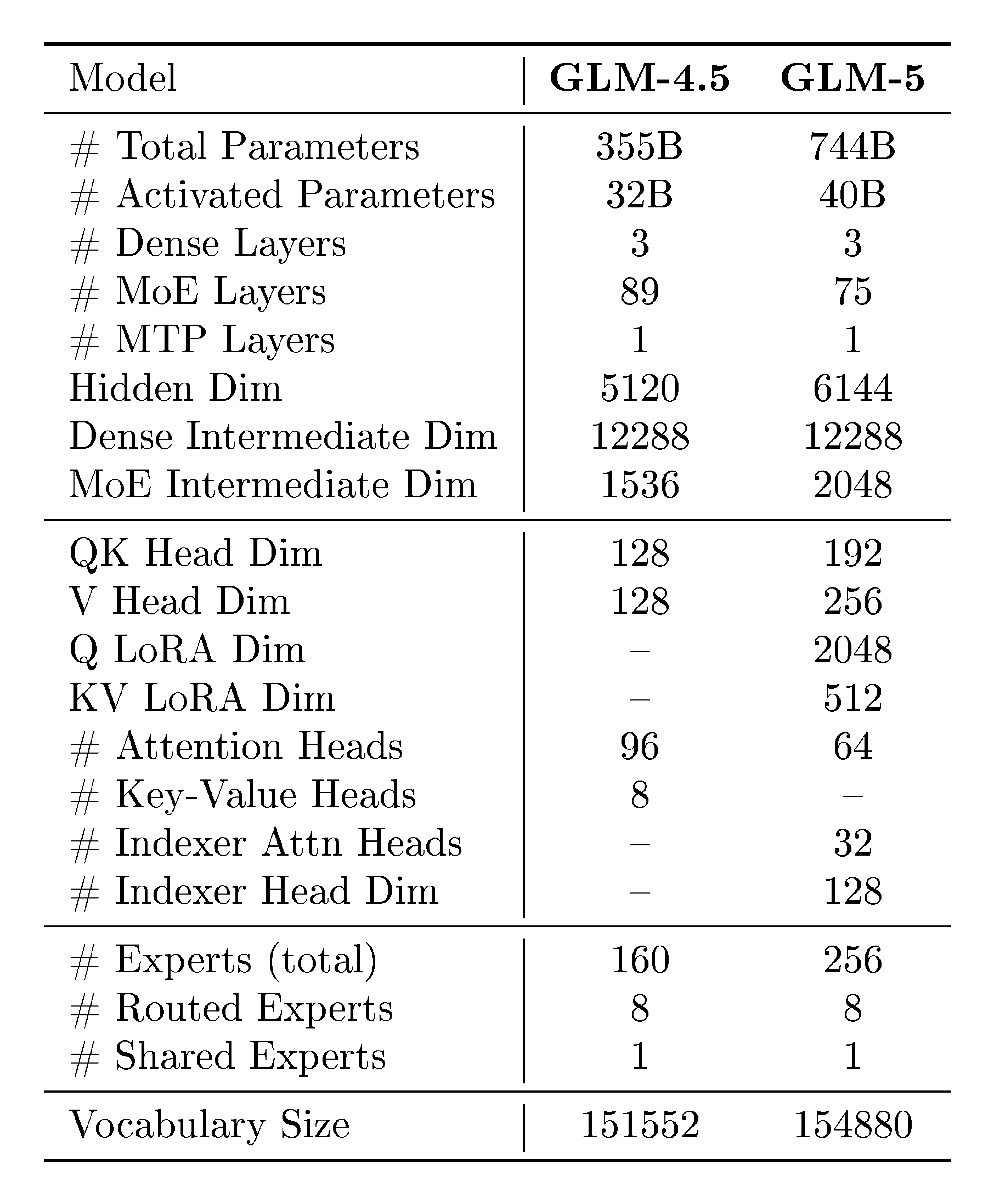

GLM-5 scales to 256 experts and reduces its layer count to 80 to minimize expert parallelism communication overhead. This results in a 744B parameter model (40B active parameters), doubling the total size of GLM-4.5, which utilized 355B total and 32B active parameters.

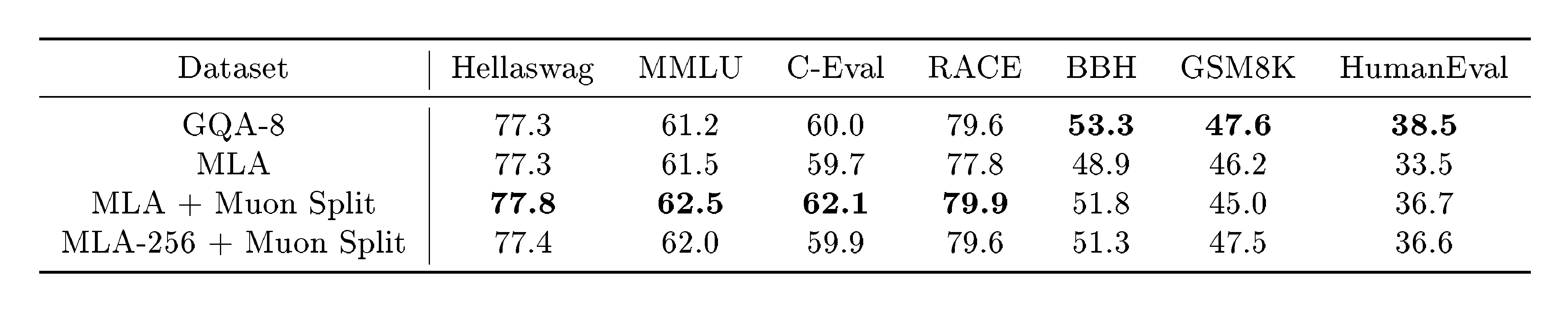

::: {caption="Table 1: Evaluation results for GQA-8 and variants of MLA."}

:::

Multi-latent Attention.

By employing reduced key-value vectors, Multi-latent attention (MLA) [11] matches the effectiveness of Grouped-Query Attention (GQA) but offers superior GPU memory savings and faster processing for long-context sequences.

However, in our experiments with Muon optimizer, we find that MLA with a 576-dimension latent KV-cache cannot match the performance of GQA with 8 query groups (denoted as GQA-8, 2048-dimension KV-cache). To overcome the performance gap, we propose an adaptation to the recipe of Muon optimizer in GLM-4.5. In the original recipe, we apply matrix orthogonalization to the up-projection matrices $W^{UQ}, W^{UK}, W^{UV}$ for multi-head queries, keys, and values. Instead, we split these matrices into smaller matrices for different heads and apply matrix orthogonalization to these independent matrices. The method, denoted as Muon Split, enables projection weights for different attention heads to update at different scales. As shown in Table 1, the method effectively improves the performance of MLA to match that of GQA-8. In practice, we also find that with Muon Split, the scale of attention logits of GLM-5 remains stable during pre-training without any clipping strategy.

Another disadvantage of MLA is its high computational cost during decoding. In decoding, MLA performs a 576-dimensional dot product, higher than the 128-dimensional computation of GQA. While the number of attention heads in DeepSeek-V3 is selected according to the roofline of H800 [12], it is inappropriate for other hardware. Given the Multi-head Attention (MHA) style of MLA during training and prefilling, we increase the head dimension from 192 to 256 and decrease the number of attention heads by 1/3. This keeps the training computation and the number of parameters constant while decreasing the decoding computation. The variant, denoted as MLA-256 in Table 1, matches the performance of MLA under Muon Split.

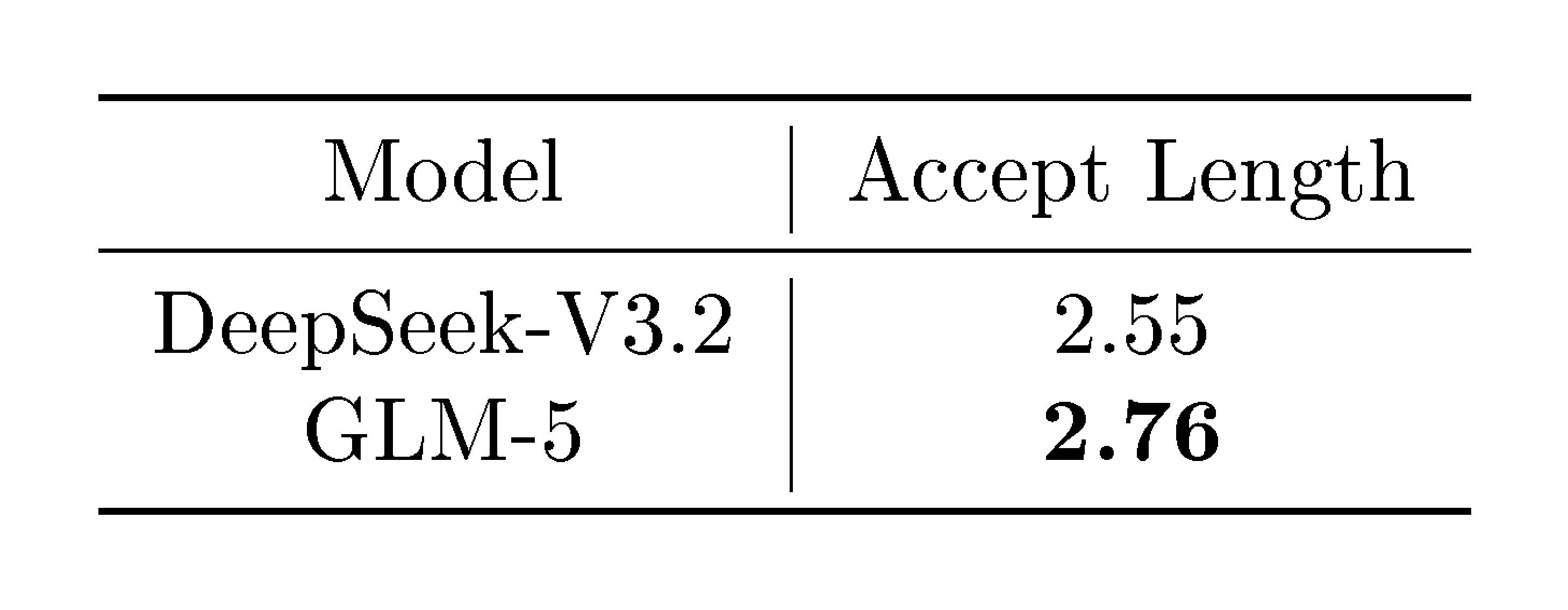

::: {caption="Table 2: Comparison of accept lengths of DeepSeek-V3.2 and GLM-5."}

:::

Multi-token Prediction with Parameter Sharing.

Multi-token prediction (MTP) [13, 14] increases the performance of base models and acts as draft models for speculative decoding [15]. However, during training, to predict the next $n$ tokens, $n$ MTP layers are required. As a result, the memory usage of MTP parameters and the kv cache scales linearly with the number of speculative steps. Instead, DeepSeek-V3 is trained with a single MTP layer and predicts the next 2 tokens during inference. The training-inference discrepancy reduces the acceptance rate of the second token. Therefore, we propose sharing the parameters of 3 MTP layers during training. This keeps the memory cost of the draft model consistent with DeepSeek-V3 while increasing the acceptance rate. In Table 2, we show that the acceptance length of GLM-5 is longer than DeepSeek-V3.2, given the same number of speculative steps (4) in our private prompt set.

2.1.1 Continued Pre-Training with DeepSeek Sparse Attention (DSA)

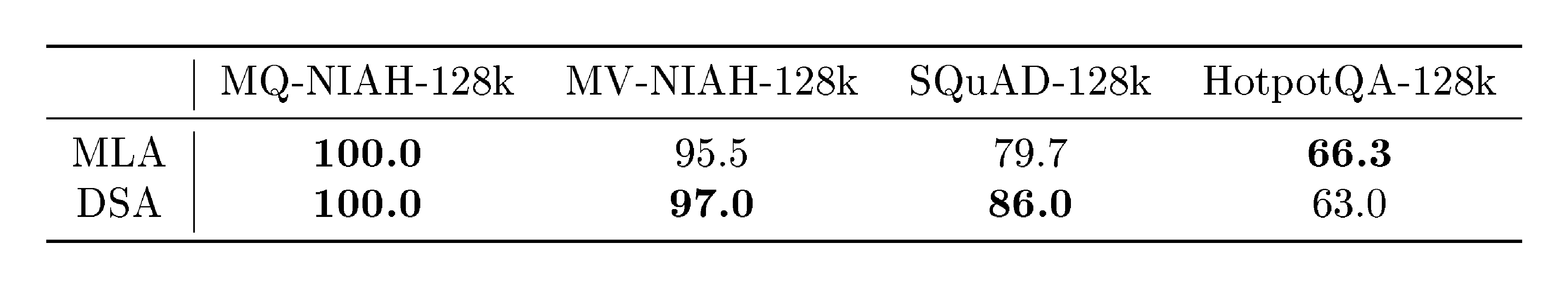

::: {caption="Table 3: Comparison of long-context benchmarks between MLA and DSA base models."}

:::

We use DSA in our training. The core philosophy of DSA [10] is to replace the traditional dense $O(L^2)$ attention—which becomes prohibitively expensive at $128\text{K}$ contexts—with a dynamic, fine-grained selection mechanism. Unlike fixed patterns (like sliding windows), DSA "looks" at the content to decide which tokens are important. What makes DSA particularly interesting from a researcher's perspective is how it was introduced via Continued Pre-Training from a dense base model. This avoided the "astronomical" cost of training from scratch. The transition follows a two-stage "dense warm-up and sparse training adaptation" strategy. DeepSeek-V3.2-Exp maintains the same benchmark performance as its dense predecessor, proving that 90% of attention entries in long contexts are indeed redundant. DSA reduces the attention computation by roughly 1.5-2× for long sequences, which is very important for the reasoning-heavy agents we are building, being able to handle 128K contexts at half the GPU cost.

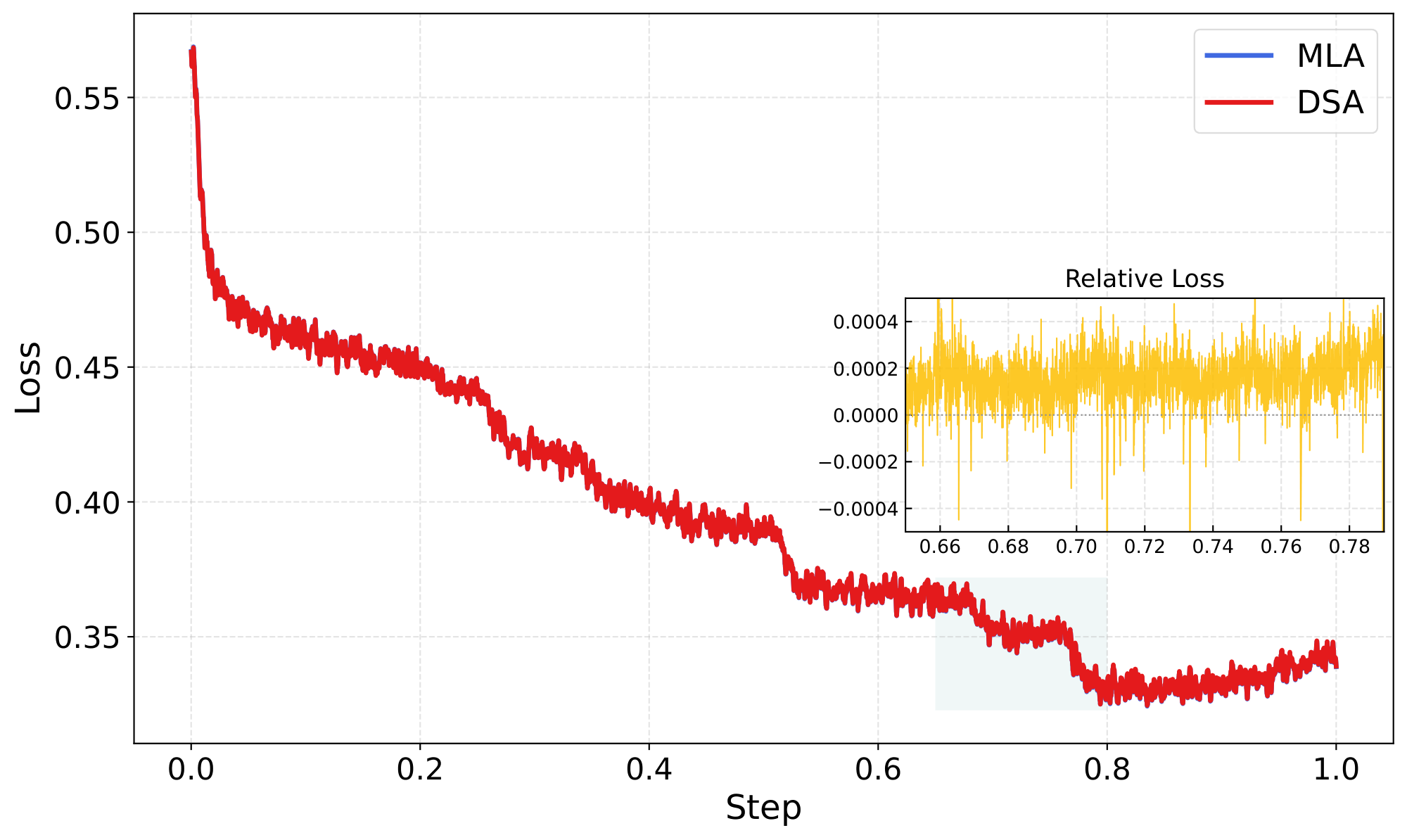

The DSA training begins from the base model at the end of mid-training. The warm-up stage goes through 1000 steps with each step trained on 14 sequences of 202, 752 tokens and a maximum learning rate of 5e-3. The sparse adaptation stage follows the training data and hyperparameters of mid-training and goes through 20B tokens. Although the training budget is much smaller than that of DeepSeek-V3.2 (943.7B tokens), we find that it is enough to adapt the DSA model to match the performance of the original MLA model. As shown in Table 3, the long-context performance of the DSA model is close to that of the MLA model. To further validate the effectiveness of DSA training, we fine-tune the DSA and MLA models with the same SFT data, respectively, and find that the two models tie in training loss and evaluation benchmarks.

2.1.2 Ablation Study of Efficient Attention Variants

Beyond DSA [16], we explore several alternative efficient attention mechanisms based on GLM-9B[^3]. The baseline employs group query attention across all 40 layers and has been fine-tuned with a 128K-token context window. We evaluate the following approaches:

[^3]: One of our GLM-4 series models, available at https://github.com/zai-org/GLM-4

- {{Sliding Window Attention (SWA) Interleave:}} A fixed alternating pattern of full-attention and windowed-attention layers applied uniformly across the network.

- {{Gated DeltaNet (GDN)} [17]}: A linear attention variant that replaces the quadratic softmax attention computation with a gated linear recurrence, reducing the computational cost of attention from quadratic to linear in sequence length.

Building on these baselines, we propose two improvements:

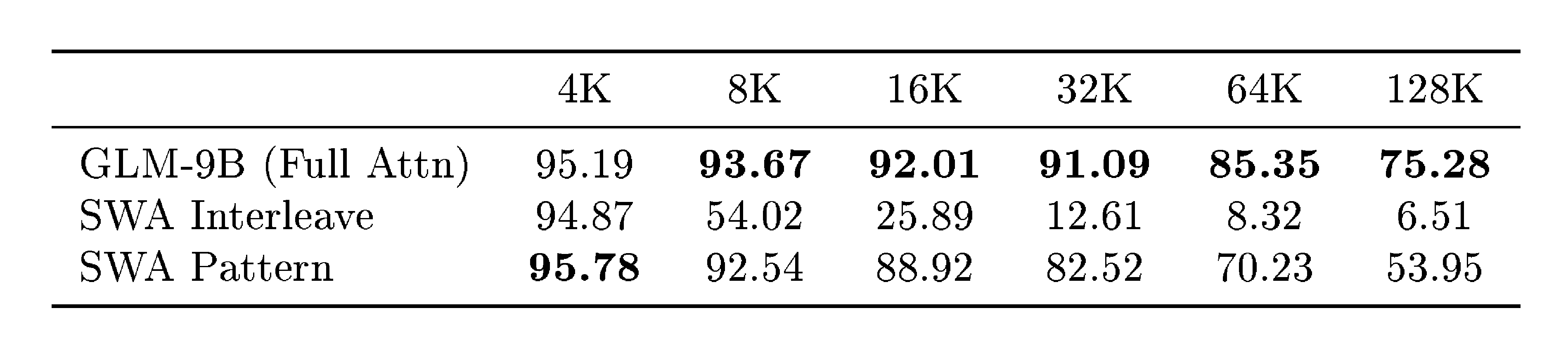

- SWA Pattern (Search-Based): Inspired by PostNAS [18], we introduce a search-based adaptation method that identifies the optimal subset of layers for SWA conversion while retaining full attention in the remaining layers. We employ a beam search strategy to determine the configuration that maximizes performance on long-context downstream tasks. To mitigate computational costs, we conduct the search exclusively at a 16K context length and generalize the resulting pattern to all other input lengths. Specifically, we use a beam size of 8, optimizing two layers per step; for GLM-9B (40 layers), the process converges in approximately 10 steps. At each step, candidate patterns are evaluated on the RULER benchmark [19] at 16K context length, and the top-8 candidates are retained for the subsequent step. The final derived pattern is

SFSSFFSSSFFFFSSFSFFFFFFSFSFSSFSSFSFSSFSSS, whereSandFdenote SWA and full-attention layers, respectively. As shown in Table 4, this search-based configuration significantly outperforms the fixed interleaved approach. Notably, despite being optimized only at 16K, the pattern exhibits robust length generalization, maintaining effective across all tested context lengths. - SimpleGDN: A minimalist linearization strategy designed for maximal reuse of pre-trained weights, improving upon GDN for continual-training adaptation. We remove the

Conv1dand explicit gating modules entirely and instead directly map the pre-trained Query, Key, and Value projection weights into the linear recurrence formulation. This simplification eliminates the need for additional parameters while preserving the efficiency benefits of linear attention.

::: {caption="Table 4: RULER benchmark results for the GLM-9B baseline and two SWA variants without any additional training. Both SWA methods use a 1:1 ratio of full-attention to SWA layers with a 4096-token window size. The search-based SWA pattern is discovered once at 16k context length and applied uniformly across all input lengths."}

:::

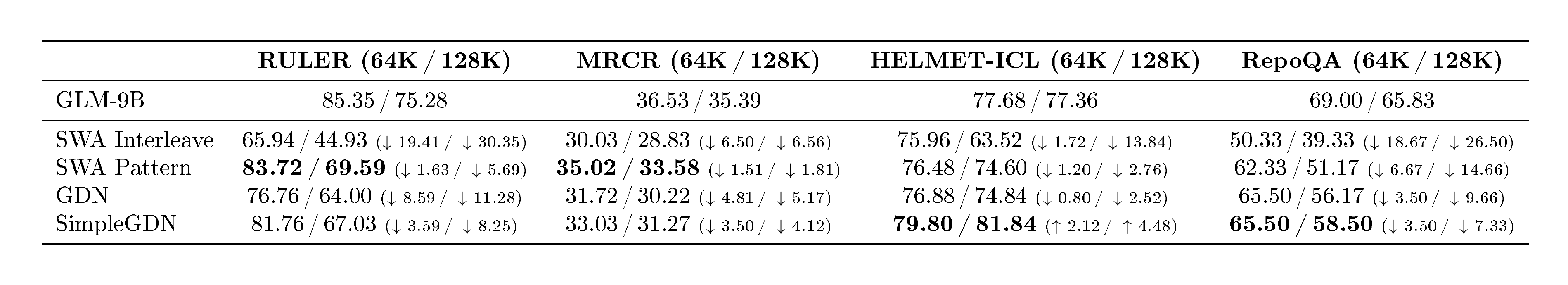

We evaluate all methods on four long-context benchmarks: RULER [19], MRCR^4, HELMET-ICL [20], and RepoQA [21]. Results are summarized in Table 5. We continually train each method on 190B tokens with a 64K context length, maintaining a 1:1 ratio between efficient attention layers and full attention layers. For the GDN and SimpleGDN methods, we follow the Jet-Nemotron [18] pipeline.

::: {caption="Table 5: Long-context benchmark results. All efficient attention variants are continual-trained from the GLM-9B full-attention baseline. SWA pattern denotes search-based layer selection; SWA interleave denotes the fixed alternating pattern. $\Delta$ @64K and $\Delta$ @128K show the difference relative to the full-attention baseline at 64K and 128K context lengths, respectively."}

:::

The results in Table 5 reveal a clear trade-off hierarchy among efficient attention methods. Naively interleaved sliding window attention (SWA) causes catastrophic degradation on long-context tasks (e.g., $-$ 30.35 on RULER@128K), while search-based layer selection substantially narrows this gap by preserving full attention where it matters most. Linear attention variants such as GDN further improve quality but at the cost of additional parameters; SimpleGDN strikes the best balance by maximally reusing pre-trained weights. Nevertheless, all of these methods incur an inherent accuracy gap on fine-grained retrieval tasks—up to 5.69 points on RULER@128K and 7.33 on RepoQA@128K—due to the unavoidable information loss introduced by efficient attention mechanisms during continual-training adaptation, even when half of the layers retain full attention. In contrast, DSA is lossless by construction: its lightning indexer achieves token-level sparsity without discarding any long-range dependencies, enabling application to all layers with no quality degradation.

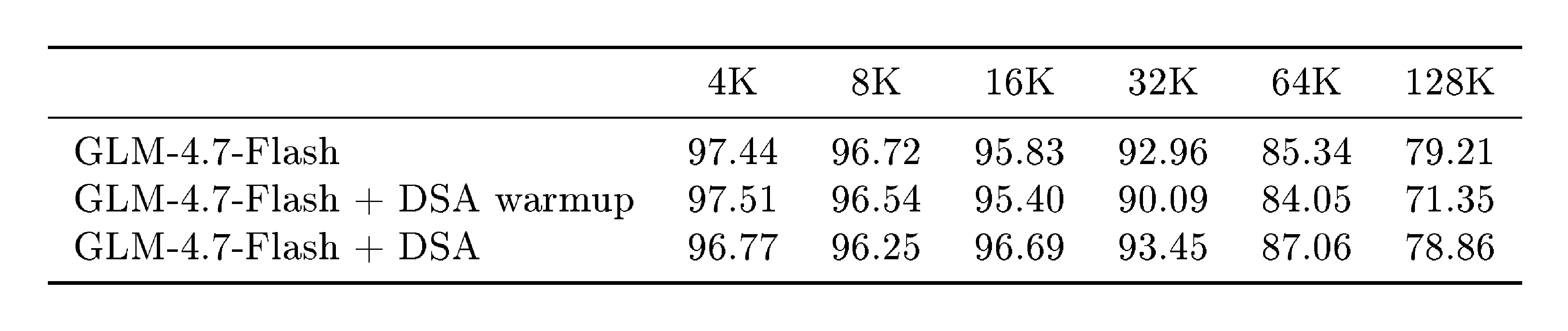

To verify this, we conduct a small-scale DSA experiment on GLM-4.7-Flash^5 with multi-latent attention. Following the standard DSA recipe, training proceeds in two stages: (i) a warmup phase that trains only the indexer for 1{, }000 steps (batch size 16) while keeping all base-model weights frozen, followed by (ii) a joint-training phase in which both the model and the indexer are co-trained on 150B tokens. Table 6 summarizes the results on RULER across context lengths from 4K to 128K. Even the warmup-only variant ({GLM-4.7-Flash + DSA warmup}) already preserves the vast majority of baseline performance; the drop is modest and concentrated at the longest context window (128K: $79.21 \to 71.35$), while shorter contexts remain virtually unaffected. After the full 150B-token joint-training phase, GLM-4.7-Flash + DSA closes nearly all of this residual gap: it surpasses the baseline at 16K ($+0.86$), 32K ($+0.49$), and 64K ($+1.72$), while incurring only a 0.35-point deficit at 128K.

::: {caption="Table 6: RULER benchmark results for the GLM-4.7-Flash with DSA. The warmup-only variant trains only the indexer while keeping the base model frozen, the full DSA variant jointly trains both for 150B tokens."}

:::

2.2 Pre-training Data

Web.

Building upon the GLM-4.5 data pipeline, we refined our selection criteria for massive web datasets. We introduced another DCLM [22] classifier based on sentence embeddings to identify and aggregate additional high-quality data beyond standard classifiers. To address the challenge of long-tail knowledge, we utilized a World Knowledge classifier—optimized via Wikipedia entries and LLM-labeled data—to distill valuable information from otherwise medium-low-quality data.

Code.

We expand the code pre-training corpus with refreshed snapshots from major code hosting platforms and a larger collection of code-containing web pages, resulting in a 28% increase in fuzzily deduplicated unique tokens. To improve corpus integrity and reduce noise, we fix metadata alignment issues in Software Heritage code files and adopt a more accurate language classification pipeline. We follow GLM-4.5’s quality-aware sampling strategy for source code and code-related web documents. In addition, we train dedicated classifiers for a broader set of low-resource programming languages (e.g., Scala, Swift, Lua, etc.), improving sampling quality for these languages.

Math & Science.

We collect high-quality math & science data from webpages, books, and papers to further increase the reasoning abilities. Specifically, the content extraction pipelines for webpages and PDF parsing mechanisms for books and papers are refined to increase data quality. We adopt large language models to score candidate documents and only retain the most educational content. For long-context documents, we develop a chunk-and-aggregate scoring algorithm to increase scoring accuracy. Filtering pipelines are conducted to strictly avoid the use of synthetic, AI-generated, or template-based data.

2.3 Mid-Training

Building upon the mid-training framework introduced in GLM-4.5, we scale up both the training volume and the maximum context length in GLM-5 to further strengthen the model's reasoning, long-context, and agentic capabilities.

Extended context and training scale.

We progressively extend the context window across three stages: 32K (1T tokens), 128K (500B tokens), and 200K (50B tokens). Compared to the 128K maximum in GLM-4.5, the additional 200K stage substantially improves the model's ability to process ultra-long documents and complex multi-file codebases. Long documents and synthetic agent trajectories are up-sampled at the later stages accordingly.

Software engineering data.

We retain the paradigm of concatenating repo-level code files, commit diffs, GitHub issues, pull requests, and relevant source files into unified training sequences. In GLM-5, we relax the repository-level filtering criteria to broaden the pool of eligible repositories, yielding approximately 10 million issue–PR pairs, while strengthening quality filtering at the individual issue level to reduce noise. We also retrieve a larger set of relevant files for each issue–PR pair, resulting in richer development contexts and broader coverage of real-world software engineering scenarios. After filtering, the issue–PR portion of the dataset comprises approximately 160B unique tokens.

Long-context data.

Our long-context training set comprises both natural and synthetic data. Natural data is curated from books, academic papers, and documents from general pre-training corpora employing multi-stage filtering (PPL, deduplication, length) and upsampling knowledge-intensive domains. In synthetic data construction, inspired by NextLong [23] and EntropyLong [24], we employed diverse techniques to build long-range dependencies. Highly similar texts were aggregated via interleaved packing to produce sequences, aiming to mitigate the lost-in-the-middle phenomenon and improve performance across a range of long-context tasks. At the 200K stage, we additionally incorporated a small proportion of MRCR-like data, with multiple variants designed to extend OpenAI's original paradigm, to strengthen recall in extended multi-turn dialogues. Empirically, we find that increasing data diversity progressively enhances the model's long-context performance; notably, a subsequent 200K mid-training stage, building upon the initial 128K phase, further bolstered the model’s performance even within the 128K context window.

2.4 Training Infrastructure

2.4.1 Memory Efficiency

Flexible MTP placement. Under interleaved pipeline parallelism [25], model components are flexibly assigned to stages. The MTP module spans embedding, transformer, and output components. It incurs substantially higher memory usage than other modules, leading to stage-level imbalance. We co-locate the MTP output layer with the main output layer on the final stage to enable parameter sharing, while placing its embedding and transformer components on the preceding stage. This reduces memory pressure on the final stage and improves balance across pipeline ranks.

Pipeline ZeRO2 gradient sharding. Each pipeline rank maintains multiple stages [25], and naively each stage requires a full gradient buffer for accumulation and optimizer updates. Inspired by ZeRO2 [26], we shard gradients across data-parallel ranks so that each stage stores only a 1/dp fraction of the full gradients. In addition, we retain full accumulation buffers for only two stages at a time and reuse them via double buffering. While one stage buffer accumulates gradients over consecutive microbatches, gradient synchronization for the previous stage buffer is performed in parallel. This reduces persistent gradient memory to per-stage sharded buffers plus only two full buffers for rolling accumulation, without additional synchronization overhead in practice.

Zero-redundant communication for the Muon distributed optimizer. Naive Muon implementations all-gather full model parameters on each data-parallel rank, causing transient memory spikes and redundant communication. We restrict all-gather to parameter shards owned by each rank and overlap local computation with shard communication. This eliminates redundant communication and significantly reduces optimizer-related peak memory overhead.

Pipeline activation offloading. During pipeline warmup, forward execution advances ahead of backpropagation, prolonging the lifetime of intermediate activations. We offload the activations to host memory after forward execution and reload them prior to backward execution [27]. Offloading is applied at layer granularity to further reduce peak memory usage. Combined with fine-grained recomputation, this largely eliminates the need to keep activations resident in GPU memory. Offload and reload are scheduled to overlap with computation while avoiding contention with peer-to-peer communication and MoE token routing (dispatch and combination). This substantially reduces the activation memory footprint with near-zero overhead.

Sequence-chunked output projection for peak memory reduction. Output projection and cross-entropy loss incur transient memory overhead from storing activations for backpropagation and promoting them to higher precision during loss computation. To reduce this overhead, we partition the input sequence into smaller chunks and compute projection and loss independently on each chunk, completing forward and backward passes and releasing activations before moving on. As a result, peak memory usage decreases as the number of chunks increases. With an appropriate chunk count, this approach alleviates output-layer memory pressure while maintaining performance comparable to unchunked execution.

2.4.2 Parallelism Efficiency

Efficient deferred weight gradient computation. To reduce pipeline bubbles, we defer some weight gradient computation of the critical path [28]. Fine-grained deferral with optimized storage and communication overlap improves throughput while keeping memory overhead bounded.

Efficient long-sequence training. Longer sequences exacerbate load imbalance across data parallel and pipeline parallel groups. We address this through workload-aware sequence reordering, dynamic redistribution of attention computation, and flexible partitioning of data parallel ranks into context-parallel groups of varying sizes [29, 30]. A hierarchical all-to-all overlaps intra-node and inter-node communication for QKV tensors to reduce latency.

2.4.3 INT4 Quantization-aware training

To provide better accuracy at low-precision, we apply INT4 QAT in the SFT stage. Moreover, to further mitigate the training time overhead, we have developed a quantization kernel applicable to both training and offline weight quantization, which ensures bitwise-identical behavior between training and inference.

3. Post-Training

Section Summary: The post-training phase of GLM-5 refines the base model into a versatile assistant excelling in reasoning, coding, and task automation through a step-by-step alignment process, beginning with supervised fine-tuning on diverse data like chats, math problems, and coding scenarios, and extending the model's context handling to over 200,000 tokens. This fine-tuning introduces flexible thinking modes, such as step-by-step reasoning before responses or preserving thoughts across conversations to maintain consistency in long tasks. Subsequent reinforcement learning stages specialize in reasoning and agent behaviors, followed by a general alignment step and a distillation technique to boost performance without losing prior gains.

The post-training phase of GLM-5 aims to transform the base model into a highly capable assistant with robust reasoning, coding, and agentic abilities. As illustrated in Figure 11, our pipeline follows a progressive alignment strategy: starting with multi-task Supervised Fine-Tuning (SFT) that introduces sophisticated interleaved thinking modes, followed by specialized Reinforcement Learning (RL) stages for reasoning and agentic tasks, and concluding with a general RL stage for human-style alignment. By leveraging on-policy cross-stage distillation as the final refinement, GLM-5 effectively mitigates capability regression while harnessing the performance gains from each training stage.

3.1 Supervised Fine-Tuning

Compared with GLM-4.5, GLM-5 significantly expands the scale of Agent and Coding data during the SFT stage. The SFT corpus of GLM-5 covers three major categories:

- General Chat: question answering, writing, role-playing, translation, multi-turn dialogue, and long-context interactions;

- Reasoning: mathematical, programming, and scientific reasoning;

- Coding & Agent: frontend and backend engineering code, tool calling, coding agents, search agents, and general-purpose agents.

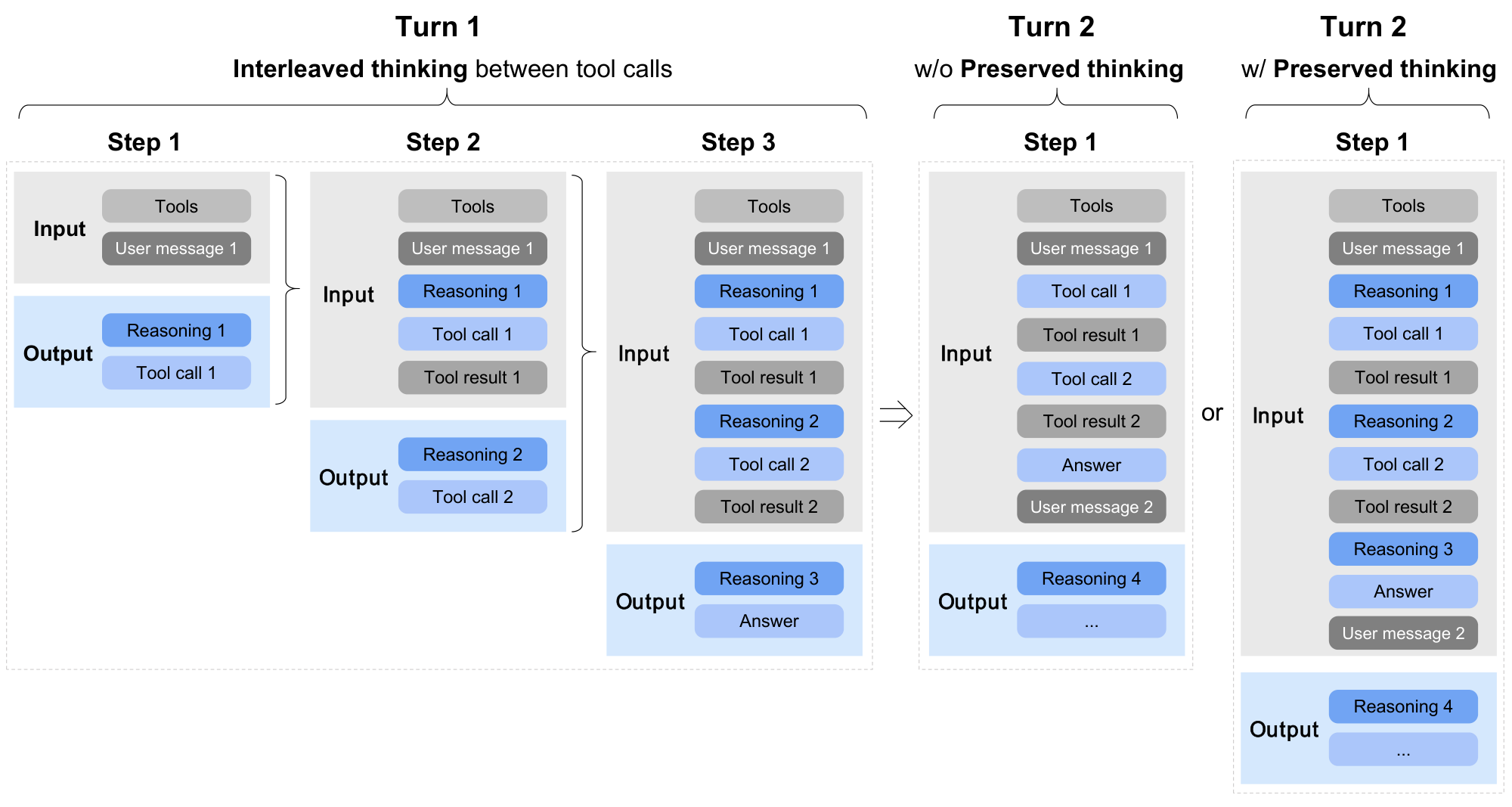

Additionally, GLM-5 extends the maximum context length to 202, 752 tokens during SFT. Along with an updated chat template, the model supports three distinct thinking characteristics (see Figure 13), including:

- Interleaved Thinking: the model thinks before every response and tool call, improving instruction following and the quality of generation[^1].

- Preserved Thinking: in coding agent scenarios, the model automatically retains all thinking blocks across multi-turn conversations, reusing existing reasoning instead of re-deriving it from scratch. This reduces information loss and inconsistencies, and is well-suited for long-horizon, complex tasks[^2].

- Turn-level Thinking: the model supports per-turn control over reasoning within a session—disable thinking for lightweight requests to reduce latency/cost, enable it for complex tasks to improve accuracy and stability.

[^1]: Interleaved thinking was first introduced by https://platform.claude.com/docs/en/build-with-claude/extended-thinking#interleaved-thinking

[^2]: Preserved thinking was also adopted by Claude since Opus 4.5. See https://platform.claude.com/docs/en/build-with-claude/extended-thinking#thinking-block-preservation-in-claude-opus-4-5-and-later

By thinking between actions and maintaining consistency across turns, GLM-5 achieves more stable and controllable behavior on complex tasks.

For General Chat, we optimize the response style to be more logical and concise compared to GLM-4.5. For role-playing tasks, we collect and construct a broader and more diverse dataset covering multiple languages and role configurations. In particular, we define several evaluation dimensions—including instruction following, linguistic expressiveness, creativity, logical coherence, and long-dialogue consistency—and apply both automatic and human filtering to curate and refine the data.

For Reasoning tasks, we further enhance the depth of the model's reasoning. Specifically, for logical reasoning, we construct verifiable problems and synthesize high-quality data using rejection sampling. For mathematical and scientific problems, a difficulty-based filtering process is applied, retaining only problems that are challenging for the GLM-4.7 model.

For Coding and Agent tasks, compared to GLM-4.5, GLM-5 constructs a large number of execution environments to obtain high-quality trajectories, with particular emphasis on real-world scenarios and long-horizon tasks. We further improve the SFT data using expert reinforcement learning and rejection sampling. Erroneous segments within trajectories are retained but masked out in the loss function, allowing the model to learn error correction behaviors without reinforcing incorrect actions.

3.2 Reasoning RL

RL algorithm backbone.

Our RL algorithm builds upon GRPO [31] and incorporates the IcePop technique [32] to mitigate the training-inference mismatch, i.e., the discrepancy between the inference distribution and the training distribution during RL optimization. We explicitly distinguish between the training policy $\pi^{\text{train}}$, used for gradient updates, and the inference policy $\pi^{\text{infer}}$, used for trajectory sampling. Compared to the original IcePop formulation, we remove the KL regularization term to accelerate RL improvement. The final optimization loss is:

$ \begin{aligned} \mathcal{L}(\theta)= -\mathbb{E}{ x \sim \mathcal{D}, {y_i}{i=1}^{G} \sim \pi^{\text{infer}}{\theta{\text{old}}}(\cdot \mid x) } &\Bigg[\frac{1}{G} \sum_{i=1}^{G} \frac{1}{|y_i|} \sum_{t=1}^{|y_i|} \operatorname{pop} (\rho_{i, t}, 1/\beta, \beta) \ &\cdot \min!\left(r_{i, t}\hat{A}{i, t}, \operatorname{clip}!\left(r{i, t}, 1-\epsilon_{\text{low}}, 1+\epsilon_{\text{high}} \right) \hat{A}_{i, t} \right) \Bigg],

\end{aligned}\tag{1} $

where the training-inference mismatch ratio is defined as \begin{align*} \rho_{i, t} = \frac{ \pi_{\theta_{old}}^{train}(y_{i, t} \mid x, y_{i, <t}) }{ \pi_{\theta_{old}}^{infer}(y_{i, t} \mid x, y_{i, <t}) }. \end{align*}

The operator $\operatorname{pop}(\cdot)$ suppresses samples whose mismatch ratio deviates excessively: \begin{align*} \operatorname{pop}($\rho_{i, t}, 1/\beta, \beta)$ = \begin{cases} $\rho_{i, t}$, & 1/ $\beta \le \rho_{i, t} \le \beta$,

0, & otherwise. \end{cases} \end{align*}

The PPO-style importance ratio and the group-normalized advantage follow the original GRPO definition: \begin{align*} $r_{i, t}$ = $\frac{ \pi_\theta^{\text{train}}(y_{i, t}\mid x, y_{i, <t}) }{ \pi_{\theta_{\text{old}}}^{\text{train}}(y_{i, t}\mid x, y_{i, <t}) }$,

$\hat{A}_{i, t}$ = $\frac{ R_i - \operatorname{mean}(R_1, \dots, R_G) }{ \operatorname{std}(R_1, \dots, R_G) }$. \end{align*}

During training, we set hyperparameters $\beta=2, \epsilon_{\text{low}}=0.2, \epsilon_{\text{high}}=0.28$. Training is performed entirely on-policy with a group size of 32 and a batch size of 32.

DSA RL insights.

We conduct a very large-scale RL training on a model based on the DSA architecture. Compared with MLA, DSA introduces an additional indexer that retrieves the top-k most relevant key-value entries and computes attention sparsely over the retrieved subset. The retrieved top-k results are critical for RL stability. This is analogous to how MoE models use routing replay [33] to preserve the activated top-k experts to ensure training-inference consistency. However, directly adapting this strategy to indexer replay, i.e., storing the indexer’s top-k indices at every token position is clearly impractical, since the $k = 2048$ used by the indexer is much larger than the $k$ typically used in MoE, and storing all these indices would incur enormous storage costs as well as significant communication overhead between the training engine and the inference engine.

We find that adopting a deterministic top-k operator effectively resolves the training-inference mismatch in DSA indexer token selection. Compared with the non-deterministic CUDA-based top‑k implementation used in SGLang’s DSA Indexer, directly using the naive torch.topk is slightly slower but deterministic. It produces more consistent outputs and yields substantial RL gains. In contrast, other non-deterministic top‑k operators (e.g., CUDA or TileLang implementations) caused drastic performance degradation during RL after only a few steps, accompanied by a sharp drop in entropy. Therefore, throughout our RL stages, we use torch.topk as the default top-k operator in the DSA Indexer in our training engine. We also freeze the indexer parameters by default during RL to accelerate training and prevent unstable learning in the indexer.

Mixed domain reasoning RL.

In the Reasoning RL stage, we perform mixed RL training over four domains: mathematics, science, code, and tool-integrated reasoning (TIR). For mathematics and science, we curate data from both open-source datasets [34, 35] and co-developed collections with external annotation vendors. We further apply difficulty filtering to focus training on problems that GLM-4.7 solves correctly only rarely or fails consistently, while remaining solvable by stronger teacher models (e.g., GPT-5.2 xhigh and Gemini 3 Pro Preview). For code, we cover both competitive programming style tasks and scientific coding tasks. The former is primarily sourced from Codeforces and representative datasets such as TACO [36] and SYNTHETIC-2-RL [37], while the latter is constructed from internal problem pools by decomposing questions into the minimal code implementations required for correct solutions. For TIR, we reuse the more challenging subset of mathematics and science RL data, and additionally co-build STEM questions with annotation vendors that are explicitly designed to be answered with external tools. During RL training, we assign domain and source-specific judge models or evaluation systems to produce binary outcome rewards. We keep the overall mixture roughly balanced across the four domains, and consistently observe stable and significant gains in each domain under the mixed RL setting.

3.3 Agentic RL

To facilitate agentic performance of GLM-5, we develop a fully asynchronous and decoupled RL framework and optimize GLM-5 in coding and search agent tasks. Naive synchronous RL suffers from severe GPU idle time during long-horizon agent rollouts. By decoupling inference and training engines via a central Multi-Task Rollout Orchestrator, we achieve high-throughput joint training across diverse agentic workloads.

To maintain training stability under asynchronous off-policy conditions, we introduce two key mechanisms. First, a Token-in-Token-out (TITO) gateway eliminates re-tokenization mismatches by preserving exact action-level correspondence. Second, we employ a Direct Double-sided Importance Sampling, which applies a token-level clipping mechanism ($[1-\epsilon_\ell, 1+\epsilon_h]$) to rollout log-probabilities, while efficiently controlling off-policy bias without tracking historical policy checkpoints. We also employ a DP-aware routing to maximize KV-cache reuse during long-context inference for large-scale MoE models for speed up. To scaling agentic environments, we scale verifiable training environments across three domains: over 10K real-world Software Engineering (SWE), terminal tasks, and high-difficulty multi-hop search tasks. More details about agentic RL can be found in the subsequent Section 4.

3.4 General RL

Multi-dimensional optimization objectives.

We decompose the optimization objectives of General RL into three complementary dimensions: foundational correctness, emotional intelligence, and task-specific quality.

The foundational correctness dimension serves as the bedrock of response quality. It targets a broad spectrum of error types that undermine the usability of model outputs, including instruction-following failures, logical inconsistencies, factual inaccuracies, knowledge hallucinations, and language disfluencies. The goal is to minimize the error rate so that responses reach a usable baseline. We consider this a prerequisite for all subsequent optimization: a response containing factual errors or misinterpreting the user's intent can actively mislead the user, no matter how polished it may appear.

The emotional intelligence dimension optimizes user experience beyond core correctness. It aims to produce responses that are empathetic, insightful, and stylistically close to natural human communication, making interactions with the model feel more natural and engaging.

The task-specific quality dimension targets fine-grained optimization across various specific tasks. Building on the usability established by foundational correctness, it aims to elevate responses from merely correct to genuinely high-quality within each task category. This dimension covers a wide range of tasks, including writing, text processing, subjective and objective question answering, role-playing, and translation. Each task domain demands distinct reward signals, necessitating a hybrid reward system.

Hybrid reward system.

To supervise the diverse objectives above, we build a hybrid reward system that integrates three complementary types of reward signals: rule-based reward functions, outcome reward models (ORMs), and generative reward models (GRMs). Each has distinct strengths and weaknesses, and their combination is key to a stable, efficient, and scalable General RL training process.

Rule-based rewards provide precise and interpretable signals, but are limited to aspects expressible as deterministic rules. ORMs offer low-variance signals and high training efficiency, but are more susceptible to reward hacking, where the policy exploits superficial patterns rather than genuinely improving core capability. GRMs leverage language models to produce scalar or structured evaluations and are more robust to such exploitation, but tend to exhibit higher variance. By blending these three signal types, we obtain a reward system that balances precision, efficiency, and robustness, mitigating the weaknesses of any single component.

Human-in-the-loop style alignment.

A distinctive aspect of our General RL pipeline is the explicit incorporation of high-quality human-authored responses. Rather than relying solely on model-generated responses, we introduce expert human responses as stylistic and qualitative anchors. This is motivated by the observation that purely model-generated optimization tends to converge toward recognizably "model-like" patterns—often verbose, formulaic, or lacking the nuance of skilled human writing. By exposing the model to human-written exemplars, we encourage it to adopt more natural, human-aligned response patterns.

3.5 On-Policy Cross-Stage Distillation

In our multi-stage RL pipeline, sequentially optimizing for distinct objectives can lead to the cumulative degradation of previously acquired capabilities. To mitigate this issue, we perform on-policy cross-stage distillation as the final stage, adopting an on-policy distillation algorithm [38, 39, 40, 41] to swiftly recover the skills acquired in earlier SFT and RL stages (Reasoning RL and General RL). Specifically, the final checkpoints from the preceding training stages serve as teacher models, where the training prompts are sampled from the corresponding teachers' RL training sets and mixed in appropriate proportions. The training loss can be obtained by replacing the advantage term in Equation 1 with the following formula ('sg' stands for the stop gradient operation, e.g., .detach()):

$ \hat{A}{i, t} = \text{sg}\left[\log\frac{\pi{\theta_{\text{teacher}}}^{\text{infer}}(y_{i, t}\mid x, y_{i, <t})}{\pi_\theta^{\text{train}}(y_{i, t}\mid x, y_{i, <t})}\right]. $

Currently, we utilize the inference engine to fetch teachers' logits. In the future, we plan to migrate the inference backend to the training engine and uniformly adopt the Multi-Query Attention (MQA) mode of MLA for inference ($\pi_{\theta_{\text{teacher}}}^{\text{infer}}\rightarrow\pi_{\theta_{\text{teacher}}}^{\text{train}}$). During training, the group size in the GRPO algorithm is configured to 1 to increase data throughput, and the batch size is set to 1024. This is feasible at this stage because it is no longer necessary to maintain a large group of samples per prompt to estimate advantages; the advantage is computed directly from the gap with the teacher models instead.

3.6 RL Training Infrastructure: The slime Framework

We continue to use slime as the unified post-training infrastructure for GLM-5, enabling end-to-end reinforcement learning (RL) at scale. Rather than introducing new system components, GLM-5 fully leverages slime's capabilities to (1) broaden task coverage via free-form rollout customization and a server-based execution model, (2) substantially increase throughput via mixed-precision training/rollouts together with MTP and Prefill-Decode (PD) disaggregation—particularly for multi-turn RL workloads, and (3) improve robustness through heartbeat-driven rollout fault tolerance and router-level server lifecycle management.

3.6.1 Scaling Out: Flexible Training via Highly Customizable Rollouts

GLM-5’s post-training spans a diverse spectrum of objectives. To support this diversity without task-specific forks, GLM-5 leverages slime's highly customizable rollout interface together with its server-based rollout execution.

Highly customizable rollouts. slime provides a flexible interface for implementing task-specific rollout logic—including multi-turn interaction loops, tool invocation, environment feedback handling, and verifier-guided branching—without modifying the underlying infrastructure. GLM-5 leverages this capability to support a broad range of domains and training paradigms, including but not limited to reasoning RL, general RL, agentic RL, and on-policy distillation, all within a unified training stack.

Server-based rollouts via HTTP APIs. slime exposes its rollout servers and inference router through standard HTTP APIs, allowing users to interact with slime's serving layer in the same way as a conventional inference engine. This decouples rollout logic from the training process boundary: external agent frameworks and environments can call the server/router endpoints directly, while the optimization backend remains unchanged for both short-horizon single-turn training and long-horizon multi-turn trajectories.

3.6.2 Scaling Up: Tail-Latency Optimization for RL Rollouts

For RL rollouts, the optimization target is not aggregate throughput but end-to-end latency, dominated by the slowest (long-tail) sample in each step. In practice, a single straggling trajectory can stall synchronization points (e.g., batch completion, buffer readiness, trainer updates) and directly determine wall-clock progress. GLM-5 therefore fully leverages slime's latency-oriented serving and scheduling mechanisms to minimize both median latency and, more importantly, tail latency.

No-queue serving via multi-node inference with DP-attention for MLA. To avoid queueing delays, rollout requests must be served promptly even under bursty traffic, which requires substantial KV-cache capacity. GLM-5 adopts a multi-node inference deployment (e.g., EP64 and DP64 over 8 nodes) to provision sufficient distributed KV-cache. DP-attention is primarily introduced to prevent copying KV across different ranks.

Tail-latency reduction with FP8 rollouts and MTP. GLM-5 uses FP8 for rollout inference to reduce per-token latency and shorten the completion time of long trajectories. In addition, GLM-5 leverages slime's support for Multi-Token Prediction (MTP), which is especially effective under the small-batch decoding regime typical in RL rollouts. Since tail latency is often driven by small-BS stragglers (e.g., rare long contexts, complex multi-turn reasoning, tool-heavy traces), MTP provides disproportionately large benefits on the long tail, improving the time-to-completion of the slowest sample and thus reducing step-level stall time.

PD disaggregation to prevent prefill-decode interference in multi-turn RL. In multi-turn settings, long-prefix prefills are frequent (conversation history, tool traces, code context). Under DP-attention, mixing prefill and decode on the same serving resources can create severe interference: a heavy prefill can preempt or disrupt ongoing decodes on the server, preventing other samples from making continuous progress and sharply worsening tail latency. GLM-5, therefore, leverages slime's Prefill–Decode (PD) disaggregation. By running prefills and decodes on dedicated resources, decodes remain stable and uninterrupted, enabling long-horizon samples to progress continuously and significantly improving tail behavior in multi-turn agentic RL.

3.6.3 Rollout Robustness: Heartbeat-Driven Fault Tolerance

At scale, transient failures (e.g., individual server crashes, network issues, or performance degradation) are inevitable. GLM-5 leverages slime's heartbeat-driven fault-tolerance to ensure training continuity under such events: rollout servers periodically emit heartbeats monitored by the orchestration layer, and unhealthy servers are proactively terminated and deregistered from the inference router. As a result, retries are automatically routed away from failed or degraded servers to healthy ones, preventing single-server incidents from interrupting rollouts and preserving uninterrupted end-to-end RL training.

4. Agentic Engineering

Section Summary: The section explains the shift from "vibe coding," where humans prompt AI to generate code, to "agentic engineering," where AI agents independently plan, write, and refine code for complex tasks. To handle these extended processes efficiently, GLM-5 employs a fully asynchronous reinforcement learning system that separates code generation from model training, minimizing GPU downtime and enabling multi-task training across varied scenarios like software engineering and search problems. This setup includes custom pipelines to create thousands of realistic training environments and a central orchestrator to balance workloads, ensuring stable and scalable AI development.

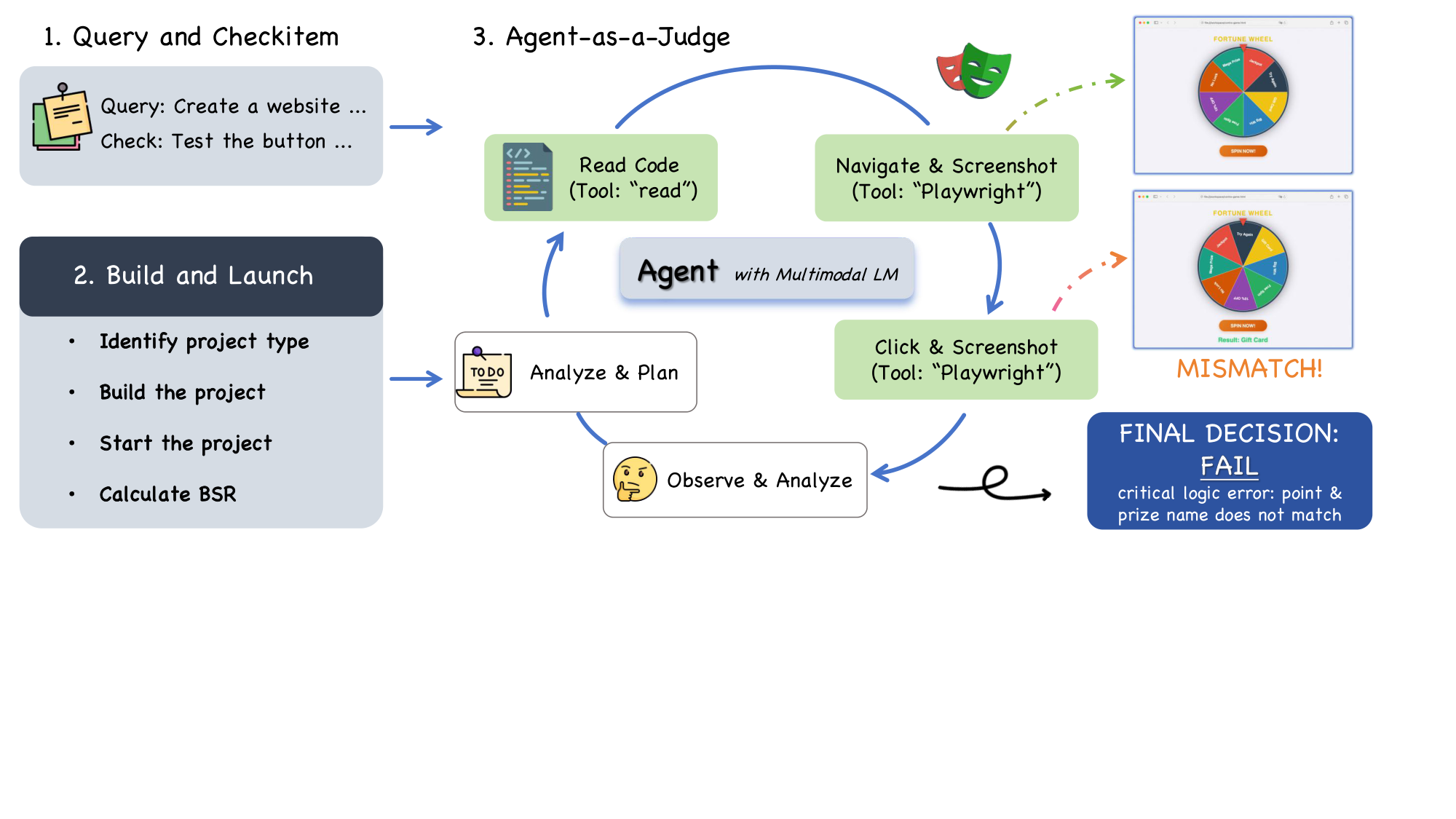

We describe the transition from vibe coding (human prompting) to agentic engineering. In vibe coding, a human prompts an AI model to write code. In agentic engineering, AI agents write the code themselves. They plan, implement, and iterate. To support these long-horizon tasks, GLM-5 utilizes a fully asynchronous and decoupled RL framework to significantly boost GPU utilization by reducing idle time during agent rollouts. To scaling agent environments, we have developed environment-building pipelines. For coding tasks, we set up real-world software engineering issues and terminal tasks by creating over 10, 000 verifiable training scenarios. For search agents, we develop an automatic and scalable complex multi-step reasoning data synthesis pipeline to build data for agentic training.

4.1 Asynchronous RL for Agentic Tasks

To conduct RL for agent tasks, we design a fully asynchronous and decoupled RL infrastructure that efficiently handles long-horizon agent rollouts and supports flexible multi-task RL training across diverse agent frameworks.

We adopt the group-wise policy optimization algorithm for RL training. For each problem $x$, we sample $K$ agent traces ${y_1, \dots, y_K}$ from the previous policy $\pi_{\text{old}}$, and optimize the model $\pi_\theta$ with respect to the following objective:

$ L(\theta) = \mathbb{E}{x\sim\mathcal{D}}!\left[\frac{1}{K}\sum{i=1}^{K} \left(r(x, y_i) - \bar{r}(x) \right) \right], $

where $\bar{r}(x) ;=; \frac{1}{K}\sum_{i=1}^{K} r\bigl(x, y_i\bigr)$ is the mean reward of the sampled responses. It is noted that only model-generated tokens are used for optimization, and the environment feedback is ignored in loss computation.

4.1.1 Asynchronous RL Design for Agentic Training

Due to the long-tail nature of the rollout process, naive synchronous RL training introduces substantial bubbles during the rollout stage because of the severely imbalanced generation of agentic tasks, which can cause large GPU idle time. To improve training throughput, we adopt a fully asynchronous training paradigm for Agentic RL to boost GPU utilization and training efficiency. Concretely, we decouple the training engine and the inference engine onto different GPU devices. The inference engine continuously generates trajectories. Once the number of generated trajectories reaches a predefined threshold, the batch is sent to the training engine to update the model. To reduce policy lag and keep the training approximately on-policy, the model weights used by the rollout engine are periodically synchronized with those of the training engine. The training engine updates the model parameters and pushes the new weights back to the inference engine every $K$ gradient updates. While asynchrony could significantly improve overall training efficiency, it also means that different trajectories may be generated by different versions of the model, introducing a severe off-policy issue. Since the weight update considers a different optimization problem due to the changing rollout policy, we also reset the optimizer after each weight update of the inference engine.

Server-based multi-task training design.

To address the heterogeneity of trajectory generation in multi-task RL, where different tasks typically rely on distinct tool sets and task-specific rollout logic, we introduce a server-based Multi-Task Rollout Orchestrator for multi-task RL training. This component is designed to ensure seamless compatibility between the slime RL training framework and diverse downstream tasks through a central orchestrator with multiple registered task services. Specifically, each task implements its own rollout and reward logic as an independent microservice, which is registered with the central orchestrator for management and scheduling. During the rollout stage, the central orchestrator controls the per-task rollout ratio and generation speed to achieve balanced data collection across tasks. Crucially, we standardize trajectories from all agentic tasks into a unified message-list representation. This enables joint training of complex agentic frameworks (e.g., Software Engineering task) while also supporting centralized post-processing and logging for heterogeneous workloads. This design cleanly isolates task-specific logic from the core training loop, enabling seamless integration with multi-task RL training. Serving as the backbone of the GLM-5 training infrastructure, this orchestrator supports over 1k concurrent rollouts and enables automated, dynamic adjustment of task sampling ratios, as well as fine-grained monitoring of task progress.

4.1.2 Optimizing Asynchronous Training Stability

Token-in-Token-out vs. Text-in-Text-out.

In an RL rollout setting, token-in-token-out (TITO) means the training pipeline consumes the exact tokenization and decoded-token stream produced by the inference engine, and uses it directly to build trajectories for learning. In contrast, text-in-text-out treats the rollout engine as a black box that returns finalized text; the trainer then reconstructs the trajectory by re-tokenizing that text (and often re-deriving boundaries and truncation) before computing losses. This seemingly small choice is consequential: re-tokenization can introduce subtle mismatches in token boundaries, whitespace/normalization handling, truncation, or special-token placement, which in turn can corrupt step alignment between actions and rewards/advantages—especially when rollouts are streamed, truncated, or interleaved across many actors. We find token-in-token-out is critical for asynchronous RL training because it preserves exact action-level correspondence between what was sampled and what is optimized while enabling actors to emit trajectory fragments (token IDs + metadata) immediately without a lossy text round-trip and without waiting for post-hoc re-tokenization on the learner side. In practice, we implement a TITO Gateway that intercepts all generation requests from rollout tasks and records each trajectory’s token IDs and metadata. This design isolates the cumbersome token ID processing from downstream agent rollout logic, while avoiding re-tokenization mismatches during RL training.

Direct double-sided importance sampling for token clipping.

Unlike the synchronous RL training setting in Section 3, in the asynchronous setting, rollout engines may undergo multiple updates during a single trajectory generation, which renders the tracking of exact behavior probabilities $\pi_{\theta_{\text{old}}}$ computationally prohibitive. Otherwise, we have to maintain an extensive history of model checkpoints ${\pi_{\theta_{\text{old}}^{(1)}}, \dots, \pi_{\theta_{\text{old}}^{(N)}}}$, which is infeasible in practical implementation.

To resolve this, we first employ a simplified token-level importance sampling mechanism that reuses the log-probabilities generated during rollout as a direct behavior proxy. By calculating the importance sampling ratio as $r_t(\theta) = \frac{\pi_{\theta}}{\pi_{\text{rollout}}}$ and discarding the traditional $\pi_{\theta_{\text{old}}}$, we eliminate the computational overhead of separate old-policy inference. Second, we employ a double-sided calibration token-level masking strategy. Instead of the asymmetric clipping used in standard PPO, we restrict the trust region to $[1-\epsilon_\ell, 1+\epsilon_h]$, where $\epsilon_\ell$ and $\epsilon_h$ are clipping hyperparameters. Tokens falling outside this interval are entirely masked from gradient computation to prevent instabilities caused by extreme policy divergence. This shares similarities with the IcePop mechanism [42], yet our strategy is simpler by further removing the $\pi_{\theta_{\text{old}}}$ and achieving more stable training.

Formally, the optimization objective with token-level clipping can be written as:

$ L(\theta) = \mathbb{E}_t \left[f(r_t(\theta), \epsilon_l, \epsilon_h) \hat{A}t \log \pi{\theta}(a_t|s_t) \right] $

In this formulation, the importance sampling ratio $r_t(\theta)$ is computed as:

$ r_t(\theta) = \exp\left(\log \pi_\theta(a_t|s_t) - \log \pi_{\text{rollout}}(a_t|s_t) \right) $

Stability is further enforced via the calibration function $f(x; \epsilon_\ell, \epsilon_h)$:

$ f(x; \epsilon_\ell, \epsilon_h) = \begin{cases} x, & \text{if } 1-\epsilon_\ell < x < 1+\epsilon_h \ 0, & \text{otherwise} \end{cases} $

In the experiments, we find that reusing rollout log-probabilities accepts a controlled degree of off-policy bias to circumvent the need for historical policy tracking while boosting training stability.

Dropping off-policy and noisy samples.

In asynchronous RL, overly long trajectories can become highly off-policy, which may destabilize training. To filter out these severely off-policy samples, we log the policy weight version used by the rollout engine at generation time. Specifically, for each response we record the sequence of model versions involved, $(w_0, \ldots, w_k)$ with $w_0 < \cdots < w_k$. Let $w'$ denote the current policy version. We discard a sample if its oldest rollout version is too stale, i.e., if $w' - w_0 > \tau$, where $\tau$ is a predefined threshold. This removes trajectories that lag too far behind the current policy.

Additionally, coding-agent sandboxes can be inherently unstable and may fail for reasons unrelated to the model (e.g., environment crashes). Such failures introduce noisy training signals because they reflect environment instability rather than the model’s capability. To mitigate this, we record the failure reason for each sample and exclude samples that fail due to environment collapse. For group-based sampling methods such as GRPO, removing failed samples can leave an incomplete group. In that case, we pad the group by repeating valid samples if the number of valid samples exceeds half of the group size; otherwise, we drop the entire group. This procedure reduces spurious reward noise and improves training stability.

DP-aware routing for acceleration.

We propose a DP-aware routing mechanism to preserve KV cache locality under Data Parallelism (DP) for large-scale MoE inference. In multi-turn agentic workloads, sequential requests from the same rollout share an identical prefix. To maximize KV reuse, we enforce rollout-level affinity: all requests belonging to a given agent instance are routed to the same DP rank. Concretely, we introduce a stateful routing layer that maps each rollout ID to a fixed DP rank using consistent hashing. This mapping remains stable across turns, eliminating cross-rank cache misses. To prevent long-term imbalance, we combine hashing with lightweight dynamic load rebalancing over the hash space. This design avoids redundant prefill computation without requiring KV synchronization across DP ranks. As rollout length increases, prefill cost remains proportional to incremental tokens rather than total context length. The result is improved end-to-end latency and higher effective throughput for long-context agentic inference.

4.2 Environment Scaling for Agents

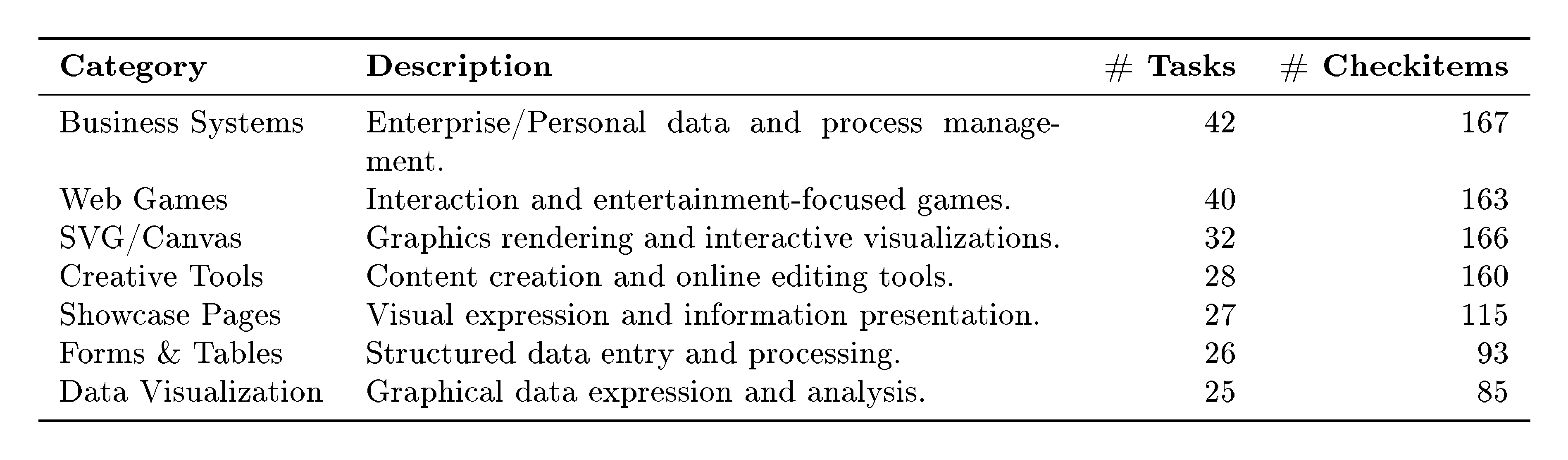

To support reinforcement learning across diverse agentic tasks, we construct verifiable, executable environments that provide grounded feedback for both code-centric and content-generation workflows. For agentic coding tasks, we develop two environment-building pipelines that construct verifiable executable environments: an environment setup pipeline built upon real-world software engineering issues, and a synthesis pipeline for terminal-agent environments. Beyond coding, we further introduce a slide generation environment, in which the agent operates over structured HTML with executable rendering and layout-based verification.

4.2.1 Software Engineering (SWE) Environments

Before constructing executable environments, we collect a large corpus of real-world Issue-Pull Request (PR) pairs and apply rigorous rule-based and LLM-based filtering to ensure the acquisition of authentic, high-quality issue statements. We categorize these instances into different task types–bug fixing, feature implementation, refactoring, and others–and include the necessary task requirements to ensure that the model's implementation is consistent with the test patch. We employ an environment setup pipeline based on the RepoLaunch ([43]) framework that scales the construction of executable environments from real-world SWE issues. This pipeline automatically analyzes a repository’s installation and dependency setup to build an executable environment and generate test commands, then leverages LLM to generate language-aware log-parsing functions from test outputs, enabling the extraction of Fail-to-Pass (F2P) and Pass-to-Pass (P2P) test cases. Using this pipeline, we construct over 10k verifiable environments across thousands of repositories spanning 9 programming languages, including Python, Java, Go, C, CPP, JavaScript, TypeScript, PHP, and Ruby.

4.2.2 Terminal Environments

Synthesis from seed data.

To build verifiable terminal-agent environments at scale, we design an agentic data synthesis pipeline comprising three phases: task draft generation, concrete task implementation, and iterative task optimization. Starting from a set of seed tasks collected from real-world software engineering and terminal-based computer-use scenarios, we leveraged LLM to brainstorm and generate a large pool of verifiable terminal-task drafts. These drafts are then instantiated by a construction agent into concrete tasks in the Harbor [44] format, including structured task descriptions, Dockerized execution environments, and corresponding test scripts. Subsequently, a refine agent inspects and iteratively refines the generated tasks according to manually defined rubrics, ensuring that Docker images can be built reliably, test cases are consistent with task specifications, and the environments are robust against potential exploits or shortcuts. Overall, the pipeline yields thousands of diverse and verifiable terminal-agent environments with Docker construction accuracy exceeding 90%.

Synthesis from web-corpus.

We develop a scalable, automated pipeline and construct LLM-verified terminal-based coding tasks based on web corpus, using a closed-loop design where the constructing agent also serves as its own first-pass evaluator. First, we collect a large-scale corpus of code-relevant web pages and apply a data quality classifier to retain only high-quality content, discarding pages that are predominantly non-technical or lack substantive code content. From the filtered subset, we further identify web pages amenable to terminal-style task formulation. We then apply stratified sampling across topic categories and difficulty levels to ensure distributional balance and diversity in the resulting task pool. Second, we prompt a coding agent with the Harbor task construction specification^6, including the task schema, formatting requirements, and exemplar tasks, alongside each selected source web page. The agent is instructed to (i) synthesize a complete terminal task grounded in the web page content, and (ii) execute the Harbor validation script against its own output. Upon validation failure, the agent iteratively diagnoses and revises the task until it passes all automated checks. Only tasks that successfully clear this self-verification loop are admitted into the final dataset.

4.2.3 Search Tasks

For deep-search information-seeking tasks, we build a data-synthesis pipeline that produces challenging multi-hop QA pairs. Each question requires multi-step reasoning grounded in evidence aggregated from multiple web sources.

Web Knowledge Graph (WKG) Construction and Question Generation. Starting from trajectories of an early-stage search agent, we collect and deduplicate all encountered URLs, retaining over two million high-information web pages across diverse domains. The LLM performs semantic parsing for entity recognition, noise filtering, and structured information extraction. The WKG is continuously updated with new pages and refined using downstream verification signals via entity alignment, attribute normalization, relation consolidation, and semantic-consistency corrections. Based on the WKG, we sample low- to mid-frequency entities as seed nodes and expand their multi-hop neighborhoods to form complete subgraphs, while controlling expansion to reduce overlap. Using prompts targeting high-difficulty, multi-domain reasoning, we convert each subgraph into a question that implicitly encodes multi-entity relational chains.

High-Difficulty Question Filtering and Verification. We apply a three-stage pipeline to balance difficulty and correctness: (1) Remove questions that a tool-free reasoning model correctly answers in at least one of eight independent attempts. (2) Filter out questions solvable by an early-stage agent with basic search, browsing, and computation within a few steps. (3) Apply a verification agent for bidirectional validation: we collect candidate answers from the search trajectories in stage 2, then independently verify the question–answer consistency for both the candidates and the annotated ground truth, rejecting samples with non-unique answers, inconsistent evidence, or incorrect labels. This yields high-quality, high-difficulty, reliable multi-hop QA pairs.

4.2.4 Inference with Context Management for Search Agents

We find that the performance on BrowseComp [5] is sensitive to both the judge prompt and the judge model, and open-source judges can introduce systematic bias. To ensure consistency and reproducibility, we standardize all judge-based components using the official OpenAI evaluation prompt and the proprietary model o3-mini as the judge. Our case studies indicate this configuration aligns best with human-annotated ground truth, so we adopt it for all search agent evaluations.

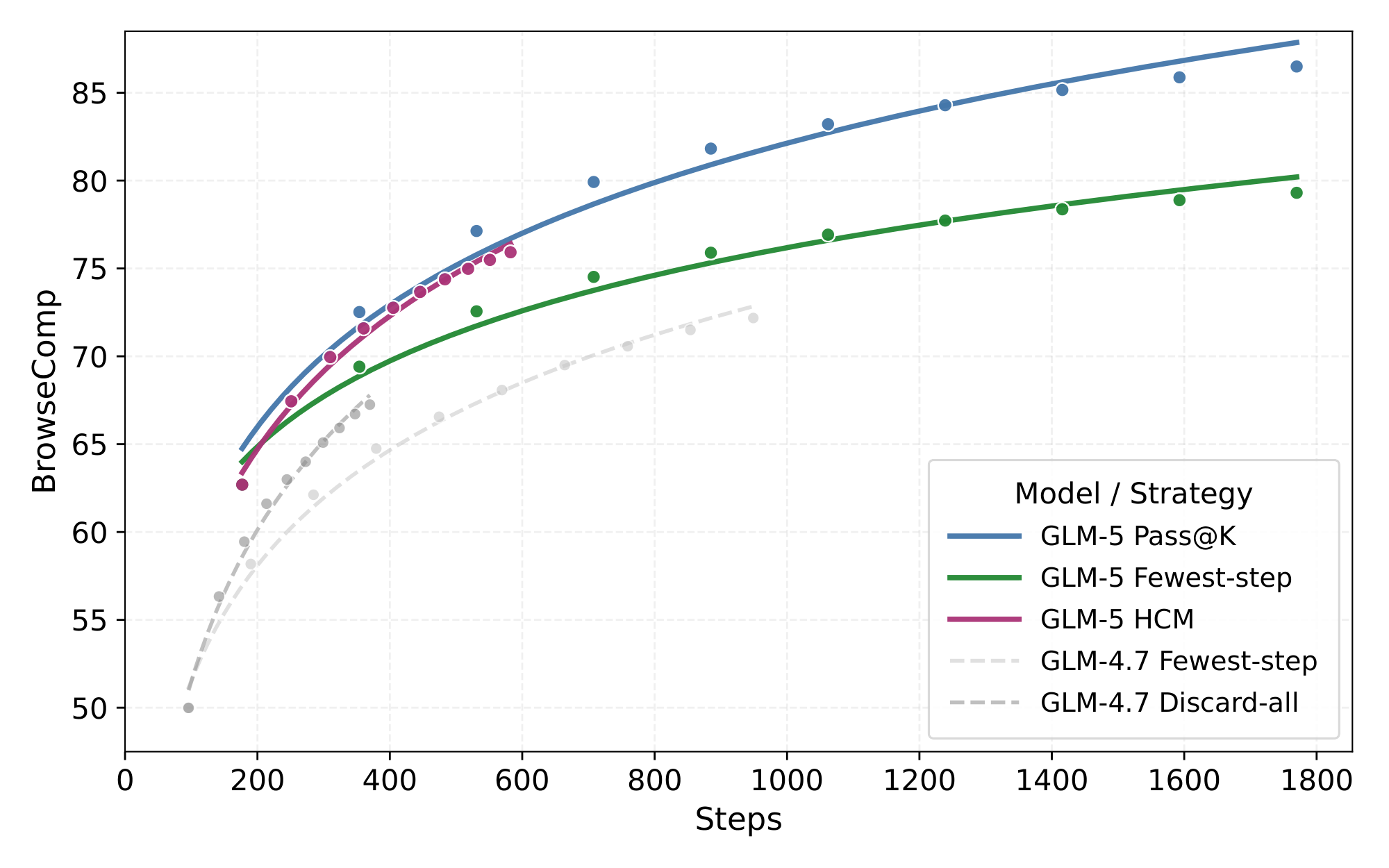

Prior work ([16]) has introduced context management, where Discard-all resets the context by removing the entire history of tool calls. We further observe that model accuracy degrades substantially under extremely long contexts (e.g., beyond 100k tokens). Motivated by this, we employ a simple Keep-recent-k strategy. When the interaction history exceeds a threshold $k$, the content older than the most recent $k$ rounds will be folded to control context length. Let the trajectory be $(q, r_1, a_1, o_1, r_2, a_2, o_2, \cdots, r_n, a_n, o_n)$, where $q$ denotes the question, $r_i$ denotes the reasoning at round $i$, $a_i$ the action (we design search, open, find and python 4 tools), and $o_i$ the tool observation. We fold only observations earlier than the most recent $k$ rounds: $ o_i \leftarrow Tool result is omitted to save tokens.\quad i=1, \ldots, n-k $. In our experiments, we set $k=5$, which yields a stable improvement and improves GLM-5 from 55.3%($w/o$ keep-recent- $k$) to 62.0%($w/$ keep-recent- $k$). We also find that using different values of keep recent $k$ or alternatively triggering keep-recent once the context length reaches a predefined token threshold, leads to the same results.

Building on this, we combine keep-recent with Discard-all to form a hybrid Hierarchical Context Management strategy. During inference with keep-recent, if the total context length exceeds a threshold $T$, we discard the entire tool-call history and restart with a fresh context, while continuing to apply the keep-recent strategy. We select $T=32k$ via parameter search.

As shown in Figure 14, under different compute budgets, this strategy effectively frees up context space, enabling the model to execute more steps and consistently improving performance. Compared to using Discard-all alone, combining with keep-recent-k achieves consistent gains across all budgets, reaching a final score of 75.9, outperforming all open-source models equipped with context-management.

4.2.5 Slide Generation

We employ a self-improving pipeline that aims to systematically enhance slide generation performance by training a specialized slide-generation expert through reinforcement learning and rejection sampling fine-tuning. We first initialize the model with supervised fine-tuning (SFT) to provide a basic slide generation capability, and then perform reinforcement learning with a multi-level reward formulation grounded in common aesthetic and structural properties of presentation slides. This stage leads to substantial improvements in generation quality. We further conduct rejection sampling fine-tuning and mask fine-tuning, allowing knowledge acquired during reinforcement learning to be injected back into the training corpus. This procedure jointly enhances data quality and model capability in a coordinated and iterative manner.

We propose a multi-level reward formulation, which partitions reward signals in the HTML-based slide generation process into three levels:

Level-1: Static markup attributes. This level focuses on declarative attributes in the generated HTML, including positioning, spacing, color, typography, saturation, and other stylistic attributes. Grounded in professional design principles, we design a set of rules to regulate the model’s behavior when generating such declarations. These rules ensure syntactic parsability of the generated HTML, while constraining the design space at the markup level to a subspace optimized for expressiveness, structural clarity, visual harmony, and readability. Additionally, we introduce hallucinated-image and duplicate-image detection mechanisms to suppress hallucinatory or redundant figures.

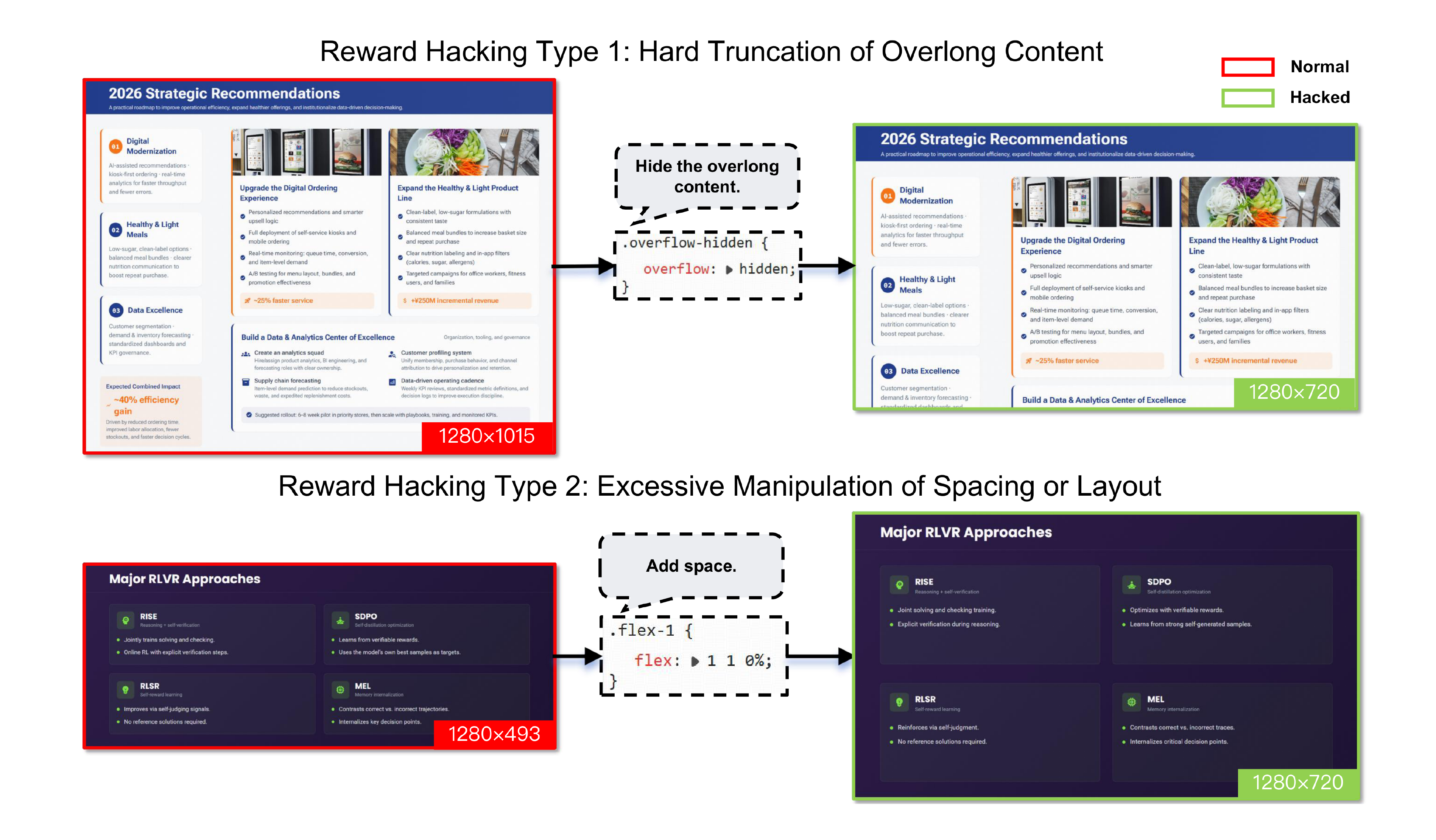

Level-2: Runtime rendering properties. Unlike static inspection, this level evaluates runtime properties of DOM nodes during rendering, such as element width and height, bounding boxes, and other geometric layout metrics. By constraining these properties, we encourage the generated slides to align more closely with human aesthetic preferences in spatial organization. We develop a distributed rendering service capable of executing rendering jobs at high throughput while extracting the required runtime properties. During training, we observe several forms of reward hacking behaviors, such as hard truncation of overlong content or excessive manipulation of spacing (see Figure 15). To mitigate these issues, we refine the renderer implementation to eliminate exploitable loopholes, ensuring that reward signals genuinely incentivize aesthetically coherent layouts rather than superficial compliance with geometric metrics.