Composable Text Controls in Latent Space with ODEs

Guangyi Liu$^{1,3\dagger}$, Zeyu Feng$^2$, Yuan Gao$^2$, Zichao Yang$^4$, Xiaodan Liang$^{3,5}$,

Junwei Bao$^6$, Xiaodong He$^6$, Shuguang Cui$^1$, Zhen Li$^1$, Zhiting Hu$^2$

$^1$FNii, CUHK-Shenzhen, $^2$UC San Diego, $^3$MBZUAI,

$^4$Carnegie Mellon University, $^5$DarkMatter AI Research, $^6$JD AI Research

[email protected], [email protected], [email protected]

$^\dagger$Work done when Guangyi Liu was a Ph.D. candidate at CUHK-Shenzhen.

Abstract

Real-world text applications often involve composing a wide range of text control operations, such as editing the text w.r.t. an attribute, manipulating keywords and structure, and generating new text of desired properties. Prior work typically learns/finetunes a language model (LM) to perform individual or specific subsets of operations. Recent research has studied combining operations in a plug-and-play manner, often with costly search or optimization in the complex sequence space. This paper proposes a new efficient approach for composable text operations in the compact latent space of text. The low-dimensionality and differentiability of the text latent vector allow us to develop an efficient sampler based on ordinary differential equations (ODEs) given arbitrary plug-in operators (e.g., attribute classifiers). By connecting pretrained LMs (e.g., GPT2) to the latent space through efficient adaption, we then decode the sampled vectors into desired text sequences. The flexible approach permits diverse control operators (sentiment, tense, formality, keywords, etc.) acquired using any relevant data from different domains. Experiments show that composing those operators within our approach manages to generate or edit high-quality text, substantially improving over previous methods in terms of generation quality and efficiency.$^1$

$^1$Code: https://github.com/guangyliu/LatentOps

Executive Summary: Real-world applications like content creation, customer service chatbots, and personalized marketing often require manipulating text in multiple ways at once—for example, changing sentiment to positive, shifting tense to future, and adjusting formality to professional—all while keeping the core meaning intact. Traditional methods train separate models for each combination, which is costly and impractical given the endless possibilities and limited labeled data. Recent plug-and-play approaches try to combine controls on the fly but struggle with inefficiency due to the complexity of raw text sequences, leading to poor quality or slow performance. This problem is especially pressing now as AI text tools proliferate, demanding scalable ways to generate or edit high-quality, controlled outputs without retraining massive models each time.

This paper introduces LatentOps, a method to evaluate and demonstrate efficient, composable control over text generation and editing using a compact latent space—a simplified, continuous representation of text derived from pretrained models. The goal is to show how to plug in diverse controls, sample desired latent representations, and decode them into fluent text, outperforming existing techniques in quality and speed.

The authors adapt large pretrained language models like GPT-2 into a variational autoencoder framework, tuning only a small fraction of parameters (e.g., added layers for encoding and decoding) to create a low-dimensional latent space from datasets like Yelp reviews (443,000 sentences) and Amazon comments (555,000 sentences), covering 2015–2018 data. They then define controls as simple classifiers for attributes like sentiment (positive/negative), tense (past/present/future), and formality (formal/informal), trained on as few as 200 examples per class from various sources. These form an energy-based model in the latent space, from which samples are drawn efficiently using ordinary differential equations—a robust numerical solver that simulates a reverse diffusion process from noise to structured representations. For credibility, the setup assumes independent attributes and uses public datasets, with experiments run on a single high-end GPU.

Key findings highlight LatentOps's strengths. First, for generating new text with multiple attributes (e.g., negative sentiment, future tense, formal style), it achieves 93–99% accuracy across controls, compared to 56–67% for baselines like FUDGE and PPLM, while producing more diverse outputs (self-BLEU scores 13–21 vs. 28–36). Second, fluency remains strong, with perplexity scores around 25–30—close to human-written text (24.5)—versus baselines' overly simplistic outputs. Third, in editing existing text sequentially (e.g., from formal/positive/present to informal/negative/past), success rates reach 61–92% per attribute with 42–48% content preservation (via CTC scores), outperforming FUDGE (35–49% accuracy) and Style Transformer (36–82%). For simultaneous edits, accuracy hits 95% for sentiment and tense, balancing preservation and change better than competitors. Finally, runtime is dramatically faster: 5.5 seconds for 150 samples, 6.6 times quicker than FUDGE and 578 times faster than PPLM, due to low-dimensional sampling.

These results mean LatentOps enables practical, on-the-fly text manipulation with minimal data or tuning, reducing costs by avoiding full model retraining and improving output quality for applications like automated reviews or ad copy. It shifts from sequence-level struggles to latent-space efficiency, yielding more reliable results than prior methods, which often fail on combined controls or introduce errors. This matters for risk reduction in AI-generated content (e.g., avoiding off-brand tones) and performance gains, potentially cutting development timelines from weeks to hours. Unlike expectations of needing vast supervision, it works well few-shot, highlighting latent spaces' untapped power for composability.

Next steps should involve piloting LatentOps in production for specific use cases, such as integrating it with business tools for dynamic content. Decision-makers could prioritize deploying it for single-sentence tasks like email personalization, weighing trade-offs: high accuracy and speed versus slightly lower fluency in diverse outputs. Further work is needed to extend to longer texts (e.g., paragraphs), requiring tests on broader datasets and refined decoders—potentially via larger models like GPT-3 adaptations. Options include starting small with attribute classifiers from internal data or partnering for custom ones, balancing quick wins against deeper validation.

Limitations include a focus on single sentences, which may limit applicability to complex documents where context dependencies arise; assumptions of attribute independence could falter with entangled traits like sarcasm. Data gaps exist for niche domains, and while classifiers train fast, rare attributes might need more examples. Overall confidence is high for short-text controls (supported by consistent outperformance on two datasets), but caution is advised for untested scales or languages—recommend reviewing full experiments before scaling.

1. Introduction

Section Summary: Many text-related tasks require combining operations like changing a text's sentiment, formality, or keywords, or generating new text with specific traits, but traditional methods struggle because they need custom models for every possible combination, which is impractical and data-intensive. Recent plug-and-play techniques try to guide pre-trained language models with constraints, but they falter due to the tricky, high-dimensional nature of text sequences. The new approach, called LatentOps, simplifies this by performing these operations in a compact, continuous "latent space" using efficient sampling and adapting a large model like GPT-2 to produce high-quality edited or generated text, outperforming existing methods in experiments on editing and creation tasks.

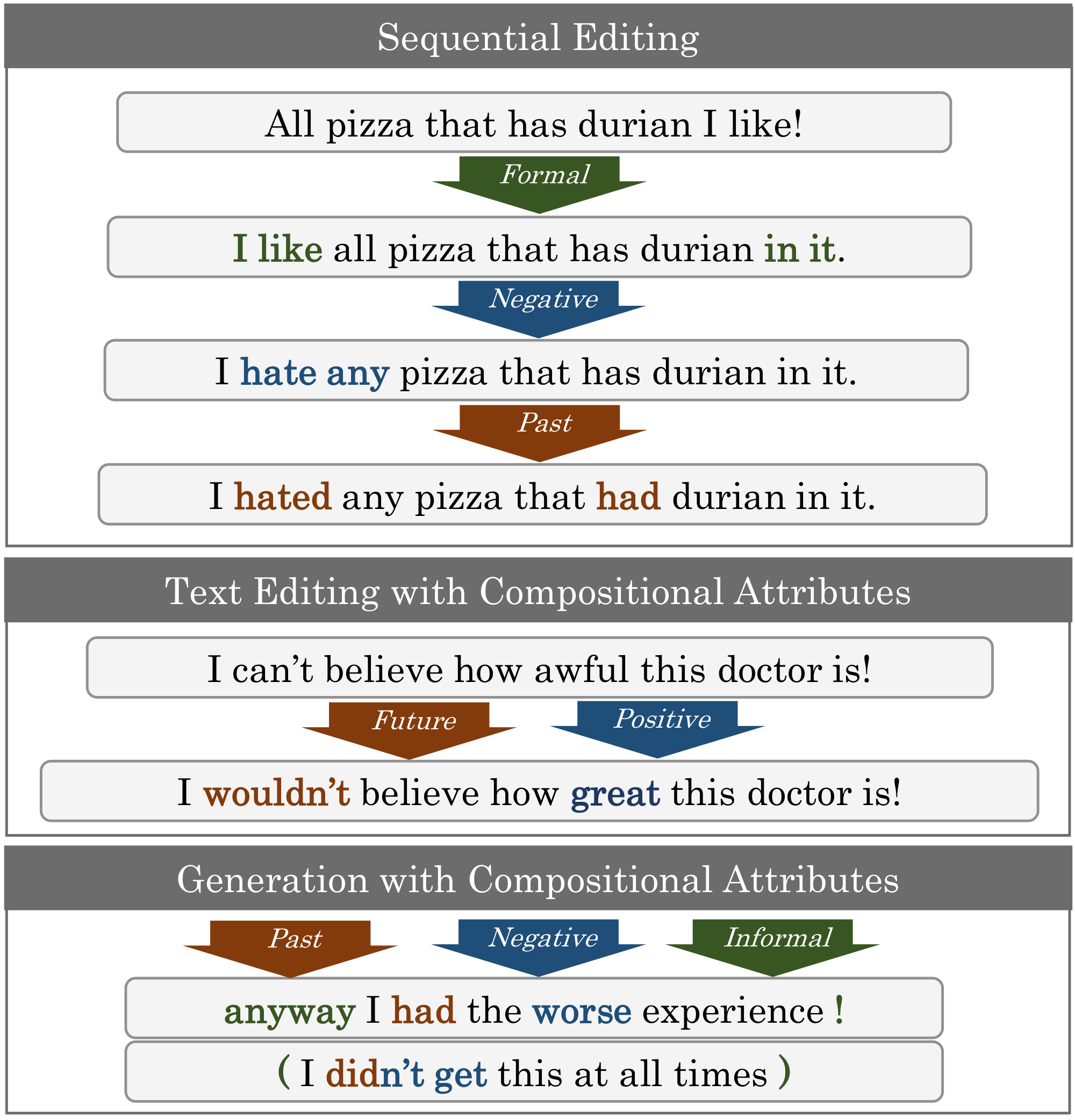

Many text problems involve a diverse set of text control operations, such as editing different attributes (e.g., sentiment, formality) of the text, inserting or changing the keywords, generating new text of diverse properties, and so forth. In particular, different composition of those operations are often required in various real-world applications (Figure 1).

Conventional approaches typically build a conditional model (e.g., by finetuning pretrained language models) for each specific combination of operations [1, 2, 3], which is unscalable given the combinatorially many possible compositions and the lack of supervised data. Most recent research thus has started to explore plug-and-play solutions. Given a pretrained language model (LM), those approaches plug in arbitrary constraints to guide the production of desired text sequences [4, 5, 6, 7, 8, 9]. The approaches, however, typically rely on search or optimization in the complex text sequence space. The discrete nature of text makes the search/optimization extremely difficult. Though some recent work introduces continuous approximations to the discrete tokens ([10, 9, 6]), the high dimensionality and complexity of the sequence space still renders it inefficient to find the accurate high-quality text.

In this paper, we develop $\textsc{LatentOps}$, a new efficient approach that performs composable control operations in the compact and continuous latent space of text. $\textsc{LatentOps}$ permits plugging in arbitrary operators (e.g., attribute classifiers) applied on text latent vectors, to form an energy-based distribution on the low-dimensional latent space. We then develop an efficient sampler based on ordinary differential equations (ODEs) [11, 12, 13] to draw latent vector samples that bear the desired attributes.

A key challenge after getting the latent vector is to decode it into the target text sequence. To this end, we connect the latent space to pretrained LM decoders (e.g., GPT-2) by efficiently adapting a small subset of the LM parameters in a variational auto-encoding (VAE) manner [14, 15].

Previous attempts of editing text in latent space have often been limited to single attribute and small-scale models, due to the incompatibility of the latent space with the existing transformer-based pretrained LMs [16, 17, 18, 19, 20]. $\textsc{LatentOps}$ overcomes the difficulties and enables a single large LM to perform arbitrary composable text controls.

We conduct experiments on three challenging settings, including sequential editing of text w.r.t. a series of attributes, editing compositional attributes simultaneously, and generating new text given various attributes. Results show that composing operators within our method manages to generate or edit high-quality text, substantially improving over respective baselines in terms of quality and efficiency.

2. Background

Section Summary: Energy-based models define probability distributions for data like text using an energy function, but generating samples from them is challenging due to complex normalization, especially in the vast space of text sequences. Traditional sampling methods like Langevin dynamics are often unstable, so the approach here adapts more reliable ordinary differential equations from diffusion processes, originally used in image generation, to efficiently sample in a compact latent space rather than directly in text space. Variational auto-encoders help by mapping text to a continuous latent space via an encoder and decoder, and this work efficiently modifies pretrained language models like GPT-2 by tuning only a few parameters to create such a space without full retraining.

2.1 Energy-based Models and ODE Sampling

Given an arbitrary energy function $E(\bm x)\in \mathbb{R}$, energy-based models (EBMs) define a Boltzmann distribution:

$ \small p(\bm x) = e^{-E(\bm x)} / Z,\tag{1} $

where $Z=\sum_{\bm{x} \in \mathcal{X}} e^{-E(\bm x)}$ is the normalization term (the summation is replaced by integration if $\bm x \in \mathcal{X}$ is a continuous variable). EBMs are flexible to incorporate any functions or constraints into the energy function $E(\bm x)$. Recent work has explored text-based EBMs (where $\bm x$ is a text sequence) for controllable text generation [21, 22, 23, 24, 8, 9]. Despite the flexibility, sampling from EBMs is rather challenging due to the intractable $Z$. The text-based EBMs face with even more difficult sampling due to the extremely large and complex (discrete or soft) text space.

Langevin dynamics (LD) [25, 26] is a gradient-based Markov chain Monte Carlo (MCMC) approach often used for sampling from EBMs ([27, 28, 29, 9]). It is considered as a more efficient way compared to other gradient-free alternatives (e.g., Gibbs sampling ([30])). However, due to several critical hyperparameters (e.g., step size, number of steps, noise scale), LD tends to be sensitive and unrobust in practice ([12, 31, 32]).

On the other hand, stochastic/ordinary differential equations (SDEs/ODEs) [33] offer another sampling technique recently applied in image generation ([11, 12]). An SDE characterizes a diffusion process that maps real data to random noise in continuous time $t\in[0, T]$. Specifically, let $\bm{x}(t)$ be the value of the process following $\bm{x}(t)\sim p_t(\bm{x})$, indexed by time $t$. At start time $t=0$, $\bm{x}(0)\sim p_0(\bm{x})$ which is the data distribution, and at the end $t=T$, $\bm{x}(T)\sim p_T(\bm{x})$ which is the noise distribution (e.g., standard Gaussian). The reverse SDE instead generates a real sample from the noise by working backwards in time (from $t=T$ to $t=0$). More formally, consider a variance-preserving SDE ([11]) whose reverse is written as

$ \small \text{d}\bm x=-\frac{1}{2}\beta(t)[\bm x+2\nabla_{\bm x}\log p_t(\bm x)]\text{d}t + \sqrt{\beta(t)}\text{d}\bar{\bm w},\tag{2} $

where d $t$ is an infinitesimal negative time step; $\bar{\bm w}$ is a standard Wiener process when time flows backwards from $T$ to $0$; and the scalar $\beta(t):=\beta_0 + (\beta_T - \beta_0)t$ is a time-variant coefficient linear w.r.t. time $t$. Given a noise $\bm{x}(T)\sim p_T(\bm{x})$, solving the above reverse SDE returns a $\bm{x}(0)$ that is a sample from the desired distribution $p_0(\bm{x})$. One could use different numerical solvers to this end. [34, 35, 36]. The SDE sampler sometimes need to combine with an additional corrector to improve the sample quality ([11]).

Further, as shown in ([11, 37]), each (reverse) SDE has a corresponding ODE, solving which leads to samples following the same distribution. The ODE is written as (see Appendix A for the derivations):

$ \small \text{d}\bm x=-\frac{1}{2}\beta(t)[\bm x+\nabla_{\bm x}\log p_t(\bm x)]\text{d}t.\tag{3} $

Solving the ODE with relevant numerical methods [38, 39, 40] corresponds to an sampling approach that is more efficient and robust ([11, 12]).

In this work, we adapt the ODE sampling for our approach. Crucially, we overcome the text control and sampling difficulties in the aforementioned sequence-space methods, by defining the text control operations in a compact latent space, handled by a latent-space EBMs with the ODE solver for efficient sampling.

2.2 Latent Text Modeling with Variational Auto-Encoders

Variational auto-encoders (VAEs) [14, 41] have been used to model text with a low-dimensional continuous latent space with certain regularities ([15, 1]). An VAE connects the text sequence space $\mathcal{X}$ and the latent space $\mathcal{Z}\subset\mathbb{R}^d$ with an encoder $q(\bm z|\bm x)$ that maps text $\bm{x}$ into latent vector $\bm{z}$, and a decoder $p(\bm x|\bm z)$ that maps a $\bm{z}$ into text. Previous work usually learns text VAEs from scratch, optimizing the encoder and decoder parameters with the following objective:

$ \small \begin{split} &\mathcal{L}{\text{VAE}}(\bm x) = \ &-\mathbb{E}{q(\bm z|\bm x)}[\log p(\bm x|\bm z)]

- \text{KL}(q(\bm z|\bm x) || p_{\text{prior}}(\bm z)), \end{split}\tag{4} $

where $p_{\text{prior}}(\bm{z})$ is a standard Gaussian distribution as the prior, and $\text{KL}(\cdot||\cdot)$ is the Kullback-Leibler divergence that pushes $q_{\text{enc}}$ to be close to the prior. The first term encourages $\bm{z}$ to encode relevant information for reconstructing the observed text $\bm{x}$, while the second term adds regularity so that any $\bm{z} \sim p_{\text{prior}}(\bm{z})$ can be decoded into high-quality text in the text sequence space $\mathcal{X}$. Recent work ([42, 43]) scales up VAE by initializing the encoder and decoder with pretrained LMs (e.g., BERT [44] and GPT-2 [45], respectively). However, they still require costly finetuning of the whole model on the target corpus.

In comparison, our work converts a given pretrained LM (e.g., GPT-2) into a latent-space model efficiently by tuning only a small subset of parameters, as detailed more in § 3.3.

3. Composable Text Latent Operations

Section Summary: LatentOps is a method that adapts existing language models like GPT-2 to perform flexible manipulations on text by working in a hidden, compact "latent" space rather than directly with the text itself. It uses a special encoder-decoder system called a VAE to connect text to this latent space and energy-based models to combine desired traits, such as positive sentiment or informal style, allowing efficient sampling of latent points that generate controlled text outputs. This approach simplifies text editing and creation, avoids complex direct text processing, and requires only minor tweaks to pretrained models, with further details on its math, sampling, and setup covered in the subsections.

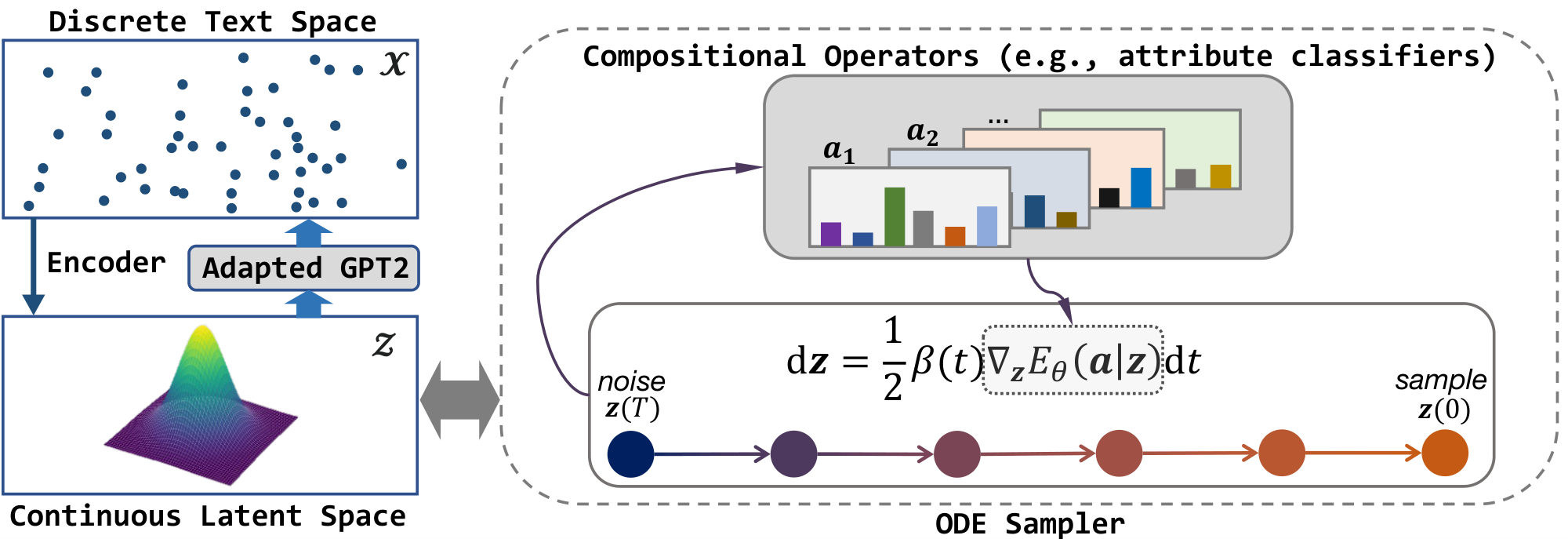

We develop our approach $\textsc{LatentOps}$ that quickly adapts a given pretrained LM (e.g., GPT-2) to enable composable text latent operations. The approach consists of two components, namely a VAE based on the pretrained LM that connects the text space with a compact continuous latent space, and EBMs on the latent space that permits arbitrary attribute composition and efficient sampling.

More specifically, the VAE decoder $p(\bm{x} | \bm{z})$ offers a way to map any given latent vector $\bm{z}$ into the corresponding text sequence. Therefore, text control (e.g., editing a text or generating a new one) boils down to finding the desired vector $\bm{z}$ that bears the desired attributes and characteristics. To this end, one could plug in any relevant attribute operators (e.g., classifiers), resulting in a latent-space EBM that characterizes the distribution of $\bm{z}$ with the desired attributes. We could then draw the $\bm{z}$ samples of interest, performed efficiently with an ODE solver. Figure 2 gives an illustration of the approach.

$\textsc{LatentOps}$ thus avoids the difficult optimization or sampling in the complex text sequence space as compared to the previous plug-and-play methods [e.g., 5, 4, 9]. Our approach is also compatible with the powerful pretrained LMs, requiring only minimal adaptation to equip the LMs with a latent space, rather than costly retraining from scratch as in the recent diffusion LM [46].

In the following, we first present the latent-space EBM formulation (§ 3.1) for composable operations, and derive the efficient ODE sampler (§ 3.2); we discuss the parameter-efficient adaptation of pretrained LMs for the latent space (§ 3.3); we then discuss the implementation details (§ 3.4).

3.1 Composable Latent-Space EBMs

We aim to formulate the latent-space EBMs such that one can easily plug in arbitrary attribute operators to define the latent distribution of interest. Besides, as we want to obtain fluent text with the VAE decoder $p(\bm{x}|\bm{z})$ described in § 3.3, the latent distribution over $\bm{z}$ should match the structure of the VAE latent space.

Formally, let $\bm a={a_1, a_2, ...}$ be a vector of desired attribute values, where each $a_i \in \mathbb{R}$ (e.g., positive sentiment, or informal writing style). Note that $\bm{a}$ does not have a prefixed length as one can plug in any number of attributes to control on the fly. In general, to assess if a vector $\bm{z}$ bears the desired attribute $a_i$, we could use any function $f_i$ that takes in $\bm{z}$ and $a_i$, and outputs a score measuring how well $a_i$ is carried in $\bm{z}$. For a categorical attribute (e.g., sentiment, either positive or negative), one of the common choices is to use a trained attribute classifier, where $f_i(\bm{z})$ is the output logit vector and $f_i(\bm{z})[a_i] \in \mathbb{R}$ is the logit of the particular class $a_i$ of interest. For clarity of presentation, we focus on categorical attributes and classifiers in the rest of the paper, and assume the attributes are independent with each others.

We are now ready to formulate the latent-space EBMs by plugging in the attribute classifiers. Specifically, we define the joint distribution:

$ \small p(\bm z, \bm a) := p_{\text{prior}}(\bm z) p(\bm a | \bm z) = p_{\text{prior}}(\bm z) \cdot e^{- E(\bm{a}|\bm z)} / Z,\tag{5} $

where $p_{\text{prior}}(\bm{z})$ is the Gaussian prior distribution of VAE (§ 2.2), and $p(\bm{a}|\bm{z})$ is formulated with energy function $E(\bm{a}|\bm z)$ to encode the different target attributes. Such a decomposition of $p(\bm z, \bm a)$ results in two key desirable properties: (1) The marginal distribution over $\bm{z}$ equals the VAE prior, i.e., $\sum_{\bm{a}} p(\bm{z}, \bm{a}) = p_{\text{prior}}(\bm{z})$. This facilitates the VAE decoder to generate fluent text; (2) the energy function in $p(\bm a | \bm z)$ enables the combination of arbitrary attributes, with $E(\bm{a}|\bm z) = \sum_{i} \lambda_i E_i(a_i|\bm z)$. Each $\lambda_i \in \mathbb{R}$ is the balance weight, and $E_i$ is the defined as the negative log probability (i.e., the normalized logit) of $a_i$ to make sure the different attribute classifiers have outputs at the same scale for combination:

$ \small E_i(a_i|\bm z) = -{f_{i}(\bm z)}[a_i] + \log\sum\nolimits_{a'i}\exp(f{i}(\bm z)[a'_i]).\tag{6} $

3.2 Efficient Sampling with ODEs

Once we have the desired distribution $p(\bm z, \bm a)$ over the latent space and attributes, we would like to draw samples $\bm{z}$ given the target attribute values $\bm{a}$. The samples can then be fed to the VAE decoder (§ 3.3) to obtain the desired text. As discussed in § 2.1 and also shown in our ablation study in § D.4, sampling with ODEs has the benefits of robustness compared to Langevin dynamics that is sensitive to hyperparameters, and efficiency compared to SDEs that require additional correction.

We now derive the ODE sampling in the latent space. Specifically, we adapt the ODE from Equation 3 into our latent-space setting, which gives:

$ \small \begin{split} &\text{d}\bm z=-\frac{1}{2}\beta(t)[\bm z+\nabla_{\bm z}\log p_t(\bm z, \bm a)]\text{d}t \ &=-\frac{1}{2}\beta(t)\left [\bm z +\nabla_{\bm z}\log p_t(\bm a|\bm z) + \nabla_{\bm z}\log p_t(\bm z) \right] \text{d}t. \end{split}\tag{7} $

For $p_t(\bm z)$, notice that at $t=0$, $p_0(\bm{z})$ is the VAE prior distribution $p_{\text{prior}}(\bm z)$ as defined in Equation 5, which is the same as $p_T(\bm{z})$ (i.e., the Gaussian noise distribution after diffusion). This means that in the diffusion process, we always have $p_t(\bm{z})=\mathcal{N}(\bm 0, I)$ that is time-invariant ([12]). Similarly, for $p_t(\bm{a} | \bm{z})$, since the input $\bm{z}$ follows the time-invariant distribution and the classifiers $f_i$ are fixed, the $p_t(\bm a|\bm z)$ is also time-invariant. Plugging the definitions of those components, we obtain the simple ODE formulation:

$ \small \begin{split} \text d\bm z&=-\frac{1}{2}\beta(t)[\bm z-\nabla_{\bm z} E (\bm a|\bm z)- \frac{1}{2}\nabla_{\bm z}||\bm z||^2_2]\text dt\ & = \frac{1}{2}\beta(t)\sum_{i=1}^n \nabla_{\bm z} E(a_i|\bm z) \text dt. \end{split}\tag{8} $

We can then easily create latent samples conditioning on the given attribute values, by drawing $\bm{z}(T) \sim \mathcal{N}(\bm 0, I)$ and solving the Equation 8 with a differentiable neural ODE solver^1 [47, 48] to obtain $\bm{z}(0)$. In § 3.4, we discuss more implementation details with approximated starting point $\bm{z}(T)$ for text editing and better empirical performance.

3.3 Adapting Pretrained LMs for Latent Space

To decode the $\bm{z}$ samples into text sequences, we equip pretrained LMs (e.g., GPT-2) with the latent space through parameter-efficient adaptation. More specifically, we adapt the autoregressive LM into a text latent model within the VAE framework (§ 2.2). Differing from the previous VAE work that trains from scratch or finetunes the full parameters of pretrained LMs ([42, 43, 1]), we show that it is sufficient to only update a small portion of the LM parameters to connect the LM with the latent space, while keeping the LM capability of generating fluent coherent text. Specifically, we augment the autoregressive LM with small MLP layers that pass the latent vector $\bm{z}$ to the LM, and insert an additional transformer layer in between the LM embedding layer and the original first layer. The resulting model then serves as the decoder in the VAE objective Equation (4), for which we only optimize the MLP layers, the embedding layer, and the inserted transformer layer, while keeping all other parameters frozen. For the encoder, we use a BERT-small model [44, 49] and finetune it in the VAE framework. As discussed later in § 3.4, the tuned encoder can be used to produce the initial $\bm z$ values in the ODE sampler for text editing.

3.4 Implementation Details

We discuss more implementation details of the method. Overall, given an arbitrary text corpus (e.g., a set of text from any domain of interest), we first build the VAE by adapting the pretrained LMs as described in § 3.3. Once the latent space is established, we keep it (including all the VAE components) fixed, and perform compositional text operations in the latent space on the fly.

Acquisition of attribute classifiers

We can acquire attribute classifiers $f_i(\bm{z})$ on the frozen latent space by training using arbitrary datasets with annotations. Specifically, we encode the input text into the latent space with the VAE encoder, and then train the classifier to predict the attribute label given the latent vector. Each classifier, as is built on the semantic latent space, can be trained efficiently with only a small number of examples (e.g., 200 per class). This allows us to acquire a large diversity of classifiers (e.g., sentiment, formality, different keywords) in our experiments (§ 4) using readily-available data from different domains, and flexibly compose them together to perform operations on text in the domain of interest.

Initialization of ODE sampling

To sample $\bm{z}$ with the ODE solver (§ 3.2), we need to specify the initial $\bm{z}(T)$. For text editing operations (e.g., transferring sentiment from positive to negative) that start with a given text sequence, we initialize $\bm{z}(T)$ to the latent vector of the given text by the VAE encoder. We show in our experiments that the resulting $\bm{z}(0)$ samples as the solution of the ODEs can preserve the relevant information in the original text while obtaining the desired target attributes.

For generating new text of target attributes, the normal way is to sample $\bm{z}(T)$ from the prior Gaussian distribution $\mathcal{N}(\bm 0, I)$. However, due to the inevitable gap between the prior distribution and the learned VAE posterior on $\mathcal{Z}$, such a Gaussian noise sample does not always lead to coherent text outputs. We thus follow ([42, 43]) to learn a small (single-layer) GAN ([50]) $p_{\text{GAN}}(\bm z)$ that simulates the VAE posterior distribution, using all encoded $\bm z$ of real text as the training data. We then generate the initial $\bm{z}(T)$ from the $p_{\text{GAN}}$.

Sample selection

The compact latent space learned by VAE allows us to conveniently create multiple semantically-close variants of a sampled $\bm{z}(0)$ and pick the best one in terms of certain task criteria. Specifically, we add random Gaussian noise perturbation (with a small variance) to $\bm{z}(0)$ to get a set of vectors close to $\bm{z}(0)$ in the latent space and select one from the set. We found the sample perturbation and selection is most useful for operations related to the text content. For example, in text editing (§ 4.2), we pick a vector based on the content preservation (e.g., BLEU with the original text) and attribute accuracy. More details are provided in § B.

4. Experiments

Section Summary: Researchers tested a new method called LatentOps for creating and editing text with specific traits like positive or negative sentiment, past or future tense, and formal or informal style, using datasets from Yelp reviews and Amazon comments. They compared it to other approaches and a fully trained model, measuring how well the text matched the desired traits, its natural flow, and variety. The results showed LatentOps outperforming rivals in accuracy and diversity while keeping text reasonably fluent, as seen in sample generations that better hit all combined traits without common errors.

We conduct extensive experiments of composable text controls to show the flexibility and efficiency of $\textsc{LatentOps}$, including generating new text of compositional attributes (§ 4.1) and editing existing text in terms of desired attributes sequentially or simultaneously (§ 4.2). All code will be released upon acceptance.

\begin{tabular}{llcccccc}

\toprule

\multirow{3}{*}{Attributes} &\multirow{3}{*}{Methods} & \multicolumn{4}{c}{Accuracy $\uparrow$} & Fluency $\downarrow$ & Diversity $\downarrow$ \\\cmidrule(r){3-6}\cmidrule(r){7-7}\cmidrule(r){8-8}

& &S & T & F & G-M &PPL & sBL \\

\midrule

\multirow{5}{*}{S}

& GPT2-FT& 0.98 & -&-&0.98 & 10.6 & 23.8\\\cmidrule{2-8}

& PPLM &0.86&-&-&0.86&11.8&31.0 \\

& FUDGE&0.77&-&-&0.77&\textbf{10.3}&27.2\\

& Ours &\textbf{0.99} &-&-&\textbf{0.99} & 30.4 & \textbf{13.0} \\

\midrule

\multirow{5}{*}{S+T}

& GPT2-FT &0.98&0.95&-&0.969&9.0&36.8 \\\cmidrule{2-8}

& PPLM&0.81&0.59&-&0.677&15.7&28.7 \\

& FUDGE&0.67&0.63&-&0.565&\textbf{11.0}&35.9\\

& Ours &\textbf{0.98}& \textbf{0.93}& -&\textbf{0.951}& 25.2& \textbf{19.7}\\

\midrule

\multirow{5}{*}{S+T+F}

& GPT2-FT &0.97&0.92&0.87&0.919&10.3&36.8 \\\cmidrule{2-8}

& PPLM &0.82&0.57&0.56&0.598&17.5&30.5 \\

&FUDGE&0.67&0.64&0.62&0.556&\textbf{11.5}&35.9\\

& Ours &\textbf{0.97}& \textbf{0.92}& \textbf{0.93} &\textbf{0.937} & 25.8 & \textbf{21.1}\\

\midrule

\end{tabular}

Setup

We evaluate in two domains, including the Yelp review [51] preprocessed by [52] and the Amazon comment corpus [53]. For each domain, we quickly adapt the GPT2-large to equip with a latent space as described in § 3.3. The resulting VAE models then serve as the base model, on which we plug in various attribute classifiers for generation and editing. We consider the attributes of sentiment (positive, negative), formality (formal, informal), and tense (pase, present, future). (We also study other attributes related to diverse keywords, which we present in § D.2.3). The sentiment/tense classifiers are quickly acquired by training on a small subset of Yelp and Amazon instances (200 labels per class), where the sentiment labels were readily available in the corpus and the tense labels are automatically parsed (§ D.1). There is no formality information in the Yelp/Amazon corpora, yet the flexibility of $\textsc{LatentOps}$ allows us to acquire the formality classifier using a separate dataset GYAFC [54]. § D.1 gives more details of the setup.

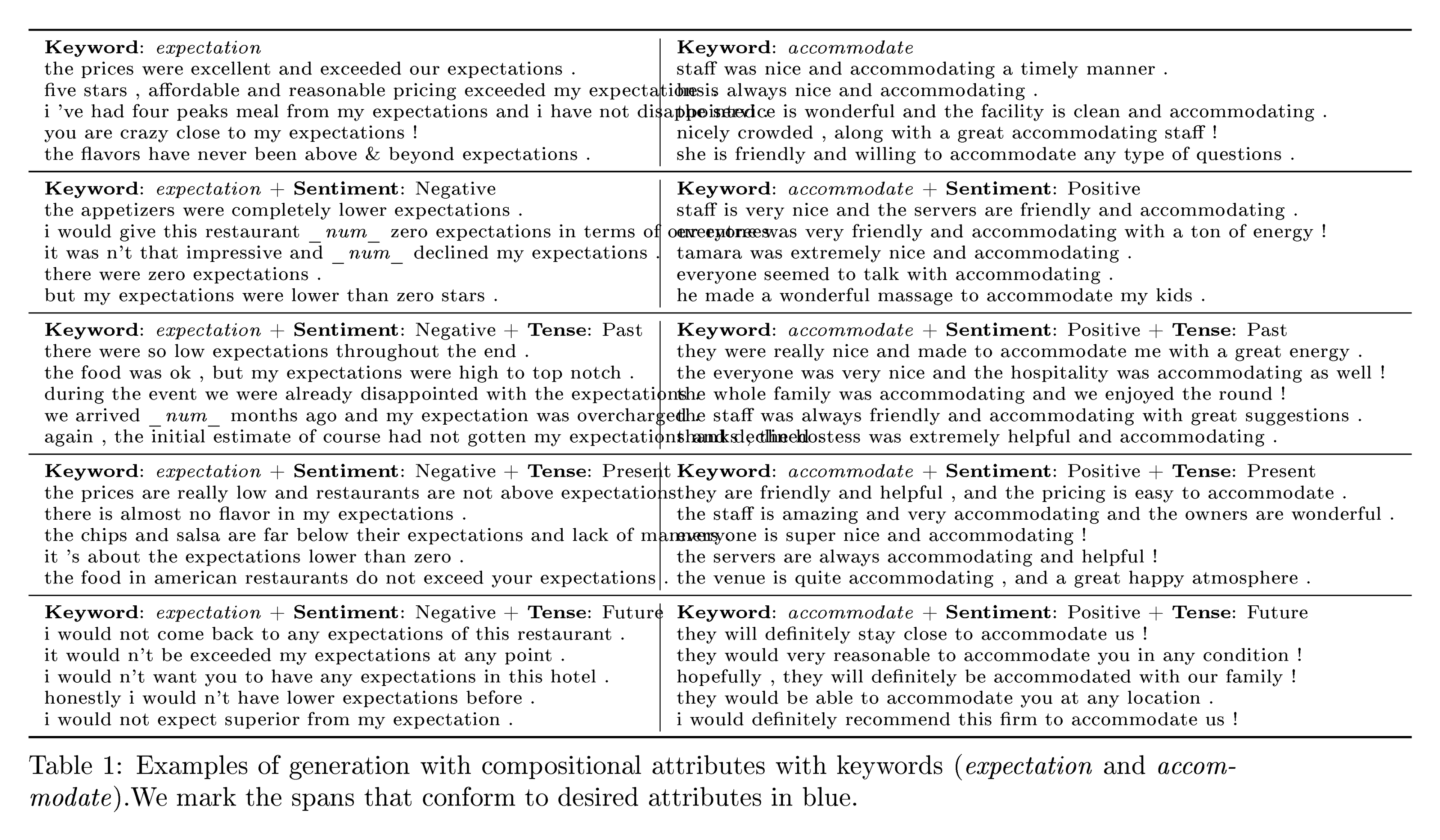

4.1 Generation with Compositional Attributes

We apply $\textsc{LatentOps}$ to generate new text of arbitrary desired attributes on Yelp domain.

Baselines

We compare with the previous plug-and-play text control approaches PPLM [4] and FUDGE [5]. As mentioned earlier, both approaches apply attribute classifiers on the complex sequence space, with an autoregressive LM as a base model. We obtain the base model by finetuning GPT2-large on the above domain corpus (e.g., Yelp). We further compare with an expensive supervised method GPT2-FT which finetunes a GPT2-large for each combination of attributes. To get the supervised data (§ D.2.1), we automatically annotate the domain corpus for formality and tense labels with a trained classifier and tagger, respectively. Metrics

Attribute accuracy is given by a BERT classifier to evaluate the success rate. Perplexity (PPL) is calculated by a GPT2 finetuned on the corresponding domain to measure fluency. We calculate self-BLEU (sBL) to evaluate the diversity. For each case, we sample 150 sequencs to evaluate.

4.1.1 Experimental Results

We list the average results of each combination in Table 1. $\textsc{LatentOps}$ achieves observably higher accuracy and diversity, even compared with the fully-supervised method (i.e., GPT2-FT). For fluency, the perplexity of our $\textsc{LatentOps}$ is within a regular interval (the perplexity of human-annotated data is 24.5). However, the baselines obtain excessive perplexity at the expense of diversity.

\begin{tabular}{m{0.45\textwidth}}

\toprule

\textbf{Negative + Future + Formal}\\

\midrule

GPT2-FT: \\

\quad i will not be back.\\

\quad would not recommend this location to anyone. [No Subject]\\

\quad would not recommend them for any jewelry or service. [No Subject]\\

\quad if i could give this place zero stars, i would.\\

\midrule

PPLM:\\

\quad i could not recommend them at all.\\

\quad i could not believe this was not good!\\

\quad this was a big deal, because the food was great. \\

\quad i could not recommend them.\\

\midrule

FUDGE:\\

\quad not a great pizza to get a great pie! [No Tense]\\

\quad however, this place is pretty good. \\

\quad i have never seen anything like these. \\

\quad will definitely return. [No Subject]\\

\midrule

Ours:\\

\quad i would not believe them to stay .\\

\quad i will never be back .\\

\quad i would not recommend her to anyone in the network .\\

\quad they will not think to contact me for any reason .\\

\bottomrule

\end{tabular}

Table 2 shows some generated samples. Ours yields fluent sentences that mostly satisfy the controls. Moreover, GPT2-FT performs similar, although it misses the subject in the second and the third examples. PPLM may fail due to the lack of global concern, e.g., the double negation leads to positive sentiment in the second example. Both PPLM and FUDGE could hardly succeed in all the controls simultaneously since it operates on the sequence space of an autoregressive LM, which is arduous to coordinate the controls. Refer to § D.2.2 for more generated examples and analysis.

4.1.2 Runtime Efficiency

To quantify the computational cost of each method, we evaluate the consumed time for generating 150 examples. We start timing after the models are loaded and before the generation starts. And we end timing right after 150 sentences are generated. We run five times for each method and average the results as final results, shown in Table 3. Since we sample in the low-dimensional compact latent space, our method is 6.6 $\times$ faster than FUDGE and 578 $\times$ faster than PPLM.

:Table 3: Results of generation time of each method.

| Methods | PPLM | FUDGE | Ours |

|---|---|---|---|

| Time (s) | 3182 (578 $\times$) | 36.1 (6.6 $\times$) | 5.5 (1 $\times$) |

4.2 Text Editing

We evaluate our model's text editing ability on both Yelp and Amazon domains, i.e, changing sentences' sentiment, tense and formality attributes sequentially (§ 4.2.1) or altogether (§ 4.2.2).

Baselines

Since few previous works can handle the sequential and compositional attributes editing task, we mainly compare with FUDGE [5]. Moreover, we train three Style Transformer [55] models (for sentiment, tense, and formality, respectively) to sequentially edit the source sentences as a baseline of sequential editing. To show the superiority of our $\textsc{LatentOps}$, we also conduct text editing with single attribute and compare with several recent state-of-the-art methods (§ D.3.1). We adopt the same setting (few-shot) as in § 4.1 for FUDGE and our $\textsc{LatentOps}$. It is noteworthy that $\textsc{LatentOps}$ is precisely the same model as in § 4.1, so it does not require further training.

Metrics

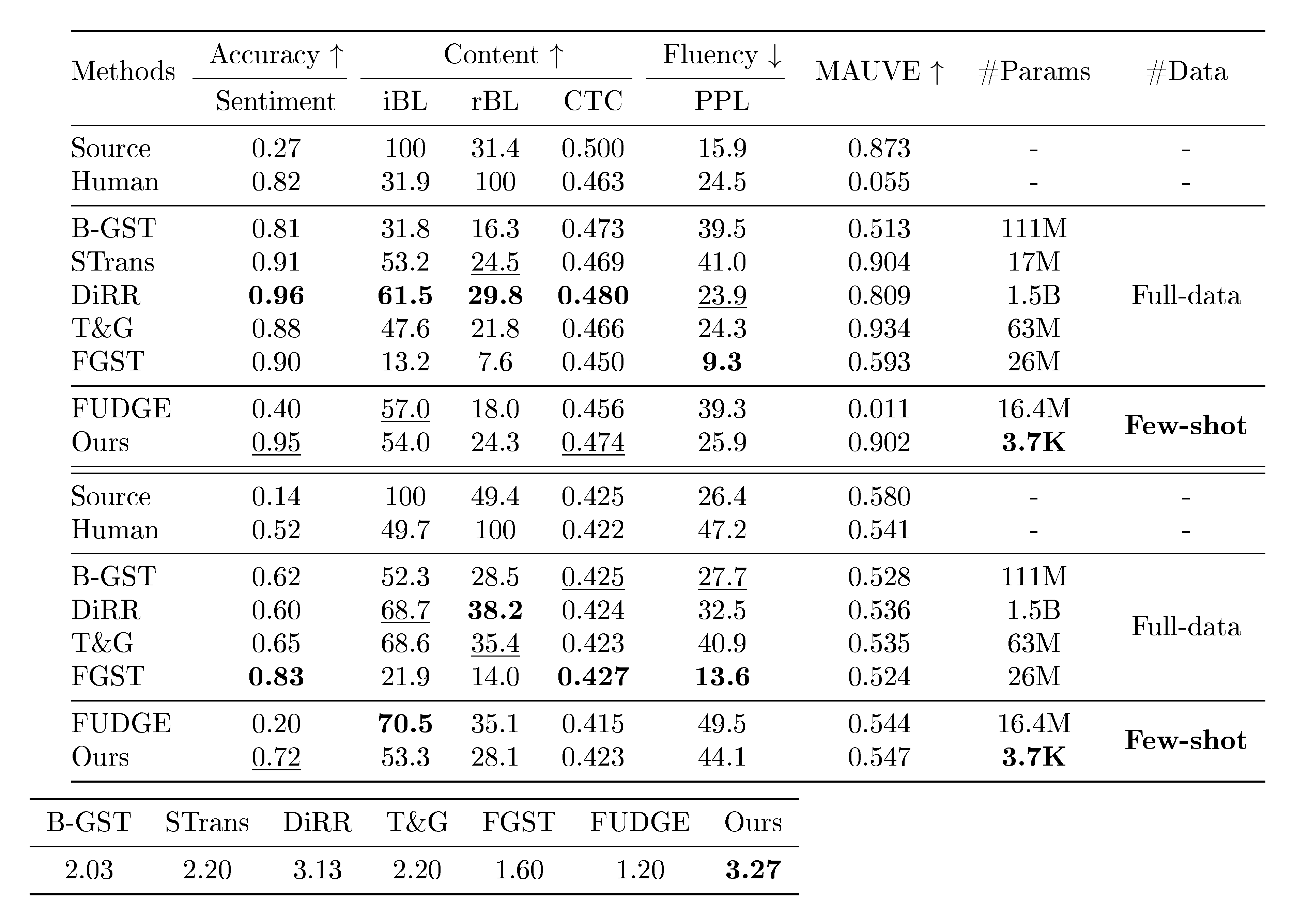

Besides success rate and fluency mentioned in § 4.1, we evaluate the ability of content preservation. Since it is a critical measure lying in the field of text editing, we utilize two metrics: input-BLEU (iBL, BLEU between input and output) and CTC score [56] (bi-directional information alignment between input and output). For single attribute setting, we also evaluate reference-BLEU (rBL, BLEU between human-annotated ground truth and output) and perform human evaluations (§ D.3.4).

4.2.1 Sequential Editing

In this section, we give the results of sequential editing, whose goal is to edit the given text by changing an attribute each time and keep the main content consistent. We consider the situation that source sentences are with formal manner, positive sentiment and present tense (selected by external classifiers in Yelp), and the goal is to transfer the source sentences to informal manner, negative sentiment and past tense, separately and sequentially. Potential entanglements exist among these attributes, and it is hard to control each attribute independently.

The automatic evaluation results are listed in Table 4. $\textsc{LatentOps}$ performs the best on acquiring desired controls and maintaining others and achieves a balanced trade-off among accuracy, content alignment, and fluency. FUDGE fails to introduce the informal manner, while it achieves better formality controls after introducing negative sentiment, showing its deficiency of ability of disentanglement. Furthermore, although FUDGE preserves the most content, it mistakes the core and puts the cart (content) before the horse (accuracy). STrans performs plain overall and cannot guarantee fluency well.

\begin{tabular}{llcccccc}

\toprule

\multirow{3}{*}{Attributes}&\multirow{3}{*}{Methods}&\multicolumn{3}{c}{Accuracy}&\multicolumn{2}{c}{Content $\uparrow$}&Fluency $\downarrow$ \\ \cmidrule(r){3-5} \cmidrule(r){6-7} \cmidrule(r){8-8}

& & F & S & T & iBL & CTC &PPL\\\midrule

\multirow{3}{*}{Informal}& FUDGE & 0.04& 0.06& 0.0 &\textbf{99.4}&0.479&\textbf{19.3} \\

& STrans & 0.45 & 0.14 & {0.06} & {65.4} & 0.470& 36.0 \\

& Ours & \textbf{0.85} & {0.07} & 0.07 & 64.2 & \textbf{0.482}& {20.2} \\\midrule

\multirow{3}{*}{+ Negative}& FUDGE & 0.49 & 0.35 & 0.10 & \textbf{48.6} & 0.451 & {35.0} \\

&STrans & 0.38 & 0.82 & 0.10 & {42.4} & 0.457 & 39.9 \\

& Ours & \textbf{0.75} & \textbf{0.92} & {0.07} & 42.1 & \textbf{0.468}& \textbf{28.7} \\\midrule

\multirow{3}{*}{\ \ + Present} & FUDGE & 0.48 & 0.35 & 0.10 & \textbf{49.3} & {0.452}& \textbf{30.7} \\

& STrans & 0.36 & 0.81 & 0.50 & {25.6} & 0.453& 45.4 \\

& Ours & \textbf{0.61} & \textbf{0.83} & \textbf{0.74} & 20.7 & \textbf{0.461}&{31.5}\\\bottomrule

\end{tabular}

We provide some examples in Table 5. The formality control of FUDGE makes no effect. Besides, FUDGE would introduce some irrelevant information, e.g., garlic pizza and thing's. A similar situation exists in STrans, e.g., ate and korean food. More examples and analysis are in § D.3.2.

4.2.2 Text Editing with Compositional Attributes

We give the results of text editing with compositional attributes on Yelp, aiming to edit attributes of sentiment and tense of the source sentences. The automatic evaluation results are listed in Table 6. $\textsc{LatentOps}$ achieves a higher success rate and content alignment (CTC). FUDGE performs better on iBL and worse on CTC. As demonstrated by [56], the two-way approach (CTC) is more effective and exhibits a higher correlation than single-directional alignment (e.g., BLEU), which is consistent with our observation: FUDGE prefers to generate long sentences that contain the spans in source (raise iBL), but it will also introduce irrelevant information (lower CTC). We give some examples in § D.3.3 to support the claim.

4.3 Ablation Study

To clarify the advantage of sampling from ODE, we compare different sampling methods, including Stochastic Gradient Langevin Dynamics (SGLD) and Predictor-Corrector sampler with SDE in § D.4.

\begin{tabular}{ll}

\toprule

Source & the flowers and prices were great . \\

\midrule

FUDGE:&\\

+ informal&the flowers and prices were great. [Formal]\\

\quad + negative& garlic pizza and prices were great.\\

\quad\quad + present&garlic pizza and prices were great.\\

STans:&\\

+ informal&the flowers and prices were great ?\\

\quad+ negative&the ate and prices were terrible ?\\

\quad\quad+ present&the ate and prices are terrible ?\\

Ours:& \\

+ informal& and the flowers and prices were great ! \\

\quad+ negative& and the flowers and prices were terrible !\\

\quad\quad+ present& and the flowers and prices are terrible !\\\midrule

Source & best korean food on this side of town .\\\midrule

FUDGE:&\\

+ informal&best korean food on this side of town. [Formal]\\

\quad+ negative&thing's best korean food on this side of town.\\

\quad\quad+ present &thing's best korean food on this side of town. [No Tense]\\

STans:&\\

+ informal&best korean food on this side of town korean food . [Formal]\\

\quad+ negative&only korean food on this side of town korean food .\\

\quad\quad+ present&only korean food on this side of town korean food . [No Tense]\\

Ours:& \\

+ informal&best korean food on this side of town ! \\

\quad + negative& worst korean food on this side of town ! \\

\quad\quad+ present& this is worst korean food on this side of town !\\\bottomrule

\end{tabular}

5. Related Work

Section Summary: Recent works on generating controlled text, such as making it positive or in a certain tense, fall into two main categories: directly tweaking the sequence of words during creation, or altering hidden representations behind the text that can then be turned into words. In the first approach, methods like PPLM and FUDGE use classifiers to guide language models step by step, adjusting hidden states or word probabilities, while others like MUCOCO and COLD optimize soft word distributions or sample using energy models for more precise control. The second approach works in a "latent space" by encoding text into compact forms with tools like VAEs or auto-encoders, then editing those forms with additional networks or diffusion processes to produce desired outputs, as seen in PPVAE, Plug and Play, and LDEBM.

Recent works on text generation can be divided into two categories. One generates desirable texts by directly modifying the text sequence space. The other operates on the latent space to obtain a representation that can be decoded into sequence with desired attributes. More detailed discussions can be found in Section § C.

5.1 Text Control in Sequence Space

Pretrained LM has shown tremendous success in text generation, and many have studied large autoregressive LMs such as GPT-2 on conditional generation by performing operations on the sequence space of the language models. For example, [4] proposes a plug-and-play framework that utilizes gradients of attribute classifiers to modify the hidden states of the pretrained LM at every step, named PPLM. FUDGE [5] follows a similar architecture but incorporates classifiers that predict the conditional probability of a complete sentence given prefixes to adjust the vocabulary probability distribution given by LM. Differing from these two approaches with left-to-right decoding, MUCOCO [6] formulates the decoding process as a multi-objective continuous optimization that combines loss of pretrained LM and attributes classifiers. The optimization gradient is applied directly to the soft representation consisting of each token's vocabulary distribution. COLD [9] adopts the exact soft representation but uses an energy-based model with attribute constraints and Langevin Dynamics to sample.

\begin{tabular}{lccccc}

\toprule

\multirow{3}{*}{Methods} & \multicolumn{2}{c}{Accuracy $\uparrow$} & \multicolumn{2}{c}{Content $\uparrow$} & Fluency $\downarrow$ \\\cmidrule(r){2-3}\cmidrule(r){4-5}\cmidrule(r){6-6}

& Sentiment & Tense & iBL & CTC &PPL \\

\midrule

FUDGE & 0.36 & 0.56 & \textbf{56.5} & 0.450 & \textbf{17.3}\\

Ours & \textbf{0.95} & \textbf{0.95} & {37.1}& \textbf{0.465} & 30.1 \\\bottomrule

\end{tabular}

5.2 Text Control in Latent Space

Another common approach to control text generation is modifying text representation in the latent space. Some methods [57, 17] utilize a VAE to encode the input sequence into $\bm z$ in the latent space and then use attribute networks that are jointly trained with the VAE to obtain $\bm z^\prime$ that can be decoded into the desired sequence. PPVAE [19] uses an unconditional Pre-train VAE and a conditional Plugin-VAE to achieve the goal. Plug and Play [58] follows a similar framework but replaces the VAE with an Auto-encoder and the Plugin-VAE with an MLP to obtain a desired vector $\bm z^\prime$. Some methods use an attribute classifier to edit the latent representation $\bm{z}$ with Fast-Gradient-Iterative-Modification [16]. Because of the recent success of diffusion models, LDEBM [59] proposes a diffusion process in the latent space whose reverse process is constructed with a sequence of EBMs for text generation.

6. Conclusions

Section Summary: Researchers have created a new method called LatentOps that efficiently controls and combines operations within a simplified, hidden representation of text, allowing for flexible manipulations in a low-dimensional space. This approach uses an energy-based system and a sampler based on mathematical equations to generate text from these representations, linking seamlessly to existing AI language models without needing extensive retraining. The technique demonstrates strong compositionality and performance across various tasks, with plans to extend it to more complex text generation in the future.

We have developed a new efficient approach that performs composable control operations in the compact latent space of text, named $\textsc{LatentOps}$. The proposed method permits combining arbitrary operators applied on a latent vector, resulting in an energy-based distribution on the low-dimensional continuous latent space. We develop an efficient and robust sampler based on ODEs that effectively samples from the distribution guided by gradients. We connect the latent space to popular pretrained LM by efficient adaptation without finetuning the whole model. We showcase its compositionality, flexibility and firm performance on several distinct tasks. In future work, we can explore the control of more complicated texts.

Ethical Considerations

Section Summary: This paper explores challenges in creating efficient, flexible text operations within a compact hidden representation of language, testing them on standard public datasets, with potential uses in generating tailored text, transferring styles, enhancing data, and enabling learning from few examples. The underlying variational autoencoders (VAEs) replicate patterns from their training data, so any biases in that data—along with new ones from the model's design or training—can lead to biased outputs. Researchers must recognize the risk of these tools being used to create deceptive or false information and approach their development with responsibility.

The contributions of this paper mostly focus around the fundamental challenges in designing an efficient approach for composable text operations in the compact latent space of text, and the proposed method is examined on commonly used public datasets. This work has applications in conditional text generation, text style transfer, data augmentation, and few-shot learning.

VAEs, the framework of our latent model, are trained to mimic the training data distribution, and, bias introduced in data collection will make VAEs generate samples with a similar bias. Additional bias could be introduced during model design or training. However, such techniques could be misused to produce fake or misleading information, and researchers should be aware of these risks and explore the techniques responsibly.

Limitations

Section Summary: This paper focuses on analyzing single sentences to study the controllability and compositionality of a proposed method in a controlled environment, which helps gain clear insights without dealing with complex data issues. However, the results may not apply directly to longer texts, which involve intricate structures, dependencies, and contexts that could challenge the method's performance and require further adjustments. Future research could expand the approach to handle more complex writing and compare it with other techniques.

The primary focus of this paper is the analysis of single sentences. Our objective has been to deeply understand the potential of the proposed method, with a particular emphasis on its controllability and compositionality. Analyzing single sentences offers a relatively controlled setting, making it easier to derive clear insights and manage data complexities.

However, it's important to recognize that our findings, while based on single sentences, may not directly translate to longer textual content. Lengthier texts bring with them complex structures, dependencies, and nuanced contexts that might affect the performance of our methods. Adapting to these challenges may require further refinements.

Considering the scope of our current investigation, there exists significant opportunity for future research. This includes not only adapting our methodology to handle more intricate textual scenarios but also contrasting its performance with other potential approaches. Such explorations remain promising directions for forthcoming studies.

A Derivation of ODE Formulation

Section Summary: This section explains how to derive a deterministic ordinary differential equation (ODE) from stochastic differential equations (SDEs) that model diffusion processes, starting with a general form where random changes are added to a system's evolution over time. For their specific approach, the authors use a variance-preserving SDE as the forward process, which gradually adds noise to data, and then derive the corresponding reverse-time SDE to undo this noise by following the probability flow backward. From there, they simplify to an ODE that removes the randomness entirely, resulting in a smooth path for generating data without stochastic elements.

A.1 General Form

Let's consider the general diffusion process defined by SDEs in the following form (see more details in Appendix A and D.1 of [11]):

$ \text{d}\bm{x} = \bm f(\bm x, t)\text{d}t +\bm{G}(\bm x, t)d\bm w,\tag{9} $

where $\bm f(\cdot, t):\mathbb{R}^d\rightarrow\mathbb{R}^d$ and $\bm{G}(\cdot, t):\mathbb{R}^d\rightarrow\mathbb{R}^{d\times d}$. The corresponding reverse-time SDE is derived by [33]:

$ \text d \bm x=\left{ \bm f(\bm x, t) - \nabla_{\bm x} \cdot[\bm{G}(\bm{x}, t) \bm{G}(\bm{x}, t)^{\text T}] - \bm{G}(\bm{x}, t) \bm{G}(\bm{x}, t)^{\text T}\nabla_{\bm x} \log p_t(\bm x) \right}\text d t+ \bm{G}(\bm{x}, t)\text d \bar{\bm w},\tag{10} $

where we refer $\nabla_{\bm x}\cdot \bm F(\bm x) := [\nabla_{\bm x}\cdot \bm f^1(\bm x), ..., \nabla_{\bm x}\cdot \bm f^d(\bm x)]^{\text T}$ for a matrix-valued function $\bm F(\bm x):=[\bm f^1(\bm x), ..., \bm f^d(\bm x)]^{\text T}$, and $\nabla_{\bm x}\cdot \bm f^i(\bm x)$ is the Jacobian matrix of $f^i(\bm x)$. Then the ODE corresponding to 9 has the following form:

$ \text d \bm x=\left{ \bm f(\bm x, t) - \frac{1}{2}\nabla_{\bm x}\cdot[\bm{G}(\bm{x}, t) \bm{G}(\bm{x}, t)^{\text T}] -\frac{1}{2}\bm{G}(\bm{x}, t) \bm{G}(\bm{x}, t)^{\text T}\nabla_{\bm x}\log p_t(\bm x) \right}\text d t.\tag{11} $

A.2 Derivation of Our ODE

In this work, we adopt the Variance Preserving (VP) SDE [11] to define the forward diffusion process:

$ \text{d} \bm x = -\frac{1}{2}\beta(t)\bm x \text{d}t+\sqrt{\beta(t)}\text{d} \bm w, $

where the coefficient functions of Equation 9 are $\bm f(\bm x, t)=-\frac{1}{2}\beta(t)\bm x\in\mathbb{R}^d$ and $\bm{G}(\bm{x}, t)=\bm G(t)=\sqrt{\beta(t)}\bm I_d\in\mathbb{R}^{d\times d}$, independent of $\bm x$. Following Equation 10, the corresponding reverse-time SDE is derived as:

$ \begin{split} \text d \bm x &= \left[-\frac{1}{2}\beta(t)\bm x - \beta(t)\nabla_{\bm x}\cdot\bm I_d - \beta(t)\bm I_d\nabla_{\bm x}\log p_t(\bm x) \right]\text d t + \sqrt{\beta(t)}\bm I_d \text d \bar{\bm w}\ & = \left[-\frac{1}{2}\beta(t)\bm x - \beta(t)\nabla_{\bm x}\log p_t(\bm x) \right]\text d t + \sqrt{\beta(t)}\text d \bar{\bm w}\ &= -\frac{1}{2}\beta(t)\left[\bm x + 2\nabla_{\bm x}\log p_t(\bm x) \right]\text d t + \sqrt{\beta(t)}\text d \bar{\bm w}, \end{split} $

which infers to the Equation 2. Then, we derive the deterministic process (ODE) on the basis of Equation 11:

$ \begin{split} \text d \bm x &= \left[-\frac{1}{2}\beta(t)\bm x -\frac{1}{2}\beta(t)\nabla_{\bm x}\cdot\bm I_d-\frac{1}{2}\beta(t)\bm I_d\nabla_{\bm x}\log p_t(\bm x) \right]\text d t\ &=\left[-\frac{1}{2}\beta(t)\bm x -\frac{1}{2}\beta(t)\nabla_{\bm x}\log p_t(\bm x) \right]\text d t\ &=-\frac{1}{2}\beta(t)\left[\bm x +\nabla_{\bm x}\log p_t(\bm x) \right]\text d t, \end{split} $

which gives the derivation of Equation 3.

B Evaluation of Sample Selection Strategy

Section Summary: The researchers evaluate a sample selection strategy for improving text editing and keyword-based generation tasks by generating multiple output variations from a model's latent space and choosing the best one based on criteria like attribute matching and similarity to the original text. In experiments on Yelp reviews, using more samples (up to 20) leads to better results, with higher accuracy in sentiment editing and stronger preservation of the source content's meaning, as shown in evaluation metrics and trend graphs. Examples illustrate how this approach produces similar but slightly varied descriptions from the same input, allowing selection of the most fitting version.

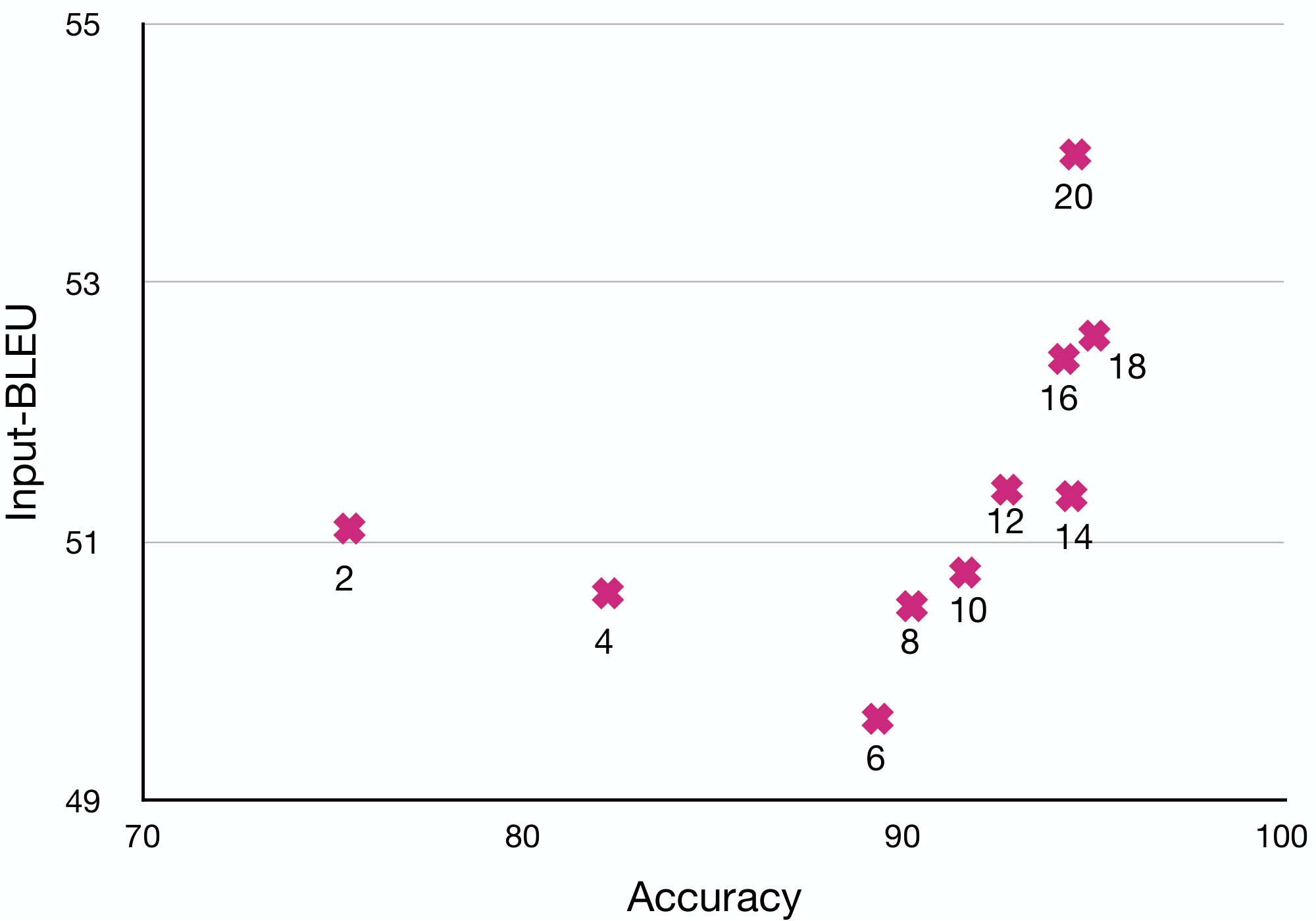

As we stated in § 3.4, we adopt a sample selection strategy for content-related generation tasks (text editing and generation with keywords). Previous works also have similar strategies to improve the generation quality (i.e., PPLM [4] and FUDGE [5]).

Since our latent model is trained by VAE objective, a sample $\bm x \in\mathcal{X}$ corresponds to a distribution $\mathcal{N}(\bm\mu, \bm\sigma^2)$ in $\mathcal{Z}$. Thus, we can search for better output by expanding the search space through sampling $\bm z_n\sim\mathcal{N}(\bm\mu, \bm\sigma^2)$, where $n=1, ..., N$, and pick the best. Specifically, from ODE sampling, $\bm z(0)$ acts as the mean, and the variance $\bm \sigma^2$ is predefined. We generate $\bm z_n$ by sampling $\bm\epsilon_n$ from standard Gaussian:

$ \bm z_n = \bm z(0) + \bm\sigma\odot\bm\epsilon_n, \quad \bm \epsilon_n\sim\mathcal N(\bm 0, \bm I). $

We decode each $\bm z_n$ and pick the best one according to the criterion of the task. We prefer the output that conforms to the desired attribute and achieves a high BLEU score with the source text for the text editing task. We want the output that contains the desired keyword or its variants for the generation with keywords.

In our experiments (text editing and generation with keywords), we set $N=20$ as the default. To better demonstrate the strategy's improvement, we provide the quantitative and qualitative results towards different $N$.

We follow the same setting of text editing with single attribute on Yelp (§ D.3.4). The automatic evaluation results are shown in Table 7. As $N$ increases, all the metrics get improved. To reflect the trend of change in accuracy and content preservation, we plot Figure 3, which indicates that large $N$ gives better accuracy and better input-BLEU.

: Table 7: Automatic evaluation results towards to different $N$ on Yelp review dataset. We mark the best bold and the second best underline.

| $N$ | Accuracy $\uparrow$ | \multicolumn{3}{c}{Content $\uparrow$} | Fluency $\downarrow$ | | :---: | :---: | :---: | :---: | :---: | :---: | | | Sentiment | iBL | rBL | CTC | PPL | | 2 | 0.75 | 51.1 | 21.4 | 0.4737 | 26.3 | | 4 | 0.82 | 50.6 | 22.0 | 0.4729 | 26.7 | | 6 | 0.89 | 49.6 | 22.3 | 0.4729 | 26.2 | | 8 | 0.9 | 50.5 | 22.2 | 0.4732 | 25.9 | | 10 | 0.92 | 50.8 | 23.1 | 0.4730 | 26.2 | | 12 | 0.93 | 51.4 | 23.2 | 0.4733 | 26.1 | | 14 | 0.94 | 51.4 | 23.0 | 0.4732 | 26.9 | | 16 | 0.94 | 52.4 | 23.4 | 0.4737 | 25.9 | | 18 | 0.95 | 52.6 | 23.6 | 0.4739 | 25.8 | | 20 | 0.95 | 54.0 | 24.2 | 0.4743 | 25.9 |

We also provide some examples in Table 8. One observation is that all the outputs from the same source sequence describe similar scenarios but slightly differ in expression. Thus, we can select the most suitable expression based on predefined rules.

\begin{tabular}{l|l}

\toprule

Source&there is definitely not enough room in that part of the venue .\\

Target&there is so much room in that part of the venue\\\midrule

&there is definitely plenty of room in that perfect location .\\

&there is definitely no room enough in that venue to be the best part .\\

&there is definitely plenty of room right in that venue .\\

&there is definitely plenty of room right in the venue that needs .\\

&there is definitely plenty of room right in that venue .\\

&there is definitely enough room that can be right in the venue .\\

&there is definitely nothing better in room for that type of venue .\\

&there is definitely plenty of room in the right venue for that level .\\

&there is definitely nothing better in that room style of place .\\

&there is definitely a good room inside that best of all need in space .\\

&there is definitely plenty of room in the right level that is appropriate .\\

&there is definitely enough room in that right part of the venue .\\

&there is definitely plenty of room right in the deck that is needed .\\

&there is definitely enough room in that good atmosphere .\\

&there is definitely plenty of room in the right area, which is comfortable .\\

&there is definitely plenty of room in that perfect state of the place .\\

&there is definitely plenty of room that ideal in the location .\\

&there is definitely enough room in that perfect venue to all .\\

&there is definitely plenty of room in the right venue as well .\\

&there is definitely plenty of room available in the overall venue, too .\\

\midrule[1pt]

Source&it is n't terrible, but it is n't very good either .\\

Target&it is n't perfect, but it is very good .\\\midrule

&it is n't terrible, but it is very good also !\\

&it is very good, but it does n't even look great !\\

&it is n't terrible, but it is very good and definitely is good !\\

&it is n't great, but it is definitely very good !\\

&it is n't terrible, it is good and the menu is definitely great !\\

&it is n't terrible, but it is n't very good either .\\

&it is n't terrible, but it is very good also .\\

&it is n't terrible, but it is very good also !\\

&it is n't terrible, but it is definitely very good !\\

&it is very good, and it is n't terrible either .\\

&it is n't terrible, but it is very good and well made !\\

&it is very good, but it 's not really great either .\\

&it is n't terrible, but it is very good and well worth it .\\

&it is n't terrible, but it is definitely very good and good !\\

&it is n't terrible, but it is very good also !\\

&it is n't terrible, but it is very good and definitely is great !\\

&it is n't terrible, but it is very good also .\\

&it is n't terrible, but it is n't very good either .\\

&it is n't terrible, but it is very good also .\\

&it is n't terrible, but it is very good and always great !\\

\midrule[1pt]

Source&the food was pretty bad, i would not go there again .\\

Target&the food was great, i would go there again.\\\midrule

&he food was pretty good, i would go there again .\\

&the food was pretty good, i would def go there again !\\

&the food was pretty good, i would go again !\\

&the food was pretty good, i would go there again !\\

&the food was pretty good, i would definitely go there again .\\

&the food was pretty good, i would go back there again .\\

&the food was pretty good, i would definitely go back again .\\

&the food was pretty good, i would definitely go there again !\\

&the food was pretty good, i would definitely go there again .\\

&the food was pretty good, i would always go there again .\\

&the food was pretty good, i would go there again .\\

&the food was pretty good, i would not go there again .\\

&the food was pretty good, i would go there again .\\

&the food was pretty good, i would go back there again .\\

&the food was pretty good, i would go there again .\\

&the food was pretty good, i would definitely go there again !\\

&the food was pretty good, i would not go there again .\\

&the food was pretty good, i would definitely go there again .\\

&the food was pretty good, i would definitely go back again .\\

&the food was pretty good, i would go here again .\\

\bottomrule

\end{tabular}

C Distinguishing from Other Works

Section Summary: This section explains how LatentOps stands out from similar models like LACE by focusing on the unique challenges of working with text, which is made of distinct units and comes in varying lengths, unlike simpler image data. While LACE relies on pre-trained image generation networks that require extra tools for labeling and human cleanup during training, LatentOps cleverly repurposes large language models within a more efficient encoding framework, allowing direct and faster training without those hassles. Overall, LatentOps builds its core operations right in the text's hidden space, keeping things simpler and more aligned for tasks like creating or editing text.

In this section, we outline the foundational and methodological differences that set $\textsc{LatentOps}$ apart from models like LACE ([12]). The underlying motivation for our approach is fundamentally different. Text, unlike images, is characterized by discrete values and varying lengths, making it inherently more challenging to model. Given these complexities, there are only a handful of works exploring text operations within a condensed latent space. If we can fully comprehend this text latent space, we can align various textual tasks, such as generation and text editing, with operations in the latent domain.

LACE ([12]), for instance, builds its foundation on pre-trained GANs. In order to train their classifiers, class labels for latent vectors are essential. This necessitates the use of external classifiers to retrieve the class labels of the latent vectors, with human intervention required to filter out subpar samples. Our approach, on the other hand, effectively adapts large pre-trained LMs to the VAE framework. For specific datasets, the VAE is not only efficient but also expeditious in its training. Thanks to the bi-directional mapping between the latent space and text space, we can directly train the classifier using the marginal distribution.

Furthermore, LACE establishes a joint distribution in the image space and then transitions to the latent space using the reparameterization trick. Contrarily, our model defines its joint distribution directly within the text latent space.

Additionally, the architecture of LACE, built upon the GAN latent space, necessitates certain specialized regularization terms in its energy function for different tasks to optimize performance. Our model benefits from the more structured latent space provided by the VAE, allowing our energy function to remain straightforward and consistently aligned with our practical definitions.

D More Details and Results of Experiments

Section Summary: This section delves into the experimental setup and results for testing a text generation model that controls multiple attributes like sentiment, tense, formality, and keywords. It uses datasets such as Yelp, Amazon, and GYAFC, with BERT-small as the encoder and GPT2-large as the decoder in a variational autoencoder framework, evaluating performance through metrics like BLEU scores and attribute accuracy via fine-tuned classifiers. Key findings include minimal impact from varying encoder sizes but significant gains from larger decoders like GPT2-large, improved training stability, and comparisons with baselines like PPLM and FUDGE for generating text with combined attributes.

In this section, we provide more details and results of the experiments (§ 4).

D.1 Setup

The Yelp dataset and Amazon dataset contain 443K/4K/1K and 555K/2K/1K sentences as train/dev/test sets, respectively. Since Yelp and Amazon datasets[^2][^3] are mainly developed for sentiment usage, we annotate them with a POS tagger to get the tense attribute to test the ability of our model that can be extended to an arbitrary number of attributes. Besides, we also use GYAFC dataset [54] to include the formality attribute. Note that the GYAFC dataset has somewhat different domains from Yelp/Amazon, which can be used to test our model's out-of-domain generalization ability. All the datasets are in English.

[^3]: The datasets are distributed under CC BY-SA 4.0 license.

We adopt BERT-small[^4] and GPT2-large[^5] as the encoder and decoder of our latent model, respectively. The training paradigm follows § 3.4, and some training tricks [42] (i.e., cyclical schedule for KL weight and KL thresholding scheme) are applied to stabilize the training of the latent model. All the attributes are listed in Table 9. All the models are trained and tested on a single Tesla V100 DGXS with 32 GB memory. Input-BLEU, reference-BLEU and self-BLEU are implemented by nltk [60] package.

[^4]: The BERT model follows the Apache 2.0 License.

[^5]: The GPT2 model follows the MIT License.

We employ a BERT classifier to determine attribute accuracy, serving as a metric for the evaluation of the success rate. More precisely, we finetune BERT-base models dedicated to classification tasks using the respective dataset. For instance, when evaluating sentiment, the classifier tailored for the Yelp dataset registers accuracies of 97.1% and 97.3% on the dev and test sets, respectively. Meanwhile, for the Amazon dataset, the sentiment classifier records accuracies of 86.9% and 85.7% on the dev and test sets.

In our experiments of generation with single attribute, we also incorporate the MAUVE metric ([61]), an automatic measure of the gap between neural text and human text for text generation. In alignment with the official recommendations associated with MAUVE, we select a random subset of 10,000 sentences from the training set to serve as reference sentences.

For the operator (classifier) $f_i(\bm z)$, we adopt a four-layer MLP as the network architecture as shown in Table 10. Since the number of trainable parameters of the classifier is small, it is rapid to train and sample.

:Table 9: All attributes and the corresponding dataset are used in our experiments.

| Style | Attributes | Dataset |

|---|---|---|

| Sentiment | Positive / Negative | Yelp, Amazon |

| Tense | Future / Present / Past | Yelp |

| Keywords | Existence / No Existence | Yelp |

| Formality | Formal / Informal | GYAFC |

:Table 10: The architecture of the attribute classifier.

| Input | Layer 1 | Layer 2 | Layer 3 | Layer 4 |

|---|---|---|---|---|

| $\bm z\in\mathbb{R}^{64}$ | Linear 43, LeakyReLU | Linear 22, LeakyReLU | Linear 2, LeakyReLU | Linear #logits |

Observations on Scalability

In our experiments with different encoder and decoder scales, several observations emerged. Firstly, while we evaluated various encoder models, including BERT, RoBERTa, and other pre-trained language models (PLMs) of different scales, the distinctions in performance were minimal. In this context, BERT-small proved sufficiently robust, serving as an effective encoder for the VAE framework. Secondly, the scale of the decoder was observed to significantly influence performance. We examined a spectrum of models, ranging from GPT2-base to GPT2-xl. Through these tests, GPT2-base and GPT2-large emerged as the optimal choices, providing a harmonious blend of performance results and computational efficiency. Stability of VAE training

The stability of VAE training has benefited from recent innovations, as evidenced by works like [42]. Our empirical observations, corroborated by subsequent research such as [43], attest to these advancements. In addition, our approach's constrained parameter training further enhances this stability. As a result, training the VAE has become less of a challenge or bottleneck than before.

D.2 Generation with Compositional Attributes

The section is a supplement of § 4.1, we give more details of experimental configuration, generated examples and discussion.

D.2.1 More Details of Baselines

We compare our method with PPLM [4], FUDGE [5], and a finetuned GPT2-large [45]. PPLM and FUDGE are plug-and-play controllable generation approaches on top of an autoregressive LM as the base model. For fair comparison (§ 3.3), we obtain the base model by finetuning the embedding layer and the first transformer layer of pretrained GPT2-large on the Yelp review dataset with unlabeled data. All the classifiers/discriminators of PPLM, FUDGE and our $\textsc{LatentOps}$ are trained by a small subset of the original dataset (200 labeled data instances per class). PPLM

requires a discriminator attribute model (or bag-of-words attribute models) learned from a pretrained LM's top-level hidden layer. At decoding, PPLM modifies the states toward the increasing probability of the desired attribute via gradient ascent. We only consider the discriminator attribute model, which is consistent with other baselines and ours. We follow the default setting of PPLM, and for each attribute, we train a single layer MLP as the discriminator. FUDGE

has a discriminator that takes in a prefix sequence and predicts whether the generated sequence would meet the conditions. FUDGE could control text generation by directly modifying the probabilities of the pretrained LM by the discriminator output. We follow the architecture of FUDGE and train a discriminator for each attribute. Furthermore, we tune the $\lambda$ parameter of FUDGE which is a weight that controls how much the probabilities of the pretrained LM are adjusted by the discriminator, and we find $\lambda$ =10 yields the best results. We follow the default setting of FUDGE, and for each attribute, we train a three-layer LSTM followed by a Linear as the discriminator. GPT2-FT

is a finetuned GPT2-large model that is a conditional language model, not plug-and-play. Specifically, we train an external classifier for the out-of-domain attribute (i.e., formality) to annotate all the data in Yelp. For tense, we use POS tagging to annotate the data automatically. Then we finetune the embedding layer and the first layer of GPT2-large by the labeled data. Since GPT2-FT is fully-supervised and not plug-and-play, it is not comparable with other baselines and ours, and we only use it for reference.

D.2.2 More Discussion of Generation with Compositional Attributes

Discussion of Quantitative Results

As we state in § 4.1.1, our method is superior to baselines. We want to discuss the results in Table 1.

For success rate, our method dramatically outperforms FUDGE and PPLM as expected since both control the text by modifying the outputs (hidden states and probabilities) of PLM, which includes the token-level feature and lacks the sentence-level semantic feature. On the contrary, our method controls the attributes by operating the sentence-level latent vector, which is more suitable.

For diversity, since our method bilaterally connects the discrete data space with continuous latent space, which is more flexible to sample, ours gains obvious superiority in diversity. Conversely, PLMs like GPT2, which is the basis of PPLM and FUDGE, are naturally short of the ability to generate diverse texts. They generate diverse texts by adopting other decoding methods (like top-k), which results in the low diversity of the baselines.

For fluency, we calculate the perplexity given by a finetuned GPT2, which processes the same architecture and training data of PPLM and FUDGE, so naturally, they can achieve better perplexity even compared to the perplexity of test data and human-annotated data. Moreover, our method only requires an Extra Adapter to guide the fixed GPT2, and our fluency is in a regular interval, a little higher than the perplexity of human-annotated data.

Since GPT2-FT is trained with full joint labels (all the data has all three attribute labels), it can achieve a reasonable success rate, and ours is comparable. Moreover, consistent with PPLM and FUDGE, GPT2-FT can achieve good perplexity but poor diversity due to the sampling method.

Discussion of Qualitative Results

We provide some generated examples in Table 11 to raise a more direct comparison. Consistent with the quantitative results, it is difficult for FUDGE to control all the desired attributes successfully, although GPT2-FT and ours perform well. For diversity, it is evident that FUDGE and GPT2-FT prefer to generate short sentences containing very little information. Some words appear highly, yet ours gives a more diverse description. Regarding fluency, since FUDGE and GPT2-FT tend to generate simple sentences, they can obtain better perplexity readily. However, ours is inclined to generate more informative sentences. In conclusion, there is a trade-off between diversity and fluency. It can be handled well by ours, but for the baselines, they pursue fluency too much and lose diversity.

\begin{tabular}{m{0.4\textwidth}m{0.4\textwidth}}

\toprule

\textbf{Positive + Present + Formal}&\textbf{Negative + Past + Inormal} \\\midrule

\multicolumn{1}{l}{GPT2-FT:}&\multicolumn{1}{l}{GPT2-FT:}\\

\quad the staff is friendly and helpful.&\quad didn't bother with the food and just walked out.\\

\quad i love it here. [Informal]&\quad just not a good place for me. [No Tense]\\

\quad this {is} the place to go for {traditional} chinese food.&\quad not a fan of this place. [No Tense]\\

\quad highly recommend them. [Informal]&\quad just not good. [No Tense]\\

\quad the menu {is} small but {very nice}.&\quad horrible! [No Tense]\\

\quad it{'s} a {great} place.&\quad oh and the cake was way too salty.\\

\quad i {highly {recommend}} this place.&\quad but we didn't even finish it.\\

\midrule

\multicolumn{1}{l}{PPLM:}&\multicolumn{1}{l}{PPLM:}\\

\quad i love this store and the service is always friendly and courteous.&\quad i ordered delivery... what?\\

\quad the staff was so friendly \& helpful![Informal]&\quad great service. [No Tense]\\

\quad the place is clean.&\quad this place was terrible!\\

\quad the best french bakery i have ever been to in las vegas!&\quad the service was horrible horrible horrible!\\

\quad this place was a gem!&\quad i ordered the ribs and brisket tacos and it was very bland. [Formal]\\