Video Pixel Networks

Nal Kalchbrenner, Aäron van den Oord, Karen Simonyan

Ivo Danihelka, Oriol Vinyals, Alex Graves, Koray Kavukcuoglu

{nalk,avdnoord,simonyan,danihelka,vinyals,gravesa,korayk}@google.com

Google DeepMind, London, UK

Abstract

We propose a probabilistic video model, the Video Pixel Network (VPN), that estimates the discrete joint distribution of the raw pixel values in a video. The model and the neural architecture reflect the time, space and color structure of video tensors and encode it as a four-dimensional dependency chain. The VPN approaches the best possible performance on the Moving MNIST benchmark, a leap over the previous state of the art, and the generated videos show only minor deviations from the ground truth. The VPN also produces detailed samples on the action-conditional Robotic Pushing benchmark and generalizes to the motion of novel objects.

Executive Summary: Video modeling presents a persistent challenge in artificial intelligence because videos combine motion over time with spatial details and color variations, often leading to predictions plagued by blurring or other distortions. Existing methods, such as those relying on error minimization or motion assumptions, struggle to produce clear, realistic continuations even for simple scenarios like moving shapes. This matters now as advances in video generation could enhance applications in robotics, simulation, and content creation, enabling more reliable predictions of future actions or movements.

This document introduces the Video Pixel Network (VPN), a new type of generative model designed to predict future video frames by directly estimating the full probability distribution of raw pixel values, accounting for dependencies across time, space within frames, and color channels.

The researchers developed the VPN using deep neural networks tailored to video structure. They encoded input frames with convolutional neural networks that maintain full resolution to preserve details, then processed these over time with a convolutional long short-term memory unit to capture motion. For each predicted pixel, decoder networks modeled spatial and color links using masked convolutions, outputting probability distributions over pixel intensities. The model trains on raw pixels without preprocessing, ensuring exact computation of video likelihoods. They tested it on two benchmarks: Moving MNIST, involving 20-frame sequences of two bouncing digits (trained on millions of generated examples, tested on 10,000 fixed sequences using 10 context frames to predict 10 more), and Robotic Pushing, with 20-frame clips of a robot arm interacting with objects (trained on 50,000 sequences, tested on 3,000, using 2 context frames and actions to predict 18 more). A baseline model omitted spatial and color dependencies for comparison, and all used standard optimization over hundreds of thousands of training steps.

The VPN delivered striking results on both benchmarks. First, it nearly matched the theoretical minimum error on Moving MNIST, scoring 87.6 nats per frame—within 1.5% of the 86.3 lower bound—outperforming the prior best by over 50% and the baseline by 20%. Generated videos remained sharp across 10 frames, even when diverging from actual paths. Second, on Robotic Pushing, the VPN cut prediction error by more than 65% compared to the baseline (0.62–0.64 versus 2.06 nats per dimension), producing detailed sequences that accurately depicted arm movements, object interactions, and occlusions. It generalized effectively: samples matched real videos in quality for unseen action sequences and even novel objects not encountered in training, while the baseline showed noise and inconsistency. Variants with advanced building blocks, like multiplicative units and dilated convolutions for broader context, further boosted performance, though simpler versions were nearly as effective on easier tasks.

These findings indicate that explicitly modeling pixel-level dependencies across video dimensions enables highly accurate, artifact-free predictions, surpassing methods that ignore spatial or color links. This reduces risks of unreliable outputs in practical uses, such as robotic planning where blurry predictions could lead to errors, or simulations needing precise motion. Unlike prior work, the VPN avoids special priors or losses, suggesting a more robust, general approach—though it confirms expectations that full dependencies prevent inconsistencies like mismatched motions.

Next, organizations should prioritize integrating VPN-like models into video-based AI systems, starting with pilots in controlled domains like robotics or surveillance to validate real-world gains in prediction accuracy and efficiency. Further development could explore scaling to higher resolutions or longer sequences, trading off computation time for broader applicability. Options include hybrid models combining VPN with faster approximations for real-time use, balancing detail against speed.

Key limitations include the high computational demands for generating long videos—requiring sequential sampling of hundreds of thousands of pixels—and reliance on simplified benchmarks that may not fully capture chaotic real-world footage. Confidence in the results is high for the tested scenarios, given the near-optimal scores and visual fidelity, but caution is warranted for complex, high-variety videos where additional data or refinements might be needed.

1. Introduction

Section Summary: Video modeling is a tough challenge because videos are complex and ambiguous, with existing methods often producing blurry or artifact-filled predictions even on simple tests. The authors introduce the Video Pixel Network (VPN), a deep neural network model that breaks down the probabilities of pixel values in videos across time, space, and color, allowing exact calculations of video likelihoods without simplifying assumptions. VPN uses resolution-keeping image processors, time-aware memory units, and pixel-by-pixel predictors, achieving sharp results on benchmarks like predicting digit movements in Moving MNIST and robotic object pushing, where it even handles unseen objects well.

Video modelling has remained a challenging problem due to the complexity and ambiguity inherent in video data. Current approaches range from mean squared error models based on deep neural networks ([1, 2]), to models that predict quantized image patches ([3]), incorporate motion priors ([4, 5]) or use adversarial losses ([6, 7]). Despite the wealth of approaches, future frame predictions that are free of systematic artifacts (e.g. blurring) have been out of reach even on relatively simple benchmarks like Moving MNIST ([1]).

We propose the Video Pixel Network (VPN), a generative video model based on deep neural networks, that reflects the factorization of the joint distribution of the pixel values in a video. The model encodes the four-dimensional structure of video tensors and captures dependencies in the time dimension of the data, in the two space dimensions of each frame and in the color channels of a pixel. This makes it possible to model the stochastic transitions locally from one pixel to the next and more globally from one frame to the next without introducing independence assumptions in the conditional factors. The factorization further ensures that the model stays fully tractable; the likelihood that the model assigns to a video can be computed exactly. The model operates on pixels without preprocessing and predicts discrete multinomial distributions over raw pixel intensities, allowing the model to estimate distributions of any shape.

The architecture of the VPN consists of two parts: resolution preserving CNN encoders and PixelCNN decoders ([8]). The CNN encoders preserve at all layers the spatial resolution of the input frames in order to maximize representational capacity. The outputs of the encoders are combined over time with a convolutional LSTM that also preserves the resolution ([9, 10]). The PixelCNN decoders use masked convolutions to efficiently capture space and color dependencies and use a softmax layer to model the multinomial distributions over raw pixel values. The network uses dilated convolutions in the encoders to achieve larger receptive fields and better capture global motion. The network also utilizes newly defined multiplicative units and corresponding residual blocks.

We evaluate VPNs on two benchmarks. The first is the Moving MNIST dataset ([1]) where, given 10 frames of two moving digits, the task is to predict the following 10 frames. In Sect. 5 we show that the VPN achieves 87.6 nats/frame, a score that is near the lower bound on the loss (calculated to be 86.3 nats/frame); this constitutes a significant improvement over the previous best result of 179.8 nats/frame ([4]).

The second benchmark is the Robotic Pushing dataset ([5]) where, given two natural video frames showing a robotic arm pushing objects, the task is to predict the following 18 frames. We show that the VPN not only generalizes to new action sequences with objects seen during training, but also to new action sequences involving novel objects not seen during training. Random samples from the VPN preserve remarkable detail throughout the generated sequence. We also define a baseline model that lacks the space and color dependencies. This lets us see that the latter dependencies are crucial for avoiding systematic artifacts in generated videos.

2. Model

Section Summary: The Video Pixel Networks model treats a video as a four-dimensional grid of pixels across time, rows, columns, and RGB colors, breaking down the probability of the entire video into a chain of simpler predictions for each pixel's color values. It predicts pixels frame by frame in temporal order, scanning each frame from top-left to bottom-right, and conditions each color (red, then green, then blue) on all prior frames and pixels already predicted in the current frame to capture both motion and spatial details accurately. In contrast, a baseline model only relies on previous frames while ignoring connections between pixels and colors within a frame, which can cause inconsistencies like blurry or mismatched movements, as shown in an example of a robotic arm deciding to move left or right.

In this section we define the probabilistic model implemented by Video Pixel Networks. Let a video $\mathbf{x}$ be a four-dimensional tensor of pixel values $\mathbf{x}{t, i, j, c}$, where the first (temporal) dimension $ t \in {0, ..., T}$ corresponds to one of the frames in the video, the next two (spatial) dimensions $i, j \in {0, ..., N}$ index the pixel at row $i$ and column $j$ in frame $t$, and the last dimension $c \in {R, G, B}$ denotes one of the three RGB channels of the pixel. We let each $\mathbf{x}{t, i, j, c}$ be a random variable that takes values from the RGB color intensities of the pixel.

By applying the chain rule to factorize the video likelihood $p(\mathbf{x})$ as a product of conditional probabilities, we can model it in a tractable manner and without introducing independence assumptions:

$ p(\mathbf{x}) = \prod_{t=0}^{T}\prod_{i=0}^N\prod_{j=0}^Np(\mathbf{x}{t, i, j, B} | \mathbf{x}{<}, \mathbf{x}{t, i, j, R}, \mathbf{x}{t, i, j, G})\ p(\mathbf{x}{t, i, j, G} | \mathbf{x}{<}, \mathbf{x}{t, i, j, R})\ p(\mathbf{x}{t, i, j, R} | \mathbf{x}_{<}). $

Here $\mathbf{x}{<}= \mathbf{x}{(t, <i, <j, :)}\cup \mathbf{x}_{(<t, :, :, :)}$ comprises the RGB values of all pixels to the left and above the pixel at position $(i, j)$ in the current frame $t$, as well as the RGB values of pixels from all the previous frames.

Note that the factorization itself does not impose a unique ordering on the set of variables. We choose an ordering according to two criteria. The first criterion is determined by the properties and uses of the data; frames in the video are predicted according to their temporal order. The second criterion favors orderings that can be computed efficiently; pixels are predicted starting from one corner of the frame (the top left corner) and ending in the opposite corner of the frame (the bottom right one) as this allows for the computation to be implemented efficiently ([8]). The order for the prediction of the colors is chosen by convention as R, G and B.

The VPN models directly the four dimensions of video tensors. We use $F_t$ to denote the $t$-th frame $\mathbf{x}_{t, :, :, :}$ in the video $\mathbf{x}$.

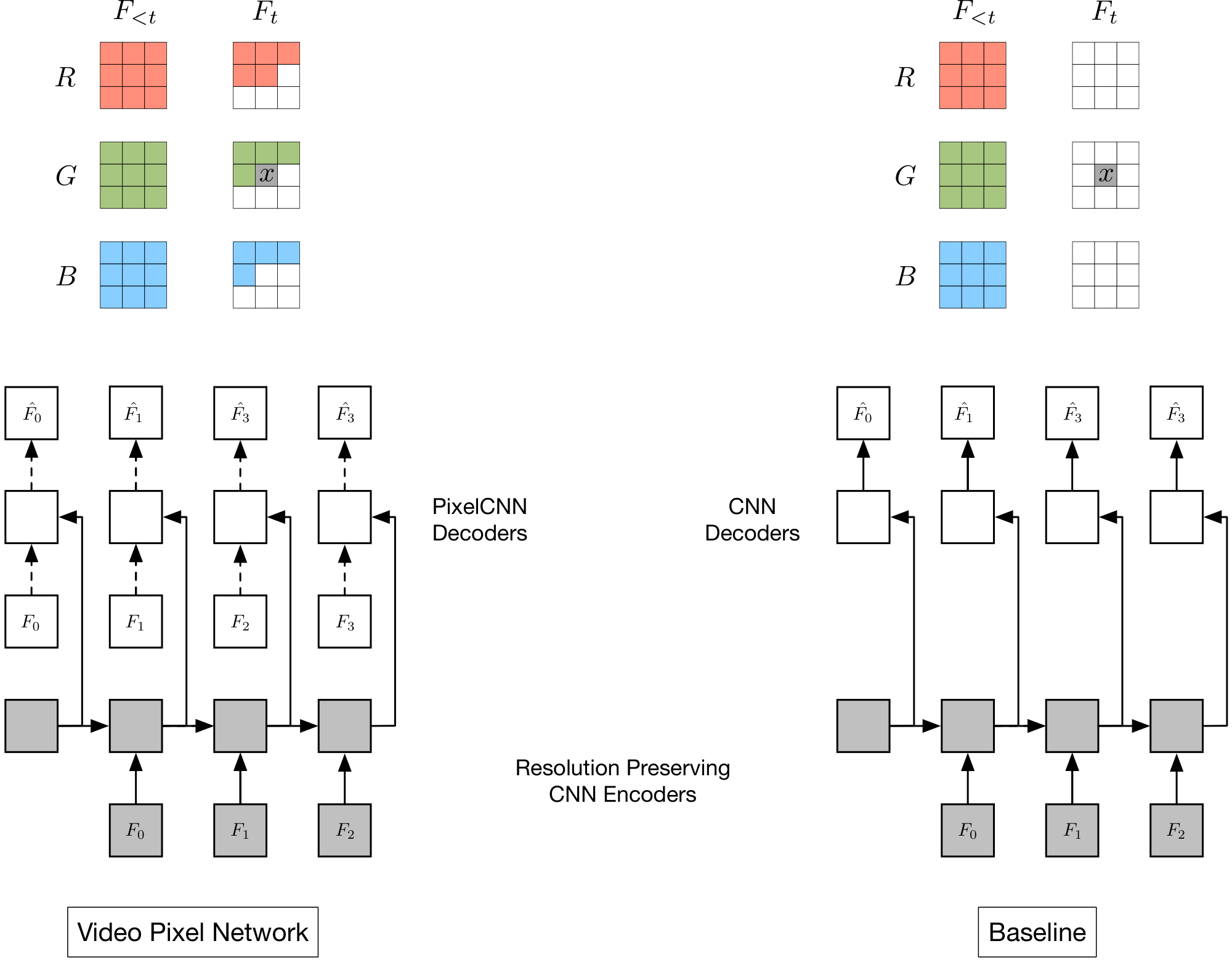

Figure 1 illustrates the fourfold dependency structure for the green color channel value of the pixel $x$ in frame $F_t$, which depends on: (i) all pixels in all the previous frames $F_{<t}$; (ii) all three colors of the already generated pixels in $F_t$; (iii) the already generated red color value of the pixel $x$.

We follow the PixelRNN approach ([8]) in modelling each conditional factor as a discrete multinomial distribution over 256 raw color values. This allows for the predicted distributions to be arbitrarily multimodal.

2.1 Baseline Model

We compare the VPN model with a baseline model that encodes the temporal dependencies in videos from previous frames to the next, but ignores the spatial dependencies between the pixels within a frame and the dependencies between the color channels. In this case the joint distribution is factorized by introducing independence assumptions:

$ p(\mathbf{x}) \approx \prod_{t=0}^{T}\prod_{i=0}^N\prod_{j=0}^N p(\mathbf{x}{t, i, j, B} | \mathbf{x}{<t, :, :, :})\ p(\mathbf{x}{t, i, j, G} | \mathbf{x}{<t, :, :, :})\ p(\mathbf{x}{t, i, j, R} | \mathbf{x}{<t, :, :, :}). $

Figure 1 illustrates the conditioning structure in the baseline model. The green channel value of pixel $\mathbf{x}$ only depends on the values of pixels in previous frames. Various models have been proposed that are similar to our baseline model in that they capture the temporal dependencies only ([3, 1, 2])

2.2 Remarks on the Factorization

To illustrate the properties of the two factorizations, suppose that a model needs to predict the value of a pixel $x$ and the value of the adjacent pixel $y$ in a frame $F$, where the transition to the frame $F$ from the previous frames $F_<$ is non-deterministic. For a simple example, suppose the previous frames $F_<$ depict a robotic arm and in the current frame $F$ the robotic arm is about to move either left or right. The baseline model estimates $p(x|F_<)$ and $p(y|F_<)$ as distributions with two modes, one for the robot moving left and one for the robot moving right. Sampling independently from $p(x|F_<)$ and $p(y|F_<)$ can lead to two inconsistent pixel values coming from distinct modes, one pixel value depicting the robot moving left and the other depicting the robot moving right. The accumulation of these inconsistencies for a few frames leads to known artifacts such as blurring of video continuations. By contrast, in this example, the VPN estimates $p(x|F_<)$ as the same bimodal distribution, but then estimates $p(y|x, F_<)$ conditioned on the selected value of $x$. The conditioned distribution is unimodal and, if the value of $x$ is sampled to depict the robot moving left, then the value of $y$ is sampled accordingly to also depict the robot moving left.

Generating a video tensor requires sampling $TN^23$ variables, which for a second of video with resolution $64 \times 64$ is in the order of $10^5$ samples. This figure is in the order of $10^4$ for generating a single image or for a second of audio signal ([11]), and it is in the order of $10^2$ for language tasks such as machine translation ([12]).

3. Architecture

Section Summary: The architecture of the Video Pixel Network (VPN) is designed to efficiently generate video frames by modeling their temporal, spatial, and color aspects in two main parts. The first part uses resolution-preserving convolutional neural network (CNN) encoders to process previous video frames and feeds their outputs into a convolutional long short-term memory (LSTM) unit, which captures how frames evolve over time without losing detail. The second part employs PixelCNN decoders, conditioned on these temporal outputs, to generate new pixels sequentially while considering their positions and colors within the frame; a baseline version swaps these for simpler CNN decoders that overlook intra-frame dependencies.

In this section we construct a network architecture capable of computing efficiently the factorized distribution in Sect. 2. The architecture consists of two parts. The first part models the temporal dimension of the data and consists of Resolution Preserving CNN Encoders whose outputs are given to a Convolutional LSTM. The second part models the spatial and color dimensions of the video and consists of PixelCNN architectures ([8, 13]) that are conditioned on the outputs of the CNN Encoders.

3.1 Resolution Preserving CNN Encoders

Given a set of video frames $F_{0}, ..., F_{T}$, the VPN first encodes each of the first $T$ frames $F_0, ..., F_{T-1}$ with a CNN Encoder. These frames form the histories that condition the generated frames. Each of the CNN Encoders is composed of $k$ ($k=8$ in the experiments) residual blocks (Sect. 4) and the spatial resolution of the input frames is preserved throughout the layers in all the blocks. Preserving the resolution is crucial as it allows the model to condition each pixel that needs to be generated without loss of representational capacity. The outputs of the $T$ CNN Encoders, which are computed in parallel during training, are given as input to a Convolutional LSTM, which also preserves the resolution. This part of the VPN computes the temporal dependencies of the video tensor and is represented in Figure 1 by the shaded blocks.

3.2 PixelCNN Decoders

The second part of the VPN architecture computes dependencies along the space and color dimensions. The $T$ outputs of the first part of the architecture provide representations for the contexts that condition the generation of a portion of the $T+1$ frames $F_0, ..., F_{T}$; if one generates all the $T+1$ frames, then the first frame $F_0$ receives no context representation. These context representations are used to condition decoder neural networks that are PixelCNNs. PixelCNNs are composed of $l$ resolution preserving residual blocks ($l=12$ in the experiments), each in turn formed of masked convolutional layers. Since we treat the pixel values as discrete random variables, the final layer of the PixelCNN decoders is a softmax layer over 256 intensity values for each color channel in each pixel.

Figure 1 depicts the two parts of the architecture of the VPN. The decoder that generates pixel $x$ of frame $F_t$ sees the context representation for all the frames up to $F_{t-1}$ coming from the preceding CNN encoders. The decoder also sees the pixel values above and left of the pixel $x$ in the current frame $F_t$ that is itself given as input to the decoder.

3.3 Architecture of Baseline Model

We implement the baseline model by using the same CNN encoders to build the context representations. In contrast with PixelCNNs, the decoders in the baseline model are CNNs that do not use masking on the weights; the frame to be predicted thus cannot be given as input. As shown in Figure 1, the resulting neural network captures the temporal dependencies, but ignores spatial and color channel dependencies within the generated frames. Just like for VPNs, we make the neural architecture of the baseline model resolution preserving in all the layers.

4. Network Building Blocks

Section Summary: The section outlines two key components for building the VPN network: the Multiplicative Unit (MU), which enhances convolutional layers with gate-like mechanisms inspired by LSTM cells to process image inputs through multiplicative operations and non-linear transformations, and the Residual Multiplicative Block (RMB), which stacks multiple MUs with shortcut connections to improve training by allowing gradients to flow more easily across layers. These blocks reduce channel counts for efficiency and resemble bottleneck designs in residual networks. Additionally, dilated convolutions are incorporated in the encoder part to expand the model's ability to detect larger object motions without increasing computational demands, using progressively wider spacing in filters across blocks.

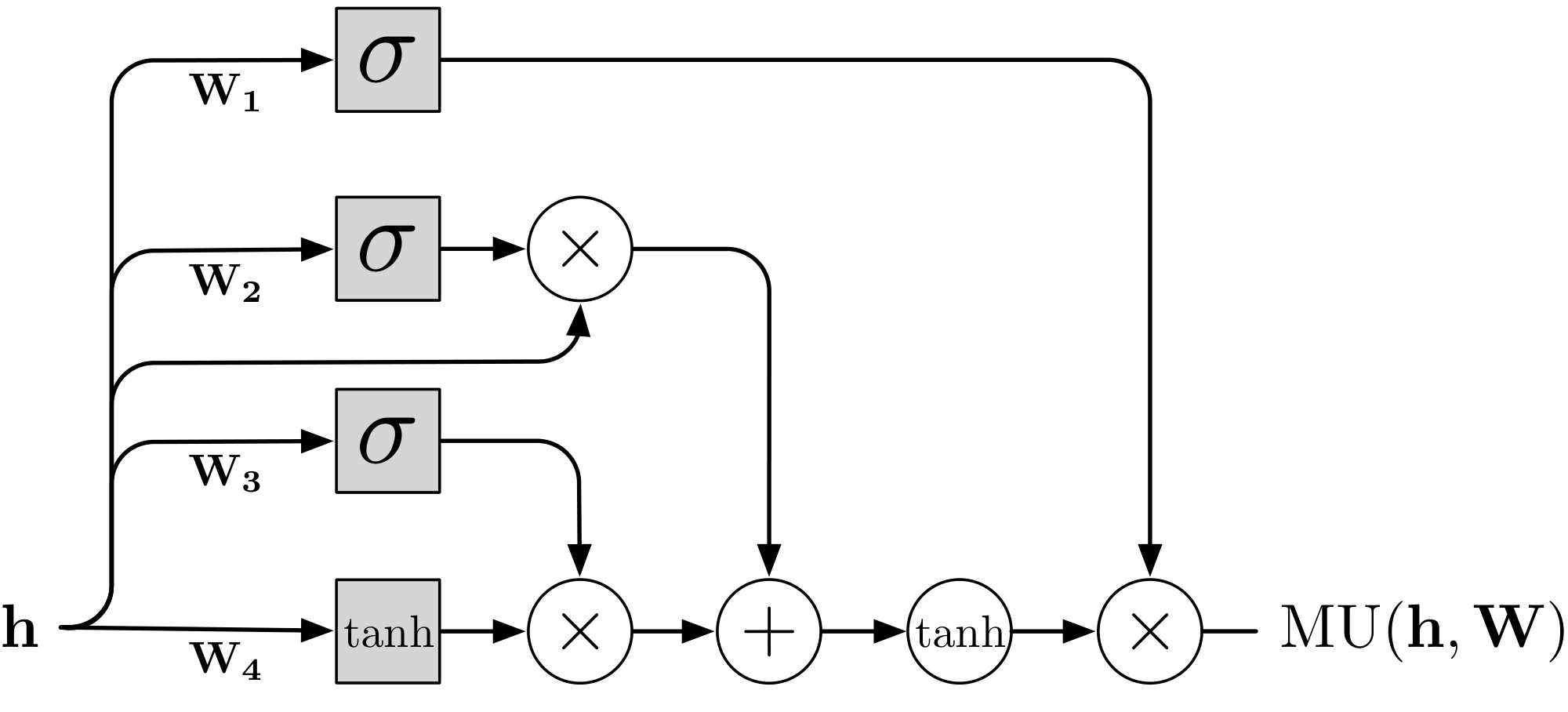

In this section we describe two basic operations that are used as the building blocks of the VPN. The first is the Multiplicative Unit (MU, Sect. 4.1) that contains multiplicative interactions inspired by LSTM ([9]) gates. The second building block is the Residual Multiplicative Block (RMB, Sect. 4.2) that is composed of multiple layers of MUs.

4.1 Multiplicative Units

A multiplicative unit (Figure 2) is constructed by incorporating LSTM-like gates into a convolutional layer. Given an input $\mathbf{h}$ of size $N \times N \times c$, where $c$ corresponds to the number of channels, we first pass it through four convolutional layers to create an update $\mathbf{u}$ and three gates $\mathbf{g_{1-3}}$. The input, update, and gates are then combined in the following manner:

$ \begin{align} \nonumber& \mathbf{g_1} = \sigma (\mathbf{W_1 \ast h}) \ \nonumber& \mathbf{g_2} = \sigma (\mathbf{W_2 \ast h}) \ & \mathbf{g_3} = \sigma (\mathbf{W_3 \ast h}) \ \nonumber& \mathbf{u} = \tanh (\mathbf{W_4 \ast h}) \ \nonumber\text{MU}(\mathbf{h}; \mathbf{W}) &= \mathbf{g_1} \odot \tanh(\mathbf{g_2} \odot \mathbf{h} + \mathbf{g_3} \odot \mathbf{u}) \end{align} $

where $\sigma$ is the sigmoid non-linearity and $\odot$ is component-wise multiplication. Biases are omitted for clarity. In our experiments the convolutional weights $\mathbf{W_{1-4}}$ use a kernel of size $3\times3$. Unlike LSTM networks, there is no distinction between memory and hidden states. Also, unlike Highway networks ([14]) and Grid LSTM ([15]), there is no setting of the gates such that $\text{MU}(\mathbf{h}; \mathbf{W})$ simply returns the input $\mathbf{h}$; the input is always processed with a non-linearity.

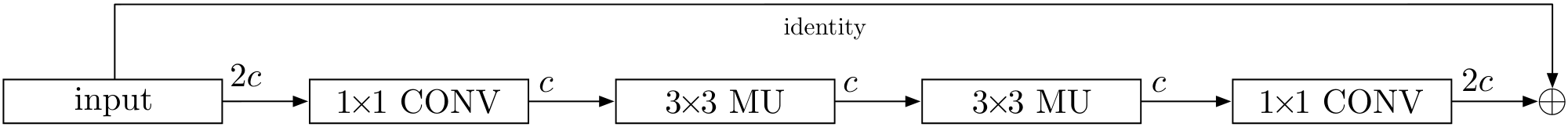

4.2 Residual Multiplicative Blocks

To allow for easy gradient propagation through many layers of the network, we stack two MU layers in a residual multiplicative block (Figure 3) where the input has a residual (additive skip) connection to the output ([16]). For computational efficiency, the number of channels is halved in MU layers inside the block. Namely, given an input layer $\mathbf{h}$ of size $N \times N \times 2c$ with $2c$ channels, we first apply a $1 \times 1$ convolutional layer that reduces the number of channels to $c$; no activation function is used for this layer, and it is followed by two successive MU layers each with a convolutional kernel of size $3\times3$. We then project the feature map back to $2c$ channels using another $1\times 1$ convolutional layer. Finally, the input $\mathbf{h}$ is added to the overall output forming a residual connection. Such a layer structure is similar to the bottleneck residual unit of ([16]). Formally, the Residual Multiplicative Block (RMB) is computed as follows:

$ \begin{align} \nonumber& \mathbf{h_1} = \mathbf{W_1 \ast h} \ \nonumber& \mathbf{h_2} = \text{MU} (\mathbf{h_1}; \mathbf{W_2}) \ & \mathbf{h_3} = \text{MU} (\mathbf{h_2}; \mathbf{W_3}) \ \nonumber& \mathbf{h_4} = \mathbf{W_4 \ast h_3} \ \nonumber&\text{RMB}(\mathbf{h}; \mathbf{W}) = \mathbf{h} + \mathbf{h_4} \end{align} $

We also experimented with a standard residual block of ([16]) which uses ReLU non-linearities – see Sect. 5 and 6 for details.

4.3 Dilated Convolutions

Having a large receptive field helps the model to capture the motion of larger objects. One way to increase the receptive field without much effect on the computational complexity is to use dilated convolutions ([17, 18]), which make the receptive field grow exponentially, as opposed to linearly, in the number of layers. In the variant of VPN that uses dilation, the dilation rates are the same within each RMB, but they double from one RMB to the next up to a chosen maximum size, and then repeat ([11]). In particular, in the CNN encoders we use two repetitions of the dilation scheme $\lbrack 1, 2, 4, 8 \rbrack$, for a total of 8 RMBs. We do not use dilation in the decoders.

5. Moving MNIST

Section Summary: The Moving MNIST dataset features short video sequences of two handwritten digits moving and bouncing around a small grid, with models trained to predict the next 10 frames based on the first 10. Researchers tested various video prediction models on this dataset, finding that their new Variational Predictive Network (VPN) achieved the best results so far, scoring just 87.6 nats per frame—very close to the theoretical minimum of 86.3—outperforming earlier models and a baseline by a wide margin. Qualitatively, the VPN generated clear, sharp continuations even as predictions diverged from reality, while the baseline produced increasingly blurry outputs over time.

\begin{tabular}{lccc}

\toprule

\textbf{Model} & \textbf{Test} \\ \midrule

([10]) & 367.2 \\

([1]) & 341.2 \\

([19]) & 285.2 \\

([4]) & 179.8 \\

{Baseline model} & 110.1 \\

\textbf{VPN} & $\mathbf{87.6}$ \\ \midrule

{Lower Bound} & 86.3 \\

\bottomrule

\end{tabular}

The Moving MNIST dataset consists of sequences of 20 frames of size $64 \times 64$, depicting two potentially overlapping MNIST digits moving with constant velocity and bouncing off walls. Training sequences are generated on-the-fly using digits from the MNIST training set without a limit on the number of generated training sequences (our models observe 19.2M training sequences before convergence). The test set is fixed and consists of 10000 sequences that contain digits from the MNIST test set. 10 of the 20 frames are used as context and the remaining 10 frames are generated.

In order to make our results comparable, for this dataset only we use the same sigmoid cross-entropy loss as used in prior work ([1]). The loss is defined as:

$ H(z, y) = -\sum_{i}z_i \log y_i + (1-z_i)\log(1-y_i) $

where $z_i$ are the grayscale targets in the Moving MNIST frames that are interpreted as probabilities and $y_i$ are the predictions. The lower bound on $H(z, y)$ may be non-zero. In fact, if we let $z_i=y_i$, for the 10 frames that are predicted in each sequence of the Moving MNIST test data, $H(z, y) = 86.3$ nats/frame.

5.1 Implementation Details

The VPNs with and without dilation, as well as the baseline model, have 8 RMBs in the encoders and 12 RMBs in the decoders; for the network variants that use ReLUs we double the number of residual blocks to 16 and 24, respectively, in order to equate the size of the receptive fields in the two model variants. The number of channels in the blocks is $c=128$ while the convolutional LSTM has 256 channels. The topmost layer before the output has 768 channels. We train the models for 300000 steps with 20-frame sequences predicting the last 10 frames of each sequence. Each step corresponds to a batch of 64 sequences. We use RMSProp for the optimization with an initial learning rate of $3 \cdot 10^{-4}$ and multiply the learning rate by $0.3$ when learning flatlines.

\begin{tabular}{lccc}

\toprule

\textbf{Model} & \textbf{Test} \\ \midrule

{VPN (RMB, No Dilation)} & 89.2 \\

{VPN (Relu, No Dilation)} & 89.1 \\

{VPN (Relu, Dilation)} & 87.7 \\

{{VPN (RMB, Dilation)}} & 87.6 \\ \midrule

{Lower Bound} & 86.3 \\

\bottomrule

\end{tabular}

5.2 Results

Table 1 reports the results of various recent video models on the Moving MNIST test set. Our baseline model achieves 110.1 nats/frame, which is significantly better than the previous state of the art ([4]). We attribute these gains to architectural features and, in particular, to the resolution preserving aspect of the network. Further, the VPN achieves 87.6 nats/frame, which approaches the lower bound of 86.3 nats/frame.

Table 2 reports results of architectural variants of the VPNs. The model with dilated convolutions improves over its non-dilated counterpart as it can more easily act on the relatively large digits moving in the $64 \times 64$ frames. In the case of Moving MNIST, MUs do not yield a significant improvement in performance over just using ReLUs, possibly due to the relatively low complexity of the task. A sizeable improvement is obtained from MUs on the Robotic Pushing dataset (Table 3).

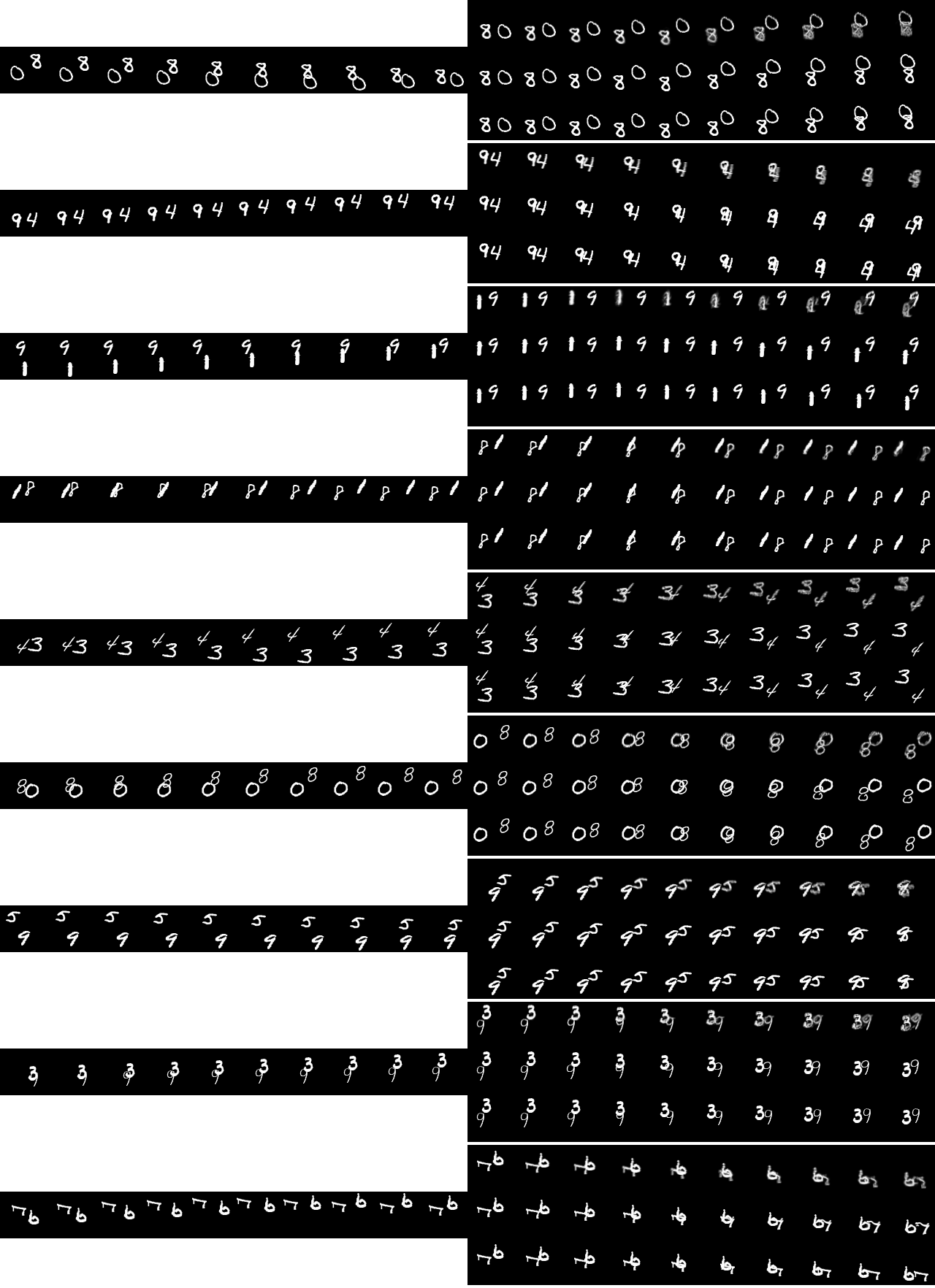

A qualitative evaluation of video continuations produced by the models matches the quantitative results. Figure 4 shows random continuations produced by the VPN and the baseline model on the Moving MNIST test set. The frames generated by the VPN are consistently sharp even when they deviate from the ground truth.

By contrast, the continuations produced by the baseline model get progressively more blurred with time – as the uncertainty of the model grows with the number of generated frames, the lack of inter-frame spatial dependencies leads the model to take the expectation over possible trajectories.

6. Robotic Pushing

Section Summary: The Robotic Pushing dataset features video sequences of a robotic arm pushing objects in a basket, with 50,000 training examples and test sets for both familiar and new objects, where each frame includes the robot's state and intended actions, though outcomes can vary due to blockages. Models like the Video Prediction Network (VPN) and a baseline are trained to predict future frames from initial context, using actions to guide the generation. The VPN significantly outperforms the baseline by capturing spatial and color details, producing realistic videos that generalize well to unseen objects and actions, while avoiding the noise and artifacts seen in simpler models.

The Robotic Pushing dataset consists of sequences of 20 frames of size $64 \times 64$ that represent camera recordings of a robotic arm pushing objects in a basket. The data consists of a training set of 50000 sequences, a validation set, and two test sets of 1500 sequences each, one involving a subset of the objects seen during training and the other one involving novel objects not seen during training. Each frame in the video sequence is paired with the state of the robot at that frame and with the desired action to reach the next frame. The transitions are non-deterministic as the robotic arm may not reach the desired state in a frame due to occlusion by the objects encountered on its trajectory. 2 frames, 2 states and 2 actions are used as context; the desired 18 actions in the future are also given. The 18 frames in the future are then generated conditioned on the 18 actions as well as on the 2 steps of context.

6.1 Implementation Details

For this dataset, both the VPN and the baseline model use the softmax cross-entropy loss, as defined in Sect. 2. As for Moving MNIST, the models have 8 RMBs in the encoders and 12 RMBs in the decoders; the ReLU variants have 16 residual blocks in the encoders and 24 in the decoders. The number of channels in the RMBs is $c=128$, the convolutional LSTM has 256 channels and the topmost layer before the output has 1536 channels. We use RMSProp with an initial learning rate of $10^{-4}$. We train for 275000 steps with a batch size of 64 sequences per step. Each training sequence is obtained by selecting a random subsequence of 12 frames together with the corresponding states and actions. We use the first 2 frames in the subsequence as context and predict the other 10 frames. States and actions come as vectors of 5 real values. For the 2 context frames, we condition each layer in the encoders and the decoders with the respective state and action vectors; the conditioning is performed by the result of a $1 \times 1$ convolution applied to the action and state vectors that are broadcast to all of the $64 \times 64$ positions. For the other 10 frames, we condition the encoders and decoders with the action vectors only. We discard the state vectors for the predicted frames during training and do not use them at generation. For generation we unroll the models for the entire sequence of 20 frames and generate 18 frames.

:Table 3: Negative log-likelihood in nats/dimension on the Robotic Pushing dataset.

| Model | Validation | Test (Seen) | Test (Novel) |

|---|---|---|---|

| Baseline model | 2.06 | 2.08 | 2.07 |

| VPN (Relu, Dilation) | 0.73 | 0.72 | 0.75 |

| VPN (Relu, No Dilation) | 0.72 | 0.73 | 0.75 |

| VPN (RMB, Dilation) | 0.63 | 0.65 | 0.64 |

| VPN (RMB, No Dilation) | $\mathbf{0.62}$ | $\mathbf{0.64}$ | $\mathbf{0.64}$ |

6.2 Results

Table 3 reports the results of the baseline model and variants of the VPN on the Robotic Pushing validation and test sets. The best variant of the VPN has a

gt;65%$ reduction in negative log-likelihood over the baseline model. This highlights the importance of space and color dependencies in non-deterministic environments. The results on the validation and test datasets with seen objects and on the test dataset with novel objects are similar. This shows that the models have learned to generalize well not just to new action sequences, but also to new objects. Furthermore, we see that using multiplicative interactions in the VPN gives a significant improvement over using ReLUs.Figures 5–9 visualize the samples generated by our models. Figure 5 contains random samples of the VPN on the validation set with seen objects (together with the corresponding ground truth). The model is able to distinguish between the robotic arm and the background, correctly handling occlusions and only pushing the objects when they come in contact with the robotic arm. The VPN generates the arm when it enters into the frame from one of the sides. The position of the arm in the samples is close to that in the ground truth, suggesting the VPN has learned to follow the actions. The generated videos remain detailed throughout the 18 frames and few artifacts are present. The samples remain good showing the ability of the VPN to generalize to new sequences of actions. Figure 6 evaluates an additional level of generalization, by showing samples from the test set with novel objects not seen during training. The VPN seems to identify the novel objects correctly and generates plausible movements for them. The samples do not appear visibly worse than in the datasets with seen objects. Figure 7 demonstrates the probabilistic nature of the VPN, by showing multiple different video continuations that start from the same context frames and are conditioned on the same sequence of 18 future actions. The continuations are plausible and varied, further suggesting the VPN's ability to generalize. Figure 8 shows samples from the baseline model. In contrast with the VPN samples, we see a form of high frequency noise appearing in the non-deterministic movements of the robotic arm. This can be attributed to the lack of space and color dependencies, as discussed in Sec. 2.2. Figure 9 shows a comparison of continuations of the baseline model and the VPN from the same context sequence. Besides artifacts, the baseline model also seems less responsive to the actions.

7. Conclusion

Section Summary: The Video Pixel Network is a new AI model for generating videos that breaks down the overall probability of video sequences into simpler parts. On a test with moving digits, it performs nearly as well as the theoretical best without relying on special motion rules, far surpassing earlier models. For videos of robots pushing objects, it creates clearer and more detailed future frames than basic alternatives, avoiding common glitches through a flexible structure that works reliably across different scenarios.

We have introduced the Video Pixel Network, a deep generative model of video data that models the factorization of the joint likelihood of video. We have shown that, despite its lack of specific motion priors or surrogate losses, the VPN approaches the lower bound on the loss on the Moving MNIST benchmark that corresponds to a large improvement over the previous state of the art. On the Robotic Pushing dataset, the VPN achieves significantly better likelihoods than the baseline model that lacks the fourfold dependency structure; the VPN generates videos that are free of artifacts and are highly detailed for many frames into the future. The fourfold dependency structure provides a robust and generic method for generating videos without systematic artifacts.

Acknowledgments

The authors would like to thank Chelsea Finn for her advice on the Robotic Pushing dataset and Lasse Espeholt for helpful remarks.

References

Section Summary: This references section lists 19 academic papers published mostly between 2013 and 2016, focusing on advancements in artificial intelligence and machine learning techniques for handling videos and sequences. Key themes include unsupervised learning for video prediction, using networks like LSTMs and convolutional variants to model motion and generate content in games or real-world scenarios, as well as generative models for images, audio, and language. It also cites foundational works on neural architectures such as highway networks, residual networks, and dilated convolutions to improve deep learning performance.

[1] Nitish Srivastava, Elman Mansimov, and Ruslan Salakhutdinov. Unsupervised learning of video representations using lstms. In ICML, volume 37, pages 843–852, 2015a.

[2] Junhyuk Oh, Xiaoxiao Guo, Honglak Lee, Richard L. Lewis, and Satinder P. Singh. Action-conditional video prediction using deep networks in atari games. In NIPS, pages 2863–2871, 2015.

[3] Marc'Aurelio Ranzato, Arthur Szlam, Joan Bruna, Michaël Mathieu, Ronan Collobert, and Sumit Chopra. Video (language) modeling: a baseline for generative models of natural videos. CoRR, abs/1412.6604, 2014.

[4] Viorica Patraucean, Ankur Handa, and Roberto Cipolla. Spatio-temporal video autoencoder with differentiable memory. CoRR, abs/1511.06309, 2015.

[5] Chelsea Finn, Ian J. Goodfellow, and Sergey Levine. Unsupervised learning for physical interaction through video prediction. CoRR, abs/1605.07157, 2016.

[6] Michaël Mathieu, Camille Couprie, and Yann LeCun. Deep multi-scale video prediction beyond mean square error. CoRR, abs/1511.05440, 2015.

[7] Carl Vondrick, Hamed Pirsiavash, and Antonio Torralba. Generating videos with scene dynamics. CoRR, abs/1609.02612, 2016.

[8] Aäron van den Oord, Nal Kalchbrenner, and Koray Kavukcuoglu. Pixel recurrent neural networks. In ICML, volume 48, pages 1747–1756, 2016b.

[9] Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural computation, 1997.

[10] Xingjian Shi, Zhourong Chen, Hao Wang, Dit-Yan Yeung, Wai-Kin Wong, and Wang-chun Woo. Convolutional LSTM network: A machine learning approach for precipitation nowcasting. In NIPS, pages 802–810, 2015.

[11] Aaron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew Senior, and Koray Kavukcuoglu. Wavenet: A generative model for raw audio. CoRR, abs/1609.03499, 2016a.

[12] Nal Kalchbrenner and Phil Blunsom. Recurrent continuous translation models. In EMNLP, pages 1700–1709, 2013.

[13] Aäron van den Oord, Nal Kalchbrenner, Oriol Vinyals, Lasse Espeholt, Alex Graves, and Koray Kavukcuoglu. Conditional image generation with pixelcnn decoders. In NIPS, 2016c.

[14] Rupesh Kumar Srivastava, Klaus Greff, and Jürgen Schmidhuber. Highway networks. CoRR, abs/1505.00387, 2015b.

[15] Nal Kalchbrenner, Ivo Danihelka, and Alex Graves. Grid long short-term memory. International Conference on Learning Representations, 2016.

[16] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Identity mappings in deep residual networks. CoRR, abs/1603.05027, 2016.

[17] Liang-Chieh Chen, George Papandreou, Iasonas Kokkinos, Kevin Murphy, and Alan L. Yuille. Semantic image segmentation with deep convolutional nets and fully connected crfs. CoRR, abs/1412.7062, 2014.

[18] Fisher Yu and Vladlen Koltun. Multi-scale context aggregation by dilated convolutions. CoRR, abs/1511.07122, 2015.

[19] Bert De Brabandere, Xu Jia, Tinne Tuytelaars, and Luc Van Gool. Dynamic filter networks. CoRR, abs/1605.09673, 2016.