Deep Unsupervised Learning using Nonequilibrium Thermodynamics

Jascha Sohl-Dickstein ([email protected])

Stanford University

Eric A. Weiss ([email protected])

University of California, Berkeley

Niru Maheswaranathan ([email protected])

Stanford University

Surya Ganguli ([email protected])

Stanford University

Abstract

A central problem in machine learning involves modeling complex data-sets using highly flexible families of probability distributions in which learning, sampling, inference, and evaluation are still analytically or computationally tractable. Here, we develop an approach that simultaneously achieves both flexibility and tractability. The essential idea, inspired by non-equilibrium statistical physics, is to systematically and slowly destroy structure in a data distribution through an iterative forward diffusion process. We then learn a reverse diffusion process that restores structure in data, yielding a highly flexible and tractable generative model of the data. This approach allows us to rapidly learn, sample from, and evaluate probabilities in deep generative models with thousands of layers or time steps, as well as to compute conditional and posterior probabilities under the learned model. We additionally release an open source reference implementation of the algorithm.

Executive Summary: In machine learning, a key challenge is building probabilistic models that can accurately capture the complex patterns in real-world data, such as images or sequences, while remaining easy to train, sample from, and evaluate. Traditional models often fail here: simple ones, like basic Gaussians, are computable but too rigid to handle intricate structures, while flexible ones require computationally intensive approximations that limit their practicality. This tradeoff hinders progress in areas like image generation, denoising, and data synthesis, which are increasingly vital amid growing datasets and applications in AI-driven fields like computer vision and drug discovery.

This paper introduces diffusion probabilistic models to address this issue. The authors aim to create a new class of generative models that combine high flexibility with full tractability, allowing exact likelihood computation, sampling, and even posterior estimation without heavy numerical approximations.

The approach draws inspiration from nonequilibrium thermodynamics and uses a step-by-step diffusion process to model data. It starts by gradually adding noise to real data in a forward chain of many small steps—up to thousands—turning complex data distributions into simple noise, like a Gaussian. A neural network then learns the reverse process: starting from noise, it iteratively removes noise to reconstruct data-like samples. Training maximizes a lower bound on the model's log likelihood using standard optimization techniques, with data from standard datasets spanning toy problems to natural images. Experiments covered the period of model development in 2015, using sample sizes from thousands of points for synthetic data to 50,000-60,000 images for benchmarks like MNIST and CIFAR-10. Key assumptions include smooth data distributions and small noise steps for accurate reversals, implemented via open-source code for reproducibility.

The core results show strong performance across diverse tasks. First, the models achieve high log likelihoods, measuring how well they fit data: on dead leaf images (layered circles mimicking natural scenes), they reached 1.49 bits per pixel, about 20% better than the prior state-of-the-art mixtures of Gaussian scale mixtures at 1.24 bits per pixel. Second, on handwritten digits (MNIST), they scored 317 bits total likelihood, comparable to top methods like adversarial networks at 325 bits and surpassing deep belief networks by roughly 60 bits. Third, for CIFAR-10 color images, the lower bound hit 5.4 bits per pixel, a 2.1-fold improvement over a naive Gaussian baseline. Fourth, toy tests like a 2D swiss roll and binary heartbeat sequences were nearly perfectly modeled, with likelihoods within 0.1 bits of the true distributions. Fifth, the models enable practical applications: they denoise noisy images effectively and inpaint missing regions, such as filling a 100x100 pixel hole in bark textures while preserving long-range patterns like cracks.

These findings mean diffusion models can generate realistic data and perform inference tasks more reliably than many existing techniques, reducing errors in downstream uses like augmentation for training other AI systems or simulating rare events in science. Unlike variational methods, which often struggle with deep architectures or posterior computation, this approach scales to thousands of layers without asymmetry in training, potentially cutting computational risks and timelines in model development. It outperforms expectations from prior physics-inspired methods by providing exact operations, challenging the dominance of approximate techniques and opening doors to hybrid models.

Next, organizations should adopt this framework for generative tasks, starting with pilot integrations into existing pipelines for image or sequence data to boost performance in synthesis or anomaly detection. For broader use, extend the open-source implementation to larger datasets like ImageNet, trading off added compute for even higher fidelity. If decisions hinge on untested domains, conduct targeted validations first, such as on audio or text.

Confidence in these results is high for the tested domains, backed by analytical bounds and comparisons to baselines, though caution is needed for highly discontinuous data where diffusion assumptions may falter. Limitations include the need for many steps, increasing training time (hours to days on GPUs), and reliance on neural networks that could overfit small samples; future work should explore adaptive step sizes to mitigate this.

1. Introduction

Section Summary: Probabilistic models in statistics and machine learning often face a trade-off between being easy to compute and analyze, like simple Gaussian distributions, and being flexible enough to capture complex patterns in real-world data, which usually requires computationally intensive approximations. This introduction proposes diffusion probabilistic models, which use a step-by-step Markov chain process inspired by physics to gradually transform a basic noise distribution, such as a Gaussian, into the actual data distribution, allowing for highly flexible models that support exact sampling, easy integration with other probabilities, and efficient likelihood calculations. The approach outperforms many existing methods by enabling training on diverse datasets like images and sequences, while drawing from non-equilibrium physics rather than traditional variational techniques and addressing challenges in inference and generative modeling.

Historically, probabilistic models suffer from a tradeoff between two conflicting objectives: tractability and flexibility. Models that are tractable can be analytically evaluated and easily fit to data (e.g. a Gaussian or Laplace). However, these models are unable to aptly describe structure in rich datasets. On the other hand, models that are flexible can be molded to fit structure in arbitrary data. For example, we can define models in terms of any (non-negative) function $\phi(\mathbf x)$ yielding the flexible distribution $p\left(\mathbf x\right) = \frac{\phi\left(\mathbf x \right)}{Z}$, where $Z$ is a normalization constant. However, computing this normalization constant is generally intractable. Evaluating, training, or drawing samples from such flexible models typically requires a very expensive Monte Carlo process.

A variety of analytic approximations exist which ameliorate, but do not remove, this tradeoff–for instance mean field theory and its expansions [1, 2], variational Bayes [3], contrastive divergence [4, 5], minimum probability flow [6, 7], minimum KL contraction [8], proper scoring rules [9, 10], score matching [11], pseudolikelihood [12], loopy belief propagation [13], and many, many more. Non-parametric methods [14] can also be very effective[^1].

[^1]: Non-parametric methods can be seen as transitioning smoothly between tractable and flexible models. For instance, a non-parametric Gaussian mixture model will represent a small amount of data using a single Gaussian, but may represent infinite data as a mixture of an infinite number of Gaussians.

1.1 Diffusion probabilistic models

We present a novel way to define probabilistic models that allows:

- extreme flexibility in model structure,

- exact sampling,

- easy multiplication with other distributions, e.g. in order to compute a posterior, and

- the model log likelihood, and the probability of individual states, to be cheaply evaluated.

Our method uses a Markov chain to gradually convert one distribution into another, an idea used in non-equilibrium statistical physics [15] and sequential Monte Carlo [16]. We build a generative Markov chain which converts a simple known distribution (e.g. a Gaussian) into a target (data) distribution using a diffusion process. Rather than use this Markov chain to approximately evaluate a model which has been otherwise defined, we explicitly define the probabilistic model as the endpoint of the Markov chain. Since each step in the diffusion chain has an analytically evaluable probability, the full chain can also be analytically evaluated.

Learning in this framework involves estimating small perturbations to a diffusion process. Estimating small perturbations is more tractable than explicitly describing the full distribution with a single, non-analytically-normalizable, potential function. Furthermore, since a diffusion process exists for any smooth target distribution, this method can capture data distributions of arbitrary form.

We demonstrate the utility of these diffusion probabilistic models by training high log likelihood models for a two-dimensional swiss roll, binary sequence, handwritten digit (MNIST), and several natural image (CIFAR-10, bark, and dead leaves) datasets.

1.2 Relationship to other work

The wake-sleep algorithm [17, 18] introduced the idea of training inference and generative probabilistic models against each other. This approach remained largely unexplored for nearly two decades, though with some exceptions [19, 20]. There has been a recent explosion of work developing this idea. In [21, 22, 23, 24] variational learning and inference algorithms were developed which allow a flexible generative model and posterior distribution over latent variables to be directly trained against each other.

The variational bound in these papers is similar to the one used in our training objective and in the earlier work of [19]. However, our motivation and model form are both quite different, and the present work retains the following differences and advantages relative to these techniques:

- We develop our framework using ideas from physics, quasi-static processes, and annealed importance sampling rather than from variational Bayesian methods.

- We show how to easily multiply the learned distribution with another probability distribution (eg with a conditional distribution in order to compute a posterior)

- We address the difficulty that training the inference model can prove particularly challenging in variational inference methods, due to the asymmetry in the objective between the inference and generative models. We restrict the forward (inference) process to a simple functional form, in such a way that the reverse (generative) process will have the same functional form.

- We train models with thousands of layers (or time steps), rather than only a handful of layers.

- We provide upper and lower bounds on the entropy production in each layer (or time step)

There are a number of related techniques for training probabilistic models (summarized below) that develop highly flexible forms for generative models, train stochastic trajectories, or learn the reversal of a Bayesian network. Reweighted wake-sleep [25] develops extensions and improved learning rules for the original wake-sleep algorithm. Generative stochastic networks [26, 27] train a Markov kernel to match its equilibrium distribution to the data distribution. Neural autoregressive distribution estimators [28] (and their recurrent [29] and deep [30] extensions) decompose a joint distribution into a sequence of tractable conditional distributions over each dimension. Adversarial networks [31] train a generative model against a classifier which attempts to distinguish generated samples from true data. A similar objective in [32] learns a two-way mapping to a representation with marginally independent units. In [33, 34] bijective deterministic maps are learned to a latent representation with a simple factorial density function. In [35] stochastic inverses are learned for Bayesian networks. Mixtures of conditional Gaussian scale mixtures (MCGSMs) [36] describe a dataset using Gaussian scale mixtures, with parameters which depend on a sequence of causal neighborhoods. There is additionally significant work learning flexible generative mappings from simple latent distributions to data distributions – early examples including [37] where neural networks are introduced as generative models, and [38] where a stochastic manifold mapping is learned from a latent space to the data space. We will compare experimentally against adversarial networks and MCGSMs.

Related ideas from physics include the Jarzynski equality [15], known in machine learning as Annealed Importance Sampling (AIS) [16], which uses a Markov chain which slowly converts one distribution into another to compute a ratio of normalizing constants. In [39] it is shown that AIS can also be performed using the reverse rather than forward trajectory. Langevin dynamics [40], which are the stochastic realization of the Fokker-Planck equation, show how to define a Gaussian diffusion process which has any target distribution as its equilibrium. In [41] the Fokker-Planck equation is used to perform stochastic optimization. Finally, the Kolmogorov forward and backward equations [42] show that for many forward diffusion processes, the reverse diffusion processes can be described using the same functional form.

![**Figure 3:** The proposed framework trained on the CIFAR-10 [43] dataset. *(a)* Example holdout data (similar to training data). *(b)* Holdout data corrupted with Gaussian noise of variance 1 (SNR = 1). *(c)* Denoised images, generated by sampling from the posterior distribution over denoised images conditioned on the images in (b). *(d)* Samples generated by the diffusion model.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/h8rj7kay/complex_fig_cb8afcc7eb53.png)

2. Algorithm

Section Summary: The algorithm outlines a forward diffusion process that gradually transforms a complex data distribution, such as images or other patterns, into a simple and predictable one, like pure noise, through repeated steps using Gaussian or binomial methods. It then trains a reverse process to reconstruct the original data from this noise, defining a generative model that can produce new samples. The model evaluates probabilities by comparing forward and reverse paths and is trained by maximizing a computable lower bound on data likelihood, allowing applications like image denoising or combining with other distributions.

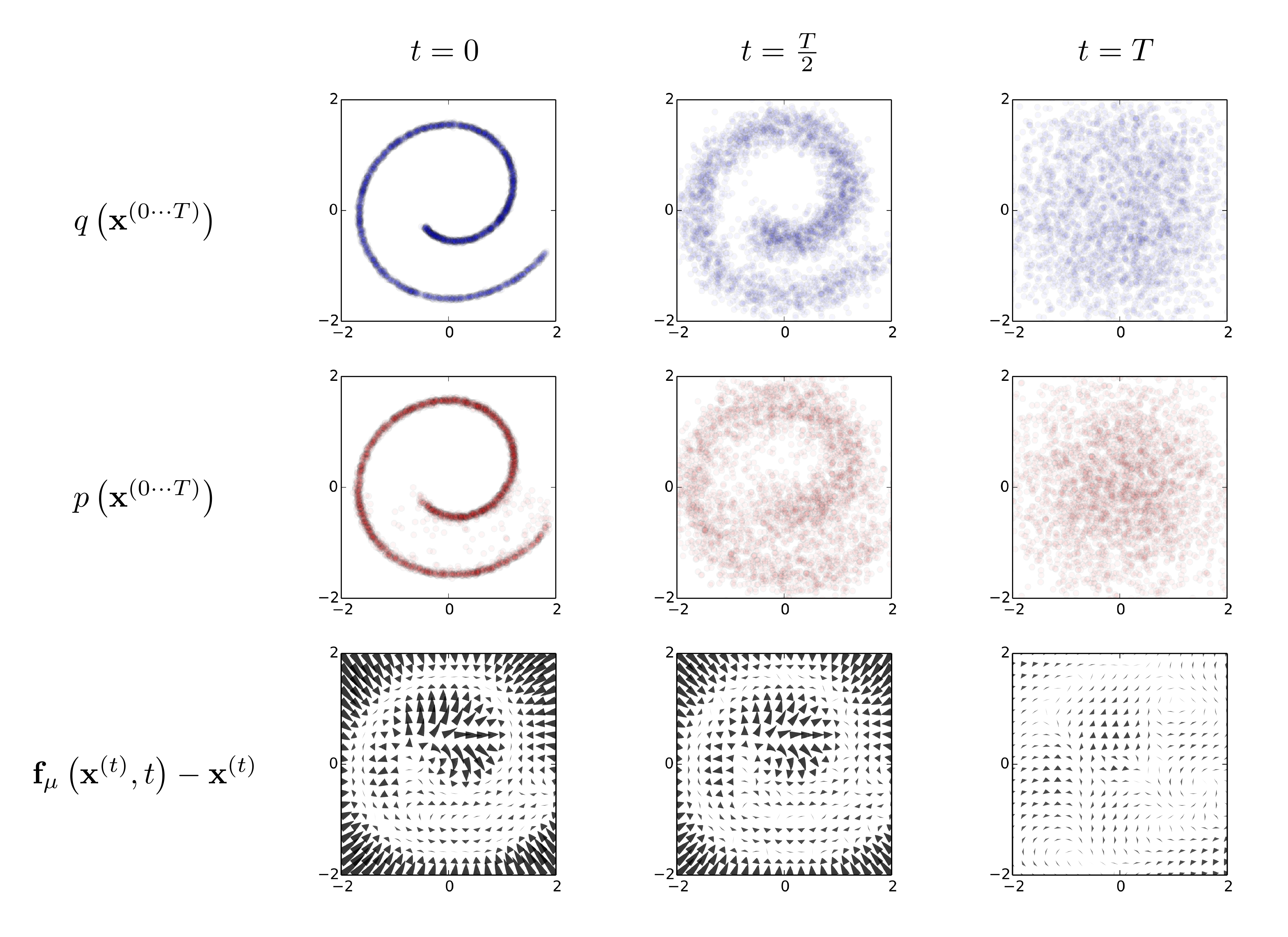

Our goal is to define a forward (or inference) diffusion process which converts any complex data distribution into a simple, tractable, distribution, and then learn a finite-time reversal of this diffusion process which defines our generative model distribution (See Figure 1). We first describe the forward, inference diffusion process. We then show how the reverse, generative diffusion process can be trained and used to evaluate probabilities. We also derive entropy bounds for the reverse process, and show how the learned distributions can be multiplied by any second distribution (e.g. as would be done to compute a posterior when inpainting or denoising an image).

2.1 Forward Trajectory

We label the data distribution $q \left(\mathbf x^{(0)} \right)$. The data distribution is gradually converted into a well behaved (analytically tractable) distribution $\pi\left(\mathbf y \right)$ by repeated application of a Markov diffusion kernel $T_\pi\left(\mathbf y | \mathbf y'; \beta \right)$ for $\pi\left(\mathbf y \right)$, where $\beta$ is the diffusion rate,

$

<<

The forward trajectory, corresponding to starting at the data distribution and performing $T$ steps of diffusion, is thus

$

<<

For the experiments shown below, $q\left(\mathbf x^{(t)} | \mathbf x^{(t-1)} \right)$ corresponds to either Gaussian diffusion into a Gaussian distribution with identity-covariance, or binomial diffusion into an independent binomial distribution. Table [@sec:tab_diff] gives the diffusion kernels for both Gaussian and binomial distributions.

2.2 Reverse Trajectory

The generative distribution will be trained to describe the same trajectory, but in reverse,

$

<<

For both Gaussian and binomial diffusion, for continuous diffusion (limit of small step size $\beta$) the reversal of the diffusion process has the identical functional form as the forward process [42]. Since $q\left(\mathbf x^{(t)} | \mathbf x^{(t-1)} \right)$ is a Gaussian (binomial) distribution, and if $\beta_t$ is small, then $q\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right)$ will also be a Gaussian (binomial) distribution. The longer the trajectory the smaller the diffusion rate $\beta$ can be made.

During learning only the mean and covariance for a Gaussian diffusion kernel, or the bit flip probability for a binomial kernel, need be estimated. As shown in Table [@sec:tab_diff], $\mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$ and $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$ are functions defining the mean and covariance of the reverse Markov transitions for a Gaussian, and $\mathbf f_b\left(\mathbf x^{(t)}, t \right)$ is a function providing the bit flip probability for a binomial distribution. The computational cost of running this algorithm is the cost of these functions, times the number of time-steps. For all results in this paper, multi-layer perceptrons are used to define these functions. A wide range of regression or function fitting techniques would be applicable however, including nonparameteric methods.

2.3 Model Probability

The probability the generative model assigns to the data is

$

<<

Naively this integral is intractable – but taking a cue from annealed importance sampling and the Jarzynski equality, we instead evaluate the relative probability of the forward and reverse trajectories, averaged over forward trajectories,

$

<<

This can be evaluated rapidly by averaging over samples from the forward trajectory $q\left(\mathbf x^{(1\cdots T)} | \mathbf x^{(0)} \right)$. For infinitesimal $\beta$ the forward and reverse distribution over trajectories can be made identical (see Section 2.2). If they are identical then only a single sample from $q\left(\mathbf x^{(1\cdots T)} | \mathbf x^{(0)} \right)$ is required to exactly evaluate the above integral, as can be seen by substitution. This corresponds to the case of a quasi-static process in statistical physics [44, 45].

2.4 Training

Training amounts to maximizing the model log likelihood,

$

<<

which has a lower bound provided by Jensen's inequality,

$

<<

As described in Appendix B, for our diffusion trajectories this reduces to,

$

<<

where the entropies and KL divergences can be analytically computed. The derivation of this bound parallels the derivation of the log likelihood bound in variational Bayesian methods.

As in Section 2.3 if the forward and reverse trajectories are identical, corresponding to a quasi-static process, then the inequality in [@eq:eq_ineq_LK] becomes an equality.

Training consists of finding the reverse Markov transitions which maximize this lower bound on the log likelihood,

$

<<

The specific targets of estimation for Gaussian and binomial diffusion are given in Table [@sec:tab_diff].

Thus, the task of estimating a probability distribution has been reduced to the task of performing regression on the functions which set the mean and covariance of a sequence of Gaussians (or set the state flip probability for a sequence of Bernoulli trials).

2.4.1 Setting the Diffusion Rate $\beta_t$

The choice of $\beta_t$ in the forward trajectory is important for the performance of the trained model. In AIS, the right schedule of intermediate distributions can greatly improve the accuracy of the log partition function estimate [46]. In thermodynamics the schedule taken when moving between equilibrium distributions determines how much free energy is lost [44, 45].

In the case of Gaussian diffusion, we learn[^2] the forward diffusion schedule $\beta_{2\cdots T}$ by gradient ascent on $K$. The variance $\beta_1$ of the first step is fixed to a small constant to prevent overfitting. The dependence of samples from $q\left(\mathbf x^{(1\cdots T)} | \mathbf x^{(0)} \right)$ on $\beta_{1\cdots T}$ is made explicit by using `frozen noise' – as in [21] the noise is treated as an additional auxiliary variable, and held constant while computing partial derivatives of $K$ with respect to the parameters.

[^2]: Recent experiments suggest that it is just as effective to instead use the same fixed $\beta_t$ schedule as for binomial diffusion.

For binomial diffusion, the discrete state space makes gradient ascent with frozen noise impossible. We instead choose the forward diffusion schedule $\beta_{1\cdots T}$ to erase a constant fraction $\frac{1}{T}$ of the original signal per diffusion step, yielding a diffusion rate of $\beta_t = \left(T-t +1\right)^{-1}$.

![**Figure 4:** The proposed framework trained on dead leaf images [47, 48]. *(a)* Example training image. *(b)* A sample from the previous state of the art natural image model [36] trained on identical data, reproduced here with permission. *(c)* A sample generated by the diffusion model. Note that it demonstrates fairly consistent occlusion relationships, displays a multiscale distribution over object sizes, and produces circle-like objects, especially at smaller scales. As shown in Table [@sec:tb_ll_compare], the diffusion model has the highest log likelihood on the test set.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/h8rj7kay/complex_fig_0008aad12bea.png)

2.5 Multiplying Distributions, and Computing Posteriors

Tasks such as computing a posterior in order to do signal denoising or inference of missing values requires multiplication of the model distribution $p\left(\mathbf x^{(0)} \right)$ with a second distribution, or bounded positive function, $r\left(\mathbf x^{(0)} \right)$, producing a new distribution $\tilde{p}\left(\mathbf x^{(0)} \right) \propto p\left(\mathbf x^{(0)} \right) r\left(\mathbf x^{(0)} \right)$.

Multiplying distributions is costly and difficult for many techniques, including variational autoencoders, GSNs, NADEs, and most graphical models. However, under a diffusion model it is straightforward, since the second distribution can be treated either as a small perturbation to each step in the diffusion process, or often exactly multiplied into each diffusion step. Figure 3 and Figure 5 demonstrate the use of a diffusion model to perform denoising and inpainting of natural images. The following sections describe how to multiply distributions in the context of diffusion probabilistic models.

2.5.1 Modified Marginal Distributions

First, in order to compute $\tilde{p}\left(\mathbf x^{(0)} \right)$, we multiply each of the intermediate distributions by a corresponding function $r \left(\mathbf x^{(t)} \right)$. We use a tilde above a distribution or Markov transition to denote that it belongs to a trajectory that has been modified in this way. $\tilde{p}\left(\mathbf x^{(0\cdots T)} \right)$ is the modified reverse trajectory, which starts at the distribution $\tilde{p}\left(\mathbf x^{(T)} \right) = \frac{1}{\tilde{Z}_T} p\left(\mathbf x^{(T)} \right) r\left(\mathbf x^{(T)} \right)$ and proceeds through the sequence of intermediate distributions

$

<<

where $\tilde{Z}_t$ is the normalizing constant for the $t$ th intermediate distribution.

2.5.2 Modified Diffusion Steps

The Markov kernel $p\left(\mathbf x^{(t)} \mid \mathbf x^{(t+1)}\right)$ for the reverse diffusion process obeys the equilibrium condition

$

<<

We wish the perturbed Markov kernel $\tilde{p}\left(\mathbf x^{(t)} \mid \mathbf x^{(t+1)}\right)$ to instead obey the equilibrium condition for the perturbed distribution,

$

<<

[@eq:eq_tild_fixed_point] will be satisfied if

$

<<

[@eq:eq_tild_unnorm] may not correspond to a normalized probability distribution, so we choose $\tilde{p}\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$ to be the corresponding normalized distribution

$

<<

where $\tilde{Z}_t\left(\mathbf x^{(t+1)} \right)$ is the normalization constant.

For a Gaussian, each diffusion step is typically very sharply peaked relative to $r\left(\mathbf x^{(t)} \right)$, due to its small variance. This means that $\frac{ r\left(\mathbf x^{(t)} \right) }{ r\left(\mathbf x^{(t+1)} \right) }$ can be treated as a small perturbation to $p\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$. A small perturbation to a Gaussian effects the mean, but not the normalization constant, so in this case Equations [@eq:eq_tild_unnorm] and [@eq:eq_tild_norm] are equivalent (see Appendix C).

2.5.3 Applying $r\left(\mathbf x^{(t)} \right)$

If $r\left(\mathbf x^{(t)} \right)$ is sufficiently smooth, then it can be treated as a small perturbation to the reverse diffusion kernel $p\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$. In this case $\tilde{p}\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$ will have an identical functional form to $p\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$, but with perturbed mean for the Gaussian kernel, or with perturbed flip rate for the binomial kernel. The perturbed diffusion kernels are given in Table [@sec:tab_diff], and are derived for the Gaussian in Appendix C.

If $r\left(\mathbf x^{(t)} \right)$ can be multiplied with a Gaussian (or binomial) distribution in closed form, then it can be directly multiplied with the reverse diffusion kernel $p\left(\mathbf x^{(t)} | \mathbf x^{(t+1)} \right)$ in closed form. This applies in the case where $r\left(\mathbf x^{(t)} \right)$ consists of a delta function for some subset of coordinates, as in the inpainting example in Figure 5.

2.5.4 Choosing $r\left(\mathbf x^{(t)} \right)$

Typically, $r\left(\mathbf x^{(t)} \right)$ should be chosen to change slowly over the course of the trajectory. For the experiments in this paper we chose it to be constant,

$

<<

Another convenient choice is $r\left(\mathbf x^{(t)} \right) = r\left(\mathbf x^{(0)} \right)^\frac{T-t}{T}$. Under this second choice $r\left(\mathbf x^{(t)} \right)$ makes no contribution to the starting distribution for the reverse trajectory. This guarantees that drawing the initial sample from $\tilde{p}\left(\mathbf x^{(T)} \right)$ for the reverse trajectory remains straightforward.

2.6 Entropy of Reverse Process

Since the forward process is known, we can derive upper and lower bounds on the conditional entropy of each step in the reverse trajectory, and thus on the log likelihood,

$

<<

where both the upper and lower bounds depend only on $q\left(\mathbf x^{(1\cdots T)} | \mathbf x^{(0)} \right)$, and can be analytically computed. The derivation is provided in Appendix A.

\begin{tabular}{l|l|l}

{\em Dataset} & {\em $K$} & {\em $K - L_{null}$} \\

\hline

Swiss Roll & 2.35 bits & 6.45 bits \\

Binary Heartbeat & -2.414 bits/seq. & 12.024 bits/seq. \\

Bark & -0.55 bits/pixel & 1.5 bits/pixel \\

Dead Leaves & 1.489 bits/pixel & 3.536 bits/pixel \\

CIFAR-10\tablefootnote{

An earlier version of this paper reported higher log likelihood bounds on CIFAR-10.

These were the result of the model learning the 8-bit quantization of pixel values in the CIFAR-10 dataset.

The log likelihood bounds reported here are instead for data that has been pre-processed by adding uniform noise to remove pixel quantization, as recommended in [49].

}

& $5.4\pm0.2$ bits/pixel & $11.5\pm0.2$ bits/pixel \\

MNIST & \multicolumn{2}{c}{

See table [@sec:tb_ll_compare]

}

\end{tabular}

:Table 2: Log likelihood comparisons to other algorithms. Dead leaves images were evaluated using identical training and test data as in [36]. MNIST log likelihoods were estimated using the Parzen-window code from [31], with values given in bits, and show that our performance is comparable to other recent techniques. The perfect model entry was computed by applying the Parzen code to samples from the training data.

| Model | Log Likelihood |

|---|---|

| ** Dead Leaves** | |

| MCGSM | 1.244 bits/pixel |

| Diffusion | $\mathbf{1.489}$ bits/pixel |

| ** MNIST** | |

| Stacked CAE | $174 \pm 2.3$ bits |

| DBN | $199 \pm 2.9$ bits |

| Deep GSN | $309 \pm 1.6$ bits |

| Diffusion | $\mathbf{317 \pm 2.7}$ bits |

| Adversarial net | $325 \pm 2.9$ bits |

| Perfect model | $349 \pm 3.3$ bits |

![**Figure 5:** Inpainting. *(a)* A bark image from [50]. *(b)* The same image with the central 100 $\times$ 100 pixel region replaced with isotropic Gaussian noise. This is the initialization $\tilde{p}\left(\mathbf x^{(T)} \right)$ for the reverse trajectory. *(c)* The central 100 $\times$ 100 region has been inpainted using a diffusion probabilistic model trained on images of bark, by sampling from the posterior distribution over the missing region conditioned on the rest of the image. Note the long-range spatial structure, for instance in the crack entering on the left side of the inpainted region. The sample from the posterior was generated as described in 2.5, where $r\left(\mathbf x^{(0)} \right)$ was set to a delta function for known data, and a constant for missing data.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/h8rj7kay/complex_fig_a384c9b7f07c.png)

3. Experiments

Section Summary: Researchers trained diffusion probabilistic models on simple toy datasets like a twisted swiss roll shape and binary heartbeat patterns, successfully learning these distributions with near-perfect accuracy as shown in figures and tables. They then applied similar models to image datasets including handwritten digits from MNIST, colorful CIFAR-10 photos, synthetic dead leaf patterns, and bark textures, achieving strong performance in generating new images and filling in missing parts compared to other methods. All experiments used specialized software for training and evaluation, with results detailed in tables and sample images.

We train diffusion probabilistic models on a variety of continuous datasets, and a binary dataset. We then demonstrate sampling from the trained model and inpainting of missing data, and compare model performance against other techniques. In all cases the objective function and gradient were computed using Theano [51]. Model training was with SFO [52], except for CIFAR-10. CIFAR-10 results used the open source implementation of the algorithm, and RMSprop for optimization. The lower bound on the log likelihood provided by our model is reported for all datasets in Table [@sec:tb_K]. A reference implementation of the algorithm utilizing Blocks [53] is available at https://github.com/Sohl-Dickstein/Diffusion-Probabilistic-Models.

3.1 Toy Problems

3.1.1 Swiss Roll

A diffusion probabilistic model was built of a two dimensional swiss roll distribution, using a radial basis function network to generate $\mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$ and $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$. As illustrated in Figure 1, the swiss roll distribution was successfully learned. See Appendix D.1.1 for more details.

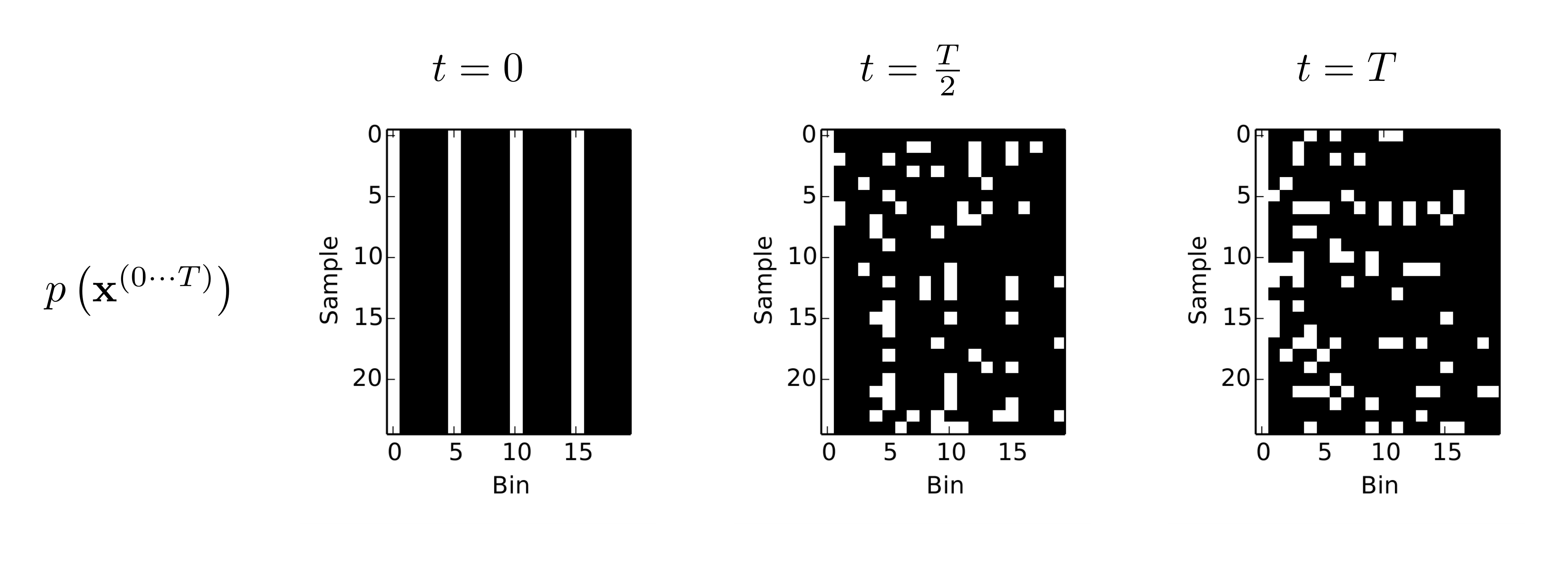

3.1.2 Binary Heartbeat Distribution

A diffusion probabilistic model was trained on simple binary sequences of length 20, where a 1 occurs every 5th time bin, and the remainder of the bins are 0, using a multi-layer perceptron to generate the Bernoulli rates $\mathbf f_b\left(\mathbf x^{(t)}, t \right)$ of the reverse trajectory. The log likelihood under the true distribution is $\log_2\left(\frac{1}{5} \right) = -2.322$ bits per sequence. As can be seen in Figure 2 and Table [@sec:tb_K] learning was nearly perfect. See Appendix D.1.2 for more details.

3.2 Images

We trained Gaussian diffusion probabilistic models on several image datasets. The multi-scale convolutional architecture shared by these experiments is described in Appendix D.2.1, and illustrated in Figure 7.

3.2.1 Datasets

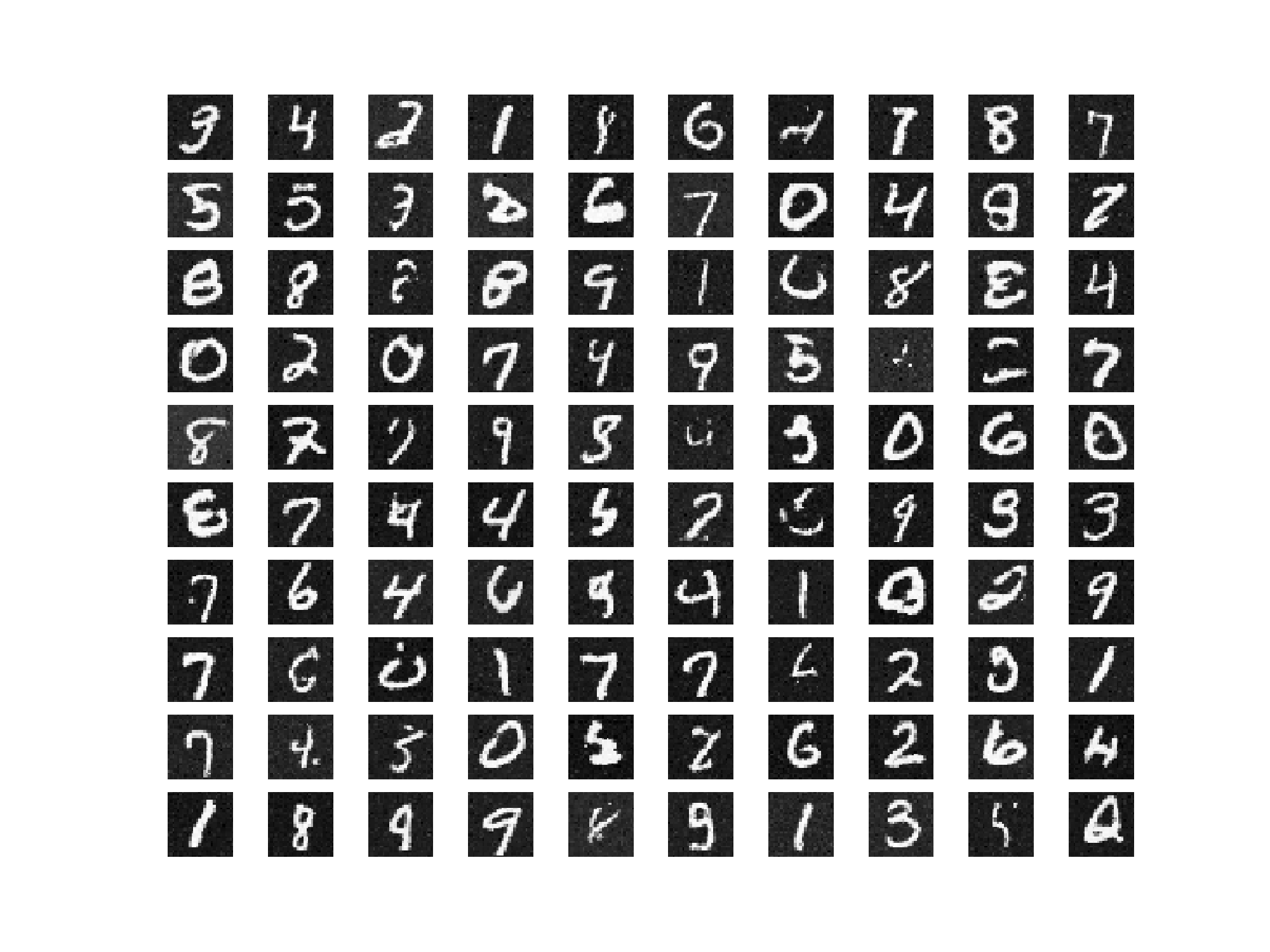

MNIST

In order to allow a direct comparison against previous work on a simple dataset, we trained on MNIST digits [54]. Log likelihoods relative to [55, 26, 31] are given in Table [@sec:tb_ll_compare]. Samples from the MNIST model are given in Appendix Figure [@sec:fig_mnist]. Our training algorithm provides an asymptotically consistent lower bound on the log likelihood. However most previous reported results on continuous MNIST log likelihood rely on Parzen-window based estimates computed from model samples. For this comparison we therefore estimate MNIST log likelihood using the Parzen-window code released with [31].

CIFAR-10

A probabilistic model was fit to the training images for the CIFAR-10 challenge dataset [43]. Samples from the trained model are provided in Figure 3.

Dead Leaf Images

Dead leaf images [47, 48] consist of layered occluding circles, drawn from a power law distribution over scales. They have an analytically tractable structure, but capture many of the statistical complexities of natural images, and therefore provide a compelling test case for natural image models. As illustrated in Table [@sec:tb_ll_compare] and Figure 4, we achieve state of the art performance on the dead leaves dataset.

Bark Texture Images

A probabilistic model was trained on bark texture images (T01-T04) from [50]. For this dataset we demonstrate that it is straightforward to evaluate or generate from a posterior distribution, by inpainting a large region of missing data using a sample from the model posterior in Figure 5.

4. Conclusion

Section Summary: This new algorithm models probability distributions in data by allowing precise sampling and probability calculations, and it has proven effective on simple test datasets as well as complex real-world ones like natural images, using the same basic approach throughout. At its heart, the method works by reversing a step-by-step process that turns data into random noise, making each reversal straightforward to compute as the steps increase. Unlike most current techniques that trade accuracy for speed or make sampling and evaluation costly, this approach fits any data distribution while staying efficient, easy to train, and flexible for handling conditional or posterior probabilities.

We have introduced a novel algorithm for modeling probability distributions that enables exact sampling and evaluation of probabilities and demonstrated its effectiveness on a variety of toy and real datasets, including challenging natural image datasets. For each of these tests we used a similar basic algorithm, showing that our method can accurately model a wide variety of distributions. Most existing density estimation techniques must sacrifice modeling power in order to stay tractable and efficient, and sampling or evaluation are often extremely expensive. The core of our algorithm consists of estimating the reversal of a Markov diffusion chain which maps data to a noise distribution; as the number of steps is made large, the reversal distribution of each diffusion step becomes simple and easy to estimate. The result is an algorithm that can learn a fit to any data distribution, but which remains tractable to train, exactly sample from, and evaluate, and under which it is straightforward to manipulate conditional and posterior distributions.

Acknowledgements

Section Summary: The acknowledgements section expresses gratitude to several researchers—Lucas Theis, Subhaneil Lahiri, Ben Poole, Diederik P. Kingma, Taco Cohen, Philip Bachman, and Aäron van den Oord—for their insightful discussions, as well as to Ian Goodfellow for providing Parzen-window code. It also thanks Khan Academy and the Office of Naval Research for supporting Jascha Sohl-Dickstein's work financially. Additionally, the Office of Naval Research, along with the Burroughs-Wellcome, Sloan, and James S. McDonnell foundations, is recognized for funding Surya Ganguli.

We thank Lucas Theis, Subhaneil Lahiri, Ben Poole, Diederik P. Kingma, Taco Cohen, Philip Bachman, and Aäron van den Oord for extremely helpful discussion, and Ian Goodfellow for Parzen-window code. We thank Khan Academy and the Office of Naval Research for funding Jascha Sohl-Dickstein, and we thank the Office of Naval Research and the Burroughs-Wellcome, Sloan, and James S. McDonnell foundations for funding Surya Ganguli.

Appendix

Section Summary: The appendix provides mathematical derivations for bounding conditional entropy in the reverse steps of diffusion probabilistic models, showing upper and lower limits based on maximum entropy distributions like Gaussian or binomial, which can be computed analytically from the forward process alone. It then derives a lower bound on the log likelihood by breaking it down into entropies, KL divergences, and adjustments to avoid edge effects, making it tractable for training. Key equations are summarized for Gaussian and binomial cases, illustrated with MNIST digit samples generated by the model.

Appendix

Section Summary: The appendix provides mathematical derivations for bounding conditional entropy in the reverse steps of diffusion probabilistic models, showing upper and lower limits based on maximum entropy distributions like Gaussian or binomial, which can be computed analytically from the forward process alone. It then derives a lower bound on the log likelihood by breaking it down into entropies, KL divergences, and adjustments to avoid edge effects, making it tractable for training. Key equations are summarized for Gaussian and binomial cases, illustrated with MNIST digit samples generated by the model.

A. Conditional Entropy Bounds Derivation

The conditional entropy $H_q\left(\mathbf X^{(t-1)} | \mathbf X^{(t)} \right)$ of a step in the reverse trajectory is

$

<<

An upper bound on the entropy change can be constructed by observing that $\pi\left(\mathbf y \right)$ is the maximum entropy distribution. This holds without qualification for the binomial distribution, and holds for variance 1 training data for the Gaussian case. For the Gaussian case, training data must therefore be scaled to have unit norm for the following equalities to hold. It need not be whitened. The upper bound is derived as follows,

$

<<

A lower bound on the entropy difference can be established by observing that additional steps in a Markov chain do not increase the information available about the initial state in the chain, and thus do not decrease the conditional entropy of the initial state,

$

<<

Combining these expressions, we bound the conditional entropy for a single step,

$

<<

where both the upper and lower bounds depend only on the conditional forward trajectory $q\left(\mathbf x^{(1\cdots T)} | \mathbf x^{(0)} \right)$, and can be analytically computed.

B. Log Likelihood Lower Bound

The lower bound on the log likelihood is

$

<<

B.1 Entropy of $p\left(\mathbf X^{(T)} \right)$

We can peel off the contribution from $p\left(\mathbf X^{(T)} \right)$, and rewrite it as an entropy,

$

<<

By design, the cross entropy to $\pi\left(\mathbf x^{(t)}\right)$ is constant under our diffusion kernels, and equal to the entropy of $p \left(\mathbf x^{(T)} \right)$. Therefore,

$

<<

B.2 Remove the edge effect at $t=0$

In order to avoid edge effects, we set the final step of the reverse trajectory to be identical to the corresponding forward diffusion step,

$

<<

We then use this equivalence to remove the contribution of the first time-step in the sum,

$

<<

where we again used the fact that by design $-\int d \mathbf x^{(t)} q\left(\mathbf x^{(t)} \right) \log \pi\left(\mathbf x^{(t)}\right) = H_p\left(\mathbf X^{(T)} \right)$ is a constant for all $t$.

B.3 Rewrite in terms of posterior $q\left(\mathbf x^{(t-1)} | \mathbf x^{(0)} \right)$

Because the forward trajectory is a Markov process,

$

<<

Using Bayes' rule we can rewrite this in terms of a posterior and marginals from the forward trajectory,

$

<<

B.4 Rewrite in terms of KL divergences and entropies

We then recognize that several terms are conditional entropies,

$

<<

Finally we transform the log ratio of probability distributions into a KL divergence,

$

<<

Note that the entropies can be analytically computed, and the KL divergence can be analytically computed given $\mathbf x^{(0)}$ and $\mathbf x^{(t)}$.

\begin{tabular}{p{0.22\linewidth}|r|l|l}

& & \em Gaussian & \em Binomial \\ \hline

Well behaved (analytically tractable) distribution & $\pi\left(\mathbf x^{(T)} \right) =$ & $\mathcal N \left(\mathbf x^{(T)} ; \mathbf 0, \mathbf I \right)$ & $\mathcal B\left(\mathbf x^{(T)} ; 0.5 \right)$ \\

Forward diffusion kernel & $q\left(\mathbf x^{(t)} | \mathbf x^{(t-1)} \right) =$ & $\mathcal N \left(\mathbf x^{(t)} ; \mathbf x^{(t-1)} \sqrt{1 - \beta_t}, \mathbf I \beta_t \right)$ & $\mathcal B \left(\mathbf x^{(t)} ; \mathbf x^{(t-1)} \left(1 - \beta_t\right) + 0.5 \beta_t \right)$ \\

Reverse diffusion kernel & $p\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right) =$ & $\mathcal N \left(\mathbf x^{(t-1)} ; \mathbf f_\mu\left(\mathbf x^{(t)}, t \right), \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right) \right)$ & $\mathcal B \left(\mathbf x^{(t-1)} ; \mathbf f_b\left(\mathbf x^{(t)}, t \right) \right)$ \\

Training targets & $ & $\mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$, $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$, $\beta_{1\cdots T}$ & $\mathbf f_b\left(\mathbf x^{(t)}, t \right)$ \\

Forward distribution & $q\left(\mathbf x^{(0\cdots T)} \right) =$ & \multicolumn{2}{c}{$q \left(\mathbf x^{(0)} \right) \prod_{t=1}^T q\left(\mathbf x^{(t)} | \mathbf x^{(t-1)} \right)$} \\

Reverse distribution & $p\left(\mathbf x^{(0\cdots T)} \right) =$ & \multicolumn{2}{c}{$\pi\left(\mathbf x^{(T)} \right) \prod_{t=1}^T p\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right)$} \\

Log likelihood & $L =$ & \multicolumn{2}{c}{$\int d \mathbf x^{(0)} q \left(\mathbf x^{(0)} \right) \log p\left(\mathbf x^{(0)} \right)$} \\

Lower bound on log likelihood & $K =$ & \multicolumn{2}{c}{

$-\sum_{t=2}^T \mathbb E_{q\left(\mathbf x^{(0)}, \mathbf x^{(t)} \right)}\left[D_{KL}\left(q\left(\mathbf x^{(t-1)} | \mathbf x^{(t)}, \mathbf x^{(0)} \right) \middle|\middle| p\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right) \right) \right] + H_q\left(\mathbf X^{(T)} | \mathbf X^{(0)} \right) - H_q\left(\mathbf X^{(1)} | \mathbf X^{(0)} \right) - H_p\left(\mathbf X^{(T)} \right) \nonumber$

} \\

Perturbed reverse diffusion kernel & $\tilde{p}\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right) =$ &

$\mathcal N \left(\mathbf \mathbf x^{(t-1)} ; \mathbf f_\mu\left(\mathbf x^{(t)}, t \right) + \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right) \frac{\partial \log r\left(\mathbf x^{(t-1)'} \right) }{\partial \mathbf x^{(t-1)'} }\bigg|_{\mathbf x^{(t-1)'}=f_\mu\left(\mathbf x^{(t)}, t \right)}, \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right) \right)$

&

$\mathcal B \left(x_i^{(t-1)} ; \frac{ c_i^{t-1} d_i^{t-1} }{ x_i^{t-1} d_i^{t-1} + (1-c_i^{t-1})(1-d_i^{t-1}) } \right)$

\end{tabular}

C. Perturbed Gaussian Transition

We wish to compute $\tilde{p}\left(\mathbf x^{(t-1)} \mid \mathbf x^{(t)} \right)$. For notational simplicity, let $\mu = \mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$, $\Sigma = \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$, and $\mathbf y = \mathbf x^{(t-1)}$. Using this notation,

$ \begin{align} \tilde{p}\left(\mathbf y \mid \mathbf x^{(t)} \right) &\propto p\left(\mathbf y \mid \mathbf x^{(t)} \right) r\left(\mathbf y \right) \ &= \mathcal N \left(\mathbf y ;\mu, \Sigma \right) r\left(\mathbf y \right) . \end{align} $

We can rewrite this in terms of energy functions, where $E_r\left(\mathbf y \right) = -\log r\left(\mathbf y \right)$,

$ \begin{align} \tilde{p}\left(\mathbf y \mid \mathbf x^{(t)} \right) &\propto \exp\left[-E\left(\mathbf y \right) \right] \ E\left(\mathbf y \right) &=\frac{1}{2} \left(\mathbf y - \mu \right)^T \Sigma^{-1} \left(\mathbf y - \mu \right) + E_r\left(\mathbf y \right) . \end{align} $

If $E_r\left(\mathbf y \right)$ is smooth relative to $\frac{1}{2} \left(\mathbf y - \mu \right)^T \Sigma^{-1} \left(\mathbf y - \mu \right)$, then we can approximate it using its Taylor expansion around $\mu$. One sufficient condition is that the eigenvalues of the Hessian of $E_r\left(\mathbf y \right)$ are everywhere much smaller magnitude than the eigenvalues of $\Sigma^{-1}$. We then have

$ \begin{align} E_r\left(\mathbf y \right) & \approx E_r\left(\mu \right) + \left(\mathbf y - \mu \right) \mathbf g \end{align} $

where $\mathbf g = \frac{\partial E_r\left(\mathbf y' \right)}{\partial \mathbf y'} \bigg|_{\mathbf y'=\mu}$. Plugging this in to the full energy,

$ \begin{align} E\left(\mathbf y \right) &\approx \frac{1}{2} \left(\mathbf y - \mu \right)^T \Sigma^{-1} \left(\mathbf y - \mu \right) + \left(\mathbf y - \mu \right)^T \mathbf g + \text{constant} \ &= \frac{1}{2} \mathbf y^T \Sigma^{-1} \mathbf y - \frac{1}{2} \mathbf y^T \Sigma^{-1} \mu - \frac{1}{2} \mu^T \Sigma^{-1} \mathbf y + \frac{1}{2} \mathbf y^T \Sigma^{-1} \Sigma \mathbf g + \frac{1}{2} \mathbf g^T \Sigma \Sigma^{-1} \mathbf y + \text{constant} \ &= \frac{1}{2} \left(\mathbf y - \mu + \Sigma \mathbf g\right)^T \Sigma^{-1} \left(\mathbf y - \mu + \Sigma \mathbf g \right) + \text{constant} . \end{align} $

This corresponds to a Gaussian,

$ \begin{align} \tilde{p}\left(\mathbf y \mid \mathbf x^{(t)} \right) &\approx \mathcal N \left(\mathbf y ; \mu - \Sigma \mathbf g, \Sigma \right) . \end{align} $

Substituting back in the original formalism, this is,

$ \begin{align} \tilde{p}\left(\mathbf x^{(t-1)} \mid \mathbf x^{(t)} \right) &\approx \mathcal N \left(\mathbf \mathbf x^{(t-1)} ; \mathbf f_\mu\left(\mathbf x^{(t)}, t \right) + \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right) \frac{\partial \log r\left(\mathbf x^{(t-1)'} \right) }{\partial \mathbf x^{(t-1)'} }\Bigg|{\mathbf x^{(t-1)'}=f\mu\left(\mathbf x^{(t)}, t \right)} , \mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right) \right) . \end{align} $

D. Experimental Details

D.1 Toy Problems

D.1.1 Swiss Roll

A probabilistic model was built of a two dimensional swiss roll distribution. The generative model $p\left(\mathbf x^{(0\cdots T)} \right)$ consisted of 40 time steps of Gaussian diffusion initialized at an identity-covariance Gaussian distribution. A (normalized) radial basis function network with a single hidden layer and 16 hidden units was trained to generate the mean and covariance functions $\mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$ and a diagonal $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$ for the reverse trajectory. The top, readout, layer for each function was learned independently for each time step, but for all other layers weights were shared across all time steps and both functions. The top layer output of $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$ was passed through a sigmoid to restrict it between 0 and 1. As can be seen in Figure 1, the swiss roll distribution was successfully learned.

D.1.2 Binary Heartbeat Distribution

A probabilistic model was trained on simple binary sequences of length 20, where a 1 occurs every 5th time bin, and the remainder of the bins are 0. The generative model consisted of 2000 time steps of binomial diffusion initialized at an independent binomial distribution with the same mean activity as the data ($p\left(x_i^{(T)} = 1 \right) = 0.2$). A multilayer perceptron with sigmoid nonlinearities, 20 input units and three hidden layers with 50 units each was trained to generate the Bernoulli rates $\mathbf f_b\left(\mathbf x^{(t)}, t \right)$ of the reverse trajectory. The top, readout, layer was learned independently for each time step, but for all other layers weights were shared across all time steps. The top layer output was passed through a sigmoid to restrict it between 0 and 1. As can be seen in Figure 2, the heartbeat distribution was successfully learned. The log likelihood under the true generating process is $\log_2\left(\frac{1}{5} \right) = -2.322$ bits per sequence. As can be seen in Figure 2 and Table [@sec:tb_K] learning was nearly perfect.

D.2 Images

D.2.1 Architecture

Readout

In all cases, a convolutional network was used to produce a vector of outputs $\mathbf y_i \in \mathcal R^{2J}$ for each image pixel $i$. The entries in $\mathbf y_i$ are divided into two equal sized subsets, $\mathbf y^\mu$ and $\mathbf y^\Sigma$.

Temporal Dependence

The convolution output $\mathbf y^\mu$ is used as per-pixel weighting coefficients in a sum over time-dependent "bump" functions, generating an output $\mathbf z^\mu_i \in \mathcal R$ for each pixel $i$,

$ \begin{align} \mathbf z^\mu_i &= \sum_{j=1}^J \mathbf y^\mu_{ij} g_j\left(t\right) . \end{align} $

The bump functions consist of

$ \begin{align} g_j\left(t\right) &= \frac{ \exp\left(-\frac{1}{2 w^2} \left(t - \tau_j \right)^2 \right) }{ \sum_{k=1}^J \exp\left(-\frac{1}{2 w^2} \left(t - \tau_k \right)^2 \right) } , \end{align} $

where $\tau_j \in (0, T)$ is the bump center, and $w$ is the spacing between bump centers. $\mathbf z^\Sigma$ is generated in an identical way, but using $y^\Sigma$.

For all image experiments a number of timesteps $T=1000$ was used, except for the bark dataset which used $T=500$.

Mean and Variance

Finally, these outputs are combined to produce a diffusion mean and variance prediction for each pixel $i$,

$ \begin{align} \Sigma_{ii} &= \sigma\left(z^\Sigma_i + \sigma^{-1}\left(\beta_t \right) \right), \ \mu_i &= \left(x_i - z^\mu_i \right)\left(1 - \Sigma_{ii}\right) + z^\mu_i . \end{align} $

where both $\Sigma$ and $\mu$ are parameterized as a perturbation around the forward diffusion kernel $T_\pi\left(\mathbf x^{(t)} | \mathbf x^{(t-1)}; \beta_t \right)$, and $z^\mu_i$ is the mean of the equilibrium distribution that would result from applying $p\left(\mathbf x^{(t-1)} | \mathbf x^{(t)} \right)$ many times. $\Sigma$ is restricted to be a diagonal matrix.

Multi-Scale Convolution

We wish to accomplish goals that are often achieved with pooling networks – specifically, we wish to discover and make use of long-range and multi-scale dependencies in the training data. However, since the network output is a vector of coefficients for every pixel it is important to generate a full resolution rather than down-sampled feature map. We therefore define multi-scale-convolution layers that consist of the following steps:

- Perform mean pooling to downsample the image to multiple scales. Downsampling is performed in powers of two.

- Performing convolution at each scale.

- Upsample all scales to full resolution, and sum the resulting images.

- Perform a pointwise nonlinear transformation, consisting of a soft relu ($\log\left[1 + \exp\left(\cdot \right)\right]$).

The composition of the first three linear operations resembles convolution by a multiscale convolution kernel, up to blocking artifacts introduced by upsampling. This method of achieving multiscale convolution was described in [56].

Dense Layers

Dense (acting on the full image vector) and kernel-width-1 convolutional (acting separately on the feature vector for each pixel) layers share the same form. They consist of a linear transformation, followed by a tanh nonlinearity.

![**Figure 7:** Network architecture for mean function $\mathbf f_\mu\left(\mathbf x^{(t)}, t \right)$ and covariance function $\mathbf f_\Sigma\left(\mathbf x^{(t)}, t \right)$, for experiments in 3.2. The input image $\mathbf x^{(t)}$ passes through several layers of multi-scale convolution ([@sec:sec_multiscale]). It then passes through several convolutional layers with $1\times 1$ kernels. This is equivalent to a dense transformation performed on each pixel. A linear transformation generates coefficients for readout of both mean $\mu^{(t)}$ and covariance $\Sigma^{(t)}$ for each pixel. Finally, a time dependent readout function converts those coefficients into mean and covariance images, as described in D.2.1. For CIFAR-10 a dense (or fully connected) pathway was used in parallel to the multi-scale convolutional pathway. For MNIST, the dense pathway was used to the exclusion of the multi-scale convolutional pathway.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/h8rj7kay/complex_fig_6650365b830e.png)

References

[1] T, P. Convergence condition of the TAP equation for the infinite-ranged Ising spin glass model. J. Phys. A: Math. Gen. 15 1971, 1982.

[2] Tanaka, T. Mean-field theory of Boltzmann machine learning. Physical Review Letters E, January 1998.

[3] Jordan, M. I., Ghahramani, Z., Jaakkola, T. S., and Saul, L. K. An introduction to variational methods for graphical models. Machine learning, 37(2):183–233, 1999.

[4] Welling, M. and Hinton, G. A new learning algorithm for mean field Boltzmann machines. Lecture Notes in Computer Science, January 2002.

[5] Hinton, G. E. Training products of experts by minimizing contrastive divergence. Neural Computation, 14(8):1771–1800, 2002.

[6] Sohl-Dickstein, J., Battaglino, P. B., and DeWeese, M. R. Minimum Probability Flow Learning. International Conference on Machine Learning, 107(22):11–14, November 2011b. ISSN 0031-9007. doi:10.1103/PhysRevLett.107.220601.

[7] Sohl-Dickstein, J., Battaglino, P., and DeWeese, M. New Method for Parameter Estimation in Probabilistic Models: Minimum Probability Flow. Physical Review Letters, 107(22):11–14, November 2011a. ISSN 0031-9007. doi:10.1103/PhysRevLett.107.220601.

[8] Lyu, S. Unifying Non-Maximum Likelihood Learning Objectives with Minimum KL Contraction. Advances in Neural Information Processing Systems 24, pp.\ 64–72, 2011.

[9] Gneiting, T. and Raftery, A. E. Strictly proper scoring rules, prediction, and estimation. Journal of the American Statistical Association, 102(477):359–378, 2007.

[10] Parry, M., Dawid, A. P., Lauritzen, S., and Others. Proper local scoring rules. The Annals of Statistics, 40(1):561–592, 2012.

[11] Hyvärinen, A. Estimation of non-normalized statistical models using score matching. Journal of Machine Learning Research, 6:695–709, 2005.

[12] Besag, J. Statistical Analysis of Non-Lattice Data. The Statistician, 24(3), 179-195, 1975.

[13] Murphy, K. P., Weiss, Y., and Jordan, M. I. Loopy belief propagation for approximate inference: An empirical study. In Proceedings of the Fifteenth conference on Uncertainty in artificial intelligence, pp.\ 467–475. Morgan Kaufmann Publishers Inc., 1999.

[14] Gershman, S. J. and Blei, D. M. A tutorial on Bayesian nonparametric models. Journal of Mathematical Psychology, 56(1):1–12, 2012.

[15] Jarzynski, C. Equilibrium free-energy differences from nonequilibrium measurements: A master-equation approach. Physical Review E, January 1997.

[16] Neal, R. Annealed importance sampling. Statistics and Computing, January 2001.

[17] Hinton, G. E. The wake-sleep algorithm for unsupervised neural networks ). Science, 1995.

[18] Dayan, P., Hinton, G. E., Neal, R. M., and Zemel, R. S. The helmholtz machine. Neural computation, 7(5):889–904, 1995.

[19] Sminchisescu, C., Kanaujia, A., and Metaxas, D. Learning joint top-down and bottom-up processes for 3D visual inference. In Computer Vision and Pattern Recognition, 2006 IEEE Computer Society Conference on, volume 2, pp.\ 1743–1752. IEEE, 2006.

[20] Kavukcuoglu, K., Ranzato, M., and LeCun, Y. Fast inference in sparse coding algorithms with applications to object recognition. arXiv preprint arXiv:1010.3467, 2010.

[21] Kingma, D. P. and Welling, M. Auto-Encoding Variational Bayes. International Conference on Learning Representations, December 2013.

[22] Gregor, K., Danihelka, I., Mnih, A., Blundell, C., and Wierstra, D. Deep AutoRegressive Networks. arXiv preprint arXiv:1310.8499, October 2013.

[23] Rezende, D. J., Mohamed, S., and Wierstra, D. Stochastic Backpropagation and Approximate Inference in Deep Generative Models. Proceedings of the 31st International Conference on Machine Learning (ICML-14), January 2014.

[24] Ozair, S. and Bengio, Y. Deep Directed Generative Autoencoders. arXiv:1410.0630, October 2014.

[25] Bornschein, J. and Bengio, Y. Reweighted Wake-Sleep. International Conference on Learning Representations, June 2015.

[26] Bengio, Y. and Thibodeau-Laufer, E. Deep generative stochastic networks trainable by backprop. arXiv preprint arXiv:1306.1091, 2013.

[27] Yao, L., Ozair, S., Cho, K., and Bengio, Y. On the Equivalence Between Deep NADE and Generative Stochastic Networks. In Machine Learning and Knowledge Discovery in Databases, pp.\ 322–336. Springer, 2014.

[28] Larochelle, H. and Murray, I. The neural autoregressive distribution estimator. Journal of Machine Learning Research, 2011.

[29] Uria, B., Murray, I., and Larochelle, H. RNADE: The real-valued neural autoregressive density-estimator. Advances in Neural Information Processing Systems, 2013a.

[30] Uria, B., Murray, I., and Larochelle, H. A Deep and Tractable Density Estimator. arXiv:1310.1757, pp.\ 9, October 2013b.

[31] Goodfellow, I. J., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Courville, A., and Bengio, Y. Generative Adversarial Nets. Advances in Neural Information Processing Systems, 2014.

[32] Schmidhuber, J. Learning factorial codes by predictability minimization. Neural Computation, 1992.

[33] Rippel, O. and Adams, R. P. High-Dimensional Probability Estimation with Deep Density Models. arXiv:1410.8516, pp.\ 12, February 2013.

[34] Dinh, L., Krueger, D., and Bengio, Y. NICE: Non-linear Independent Components Estimation. arXiv:1410.8516, pp.\ 11, October 2014.

[35] Stuhlmüller, A., Taylor, J., and Goodman, N. Learning stochastic inverses. Advances in Neural Information Processing Systems, 2013.

[36] Theis, L., Hosseini, R., and Bethge, M. Mixtures of conditional Gaussian scale mixtures applied to multiscale image representations. PloS one, 7(7):e39857, 2012.

[37] MacKay, D. Bayesian neural networks and density networks. Nuclear Instruments and Methods in Physics Research Section A: Accelerators, Spectrometers, Detectors and Associated Equipment, 1995.

[38] Bishop, C., Svensén, M., and Williams, C. GTM: The generative topographic mapping. Neural computation, 1998.

[39] Burda, Y., Grosse, R. B., and Salakhutdinov, R. Accurate and Conservative Estimates of MRF Log-likelihood using Reverse Annealing. arXiv:1412.8566, December 2014.

[40] Langevin, P. Sur la théorie du mouvement brownien. CR Acad. Sci. Paris, 146(530-533), 1908.

[41] Suykens, J. and Vandewalle, J. Nonconvex optimization using a Fokker-Planck learning machine. In 12th European Conference on Circuit Theory and Design, 1995.

[42] Feller, W. On the theory of stochastic processes, with particular reference to applications. In Proceedings of the [First] Berkeley Symposium on Mathematical Statistics and Probability. The Regents of the University of California, 1949.

[43] Krizhevsky, A. and Hinton, G. Learning multiple layers of features from tiny images. Computer Science Department University of Toronto Tech. Rep., 2009.

[44] Spinney, R. and Ford, I. Fluctuation Relations : A Pedagogical Overview. arXiv preprint arXiv:1201.6381, pp.\ 3–56, 2013.

[45] Jarzynski, C. Equalities and inequalities: irreversibility and the second law of thermodynamics at the nanoscale. Annu. Rev. Condens. Matter Phys., 2011.

[46] Grosse, R. B., Maddison, C. J., and Salakhutdinov, R. Annealing between distributions by averaging moments. In Advances in Neural Information Processing Systems, pp.\ 2769–2777, 2013.

[47] Jeulin, D. Dead leaves models: from space tesselation to random functions. Proc. of the Symposium on the Advances in the Theory and Applications of Random Sets, 1997.

[48] Lee, A., Mumford, D., and Huang, J. Occlusion models for natural images: A statistical study of a scale-invariant dead leaves model. International Journal of Computer Vision, 2001.

[49] Theis, L., van den Oord, A., and Bethge, M. A note on the evaluation of generative models. arXiv preprint arXiv:1511.01844, 2015.

[50] Lazebnik, S., Schmid, C., and Ponce, J. A sparse texture representation using local affine regions. Pattern Analysis and Machine Intelligence, IEEE Transactions on, 27(8):1265–1278, 2005.

[51] Bergstra, J. and Breuleux, O. Theano: a CPU and GPU math expression compiler. Proceedings of the Python for Scientific Computing Conference (SciPy), 2010.

[52] Sohl-Dickstein, J., Poole, B., and Ganguli, S. Fast large-scale optimization by unifying stochastic gradient and quasi-Newton methods. In Proceedings of the 31st International Conference on Machine Learning (ICML-14), pp.\ 604–612, 2014.

[53] van Merriënboer, B., Chorowski, J., Serdyuk, D., Bengio, Y., Bogdanov, D., Dumoulin, V., and Warde-Farley, D. Blocks and Fuel. Zenodo, May 2015. doi:10.5281/zenodo.17721.

[54] LeCun, Y. and Cortes, C. The MNIST database of handwritten digits. 1998.

[55] Bengio, Y., Mesnil, G., Dauphin, Y., and Rifai, S. Better Mixing via Deep Representations. arXiv preprint arXiv:1207.4404, July 2012.

[56] Barron, J. T., Biggin, M. D., Arbelaez, P., Knowles, D. W., Keranen, S. V., and Malik, J. Volumetric Semantic Segmentation Using Pyramid Context Features. In 2013 IEEE International Conference on Computer Vision, pp.\ 3448–3455. IEEE, December 2013. ISBN 978-1-4799-2840-8. doi:10.1109/ICCV.2013.428.