On Calibration of Modern Neural Networks

Chuan Guo ${}^{* 1}$

Geoff Pleiss ${}^{* 1}$

Yu Sun ${}^{* 1}$

Kilian Q. Weinberger ${}^{1}$

${}^{*}$ Equal contribution, alphabetical order. ${}^{1}$ Cornell University.

Correspondence to: Chuan Guo, Geoff Pleiss, Yu Sun.

Abstract

Confidence calibration – the problem of predicting probability estimates representative of the true correctness likelihood – is important for classification models in many applications. We discover that modern neural networks, unlike those from a decade ago, are poorly calibrated. Through extensive experiments, we observe that depth, width, weight decay, and Batch Normalization are important factors influencing calibration. We evaluate the performance of various post-processing calibration methods on state-of-the-art architectures with image and document classification datasets. Our analysis and experiments not only offer insights into neural network learning, but also provide a simple and straightforward recipe for practical settings: on most datasets, temperature scaling – a single-parameter variant of Platt Scaling – is surprisingly effective at calibrating predictions.

Executive Summary: Modern neural networks, which power applications like self-driving cars, medical diagnostics, and speech recognition, have become remarkably accurate at classifying images, text, and other data. However, they often fail to provide reliable confidence scores—probabilities that accurately reflect the true likelihood of being correct. This miscalibration is a growing issue as these models integrate into real-world decision systems, where overconfidence can lead to errors, such as a car ignoring uncertain detections or a diagnostic tool misleading doctors. The problem has intensified with recent advances in network design, making it urgent to address for safer, more trustworthy AI deployments.

This paper examines why contemporary neural networks produce unreliable confidence estimates despite their high accuracy and tests practical ways to fix this through post-training adjustments. It focuses on supervised classification tasks, evaluating calibration across image and document datasets.

The authors conducted experiments on standard datasets like CIFAR-10 (small images in 10 categories), CIFAR-100 (100 categories), ImageNet (1,000 object categories with over a million natural images), and text sets like news articles and movie reviews. They trained state-of-the-art models, including deep ResNets (up to 152 layers) and DenseNets, using common practices like data augmentation and standard data splits (e.g., 45,000 training samples for CIFAR-10). To assess calibration, they used reliability diagrams and metrics like Expected Calibration Error (ECE), which measures the gap between predicted confidence and actual accuracy in binned groups of predictions. They analyzed influences such as network depth, width, batch normalization (a technique to stabilize training), and weight decay (a regularization to prevent overfitting), then compared post-processing methods like histogram binning (grouping probabilities and adjusting averages), isotonic regression (fitting non-decreasing stepwise functions), and their proposed temperature scaling (dividing network outputs by a single learned scalar to soften probabilities).

The core findings reveal that modern networks are systematically overconfident: on CIFAR-100, a 110-layer ResNet's average confidence exceeds its accuracy by about 20 percentage points, with ECE scores of 4-16% across datasets, far worse than the older, simpler networks studied in 2005. Increased model depth and width boost accuracy but raise ECE by 2-3 times, as larger models fit training data too closely and inflate confidences to minimize a common loss function (negative log likelihood). Batch normalization and reduced weight decay, trends that aid training speed and accuracy, further degrade calibration by 20-50% in tests, creating a disconnect where probabilistic predictions overfit without harming classification error. Remarkably, temperature scaling, a lightweight method with one parameter, cuts ECE to under 2% on most vision tasks (e.g., from 16.5% to 1.3% on CIFAR-100 ResNet), outperforming complex alternatives like matrix scaling, which fails on datasets with over 100 classes due to overfitting. Binning methods reduce ECE by half but sometimes alter predictions, increasing error by 5-10 percentage points, while temperature scaling preserves accuracy entirely.

These results mean that uncalibrated networks pose hidden risks: high reported confidence can mask uncertainties, eroding trust in high-stakes uses and complicating integration with other systems, like combining image detection with sensor data. Unlike older networks, which aligned confidence with accuracy, modern ones prioritize raw performance over probability quality, partly because training optimizes a loss that rewards overconfidence after accuracy plateaus—this matters for policy and compliance, as regulators increasingly demand interpretable AI. Overall, calibration improves safety and performance in pipelines, with minimal computational cost.

Leaders should implement temperature scaling as a standard post-training step: it requires only a validation set (typically 5,000-25,000 samples) and runs in seconds, offering the best trade-off of simplicity, speed, and effectiveness without retraining. For text or low-error datasets like house numbers (SVHN), binning may suffice if class changes are tolerable. Avoid matrix scaling for large class counts due to its poor scalability. Next, incorporate more regularization like higher weight decay during training to inherently improve calibration, and pilot these methods in production systems.

While robust across 10 datasets and multiple architectures, the analysis assumes that training, validation, and test data are drawn from the same distribution, and it relies on fixed training protocols; ECE can vary with bin choices (15 bins used here), and small validation sets may limit precision. Confidence in temperature scaling's superiority is high, given consistent outperformance in negative log likelihood and maximum calibration error (MCE), but further studies should explore adversarial data or real-time applications.

1. Introduction

Section Summary: Recent advances in deep learning have made neural networks highly accurate and essential for tasks like detecting objects, recognizing speech, and diagnosing diseases, but they also need to provide reliable confidence scores that truly reflect the likelihood of being correct to support safe decision-making in areas such as self-driving cars and healthcare. While older, simpler networks produced well-calibrated probabilities that matched their accuracy, modern deeper networks like ResNets often show overconfidence, where their predicted probabilities are too high compared to actual performance, as illustrated in histograms and reliability diagrams. This paper explores the reasons for this miscalibration, tests it across vision and language tasks, and finds that a simple method called temperature scaling effectively fixes the issue for practical use.

Recent advances in deep learning have dramatically improved neural network accuracy [1, 2, 3, 4, 5]. As a result, neural networks are now entrusted with making complex decisions in applications such as object detection [6], speech recognition [7], and medical diagnosis [8]. In these settings, neural networks are an essential component of larger decision-making pipelines.

In real-world decision making systems, classification networks must not only be accurate, but also should indicate when they are likely to be incorrect. As an example, consider a self-driving car that uses a neural network to detect pedestrians and other obstructions [9]. If the detection network is not able to confidently predict the presence or absence of immediate obstructions, the car should rely more on the output of other sensors for braking. Alternatively, in automated health care, control should be passed on to human doctors when the confidence of a disease diagnosis network is low [10]. Specifically, a network should provide a calibrated confidence measure in addition to its prediction. In other words, the probability associated with the predicted class label should reflect its ground truth correctness likelihood.

:::: {cols="1"}

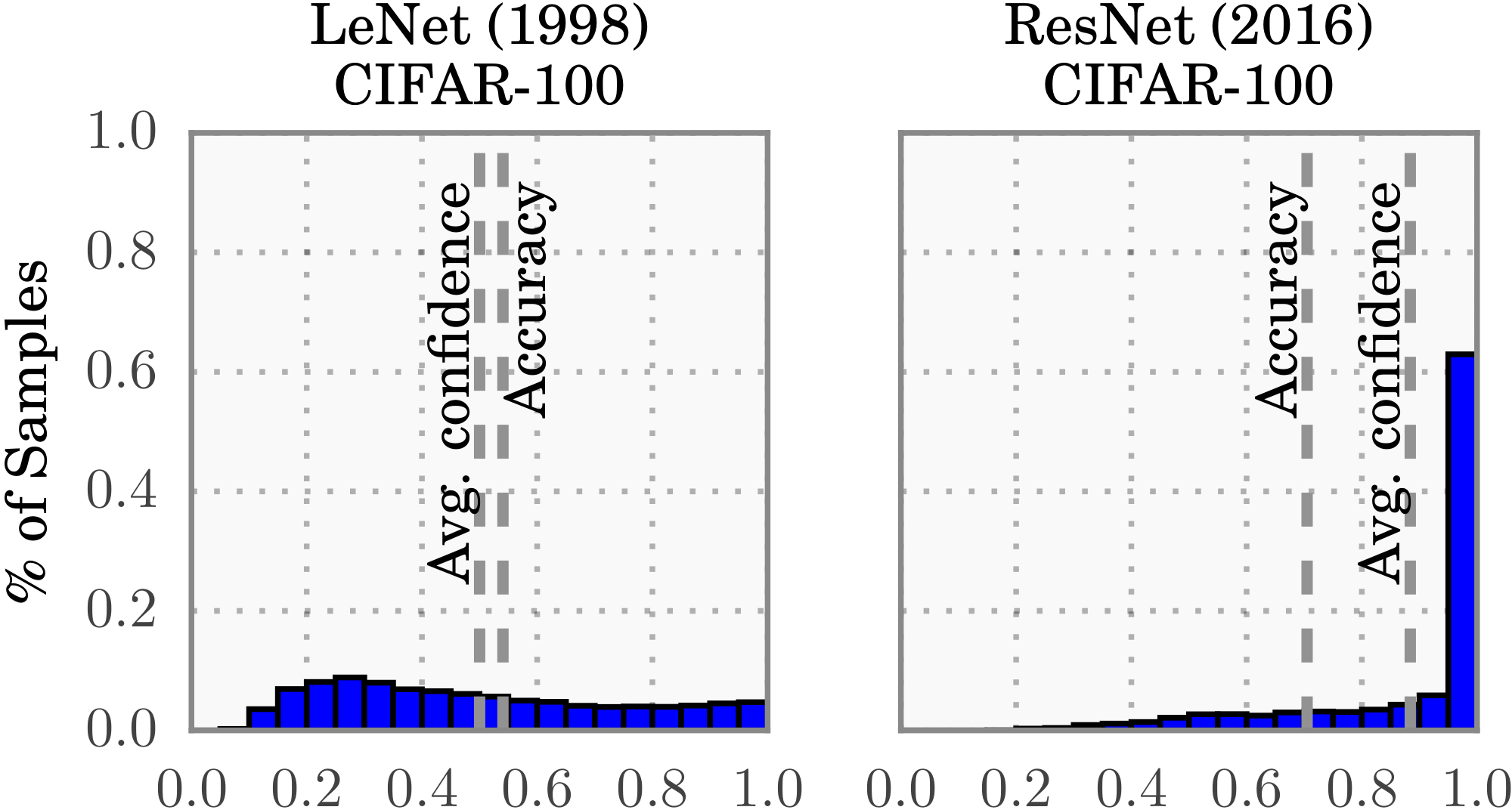

Figure 1: Confidence histograms (top) and reliability diagrams (bottom) for a 5-layer LeNet (left) and a 110-layer ResNet (right) on CIFAR-100. See the text below for a detailed explanation.
::::

Calibrated confidence estimates are also important for model interpretability. Humans have a natural cognitive intuition for probabilities [11]. Good confidence estimates provide a valuable extra bit of information to establish trustworthiness with the user – especially for neural networks, whose classification decisions are often difficult to interpret. Further, good probability estimates can be used to incorporate neural networks into other probabilistic models. For example, one can improve performance by combining network outputs with a language model in speech recognition [7, 12], or with camera information for object detection [13].

In 2005, [14] showed that neural networks typically produce well-calibrated probabilities on binary classification tasks. While neural networks today are undoubtedly more accurate than they were a decade ago, we discover, to our surprise, that modern neural networks are no longer well-calibrated. This is visualized in Figure 1, which compares a 5-layer LeNet (left) [15] with a 110-layer ResNet (right) [3] on the CIFAR-100 dataset. The top row shows the distribution of prediction confidence (i.e. probabilities associated with the predicted label) as histograms. The average confidence of LeNet closely matches its accuracy, while the average confidence of the ResNet is substantially higher than its accuracy. This is further illustrated in the bottom row reliability diagrams [16, 14], which show accuracy as a function of confidence. We see that LeNet is well-calibrated, as confidence closely approximates the expected accuracy (i.e. the bars align roughly along the diagonal). On the other hand, the ResNet's accuracy is better, but does not match its confidence.

Our goal is not only to understand why neural networks have become miscalibrated, but also to identify what methods can alleviate this problem. In this paper, we demonstrate on several computer vision and NLP tasks that neural networks produce confidences that do not represent true probabilities. Additionally, we offer insight and intuition into network training and architectural trends that may cause miscalibration. Finally, we compare various post-processing calibration methods on state-of-the-art neural networks, and introduce several extensions of our own. Surprisingly, we find that a single-parameter variant of Platt scaling [17] – which we refer to as temperature scaling – is often the most effective method at obtaining calibrated probabilities. Because this method is straightforward to implement with existing deep learning frameworks, it can be easily adopted in practical settings.

2. Definitions

Section Summary: This section defines key concepts for evaluating neural networks in multi-class classification tasks, where the goal is to make the model's confidence scores accurately reflect the true probability of correct predictions—for instance, an 80% confidence should mean about 80 correct out of 100 such predictions. It introduces reliability diagrams as visual tools that plot binned accuracies against average confidences, with a perfect diagonal line indicating ideal calibration, and explains metrics like Expected Calibration Error (ECE), a weighted average of gaps between accuracy and confidence across bins, and Maximum Calibration Error (MCE), the largest such gap. It also covers Negative Log Likelihood (NLL), a standard loss measure that assesses how closely the model's predicted probabilities match the actual data distribution.

The problem we address in this paper is supervised multi-class classification with neural networks. The input $X\in \mathcal{X}$ and label $Y\in \mathcal{Y}=\{1, \ldots, K\}$ are random variables that follow a ground truth joint distribution $\pi(X, Y) = \pi(Y|X) \pi(X)$. Let $h$ be a neural network with $h(X) = (\hat{Y}, \hat{P})$, where $\hat{Y}$ is a class prediction and $\hat{P}$ is its associated confidence, i.e. probability of correctness. We would like the confidence estimate $\hat{P}$ to be calibrated, which intuitively means that $\hat{P}$ represents a true probability. For example, given 100 predictions, each with confidence of $0.8$, we expect that $80$ should be correctly classified. More formally, we define perfect calibration as

$ \mathop{\mathbb{P}} \left(\hat{Y}=Y \mid \hat{P} = p\right)=p, \quad\forall p \in [0, 1]\tag{1} $

where the probability is over the joint distribution. In all practical settings, achieving perfect calibration is impossible. Additionally, the probability in Equation 1 cannot be computed using finitely many samples since $\hat{P}$ is a continuous random variable. This motivates the need for empirical approximations that capture the essence of Equation 1.

Reliability Diagrams

Reliability diagrams (e.g. Figure 1 bottom) are a visual representation of model calibration [16, 14]. These diagrams plot expected sample accuracy as a function of confidence. If the model is perfectly calibrated (i.e. if Equation 1 holds), then the diagram should plot the identity function. Any deviation from a perfect diagonal represents miscalibration.

To estimate the expected accuracy from finite samples, we group predictions into $M$ interval bins (each of size $1/M$) and calculate the accuracy of each bin. Let $B_m$ be the set of indices of samples whose prediction confidence falls into the interval $I_m = (\frac{m-1}{M}, \frac{m}{M}]$. The accuracy of $B_m$ is

$ \operatorname{acc}(B_m) = \frac{1}{|B_m|}\sum_{i \in B_m}\mathbf{1}(\hat{y}_i = y_i), $

where $\hat{y}_i$ and $y_i$ are the predicted and true class labels for sample $i$. Basic probability tells us that $\operatorname{acc}(B_m)$ is an unbiased and consistent estimator of $\mathop{\mathbb{P}}(\hat{Y} = Y \mid \hat{P} \in I_m)$. We define the average confidence within bin $B_m$ as

$ \operatorname{conf}(B_m) = \frac{1}{|B_m|}\sum_{i \in B_m}\hat{p}_i, $

where $\hat{p}_i$ is the confidence for sample $i$. $\operatorname{acc}(B_m)$ and $\operatorname{conf}(B_m)$ approximate the left-hand and right-hand sides of Equation 1 respectively for bin $B_m$. Therefore, a perfectly calibrated model will have $\operatorname{acc}(B_m) = \operatorname{conf}(B_m)$ for all $m \in \{1, \ldots, M\}$. Note that reliability diagrams do not display the proportion of samples in a given bin, and thus cannot be used to estimate how many samples are calibrated.
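The binning just described can be sketched in a few lines of Python. This is a minimal illustration, assuming the confidences $\hat p_i$ and the correctness flags $\mathbf{1}(\hat y_i = y_i)$ are given as plain lists; the function name `reliability_bins` is hypothetical, and reporting empty bins as `None` is our own convention. Each returned triple is $(|B_m|, \operatorname{acc}(B_m), \operatorname{conf}(B_m))$; the counts are returned as well because the diagram itself does not show them.

```python
import math

def reliability_bins(confidences, correct, n_bins=15):
    """Return (|B_m|, acc(B_m), conf(B_m)) for each of n_bins equal-width bins.

    Bin m (1-indexed) covers ((m-1)/M, m/M]; empty bins report None for
    accuracy and confidence.
    """
    counts = [0] * n_bins
    hits = [0] * n_bins
    conf_sums = [0.0] * n_bins
    for p, ok in zip(confidences, correct):
        # ceil(p * M) - 1 is the 0-based index of ((m-1)/M, m/M]; p == 0 joins the first bin.
        m = max(math.ceil(p * n_bins) - 1, 0)
        counts[m] += 1
        hits[m] += int(ok)
        conf_sums[m] += p
    return [
        (c, h / c, s / c) if c else (0, None, None)
        for c, h, s in zip(counts, hits, conf_sums)
    ]
```

Plotting `acc` against the bin centers for the non-empty bins gives the bottom row of Figure 1; the gap to `conf` in each bin is the calibration gap.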

Expected Calibration Error (ECE).

While reliability diagrams are useful visual tools, it is more convenient to have a scalar summary statistic of calibration. Since statistics comparing two distributions cannot be comprehensive, previous works have proposed variants, each with a unique emphasis. One notion of miscalibration is the difference in expectation between confidence and accuracy, i.e.

$ \mathop{\mathbb{E}}_{\hat{P}} \left[\left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \mid \hat{P} = p\right) - p \right| \right]\tag{2} $

Expected Calibration Error [18] (ECE) approximates Equation 2 by partitioning predictions into $M$ equally-spaced bins (similar to the reliability diagrams) and taking a weighted average of the bins' accuracy/confidence difference. More precisely,

$ \text{ECE} = \sum_{m=1}^{M}\frac{|B_m|}{n}\bigg| \operatorname{acc}(B_m) - \operatorname{conf}(B_m)\bigg|,\tag{3} $

where $n$ is the number of samples. The difference between $\operatorname{acc}$ and $\operatorname{conf}$ for a given bin represents the calibration gap (red bars in reliability diagrams – e.g. Figure 1). We use ECE as the primary empirical metric to measure calibration. See Section S1 for more analysis of this metric.
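A small worked instance of Equation 3, using hypothetical numbers: $n = 10$ predictions fall into only two non-empty bins, one holding six predictions with average confidence $0.9$ of which four are correct, and one holding four predictions with average confidence $0.6$ of which three are correct. Then

$ \text{ECE} = \frac{6}{10}\left|\frac{4}{6} - 0.9\right| + \frac{4}{10}\left|\frac{3}{4} - 0.6\right| = 0.6 \cdot \frac{7}{30} + 0.4 \cdot 0.15 = 0.14 + 0.06 = 0.20. $

The high-confidence bin contributes most of the error because it has both the larger gap and the larger weight.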

Maximum Calibration Error (MCE).

In high-risk applications where reliable confidence measures are absolutely necessary, we may wish to minimize the worst-case deviation between confidence and accuracy:

$ \max_{p \in [0, 1]} \left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \mid \hat{P} = p\right) - p \right|. $

The Maximum Calibration Error [18] (MCE) estimates this deviation. As with ECE, this approximation involves binning:

$ \text{MCE} = \max_{m \in \{1, \ldots, M\}}\left| \operatorname{acc}(B_m) - \operatorname{conf}(B_m)\right|.\tag{4} $

We can visualize MCE and ECE on reliability diagrams. MCE is the largest calibration gap (red bars) across all bins, whereas ECE is a weighted average of all gaps. For perfectly calibrated classifiers, MCE and ECE both equal 0.
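Both metrics can be computed in a single pass over the binned predictions. The Python sketch below is one possible implementation, assuming equal-width bins as in the reliability-diagram construction; `calibration_errors` is a hypothetical helper name. Empty bins are skipped for both metrics: they carry zero weight in Equation 3, and skipping them in Equation 4 is our own assumption, since an empty bin has no defined accuracy.

```python
import math

def calibration_errors(confidences, correct, n_bins=15):
    """Return (ECE, MCE) from Equations 3 and 4 using M equal-width bins."""
    n = len(confidences)
    counts = [0] * n_bins
    hits = [0] * n_bins
    conf_sums = [0.0] * n_bins
    for p, ok in zip(confidences, correct):
        # 0-based index of the bin ((m-1)/M, m/M] containing p; p == 0 joins the first bin.
        m = max(math.ceil(p * n_bins) - 1, 0)
        counts[m] += 1
        hits[m] += int(ok)
        conf_sums[m] += p
    ece, mce = 0.0, 0.0
    for count, hit, conf_sum in zip(counts, hits, conf_sums):
        if count == 0:
            continue  # empty bins carry no weight in ECE and are skipped for MCE
        gap = abs(hit / count - conf_sum / count)  # |acc(B_m) - conf(B_m)|
        ece += count / n * gap
        mce = max(mce, gap)
    return ece, mce
```

For instance, six predictions at confidence 0.9 with four correct, plus four predictions at 0.6 with three correct, give an ECE of 0.2 and an MCE of $7/30 \approx 0.233$.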

Negative log likelihood

Negative log likelihood (NLL) is a standard measure of a probabilistic model's quality [19]. It is also referred to as the cross entropy loss in the context of deep learning [20]. Given a probabilistic model $\hat{\pi}(Y|X)$ and $n$ samples, NLL is defined as:

$ \begin{align} \mathcal{L} = -\sum_{i=1}^{n}\log(\hat{\pi}(y_i| \mathbf{x}_i)) \end{align} $

It is a standard result [19] that, in expectation, NLL is minimized if and only if $\hat{\pi}(Y|X)$ recovers the ground truth conditional distribution $\pi(Y|X)$.
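In deep learning, $\hat{\pi}(Y|X)$ is usually obtained by applying a softmax to the network's logits (formalized in Section 4.2). Under that assumption, the sum above can be computed as in the minimal Python sketch below; the helper names are hypothetical, and subtracting the largest logit (the log-sum-exp trick) is only there to avoid overflow.

```python
import math

def log_softmax(logits):
    """Numerically stable log of the softmax probabilities for one logit vector."""
    top = max(logits)  # subtracting the max avoids overflow in exp
    log_norm = top + math.log(sum(math.exp(z - top) for z in logits))
    return [z - log_norm for z in logits]

def negative_log_likelihood(logit_rows, labels):
    """L = -sum_i log pi_hat(y_i | x_i), with pi_hat taken to be the softmax of the logits."""
    return -sum(log_softmax(row)[y] for row, y in zip(logit_rows, labels))
```

Making a correct prediction more confident (e.g. logits `[3, 0]` instead of `[1, 0]` for label 0) lowers this loss, while making a wrong prediction more confident raises it.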

3. Observing Miscalibration

Section Summary: Recent advances in neural networks, such as building much larger models with more layers and filters, have improved accuracy but often make the models overly confident in their predictions, leading to poor calibration where stated probabilities don't match actual outcomes. Techniques like batch normalization speed up training and enable deeper networks but tend to worsen this overconfidence, while reducing traditional regularization like weight decay—once common to prevent overfitting—further harms calibration without always affecting error rates. This issue stems from a key mismatch: during training, models can fine-tune to minimize prediction uncertainty more than needed for correct classifications, prioritizing confidence over reliable probability estimates.

The architecture and training procedures of neural networks have rapidly evolved in recent years. In this section we identify some recent changes that are responsible for the miscalibration phenomenon observed in Figure 1. Though we cannot claim causality, we find that increased model capacity and lack of regularization are closely related to model miscalibration.

Model capacity.

The model capacity of neural networks has increased at a dramatic pace over the past few years. It is now common to see networks with hundreds, if not thousands, of layers [3, 4] and hundreds of convolutional filters per layer [21]. Recent work shows that very deep or wide models are able to generalize better than smaller ones, while exhibiting the capacity to easily fit the training set [22].

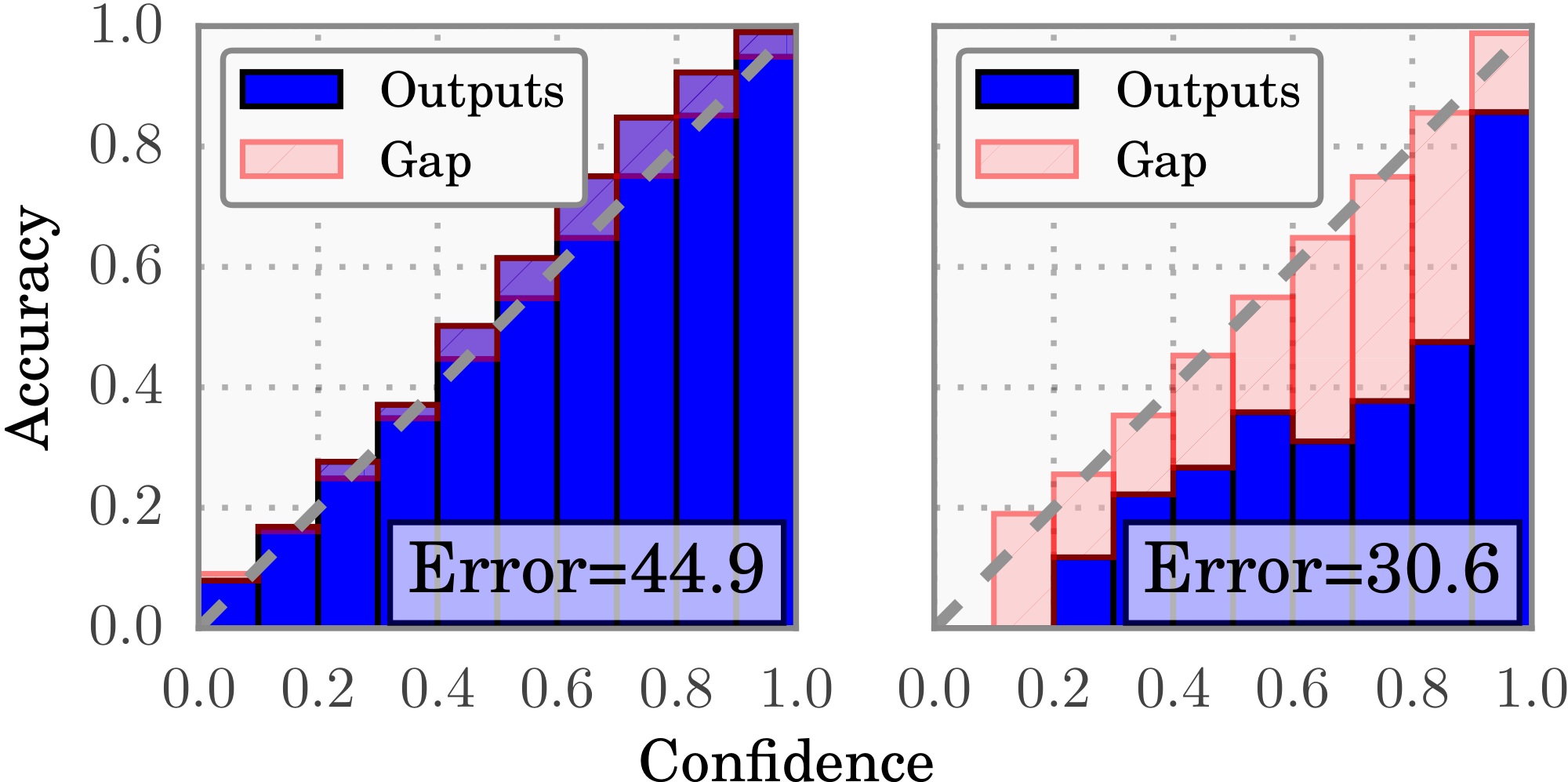

Although increasing depth and width may reduce classification error, we observe that these increases negatively affect model calibration. Figure 2 displays error and ECE as a function of depth and width on a ResNet trained on CIFAR-100. The far left figure varies depth for a network with 64 convolutional filters per layer, while the middle left figure fixes the depth at 14 layers and varies the number of convolutional filters per layer. Though even the smallest models in the graph exhibit some degree of miscalibration, the ECE metric grows substantially with model capacity. During training, after the model is able to correctly classify (almost) all training samples, NLL can be further minimized by increasing the confidence of predictions. Increased model capacity will lower training NLL, and thus the model will be more (over)confident on average.
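The last step of this argument can be made concrete with a two-class example (a simplification chosen here for readability). Suppose a training sample with label $y$ is already classified correctly, with logit margin $\delta = z^{(y)} - z^{(y')} > 0$ over the other class $y'$. Multiplying both logits by a factor $c > 0$ leaves the prediction unchanged, yet the sample's NLL term is

$ -\log \frac{\exp(c\, z^{(y)})}{\exp(c\, z^{(y)}) + \exp(c\, z^{(y')})} = \log\left(1 + e^{-c\delta}\right), \qquad \frac{d}{dc} \log\left(1 + e^{-c\delta}\right) = -\frac{\delta\, e^{-c\delta}}{1 + e^{-c\delta}} < 0. $

The loss therefore keeps falling as $c$ grows and reaches 0 only in the limit $c \to \infty$. Once (almost) every training sample is correct, continuing to minimize NLL pushes the logits, and with them the confidences, toward larger values.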

Batch Normalization

Batch Normalization [23] improves the optimization of neural networks by minimizing distribution shifts in activations within the neural network's hidden layers. Recent research suggests that these normalization techniques have enabled the development of very deep architectures, such as ResNets [3] and DenseNets [5]. It has been shown that Batch Normalization improves training time, reduces the need for additional regularization, and can in some cases improve the accuracy of networks.

While it is difficult to pinpoint exactly how Batch Normalization affects the final predictions of a model, we do observe that models trained with Batch Normalization tend to be more miscalibrated. In the middle right plot of Figure 2, we see that a 6-layer ConvNet obtains worse calibration when Batch Normalization is applied, even though classification accuracy improves slightly. We find that this result holds regardless of the hyperparameters used on the Batch Normalization model (i.e. low or high learning rate, etc.).

Weight decay,

Weight decay, which used to be the predominant regularization mechanism for neural networks, is used less and less when training modern neural networks. Learning theory suggests that regularization is necessary to prevent overfitting, especially as model capacity increases [24]. However, due to the apparent regularization effects of Batch Normalization, recent research seems to suggest that models with less L2 regularization tend to generalize better [23]. As a result, it is now common to train models with little weight decay, if any at all. The top performing ImageNet models of 2015 all use an order of magnitude less weight decay than models of previous years [3, 1].

We find that training with less weight decay has a negative impact on calibration. The far right plot in Figure 2 displays training error and ECE for a 110-layer ResNet with varying amounts of weight decay. The only other forms of regularization are data augmentation and Batch Normalization. We observe that calibration and accuracy are not optimized by the same parameter setting. While the model exhibits both over-regularization and under-regularization with respect to classification error, it does not appear that calibration is negatively impacted by having too much weight decay. Model calibration continues to improve when more regularization is added, well after the point of achieving optimal accuracy. The slight uptick at the end of the graph may be an artifact of using a weight decay factor that impedes optimization.

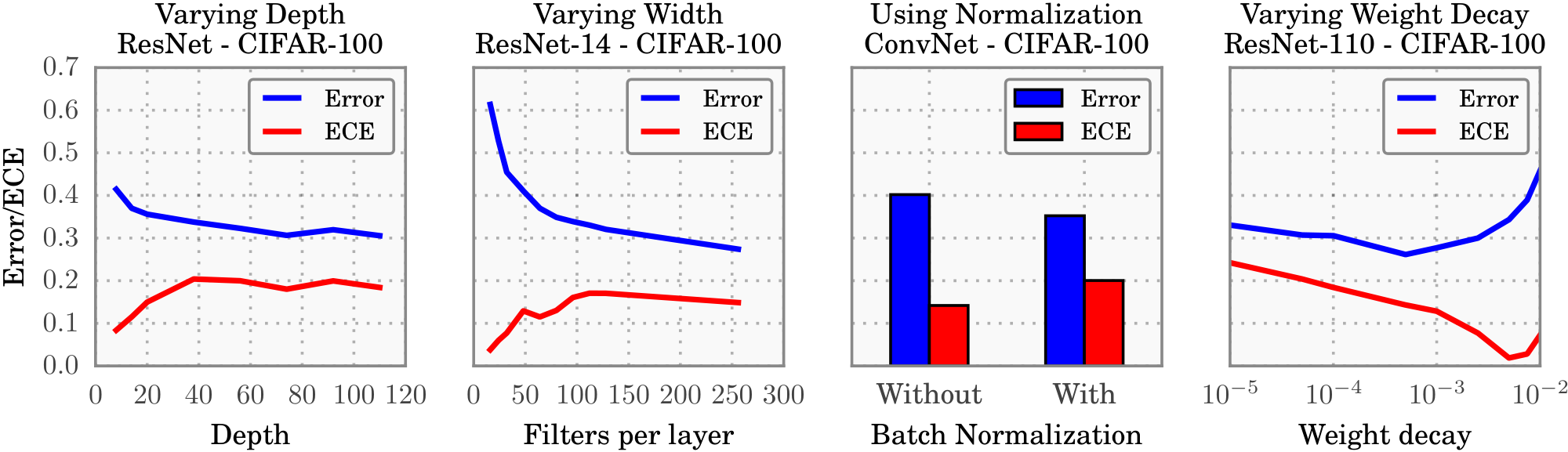

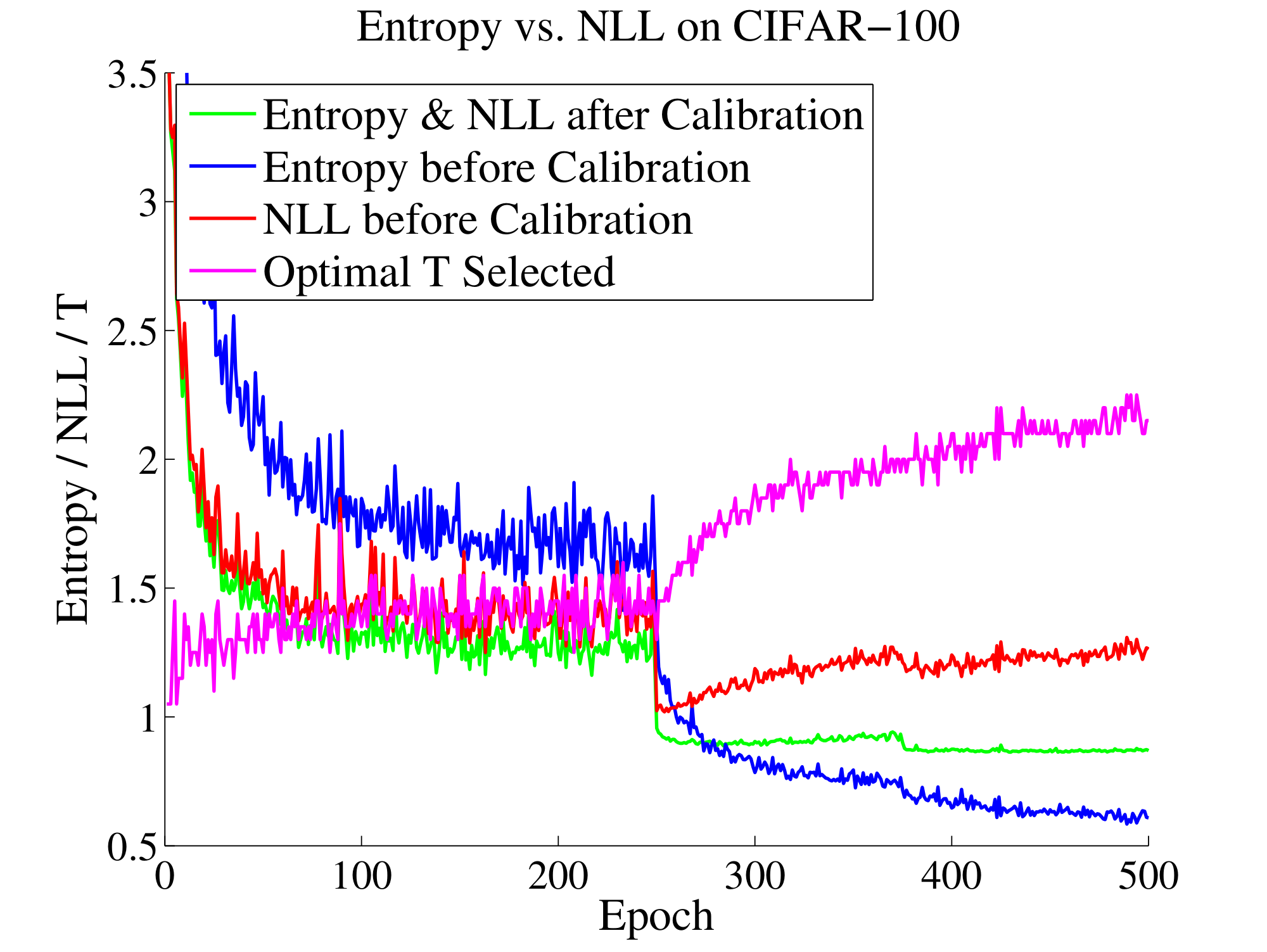

NLL

NLL can be used to indirectly measure model calibration. In practice, we observe a disconnect between NLL and accuracy, which may explain the miscalibration in Figure 2. This disconnect occurs because neural networks can overfit to NLL without overfitting to the 0/1 loss. We observe this trend in the training curves of some miscalibrated models. Figure 3 shows test error and NLL (rescaled to match error) on CIFAR-100 as training progresses. Both error and NLL immediately drop at epoch 250, when the learning rate is dropped; however, NLL overfits during the remainder of training. Surprisingly, overfitting to NLL is beneficial to classification accuracy. On CIFAR-100, test error drops from 29% to 27% in the region where NLL overfits. This phenomenon provides a concrete explanation of miscalibration: the network learns better classification accuracy at the expense of well-modeled probabilities.

We can connect this finding to recent work examining the generalization of large neural networks. [22] observe that deep neural networks seemingly violate the common understanding of learning theory that large models with little regularization will not generalize well. The observed disconnect between NLL and 0/1 loss suggests that these high capacity models are not necessarily immune from overfitting, but rather, overfitting manifests in probabilistic error rather than classification error.

4. Calibration Methods

Section Summary: This section explains methods for calibrating the probability outputs of classification models. These are post-processing techniques, fit on a separate validation set, that make predictions more reliable without retraining the model. For binary models, it reviews simple approaches like histogram binning, which groups predictions into fixed intervals and sets calibrated values based on the actual outcomes in each group, and isotonic regression, a more flexible version that optimizes both the groups and their values to better match reality. Other methods include Bayesian binning, which averages results across many possible groupings for smoother calibration, and Platt scaling, a straightforward parametric technique that fits a logistic regression to adjust the model's raw scores into trustworthy probabilities. It then extends these methods to multiclass models, including matrix, vector, and temperature scaling.

In this section, we first review existing calibration methods, and introduce new variants of our own. All methods are post-processing steps that produce (calibrated) probabilities. Each method requires a hold-out validation set, which in practice can be the same set used for hyperparameter tuning. We assume that the training, validation, and test sets are drawn from the same distribution.

4.1. Calibrating Binary Models

We first introduce calibration in the binary setting, i.e. $\mathcal{Y}=\{0, 1\}$. For simplicity, throughout this subsection, we assume the model outputs only the confidence for the positive class.[^1] Given a sample $\mathbf{x}_i$, we have access to $\hat p_i$ (the network's predicted probability of $y_i = 1$) as well as $z_i \in \mathbb{R}$, which is the network's non-probabilistic output, or logit. The predicted probability $\hat p_i$ is derived from $z_i$ using a sigmoid function $\sigma$; i.e. $\hat p_i = \sigma(z_i)$. Our goal is to produce a calibrated probability $\hat q_i$ based on $y_i$, $\hat p_i$, and $z_i$.

[^1]: This is in contrast with the setting in Section 2, in which the model produces both a class prediction and confidence.

Histogram binning

Histogram binning [25] is a simple non-parametric calibration method. In a nutshell, all uncalibrated predictions $\hat{p}_i$ are divided into mutually exclusive bins $B_1, \dots, B_M$. Each bin is assigned a calibrated score $\theta_m$; i.e. if $\hat p_i$ is assigned to bin $B_m$, then $\hat q_i = \theta_m$. At test time, if prediction $\hat{p}_{te}$ falls into bin $B_m$, then the calibrated prediction $\hat q_{te}$ is $\theta_m$. More precisely, for a suitably chosen $M$ (usually small), we first define bin boundaries $0 = a_1 \leq a_2 \leq \ldots \leq a_{M+1} = 1$, where the bin $B_m$ is defined by the interval $(a_m, a_{m+1}]$. Typically the bin boundaries are either chosen to be equal length intervals or to equalize the number of samples in each bin. The predictions $\theta_m$ are chosen to minimize the bin-wise squared loss:

$ \min_{\theta_1, \ldots, \theta_M} \; \sum_{m=1}^M \sum_{i = 1}^n \mathbf{1} (a_m \leq \hat p_i < a_{m+1}) \left(\theta_m - y_i \right)^2,\tag{5} $

where $\mathbf{1}$ is the indicator function. Given fixed bin boundaries, the solution to Equation 5 sets each $\theta_m$ to the fraction of positive-class samples in bin $B_m$ (i.e. the average label in that bin).
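A minimal Python sketch of histogram binning for the binary case. It assumes equal-width bins, 0/1 labels, and the $(\frac{m-1}{M}, \frac{m}{M}]$ bin-assignment rule from Section 2; the function names are hypothetical, and giving an empty bin its midpoint as $\theta_m$ is our own fallback, since the text does not say how empty bins are handled.

```python
import math

def fit_histogram_binning(probs, labels, n_bins=10):
    """Fit theta_m = mean label of the validation samples in equal-width bin m (Eq. 5)."""
    counts = [0] * n_bins
    positives = [0] * n_bins
    for p, y in zip(probs, labels):
        m = max(math.ceil(p * n_bins) - 1, 0)  # 0-based bin index of p
        counts[m] += 1
        positives[m] += y
    # Assumption: an empty bin falls back to its midpoint (not specified in the text).
    return [positives[m] / counts[m] if counts[m] else (m + 0.5) / n_bins
            for m in range(n_bins)]

def apply_histogram_binning(thetas, p):
    """Map an uncalibrated probability p to the calibrated score of its bin."""
    n_bins = len(thetas)
    return thetas[max(math.ceil(p * n_bins) - 1, 0)]
```

With two bins, validation probabilities `[0.05, 0.08]` labeled `[0, 1]` and `[0.92, 0.95, 0.97, 0.99]` labeled `[1, 1, 0, 1]` yield $\theta = (0.5, 0.75)$, so any test probability above 0.5 is mapped to 0.75.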

Isotonic regression

Isotonic regression [26], arguably the most common non-parametric calibration method, learns a non-decreasing, piecewise constant function $f$ to transform uncalibrated outputs; i.e. $\hat q_i = f(\hat p_i)$. Specifically, isotonic regression produces $f$ to minimize the square loss $\sum_{i=1}^n (f(\hat p_i) - y_i)^2$. Because $f$ is constrained to be piecewise constant and non-decreasing, we can write the optimization problem as:

$ \begin{aligned} \min_{\substack{M \\ \theta_1, \ldots, \theta_M \\ a_1, \ldots, a_{M+1}}} \quad & \sum_{m=1}^M \sum_{i = 1}^n \mathbf{1} (a_m \leq \hat p_i < a_{m+1}) \left(\theta_m - y_i \right)^2 \\ \text{subject to} \quad & 0 = a_1 \leq a_2 \leq \ldots \leq a_{M+1} = 1, \\ & \theta_1 \leq \theta_2 \leq \ldots \leq \theta_M. \end{aligned}\tag{6} $

where $M$ is the number of intervals; $a_1, \ldots, a_{M+1}$ are the interval boundaries; and $\theta_1, \ldots, \theta_M$ are the function values. Under this parameterization, isotonic regression is a strict generalization of histogram binning in which the bin boundaries and bin predictions are jointly optimized.
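Equation 6 is commonly solved with the pool-adjacent-violators (PAV) algorithm rather than by searching over $M$ and the boundaries directly: sort the validation samples by $\hat p_i$, start with one block per sample, and merge neighboring blocks whenever their means violate $\theta_{m-1} \leq \theta_m$. The Python sketch below is one such implementation (not necessarily the solver used for the experiments); the function names are hypothetical, and mapping a test probability that falls between two fitted blocks to the upper block's value is only one of several reasonable conventions (some libraries interpolate instead).

```python
def fit_isotonic(probs, labels):
    """Pool Adjacent Violators (PAV) solution of Equation 6.

    Returns blocks (upper_edge, theta) sorted by probability, where theta is the
    mean label of the block and the thetas are non-decreasing.
    """
    blocks = []  # each entry: [sum_of_labels, count, largest_prob_in_block]
    for p, y in sorted(zip(probs, labels)):
        if blocks and blocks[-1][2] == p:
            # Tied probabilities must receive the same value, so pool them directly.
            blocks[-1][0] += y
            blocks[-1][1] += 1
        else:
            blocks.append([float(y), 1, p])
        # Merge backwards while the constraint theta_{m-1} <= theta_m is violated.
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]:
            total, count, upper = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
            blocks[-1][2] = upper
    return [(upper, total / count) for total, count, upper in blocks]

def apply_isotonic(blocks, p):
    """Piecewise-constant f(p): value of the first block whose upper edge is >= p."""
    for upper, theta in blocks:
        if p <= upper:
            return theta
    return blocks[-1][1]  # beyond the largest validation probability
```

On probabilities `[0.1, 0.2, 0.3, 0.4]` with labels `[0, 1, 0, 1]`, the violating pair at 0.2 and 0.3 is pooled into one block with value 0.5, giving the step function $0 \to 0.5 \to 1$.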

Bayesian Binning into Quantiles (BBQ)

Bayesian Binning into Quantiles (BBQ) [18] is an extension of histogram binning using Bayesian model averaging. Essentially, BBQ marginalizes out all possible binning schemes to produce $\hat q_i$. More formally, a binning scheme $s$ is a pair $(M, \mathcal{I})$ where $M$ is the number of bins, and $\mathcal{I}$ is a corresponding partitioning of $[0, 1]$ into disjoint intervals ($0 = a_1 \leq a_2 \leq \ldots \leq a_{M+1} = 1$). The parameters of a binning scheme are $\theta_1, \ldots, \theta_M$. Under this framework, histogram binning and isotonic regression both produce a single binning scheme, whereas BBQ considers a space $\mathcal{S}$ of all possible binning schemes for the validation dataset $D$. BBQ performs Bayesian averaging of the probabilities produced by each scheme:[^2]

[^2]: Because the validation dataset is finite, $\mathcal{S}$ is as well.

$ \begin{align*} \mathop{\mathbb{P}}(\hat q_{te} \mid \hat p_{te}, D) &= \sum_{s \in \mathcal{S}} \mathop{\mathbb{P}}(\hat q_{te}, S = s \mid \hat p_{te}, D) \\ &= \sum_{s \in \mathcal{S}} \mathop{\mathbb{P}}(\hat q_{te} \mid \hat p_{te}, S = s, D)\, \mathop{\mathbb{P}}(S = s \mid D). \end{align*} $

where $\mathop{\mathbb{P}}(\hat q_{te} \mid \hat p_{te}, S = s, D)$ is the calibrated probability using binning scheme $s$. Using a uniform prior, the weight $\mathop{\mathbb{P}}(S = s \mid D)$ can be derived using Bayes' rule:

$ \mathop{\mathbb{P}}(S = s \mid D) = \frac{\mathop{\mathbb{P}}(D \mid S = s)}{\sum_{s' \in \mathcal{S}} \mathop{\mathbb{P}}(D \mid S = s')}. $

The parameters $\theta_1, \ldots, \theta_M$ can be viewed as parameters of $M$ independent binomial distributions. Hence, by placing a Beta prior on $\theta_1, \ldots, \theta_M$, we can obtain a closed form expression for the marginal likelihood $\mathop{\mathbb{P}}(D \mid S = s)$. This allows us to compute $\mathop{\mathbb{P}}(\hat q_{te} \mid \hat p_{te}, D)$ for any test input.
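Enumerating every binning scheme is impractical, so the Python sketch below makes two simplifying assumptions of our own: $\mathcal{S}$ is restricted to equal-frequency binnings with $M = 1, \ldots, 10$ bins, and every $\theta_m$ receives the same $\text{Beta}(1, 1)$ prior. Under a $\text{Beta}(\alpha, \beta)$ prior, a bin holding $k_m$ positives among $n_m$ samples has marginal likelihood $B(\alpha + k_m, \beta + n_m - k_m)/B(\alpha, \beta)$ and posterior mean $(\alpha + k_m)/(\alpha + \beta + n_m)$, which the sketch uses as that bin's calibrated probability. It illustrates the averaging in the equations above; it is not the exact BBQ procedure of [18], and all names are hypothetical.

```python
import math

def log_beta(a, b):
    """log of the Beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)

def fit_bbq(probs, labels, max_bins=10, alpha=1.0, beta=1.0):
    """Score equal-frequency binning schemes with M = 1..max_bins bins.

    Returns (log P(D | S=s), [(upper_edge, posterior_mean_theta), ...]) per scheme.
    """
    pairs = sorted(zip(probs, labels))
    n = len(pairs)
    schemes = []
    for n_bins in range(1, min(max_bins, n) + 1):
        edges = [k * n // n_bins for k in range(n_bins + 1)]  # equal-frequency cut points
        log_ml, bins = 0.0, []
        for lo, hi in zip(edges[:-1], edges[1:]):
            chunk = pairs[lo:hi]
            n_m = len(chunk)
            k_m = sum(y for _, y in chunk)
            # Beta-Bernoulli marginal likelihood of this bin's labels.
            log_ml += log_beta(alpha + k_m, beta + n_m - k_m) - log_beta(alpha, beta)
            # Posterior mean of theta_m serves as the bin's calibrated probability.
            bins.append((chunk[-1][0], (alpha + k_m) / (alpha + beta + n_m)))
        schemes.append((log_ml, bins))
    return schemes

def predict_bbq(schemes, p):
    """Average the schemes' calibrated probabilities, weighted by P(S=s | D)."""
    top = max(log_ml for log_ml, _ in schemes)
    # Uniform prior over schemes: posterior weights are normalized likelihoods.
    weights = [math.exp(log_ml - top) for log_ml, _ in schemes]
    total = sum(weights)
    q = 0.0
    for w, (_, bins) in zip(weights, schemes):
        value = next((theta for upper, theta in bins if p <= upper), bins[-1][1])
        q += w / total * value
    return q
```

Working in log space and subtracting the largest log-likelihood before exponentiating keeps the posterior weights from underflowing when the validation set is large.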

Platt scaling

Platt scaling [17] is a parametric approach to calibration, unlike the other approaches. The non-probabilistic predictions of a classifier are used as features for a logistic regression model, which is trained on the validation set to return probabilities. More specifically, in the context of neural networks [14], Platt scaling learns scalar parameters $a, b \in \mathbb{R}$ and outputs $\hat q_i = \sigma(a z_i +b)$ as the calibrated probability. Parameters $a$ and $b$ can be optimized using the NLL loss over the validation set. It is important to note that the neural network's parameters are fixed during this stage.
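A minimal pure-Python sketch of Platt scaling, assuming the validation logits $z_i$ and 0/1 labels $y_i$ are given as lists. Plain gradient descent with a fixed step size, starting from the uncalibrated $a = 1, b = 0$, is our own choice of optimizer (any solver for logistic regression would do), and all names are hypothetical.

```python
import math

def sigmoid(x):
    """Numerically stable logistic function sigma(x)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def softplus(x):
    """log(1 + exp(x)) computed without overflow."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def platt_nll(a, b, logits, labels):
    """Mean validation NLL of q_i = sigma(a * z_i + b) for 0/1 labels."""
    total = 0.0
    for z, y in zip(logits, labels):
        s = a * z + b
        # -log sigma(s) = softplus(-s) and -log(1 - sigma(s)) = softplus(s)
        total += softplus(-s) if y == 1 else softplus(s)
    return total / len(logits)

def fit_platt(logits, labels, lr=0.1, steps=2000):
    """Fit scalars a, b by gradient descent on platt_nll; the network stays fixed."""
    a, b = 1.0, 0.0  # start from the uncalibrated model q_i = sigma(z_i)
    n = len(logits)
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for z, y in zip(logits, labels):
            r = sigmoid(a * z + b) - y  # derivative of the per-sample NLL w.r.t. a*z + b
            grad_a += r * z / n
            grad_b += r / n
        a -= lr * grad_a
        b -= lr * grad_b
    return a, b
```

A fitted slope $a < 1$ softens an overconfident model, much as a temperature $T > 1$ does in the multiclass setting.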

:::

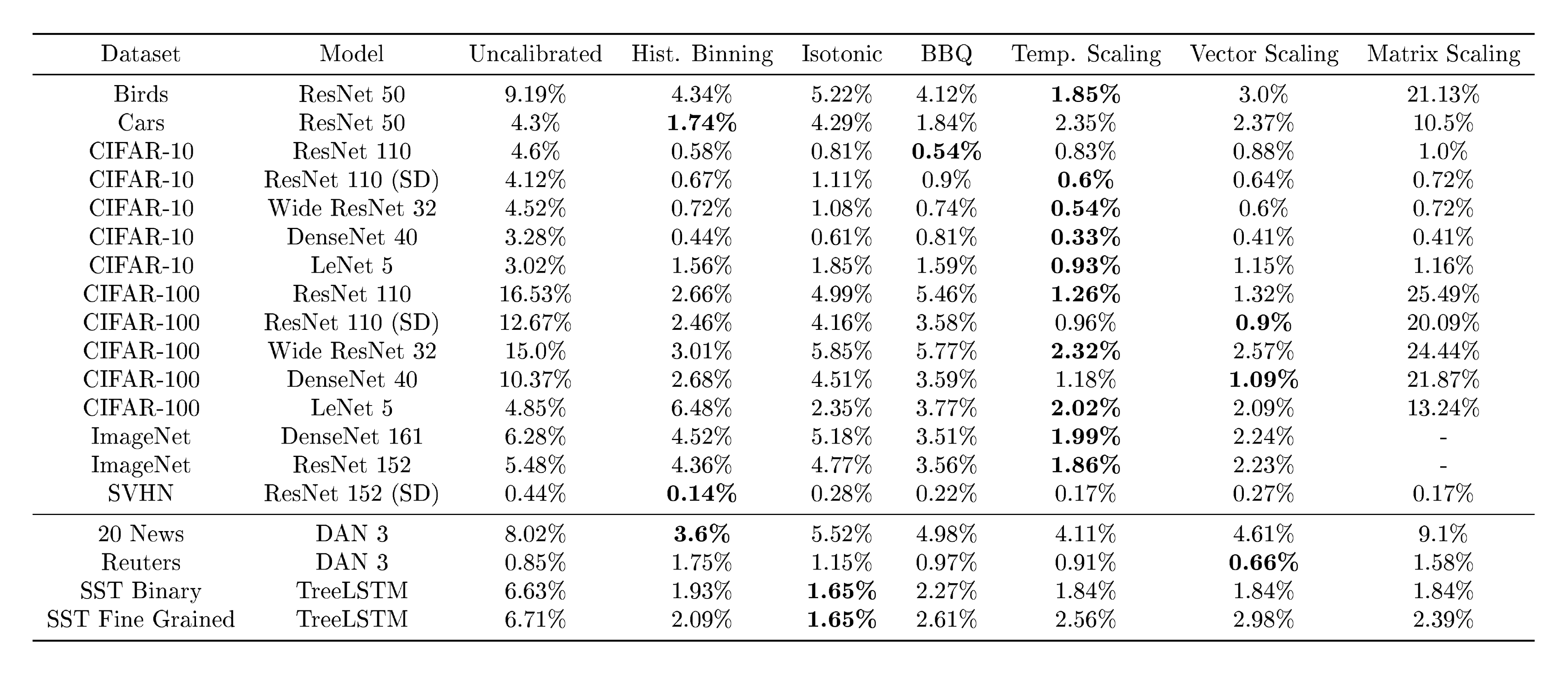

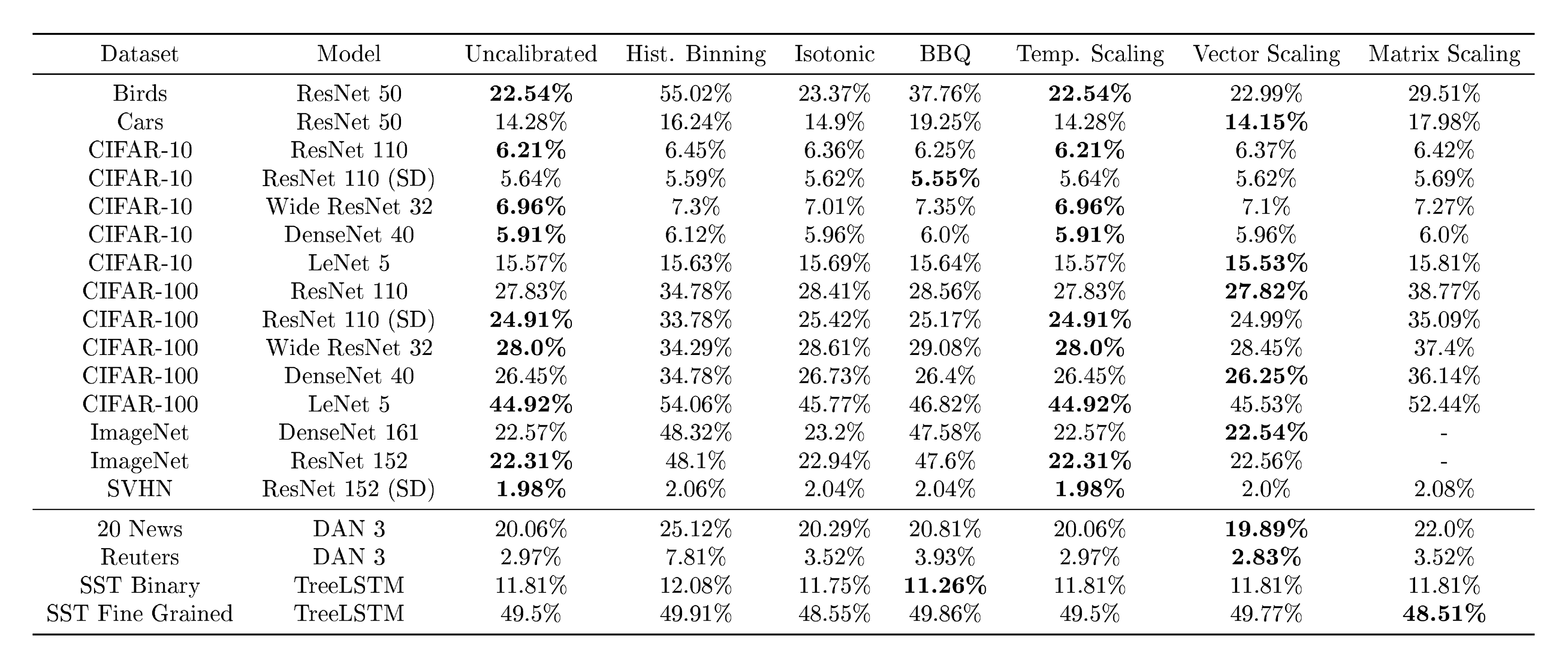

Table 1: ECE (%) (with $M=15$ bins) on standard vision and NLP datasets before calibration and with various calibration methods. The number following a model's name denotes the network depth.

:::

4.2. Extension to Multiclass Models

For classification problems involving $K>2$ classes, we return to the original problem formulation. The network outputs a class prediction $\hat y_i$ and confidence score $\hat p_i$ for each input $\mathbf{x}_i$. In this case, the network logits $\mathbf{z}_i$ are vectors, where $\hat y_i = \operatorname{argmax}_{k} z_i^{(k)}$, and $\hat p_i$ is typically derived using the softmax function $\sigma_\text{SM}$:

$ \sigma_\text{SM}(\mathbf{z}_i)^{(k)} = \frac{\exp(z_i^{(k)})}{\sum_{j=1}^K \exp(z_i^{(j)})}, \hspace{10pt} \hat p_i = \max_k \; \sigma_\text{SM}(\mathbf{z}_i)^{(k)}. $

The goal is to produce a calibrated confidence $\hat q_i$ and (possibly new) class prediction $\hat y_i'$ based on $y_i$, $\hat y_i$, $\hat p_i$, and $\mathbf{z}_i$.
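These definitions translate directly into code. The minimal Python sketch below assumes a single logit vector given as a list (the names are hypothetical); subtracting the largest logit before exponentiating is a standard numerical-stability step that leaves $\sigma_\text{SM}$ unchanged.

```python
import math

def softmax(logits):
    """sigma_SM: numerically stable softmax of one logit vector."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict(logits):
    """Return (y_hat, p_hat): the argmax class and its softmax probability."""
    probs = softmax(logits)
    y_hat = max(range(len(logits)), key=lambda k: logits[k])
    return y_hat, probs[y_hat]
```

For logits `[2.0, 1.0, 0.1]`, `predict` returns class 0 with confidence $e^2 / (e^2 + e^1 + e^{0.1}) \approx 0.66$.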

Extension of binning methods.

One common way of extending binary calibration methods to the multiclass setting is by treating the problem as $K$ one-versus-all problems [26]. For $k = 1, \ldots, K$, we form a binary calibration problem where the label is $\mathbf{1}(y_i = k)$ and the predicted probability is $\sigma_\text{SM}(\mathbf{z}_i)^{(k)}$. This gives us $K$ calibration models, each for a particular class. At test time, we obtain an unnormalized probability vector $[\hat q_i^{(1)}, \ldots, \hat q_i^{(K)}]$, where $\hat q_i^{(k)}$ is the calibrated probability for class $k$. The new class prediction $\hat y_i'$ is the argmax of the vector, and the new confidence $\hat q_i'$ is the max of the vector normalized by $\sum_{k=1}^K \hat q_i^{(k)}$. This extension can be applied to histogram binning, isotonic regression, and BBQ.
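The test-time step of this one-versus-all extension can be sketched as follows in Python. The per-class calibrators are passed in as arbitrary hypothetical callables (for example, fitted histogram-binning or isotonic models), and the uniform fallback for an all-zero vector is our own assumption. Because the argmax is taken over the calibrated scores, the predicted class can differ from $\hat y_i$.

```python
def one_vs_all_calibrate(class_probs, calibrators):
    """Combine K per-class binary calibrators into a new prediction and confidence.

    class_probs: the softmax outputs sigma_SM(z_i)^(k) for one input, k = 1..K.
    calibrators: K callables, one per class, each fitted on the binary problem
                 "is the label k?" (hypothetical; e.g. histogram-binning models).
    """
    # Unnormalized vector [q^(1), ..., q^(K)] of calibrated per-class probabilities.
    q = [calibrate(p) for calibrate, p in zip(calibrators, class_probs)]
    y_new = max(range(len(q)), key=lambda k: q[k])  # may differ from the original argmax
    total = sum(q)
    # Assumption: fall back to a uniform confidence if every calibrated score is zero.
    confidence = q[y_new] / total if total > 0 else 1.0 / len(q)
    return y_new, confidence
```

For example, if the softmax output is $[0.5, 0.4, 0.1]$ and the first class's calibrator halves its score while the others leave theirs unchanged, the calibrated vector $[0.25, 0.4, 0.1]$ switches the prediction to the second class, with confidence $0.4 / 0.75 \approx 0.53$.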

Matrix and vector scaling

are two multiclass extensions of Platt scaling. Let $\mathbf{z}_i$ be the logits vector produced before the softmax layer for input $\mathbf{x}_i$. Matrix scaling applies a linear transformation $\mathbf{W} \mathbf{z}_i + \mathbf{b}$ to the logits:

$ \begin{aligned} \hat q_i &= \max_k \: \sigma_\text{SM} (\mathbf{W} \mathbf{z}_i + \mathbf{b})^{(k)}, \\ \hat y_i' &= \operatorname{argmax}_k \: (\mathbf{W} \mathbf{z}_i + \mathbf{b})^{(k)}. \end{aligned}\tag{7} $

The parameters $\mathbf{W}$ and $\mathbf{b}$ are optimized with respect to NLL on the validation set. As the number of parameters for matrix scaling grows quadratically with the number of classes $K$, we define vector scaling as a variant where $\mathbf{W}$ is restricted to be a diagonal matrix.
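A minimal sketch of vector scaling, assuming NumPy and SciPy. $\mathbf{W}$ is stored as a length-$K$ vector $\mathbf{w}$ (its diagonal), so $\mathbf{W}\mathbf{z}_i + \mathbf{b}$ becomes an elementwise product plus a bias. Both are fit by minimizing the mean validation NLL with an analytic gradient. Matrix scaling would replace $\mathbf{w}$ by a full $K \times K$ matrix. The function names and the synthetic data are assumptions.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.special import logsumexp, softmax


def nll(scores, labels):
    """Mean negative log-likelihood of integer labels under softmax(scores)."""
    rows = np.arange(len(labels))
    return np.mean(logsumexp(scores, axis=1) - scores[rows, labels])


def fit_vector_scaling(logits, labels):
    """Fit diagonal w and bias b so that softmax(w * z + b) minimizes validation NLL."""
    n, k = logits.shape
    onehot = np.eye(k)[labels]

    def loss_and_grad(params):
        w, b = params[:k], params[k:]
        scores = logits * w + b                      # diagonal W applied elementwise
        grad_scores = (softmax(scores, axis=1) - onehot) / n
        grad_w = (grad_scores * logits).sum(axis=0)  # chain rule through w * z
        grad_b = grad_scores.sum(axis=0)
        return nll(scores, labels), np.concatenate([grad_w, grad_b])

    x0 = np.concatenate([np.ones(k), np.zeros(k)])   # start at the identity map
    result = minimize(loss_and_grad, x0, jac=True, method="L-BFGS-B")
    return result.x[:k], result.x[k:]


# Synthetic check (assumption): labels come from softmax(t), but the model reports 3 * t.
rng = np.random.default_rng(0)
t = rng.normal(size=(20_000, 5))
labels = (softmax(t, axis=1).cumsum(axis=1) > rng.random((20_000, 1))).argmax(axis=1)
logits = 3.0 * t
w, b = fit_vector_scaling(logits, labels)
nll_before, nll_after = nll(logits, labels), nll(logits * w + b, labels)
```

On this synthetic example the miscalibration is a single global scale, so every entry of $\mathbf{w}$ should come out near $1/3$. That is the same answer temperature scaling would give with $T = 3$.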

Temperature scaling,

the simplest extension of Platt scaling, uses a single scalar parameter $T > 0$ for all classes. Given the logit vector $\mathbf{z}_i$, the new confidence prediction is

$ \hat q_i = \max_k \: \sigma_\text{SM} (\mathbf{z}_i / T)^{(k)}.\tag{8} $

$T$ is called the temperature, and it "softens" the softmax (i.e. raises the output entropy) with $T > 1$. As $T \rightarrow \infty$, the probability $\hat q_i$ approaches $1/K$, which represents maximum uncertainty. With $T=1$, we recover the original probability $\hat p_i$. As $T \rightarrow 0$, the probability collapses to a point mass (i.e. $\hat q_i = 1$). $T$ is optimized with respect to NLL on the validation set. Dividing every logit by the same $T > 0$ preserves their ordering, so $T$ does not change which class attains the maximum of the softmax, and the class prediction $\hat y_i'$ remains unchanged. In other words, temperature scaling does not affect the model's accuracy.
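The limiting behaviour described above can be checked numerically. The sketch below assumes NumPy and SciPy and applies Eq. (8) to two hand-picked logit vectors (an assumption). It confirms four things: predictions do not depend on $T$; $T = 1$ recovers $\hat p_i$; $\hat q_i$ tends to $1/K$ for large $T$ and to $1$ for small $T$; and the softmax entropy grows with $T$.

```python
import numpy as np
from scipy.special import softmax


def temperature_scale(logits, T):
    """Eq. (8): predictions and confidences from softmax(z / T)."""
    if T <= 0:
        raise ValueError("temperature must be positive")
    probs = softmax(logits / T, axis=1)
    return probs.argmax(axis=1), probs.max(axis=1)


def mean_entropy(logits, T):
    """Average Shannon entropy (nats) of softmax(z / T) over the rows."""
    probs = softmax(logits / T, axis=1)
    return float(-(probs * np.log(probs)).sum(axis=1).mean())


logits = np.array([[3.0, 1.0, 0.2],
                   [0.5, 2.5, -1.0]])  # K = 3
results = {T: temperature_scale(logits, T) for T in (0.01, 0.5, 1.0, 2.0, 1e6)}
entropies = [mean_entropy(logits, T) for T in (0.5, 1.0, 2.0, 4.0)]
```

The entropy increase is not specific to these logits. For any row whose logits are not all equal, the entropy of $\sigma_\text{SM}(\mathbf{z}_i / T)$ is strictly increasing in $T$.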

Temperature scaling is commonly used in settings such as knowledge distillation [27] and statistical mechanics [28]. To the best of our knowledge, it has not previously been used in the context of calibrating probabilistic models.[^3] The model is equivalent to maximizing the entropy of the output probability distribution subject to certain constraints on the logits (see Section S2).

[^3]: To highlight the connection with prior works we define temperature scaling in terms of $\frac{1}{T}$ instead of a multiplicative scalar.

4.3. Other Related Works

Calibration and confidence scores have been studied in various contexts in recent years. [29] study the problem of calibration in the online setting, where the inputs can come from a potentially adversarial source. [30] investigate how to produce calibrated probabilities when the output space is a structured object. [31] use ensembles of networks to obtain uncertainty estimates. [32] penalize overconfident predictions as a form of regularization. [33] use confidence scores to determine if samples are out-of-distribution.

Bayesian neural networks [34, 35] return a probability distribution over outputs as an alternative way to represent model uncertainty. [36] draw a connection between Dropout [37] and model uncertainty, claiming that sampling models with dropped nodes is a way to estimate the probability distribution over all possible models for a given sample. [38] combine this approach with a model that outputs a predictive mean and variance for each data point. This notion of uncertainty is not restricted to classification problems. Additionally, neural networks can be used in conjunction with Bayesian models that output complete distributions. For example, deep kernel learning [39, 40, 41] combines deep neural networks with Gaussian processes on classification and regression problems. In contrast, our framework, which does not augment the neural network model, returns a confidence score rather than returning a distribution of possible outputs.

5. Results

Section Summary: Researchers tested calibration techniques on neural networks for image and document classification using several datasets, finding that most models produce overconfident predictions with miscalibration rates typically between 4% and 10%, though a couple of datasets were already well-calibrated. The simplest method, temperature scaling, proved surprisingly effective, outperforming more complex approaches like vector or matrix scaling on image tasks and matching them on text tasks, revealing that miscalibration often stems from a low-dimensional issue fixable with just one parameter. Binning methods improved calibration but were less effective than temperature scaling and sometimes reduced accuracy, while the latter was also the fastest to compute.

We apply the calibration methods in Section 4 to image classification and document classification neural networks. For image classification we use 6 datasets:

- Caltech-UCSD Birds [42]: 200 bird species. 5994/2897/2897 images for train/validation/test sets.

- Stanford Cars [43]: 196 classes of cars by make, model, and year. 8041/4020/4020 images for train/validation/test.

- ImageNet 2012 [44]: Natural scene images from 1000 classes. 1.3 million/25,000/25,000 images for train/validation/test.

- CIFAR-10/CIFAR-100 [45]: Color images ($32\times 32$) from 10/100 classes. 45,000/5,000/10,000 images for train/validation/test.

- Street View House Numbers (SVHN) [46]: $32\times 32$ colored images of cropped-out house numbers from Google Street View. 598,388/6,000/26,032 images for train/validation/test.

We train state-of-the-art convolutional networks: ResNets [3], ResNets with stochastic depth (SD) [4], Wide ResNets [21], and DenseNets [5]. We use the data preprocessing, training procedures, and hyperparameters as described in each paper. For Birds and Cars, we fine-tune networks pretrained on ImageNet.

For document classification we experiment with 4 datasets:

- 20 News: News articles, partitioned into 20 categories by content. 9034/2259/7528 documents for train/validation/test.

- Reuters: News articles, partitioned into 8 categories by topic. 4388/1097/2189 documents for train/validation/test.

- Stanford Sentiment Treebank (SST) [47]: Movie reviews, represented as sentence parse trees that are annotated by sentiment. Each sample includes a coarse binary label and a fine-grained 5-class label. As described in [48], the training/validation/test sets contain 6920/872/1821 documents for binary, and 544/1101/2210 for fine-grained.

On 20 News and Reuters, we train Deep Averaging Networks (DANs) [49] with 3 feed-forward layers and Batch Normalization. On SST, we train TreeLSTMs (tree-structured Long Short-Term Memory networks) [48]. For both models we use the default hyperparameters suggested by the authors.

Calibration Results.

Table 1 displays model calibration, as measured by ECE (with $M=15$ bins), before and after applying the various methods (see Section S3 for MCE, NLL, and error tables). It is worth noting that most datasets and models experience some degree of miscalibration, with ECE typically between $4\%$ and $10\%$. This is not architecture-specific: we observe miscalibration on convolutional networks (with and without skip connections), recurrent networks, and deep averaging networks. The two notable exceptions are SVHN and Reuters, both of which experience ECE values below $1\%$. Both of these datasets have very low error ($1.98\%$ and $2.97\%$, respectively), and therefore the ratio of ECE to error is comparable to other datasets.
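For reference, a sketch of the ECE computation with $M = 15$ equal-width bins $I_m = \left(\frac{m-1}{M}, \frac{m}{M}\right]$, assuming NumPy and the binned definition $\text{ECE} = \sum_{m=1}^{M} \frac{|B_m|}{n} \left| \text{acc}(B_m) - \hat p(B_m) \right|$, where $\hat p(B_m)$ is the average confidence in bin $B_m$. The function name and the toy inputs are illustrative.

```python
import numpy as np


def expected_calibration_error(confidences, predictions, labels, n_bins=15):
    """Binned ECE: sum over bins of (|B_m| / n) * |acc(B_m) - average confidence(B_m)|."""
    confidences = np.asarray(confidences, dtype=float)
    correct = (np.asarray(predictions) == np.asarray(labels)).astype(float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    n = len(confidences)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_bin = (confidences > lo) & (confidences <= hi)  # bin I_m = (lo, hi]
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidences[in_bin].mean())
            ece += in_bin.sum() / n * gap
    return ece


# Toy example: four predictions at 90% confidence, three of them correct.
toy_ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 2, 0, 1], [1, 2, 0, 0])
```

In the toy example all samples share one bin with accuracy $0.75$ and confidence $0.9$, so the ECE is their gap, about $0.15$ ($15\%$ in the units of Table 1).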

Our most important discovery is the surprising effectiveness of temperature scaling despite its remarkable simplicity. Temperature scaling outperforms all other methods on the vision tasks, and performs comparably to other methods on the NLP datasets. What is perhaps even more surprising is that temperature scaling outperforms the vector and matrix Platt scaling variants, which are strictly more general methods. In fact, vector scaling recovers essentially the same solution as temperature scaling: the learned vector has nearly constant entries, and therefore is no different from a scalar transformation. In other words, network miscalibration is intrinsically low-dimensional.

The only dataset that temperature scaling does not calibrate is the Reuters dataset. In this instance, only one of the above methods is able to improve calibration. Because this dataset is well-calibrated to begin with (ECE $\leq 1\%$), there is not much room for improvement with any method, and post-processing may not even be necessary. It is also possible that our measurements are affected by the dataset split or by the particular binning scheme.

Matrix scaling performs poorly on datasets with hundreds of classes (i.e. Birds, Cars, and CIFAR-100), and fails to converge on the 1000-class ImageNet dataset. This is expected, since the number of parameters scales quadratically with the number of classes. Any calibration model with tens of thousands (or more) parameters will overfit to a small validation set, even when applying regularization.
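The parameter counts behind this argument are easy to tabulate. The sketch below counts $K^2 + K$ parameters for matrix scaling ($\mathbf{W}$ and $\mathbf{b}$), $2K$ for vector scaling (diagonal $\mathbf{W}$ and $\mathbf{b}$), and $1$ for temperature scaling. It then compares the matrix-scaling counts with the validation-set sizes listed at the start of Section 5. The function name is hypothetical.

```python
def num_calibration_params(method, num_classes):
    """Number of learned parameters for each Platt-scaling variant."""
    k = num_classes
    if method == "matrix":
        return k * k + k   # full K x K matrix W plus bias b
    if method == "vector":
        return 2 * k       # diagonal of W plus bias b
    if method == "temperature":
        return 1           # the single scalar T
    raise ValueError(f"unknown method: {method}")


# (number of classes, validation-set size) taken from the dataset list in Section 5.
datasets = {"Birds": (200, 2897), "Cars": (196, 4020),
            "CIFAR-100": (100, 5000), "ImageNet": (1000, 25000)}
for name, (k, n_val) in datasets.items():
    p = num_calibration_params("matrix", k)
    print(f"{name}: K={k}, matrix-scaling params={p}, validation samples={n_val}")
```

In each of these four cases, matrix scaling has more parameters than there are validation samples (1,001,000 versus 25,000 for ImageNet), while temperature scaling has one.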

Binning methods improve calibration on most datasets, but do not outperform temperature scaling. Additionally, binning methods tend to change class predictions, which hurts accuracy (see Section S3). Histogram binning, the simplest binning method, typically outperforms isotonic regression and BBQ, despite the fact that both methods are strictly more general. This further supports our finding that calibration is best corrected by simple models.

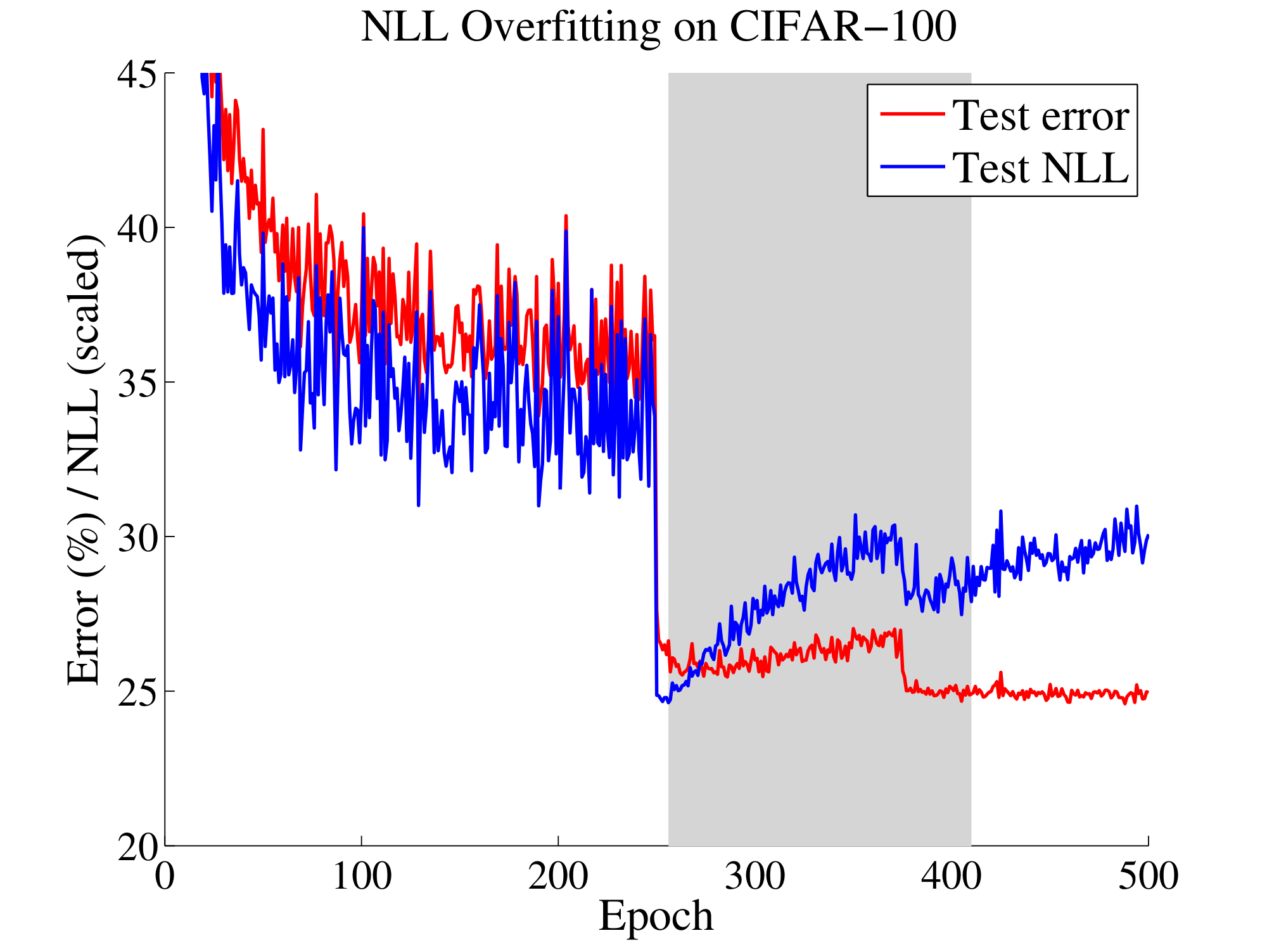

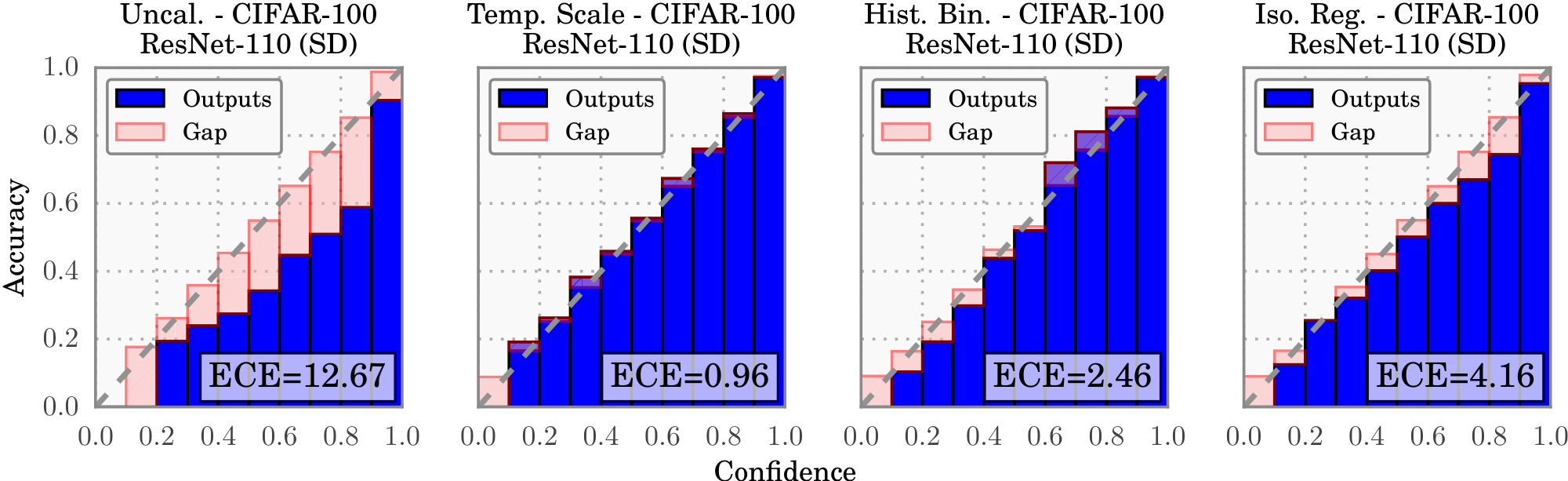

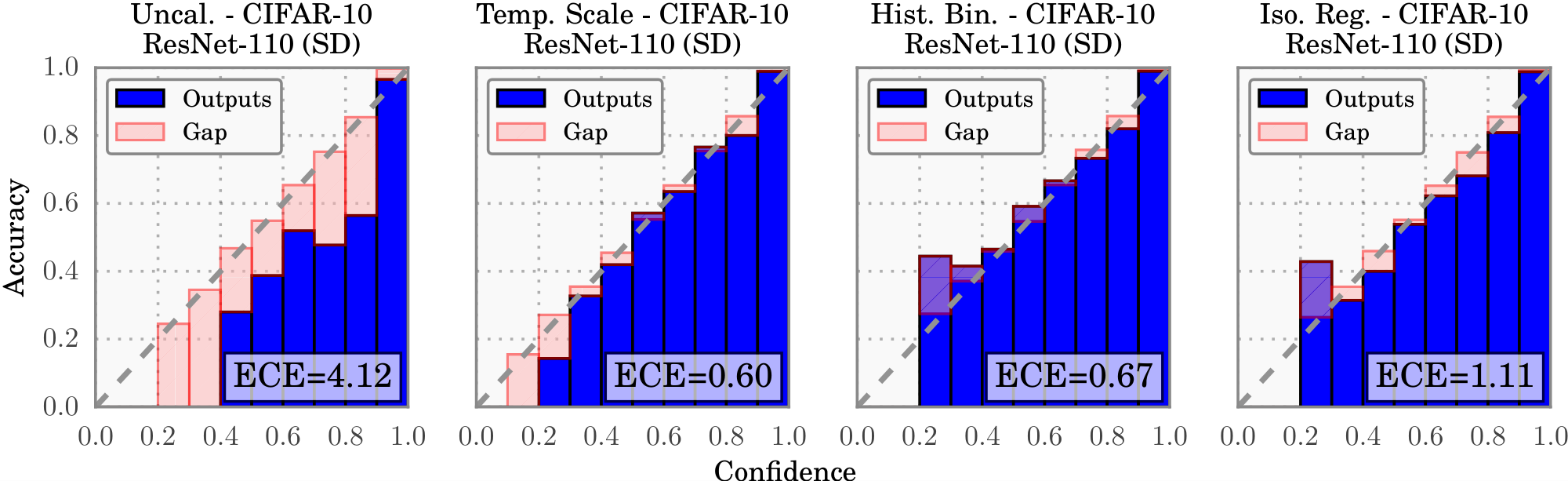

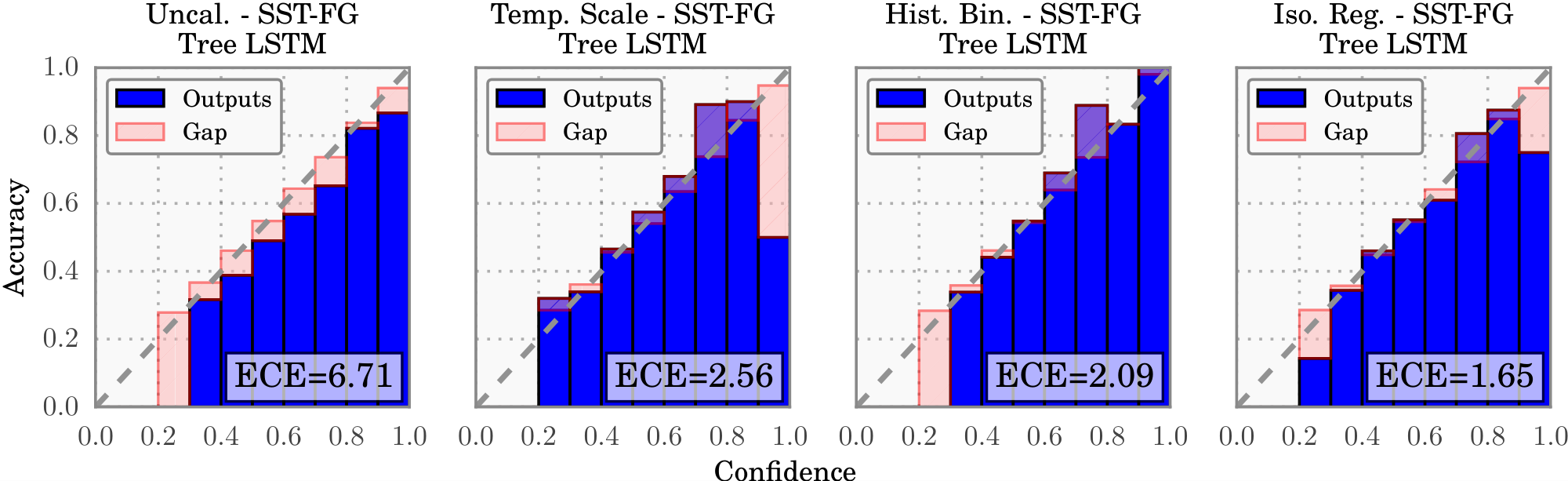

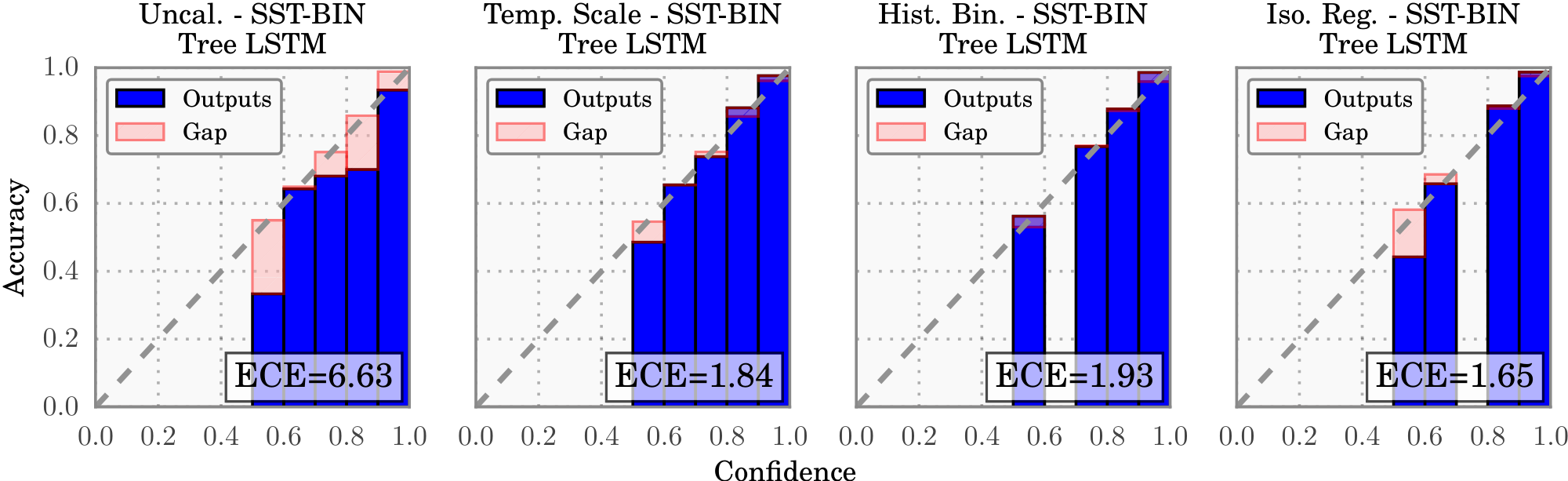

Reliability diagrams.

Figure 4 contains reliability diagrams for 110-layer ResNets on CIFAR-100 before and after calibration. From the far left diagram, we see that the uncalibrated ResNet tends to be overconfident in its predictions. We can then observe the effects of temperature scaling (middle left), histogram binning (middle right), and isotonic regression (far right) on calibration. All three displayed methods produce much better confidence estimates. Of the three methods, temperature scaling most closely recovers the desired diagonal function. Each of the bins is well calibrated, which is remarkable given that all the probabilities were modified by only a single parameter. We include reliability diagrams for other datasets in Section S4.

Computation time.

All methods scale linearly with the number of validation set samples. Temperature scaling is by far the fastest method, as it amounts to a one-dimensional optimization problem that is convex in $1/T$. Using a conjugate gradient solver, the optimal temperature can be found in 10 iterations, or a fraction of a second on most modern hardware. In fact, even a naive line search for the optimal temperature is faster than any of the other methods. The computational complexity of vector and matrix scaling is linear and quadratic, respectively, in the number of classes, reflecting the number of parameters in each method. For CIFAR-100 ($K=100$), finding a near-optimal vector scaling solution with conjugate gradient descent requires at least 2 orders of magnitude more time. Histogram binning and isotonic regression take an order of magnitude longer than temperature scaling, and BBQ takes roughly 3 orders of magnitude more time.
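A naive line search can be as simple as evaluating the validation NLL on a fixed grid of temperatures and keeping the minimizer. The grid (0.05 to 10 in 200 steps), the function names, and the synthetic logits below are assumptions. In practice, a conjugate gradient or other 1-D solver would replace the grid.

```python
import numpy as np
from scipy.special import log_softmax, softmax


def nll_at_temperature(logits, labels, T):
    """Mean NLL of the labels under softmax(z / T)."""
    rows = np.arange(len(labels))
    return -log_softmax(logits / T, axis=1)[rows, labels].mean()


def line_search_temperature(logits, labels, grid=None):
    """Evaluate the NLL at every grid point and return the best temperature."""
    if grid is None:
        grid = np.linspace(0.05, 10.0, 200)
    losses = [nll_at_temperature(logits, labels, T) for T in grid]
    return float(grid[int(np.argmin(losses))])


# Synthetic overconfident model (assumption): labels follow softmax(t), logits are 3 * t.
rng = np.random.default_rng(0)
t = rng.normal(size=(5000, 10))
labels = (softmax(t, axis=1).cumsum(axis=1) > rng.random((5000, 1))).argmax(axis=1)
T_best = line_search_temperature(3.0 * t, labels)
```

Each grid point costs one pass over the validation logits, so the search is linear in the number of validation samples. Here `T_best` should land near 3, the scale used to make the logits overconfident.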

Ease of implementation.

BBQ is arguably the most difficult to implement, as it requires implementing a model averaging scheme. While all other methods are relatively easy to implement, temperature scaling is arguably the most straightforward to incorporate into a neural network pipeline. In Torch7 [50], for example, we implement temperature scaling by inserting a nn.MulConstant between the logits and the softmax, whose parameter is $1/T$. We set $T = 1$ during training, and subsequently find its optimal value on the validation set.[^4]
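A framework-agnostic sketch of the same idea, assuming NumPy and SciPy rather than Torch7. A hypothetical wrapper multiplies a frozen model's logits by $1/T$ before the softmax, starts from $T = 1$, and afterwards fits $T$ on held-out data. It optimizes $\log T$ with a bounded scalar search so that $T$ stays positive. The class name, the bounds, and the toy model are assumptions, not the authors' implementation.

```python
import numpy as np
from scipy.optimize import minimize_scalar
from scipy.special import log_softmax, softmax


class TemperatureScaledModel:
    """Wraps a frozen logit function and rescales its logits by 1 / T."""

    def __init__(self, logit_fn):
        self.logit_fn = logit_fn  # the trained network; never modified here
        self.temperature = 1.0    # T = 1 is a no-op, as during training

    def logits(self, x):
        # Plays the role of the multiply-by-constant layer with parameter 1 / T.
        return self.logit_fn(x) * (1.0 / self.temperature)

    def predict_proba(self, x):
        return softmax(self.logits(x), axis=1)

    def set_temperature(self, x_val, y_val):
        """Choose T minimizing the mean validation NLL; only T changes."""
        z = self.logit_fn(x_val)
        rows = np.arange(len(y_val))

        def nll(log_t):
            return -log_softmax(z / np.exp(log_t), axis=1)[rows, y_val].mean()

        result = minimize_scalar(nll, bounds=(np.log(0.05), np.log(20.0)), method="bounded")
        self.temperature = float(np.exp(result.x))
        return self


# Toy usage (assumption): the "network" reports 3x the logits that generated the labels.
rng = np.random.default_rng(1)
x_val = rng.normal(size=(5000, 10))
y_val = (softmax(x_val, axis=1).cumsum(axis=1) > rng.random((5000, 1))).argmax(axis=1)
model = TemperatureScaledModel(lambda x: 3.0 * x)
uncalibrated_pred = model.predict_proba(x_val).argmax(axis=1)
model.set_temperature(x_val, y_val)
calibrated_pred = model.predict_proba(x_val).argmax(axis=1)
```

Keeping the scaling outside the network means the calibration step never touches the trained weights, and the predictions before and after fitting $T$ are identical.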

[^4]: For an example implementation, see http://github.com/gpleiss/temperature_scaling.

6. Conclusion

Section Summary: Modern neural networks often get better at correctly classifying things, but they become worse at estimating how confident they are in their predictions. Advances in how these networks are built and trained, like making them more complex or adding techniques to stabilize learning, play a big role in this confidence issue, though experts still need to figure out exactly why it happens. Luckily, straightforward fixes like temperature scaling can quickly correct the problem and often work the best.

Modern neural networks exhibit a strange phenomenon: probabilistic error and miscalibration worsen even as classification error is reduced. We have demonstrated that recent advances in neural network architecture and training – model capacity, normalization, and regularization – have strong effects on network calibration. It remains future work to understand why these trends affect calibration while improving accuracy. Nevertheless, simple techniques can effectively remedy the miscalibration phenomenon in neural networks. Temperature scaling is the simplest, fastest, and most straightforward of the methods, and surprisingly is often the most effective.

Acknowledgments

Section Summary: The authors express gratitude for partial financial support from various organizations that helped fund their research. This includes grants from the National Science Foundation, specifically numbered III-1618134, III-1526012, and IIS-1149882. They also acknowledge contributions from the Bill and Melinda Gates Foundation and the Office of Naval Research.

The authors are supported in part by the III-1618134, III-1526012, and IIS-1149882 grants from the National Science Foundation, as well as the Bill and Melinda Gates Foundation and the Office of Naval Research.

S1. Further Information on Calibration Metrics

Section Summary: This section explains how the Expected Calibration Error (ECE) metric links to a precise definition of miscalibration in predictive models, which measures the average absolute difference between a model's predicted confidence in being correct and its actual accuracy. It uses the distribution of predicted probabilities to express this as an integral, which can be approximated by summing differences across bins of predictions for large datasets. Overall, ECE with multiple bins serves as a practical way to estimate this true miscalibration reliably.

We can connect the ECE metric with our exact miscalibration definition, which is restated here:

$ \mathop{\mathbb{E}}_{\hat{P}} \left[\left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \;|\; \hat{P} = p\right) - p \right| \right] \tag{2} $

Let $F_{\hat{P}}(p)$ be the cumulative distribution function of $\hat{P}$, so that $F_{\hat{P}}(b) - F_{\hat{P}}(a) = \mathop{\mathbb{P}}(\hat{P} \in (a, b])$. Using the Riemann-Stieltjes integral, we have

$ \begin{align*} & \mathop{\mathbb{E}}_{\hat{P}} \left[\left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \;|\; \hat{P} = p\right) - p \right| \right] \\ &= \int_0^1 \left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \;|\; \hat{P}=p\right) - p \right| dF_{\hat{P}}(p) \\ &\approx \sum_{m=1}^{M} \left| \mathop{\mathbb{P}}(\hat{Y}=Y \;|\; \hat{P} = p_m) - p_m \right| \mathop{\mathbb{P}}(\hat{P} \in I_m) \end{align*} $

where $I_m$ represents the interval of bin $B_m$ and $p_m$ is a point in $I_m$. For $n$ large, $\left| \mathop{\mathbb{P}}(\hat{Y}=Y \;|\; \hat{P} = p_m) - p_m \right|$ is closely approximated by $\left| \text{acc}(B_m) - \hat{p}(B_m) \right|$, and $\mathop{\mathbb{P}}(\hat{P} \in I_m)$ by $|B_m|/n$. Hence ECE using $M$ bins converges to the $M$-term Riemann-Stieltjes sum of $\mathop{\mathbb{E}}_{\hat{P}} \left[\left| \mathop{\mathbb{P}}\left(\hat{Y} = Y \;|\; \hat{P} = p\right) - p \right| \right]$.
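The convergence argument can be illustrated with a small simulation, assuming NumPy. Confidences $\hat P$ are drawn uniformly from $[0, 1]$, and each prediction is correct with probability $\mathbb{P}(\hat Y = Y \mid \hat P = p) = p^2$ (an assumed, overconfident model). Since $p - p^2 \geq 0$ on $[0, 1]$, the miscalibration in Eq. (2) equals $\int_0^1 (p - p^2)\,dp = 1/6$. Because the gap never changes sign, the large-$n$ limit of the binned ECE is also exactly $1/6$ for any number of bins. A perfectly calibrated simulation (correct with probability $p$) should give an ECE near 0.

```python
import numpy as np


def binned_ece(confidences, correct, n_bins=15):
    """ECE with equal-width bins (lo, hi] on [0, 1]."""
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    total = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_bin = (confidences > lo) & (confidences <= hi)
        if in_bin.any():
            # in_bin.mean() estimates P(P-hat in I_m); the gap estimates |acc - confidence|.
            total += in_bin.mean() * abs(correct[in_bin].mean() - confidences[in_bin].mean())
    return total


rng = np.random.default_rng(0)
n = 200_000
p = rng.uniform(0.0, 1.0, size=n)                       # P-hat ~ Uniform(0, 1) (assumption)
overconfident = (rng.random(n) < p ** 2).astype(float)  # accuracy p^2 at confidence p
calibrated = (rng.random(n) < p).astype(float)          # accuracy p at confidence p
ece_overconfident = binned_ece(p, overconfident)        # should be close to 1/6
ece_calibrated = binned_ece(p, calibrated)              # should be close to 0
```

The small positive value left in `ece_calibrated` is sampling noise inside each bin, which shrinks as $n$ grows.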

S2. Further Information on Temperature Scaling

Section Summary: Temperature scaling is a method to calibrate machine learning models by adjusting their confidence levels, derived here through maximizing the entropy of probability distributions while ensuring they match the average strength of correct predictions from the model's raw outputs, called logits. This approach guarantees a unique solution in the form of a softened softmax function, where a temperature parameter T scales the logits to prevent overconfidence as the model overfits during training. As shown in visualizations, applying this scaling increases the entropy of predictions and reduces errors on validation data, making the model's outputs more reliable.

Here we derive the temperature scaling model using the entropy maximization principle with an appropriate balanced equation.

Claim 1. Given $n$ samples' logit vectors $\mathbf{z}_1, \ldots, \mathbf{z}_n$ and class labels $y_1, \ldots, y_n$, temperature scaling is the unique solution $q$ to the following entropy maximization problem:

$ \begin{align*} \max_{q} \hspace{8pt} & -\sum_{i=1}^n \sum_{k=1}^K q(\mathbf{z}_i)^{(k)} \log q(\mathbf{z}_i)^{(k)} \\ \text{subject to} \hspace{10pt} & q(\mathbf{z}_i)^{(k)} \geq 0 \hspace{30pt} \forall i, k \\ & \sum_{k=1}^K q(\mathbf{z}_i)^{(k)} = 1 \hspace{12pt} \forall i \\ & \sum_{i=1}^n z_i^{(y_i)} = \sum_{i=1}^n \sum_{k=1}^K z_i^{(k)} q(\mathbf{z}_i)^{(k)}. \end{align*} $

The first two constraints ensure that $q$ is a probability distribution, while the last constraint limits the scope of distributions. Intuitively, the constraint specifies that the average true-class logit is equal to the average weighted logit.

Proof: We solve this constrained optimization problem using the Lagrangian. We first ignore the constraint $q(\mathbf{z}_i)^{(k)} \geq 0$ and later show that the solution satisfies this condition. Let $\lambda, \beta_1, \ldots, \beta_n \in \mathbb{R}$ be the Lagrange multipliers and define

$ \begin{align*} L = &-\sum_{i=1}^n \sum_{k=1}^K q(\mathbf{z}_i)^{(k)} \log q(\mathbf{z}_i)^{(k)} \\ & + \lambda \sum_{i=1}^n \left[\sum_{k=1}^K z_i^{(k)} q(\mathbf{z}_i)^{(k)} - z_i^{(y_i)} \right] \\ & + \sum_{i=1}^n \beta_i \left(\sum_{k=1}^K q(\mathbf{z}_i)^{(k)} - 1\right). \end{align*} $

Taking the derivative with respect to $q(\mathbf{z}_i)^{(k)}$ gives

$ \frac{\partial}{\partial q(\mathbf{z}_i)^{(k)}} L = -1 - \log q(\mathbf{z}_i)^{(k)} + \lambda z_i^{(k)} + \beta_i. $

Setting the gradient of the Lagrangian $L$ to 0 and rearranging gives

$ q(\mathbf{z}_i)^{(k)} = e^{\lambda z_i^{(k)} + \beta_i - 1}, $

which is strictly positive, so the nonnegativity constraint set aside above is satisfied. Since $\sum_{k=1}^K q(\mathbf{z}_i)^{(k)} = 1$ for all $i$, we must have

$ q(\mathbf{z}_i)^{(k)} = \frac{e^{\lambda z_i^{(k)}}}{\sum_{j=1}^K e^{\lambda z_i^{(j)}}}, $

which recovers the temperature scaling model by setting $T = \frac{1}{\lambda}$.
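Claim 1's third constraint has a practical reading. Write $q(\mathbf{z}_i) = \sigma_\text{SM}(\lambda \mathbf{z}_i)$. Then the derivative of the summed NLL $-\sum_i \log q(\mathbf{z}_i)^{(y_i)}$ with respect to $\lambda$ is exactly $\sum_{i}\sum_{k} z_i^{(k)} q(\mathbf{z}_i)^{(k)} - \sum_i z_i^{(y_i)}$, so the constraint holds precisely at the NLL-optimal temperature. The sketch below assumes NumPy, SciPy, and synthetic logits. It finds the root of the constraint with `scipy.optimize.brentq` and checks that the root coincides with a minimum of the NLL. The helper names are hypothetical.

```python
import numpy as np
from scipy.optimize import brentq
from scipy.special import log_softmax, softmax


def constraint_residual(lam, logits, labels):
    """Third constraint of Claim 1 with q = softmax(lam * z):
    sum_i sum_k z_i^(k) q_i^(k) - sum_i z_i^(y_i)  (also d NLL / d lam)."""
    q = softmax(lam * logits, axis=1)
    return (logits * q).sum() - logits[np.arange(len(labels)), labels].sum()


def nll(lam, logits, labels):
    """Summed NLL of the labels under softmax(lam * z)."""
    return -log_softmax(lam * logits, axis=1)[np.arange(len(labels)), labels].sum()


# Synthetic check (assumption): labels drawn from softmax(t), network reports z = 3 t.
rng = np.random.default_rng(0)
t = rng.normal(size=(5000, 10))
labels = (softmax(t, axis=1).cumsum(axis=1) > rng.random((5000, 1))).argmax(axis=1)
logits = 3.0 * t

# The residual increases with lam (its derivative is a sum of variances), so it has one root.
lam_star = brentq(constraint_residual, 1e-6, 100.0, args=(logits, labels))
T_star = 1.0 / lam_star
```

Because the NLL is convex in $\lambda$, the root of the residual is its global minimizer, and `T_star` should come out near 3 here.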

Figure S1 visualizes Claim 1. We see that, as training continues, the model begins to overfit with respect to NLL (red line). This results in a low-entropy softmax distribution over classes (blue line), which explains the model's overconfidence. Temperature scaling not only lowers the NLL but also raises the entropy of the distribution (green line).

S3. Additional Tables

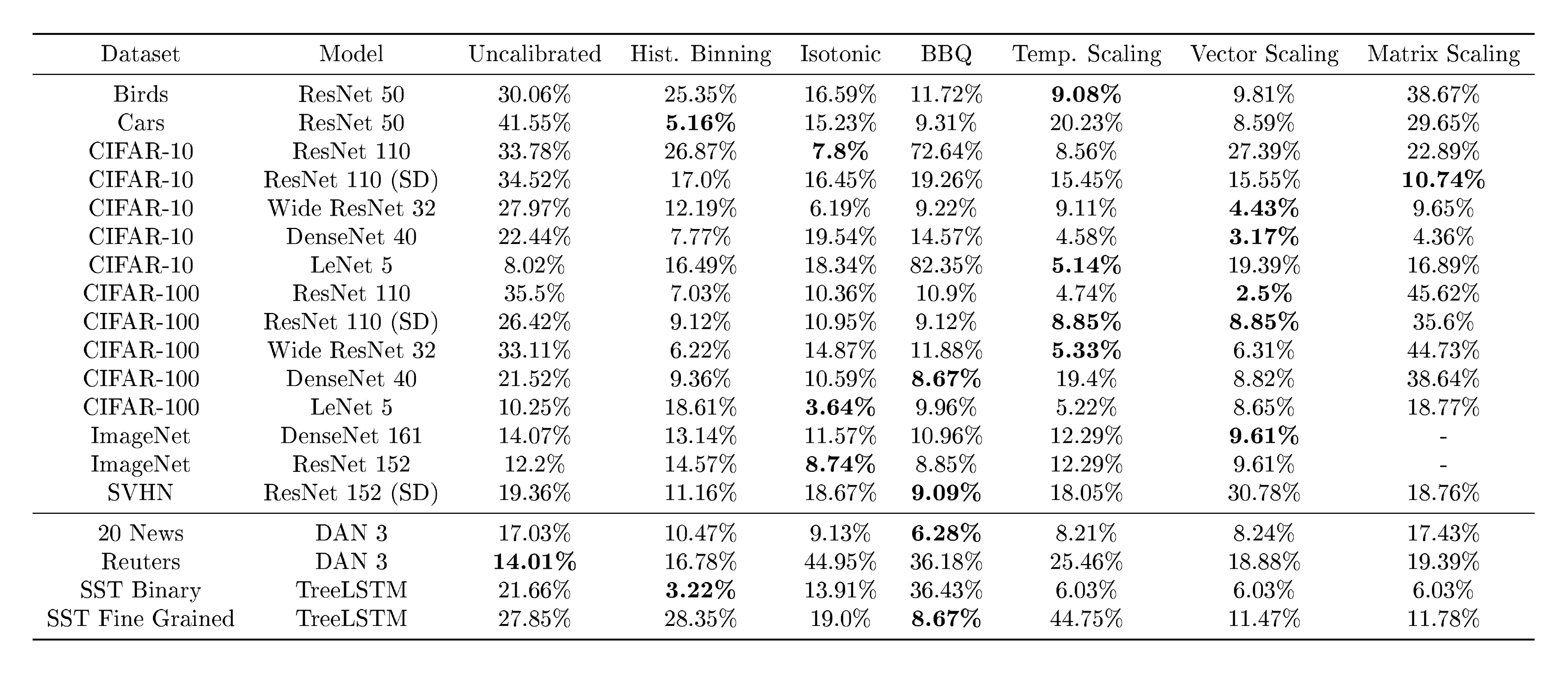

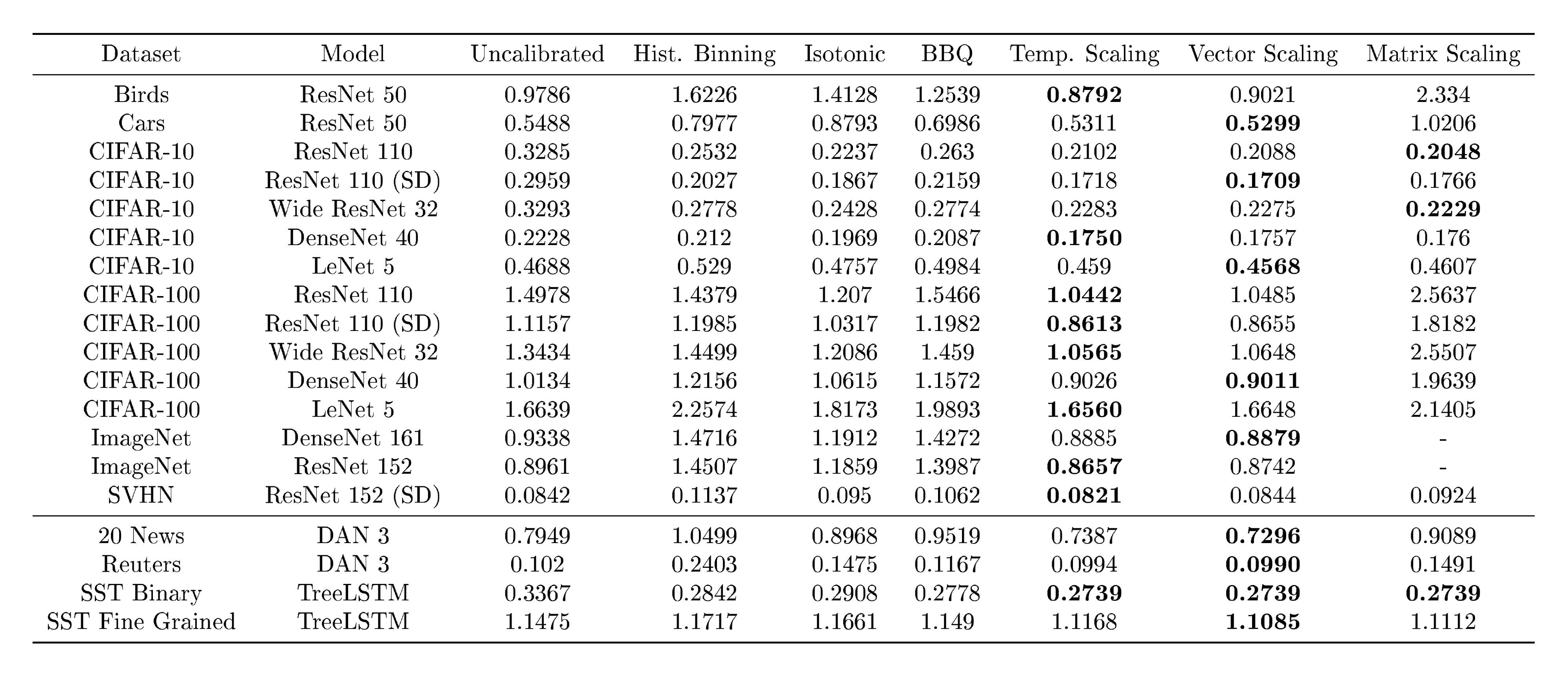

Section Summary: This section presents three supplementary tables that detail performance metrics from experiments on vision and natural language processing datasets, including before and after applying various calibration techniques to machine learning models of different network depths. Table S1 focuses on maximum calibration error, noting its sensitivity to how data is grouped into bins and its limitations with smaller datasets, while Table S2 covers test error rates, observing that one common calibration method yields the same results as the unadjusted models. Table S3 examines negative log-likelihood, which generally mirrors patterns seen in expected calibration error from the main results.

Tables S1, S2, and S3 display the MCE, test error, and NLL for all the experimental settings outlined in Section 5.

:::

Table S1: MCE (%) (with $M=15$ bins) on standard vision and NLP datasets before calibration and with various calibration methods. The number following a model's name denotes the network depth. MCE seems very sensitive to the binning scheme and is less suited for small test sets.

:::

:::

Table S2: Test error (%) on standard vision and NLP datasets before calibration and with various calibration methods. The number following a model's name denotes the network depth. Error with temperature scaling is exactly the same as uncalibrated.

:::

:::

Table S3: NLL (%) on standard vision and NLP datasets before calibration and with various calibration methods. The number following a model's name denotes the network depth. To summarize, NLL roughly follows the trends of ECE.

:::

S4. Additional Reliability Diagrams

Section Summary: This section presents reliability diagrams for two additional datasets, CIFAR-10 and the SST sentiment analysis task in both binary and fine-grained versions, showing how model confidence in predictions compares to actual accuracy before and after a calibration process. The diagrams illustrate improvements in reliability through visual plots divided into before and after views for different methods. Importantly, these diagrams do not indicate the share of predictions falling into each confidence bin, as clarified in an earlier section.

We include reliability diagrams for additional datasets: CIFAR-10 (Figure S2) and SST (Figure S3 and Figure S4). Note that, as mentioned in Section 2, the reliability diagrams do not represent the proportion of predictions that belong to a given bin.

References

Section Summary: This section lists dozens of academic references, primarily papers and books from the mid-1990s to 2017, that form the foundation for research in deep learning and artificial intelligence. It covers advancements in neural networks for tasks like image recognition, speech processing, self-driving cars, and healthcare predictions, including techniques for improving model accuracy and handling uncertainties in forecasts. Classic works on statistical learning and Bayesian methods are also included, providing a broad scholarly backdrop for understanding reliable AI predictions.

[1] Simonyan, Karen and Zisserman, Andrew. Very deep convolutional networks for large-scale image recognition. In ICLR, 2015.

[2] Srivastava, Rupesh Kumar, Greff, Klaus, and Schmidhuber, Jürgen. Highway networks. arXiv preprint arXiv:1505.00387, 2015.

[3] He, Kaiming, Zhang, Xiangyu, Ren, Shaoqing, and Sun, Jian. Deep residual learning for image recognition. In CVPR, pp. 770–778, 2016.

[4] Huang, Gao, Sun, Yu, Liu, Zhuang, Sedra, Daniel, and Weinberger, Kilian. Deep networks with stochastic depth. In ECCV, 2016.

[5] Huang, Gao, Liu, Zhuang, Weinberger, Kilian Q, and van der Maaten, Laurens. Densely connected convolutional networks. In CVPR, 2017.

[6] Girshick, Ross. Fast R-CNN. In ICCV, pp. 1440–1448, 2015.

[7] Hannun, Awni, Case, Carl, Casper, Jared, Catanzaro, Bryan, Diamos, Greg, Elsen, Erich, Prenger, Ryan, Satheesh, Sanjeev, Sengupta, Shubho, Coates, Adam, et al. Deep speech: Scaling up end-to-end speech recognition. arXiv preprint arXiv:1412.5567, 2014.

[8] Caruana, Rich, Lou, Yin, Gehrke, Johannes, Koch, Paul, Sturm, Marc, and Elhadad, Noemie. Intelligible models for healthcare: Predicting pneumonia risk and hospital 30-day readmission. In KDD, 2015.

[9] Bojarski, Mariusz, Del Testa, Davide, Dworakowski, Daniel, Firner, Bernhard, Flepp, Beat, Goyal, Prasoon, Jackel, Lawrence D, Monfort, Mathew, Muller, Urs, Zhang, Jiakai, et al. End to end learning for self-driving cars. arXiv preprint arXiv:1604.07316, 2016.

[10] Jiang, Xiaoqian, Osl, Melanie, Kim, Jihoon, and Ohno-Machado, Lucila. Calibrating predictive model estimates to support personalized medicine. Journal of the American Medical Informatics Association, 19(2):263–274, 2012.

[11] Cosmides, Leda and Tooby, John. Are humans good intuitive statisticians after all? Rethinking some conclusions from the literature on judgment under uncertainty. Cognition, 58(1):1–73, 1996.

[12] Xiong, Wayne, Droppo, Jasha, Huang, Xuedong, Seide, Frank, Seltzer, Mike, Stolcke, Andreas, Yu, Dong, and Zweig, Geoffrey. Achieving human parity in conversational speech recognition. arXiv preprint arXiv:1610.05256, 2016.

[13] Kendall, Alex and Cipolla, Roberto. Modelling uncertainty in deep learning for camera relocalization. 2016.

[14] Niculescu-Mizil, Alexandru and Caruana, Rich. Predicting good probabilities with supervised learning. In ICML, pp. 625–632, 2005.

[15] LeCun, Yann, Bottou, Léon, Bengio, Yoshua, and Haffner, Patrick. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.

[16] DeGroot, Morris H and Fienberg, Stephen E. The comparison and evaluation of forecasters. The Statistician, pp. 12–22, 1983.

[17] Platt, John et al. Probabilistic outputs for support vector machines and comparisons to regularized likelihood methods. Advances in large margin classifiers, 10(3):61–74, 1999.

[18] Naeini, Mahdi Pakdaman, Cooper, Gregory F, and Hauskrecht, Milos. Obtaining well calibrated probabilities using Bayesian binning. In AAAI, pp. 2901, 2015.

[19] Friedman, Jerome, Hastie, Trevor, and Tibshirani, Robert. The elements of statistical learning, volume 1. Springer series in statistics Springer, Berlin, 2001.

[20] Bengio, Yoshua, Goodfellow, Ian J, and Courville, Aaron. Deep learning. Nature, 521:436–444, 2015.

[21] Zagoruyko, Sergey and Komodakis, Nikos. Wide residual networks. In BMVC, 2016.

[22] Zhang, Chiyuan, Bengio, Samy, Hardt, Moritz, Recht, Benjamin, and Vinyals, Oriol. Understanding deep learning requires rethinking generalization. In ICLR, 2017.

[23] Ioffe, Sergey and Szegedy, Christian. Batch normalization: Accelerating deep network training by reducing internal covariate shift. 2015.

[24] Vapnik, Vladimir N. Statistical Learning Theory. Wiley-Interscience, 1998.

[25] Zadrozny, Bianca and Elkan, Charles. Obtaining calibrated probability estimates from decision trees and naive Bayesian classifiers. In ICML, pp. 609–616, 2001.

[26] Zadrozny, Bianca and Elkan, Charles. Transforming classifier scores into accurate multiclass probability estimates. In KDD, pp. 694–699, 2002.

[27] Hinton, Geoffrey, Vinyals, Oriol, and Dean, Jeff. Distilling the knowledge in a neural network. 2015.

[28] Jaynes, Edwin T. Information theory and statistical mechanics. Physical Review, 106(4):620, 1957.

[29] Kuleshov, Volodymyr and Ermon, Stefano. Reliable confidence estimation via online learning. arXiv preprint arXiv:1607.03594, 2016.

[30] Kuleshov, Volodymyr and Liang, Percy. Calibrated structured prediction. In NIPS, pp. 3474–3482, 2015.

[31] Lakshminarayanan, Balaji, Pritzel, Alexander, and Blundell, Charles. Simple and scalable predictive uncertainty estimation using deep ensembles. arXiv preprint arXiv:1612.01474, 2016.

[32] Pereyra, Gabriel, Tucker, George, Chorowski, Jan, Kaiser, Łukasz, and Hinton, Geoffrey. Regularizing neural networks by penalizing confident output distributions. arXiv preprint arXiv:1701.06548, 2017.

[33] Hendrycks, Dan and Gimpel, Kevin. A baseline for detecting misclassified and out-of-distribution examples in neural networks. In ICLR, 2017.

[34] Denker, John S and LeCun, Yann. Transforming neural-net output levels to probability distributions. In NIPS, pp. 853–859, 1990.

[35] MacKay, David JC. A practical Bayesian framework for backpropagation networks. Neural Computation, 4(3):448–472, 1992.

[36] Gal, Yarin and Ghahramani, Zoubin. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In ICML, 2016.

[37] Srivastava, Nitish, Hinton, Geoffrey, Krizhevsky, Alex, Sutskever, Ilya, and Salakhutdinov, Ruslan. Dropout: A simple way to prevent neural networks from overfitting. Journal of Machine Learning Research, 15:1929–1958, 2014.

[38] Kendall, Alex and Gal, Yarin. What uncertainties do we need in Bayesian deep learning for computer vision? arXiv preprint arXiv:1703.04977, 2017.

[39] Wilson, Andrew G, Hu, Zhiting, Salakhutdinov, Ruslan R, and Xing, Eric P. Stochastic variational deep kernel learning. In NIPS, pp. 2586–2594, 2016a.

[40] Wilson, Andrew Gordon, Hu, Zhiting, Salakhutdinov, Ruslan, and Xing, Eric P. Deep kernel learning. In AISTATS, pp. 370–378, 2016b.

[41] Al-Shedivat, Maruan, Wilson, Andrew Gordon, Saatchi, Yunus, Hu, Zhiting, and Xing, Eric P. Learning scalable deep kernels with recurrent structure. arXiv preprint arXiv:1610.08936, 2016.

[42] Welinder, P., Branson, S., Mita, T., Wah, C., Schroff, F., Belongie, S., and Perona, P. Caltech-UCSD Birds 200. Technical Report CNS-TR-2010-001, California Institute of Technology, 2010.

[43] Krause, Jonathan, Stark, Michael, Deng, Jia, and Fei-Fei, Li. 3D object representations for fine-grained categorization. In IEEE Workshop on 3D Representation and Recognition (3dRR), Sydney, Australia, 2013.

[44] Deng, Jia, Dong, Wei, Socher, Richard, Li, Li-Jia, Li, Kai, and Fei-Fei, Li. ImageNet: A large-scale hierarchical image database. In CVPR, pp. 248–255, 2009.

[45] Krizhevsky, Alex and Hinton, Geoffrey. Learning multiple layers of features from tiny images, 2009.

[46] Netzer, Yuval, Wang, Tao, Coates, Adam, Bissacco, Alessandro, Wu, Bo, and Ng, Andrew Y. Reading digits in natural images with unsupervised feature learning. In Deep Learning and Unsupervised Feature Learning Workshop, NIPS, 2011.

[47] Socher, Richard, Perelygin, Alex, Wu, Jean, Chuang, Jason, Manning, Christopher D., Ng, Andrew, and Potts, Christopher. Recursive deep models for semantic compositionality over a sentiment treebank. In EMNLP, pp. 1631–1642, 2013.

[48] Tai, Kai Sheng, Socher, Richard, and Manning, Christopher D. Improved semantic representations from tree-structured long short-term memory networks. 2015.

[49] Iyyer, Mohit, Manjunatha, Varun, Boyd-Graber, Jordan, and Daumé III, Hal. Deep unordered composition rivals syntactic methods for text classification. In ACL, 2015.

[50] Collobert, Ronan, Kavukcuoglu, Koray, and Farabet, Clément. Torch7: A matlab-like environment for machine learning. In BigLearn Workshop, NIPS, 2011.