Mastering Atari with Discrete World Models

Danijar Hafner$^{*}$

Google Research

Timothy Lillicrap

DeepMind

Mohammad Norouzi

Google Research

Jimmy Ba

University of Toronto

$^{*}$ Correspondence to: Danijar Hafner [email protected].

Abstract

Intelligent agents need to generalize from past experience to achieve goals in complex environments. World models facilitate such generalization and allow learning behaviors from imagined outcomes to increase sample-efficiency. While learning world models from image inputs has recently become feasible for some tasks, modeling Atari games accurately enough to derive successful behaviors has remained an open challenge for many years. We introduce DreamerV2, a reinforcement learning agent that learns behaviors purely from predictions in the compact latent space of a powerful world model. The world model uses discrete representations and is trained separately from the policy. DreamerV2 constitutes the first agent that achieves human-level performance on the Atari benchmark of 55 tasks by learning behaviors inside a separately trained world model. With the same computational budget and wall-clock time, Dreamer V2 reaches 200M frames and surpasses the final performance of the top single-GPU agents IQN and Rainbow. DreamerV2 is also applicable to tasks with continuous actions, where it learns an accurate world model of a complex humanoid robot and solves stand-up and walking from only pixel inputs.

Executive Summary: Reinforcement learning agents must learn effective behaviors in complex, uncertain environments like classic Atari video games, where trial-and-error exploration is costly and generalization from limited experience is essential. For years, model-free algorithms—those that learn directly from rewards without building an internal model of the world—have dominated benchmarks such as Atari, achieving superhuman performance through years of refinements. However, these methods struggle with sample efficiency, transfer to new tasks, and directed exploration. World models, which predict future states and rewards from past observations, offer a promising alternative by enabling agents to "imagine" outcomes and plan ahead, potentially reducing real-world interactions. Despite progress, world models have not yet matched model-free leaders on Atari due to inaccuracies in high-dimensional image predictions and inefficient planning.

This paper introduces and evaluates DreamerV2, an agent that learns behaviors entirely within the predictions of a separately trained world model, aiming to demonstrate human-level performance across 55 Atari games. The goal is to show that a compact, accurate world model can enable planning that rivals or exceeds established model-free approaches, while using modest computational resources.

The authors built DreamerV2 by refining an earlier agent called DreamerV1. They trained the world model on sequences of images, actions, and rewards from the agent's interactions, using a recurrent neural network to encode compact, discrete representations of the environment—avoiding the error buildup of pixel-level predictions. Key innovations include categorical latent variables for better handling of discrete changes in games and a balanced regularization technique to improve prediction accuracy. Behaviors were then learned by imagining thousands of short trajectories in this latent space, training an actor to select actions and a critic to estimate long-term rewards, all without generating images. Experiments ran on a single GPU with one environment instance per game, collecting 200 million steps over about 10 days, drawing from a replay buffer of past experiences.

DreamerV2 achieved a median score 2.15 times that of a skilled human gamer across the 55 games, marking the first time a purely model-based agent reached human-level play on this benchmark. It outperformed top single-GPU model-free agents like Rainbow (1.47x human) and IQN (1.29x human), surpassing their final scores by 46% and 66%, respectively, under the same budget. Using a more robust metric—averaging scores normalized to human world records and clipped at 100%—DreamerV2 scored 0.28, compared to 0.17 for Rainbow and 0.21 for IQN. Ablations confirmed that discrete latents boosted performance on 42 of 55 games over continuous ones, while the balancing technique improved results on 44 games. Beyond Atari, DreamerV2 solved challenging continuous-control tasks, such as making a humanoid robot stand and walk from pixel inputs alone, and handled hard-exploration games like Montezuma's Revenge without add-ons, matching specialized methods.

These results prove that world models can deliver competitive performance on demanding benchmarks, challenging the dominance of model-free learning and highlighting their edge in efficiency—DreamerV2 imagined 468 billion latent states during training, 10,000 times more than real interactions. This matters for real-world applications, like robotics, where data collection is expensive and risky; it reduces reliance on vast trials, improves generalization to unseen scenarios, and enables planning for safety or long-term goals. Unlike resource-heavy alternatives like MuZero, which needs months of computation and lacks public code, DreamerV2 is practical and reproducible, paving the way for integration with model-free techniques for hybrid gains. It also suggests world models inherently aid exploration in sparse-reward settings, smoothing out uncertainties.

Adopt DreamerV2 as a baseline for future reinforcement learning research, especially for image-based tasks, and integrate complementary model-free elements like prioritized replay to push scores higher—early tests show this boosts the human-normalized median by 22%. For broader impact, test it on multi-task setups to leverage transfer learning, or apply it to physical robots for sample-efficient training. Pilot studies in continuous domains, like the humanoid walker, indicate strong potential; expand these with more diverse environments. If aiming for decisions on deployment, prioritize tasks needing generalization over raw speed.

While robust on the standard Atari suite with sticky actions and no life information, results may vary on unseen games or altered settings, as training is single-task per game. Assumptions like fixed discount factors and discrete actions limit direct applicability to all real-world problems, though extensions to continuous actions succeeded. Confidence in Atari findings is high, backed by five seeds and comparisons to established baselines, but continuous-control results are preliminary and warrant larger-scale validation before firm commitments.

1. Introduction

Section Summary: Reinforcement learning agents use world models to build an understanding of their environments, allowing them to predict action outcomes, plan ahead, and generalize to new tasks more effectively than trial-and-error methods alone. While world models show promise, they have historically fallen short on challenging benchmarks like Atari games, where model-free approaches like Rainbow have led the way. This paper presents DreamerV2, an improved agent that achieves human-level performance on Atari by training entirely within a highly accurate world model, surpassing top competitors using just a single GPU and proving the potential of this approach.

![**Figure 1:** Gamer normalized median score on the Atari benchmark of 55 games with sticky actions at 200M steps. DreamerV2 is the first agent that learns purely within a world model to achieve human-level Atari performance, demonstrating the high accuracy of its learned world model. DreamerV2 further outperforms the top single-GPU agents Rainbow and IQN, whose scores are provided by Dopamine ([1]). According to its authors, SimPLe ([2]) was only evaluated on an easier subset of 36 games and trained for fewer steps and additional training does not further increase its performance.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/d42vmszr/complex_fig_17011c6e8780.png)

To successfully operate in unknown environments, reinforcement learning agents need to learn about their environments over time. World models are an explicit way to represent an agent's knowledge about its environment. Compared to model-free reinforcement learning that learns through trial and error, world models facilitate generalization and can predict the outcomes of potential actions to enable planning ([3]). Capturing general aspects of the environment, world models have been shown to be effective for transfer to novel tasks ([4]), directed exploration ([5]), and generalization from offline datasets ([6]). When the inputs are high-dimensional images, latent dynamics models predict ahead in an abstract latent space ([7, 8, 9, 10]). Predicting compact representations instead of images has been hypothesized to reduce accumulating errors and their small memory footprint enables thousands of parallel predictions on a single GPU ([9, 11]). Leveraging this approach, the recent Dreamer agent ([11]) has solved a wide range of continuous control tasks from image inputs.

Despite their intriguing properties, world models have so far not been accurate enough to compete with the state-of-the-art model-free algorithms on the most competitive benchmarks. The well-established Atari benchmark ([12]) historically required model-free algorithms to achieve human-level performance, such as DQN ([13]), A3C ([14]), or Rainbow ([15]). Several attempts at learning accurate world models of Atari games have been made, without achieving competitive performance ([16, 17, 2]). On the other hand, the recently proposed MuZero agent ([18]) shows that planning can achieve impressive performance on board games and deterministic Atari games given extensive engineering effort and a vast computational budget. However, its implementation is not available to the public and it would require over 2 months of computation to train even one agent on a GPU, rendering it impractical for most research groups.

In this paper, we introduce DreamerV2, the first reinforcement learning agent that achieves human-level performance on the Atari benchmark by learning behaviors purely within a separately trained world model, as shown in Figure 1. Learning successful behaviors purely within the world model demonstrates that the world model learns to accurately represent the environment. To achieve this, we apply small modifications to the Dreamer agent ([11]), such as using discrete latents and balancing terms within the KL loss. Using a single GPU and a single environment instance, DreamerV2 outperforms top single-GPU Atari agents Rainbow ([15]) and IQN ([19]), which rest upon years of model-free reinforcement learning research ([20, 21, 22, 23, 24]). Moreover, aspects of these algorithms are complementary to our world model and could be integrated into the Dreamer framework in the future. To rigorously compare the algorithms, we report scores normalized by both a human gamer ([13]) and the human world record ([25]) and make a suggestion for reporting scores going forward.

2. DreamerV2

Section Summary: DreamerV2 is an advanced version of the Dreamer agent, designed as a model-based AI system that learns from past experiences by building a predictive world model, imagining future scenarios to train its decision-making parts, and then acting in the real environment to collect more data. This world model compresses high-dimensional inputs like images into simpler latent states using a recurrent state-space model, which predicts actions' effects without needing to generate visuals, making it efficient for long-term planning. Key improvements include switching to categorical variables for better handling of uncertainties and incorporating predictors for rewards and episode endings to enhance performance in tasks.

We present DreamerV2, an evolution of the Dreamer agent ([11]). We refer to the original Dreamer agent as DreamerV1 throughout this paper. This section describes the complete DreamerV2 algorithm, consisting of the three typical components of a model-based agent ([3]). We learn the world model from a dataset of past experience, learn an actor and critic from imagined sequences of compact model states, and execute the actor in the environment to grow the experience dataset. In Appendix C, we include a list of changes that we applied to DreamerV1 and which of them we found to increase empirical performance.

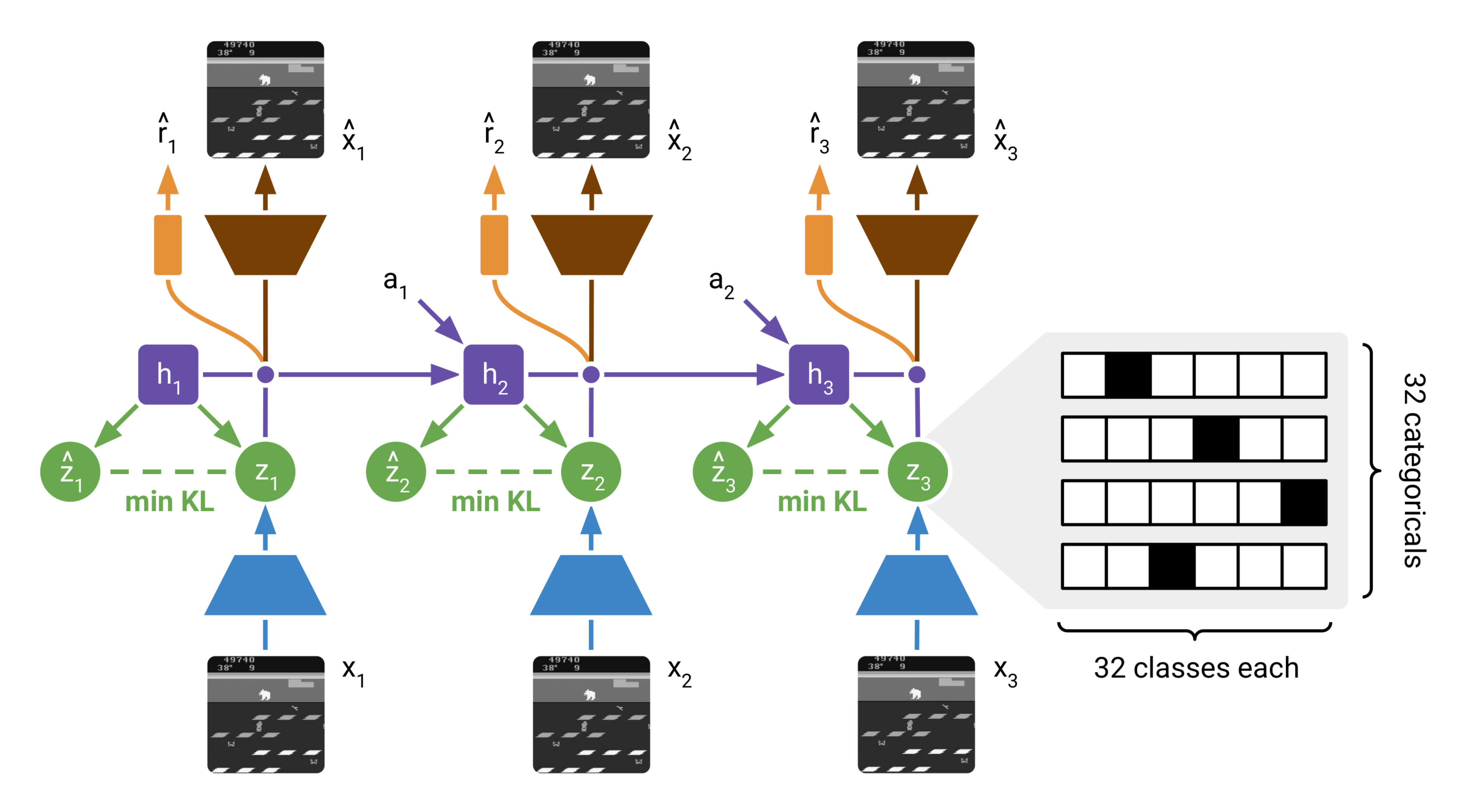

2.1 World Model Learning

World models summarize an agent's experience into a predictive model that can be used in place of the environment to learn behaviors. When inputs are high-dimensional images, it is beneficial to learn compact state representations of the inputs to predict ahead in this learned latent space ([7, 26, 8]). These models are called latent dynamics models. Predicting ahead in latent space not only facilitates long-term predictions, it also allows to efficiently predict thousands of compact state sequences in parallel in a single batch, without having to generate images. DreamerV2 builds upon the world model that was introduced by PlaNet ([9]) and used in DreamerV1, by replacing its Gaussian latents with categorical variables.

Experience dataset

The world model is trained from the agent's growing dataset of past experience that contains sequences of images $x_{1:T}$, actions $a_{1:T}$, rewards $r_{1:T}$, and discount factors $\gamma_{1:T}$. The discount factors equal a fixed hyper parameter $\gamma=0.999$ for time steps within an episode and are set to zero for terminal time steps. For training, we use batches of $B=50$ sequences of fixed length $L=50$ that are sampled randomly within the stored episodes. To observe enough episode ends during training, we sample the start index of each training sequence uniformly within the episode and then clip it to not exceed the episode length minus the training sequence length.

Model components

The world model consists of an image encoder, a Recurrent State-Space Model (RSSM; [9]) to learn the dynamics, and predictors for the image, reward, and discount factor. The world model is summarized in Figure 2. The RSSM uses a sequence of deterministic recurrent states $h_t$, from which it computes two distributions over stochastic states at each step. The posterior state $z_t$ incorporates information about the current image $x_t$, while the prior state $\hat{z}_t$ aims to predict the posterior without access to the current image. The concatenation of deterministic and stochastic states forms the compact model state. From the posterior model state, we reconstruct the current image $x_t$ and predict the reward $r_t$ and discount factor $\gamma_t$. The model components are:

$ \begin{alignedat}{4}

\text{RSSM} \hspace{1ex} \begin{cases} \hphantom{A} \ \hphantom{A} \ \hphantom{A} \end{cases} \hspace*{-2.4ex}

& \text{Recurrent model:} \hspace{3.5em} && h_t &\ = &\ f_\phi(h_{t-1}, z_{t-1}, a_{t-1}) \

& \text{Representation model:} \hspace{3.5em} && z_t &\ \sim &

{q_\phi (z_t ;\vert; h_t, x_t)} \

& \text{Transition predictor:} \hspace{3.5em} && \hat{z}t &\ \sim &

{p\phi (\hat{z}_t ;\vert; h_t)} \

& \text{Image predictor:} \hspace{3.5em} && \hat{x}t &\ \sim &

{p\phi (\hat{x}_t ;\vert; h_t, z_t)} \

& \text{Reward predictor:} \hspace{3.5em} && \hat{r}t &\ \sim &

{p\phi (\hat{r}_t ;\vert; h_t, z_t)} \

& \text{Discount predictor:} \hspace{3.5em} && \hat{\gamma}t &\ \sim &

{p\phi (\hat{\gamma}_t ;\vert; h_t, z_t)}.

\end{alignedat}\tag{1}

$

All components are implemented as neural networks and $\phi$ describes their combined parameter vector. The transition predictor guesses the next model state only from the current model state and the action but without using the next image, so that we can later learn behaviors by predicting sequences of model states without having to observe or generate images. The discount predictor lets us estimate the probability of an episode ending when learning behaviors from model predictions.

sample = one_hot(draw(logits)) # sample has no gradient

probs = softmax(logits) # want gradient of this

sample = sample + probs - stop_grad(probs) # has gradient of probs

Neural networks

The representation model is implemented as a Convolutional Neural Network (CNN; [27]) followed by a Multi-Layer Perceptron (MLP) that receives the image embedding and the deterministic recurrent state. The RSSM uses a Gated Recurrent Unit (GRU; [28]) to compute the deterministic recurrent states. The model state is the concatenation of deterministic GRU state and a sample of the stochastic state. The image predictor is a transposed CNN and the transition, reward, and discount predictors are MLPs. We down-scale the $84 \times 84$ grayscale images to $64 \times 64$ pixels so that we can apply the convolutional architecture of DreamerV1. We use the ELU activation function for all components of the model ([29]). The world model uses a total of 20M trainable parameters.

kl_loss = alpha * compute_kl(stop_grad(approx_posterior), prior)

+ (1 - alpha) * compute_kl(approx_posterior, stop_grad(prior))

Distributions

The image predictor outputs the mean of a diagonal Gaussian likelihood with unit variance, the reward predictor outputs a univariate Gaussian with unit variance, and the discount predictor outputs a Bernoulli likelihood. In prior work, the latent variable in the model state was a diagonal Gaussian that used reparameterization gradients during backpropagation ([30, 31]). In DreamerV2, we instead use a vector of several categorical variables and optimize them using straight-through gradients ([32]), which are easy to implement using automatic differentiation as shown in Algorithm 1. We discuss possible benefits of categorical over Gaussian latents in the experiments section.

Loss function

All components of the world model are optimized jointly. The distributions produced by the image predictor, reward predictor, discount predictor, and transition predictor are trained to maximize the log-likelihood of their corresponding targets. The representation model is trained to produce model states that facilitates these prediction tasks, through the expectation below. Moreover, it is regularized to produce model states with high entropy, such that the model becomes robust to many different model states during training. The loss function for learning the world model is:

$ \begin{gather} \begin{aligned} \mathcal{L}(\phi) \doteq \operatorname{E}{ {q\phi (z_{1:T} ;\vert; a_{1:T}, x_{1:T})}}\Big[\textstyle\sum_{t=1}^T &\hspace*{.12em}\underbrace{-\ln {p_\phi (x_t ;\vert; h_t, z_t)}\hspace*{0pt}}\text{image log loss} \hspace*{.12em}\underbrace{-\ln {p\phi (r_t ;\vert; h_t, z_t)}\hspace*{0pt}}\text{reward log loss} \hspace*{.12em}\underbrace{-\ln {p\phi (\gamma_t ;\vert; h_t, z_t)}\hspace*{0pt}}\text{discount log loss} \ &\hspace*{.12em}\underbrace{+\beta{\operatorname{KL}!\big[{q\phi (z_t ;\vert; h_t, x_t)} ;\big|; {p_\phi (z_t ;\vert; h_t)} \big]}\hspace*{0pt}}_\text{KL loss} \Big]. \end{aligned} \end{gather}\tag{2} $

We jointly minimize the loss function with respect to the vector $\phi$ that contains all parameters of the world model using the Adam optimizer ([33]). We scale the KL loss by $\beta=0.1$ for Atari and by $\beta=1.0$ for continuous control ([34]).

KL balancing

The world model loss function in Equation 2 is the ELBO or variational free energy of a hidden Markov model that is conditioned on the action sequence. The world model can thus be interpreted as a sequential VAE, where the representation model is the approximate posterior and the transition predictor is the temporal prior. In the ELBO objective, the KL loss serves two purposes: it trains the prior toward the representations, and it regularizes the representations toward the prior. However, learning the transition function is difficult and we want to avoid regularizing the representations toward a poorly trained prior. To solve this problem, we minimize the KL loss faster with respect to the prior than the representations by using different learning rates, $\alpha=0.8$ for the prior and $1-\alpha$ for the approximate posterior. We implement this technique as shown in Algorithm 2 and refer to it as KL balancing. KL balancing encourages learning an accurate prior over increasing posterior entropy, so that the prior better approximates the aggregate posterior. KL balancing is different from and orthogonal to beta-VAEs ([34]).

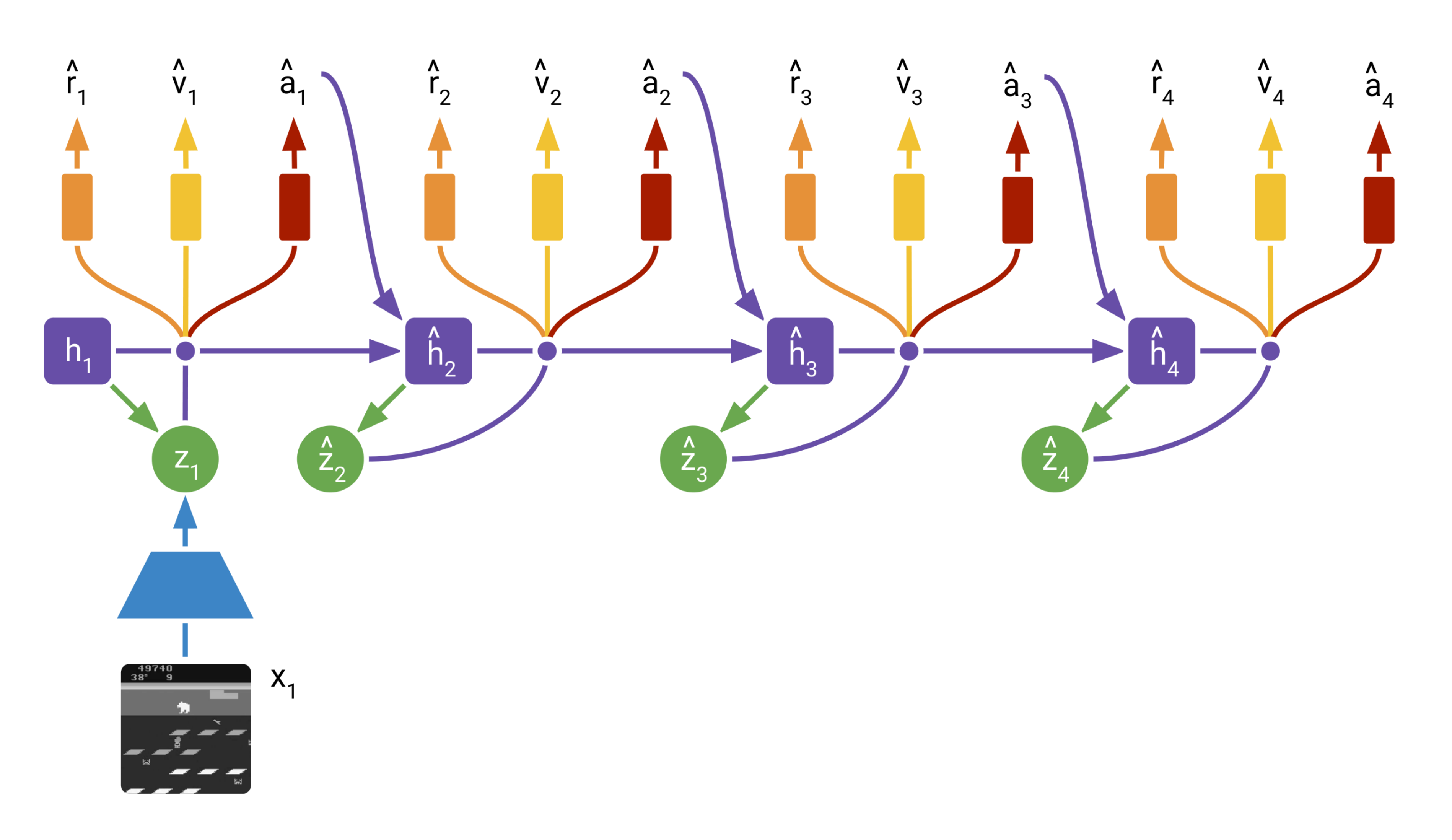

2.2 Behavior Learning

DreamerV2 learns long-horizon behaviors purely within its world model using an actor and a critic. The actor chooses actions for predicting imagined sequences of compact model states. The critic accumulates the future predicted rewards to take into account rewards beyond the planning horizon. Both the actor and critic operate on top of the learned model states and thus benefit from the representations learned by the world model. The world model is fixed during behavior learning, so the actor and value gradients do not affect its representations. Not predicting images during behavior learning lets us efficiently simulate 2500 latent trajectories in parallel on a single GPU.

Imagination MDP

To learn behaviors within the latent space of the world model, we define the imagination MPD as follows. The distribution of initial states $\hat{z}0$ in the imagination MDP is the distribution of compact model states encountered during world model training. From there, the transition predictor ${p\phi (\hat{z}t ;\vert; \hat{z}{t-1}, \hat{a}{t-1})}$ outputs sequences $\hat{z}{1:H}$ of compact model states up to the imagination horizon $H=15$. The mean of the reward predictor ${p_\phi (\hat{r}t;\vert;\hat{z}t)}$ is used as reward sequence $\hat{r}{1:H}$. The discount predictor ${p\phi (\hat{\gamma}_t;\vert;\hat{z}t)}$ outputs the discount sequence $\hat{\gamma}{1:H}$ that is used to down-weight rewards. Moreover, we weigh the loss terms of the actor and critic by the cumulative predicted discount factors to softly account for the possibility of episode ends.

Model components

To learn long-horizon behaviors in the imagination MDP, we leverage a stochastic actor that chooses actions and a deterministic critic. The actor and critic are trained cooperatively, where the actor aims to output actions that lead to states that maximize the critic output, while the critic aims to accurately estimate the sum of future rewards achieved by the actor from each imagined state. The actor and critic use the parameter vectors $\psi$ and $\xi$, respectively:

$ \begin{alignedat}{3} & \text{Actor:} \hspace{3.5em} && \hat{a}t \sim p\psi (\hat{a}t ;\vert; \hat{z}t) \ & \text{Critic:} \hspace{3.5em} && v\xi(\hat{z}t) \approx \operatorname{E}{p\phi, p_\psi}\Big[\textstyle\sum_{\tau \geq t} \hat{\gamma}^{\tau-t} \hat{r}_\tau \Big]. \ \end{alignedat}\tag{3} $

In contrast to the actual environment, the latent state sequence is Markovian, so that there is no need for the actor and critic to condition on more than the current model state. The actor and critic are both MLPs with ELU activations ([29]) and use 1M trainable parameters each. The actor outputs a categorical distribution over actions and the critic has a deterministic output. The two components are trained from the same imagined trajectories but optimize separate loss functions.

Critic loss function

The critic aims to predict the discounted sum of future rewards that the actor achieves in a given model state, known as the state value. For this, we leverage temporal-difference learning, where the critic is trained toward a value target that is constructed from intermediate rewards and critic outputs for later states. A common choice is the 1-step target that sums the current reward and the critic output for the following state. However, the imagination MDP lets us generate on-policy trajectories of multiple steps, suggesting the use of n-step targets that incorporate reward information into the critic more quickly. We follow DreamerV1 in using the more general $\lambda$-target ([35, 36]) that is defined recursively as follows:

$ \begin{gather} \begin{aligned} V^\lambda_t \doteq \hat{r}t + \hat{\gamma}t \begin{cases} (1 - \lambda) v\xi(\hat{z}{t+1}) + \lambda V^\lambda_{t+1} & \text{if}\quad t<H, \ v_\xi(\hat{z}_H) & \text{if}\quad t=H. \ \end{cases} \end{aligned} \end{gather}\tag{4} $

Intuitively, the $\lambda$-target is a weighted average of n-step returns for different horizons, where longer horizons are weighted exponentially less. We set $\lambda=0.95$ in practice, to focus more on long horizon targets than on short horizon targets. Given a trajectory of model states, rewards, and discount factors, we train the critic to regress the $\lambda$-return using a squared loss:

$ \begin{gather} \begin{aligned} \mathcal{L}(\xi) \doteq \operatorname{E}{p\phi, p_\psi}\Big[\textstyle \sum_{t=1}^{H-1} \frac{1}{2} \big(v_\xi(\hat{z}_t) - \operatorname{sg}(V^\lambda_t) \big)^2 \Big]. \end{aligned} \end{gather}\tag{5} $

We optimize the critic loss with respect to the critic parameters $\xi$ using the Adam optimizer. There is no loss term for the last time step because the target equals the critic at that step. We stop the gradients around the targets, denoted by the $\operatorname{sg}(\cdot)$ function, as typical in the literature. We stabilize value learning using a target network ([13]), namely, we compute the targets using a copy of the critic that is updated every $100$ gradient steps.

Actor loss function

The actor aims to output actions that maximize the prediction of long-term future rewards made by the critic. To incorporate intermediate rewards more directly, we train the actor to maximize the same $\lambda$-return that was computed for training the critic. There are different gradient estimators for maximizing the targets with respect to the actor parameters. DreamerV2 combines unbiased but high-variance Reinforce gradients with biased but low-variance straight-through gradients. Moreover, we regularize the entropy of the actor to encourage exploration where feasible while allowing the actor to choose precise actions when necessary.

Learning by Reinforce ([37]) maximizes the actor's probability of its own sampled actions weighted by the values of those actions. The variance of this estimator can be reduced by subtracting the state value as baseline, which does not depend on the current action. Intuitively, subtracting the baseline centers the weights and leads to faster learning. The benefit of Reinforce is that it produced unbiased gradients and the downside is that it can have high variance, even with baseline.

DreamerV1 relied entirely on reparameterization gradients ([30, 31]) to train the actor directly by backpropagating value gradients through the sequence of sampled model states and actions. DreamerV2 uses both discrete latents and discrete actions. To backpropagate through the sampled actions and state sequences, we leverage straight-through gradients ([32]). This results in a biased gradient estimate with low variance. The combined actor loss function is:

$ \begin{aligned} \mathcal{L}(\psi) \doteq \operatorname{E}{p\phi, p_\psi}\Big[\textstyle \sum_{t=1}^{H-1} \big(\underbrace{-\rho \ln p_\psi (\hat{a}t;\vert;\hat{z}t)\operatorname{sg}(V^\lambda_t-v\xi({\hat{z}t}))}\text{reinforce} \underbrace{-(1-\rho) V^\lambda_t}{\substack{\text{dynamics} \ \text{backprop}}} \underbrace{-\eta \operatorname{H}[a_t|\hat{z}t]}\text{entropy regularizer} \big)\Big]. \end{aligned}\tag{6} $

We optimize the actor loss with respect to the actor parameters $\psi$ using the Adam optimizer. We consider both Reinforce gradients and straight-through gradients, which backpropagate directly through the learned dynamics. Intuitively, the low-variance but biased dynamics backpropagation could learn faster initially and the unbiased but high-variance could to converge to a better solution. For Atari, we find Reinforce gradients to work substantially better and use $\rho=1$ and $\eta=10^{-3}$. For continuous control, we find dynamics backpropagation to work substantially better and use $\rho=0$ and $\eta=10^{-4}$. Annealing these hyper parameters can improve performance slightly but to avoid the added complexity we report the scores without annealing.

3. Experiments

Section Summary: DreamerV2, an AI system for playing Atari video games, was tested against four leading algorithms without internal models, and it performed better across all tested games using a standard set of 55 titles. The researchers compared results through four different ways of averaging scores—normalizing them against human players or world records—and recommended a new method that caps superhuman scores to fairly assess performance on every game. This evaluation ran to 200 million game steps in under 10 days on a single high-end graphics card, imagining thousands of times more internal scenarios than real ones to train the AI efficiently.

We evaluate DreamerV2 on the well-established Atari benchmark with sticky actions, comparing to four strong model-free algorithms. DreamerV2 outperforms the four model-free algorithms in all scenarios. For an extensive comparison, we report four scores according to four aggregation protocols and give a recommendation for meaningfully aggregating scores across games going forward. We also ablate the importance of discrete representations in the world model. Our implementation of DreamerV2 reaches 200M environment steps in under 10 days, while using only a single NVIDIA V100 GPU and a single environment instance. During the 200M environment steps, DreamerV2 learns its policy from 468B compact states imagined under the model, which is 10, 000 $\times$ more than the 50M inputs received from the real environment after action repeat. Refer to the project website for videos, the source code, and training curves in JSON format.^1

Experimental setup

We select the 55 games that prior works in the literature from different research labs tend to agree on ([14, 38, 15, 1, 39]) and recommend this set of games for evaluation going forward. We follow the evaluation protocol of [40] with 200M environment steps, action repeat of 4, a time limit of 108, 000 steps per episode that correspond to 30 minutes of game play, no access to life information, full action space, and sticky actions. Because the world model integrates information over time, DreamerV2 does not use frame stacking. The experiments use a single-task setup where a separate agent is trained for each game. Moreover, each agent uses only a single environment instance. We compare the algorithms based on both human gamer and human world record normalization ([25]).

Model-free baselines

We compare the learning curves and final scores of DreamerV2 to four model-free algorithms, IQN ([19]), Rainbow ([15]), C51 ([23]), and DQN ([13]). We use the scores of these agents provided by the Dopamine framework ([1]) that use sticky actions. These may differ from the reported results in the papers that introduce these algorithms in the deterministic Atari setup. The training time of Rainbow was reported at 10 days on a single GPU and using one environment instance.

3.1 Atari Performance

![**Figure 4:** Atari performance over 200M steps. See Table 1 for numeric scores. The standards in the literature to aggregate over tasks are shown in the left two plots. These normalize scores by a professional gamer and compute the median or mean over tasks ([13, 14]). In Section 3, we point out limitations of this methodology. As a robust measure of performance, we recommend the metric in the right-most plot. We normalize scores by the human world record ([25]) and then clip them, such that exceeding the record does not further increase the score, before averaging over tasks.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/d42vmszr/complex_fig_2856171499c0.png)

:Table 1: Atari performance at 200M steps. The scores of the 55 games are aggregated using the four different protocols described in Section 3. To overcome limitations of the previous metrics, we recommend the task mean of clipped record normalized scores as a robust measure of algorithm performance, shown in the right-most column. DreamerV2 outperforms previous single-GPU agents across all metrics. The baseline scores are taken from Dopamine Baselines ([1]).

| Agent | Gamer Median | Gamer Mean | Record Mean | Clipped Record Mean |

|---|---|---|---|---|

| DreamerV2 | 2.15 | 11.33 | 0.44 | 0.28 |

| DreamerV2 (schedules) | 2.64 | 10.45 | 0.43 | 0.28 |

| IQN | 1.29 | 8.85 | 0.21 | 0.21 |

| Rainbow | 1.47 | 9.12 | 0.17 | 0.17 |

| C51 | 1.09 | 7.70 | 0.15 | 0.15 |

| DQN | 0.65 | 2.84 | 0.12 | 0.12 |

The performance curves of DreamerV2 and four standard model-free algorithms are visualized in Figure 4. The final scores at 200M environment steps are shown in Table 1 and the scores on individual games are included in Table K.1. There are different approaches for aggregating the scores across the 55 games and we show that this choice can have a substantial impact on the relative performance between algorithms. To extensively compare DreamerV2 to the model-free algorithms, we consider the following four aggregation approaches:

- Gamer Median Atari scores are commonly normalized based on a random policy and a professional gamer, averaged over seeds, and the median over tasks is reported ([13, 14]). However, if almost half of the scores would be zero, the median would not be affected. Thus, we argue that median scores are not reflective of the robustness of an algorithm and results in wasted computational resources for games that will not affect the score.

- Gamer Mean Compared to the task median, the task mean considers all tasks. However, the gamer performed poorly on a small number of games, such as Crazy Climber, James Bond, and Video Pinball. This makes it easy for algorithms to achieve a high normalized score on these few games, which then dominate the task mean so it is not informative of overall performance.

- Record Mean Instead of normalizing based on the professional gamer, [25] suggest to normalize based on the registered human world record of each game. This partially addresses the outlier problem but the mean is still dominated by games where the algorithms easily achieve superhuman performance.

- Clipped Record Mean To overcome these limitations, we recommend normalizing by the human world record and then clipping the scores to not exceed a value of 1, so that performance above the record does not further increase the score. The result is a robust measure of algorithm performance on the Atari suite that considers performance across all games.

From Figure 4 and Table 1, we see that the different aggregation approaches let us examine agent performance from different angles. Interestingly, Rainbow clearly outperforms IQN in the first aggregation method but IQN clearly outperforms Rainbow in the remaining setups. DreamerV2 outperforms the model-free agents in all four metrics, with the largest margin in record normalized mean performance. Despite this, we recommend clipped record normalized mean as the most meaningful aggregation method, as it considers all tasks to a similar degree without being dominated by a small number of outlier scores. In Table 1, we also include DreamerV2 with schedules that anneal the actor entropy loss scale and actor gradient mixing over the course of training, which further increases the gamer median score of DreamerV2.

Individual games

The scores on individual Atari games at 200M environment steps are included in Table K.1, alongside the model-free algorithms and the baselines of random play, human gamer, and human world record. We filled in reasonable values for the 2 out of 55 games that have no registered world record. Figure E.1 compares the score differences between DreamerV2 and each model-free algorithm for the individual games. DreamerV2 achieves comparable or higher performance on most games except for Video Pinball. We hypothesize that the reconstruction loss of the world model does not encourage learning a meaningful latent representation because the most important object in the game, the ball, occupies only a single pixel. One the other hand, DreamerV2 achieves the strongest improvements over the model-free agents on the games James Bond, Up N Down, and Assault.

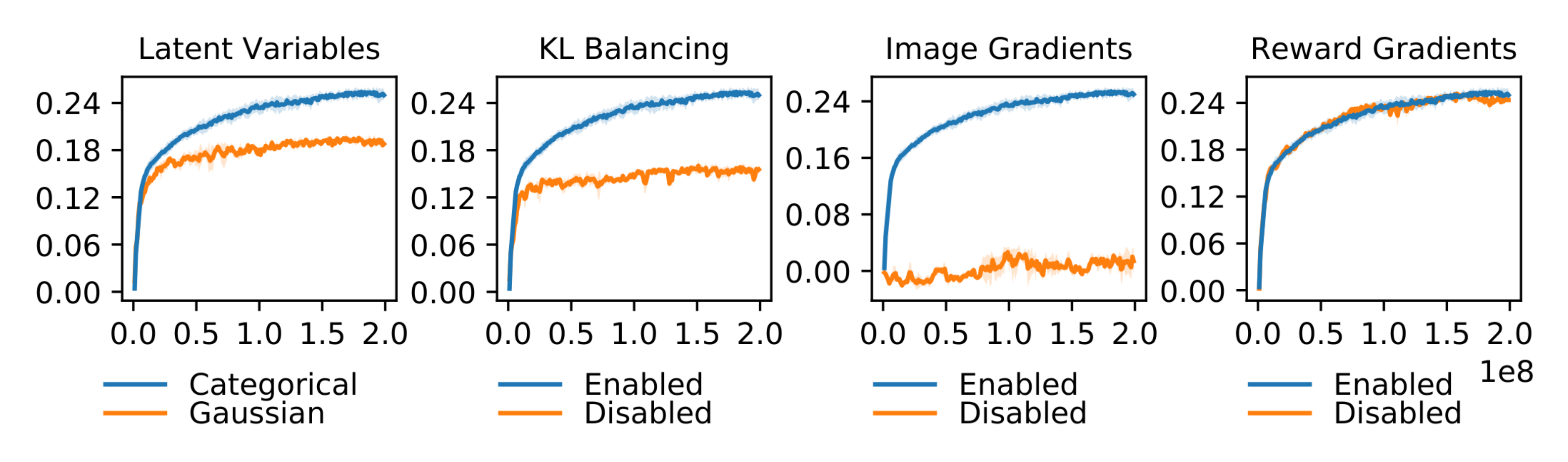

3.2 Ablation Study

:Table 2: Ablations to DreamerV2 measured by their Atari performance at 200M frames, sorted by the last column. The this experiment uses a slightly earlier version of DreamerV2 compared to Table 1. Each ablation only removes one part of the DreamerV2 agent. Discrete latent variables and KL balancing substantially contribute to the success of DreamerV2. Moreover, the world model relies on image gradients to learn general representations that lead to successful behaviors, even if the representations are not specifically learned for predicting past rewards.

| Agent | Gamer Median | Gamer Mean | Record Mean | Clipped Record Mean |

|---|---|---|---|---|

| DreamerV2 | 1.64 | 11.33 | 0.36 | 0.25 |

| No Layer Norm | 1.66 | 5.95 | 0.38 | 0.25 |

| No Reward Gradients | 1.68 | 6.18 | 0.37 | 0.24 |

| No Discrete Latents | 1.08 | 3.71 | 0.24 | 0.19 |

| No KL Balancing | 0.84 | 3.49 | 0.19 | 0.16 |

| No Policy Reinforce | 0.69 | 2.74 | 0.16 | 0.15 |

| No Image Gradients | 0.04 | 0.31 | 0.01 | 0.01 |

To understand which ingredients of DreamerV2 are responsible for its success, we conduct an extensive ablation study. We compare equipping the world model with categorical latents, as in DreamerV2, to Gaussian latents, as in DreamerV1. Moreover, we study the importance of KL balancing. Finally, we investigate the importance of gradients from image reconstruction and reward prediction for learning the model representations, by stopping one of the two gradient signals before entering the model states. The results of the ablation study are summarized in Figure 5 and Table 2. Refer to the appendix for the score curves of the individual tasks.

Categorical latents

Categorical latent variables outperform than Gaussian latent variables on 42 tasks, achieve lower performance on 8 tasks, and are tied on 5 tasks. We define a tie as being within $5%$ of another. While we do not know the reason why the categorical variables are beneficial, we state several hypotheses that can be investigated in future work:

- A categorical prior can perfectly fit the aggregate posterior, because a mixture of categoricals is again a categorical. In contrast, a Gaussian prior cannot match a mixture of Gaussian posteriors, which could make it difficult to predict multi-modal changes between one image and the next.

- The level of sparsity enforced by a vector of categorical latent variables could be beneficial for generalization. Flattening the sample from the 32 categorical with 32 classes each results in a sparse binary vector of length 1024 with 32 active bits.

- Despite common intuition, categorical variables may be easier to optimize than Gaussian variables, possibly because the straight-through gradient estimator ignores a term that would otherwise scale the gradient. This could reduce exploding and vanishing gradients.

- Categorical variables could be a better inductive bias than unimodal continuous latent variables for modeling the non-smooth aspects of Atari games, such as when entering a new room, or when collected items or defeated enemies disappear from the image.

KL balancing

KL balancing outperforms the standard KL regularizer on 44 tasks, achieves lower performance on 6 tasks, and is tied on 5 tasks. Learning accurate prior dynamics of the world model is critical because it is used for imagining latent state trajectories using policy optimization. By scaling up the prior cross entropy relative to the posterior entropy, the world model is encouraged to minimize the KL by improving its prior dynamics toward the more informed posteriors, as opposed to reducing the KL by increasing the posterior entropy. KL balancing may also be beneficial for probabilistic models with learned priors beyond world models.

Model gradients

Stopping the image gradients increases performance on 3 tasks, decreases performance on 51 tasks, and is tied on 1 task. The world model of DreamerV2 thus heavily relies on the learning signal provided by the high-dimensional images. Stopping the reward gradients increases performance on 15 tasks, decreases performance on 22 tasks, and is tied on 18 tasks. Figure H.1 further shows that the difference in scores is small. In contrast to MuZero, DreamerV2 thus learns general representations of the environment state from image information alone. Stopping reward gradients improved performance on a number of tasks, suggesting that the representations that are not specific to previously experienced rewards may generalize better to unseen situations.

Policy gradients

Using only Reinforce gradients to optimize the policy increases performance on 18 tasks, decreases performance on 24 tasks, and is tied on 13 tasks. This shows that DreamerV2 relies mostly on Reinforce gradients to learn the policy. However, mixing Reinforce and straight-through gradients yields a substantial improvement on James Bond and Seaquest, leading to a higher gamer normalized task mean score. Using only straight-through gradients to optimize the policy increases performance on 5 tasks, decreases performance on 44 tasks, and is tied on 6 tasks. We conjecture that straight-through gradients alone are not well suited for policy optimization because of their bias.

4. Related Work

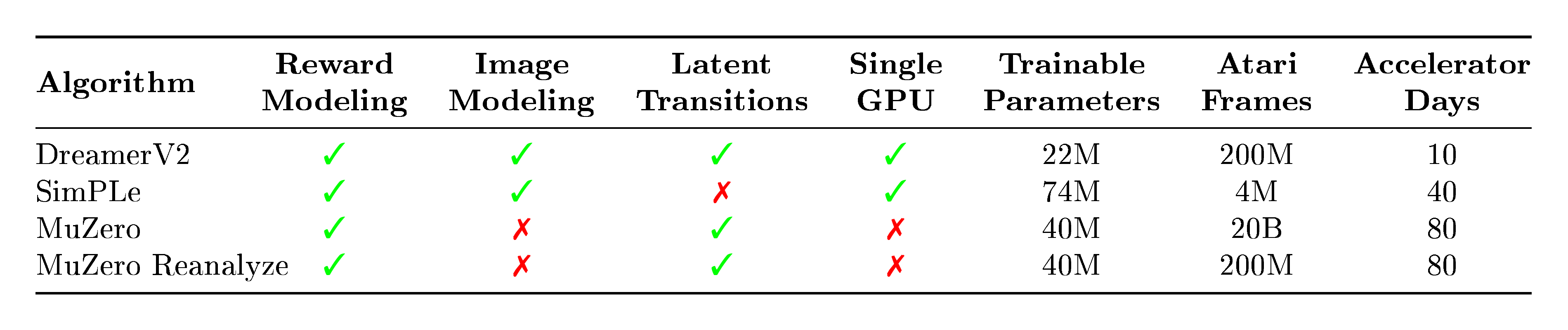

Section Summary: Most AI agents for playing Atari games have used model-free techniques, starting with the Deep Q-Network (DQN) that trains neural networks via Q-learning with tricks like experience replay, and later improvements like bias corrections, better memory prioritization, and policy-based methods such as PPO. World models, which build internal simulations of the environment, have been explored in various ways, such as predicting images or combining model-based planning with model-free training, though they're often limited to simpler tasks. The section details two Atari-specific approaches: SimPLe, which predicts video frames in pixel space to guide a policy agent but underperforms at standard evaluation scales, and MuZero, which uses tree search for planning with task-specific models but demands massive computation; in contrast, the new DreamerV2 method learns fuller world models from images more efficiently and accessibly.

Model-free Atari

The majority of agents applied to the Atari benchmark have been trained using model-free algorithms. DQN ([13]) showed that deep neural network policies can be trained using Q-learning by incorporating experience replay and target networks. Several works have extended DQN to incorporate bias correction as in DDQN ([20]), prioritized experience replay ([21]), architectural improvements ([22]), and distributional value learning ([23, 41, 19]). Besides value learning, agents based on policy gradients have targeted the Atari benchmark, such as ACER ([42]), PPO ([42]), ACKTR ([43]), and Reactor ([44]). Another line of work has focused on improving performance by distributing data collection, often while increasing the budget of environment steps beyond 200M ([14, 45, 46, 47, 39]).

World models

Several model-based agents focus on proprioceptive inputs ([7, 48, 49, 50, 51, 52, 53]), model images without using them for planning ([16, 54, 26, 17, 55, 56, 57, 58, 59, 60]), or combine the benefits of model-based and model-free approaches ([61, 62, 63, 64, 65, 8, 66, 67, 68, 69]). [70] optimize discrete latents using evolutionary search. [71] combine reinforce and reparameterization gradients. Most world model agents with image inputs have thus far been limited to relatively simple control tasks ([7, 72, 8, 9, 10, 11]). We explain the two model-based approaches that were applied to Atari in detail below.

:::

Table 3: Conceptual comparison of recent RL algorithms that leverage planning with a learned model. DreamerV2 and SimPLe learn complete models of the environment by leveraging the learning signal provided by the image inputs, while MuZero learns its model through value gradients that are specific to an individual task. The Monte-Carlo tree search used by MuZero is effective but adds complexity and is challenging to parallelize. This component is orthogonal to the world model proposed here.

:::

SimPLe

The SimPLe agent ([2]) learns a video prediction model in pixel-space and uses its predictions to train a PPO agent ([42]), as shown in Table 3. The model directly predicts each frame from the previous four frames and receives an additional discrete latent variable as input. The authors evaluate SimPLe on a subset of Atari games for 400k and 2M environment steps, after which they report diminishing returns. Some recent model-free methods have followed the comparison at 400k steps ([73, 74]). However, the highest performance achieved in this data-efficient regime is a gamer normalized median score of 0.28 ([74]) that is far from human-level performance. Instead, we focus on the well-established and competitive evaluation after 200M frames, where many successful model-free algorithms are available for comparison.

MuZero

The MuZero agent ([18]) learns a sequence model of rewards and values ([75]) to solve reinforcement learning tasks via Monte-Carlo Tree Search (MCTS; [76, 77]). The sequence model is trained purely by predicting task-specific information and does not incorporate explicit representation learning using the images, as shown in Table 3. MuZero shows that with significant engineering effort and a vast computational budget, planning can achieve impressive performance on several board games and deterministic Atari games. However, MuZero is not publicly available, and it would require over 2 months to train an Atari agent on one GPU. By comparison, DreamerV2 is a simple algorithm that achieves human-level performance on Atari on a single GPU in 10 days, making it reproducible for many researchers. Moreover, the advanced planning components of MuZero are complementary and could be applied to the accurate world models learned by DreamerV2. DreamerV2 leverages the additional learning signal provided by the input images, analogous to recent successes by semi-supervised image classification ([78, 79, 80]).

5. Discussion

Section Summary: DreamerV2 is a smart AI agent that learns to play Atari games at a human level by predicting outcomes in a hidden "world model" space, without needing direct rewards, and it beats top non-model-based agents using just one computer chip and the same training time. The developers made simple tweaks to an earlier version, like using a special type of data encoding and a balancing technique, which boosted its performance, and it works well by focusing on visual details from images rather than reward predictions. Overall, DreamerV2 proves that this planning-based approach can outshine heavily researched alternatives, paving the way for more efficient learning across tasks, robots, and exploration using uncertainty.

We present DreamerV2, a model-based agent that achieves human-level performance on the Atari 200M benchmark by learning behaviors purely from the latent-space predictions of a separately trained world model. Using a single GPU and a single environment instance, DreamerV2 outperforms top model-free single-GPU agents Rainbow and IQN using the same computational budget and training time. To develop DreamerV2, we apply several small modifications to the Dreamer agent ([11]). We confirm experimentally that learning a categorical latent space and using KL balancing improves the performance of the agent. Moreover, we find the DreamerV2 relies on image information for learning generally useful representations — its performance is not impacted by whether the representations are especially learned for predicting rewards.

DreamerV2 serves as proof of concept, showing that model-based RL can outperform top model-free algorithms on the most competitive RL benchmarks, despite the years of research and engineering effort that modern model-free agents rest upon. Beyond achieving strong performance on individual tasks, world models open avenues for efficient transfer and multi-task learning, sample-efficient learning on physical robots, and global exploration based on uncertainty estimates.

Acknowledgements

We thank our anonymous reviewers for their feedback and Nick Rhinehart for an insightful discussion about the potential benefits of categorical latent variables.

Appendix

Section Summary: The appendix showcases DreamerV2's capabilities beyond its main Atari tests, including success in controlling a humanoid robot to stand and walk using only pixel inputs in a continuous-action environment, marking a first for such challenges, and strong performance on the exploration-heavy Atari game Montezuma's Revenge without special tricks, matching advanced methods by tweaking a single setting. It also details improvements made to the original Dreamer agent, like using categorical data representations and specific learning tweaks that boosted results, while noting unsuccessful experiments such as binary data or added normalization that didn't help much. Finally, it lists key hyperparameters for Atari games, suggesting ranges to tune for new tasks.

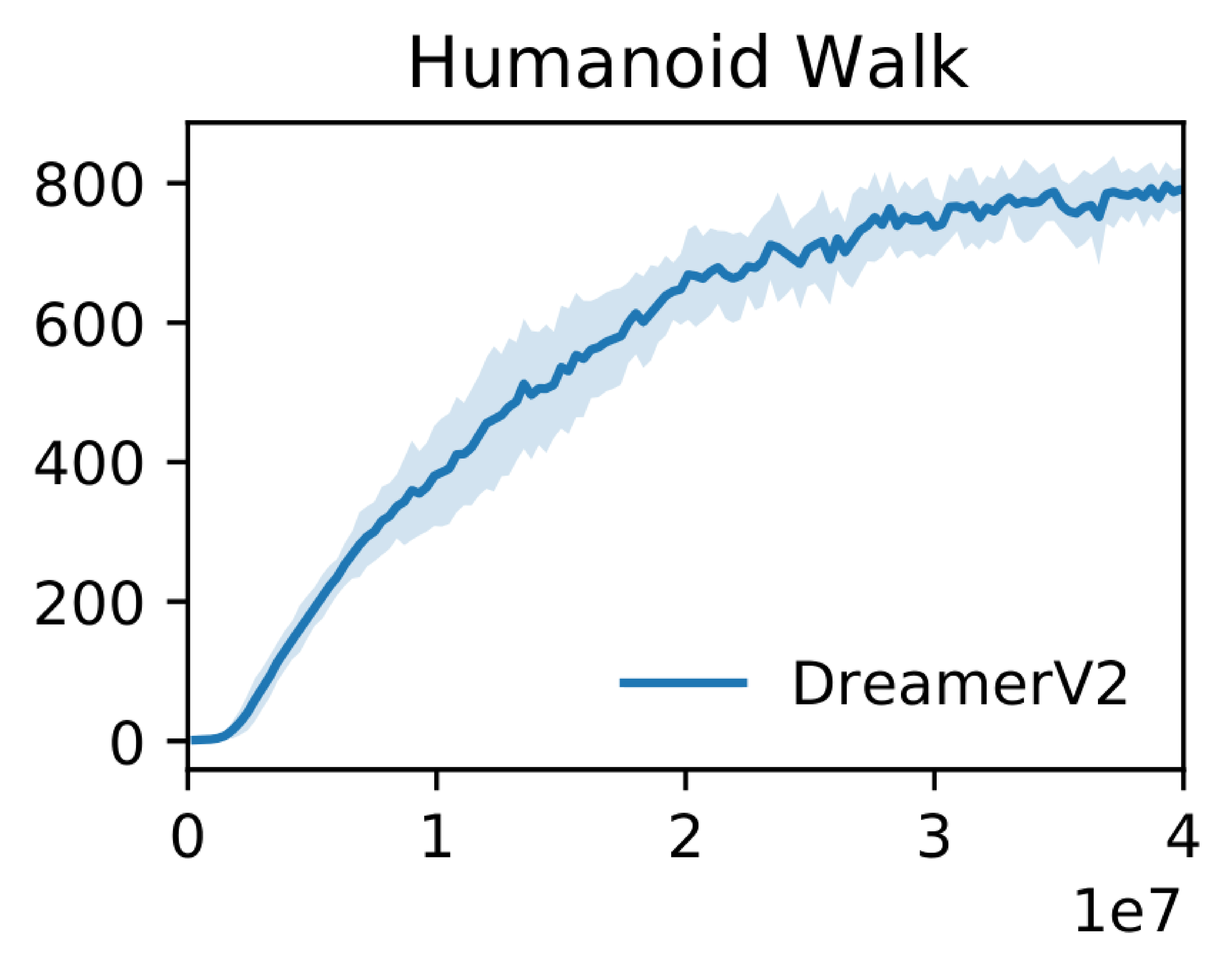

A. Humanoid from Pixels

While the main experiments of this paper focus on the Atari benchmark with discrete actions, DreamerV2 is also applicable to control tasks with continuous actions. For this, we the actor outputs a truncated normal distribution instead of a categorical distribution. To demonstrate the abilities of DreamerV2 for continuous control, we choose the challenging humanoid environment with only image inputs, shown in Figure A.1. We find that for continuous control tasks, dynamics backpropagation substantially outperforms reinforce gradients and thus set $\rho=0$. We also set $\eta=10^{-5}$ and $\beta=2$ to further accelerate learning. We find that DreamerV2 reliably solves both the stand-up motion required at the beginning of the episode and the subsequent walking. The score is shown in Figure A.2. To the best of our knowledge, this constitutes the first published result of solving the humanoid environment from only pixel inputs.

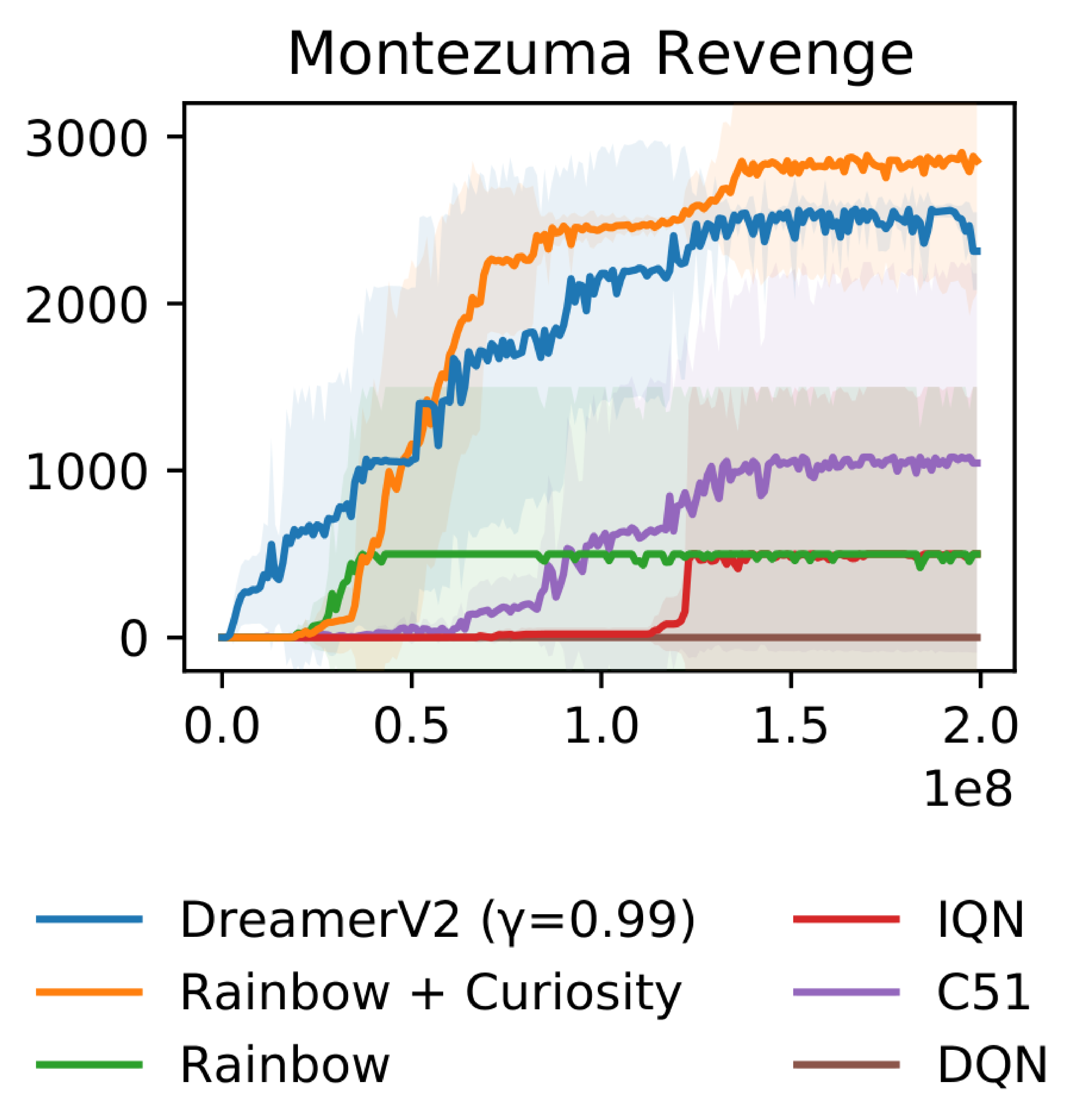

B. Montezuma's Revenge

While our main experiments use the same hyper parameters across all tasks, we find that DreamerV2 achieves higher performance on Montezuma's Revenge by using a lower discount factor of $\gamma=0.99$, possibly to stabilize value learning under sparse rewards. Figure B.2 shows the resulting performance, with all other hyper parameters left at their defaults. DreamerV2 outperforms existing model-free approaches on the hard-exploration game Montezuma's Revenge and matches the performance of the explicit exploration algorithm ICM ([81]) that was applied on top of Rainbow by [82]. This suggests that the world model may help with solving sparse reward tasks, for example due to improved generalization, efficient policy optimization in the compact latent space enabling more actor critic updates, or because the reward predictor generalizes and thus smooths out the sparse rewards.

C. Summary of Modifications

To develop DreamerV2, we used the Dreamer agent ([11]) as a starting point. This subsection describes the changes that we applied to the agent to achieve high performance on the Atari benchmark, as well as the changes that were tried but not found to increase performance and thus were not not included in DreamerV2.

Summary of changes that were tried and were found to help:

- Categorical latents Using categorical latent states using straight-through gradients in the world model instead of Gaussian latents with reparameterized gradients.

- KL balancing Separately scaling the prior cross entropy and the posterior entropy in the KL loss to encourage learning an accurate temporal prior, instead of using free nats.

- Reinforce only Reinforce gradients worked substantially better for Atari than dynamics backpropagation. For continuous control, dynamics backpropagation worked substantially better.

- Model size Increasing the number of units or feature maps per layer of all model components, resulting in a change from 13M parameters to 22M parameters.

- Policy entropy Regularizing the policy entropy for exploration both in imagination and during data collection, instead of using external action noise during data collection.

Summary of changes that were tried but were found to not help substantially:

- Binary latents Using a larger number of binary latents for the world model instead of categorical latents, which could have encouraged a more disentangled representation, was worse.

- Long-term entropy Including the policy entropy into temporal-difference loss of the value function, so that the actor seeks out states with high action entropy beyond the planning horizon.

- Mixed actor gradients Combining Reinforce and dynamics backpropagation gradients for learning the actor instead of Reinforce provided marginal or no benefits.

- Scheduling Scheduling the learning rates, KL scale, actor entropy loss scale, and actor gradient mixing (from 0.1 to 0) provided marginal or no benefits.

- Layer norm Using layer normalization in the GRU that is used as part of the RSSM latent transition model, instead of no normalization, provided no or marginal benefits.

Due to the large computational requirements, a comprehensive ablation study on this list of all changes is unfortunately infeasible for us. This would require 55 tasks times 5 seeds for 10 days per change to run, resulting in over 60, 000 GPU hours per change. However, we include ablations for the most important design choices in the main text of the paper.

D. Hyper Parameters

:::

Table D.1: Atari hyper parameters of DreamerV2. When tuning the agent for a new task, we recommend searching over the KL loss scale $\beta \in {0.1, 0.3, 1, 3}$, actor entropy loss scale $\eta \in {3\cdot10^{-5}, 10^{-4}, 3\cdot10^{-4}, 10^{-3}}$, and the discount factor $\gamma \in {0.99, 0.999}$. The training frequency update should be increased when aiming for higher data-efficiency.

:::

E. Agent Comparison

F. Model-Free Comparison

G. Latents and KL Balancing Ablations

H. Representation Learning Ablations

I. Policy Learning Ablations

J. Additional Ablations

K. Atari Task Scores

References

Section Summary: This section is a bibliography listing over 35 key research papers and resources primarily focused on deep reinforcement learning, a branch of artificial intelligence where systems learn decision-making through trial and error, often applied to games like Atari. It covers topics such as model-based planning, world simulations, neural network architectures, and optimization techniques that help AI agents explore and improve strategies more efficiently. The references include recent preprints from arXiv, conference proceedings, and foundational texts dating back to the 1980s and 1990s, forming a foundation for advancing AI control and prediction from raw data like images.

[1] PS Castro, S Moitra, C Gelada, S Kumar, MG Bellemare. Dopamine: A research framework for deep reinforcement learning. arXiv preprint arXiv:1812.06110, 2018.

[2] L Kaiser, M Babaeizadeh, P Milos, B Osinski, RH Campbell, K Czechowski, D Erhan, C Finn, P Kozakowski, S Levine, et al. Model-based reinforcement learning for atari. arXiv preprint arXiv:1903.00374, 2019.

[3] RS Sutton. Dyna, an integrated architecture for learning, planning, and reacting. ACM SIGART Bulletin, 2(4), 1991.

[4] A Byravan, JT Springenberg, A Abdolmaleki, R Hafner, M Neunert, T Lampe, N Siegel, N Heess, M Riedmiller. Imagined value gradients: Model-based policy optimization with transferable latent dynamics models. arXiv preprint arXiv:1910.04142, 2019.

[5] R Sekar, O Rybkin, K Daniilidis, P Abbeel, D Hafner, D Pathak. Planning to explore via self-supervised world models. arXiv preprint arXiv:2005.05960, 2020.

[6] T Yu, G Thomas, L Yu, S Ermon, J Zou, S Levine, C Finn, T Ma. Mopo: Model-based offline policy optimization. arXiv preprint arXiv:2005.13239, 2020.

[7] M Watter, J Springenberg, J Boedecker, M Riedmiller. Embed to control: A locally linear latent dynamics model for control from raw images. Advances in neural information processing systems, 2015.

[8] D Ha J Schmidhuber. World models. arXiv preprint arXiv:1803.10122, 2018.

[9] D Hafner, T Lillicrap, I Fischer, R Villegas, D Ha, H Lee, J Davidson. Learning latent dynamics for planning from pixels. arXiv preprint arXiv:1811.04551, 2018.

[10] M Zhang, S Vikram, L Smith, P Abbeel, M Johnson, S Levine. Solar: deep structured representations for model-based reinforcement learning. International Conference on Machine Learning, 2019.

[11] D Hafner, T Lillicrap, J Ba, M Norouzi. Dream to control: Learning behaviors by latent imagination. arXiv preprint arXiv:1912.01603, 2019.

[12] MG Bellemare, Y Naddaf, J Veness, M Bowling. The arcade learning environment: An evaluation platform for general agents. Journal of Artificial Intelligence Research, 47, 2013.

[13] V Mnih, K Kavukcuoglu, D Silver, AA Rusu, J Veness, MG Bellemare, A Graves, M Riedmiller, AK Fidjeland, G Ostrovski, et al. Human-level control through deep reinforcement learning. Nature, 518(7540), 2015.

[14] V Mnih, AP Badia, M Mirza, A Graves, T Lillicrap, T Harley, D Silver, K Kavukcuoglu. Asynchronous methods for deep reinforcement learning. International Conference on Machine Learning, 2016.

[15] M Hessel, J Modayil, H Van Hasselt, T Schaul, G Ostrovski, W Dabney, D Horgan, B Piot, M Azar, D Silver. Rainbow: Combining improvements in deep reinforcement learning. Thirty-Second AAAI Conference on Artificial Intelligence, 2018.

[16] J Oh, X Guo, H Lee, RL Lewis, S Singh. Action-conditional video prediction using deep networks in atari games. Advances in Neural Information Processing Systems, 2015.

[17] S Chiappa, S Racaniere, D Wierstra, S Mohamed. Recurrent environment simulators. arXiv preprint arXiv:1704.02254, 2017.

[18] J Schrittwieser, I Antonoglou, T Hubert, K Simonyan, L Sifre, S Schmitt, A Guez, E Lockhart, D Hassabis, T Graepel, et al. Mastering atari, go, chess and shogi by planning with a learned model. arXiv preprint arXiv:1911.08265, 2019.

[19] W Dabney, G Ostrovski, D Silver, R Munos. Implicit quantile networks for distributional reinforcement learning. arXiv preprint arXiv:1806.06923, 2018.

[20] H Van Hasselt, A Guez, D Silver. Deep reinforcement learning with double q-learning. arXiv preprint arXiv:1509.06461, 2015.

[21] T Schaul, J Quan, I Antonoglou, D Silver. Prioritized experience replay. arXiv preprint arXiv:1511.05952, 2015.

[22] Z Wang, T Schaul, M Hessel, H Hasselt, M Lanctot, N Freitas. Dueling network architectures for deep reinforcement learning. International conference on machine learning, 2016.

[23] MG Bellemare, W Dabney, R Munos. A distributional perspective on reinforcement learning. arXiv preprint arXiv:1707.06887, 2017.

[24] M Fortunato, MG Azar, B Piot, J Menick, I Osband, A Graves, V Mnih, R Munos, D Hassabis, O Pietquin, et al. Noisy networks for exploration. arXiv preprint arXiv:1706.10295, 2017.

[25] M Toromanoff, E Wirbel, F Moutarde. Is deep reinforcement learning really superhuman on atari? leveling the playing field. arXiv preprint arXiv:1908.04683, 2019.

[26] M Karl, M Soelch, J Bayer, P van der Smagt. Deep variational bayes filters: Unsupervised learning of state space models from raw data. arXiv preprint arXiv:1605.06432, 2016.

[27] Y LeCun, B Boser, JS Denker, D Henderson, RE Howard, W Hubbard, LD Jackel. Backpropagation applied to handwritten zip code recognition. Neural computation, 1(4), 1989.

[28] K Cho, B Van Merriënboer, C Gulcehre, D Bahdanau, F Bougares, H Schwenk, Y Bengio. Learning phrase representations using rnn encoder-decoder for statistical machine translation. arXiv preprint arXiv:1406.1078, 2014.

[29] DA Clevert, T Unterthiner, S Hochreiter. Fast and accurate deep network learning by exponential linear units (elus). arXiv preprint arXiv:1511.07289, 2015.

[30] DP Kingma M Welling. Auto-encoding variational bayes. arXiv preprint arXiv:1312.6114, 2013.

[31] DJ Rezende, S Mohamed, D Wierstra. Stochastic backpropagation and approximate inference in deep generative models. arXiv preprint arXiv:1401.4082, 2014.

[32] Y Bengio, N Léonard, A Courville. Estimating or propagating gradients through stochastic neurons for conditional computation. arXiv preprint arXiv:1308.3432, 2013.

[33] DP Kingma J Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

[34] I Higgins, L Matthey, A Pal, C Burgess, X Glorot, M Botvinick, S Mohamed, A Lerchner. beta-vae: Learning basic visual concepts with a constrained variational framework. International Conference on Learning Representations, 2016.

[35] RS Sutton AG Barto. Reinforcement learning: An introduction. MIT press, 2018.

[36] J Schulman, P Moritz, S Levine, M Jordan, P Abbeel. High-dimensional continuous control using generalized advantage estimation. arXiv preprint arXiv:1506.02438, 2015.

[37] RJ Williams. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine learning, 8(3-4), 1992.

[38] G Brockman, V Cheung, L Pettersson, J Schneider, J Schulman, J Tang, W Zaremba. Openai gym, 2016.

[39] AP Badia, B Piot, S Kapturowski, P Sprechmann, A Vitvitskyi, D Guo, C Blundell. Agent57: Outperforming the atari human benchmark. arXiv preprint arXiv:2003.13350, 2020.

[40] MC Machado, MG Bellemare, E Talvitie, J Veness, M Hausknecht, M Bowling. Revisiting the arcade learning environment: Evaluation protocols and open problems for general agents. Journal of Artificial Intelligence Research, 61, 2018.

[41] W Dabney, M Rowland, MG Bellemare, R Munos. Distributional reinforcement learning with quantile regression. arXiv preprint arXiv:1710.10044, 2017.

[42] J Schulman, F Wolski, P Dhariwal, A Radford, O Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017a.

[43] Y Wu, E Mansimov, RB Grosse, S Liao, J Ba. Scalable trust-region method for deep reinforcement learning using kronecker-factored approximation. Advances in neural information processing systems, 2017.

[44] A Gruslys, W Dabney, MG Azar, B Piot, M Bellemare, R Munos. The reactor: A fast and sample-efficient actor-critic agent for reinforcement learning. arXiv preprint arXiv:1704.04651, 2017.

[45] J Schulman, F Wolski, P Dhariwal, A Radford, O Klimov. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347, 2017b.

[46] D Horgan, J Quan, D Budden, G Barth-Maron, M Hessel, H Van Hasselt, D Silver. Distributed prioritized experience replay. arXiv preprint arXiv:1803.00933, 2018.

[47] S Kapturowski, G Ostrovski, J Quan, R Munos, W Dabney. Recurrent experience replay in distributed reinforcement learning. International conference on learning representations, 2018.

[48] Y Gal, R McAllister, CE Rasmussen. Improving pilco with bayesian neural network dynamics models. Data-Efficient Machine Learning workshop, ICML, 2016.

[49] JCG Higuera, D Meger, G Dudek. Synthesizing neural network controllers with probabilistic model based reinforcement learning. arXiv preprint arXiv:1803.02291, 2018.

[50] M Henaff, WF Whitney, Y LeCun. Model-based planning with discrete and continuous actions. arXiv preprint arXiv:1705.07177, 2018.

[51] K Chua, R Calandra, R McAllister, S Levine. Deep reinforcement learning in a handful of trials using probabilistic dynamics models. Advances in Neural Information Processing Systems, 2018.

[52] T Wang, X Bao, I Clavera, J Hoang, Y Wen, E Langlois, S Zhang, G Zhang, P Abbeel, J Ba. Benchmarking model-based reinforcement learning. CoRR, abs/1907.02057, 2019.

[53] T Wang J Ba. Exploring model-based planning with policy networks. arXiv preprint arXiv:1906.08649, 2019.

[54] RG Krishnan, U Shalit, D Sontag. Deep kalman filters. arXiv preprint arXiv:1511.05121, 2015.

[55] M Babaeizadeh, C Finn, D Erhan, RH Campbell, S Levine. Stochastic variational video prediction. arXiv preprint arXiv:1710.11252, 2017.

[56] M Gemici, CC Hung, A Santoro, G Wayne, S Mohamed, DJ Rezende, D Amos, T Lillicrap. Generative temporal models with memory. arXiv preprint arXiv:1702.04649, 2017.

[57] E Denton R Fergus. Stochastic video generation with a learned prior. arXiv preprint arXiv:1802.07687, 2018.

[58] L Buesing, T Weber, S Racaniere, S Eslami, D Rezende, DP Reichert, F Viola, F Besse, K Gregor, D Hassabis, et al. Learning and querying fast generative models for reinforcement learning. arXiv preprint arXiv:1802.03006, 2018.

[59] A Doerr, C Daniel, M Schiegg, D Nguyen-Tuong, S Schaal, M Toussaint, S Trimpe. Probabilistic recurrent state-space models. arXiv preprint arXiv:1801.10395, 2018.

[60] K Gregor F Besse. Temporal difference variational auto-encoder. arXiv preprint arXiv:1806.03107, 2018.

[61] G Kalweit J Boedecker. Uncertainty-driven imagination for continuous deep reinforcement learning. Conference on Robot Learning, 2017.

[62] A Nagabandi, G Kahn, RS Fearing, S Levine. Neural network dynamics for model-based deep reinforcement learning with model-free fine-tuning. arXiv preprint arXiv:1708.02596, 2017.

[63] T Weber, S Racanière, DP Reichert, L Buesing, A Guez, DJ Rezende, AP Badia, O Vinyals, N Heess, Y Li, et al. Imagination-augmented agents for deep reinforcement learning. arXiv preprint arXiv:1707.06203, 2017.

[64] T Kurutach, I Clavera, Y Duan, A Tamar, P Abbeel. Model-ensemble trust-region policy optimization. arXiv preprint arXiv:1802.10592, 2018.

[65] J Buckman, D Hafner, G Tucker, E Brevdo, H Lee. Sample-efficient reinforcement learning with stochastic ensemble value expansion. Advances in Neural Information Processing Systems, 2018.

[66] G Wayne, CC Hung, D Amos, M Mirza, A Ahuja, A Grabska-Barwinska, J Rae, P Mirowski, JZ Leibo, A Santoro, et al. Unsupervised predictive memory in a goal-directed agent. arXiv preprint arXiv:1803.10760, 2018.

[67] M Igl, L Zintgraf, TA Le, F Wood, S Whiteson. Deep variational reinforcement learning for pomdps. arXiv preprint arXiv:1806.02426, 2018.

[68] A Srinivas, A Jabri, P Abbeel, S Levine, C Finn. Universal planning networks. arXiv preprint arXiv:1804.00645, 2018.

[69] AX Lee, A Nagabandi, P Abbeel, S Levine. Stochastic latent actor-critic: Deep reinforcement learning with a latent variable model. arXiv preprint arXiv:1907.00953, 2019.

[70] S Risi KO Stanley. Deep neuroevolution of recurrent and discrete world models. Proceedings of the Genetic and Evolutionary Computation Conference, 2019.

[71] P Parmas, CE Rasmussen, J Peters, K Doya. Pipps: Flexible model-based policy search robust to the curse of chaos. arXiv preprint arXiv:1902.01240, 2019.

[72] F Ebert, C Finn, AX Lee, S Levine. Self-supervised visual planning with temporal skip connections. arXiv preprint arXiv:1710.05268, 2017.

[73] A Srinivas, M Laskin, P Abbeel. Curl: Contrastive unsupervised representations for reinforcement learning. arXiv preprint arXiv:2004.04136, 2020.

[74] I Kostrikov, D Yarats, R Fergus. Image augmentation is all you need: Regularizing deep reinforcement learning from pixels. arXiv preprint arXiv:2004.13649, 2020.

[75] J Oh, S Singh, H Lee. Value prediction network. Advances in Neural Information Processing Systems, 2017.

[76] R Coulom. Efficient selectivity and backup operators in monte-carlo tree search. International conference on computers and games. Springer, 2006.

[77] D Silver, J Schrittwieser, K Simonyan, I Antonoglou, A Huang, A Guez, T Hubert, L Baker, M Lai, A Bolton, et al. Mastering the game of go without human knowledge. Nature, 550(7676), 2017.

[78] T Chen, S Kornblith, M Norouzi, G Hinton. A simple framework for contrastive learning of visual representations. arXiv preprint arXiv:2002.05709, 2020.

[79] K He, H Fan, Y Wu, S Xie, R Girshick. Momentum contrast for unsupervised visual representation learning. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020.

[80] JB Grill, F Strub, F Altché, C Tallec, PH Richemond, E Buchatskaya, C Doersch, BA Pires, ZD Guo, MG Azar, et al. Bootstrap your own latent: A new approach to self-supervised learning. arXiv preprint arXiv:2006.07733, 2020.

[81] D Pathak, P Agrawal, AA Efros, T Darrell. Curiosity-driven exploration by self-supervised prediction. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, 2017.

[82] AA Taiga, W Fedus, MC Machado, A Courville, MG Bellemare. On bonus based exploration methods in the arcade learning environment. International Conference on Learning Representations, 2019.