Mixtral of Experts

Authors: Albert Q. Jiang, Alexandre Sablayrolles, Antoine Roux, Arthur Mensch, Blanche Savary, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Emma Bou Hanna, Florian Bressand, Gianna Lengyel, Guillaume Bour, Guillaume Lample, Lélio Renard Lavaud, Lucile Saulnier, Marie-Anne Lachaux, Pierre Stock, Sandeep Subramanian, Sophia Yang, Szymon Antoniak, Teven Le Scao, Théophile Gervet, Thibaut Lavril, Thomas Wang, Timothée Lacroix, William El Sayed

Abstract

We introduce Mixtral 8x7B, a Sparse Mixture of Experts (SMoE) language model. Mixtral has the same architecture as Mistral 7B, with the difference that each layer is composed of 8 feedforward blocks (i.e. experts). For every token, at each layer, a router network selects two experts to process the current state and combine their outputs. Even though each token only sees two experts, the selected experts can be different at each timestep. As a result, each token has access to 47B parameters, but only uses 13B active parameters during inference. Mixtral was trained with a context size of 32k tokens and it outperforms or matches Llama 2 70B and GPT-3.5 across all evaluated benchmarks. In particular, Mixtral vastly outperforms Llama 2 70B on mathematics, code generation, and multilingual benchmarks. We also provide a model fine-tuned to follow instructions, Mixtral 8x7B – Instruct, that surpasses GPT-3.5 Turbo, Claude-2.1, Gemini Pro, and Llama 2 70B – chat model on human benchmarks. Both the base and instruct models are released under the Apache 2.0 license.

Code: https://github.com/mistralai/mistral-src

Webpage: https://mistral.ai/news/mixtral-of-experts/

Executive Summary: The rapid growth of artificial intelligence, particularly large language models, has created a pressing need for tools that deliver high performance without excessive computational costs or restricted access. Current leading models, such as those from Meta and OpenAI, often require vast resources for training and inference, limiting their use in commercial and research settings. Open-source alternatives have lagged in matching closed models' capabilities, especially in specialized areas like mathematics, coding, and multilingual tasks. This document addresses that gap by introducing an innovative, efficient model to democratize advanced AI.

The objective was to develop and evaluate Mixtral 8x7B, a Sparse Mixture of Experts (SMoE) language model designed to rival or surpass much larger models using fewer active resources during operation. It also fine-tuned a version for instruction-following to enhance practical usability in chat and task-oriented applications.

Researchers built Mixtral on a proven transformer architecture, similar to the earlier Mistral 7B model, but replaced standard processing blocks with eight specialized "expert" sub-networks per layer. For each input token, a routing system selects only two experts to activate, enabling the model to draw from a total of 47 billion parameters while using just 13 billion actively—about one-fifth of a comparable dense model. Training occurred on a diverse multilingual dataset with a context window of 32,000 tokens, spanning several months on high-performance hardware. Evaluations used standardized benchmarks from sources like academic datasets on reasoning, knowledge, math, code, and bias, with results re-run for fairness against baselines like Llama 2 70B and GPT-3.5.

Key findings highlight Mixtral's superior efficiency and performance. First, it outperformed Llama 2 70B—a 70-billion-parameter model—across most benchmarks, including a 5-10% edge in overall multitask understanding (MMLU score around 70%), and much larger gains of 20-30% in math (GSM8K and MATH) and code generation (HumanEval and MBPP). Second, Mixtral matched or exceeded GPT-3.5 on general tasks like commonsense reasoning and reading comprehension, despite its smaller active footprint. Third, its multilingual capabilities shone, surpassing Llama 2 70B by 15-25% in French, German, Spanish, and Italian on challenges like ARC and Hellaswag. Fourth, the fine-tuned Instruct version topped human-evaluated chat benchmarks, scoring 8.3 on MT-Bench and outperforming GPT-3.5 Turbo, Claude-2.1, Gemini Pro, and Llama 2 70B chat by 5-15% in arenas like LMSys. Finally, it showed lower biases than Llama 2 on datasets like BBQ (51.5% vs. 56%) and more balanced sentiment in open-ended generation.

These results mean organizations can deploy powerful AI with lower inference costs—up to five times faster at small scales and higher throughput at large ones—reducing energy use and hardware needs while maintaining or boosting accuracy. This shifts the landscape for AI applications in education, software development, and global services, where efficiency impacts scalability and affordability. Unlike denser models, Mixtral's design avoids the pitfalls of overparameterization, offering better performance per compute dollar. It also handles long contexts reliably, retrieving information accurately up to 32,000 tokens, which aids complex tasks like document analysis. The open Apache 2.0 release fosters innovation without proprietary barriers.

Next steps should include integrating Mixtral into production systems for pilots in high-value areas like code assistance or multilingual support, leveraging tools like vLLM for optimized inference. For the Instruct variant, further customization via fine-tuning on domain-specific data could enhance safety and alignment. Trade-offs to consider: while active parameters are low, the full 47-billion-parameter memory load requires capable GPUs, so smaller deployments might prioritize distillation to a denser model. Additional analysis on real-world latency and edge cases, plus bias mitigation, would strengthen adoption.

Confidence in these findings is high, as they stem from rigorous, re-run benchmarks on diverse tasks, but limitations persist. Evaluations rely on synthetic or academic datasets, which may not fully capture live deployment nuances like user variability or adversarial inputs. Training assumptions, such as data upsampling for multilingual focus, could introduce unseen gaps in niche languages or topics. Routing analysis revealed no strong domain specialization among experts, suggesting potential for targeted improvements. Leaders should validate with internal trials before full commitment, especially for sensitive applications.

1 Introduction

Section Summary: This paper introduces Mixtral 8x7B, an innovative open-source AI model that combines multiple specialized components, called experts, to process language tasks efficiently by activating only a few at a time, making it faster and more capable than larger models like Llama 2 70B and GPT-3.5 in areas such as math, coding, and multilingual understanding. Trained on diverse global data with a large 32,000-token context window, it excels at retrieving and using information from long sequences. The authors also release a fine-tuned version, Mixtral 8x7B-Instruct, which follows instructions better than competitors like GPT-3.5 Turbo and shows less bias, all under a free Apache 2.0 license to encourage widespread use and development.

In this paper, we present Mixtral 8x7B, a sparse mixture of experts model (SMoE) with open weights, licensed under Apache 2.0. Mixtral outperforms Llama 2 70B and GPT-3.5 on most benchmarks. As it only uses a subset of its parameters for every token, Mixtral allows faster inference speed at low batch-sizes, and higher throughput at large batch-sizes.

Mixtral is a sparse mixture-of-experts network. It is a decoder-only model where the feedforward block picks from a set of 8 distinct groups of parameters. At every layer, for every token, a router network chooses two of these groups (the “experts”) to process the token and combine their output additively. This technique increases the number of parameters of a model while controlling cost and latency, as the model only uses a fraction of the total set of parameters per token.

Mixtral is pretrained with multilingual data using a context size of 32k tokens. It either matches or exceeds the performance of Llama 2 70B and GPT-3.5, over several benchmarks. In particular, Mixtral demonstrates superior capabilities in mathematics, code generation, and tasks that require multilingual understanding, significantly outperforming Llama 2 70B in these domains. Experiments show that Mixtral is able to successfully retrieve information from its context window of 32k tokens, regardless of the sequence length and the location of the information in the sequence.

We also present Mixtral 8x7B – Instruct, a chat model fine-tuned to follow instructions using supervised fine-tuning and Direct Preference Optimization [1]. Its performance notably surpasses that of GPT-3.5 Turbo, Claude-2.1, Gemini Pro, and Llama 2 70B – chat model on human evaluation benchmarks. Mixtral – Instruct also demonstrates reduced biases, and a more balanced sentiment profile in benchmarks such as BBQ, and BOLD.

We release both Mixtral 8x7B and Mixtral 8x7B – Instruct under the Apache 2.0 license^1, free for academic and commercial usage, ensuring broad accessibility and potential for diverse applications. To enable the community to run Mixtral with a fully open-source stack, we submitted changes to the vLLM project, which integrates Megablocks CUDA kernels for efficient inference. Skypilot also allows the deployment of vLLM endpoints on any instance in the cloud.

2 Architectural details

Section Summary: Mixtral builds on a standard transformer model but supports longer sequences of up to 32,000 words and replaces the usual processing blocks with a specialized "Mixture of Experts" system. In this setup, each piece of input text is routed to just two out of eight expert sub-networks, which handle the computation and combine their results into a weighted output, making the model more efficient by only activating a portion of its total parameters per task. This approach allows the model to scale up in size without proportionally increasing the computing power needed, and it runs smoothly on single or multiple graphics processors using techniques that balance the workload evenly.

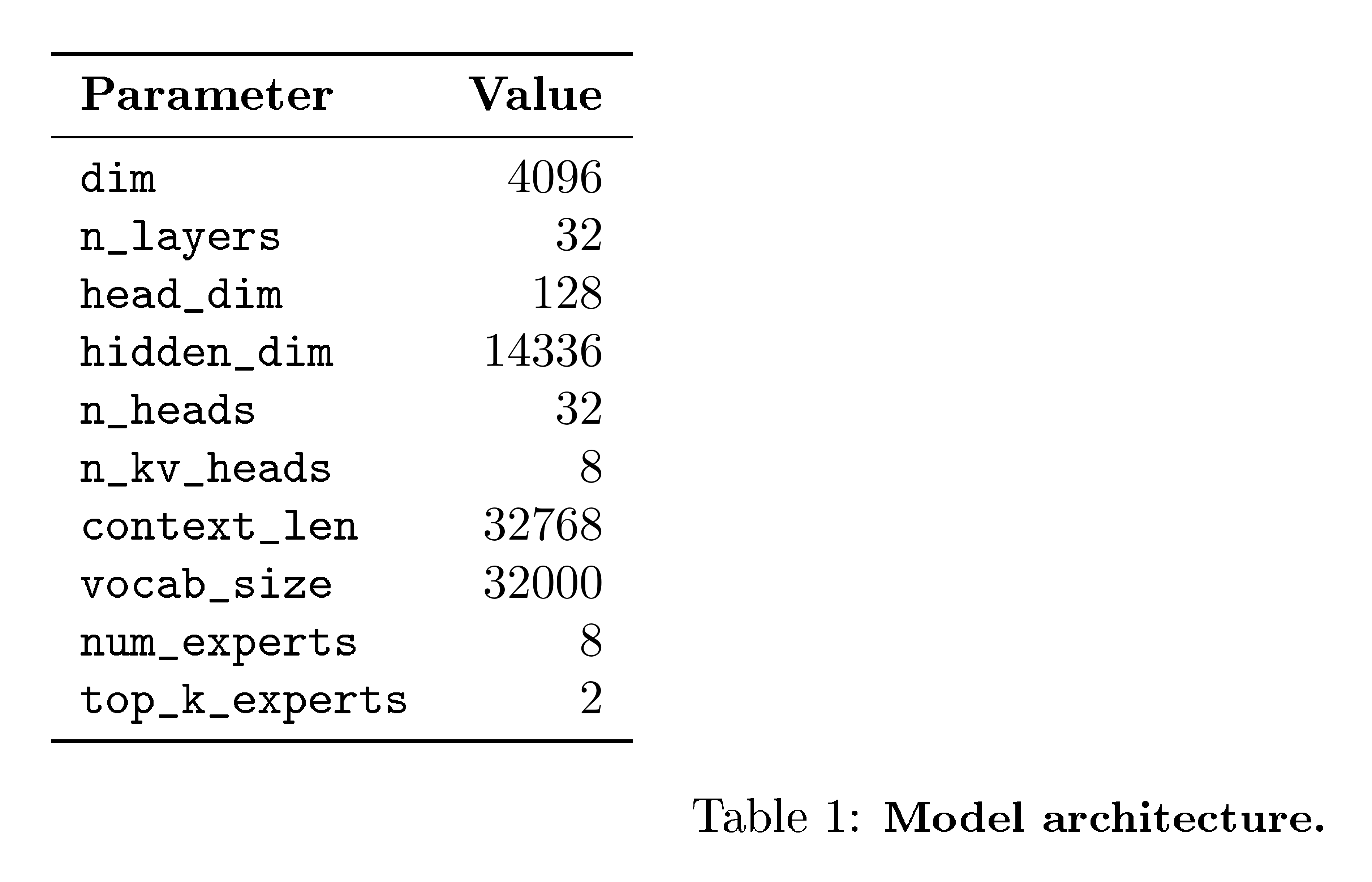

Mixtral is based on a transformer architecture [2] and uses the same modifications as described in [3], with the notable exceptions that Mixtral supports a fully dense context length of 32k tokens, and the feedforward blocks are replaced by Mixture-of-Expert layers (Section 2.1). The model architecture parameters are summarized in Table 1.

2.1 Sparse Mixture of Experts

We present a brief overview of the Mixture of Experts layer (Figure 1). For a more in-depth overview, see [4]. The output of the MoE module for a given input $x$ is determined by the weighted sum of the outputs of the expert networks, where the weights are given by the gating network's output. i.e. given $n$ expert networks ${E_0, E_i, ..., E_{n-1}}$, the output of the expert layer is given by:

$ \sum_{i=0}^{n-1} G(x)_i \cdot E_i(x). $

Here, $G(x)_i$ denotes the $n$-dimensional output of the gating network for the $i$-th expert, and $E_i(x)$ is the output of the $i$-th expert network. If the gating vector is sparse, we can avoid computing the outputs of experts whose gates are zero. There are multiple alternative ways of implementing $G(x)$ [5, 6, 7], but a simple and performant one is implemented by taking the softmax over the Top-K logits of a linear layer [8]. We use

$ G(x) := \text{Softmax}(\text{TopK}(x \cdot W_g)), $

where $(\text{TopK}(\ell))_i := \ell_i$ if $\ell_i$ is among the top-K coordinates of logits $\ell \in \mathbb{R}^n$ and $(\text{TopK}(\ell))_i := -\infty$ otherwise. The value of K – the number of experts used per token – is a hyper-parameter that modulates the amount of compute used to process each token. If one increases $n$ while keeping $K$ fixed, one can increase the model's parameter count while keeping its computational cost effectively constant. This motivates a distinction between the model's total parameter count (commonly referenced as the sparse parameter count), which grows with $n$, and the number of parameters used for processing an individual token (called the active parameter count), which grows with $K$ up to $n$.

MoE layers can be run efficiently on single GPUs with high performance specialized kernels. For example, Megablocks [9] casts the feed-forward network (FFN) operations of the MoE layer as large sparse matrix multiplications, significantly enhancing the execution speed and naturally handling cases where different experts get a variable number of tokens assigned to them. Moreover, the MoE layer can be distributed to multiple GPUs through standard Model Parallelism techniques, and through a particular kind of partitioning strategy called Expert Parallelism (EP) [8]. During the MoE layer's execution, tokens meant to be processed by a specific expert are routed to the corresponding GPU for processing, and the expert's output is returned to the original token location. Note that EP introduces challenges in load balancing, as it is essential to distribute the workload evenly across the GPUs to prevent overloading individual GPUs or hitting computational bottlenecks.

In a Transformer model, the MoE layer is applied independently per token and replaces the feed-forward (FFN) sub-block of the transformer block. For Mixtral we use the same SwiGLU architecture as the expert function $E_i(x)$ and set $K=2$. This means each token is routed to two SwiGLU sub-blocks with different sets of weights. Taking this all together, the output $y$ for an input token $x$ is computed as:

$ y = \sum_{i=0}^{n-1} \text{Softmax}(\text{Top2}(x \cdot W_g))_i \cdot \text{SwiGLU}_i(x). $

This formulation is similar to the GShard architecture [10], with the exceptions that we replace all FFN sub-blocks by MoE layers while GShard replaces every other block, and that GShard uses a more elaborate gating strategy for the second expert assigned to each token.

3 Results

Section Summary: Mixtral, a new AI model, performs as well as or better than the much larger Llama 2 70B on a broad range of tasks like reasoning, knowledge recall, math, and coding, while using only about one-fifth as many active parameters during processing, making it more efficient. It also matches or exceeds GPT-3.5 on key benchmarks and shines in multilingual tests, outperforming Llama 2 70B in languages such as French, German, Spanish, and Italian. Additionally, Mixtral handles long contexts exceptionally well, retrieving information accurately even in extended prompts and showing improved understanding as input length increases.

We compare Mixtral to Llama, and re-run all benchmarks with our own evaluation pipeline for fair comparison. We measure performance on a wide variety of tasks categorized as follow:

- Commonsense Reasoning (0-shot): Hellaswag [11], Winogrande [12], PIQA [13], SIQA [14], OpenbookQA [15], ARC-Easy, ARC-Challenge [16], CommonsenseQA [17]

- World Knowledge (5-shot): NaturalQuestions [18], TriviaQA [19]

- Reading Comprehension (0-shot): BoolQ [20], QuAC [21]

- Math: GSM8K [22] (8-shot) with maj@8 and MATH [23] (4-shot) with maj@4

- Code: Humaneval [24] (0-shot) and MBPP [25] (3-shot)

- Popular aggregated results: MMLU [26] (5-shot), BBH [27] (3-shot), and AGI Eval [28] (3-5-shot, English multiple-choice questions only)

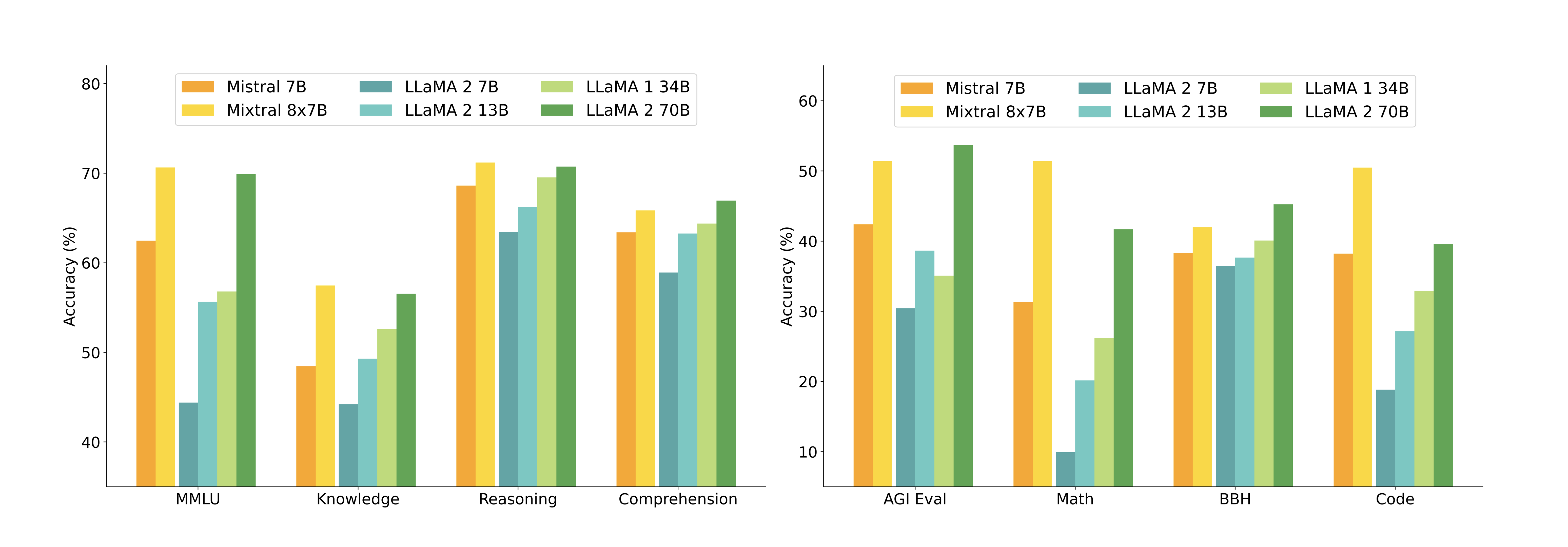

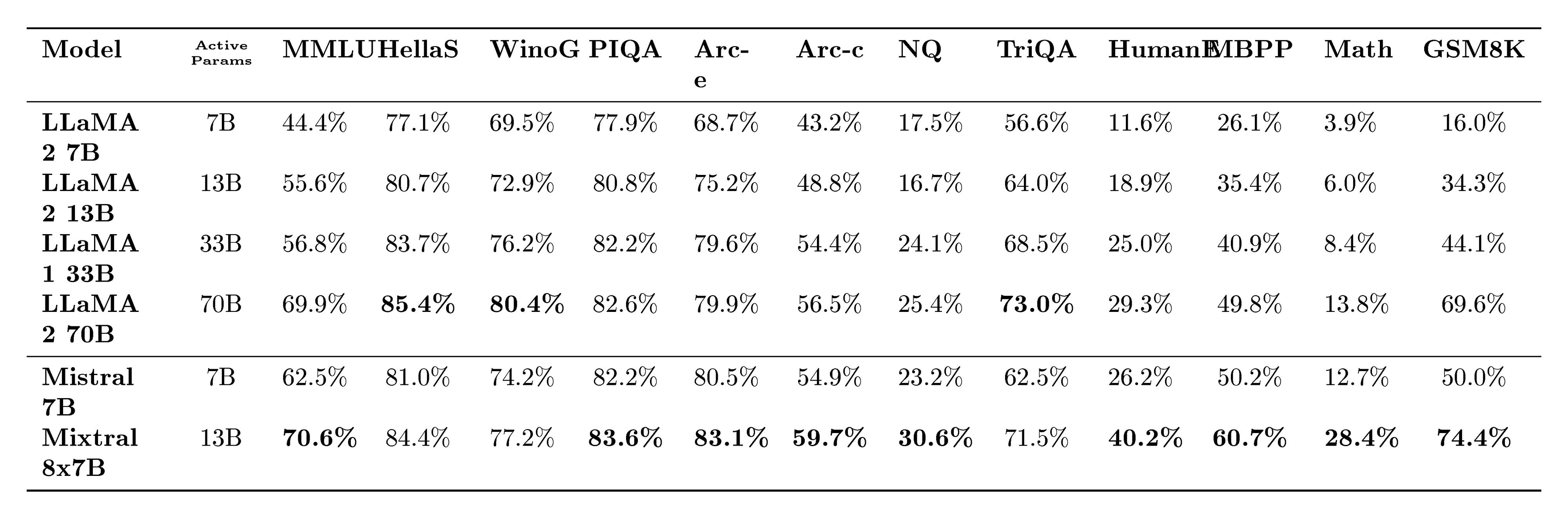

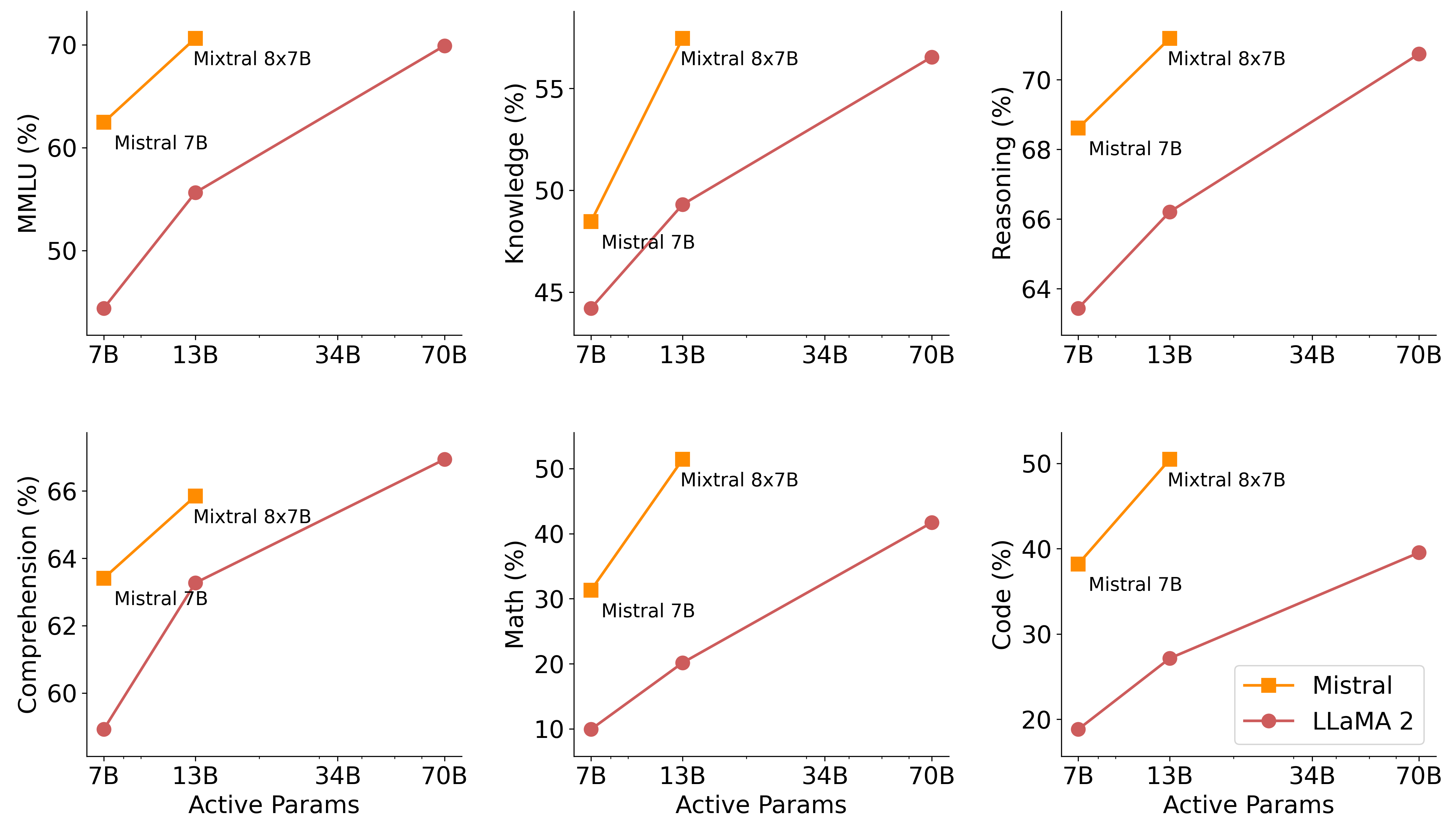

Detailed results for Mixtral, Mistral 7B and Llama 2 7B/13B/70B and Llama 1 34B[^2] are reported in Table 1. Figure 3 compares the performance of Mixtral with the Llama models in different categories. Mixtral surpasses Llama 2 70B across most metrics. In particular, Mixtral displays a superior performance in code and mathematics benchmarks.

[^2]: Since Llama 2 34B was not open-sourced, we report results for Llama 1 34B.

Size and Efficiency. We compare our performance to the Llama 2 family, aiming to understand Mixtral models' efficiency in the cost-performance spectrum (see Figure 3). As a sparse Mixture-of-Experts model, Mixtral only uses 13B active parameters for each token. With 5x lower active parameters, Mixtral is able to outperform Llama 2 70B across most categories.

Note that this analysis focuses on the active parameter count (see Section 2.1), which is directly proportional to the inference compute cost, but does not consider the memory costs and hardware utilization. The memory costs for serving Mixtral are proportional to its sparse parameter count, 47B, which is still smaller than Llama 2 70B. As for device utilization, we note that the SMoEs layer introduces additional overhead due to the routing mechanism and due to the increased memory loads when running more than one expert per device. They are more suitable for batched workloads where one can reach a good degree of arithmetic intensity.

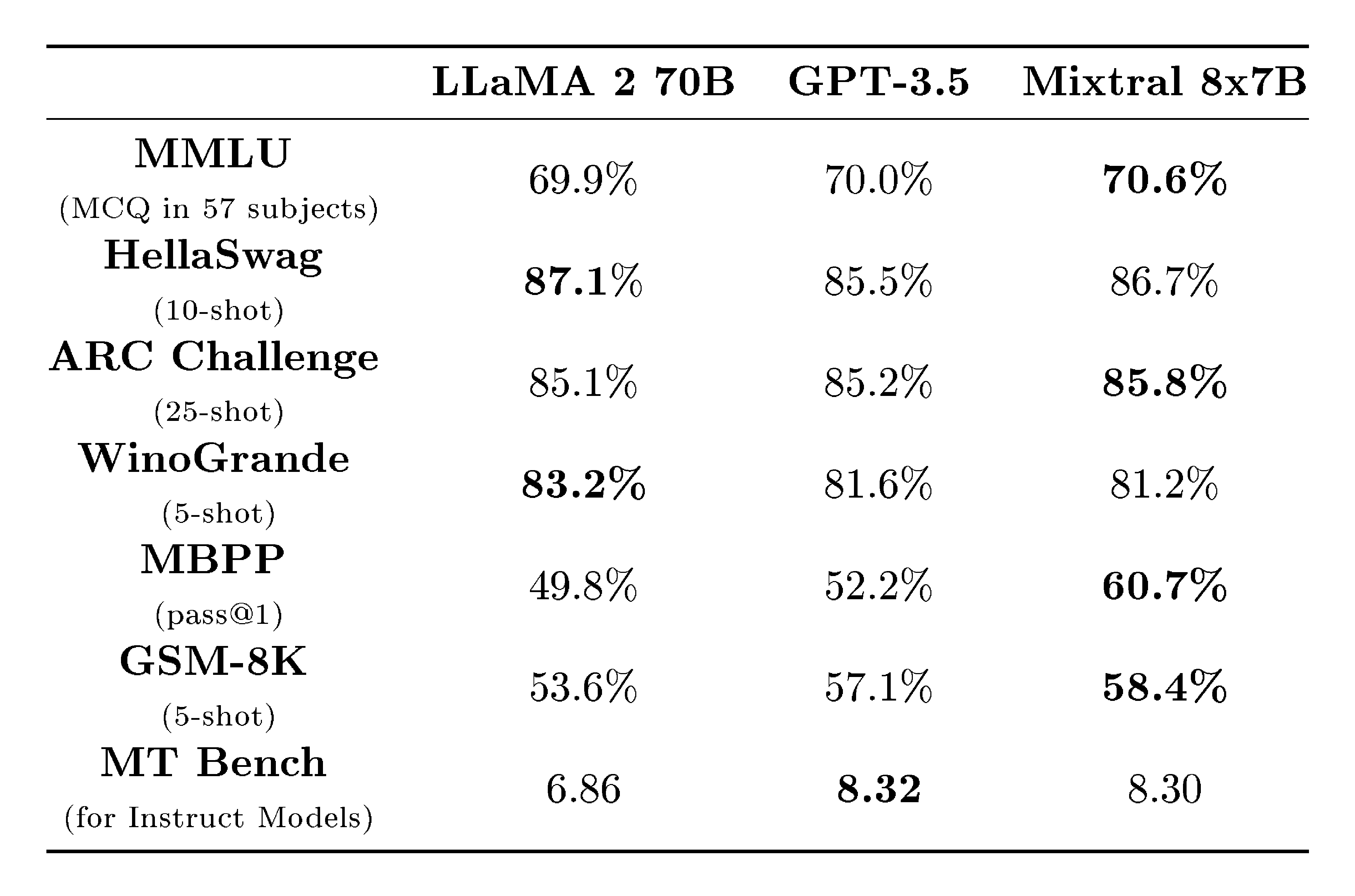

Comparison with Llama 2 70B and GPT-3.5. In Table 2, we report the performance of Mixtral 8x7B compared to Llama 2 70B and GPT-3.5. We observe that Mixtral performs similarly or above the two other models. On MMLU, Mixtral obtains a better performance, despite its significantly smaller capacity (47B tokens compared to 70B). For MT Bench, we report the performance of the latest GPT-3.5-Turbo model available, gpt-3.5-turbo-1106.

Evaluation Differences. On some benchmarks, there are some differences between our evaluation protocol and the one reported in the Llama 2 paper: 1) on MBPP, we use the hand-verified subset 2) on TriviaQA, we do not provide Wikipedia contexts.

:::

Table 2: Comparison of Mixtral with Llama 2 70B and GPT-3.5. Mixtral outperforms or matches Llama 2 70B and GPT-3.5 performance on most metrics.

:::

3.1 Multilingual benchmarks

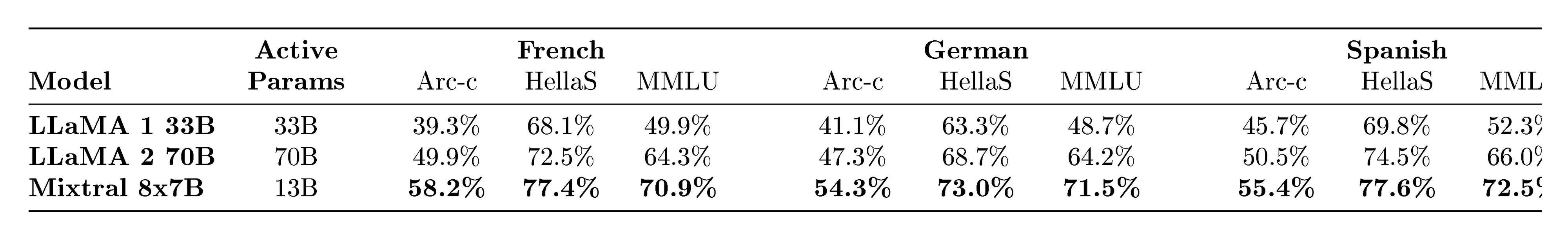

Compared to Mistral 7B, we significantly upsample the proportion of multilingual data during pretraining. The extra capacity allows Mixtral to perform well on multilingual benchmarks while maintaining a high accuracy in English. In particular, Mixtral significantly outperforms Llama 2 70B in French, German, Spanish, and Italian, as shown in Table 3.

:::

Table 3: Comparison of Mixtral with Llama on Multilingual Benchmarks. On ARC Challenge, Hellaswag, and MMLU, Mixtral outperforms Llama 2 70B on 4 languages: French, German, Spanish, and Italian.

:::

3.2 Long range performance

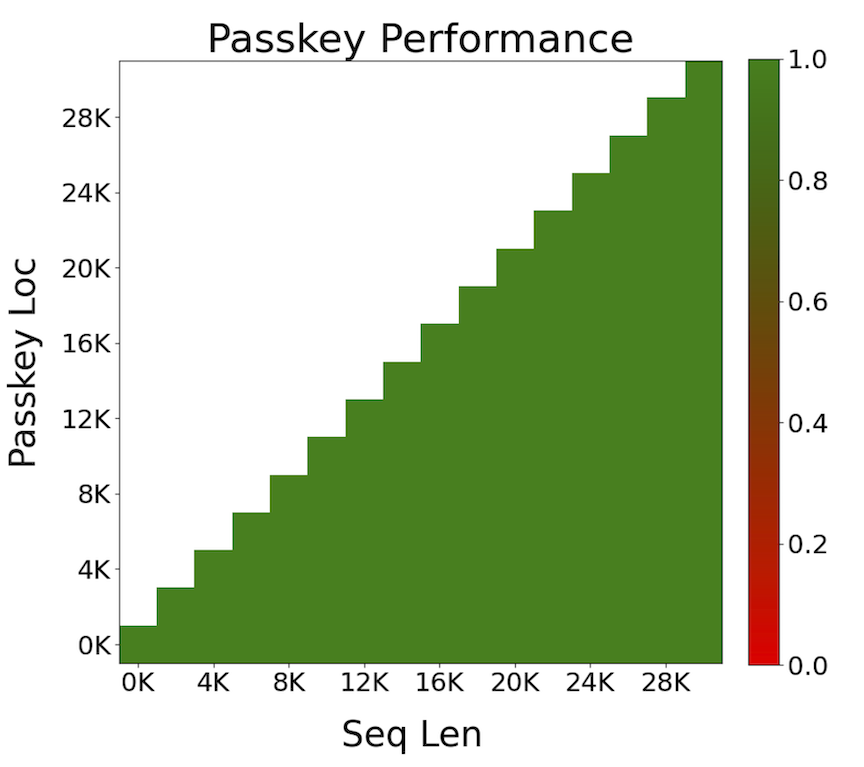

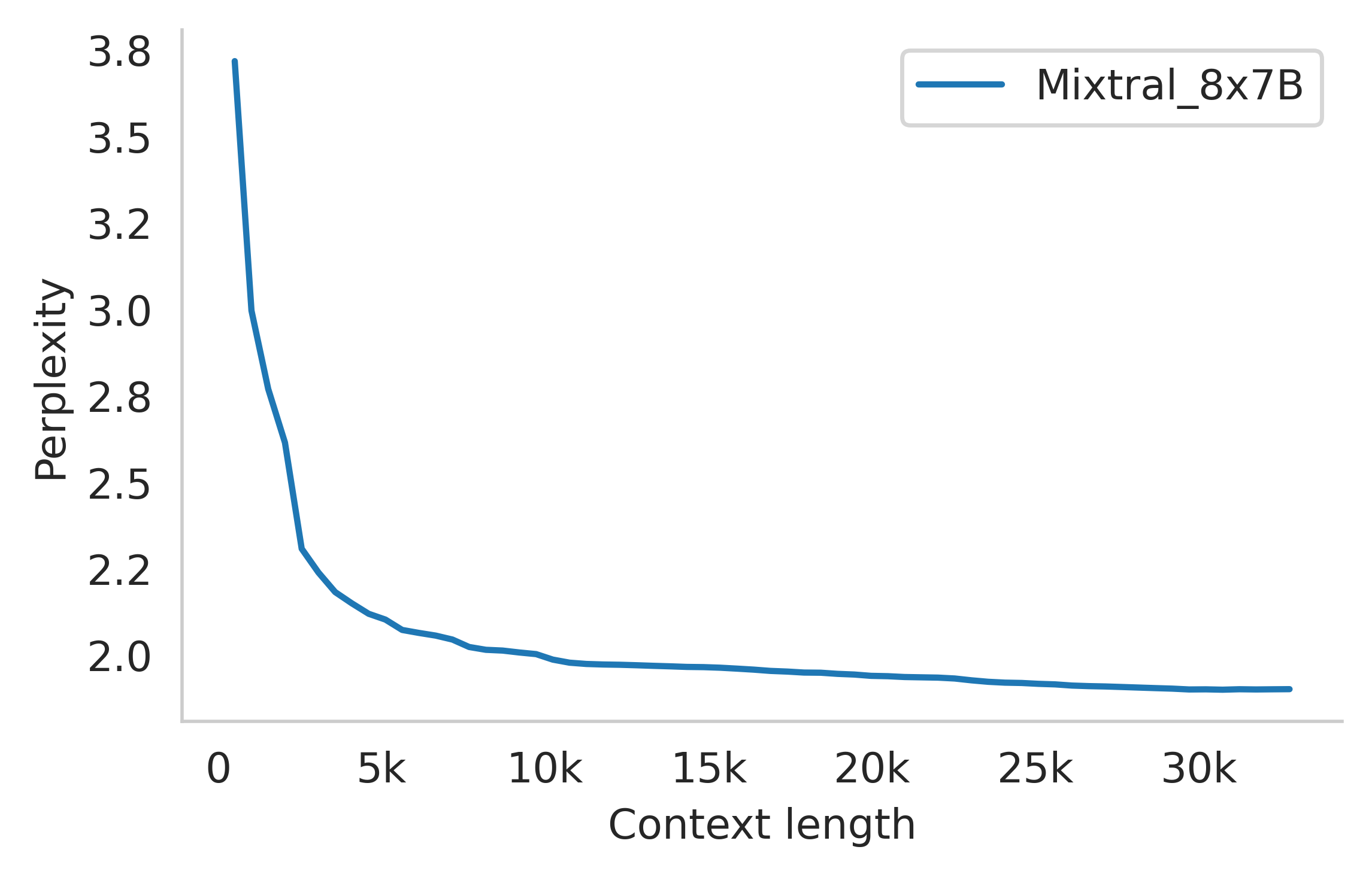

To assess the capabilities of Mixtral to tackle long context, we evaluate it on the passkey retrieval task introduced in [29], a synthetic task designed to measure the ability of the model to retrieve a passkey inserted randomly in a long prompt. Results in Figure 5 (Left) show that Mixtral achieves a 100% retrieval accuracy regardless of the context length or the position of passkey in the sequence. Figure 5 (Right) shows that the perplexity of Mixtral on a subset of the proof-pile dataset [30] decreases monotonically as the size of the context increases.

::::

Figure 4: Long range performance of Mixtral. (Left) Mixtral has 100% retrieval accuracy of the Passkey task regardless of the location of the passkey and length of the input sequence. (Right) The perplexity of Mixtral on the proof-pile dataset decreases monotonically as the context length increases. ::::

3.3 Bias Benchmarks

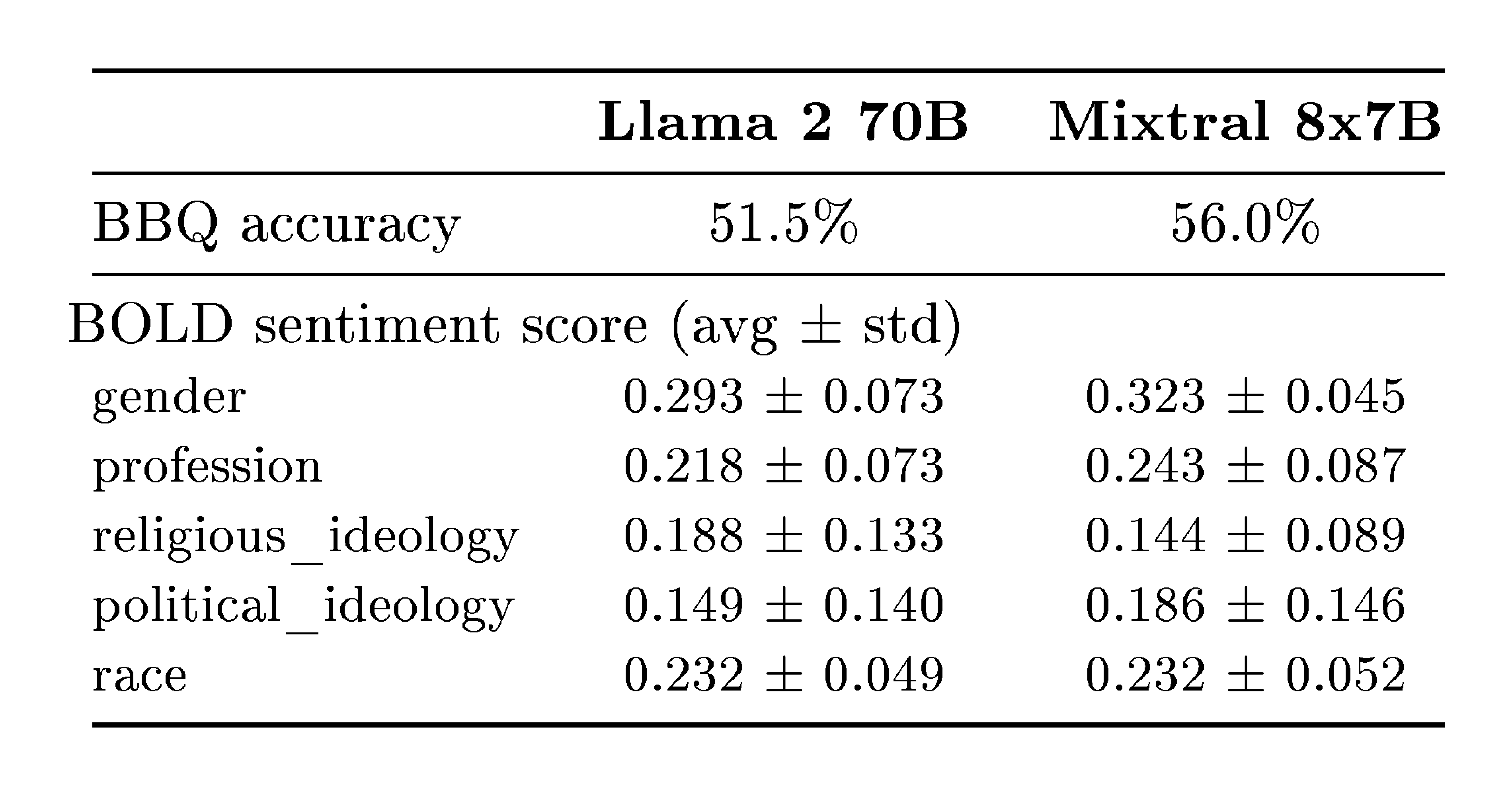

To identify possible flaws to be corrected by fine-tuning / preference modeling, we measure the base model performance on Bias Benchmark for QA (BBQ) [31] and Bias in Open-Ended Language Generation Dataset (BOLD) [32]. BBQ is a dataset of hand-written question sets that target attested social biases against nine different socially-relevant categories: age, disability status, gender identity, nationality, physical appearance, race/ethnicity, religion, socio-economic status, sexual orientation. BOLD is a large-scale dataset that consists of 23, 679 English text generation prompts for bias benchmarking across five domains.

We benchmark Llama 2 and Mixtral on BBQ and BOLD with our evaluation framework and report the results in Table 5. Compared to Llama 2, Mixtral presents less bias on the BBQ benchmark (56.0% vs 51.5%). For each group in BOLD, a higher average sentiment score means more positive sentiments and a lower standard deviation indicates less bias within the group. Overall, Mixtral displays more positive sentiments than Llama 2, with similar variances within each group.

4 Instruction Fine-tuning

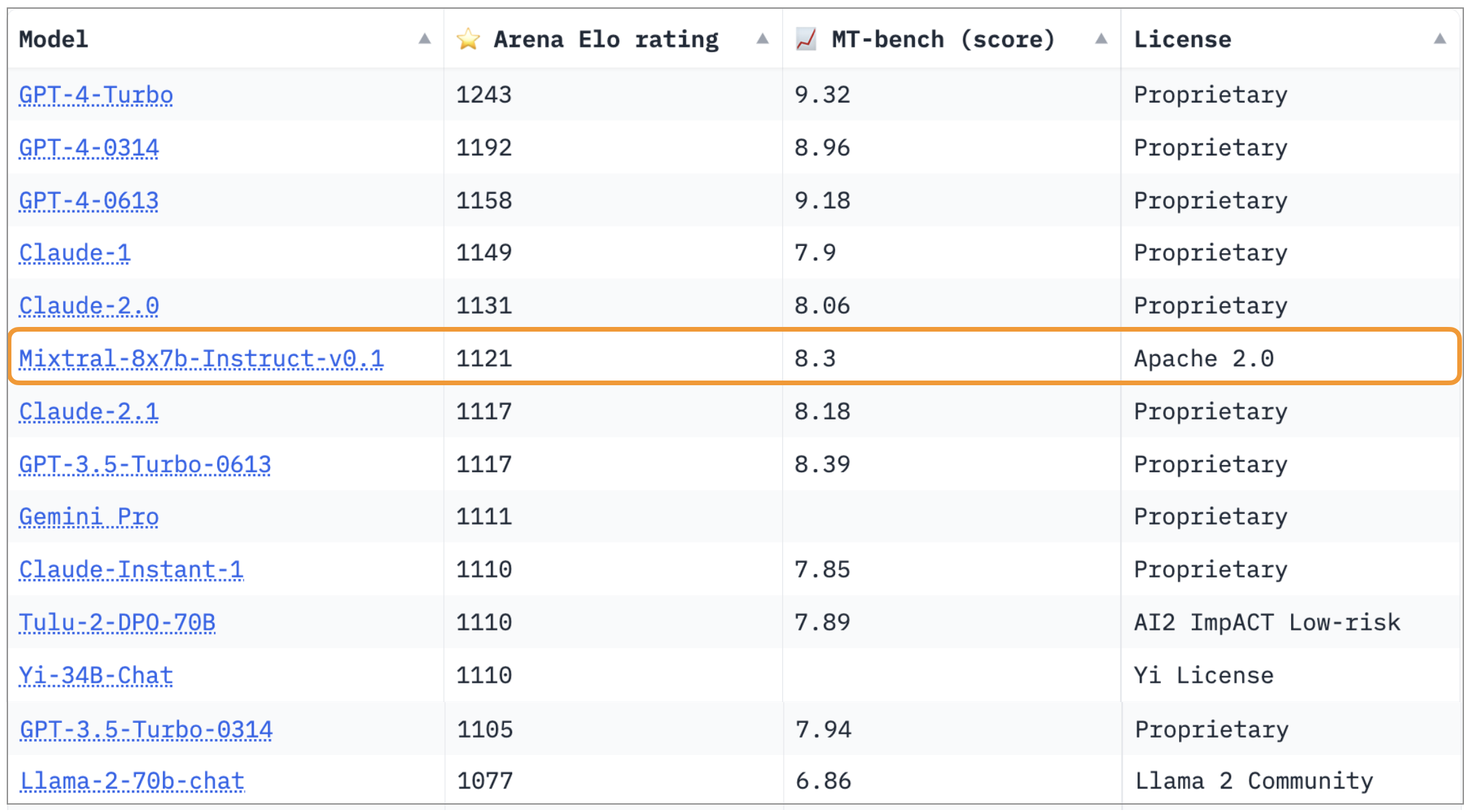

Section Summary: To create the instruction-following version of Mixtral, called Mixtral-Instruct, developers used a two-step training process: first fine-tuning the model on a dataset of instructions with correct responses, then optimizing it further with a technique that compares preferred and less preferred answers to improve helpfulness. This resulted in a top score of 8.30 on the MT-Bench evaluation, making it the leading open-source model available in December 2023. Independent tests by LMSys confirmed its superiority, with an Elo rating of 1121 on their leaderboard, beating models like GPT-3.5-Turbo, Gemini Pro, Claude-2.1, and Llama 2 70B chat.

We train Mixtral – Instruct using supervised fine-tuning (SFT) on an instruction dataset followed by Direct Preference Optimization (DPO) [1] on a paired feedback dataset. Mixtral – Instruct reaches a score of 8.30 on MT-Bench [33] (see Table 2), making it the best open-weights model as of December 2023. Independent human evaluation conducted by LMSys is reported in Figure 7^3 and shows that Mixtral – Instruct outperforms GPT-3.5-Turbo, Gemini Pro, Claude-2.1, and Llama 2 70B chat.

5 Routing analysis

Section Summary: Researchers analyzed how the model's router selects different "experts" (specialized components) during training, checking if they focus on specific topics like math, biology, or philosophy using subsets of a large text dataset. Surprisingly, expert assignments did not vary much by topic across most areas, such as scientific papers, biology abstracts, and philosophy texts, though math problems showed slight differences likely due to their artificial format. Instead, the router seemed to follow syntactic patterns, like routing similar words or code structures to the same expert and often assigning consecutive words to the same one, which could help optimize training and speed up the model's performance.

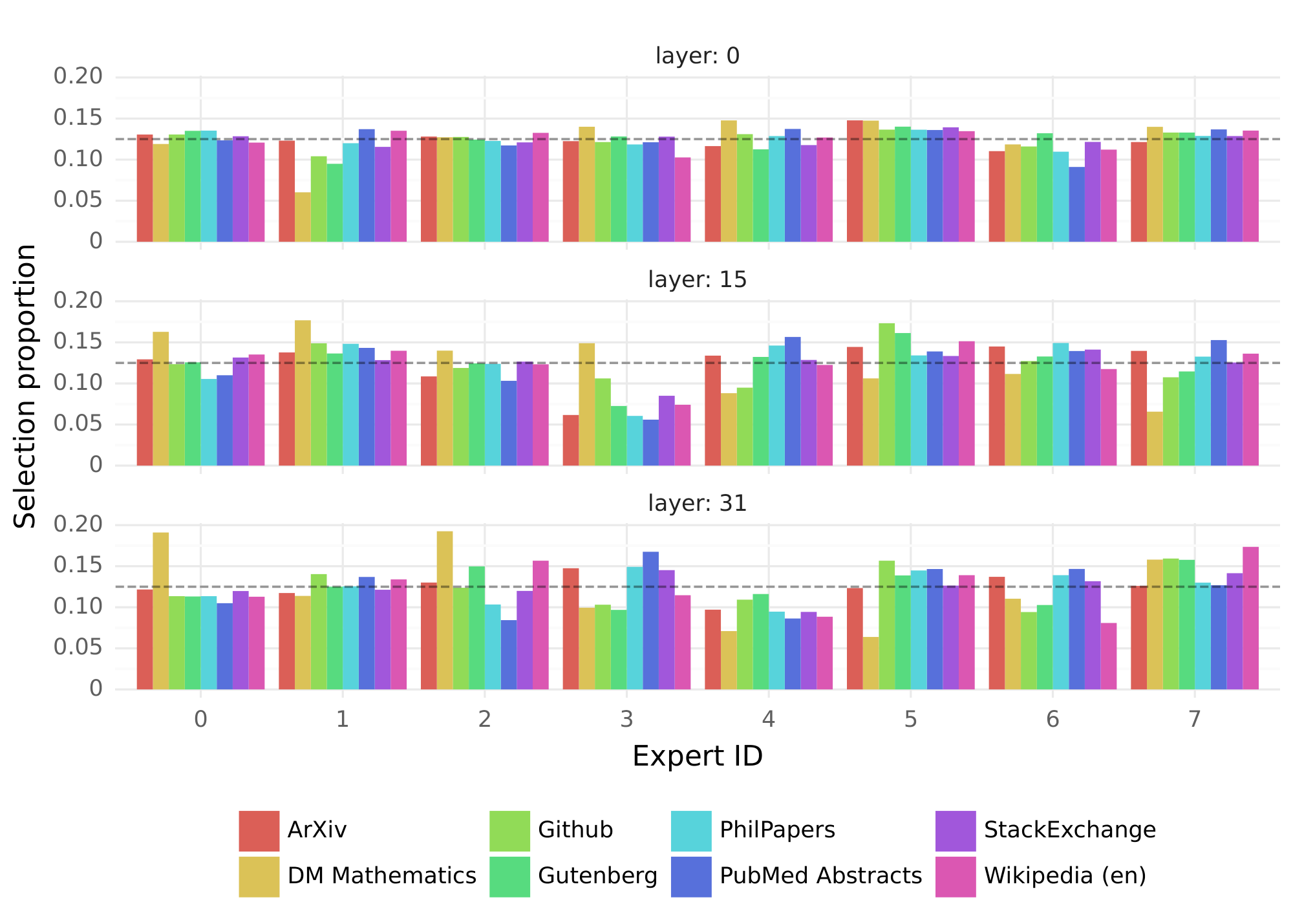

In this section, we perform a small analysis on the expert selection by the router. In particular, we are interested to see if during training some experts specialized to some specific domains (e.g. mathematics, biology, philosophy, etc.).

To investigate this, we measure the distribution of selected experts on different subsets of The Pile validation dataset [34]. Results are presented in Figure 7, for layers 0, 15, and 31 (layers 0 and 31 respectively being the first and the last layers of the model). Surprisingly, we do not observe obvious patterns in the assignment of experts based on the topic. For instance, at all layers, the distribution of expert assignment is very similar for ArXiv papers (written in Latex), for biology (PubMed Abstracts), and for Philosophy (PhilPapers) documents.

Only for DM Mathematics we note a marginally different distribution of experts. This divergence is likely a consequence of the dataset's synthetic nature and its limited coverage of the natural language spectrum, and is particularly noticeable at the first and last layers, where the hidden states are very correlated to the input and output embeddings respectively.

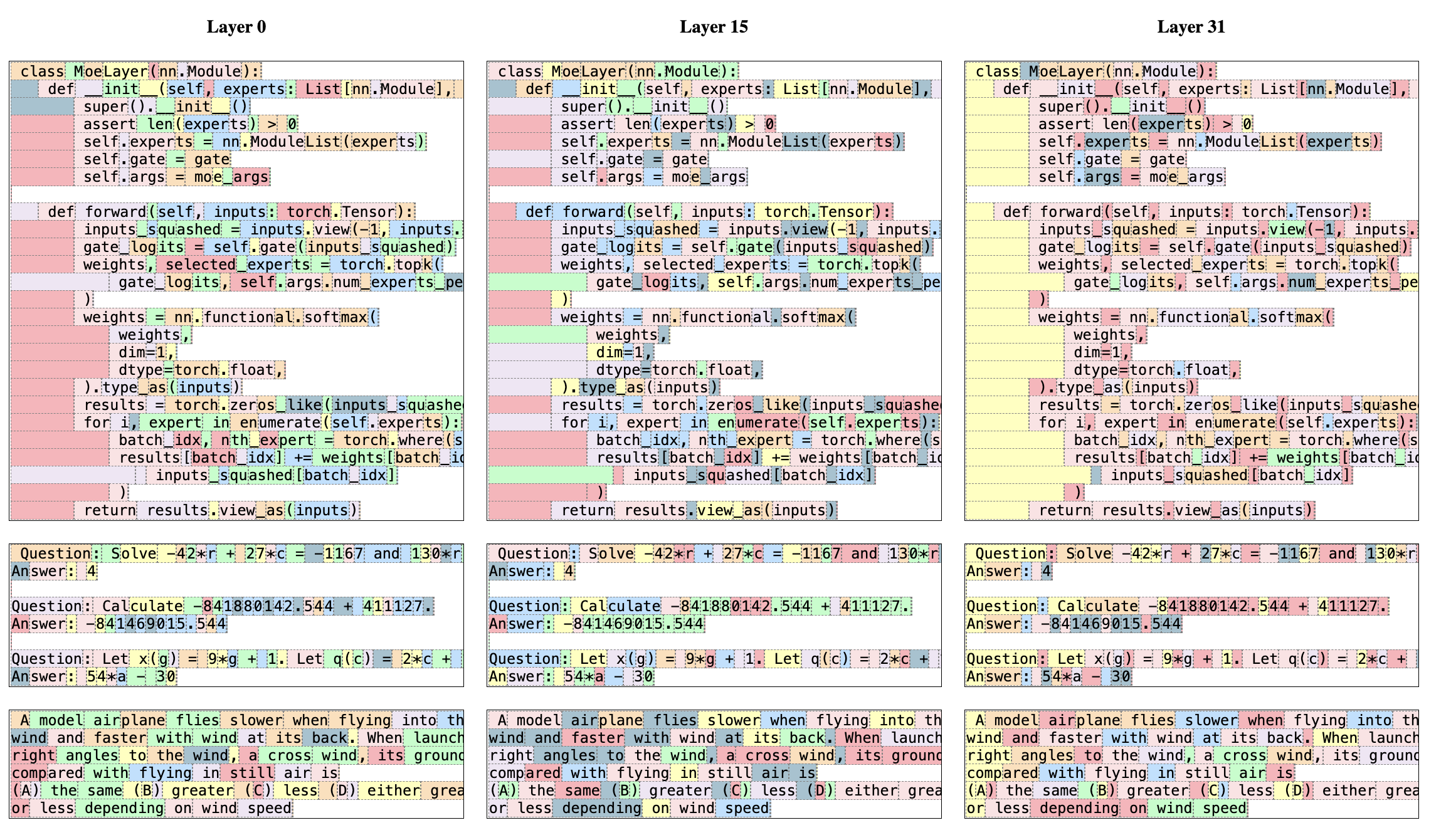

This suggests that the router does exhibit some structured syntactic behavior. Figure 9 shows examples of text from different domains (Python code, mathematics, and English), where each token is highlighted with a background color corresponding to its selected expert. The figure shows that words such as 'self' in Python and 'Question' in English often get routed through the same expert even though they involve multiple tokens. Similarly, in code, the indentation tokens are always assigned to the same experts, particularly at the first and last layers where the hidden states are more correlated to the input and output of the model.

We also note from Figure 9 that consecutive tokens are often assigned the same experts. In fact, we observe some degree of positional locality in The Pile datasets. Table 4 shows the proportion of consecutive tokens that get the same expert assignments per domain and layer. The proportion of repeated

consecutive assignments is significantly higher than random for higher layers. This has implications in how one might optimize the model for fast training and inference. For example, cases with high locality are more likely to cause over-subscription of certain experts when doing Expert Parallelism. Conversely, this locality can be leveraged for caching, as is done in [35]. A more complete view of these same expert frequency is provided for all layers and across datasets in Figure 10 in the Appendix.

\begin{tabular}{l|ccc|ccc}

\toprule

& \multicolumn{3}{c}{First choice} & \multicolumn{3}{c}{First or second choice} \\

& Layer 0 & Layer 15 & Layer 31 & Layer 0 & Layer 15 & Layer 31 \\

\midrule

ArXiv & 14.0\% & 27.9\% & 22.7\% & 46.5\% & 62.3\% & 52.9\% \\

DM Mathematics & 14.1\% & 28.4\% & 19.7\% & 44.9\% & 67.0\% & 44.5\% \\

Github & 14.9\% & 28.1\% & 19.7\% & 49.9\% & 66.9\% & 49.2\% \\

Gutenberg & 13.9\% & 26.1\% & 26.3\% & 49.5\% & 63.1\% & 52.2\% \\

PhilPapers & 13.6\% & 25.3\% & 22.1\% & 46.9\% & 61.9\% & 51.3\% \\

PubMed Abstracts & 14.2\% & 24.6\% & 22.0\% & 48.6\% & 61.6\% & 51.8\% \\

StackExchange & 13.6\% & 27.2\% & 23.6\% & 48.2\% & 64.6\% & 53.6\% \\

Wikipedia (en) & 14.4\% & 23.6\% & 25.3\% & 49.8\% & 62.1\% & 51.8\% \\

\bottomrule

\end{tabular}

6 Conclusion

Section Summary: This paper introduces Mixtral 8x7B, the first open-source mixture-of-experts AI model to achieve top performance in its category. Its instructed version beats competitors like Claude-2.1, Gemini Pro, and GPT-3.5 Turbo on key human-judged tests, while using just 13 billion active parameters per token—far fewer than the 70 billion needed by the previous leader, Llama 2 70B. The authors are releasing the trained and fine-tuned models for free under the Apache 2.0 license to help spur new innovations across various industries.

In this paper, we introduced Mixtral 8x7B, the first mixture-of-experts network to reach a state-of-the-art performance among open-source models. Mixtral 8x7B Instruct outperforms Claude-2.1, Gemini Pro, and GPT-3.5 Turbo on human evaluation benchmarks. Because it only uses two experts at each time step, Mixtral only uses 13B active parameters per token while outperforming the previous best model using 70B parameters per token (Llama 2 70B). We are making our trained and fine-tuned models publicly available under the Apache 2.0 license. By sharing our models, we aim to facilitate the development of new techniques and applications that can benefit a wide range of industries and domains.

Acknowledgements

Section Summary: The acknowledgements section expresses gratitude to the teams at CoreWeave and Scaleway for providing technical support during the training of the AI models. It also thanks NVIDIA for their assistance in integrating specialized software tools like TensorRT-LLM and Triton, as well as collaborating to adapt a technique called sparse mixture of experts to work with TensorRT-LLM.

We thank the CoreWeave and Scaleway teams for technical support as we trained our models. We are grateful to NVIDIA for supporting us in integrating TensorRT-LLM and Triton and working alongside us to make a sparse mixture of experts compatible with TensorRT-LLM.

References

Section Summary: This references section lists key academic papers and preprints on artificial intelligence, focusing on advancements in language models like the Transformer architecture and mixture-of-experts systems for scaling large neural networks efficiently. It includes foundational works on training and optimizing models, such as direct preference optimization and sparse expert routing techniques. Additionally, it covers numerous benchmarks for evaluating AI performance on tasks like commonsense reasoning, question answering, math problem-solving, code generation, and multitask understanding.

[1] Rafael Rafailov, Archit Sharma, Eric Mitchell, Stefano Ermon, Christopher D Manning, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. arXiv preprint arXiv:2305.18290, 2023.

[2] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in neural information processing systems, 30, 2017.

[3] Albert Q Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, et al. Mistral 7b. arXiv preprint arXiv:2310.06825, 2023.

[4] William Fedus, Jeff Dean, and Barret Zoph. A review of sparse expert models in deep learning. arXiv preprint arXiv:2209.01667, 2022.

[5] Aidan Clark, Diego De Las Casas, Aurelia Guy, Arthur Mensch, Michela Paganini, Jordan Hoffmann, Bogdan Damoc, Blake Hechtman, Trevor Cai, Sebastian Borgeaud, et al. Unified scaling laws for routed language models. In International Conference on Machine Learning, pages 4057–4086. PMLR, 2022.

[6] Hussein Hazimeh, Zhe Zhao, Aakanksha Chowdhery, Maheswaran Sathiamoorthy, Yihua Chen, Rahul Mazumder, Lichan Hong, and Ed Chi. Dselect-k: Differentiable selection in the mixture of experts with applications to multi-task learning. Advances in Neural Information Processing Systems, 34:29335–29347, 2021.

[7] Yanqi Zhou, Tao Lei, Hanxiao Liu, Nan Du, Yanping Huang, Vincent Zhao, Andrew M Dai, Quoc V Le, James Laudon, et al. Mixture-of-experts with expert choice routing. Advances in Neural Information Processing Systems, 35:7103–7114, 2022.

[8] Noam Shazeer, Azalia Mirhoseini, Krzysztof Maziarz, Andy Davis, Quoc Le, Geoffrey Hinton, and Jeff Dean. Outrageously large neural networks: The sparsely-gated mixture-of-experts layer. arXiv preprint arXiv:1701.06538, 2017.

[9] Trevor Gale, Deepak Narayanan, Cliff Young, and Matei Zaharia. Megablocks: Efficient sparse training with mixture-of-experts. arXiv preprint arXiv:2211.15841, 2022.

[10] Dmitry Lepikhin, HyoukJoong Lee, Yuanzhong Xu, Dehao Chen, Orhan Firat, Yanping Huang, Maxim Krikun, Noam Shazeer, and Zhifeng Chen. Gshard: Scaling giant models with conditional computation and automatic sharding. arXiv preprint arXiv:2006.16668, 2020.

[11] Rowan Zellers, Ari Holtzman, Yonatan Bisk, Ali Farhadi, and Yejin Choi. Hellaswag: Can a machine really finish your sentence? arXiv preprint arXiv:1905.07830, 2019.

[12] Keisuke Sakaguchi, Ronan Le Bras, Chandra Bhagavatula, and Yejin Choi. Winogrande: An adversarial winograd schema challenge at scale. Communications of the ACM, pages 99–106, 2021.

[13] Yonatan Bisk, Rowan Zellers, Jianfeng Gao, Yejin Choi, et al. Piqa: Reasoning about physical commonsense in natural language. In Proceedings of the AAAI conference on artificial intelligence, pages 7432–7439, 2020.

[14] Maarten Sap, Hannah Rashkin, Derek Chen, Ronan LeBras, and Yejin Choi. Socialiqa: Commonsense reasoning about social interactions. arXiv preprint arXiv:1904.09728, 2019.

[15] Todor Mihaylov, Peter Clark, Tushar Khot, and Ashish Sabharwal. Can a suit of armor conduct electricity? a new dataset for open book question answering. arXiv preprint arXiv:1809.02789, 2018.

[16] Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv preprint arXiv:1803.05457, 2018.

[17] Alon Talmor, Jonathan Herzig, Nicholas Lourie, and Jonathan Berant. Commonsenseqa: A question answering challenge targeting commonsense knowledge. arXiv preprint arXiv:1811.00937, 2018.

[18] Tom Kwiatkowski, Jennimaria Palomaki, Olivia Redfield, Michael Collins, Ankur Parikh, Chris Alberti, Danielle Epstein, Illia Polosukhin, Jacob Devlin, Kenton Lee, et al. Natural questions: a benchmark for question answering research. Transactions of the Association for Computational Linguistics, pages 453–466, 2019.

[19] Mandar Joshi, Eunsol Choi, Daniel S Weld, and Luke Zettlemoyer. Triviaqa: A large scale distantly supervised challenge dataset for reading comprehension. arXiv preprint arXiv:1705.03551, 2017.

[20] Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. Boolq: Exploring the surprising difficulty of natural yes/no questions. arXiv preprint arXiv:1905.10044, 2019.

[21] Eunsol Choi, He He, Mohit Iyyer, Mark Yatskar, Wen-tau Yih, Yejin Choi, Percy Liang, and Luke Zettlemoyer. Quac: Question answering in context. arXiv preprint arXiv:1808.07036, 2018.

[22] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

[23] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874, 2021.

[24] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374, 2021.

[25] Jacob Austin, Augustus Odena, Maxwell Nye, Maarten Bosma, Henryk Michalewski, David Dohan, Ellen Jiang, Carrie Cai, Michael Terry, Quoc Le, et al. Program synthesis with large language models. arXiv preprint arXiv:2108.07732, 2021.

[26] Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300, 2020.

[27] Mirac Suzgun, Nathan Scales, Nathanael Schärli, Sebastian Gehrmann, Yi Tay, Hyung Won Chung, Aakanksha Chowdhery, Quoc V Le, Ed H Chi, Denny Zhou, , and Jason Wei. Challenging big-bench tasks and whether chain-of-thought can solve them. arXiv preprint arXiv:2210.09261, 2022.

[28] Wanjun Zhong, Ruixiang Cui, Yiduo Guo, Yaobo Liang, Shuai Lu, Yanlin Wang, Amin Saied, Weizhu Chen, and Nan Duan. Agieval: A human-centric benchmark for evaluating foundation models. arXiv preprint arXiv:2304.06364, 2023.

[29] Amirkeivan Mohtashami and Martin Jaggi. Landmark attention: Random-access infinite context length for transformers. arXiv preprint arXiv:2305.16300, 2023.

[30] Zhangir Azerbayev, Hailey Schoelkopf, Keiran Paster, Marco Dos Santos, Stephen McAleer, Albert Q Jiang, Jia Deng, Stella Biderman, and Sean Welleck. Llemma: An open language model for mathematics. arXiv preprint arXiv:2310.10631, 2023.

[31] Alicia Parrish, Angelica Chen, Nikita Nangia, Vishakh Padmakumar, Jason Phang, Jana Thompson, Phu Mon Htut, and Samuel R Bowman. Bbq: A hand-built bias benchmark for question answering. arXiv preprint arXiv:2110.08193, 2021.

[32] Jwala Dhamala, Tony Sun, Varun Kumar, Satyapriya Krishna, Yada Pruksachatkun, Kai-Wei Chang, and Rahul Gupta. Bold: Dataset and metrics for measuring biases in open-ended language generation. In Proceedings of the 2021 ACM conference on fairness, accountability, and transparency, pages 862–872, 2021.

[33] Lianmin Zheng, Wei-Lin Chiang, Ying Sheng, Siyuan Zhuang, Zhanghao Wu, Yonghao Zhuang, Zi Lin, Zhuohan Li, Dacheng Li, Eric Xing, et al. Judging llm-as-a-judge with mt-bench and chatbot arena. arXiv preprint arXiv:2306.05685, 2023.

[34] Leo Gao, Stella Biderman, Sid Black, Laurence Golding, Travis Hoppe, Charles Foster, Jason Phang, Horace He, Anish Thite, Noa Nabeshima, et al. The pile: An 800gb dataset of diverse text for language modeling. arXiv preprint arXiv:2101.00027, 2020.

[35] Artyom Eliseev and Denis Mazur. Fast inference of mixture-of-experts language models with offloading. arXiv preprint arXiv:2312.17238, 2023.