Diffusion-LM Improves Controllable Text Generation

Xiang Lisa Li

Stanford University

[email protected]

John Thickstun

Stanford University

[email protected]

Ishaan Gulrajani

Stanford University

[email protected]

Percy Liang

Stanford University

[email protected]

Tatsunori B. Hashimoto

Stanford University

[email protected]

Abstract

Controlling the behavior of language models (LMs) without re-training is a major open problem in natural language generation. While recent works have demonstrated successes on controlling simple sentence attributes (e.g., sentiment), there has been little progress on complex, fine-grained controls (e.g., syntactic structure). To address this challenge, we develop a new non-autoregressive language model based on continuous diffusions that we call Diffusion-LM. Building upon the recent successes of diffusion models in continuous domains, Diffusion-LM iteratively denoises a sequence of Gaussian vectors into word vectors, yielding a sequence of intermediate latent variables. The continuous, hierarchical nature of these intermediate variables enables a simple gradient-based algorithm to perform complex, controllable generation tasks. We demonstrate successful control of Diffusion-LM for six challenging fine-grained control tasks, significantly outperforming prior work.[^1]

[^1]: Code is available at https://github.com/XiangLi1999/Diffusion-LM.git

Executive Summary: Large language models generate high-quality text but struggle to produce output tailored to specific requirements, such as particular sentiments, topics, or syntactic structures, without costly retraining on task-specific data. This limits their practical use in applications like automated content creation, chatbots, or report writing, where reliability and customization are key. Current methods either retrain models, which is expensive and inflexible for combining controls, or use lightweight "plug-and-play" techniques that work only for basic attributes and fail on complex, detailed constraints like exact sentence structures.

This document introduces and evaluates Diffusion-LM, a new type of language model designed to generate text under fine-grained controls without retraining. The goal is to demonstrate that Diffusion-LM can reliably produce text meeting multiple, intricate specifications, such as targeted semantic details or parse trees, while keeping the model versatile for various tasks.

The authors adapted diffusion models—proven in image and audio generation—from continuous domains to discrete text. They embedded words into a continuous vector space, trained the model on two datasets (50,000 restaurant reviews and 98,000 short stories) to iteratively denoise random noise into word-like vectors over 2,000 steps, and used a transformer architecture with 80 million parameters. For control, they applied simple gradient updates to intermediate latent representations, guided by external classifiers trained on small labeled datasets. They tested on six tasks: four using classifiers for semantic content, parts-of-speech, syntax trees, and syntax spans; plus length control and sentence infilling without classifiers. Baselines included plug-and-play methods like PPLM and FUDGE, plus fine-tuned autoregressive models as oracles.

The most striking results show Diffusion-LM succeeding where others fail. It achieved control success rates of 81% for semantic content (versus 70% for FUDGE and 10% for PPLM), 90% for parts-of-speech (versus 27%), 86% for syntax trees (versus 18% for FUDGE and even outperforming fine-tuning's 76%), and 94% for syntax spans (versus 54%). Length control reached near-perfect 100% accuracy. When combining controls, like semantics plus syntax, success rates hit 70-75% for both elements, far above baselines' 15-30%. For infilling—generating a middle sentence to connect given contexts—Diffusion-LM matched specialized autoregressive models on automatic metrics (e.g., 7.1% BLEU-4 score) and human evaluations, outperforming prior relaxation-based methods by 4-5 times. Fluency, measured by perplexity under a reference model, stayed competitive, often 20-50% better than baselines.

These findings mean organizations can generate precise, compliant text—like reports with required structures or non-toxic dialogues—without custom training per task, cutting costs and enabling modular combinations of rules (e.g., positive tone plus factual accuracy). This boosts performance in high-stakes uses, reduces risks like biased or unsafe outputs, and aligns with policies for ethical AI. Unlike expectations that non-autoregressive models would lag in fluency, Diffusion-LM excelled on controls needing global planning, thanks to its hierarchical latents, though it underperformed autoregressive models on raw likelihood by 20-30%.

Leaders should prioritize Diffusion-LM for prototypes in controllable applications, starting with tasks like content moderation or personalized writing, and integrate it via the open-source code. Scale training on larger datasets to close the likelihood gap, and pursue optimizations like faster decoding (currently 7 times slower than standard models). If combining with existing systems, weigh trade-offs: it shines on control but may need hybrids for speed. Pilot tests on real workflows will clarify fit.

Key limitations include higher perplexity, indicating less efficient general modeling; slower generation (1-2 minutes for 50 short texts); and reliance on classifiers, which add setup for new controls. Confidence is strong for the tested control tasks, based on rigorous comparisons across 10,000+ samples, but users should validate on domain-specific data and monitor efficiency, as production scaling remains unproven.

1. Introduction

Section Summary: Large language models can produce high-quality text, but controlling the output to meet specific needs like particular topics or sentence structures is challenging for real-world use. Traditional methods like fine-tuning are costly and inflexible for combining multiple controls, while lighter approaches using external guides struggle with complex tasks in standard models. The authors introduce Diffusion-LM, a new model that generates text by gradually refining noisy vectors into words, enabling easy gradient-based steering for intricate controls such as shaping sentence grammar or semantics, which outperforms existing methods across various tasks including combining controls and filling in text gaps.

Large autoregressive language models (LMs) are capable of generating high-quality text [1, 2, 3, 4], but in order to reliably deploy these LMs in real-world applications, the text generation process needs to be controllable: we need to generate text that satisfies desired requirements (e.g. topic, syntactic structure). A natural approach for controlling an LM would be to fine-tune the LM using supervised data of the form (control, text) [5]. However, updating the LM parameters for each control task can be expensive and does not allow for compositions of multiple controls (e.g. generate text that is both positive sentiment and non-toxic). This motivates light-weight and modular plug-and-play approaches [6] that keep the LM frozen and steer the generation process using an external classifier that measures how well the generated text satisfies the control. But steering a frozen autoregressive LM has been shown to be difficult, and existing successes have been limited to simple, attribute-level controls (e.g., sentiment or topic) [6, 7, 8].

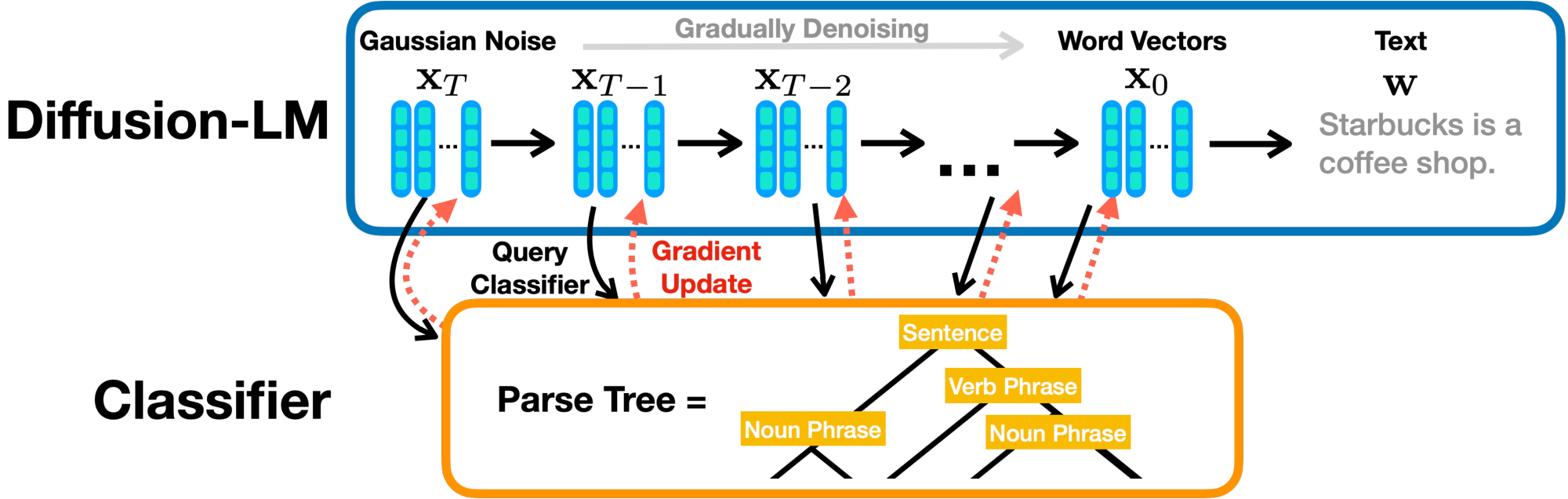

In order to tackle more complex controls, we propose Diffusion-LM, a new language model based on continuous diffusions. Diffusion-LM starts with a sequence of Gaussian noise vectors and incrementally denoises them into vectors corresponding to words, as shown in Figure 1. These gradual denoising steps produce a hierarchy of continuous latent representations. We find that this hierarchical and continuous latent variable enables simple, gradient-based methods to perform complex control tasks such as constraining the parse tree of a generated sequence.

Continuous diffusion models have been extremely successful in vision and audio domains [9, 10, 11, 12, 13], but they have not been applied to text because of the inherently discrete nature of text (Section 3). Adapting this class of models to text requires several modifications to standard diffusions: we add an embedding step and a rounding step to the standard diffusion process, design a training objective to learn the embedding, and propose techniques to improve rounding (Section 4). We control Diffusion-LM using a gradient-based method, as shown in Figure 1. This method enables us to steer the text generation process towards outputs that satisfy target structural and semantic controls. It iteratively performs gradient updates on the continuous latent variables of Diffusion-LM to balance fluency and control satisfaction (Section 5.1).

To demonstrate control of Diffusion-LM, we consider six control targets ranging from fine-grained attributes (e.g., semantic content) to complex structures (e.g., parse trees). Our method almost doubles the success rate of previous plug-and-play methods and matches or outperforms the fine-tuning oracle on all these classifier-guided control tasks (Section 7.1). In addition to these individual control tasks, we show that we can successfully compose multiple classifier-guided controls to generate sentences with both desired semantic content and syntactic structure (Section 7.2). Finally, we consider span-anchored controls, such as length control and infilling. Diffusion-LM allows us to perform these control tasks without a classifier, and our Diffusion-LM significantly outperforms prior plug-and-play methods and is on-par with an autoregressive LM trained from scratch for the infilling task (Section 7.3).

2. Related Work

Section Summary: Diffusion models, which have excelled at generating high-quality images and audio, have been adapted for text in both discrete and continuous ways, but this paper pioneers continuous diffusion models for text to enable more efficient control over generation through gradient-based techniques. Most large language models generate text sequentially from left to right, which restricts their ability to handle global controls like filling in gaps or enforcing sentence structure, leading to specialized fixes; non-autoregressive models exist for tasks like translation but struggle with general language use. Plug-and-play methods steer these models using classifiers to balance fluency and desired traits without retraining, yet autoregressive ones like PPLM are limited in fixing errors or managing complex controls, whereas the proposed diffusion approach uses continuous latent variables for more flexible, guided generation.

Diffusion Models for Text.

Diffusion models [14] have demonstrated great success in continuous data domains [9, 15, 10, 16], producing images and audio that have state-of-the-art sample quality. To handle discrete data, past works have studied text diffusion models on discrete state spaces, which define a corruption process on discrete data (e.g., each token has some probability to be corrupted to an absorbing or random token) [17, 18, 19]. In this paper, we focus on continuous diffusion models for text and, to the best of our knowledge, our work is the first to explore this setting. In contrast to discrete diffusion LMs, our continuous diffusion LMs induce continuous latent representations, which enable efficient gradient-based methods for controllable generation.

Autoregressive and Non-autoregressive LMs.

Most large pre-trained LMs are left-to-right autoregressive (e.g., GPT-3 [2], PaLM [3]). The fixed generation order limits the models' flexibility in many controllable generation settings, especially those that impose controls globally on both left and right contexts. One example is infilling, which imposes lexical control on the right contexts; another example is syntactic structure control, which controls global properties involving both left and right contexts. Since autoregressive LMs cannot directly condition on right contexts, prior works have developed specialized training and decoding techniques for these tasks [20, 21, 22]. For example, [23] proposed a decoding method that relaxes the discrete LM outputs to continuous variables and backpropagates gradient information from the right context. Diffusion-LM can condition on arbitrary classifiers that look at complex, global properties of the sentence. There are other non-autoregressive LMs that have been developed for machine translation and speech-to-text tasks [24, 25]. However, these methods are specialized for speech and translation settings, where the entropy over valid outputs is low, and it has been shown that these approaches fail for language modeling [26].

Plug-and-Play Controllable Generation.

Plug-and-play controllable generation aims to keep the LM frozen and steer its output using potential functions (e.g., classifiers). Given a probabilistic potential function that measures how well the generated text satisfies the desired control, the generated text should be optimized for both control satisfaction (measured by the potential function) and fluency (measured by LM probabilities). There are several plug-and-play approaches based on autoregressive LMs: FUDGE [8] reweights the LM prediction at each token with an estimate of control satisfaction for the partial sequence; GeDi [7] and DExperts [27] reweight the LM prediction at each token with a smaller LM finetuned/trained for the control task.

The closest work to ours is PPLM [6], which runs gradient ascent on an autoregressive LM's hidden activations to steer the next token to satisfy the control and maintain fluency. Because PPLM is based on autoregressive LMs, it can only generate left-to-right. This prevents PPLM from repairing and recovering errors made in previous generation steps. Despite their success on attribute (e.g., topic) controls, we will show these plug-and-play methods for autoregressive LMs fail on more complex control tasks such as controlling syntactic structure and semantic content in Section 7.1. We demonstrate that Diffusion-LM is capable of plug-and-play controllable generation by applying classifier-guided gradient updates to the continuous sequence of latent variables induced by the Diffusion-LM.

3. Problem Statement and Background

Section Summary: This section outlines controllable text generation, where the goal is to produce fluent sentences that meet specific criteria like a desired syntax or sentiment, using a pre-trained language model for natural flow combined with a separate classifier for the control, without retraining the main model. It describes autoregressive language models, which build text by sequentially predicting each next word based on what came before, often powered by Transformer architectures. Finally, it explains diffusion models for continuous data, which generate new samples by starting with random noise and iteratively refining it through a learned denoising process, trained by reversing the addition of noise to real data examples.

We first define controllable generation (Section 3.1) and then review continuous diffusion models (Section 3.3).

3.1 Generative Models and Controllable Generation for Text

Text generation is the task of sampling $\mathbf{w}$ from a trained language model $p_\text{lm} (\mathbf{w})$, where $\mathbf{w} = [w_1 \cdots w_n]$ is a sequence of discrete words and $p_\text{lm} (\mathbf{w})$ is a probability distribution over sequences of words. Controllable text generation is the task of sampling $\mathbf{w}$ from a conditional distribution $p(\mathbf{w} \mid \mathbf{c})$, where $\mathbf{c}$ denotes a control variable. For syntactic control, $\mathbf{c}$ can be a target syntax tree (Figure 1), while for sentiment control, $\mathbf{c}$ could be a desired sentiment label. The goal of controllable generation is to generate $\mathbf{w}$ that satisfies the control target $\mathbf{c}$.

Consider the plug-and-play controllable generation setting: we are given a language model $p_\text{lm}(\mathbf{w})$ trained from a large amount of unlabeled text data, and for each control task, we are given a classifier $p(\mathbf{c} \mid \mathbf{w})$ trained from a smaller amount of labeled text data (e.g., for syntactic control, the classifier is a probabilistic parser). The goal is to utilize these two models to approximately sample from the posterior $p(\mathbf{w} \mid \mathbf{c})$ via Bayes rule $p(\mathbf{w} \mid \mathbf{c}) \propto p_\text{lm}(\mathbf{w}) \cdot p(\mathbf{c} \mid \mathbf{w})$. Here, $p_\text{lm}(\mathbf{w})$ encourages $\mathbf{w}$ to be fluent, and $p(\mathbf{c} \mid \mathbf{w})$ encourages $\mathbf{w}$ to fulfill the control.
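The Bayes-rule combination above can be illustrated on a tiny, enumerable candidate set. This is only a sketch: the three sentences and all probabilities are made up for illustration, and a real system cannot enumerate every sequence, which is why approximate sampling is needed.

```python
# Hypothetical toy setup: three candidate sentences with made-up probabilities.
candidates = ["the food was great", "the food was bad", "great food great place"]
p_lm = [0.5, 0.4, 0.1]             # stand-in for p_lm(w): fluency under the LM
p_c_given_w = [0.9, 0.05, 0.95]    # stand-in for p(c | w) with c = "positive"


def posterior(p_lm, p_c_given_w):
    """Normalize p_lm(w) * p(c | w) over an enumerable candidate set."""
    unnorm = [a * b for a, b in zip(p_lm, p_c_given_w)]
    z = sum(unnorm)
    return [u / z for u in unnorm]


post = posterior(p_lm, p_c_given_w)
best = candidates[max(range(len(post)), key=post.__getitem__)]
```

In this toy case the fluent and positive sentence receives most of the posterior mass, while the fluent but negative sentence is suppressed by the classifier term.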

3.2 Autoregressive Language Models

The canonical approach to language modeling factors $p_\text{lm}$ in an autoregressive left-to-right manner, $p_\text{lm}(\mathbf{w}) = p_\text{lm}(w_1) \prod_{i=2}^n p_\text{lm}(w_i \mid w_{<i})$. In this case, text generation is reduced to the task of repeatedly predicting the next token conditioned on the partial sequence generated so far. The next token prediction $p_\text{lm}(w_i \mid w_{<i})$ is often parametrized by a Transformer architecture [28].
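As a minimal sketch of this factorization, the snippet below scores a sentence with a hypothetical bigram model. The vocabulary and probabilities are invented, and a bigram model conditions only on the previous token, whereas a Transformer LM conditions on the whole prefix.

```python
import math

# Hypothetical bigram model: next-token probabilities conditioned only on the
# previous token (a real LM conditions on the whole prefix).
first = {"the": 0.6, "a": 0.4}
bigram = {
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.3, "dog": 0.7},
    "cat": {"sleeps": 1.0},
    "dog": {"sleeps": 1.0},
}


def log_prob(words):
    """log p(w) = log p(w_1) + sum over i >= 2 of log p(w_i | previous token)."""
    total = math.log(first[words[0]])
    for prev, cur in zip(words, words[1:]):
        total += math.log(bigram[prev][cur])
    return total


lp = log_prob(["the", "cat", "sleeps"])
```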

3.3 Diffusion Models for Continuous Domains

A diffusion model ([9, 15]) is a latent variable model that models the data $\mathbf{x}_{0} \in \mathbb{R}^d$ as a Markov chain $\mathbf{x}_{T} \dots \mathbf{x}_{0}$ with each variable in $\mathbb{R}^d$, and $\mathbf{x}_{T}$ is a Gaussian. The diffusion model incrementally denoises the sequence of latent variables $\mathbf{x}_{T:1}$ to approximate samples from the target data distribution (Figure 2). The initial state $p_\theta(\mathbf{x}_{T}) \approx \mathcal{N} (0, \mathbf{I})$, and each denoising transition $\mathbf{x}_{t} \rightarrow \mathbf{x}_{t-1}$ is parametrized by the model $p_\theta(\mathbf{x}_{t-1}\mid \mathbf{x}_{t}) = \mathcal{N}(\mathbf{x}_{t-1}; \mu_\theta(\mathbf{x}_{t}, t), \Sigma_\theta(\mathbf{x}_{t}, t))$. For example, $\mu_\theta$ and $\Sigma_\theta$ may be computed by a U-Net or a Transformer.

To train the diffusion model, we define a forward process that constructs the intermediate latent variables $\mathbf{x}_{1:T}$. The forward process incrementally adds Gaussian noise to data $\mathbf{x}_{0}$ until, at diffusion step $T$, samples $\mathbf{x}_{T}$ are approximately Gaussian. Each transition $\mathbf{x}_{t-1} \rightarrow \mathbf{x}_{t}$ is parametrized by $q(\mathbf{x}_{t} \mid \mathbf{x}_{t-1}) = \mathcal{N} (\mathbf{x}_{t} ; \sqrt{1-\beta_t} \mathbf{x}_{t-1}, \beta_t \mathbf{I})$, where the hyperparameter $\beta_t$ is the amount of noise added at diffusion step $t$. This parametrization of the forward process $q$ contains no trainable parameters and allows us to define a training objective that involves generating noisy data according to a pre-defined forward process $q$ and training a model to reverse the process and reconstruct the data.
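The forward process can be sketched in a few lines. The linear noise schedule and its endpoints below are assumptions chosen only for illustration (they are not stated in this section), and the three-dimensional data point is arbitrary.

```python
import math
import random


def linear_betas(T, beta_min=1e-4, beta_max=0.02):
    """Hypothetical linear noise schedule beta_1..beta_T (illustrative only)."""
    return [beta_min + (beta_max - beta_min) * t / (T - 1) for t in range(T)]


def forward_step(x_prev, beta_t, rng):
    """Sample x_t ~ N(sqrt(1 - beta_t) * x_{t-1}, beta_t * I)."""
    scale, std = math.sqrt(1.0 - beta_t), math.sqrt(beta_t)
    return [scale * v + std * rng.gauss(0.0, 1.0) for v in x_prev]


def forward_chain(x0, betas, rng):
    """Return [x_0, x_1, ..., x_T] by applying forward_step repeatedly."""
    xs = [list(x0)]
    for beta_t in betas:
        xs.append(forward_step(xs[-1], beta_t, rng))
    return xs


rng = random.Random(0)
betas = linear_betas(T=1000)
chain = forward_chain([2.0, -1.0, 0.5], betas, rng)

# Fraction of the original signal remaining at step T: prod_t sqrt(1 - beta_t).
signal_left = math.prod(math.sqrt(1.0 - b) for b in betas)
```

With this schedule, less than one percent of the original signal survives to step $T$, so $\mathbf{x}_{T}$ is close to a standard Gaussian sample regardless of $\mathbf{x}_{0}$.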

![**Figure 2:** A graphical model representing the forward and reverse diffusion processes. In addition to the original diffusion models [9], we add a Markov transition between $\mathbf{x}_{0}$ and $\mathbf{w}$, and propose the embedding (Section 4.1) and rounding (Section 4.2) techniques.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/99rz4uqq/graphical_model_v2.png)

The diffusion model is trained to maximize the marginal likelihood of the data $\mathbb{E}_{\mathbf{x}_{0} \sim p_\text{data}}[\log p_\theta(\mathbf{x}_{0})]$, and the canonical objective is the variational lower bound of $\log p_\theta(\mathbf{x}_{0})$ [14],

$ \mathcal{L}_\text{vlb} (\mathbf{x}_{0}) = \mathop{\mathbb{E}}_{q(\mathbf{x}_{1:T} | \mathbf{x}_{0})} \left[\log \frac{q(\mathbf{x}_{T} | \mathbf{x}_{0})}{p_\theta(\mathbf{x}_{T})} + \sum_{t=2}^T \log \frac{q(\mathbf{x}_{t-1} | \mathbf{x}_{0}, \mathbf{x}_{t})} {p_\theta(\mathbf{x}_{t-1} | \mathbf{x}_{t}) } - \log p_\theta(\mathbf{x}_{0} | \mathbf{x}_{1})\right].\tag{1} $

However, this objective can be unstable and require many optimization tricks to stabilize [15]. To circumvent this issue, [9] devised a simple surrogate objective that expands and reweights each KL-divergence term in $\mathcal{L}_\text{vlb}$ to obtain a mean-squared error loss (derivation in Appendix E) which we will refer to as

$ \mathcal{L}_\text{simple} (\mathbf{x}_{0}) = \sum_{t=1}^T \mathop{\mathbb{E}}_{q(\mathbf{x}_{t} \mid \mathbf{x}_{0})} || \mu_\theta(\mathbf{x}_{t}, t) - \hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0}) ||^2, $

where $\hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0})$ is the mean of the posterior $q(\mathbf{x}_{t-1} | \mathbf{x}_{0}, \mathbf{x}_{t})$, which is a closed-form Gaussian, and $\mu_\theta(\mathbf{x}_{t}, t)$ is the predicted mean of $p_\theta(\mathbf{x}_{t-1} \mid \mathbf{x}_{t})$ computed by a neural network. While $\mathcal{L}_\text{simple}$ is no longer a valid lower bound, prior work has found that it empirically made training more stable and improved sample quality[^2]. We will make use of similar simplifications in Diffusion-LM to stabilize training and improve sample quality (Section 4.1).

[^2]: Our definition of $\mathcal{L}_\text{simple}$ here uses a different parametrization from [9]. We define our squared loss in terms of $\mu_\theta(\mathbf{x}_{t}, t)$ while they express it in terms of $\epsilon_\theta(\mathbf{x}_{t}, t)$.
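For reference, the posterior mean $\hat{\mu}$ that appears in $\mathcal{L}_\text{simple}$ has a standard closed form in the diffusion literature [9]. The expression below is a restatement under assumed notation, writing $\alpha_t = 1-\beta_t$ and $\bar{\alpha}_t$ for the cumulative product of the $\alpha_s$ up to step $t$; it is not copied from this paper's appendix.

$ \hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0}) = \frac{\sqrt{\bar{\alpha}_{t-1}}\, \beta_t}{1-\bar{\alpha}_t}\, \mathbf{x}_{0} + \frac{\sqrt{\alpha_t}\, (1-\bar{\alpha}_{t-1})}{1-\bar{\alpha}_t}\, \mathbf{x}_{t} $

Because this expression is linear in $\mathbf{x}_{0}$, a network that predicts $\mathbf{x}_{0}$ determines the posterior mean up to known, step-dependent constants.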

4. Diffusion-LM: Continuous Diffusion Language Modeling

Section Summary: To adapt standard diffusion models for generating text, Diffusion-LM introduces key changes: it creates a way to convert discrete words into continuous vectors through a learned embedding function, trained alongside the model's other parts to better capture language structure. This embedding maps sequences of words into a continuous space, and the training process adds steps to smoothly transition from words to noisy versions and back, ensuring the model learns meaningful clusters, like words with similar grammatical roles. To convert the model's continuous outputs back into readable text, it uses a rounding technique that selects the most likely word per position, refined by tweaking the training goal so the model consistently predicts outputs that align precisely with these embeddings, reducing errors in the process.

Constructing Diffusion-LM requires several modifications to the standard diffusion model. First, we must define an embedding function that maps discrete text into a continuous space. To address this, we propose an end-to-end training objective for learning embeddings (Section 4.1). Second, we require a rounding method to map vectors in embedding space back to words. To address this, we propose training and decoding time methods to facilitate rounding (Section 4.2).

4.1 End-to-end Training

To apply a continuous diffusion model to discrete text, we define an embedding function $\textsc{Emb}(w_i)$ that maps each word to a vector in $\mathbb{R}^{d}$. We define the embedding of a sequence $\mathbf{w}$ of length $n$ to be: $\textsc{Emb}(\mathbf{w}) = [\textsc{Emb}(w_1), \dots, \textsc{Emb}(w_n)] \in \mathbb{R}^{nd}$.

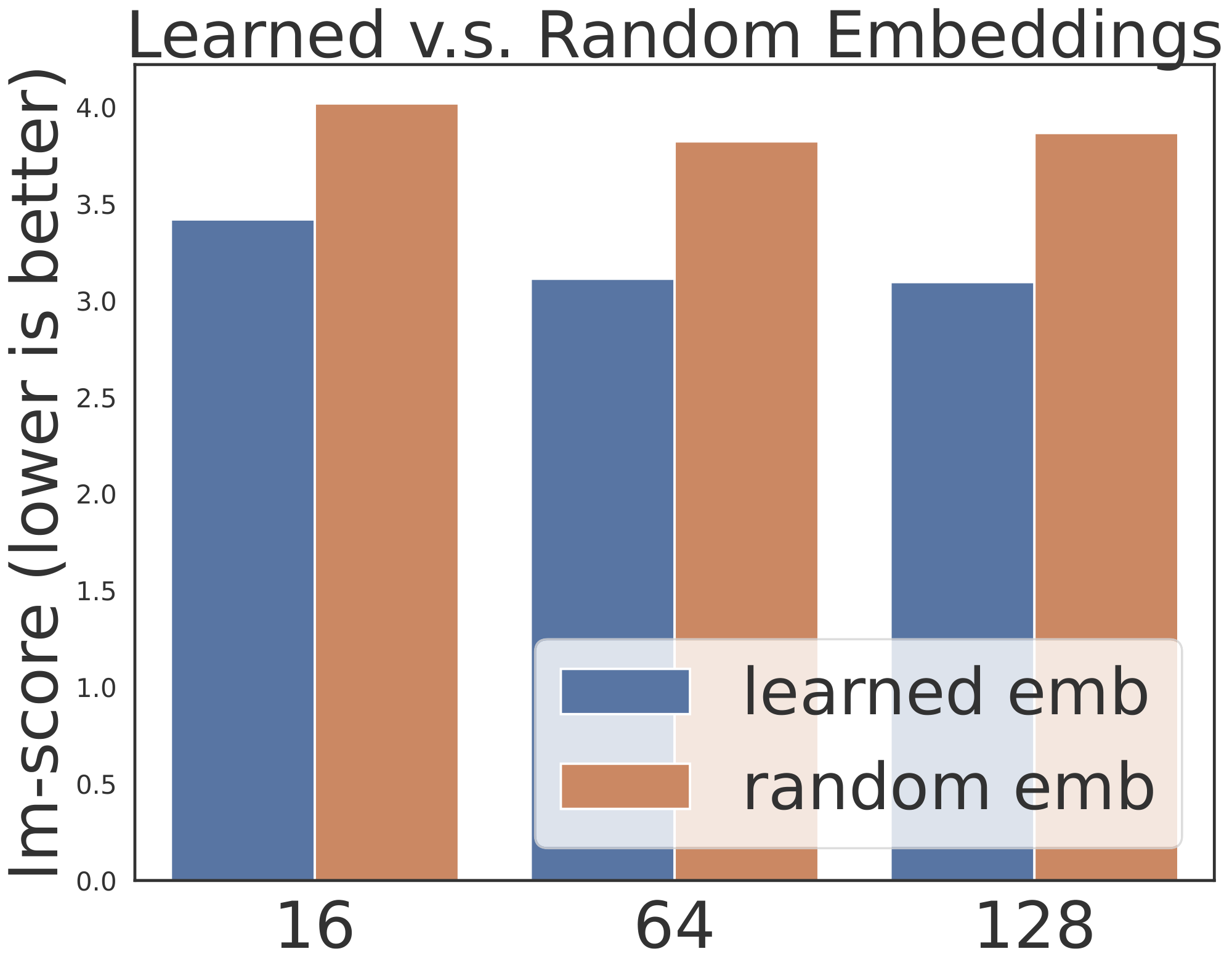

We propose a modification of the diffusion model training objective Equation (1) that jointly learns the diffusion model's parameters and word embeddings. In preliminary experiments, we explored random Gaussian embeddings, as well as pre-trained word embeddings [29, 1]. We found that these fixed embeddings are suboptimal for Diffusion-LM compared to end-to-end training[^3].

[^3]: While trainable embeddings perform best on control and generation tasks, we found that fixed embeddings onto the vocabulary simplex were helpful when optimizing for held-out perplexity. We leave discussion of this approach and perplexity results to Appendix F as the focus of this work is generation quality and not perplexity.

As shown in Figure 2, our approach adds a Markov transition from discrete words $\mathbf{w}$ to $\mathbf{x}_{0}$ in the forward process, parametrized by $q_\phi(\mathbf{x}_{0} | \mathbf{w}) = \mathcal{N} (\textsc{Emb}(\mathbf{w}), \sigma_0 I)$. In the reverse process, we add a trainable rounding step, parametrized by $p_\theta(\mathbf{w} \mid \mathbf{x}_{0}) = \prod_{i=1}^n p_\theta(w_i \mid x_i)$, where $p_\theta(w_i \mid x_i)$ is a softmax distribution. The training objectives introduced in Section 3 now become

$ \begin{aligned} \mathcal{L}^\text{e2e}_{\text{vlb}} (\mathbf{w}) &= \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0} | \mathbf{w})}\left[\mathcal{L}_{\text{vlb}} (\mathbf{x}_{0}) + \log q_\phi(\mathbf{x}_{0} | \mathbf{w}) - \log p_\theta(\mathbf{w} | \mathbf{x}_{0})\right], \\ \mathcal{L}^\text{e2e}_\text{simple} (\mathbf{w}) &= \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} \left[\mathcal{L}_\text{simple}(\mathbf{x}_{0}) + || \textsc{Emb}(\mathbf{w}) - \mu_\theta(\mathbf{x}_{1}, 1) ||^2 - \log p_\theta(\mathbf{w} | \mathbf{x}_{0})\right]. \end{aligned}\tag{2} $

![**Figure 3:** A t-SNE [30] plot of the learned word embeddings. Each word is colored by its POS.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/99rz4uqq/embedding_128_v3.png)

We derive $\mathcal{L}^\text{e2e}_\text{simple} (\mathbf{w})$ from $\mathcal{L}^\text{e2e}_{\text{vlb}}(\mathbf{w})$ following the simplification in Section 3.3 and our derivation details are in Appendix E. Since we are training the embedding function, $q_\phi$ now contains trainable parameters and we use the reparametrization trick ([31, 32]) to backpropagate through this sampling step. Empirically, we find the learned embeddings cluster meaningfully: words with the same part-of-speech tags (syntactic role) tend to be clustered, as shown in Figure 3.
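The embedding step and the reparametrized draw from $q_\phi$ can be sketched as follows. The two-dimensional embedding table is hypothetical (the actual table is learned), and $\sigma_0 I$ is read here as a covariance, so the noise is scaled by $\sqrt{\sigma_0}$; that reading is an assumption.

```python
import math
import random

# Hypothetical 2-d embedding table; in Diffusion-LM the table is learned.
EMB = {"the": [0.9, 0.1], "cat": [-0.4, 0.8], "sat": [0.2, -0.7]}


def embed(words):
    """Emb(w): concatenate per-word vectors into one vector in R^{n*d}."""
    return [v for w in words for v in EMB[w]]


def sample_x0(words, sigma0, rng):
    """Reparametrized draw x_0 = Emb(w) + sqrt(sigma0) * eps, eps ~ N(0, I).

    The noise eps does not depend on the embedding table, so given eps,
    x_0 is a deterministic, differentiable function of Emb(w).
    """
    mean = embed(words)
    std = math.sqrt(sigma0)  # sigma0 * I is treated as a covariance here
    return [m + std * rng.gauss(0.0, 1.0) for m in mean]


rng = random.Random(0)
x0 = sample_x0(["the", "cat", "sat"], sigma0=0.01, rng=rng)
```

In an autodiff framework, the same construction lets gradients of the loss flow through `x0` back into the embedding table.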

4.2 Reducing Rounding Errors

The learned embeddings define a mapping from discrete text to the continuous $\mathbf{x}_{0}$. We now describe the inverse process of rounding a predicted $\mathbf{x}_{0}$ back to discrete text. Rounding is achieved by choosing the most probable word for each position, according to argmax $p_\theta(\mathbf{w} \mid \mathbf{x}_{0})= \prod_{i=1}^n p_\theta(w_i \mid x_i)$. Ideally, this argmax-rounding would be sufficient to map back to discrete text, as the denoising steps should ensure that $\mathbf{x}_{0}$ lies exactly on the embedding of some word. However, empirically, the model fails to generate $\mathbf{x}_{0}$ that commits to a single word.
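A minimal sketch of argmax rounding is shown below. The embedding table is hypothetical, and using negative squared distances to the embeddings as softmax logits is an assumption made for illustration; the paper only states that $p_\theta(w_i \mid x_i)$ is a softmax distribution.

```python
import math

# Hypothetical 2-d embedding table (learned in the actual model).
EMB = {"the": [0.9, 0.1], "cat": [-0.4, 0.8], "sat": [0.2, -0.7]}


def word_probs(x_i):
    """Softmax over words; logits are negative squared distances (assumed)."""
    logits = {w: -sum((a - b) ** 2 for a, b in zip(x_i, e)) for w, e in EMB.items()}
    m = max(logits.values())
    exps = {w: math.exp(l - m) for w, l in logits.items()}
    z = sum(exps.values())
    return {w: v / z for w, v in exps.items()}


def round_to_words(x0, d=2):
    """Argmax rounding: pick the most probable word independently per position."""
    positions = [x0[i:i + d] for i in range(0, len(x0), d)]
    return [max(word_probs(x_i).items(), key=lambda kv: kv[1])[0] for x_i in positions]


words = round_to_words([0.8, 0.2, -0.3, 0.6, 0.1, -0.5])

# A vector halfway between "the" and "cat" does not commit to either word.
ambiguous = word_probs([0.25, 0.45])
```

When a position lands between two embeddings, as in `ambiguous`, the distribution is nearly flat across the two words and argmax rounding becomes unreliable.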

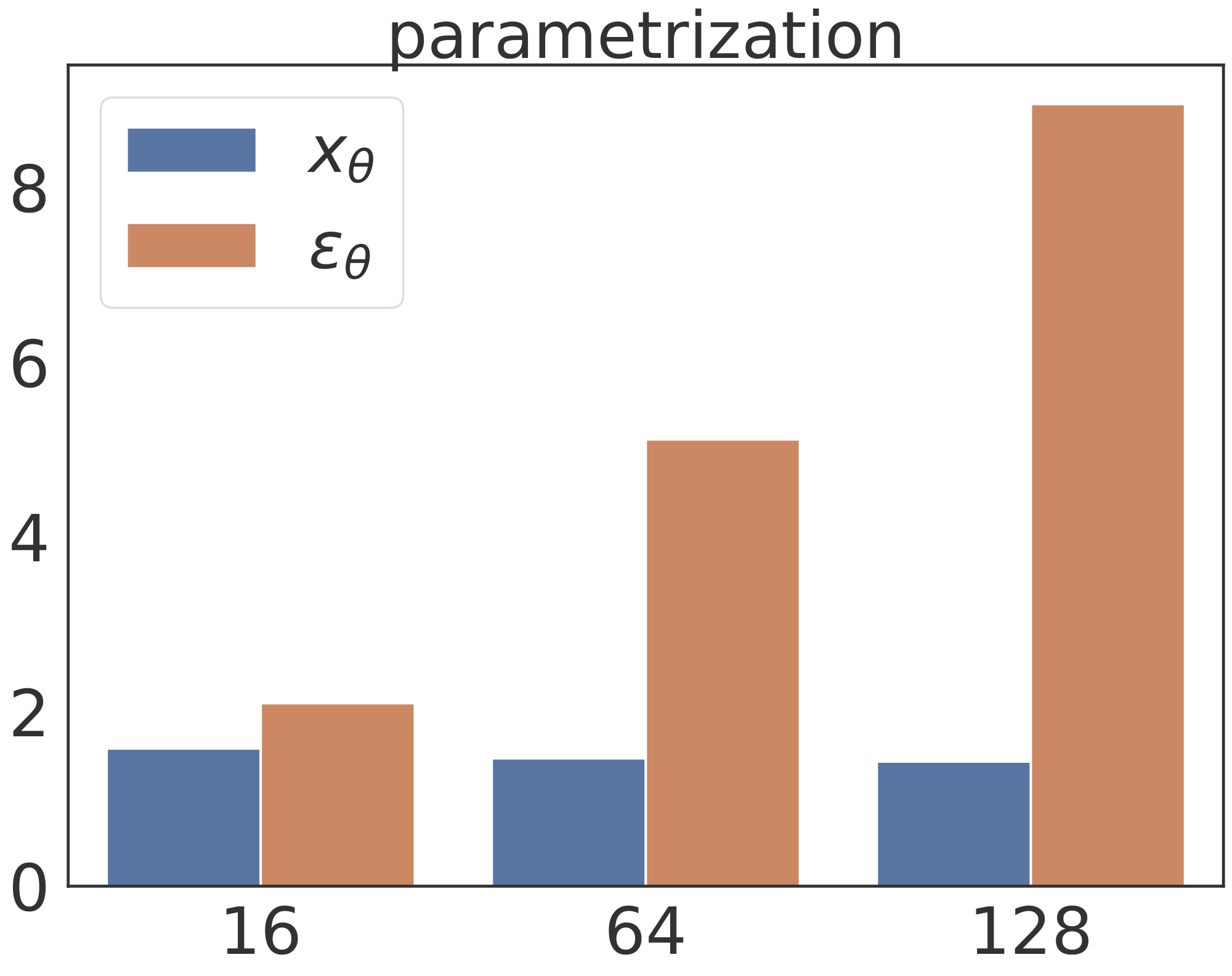

One explanation for this phenomenon is that the $\mathcal{L}_\text{simple}(\mathbf{x}_{0})$ term in our objective Equation 2 puts insufficient emphasis on modeling the structure of $\mathbf{x}_{0}$. Recall that we defined $\mathcal{L}_\text{simple}(\mathbf{x}_{0}) =\sum_{t=1}^T \mathbb{E}_{\mathbf{x}_{t}}|| \mu_\theta(\mathbf{x}_{t}, t) - \hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0}) ||^2$, where our model $\mu_\theta(\mathbf{x}_{t}, t)$ directly predicts the mean of $p_\theta(\mathbf{x}_{t-1}\mid \mathbf{x}_{t})$ for each denoising step $t$. In this objective, the constraint that $\mathbf{x}_{0}$ has to commit to a single word embedding will only appear in the terms with $t$ near $0$, and we found that this parametrization required careful tuning to force the objective to emphasize those terms (see Appendix H).

Our approach re-parametrizes $\mathcal{L}_\text{simple}$ to force Diffusion-LM to explicitly model $\mathbf{x}_{0}$ in every term of the objective. Specifically, we derive an analogue to $\mathcal{L}_\text{simple}$ which is parametrized via $\mathbf{x}_{0}$, $\mathcal{L}^\text{e2e}_{\mathbf{x}_{0}\text{-simple}}(\mathbf{x}_{0})=\sum_{t=1}^T \mathbb{E}_{\mathbf{x}_{t}} || f_\theta(\mathbf{x}_{t}, t) - \mathbf{x}_{0} ||^2$, where our model $f_\theta(\mathbf{x}_{t}, t)$ predicts $\mathbf{x}_{0}$ directly [^4]. This forces the neural network to predict $\mathbf{x}_{0}$ in every term and we found that models trained with this objective quickly learn that $\mathbf{x}_{0}$ should be precisely centered at a word embedding.

[^4]: Predicting $\mathbf{x}_{0}$ and $\mathbf{x}_{t-1}$ is equivalent up to scaling constants as the distribution of $\mathbf{x}_{t-1}$ can be obtained in closed form via the forward process $\mathbf{x}_{t-1} = \sqrt{\bar{\alpha}} \mathbf{x}_{0} +\sqrt{1- \bar{\alpha}} \epsilon$, see Appendix E for further details.
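The $\mathbf{x}_{0}$-parametrized objective can be estimated by Monte Carlo sampling from the closed-form marginal $q(\mathbf{x}_{t} \mid \mathbf{x}_{0})$. The sketch below uses a hypothetical ten-step schedule and two stand-in predictors in place of a trained $f_\theta$; none of these values come from the paper.

```python
import math
import random


def alpha_bars(betas):
    """Cumulative products abar_t = prod over s <= t of (1 - beta_s)."""
    out, acc = [], 1.0
    for b in betas:
        acc *= 1.0 - b
        out.append(acc)
    return out


def x0_simple_loss(f, x0, betas, rng, samples_per_step=4):
    """Monte Carlo estimate of sum_t E_{x_t} ||f(x_t, t) - x_0||^2."""
    total = 0.0
    for t, abar in enumerate(alpha_bars(betas), start=1):
        step = 0.0
        for _ in range(samples_per_step):
            # Draw x_t from the closed-form marginal q(x_t | x_0).
            x_t = [math.sqrt(abar) * v + math.sqrt(1.0 - abar) * rng.gauss(0.0, 1.0)
                   for v in x0]
            pred = f(x_t, t)
            step += sum((p - v) ** 2 for p, v in zip(pred, x0))
        total += step / samples_per_step
    return total


rng = random.Random(0)
betas = [0.01 * (t + 1) for t in range(10)]  # hypothetical schedule
x0 = [0.9, 0.1]


def identity(x_t, t):
    """Stand-in predictor that returns the noisy input unchanged."""
    return x_t


def oracle(x_t, t):
    """Stand-in predictor that always returns the true x_0."""
    return x0


loss_identity = x0_simple_loss(identity, x0, betas, rng)
loss_oracle = x0_simple_loss(oracle, x0, betas, rng)
```

Every term of the sum compares the prediction against $\mathbf{x}_{0}$ itself, so a predictor is penalized at all noise levels for failing to land on the clean embedding, not only at steps near $t = 0$.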

We described how re-parametrization can be helpful for model training, but we also found that the same intuition could be used at decoding time in a technique that we call the clamping trick. In the standard generation approach for a $\mathbf{x}_{0}$-parametrized model, the model denoises $\mathbf{x}_{t}$ to $\mathbf{x}_{t-1}$ by first computing an estimate of $\mathbf{x}_{0}$ via $f_\theta(\mathbf{x}_{t}, t)$ and then sampling $\mathbf{x}_{t-1}$ conditioned on this estimate: $\mathbf{x}_{t-1} = \sqrt{\bar{\alpha}} f_\theta(\mathbf{x}_{t}, t) +\sqrt{1- \bar{\alpha}} \epsilon$, where $\bar{\alpha}_t = \prod_{s=0}^t (1-\beta_s)$ and $\epsilon \sim \mathcal{N}(0, I)$ [^5]. In the clamping trick, the model additionally maps the predicted vector $f_\theta(\mathbf{x}_{t}, t)$ to its nearest word embedding sequence. Now, the sampling step becomes $\mathbf{x}_{t-1} = \sqrt{\bar{\alpha}}\cdot \operatorname{Clamp}(f_\theta(\mathbf{x}_{t}, t)) +\sqrt{1- \bar{\alpha}} \epsilon$. The clamping trick forces the predicted vector to commit to a word for intermediate diffusion steps, making the vector predictions more precise and reducing rounding errors.[^6]

[^5]: This follows from the marginal distribution $q(\mathbf{x}_{t} \mid \mathbf{x}_{0})$, which is a closed-form Gaussian since all the Markov transitions are Gaussian.

[^6]: Intuitively, applying the clamping trick to early diffusion steps with $t$ near $T$ may be sub-optimal, because the model has not yet determined which words to commit to. Empirically, applying the clamping trick for all diffusion steps doesn't hurt the performance much. But to follow this intuition, one could also set the starting step of the clamping trick as a hyperparameter.
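One denoising step with the clamping trick can be sketched as follows. The embedding table and the constant stand-in for $f_\theta$ are hypothetical, and Euclidean distance is assumed as the notion of "nearest" embedding.

```python
import math
import random

# Hypothetical 2-d embedding table (learned in the actual model).
EMB = {"the": [0.9, 0.1], "cat": [-0.4, 0.8], "sat": [0.2, -0.7]}


def clamp(x0_hat, d=2):
    """Snap each d-dimensional position to its nearest word embedding."""
    out = []
    for i in range(0, len(x0_hat), d):
        pos = x0_hat[i:i + d]
        nearest = min(EMB.values(),
                      key=lambda e: sum((a - b) ** 2 for a, b in zip(pos, e)))
        out.extend(nearest)
    return out


def denoise_step(x_t, t, f, abar, rng, use_clamp=True):
    """x_{t-1} = sqrt(abar) * Clamp(f(x_t, t)) + sqrt(1 - abar) * eps."""
    x0_hat = f(x_t, t)
    if use_clamp:
        x0_hat = clamp(x0_hat)
    return [math.sqrt(abar) * v + math.sqrt(1.0 - abar) * rng.gauss(0.0, 1.0)
            for v in x0_hat]


def f(x_t, t):
    """Stand-in denoiser; a real f_theta would be the trained network."""
    return [0.7, 0.3, -0.2, 0.5]


# abar = 1.0 removes the noise term, so the clamped estimate is visible directly.
x_prev = denoise_step([0.0] * 4, t=5, f=f, abar=1.0, rng=random.Random(0))
```

With the noise switched off, the output is exactly the embeddings of "the" and "cat", the nearest words to the two predicted positions.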

5. Decoding and Controllable Generation with Diffusion-LM

Section Summary: This section explains how Diffusion-LM can generate text with specific controls, like guiding the output toward certain topics or styles, by tweaking hidden continuous representations of the text rather than the words themselves, using simple math updates and a helper network to balance fluency and desired traits. To make this faster and better for text, the method adds a penalty to keep the language natural and runs several adjustment steps at once, while cutting down the overall process steps. For tasks needing just one polished result, like translation, it uses a selection technique called Minimum Bayes Risk decoding, which picks the best sample from a bunch by comparing them against each other to minimize errors.

Having described the Diffusion-LM, we now consider the problem of controllable text generation (Section 5.1) and decoding (Section 5.2).

5.1 Controllable Text Generation

We now describe a procedure that enables plug-and-play control on Diffusion-LM. Our approach to control is inspired by the Bayesian formulation in Section 3.1, but instead of performing control directly on the discrete text, we perform control on the sequence of continuous latent variables $\mathbf{x}_{0:T}$ defined by Diffusion-LM, and apply the rounding step to convert these latents into text.

Controlling $\mathbf{x}_{0:T}$ is equivalent to decoding from the posterior $p(\mathbf{x}_{0:T} | \mathbf{c}) = \prod_{t=1}^T p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}, \mathbf{c})$, and we decompose this joint inference problem to a sequence of control problems at each diffusion step: $p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}, \mathbf{c}) \propto p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}) \cdot p(\mathbf{c} \mid \mathbf{x}_{t-1}, \mathbf{x}_{t})$. We further simplify $p(\mathbf{c} \mid \mathbf{x}_{t-1}, \mathbf{x}_{t})=p(\mathbf{c} \mid \mathbf{x}_{t-1})$ via conditional independence assumptions from prior work on controlling diffusions [33]. Consequently, for the $t$-th step, we run a gradient update on $\mathbf{x}_{t-1}$:

$ \begin{align*} \nabla_{\mathbf{x}_{t-1}} \log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}, \mathbf{c}) = \nabla_{\mathbf{x}_{t-1}} \log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}) + \nabla_{\mathbf{x}_{t-1}} \log p(\mathbf{c} \mid \mathbf{x}_{t-1}), \end{align*} $

where both $\log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t})$ and $\log p(\mathbf{c} \mid \mathbf{x}_{t-1})$ are differentiable: the first term is parametrized by Diffusion-LM, and the second term is parametrized by a neural network classifier.

Similar to work in the image setting [12, 33], we train the classifier on the diffusion latent variables and run gradient updates on the latent space $\mathbf{x}_{t-1}$ to steer it towards fulfilling the control. These image diffusion works take one gradient step in the direction of $\nabla_{\mathbf{x}_{t-1}} \log p(\mathbf{c} \mid \mathbf{x}_{t-1})$ per diffusion step. To improve performance on text and speed up decoding, we introduce two key modifications: fluency regularization and multiple gradient steps.

To generate fluent text, we run gradient updates on a control objective with fluency regularization: $\lambda \log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}) + \log p(\mathbf{c} \mid \mathbf{x}_{t-1})$, where $\lambda$ is a hyperparameter that trades off fluency (the first term) and control (the second term). While existing controllable generation methods for diffusions do not include the $\lambda \log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t})$ term in the objective, we found this term to be instrumental for generating fluent text. The resulting controllable generation process can be viewed as a stochastic decoding method that balances maximizing and sampling $p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}, \mathbf{c})$, much like popular text generation techniques such as nucleus sampling [34] or sampling with low temperature. To improve the control quality, we take multiple gradient steps for each diffusion step: we run $3$ steps of the Adagrad [^7] [35] update for each diffusion step. To mitigate the increased computation cost, we downsample the diffusion steps from 2000 to 200, which speeds up our controllable generation algorithm without hurting sample quality much.

[^7]: We tried ablations that replaced Adagrad with SGD, but we found Adagrad to be substantially less sensitive to hyperparameter tuning.
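As a minimal sketch of the per-step update described above, the following Python snippet runs a few Adagrad ascent steps on $\lambda \log p(\mathbf{x}_{t-1} \mid \mathbf{x}_{t}) + \log p(\mathbf{c} \mid \mathbf{x}_{t-1})$. The isotropic Gaussian transition and the quadratic classifier are toy stand-ins for Diffusion-LM and the trained classifier, and the function name `guided_update` and its default values are hypothetical choices for illustration only.

```python
import math

def guided_update(x_init, mu, sigma, target, lam=0.01, lr=0.1, n_steps=3, eps=1e-8):
    """Run Adagrad ascent on lam * log p(x | x_t) + log p(c | x).

    Toy assumptions (not the trained models):
      p(x_{t-1} | x_t) = N(mu, sigma^2 I)   stands in for Diffusion-LM,
      log p(c | x) = -0.5 * ||x - target||^2 stands in for the classifier.
    """
    x = list(x_init)
    accum = [0.0] * len(x)  # Adagrad running sum of squared gradients
    for _ in range(n_steps):
        for i in range(len(x)):
            grad_fluency = -(x[i] - mu[i]) / sigma ** 2  # gradient of the Gaussian log-density
            grad_control = -(x[i] - target[i])           # gradient of the toy classifier log-prob
            g = lam * grad_fluency + grad_control
            accum[i] += g * g
            x[i] += lr * g / (math.sqrt(accum[i]) + eps)  # ascent step
    return x

updated = guided_update([0.0, 0.0], mu=[0.0, 0.0], sigma=1.0, target=[1.0, -1.0])
```

With a small $\lambda$ the latent moves mostly toward the classifier's preferred region; increasing $\lambda$ pulls it back toward the mean predicted by the diffusion model.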

5.2 Minimum Bayes Risk Decoding

Many conditional text generation tasks require a single high-quality output sequence, such as machine translation or sentence infilling. In these settings, we apply Minimum Bayes Risk (MBR) decoding [36] to aggregate a set of samples $\mathcal{S}$ drawn from the Diffusion-LM, and select the sample that achieves the minimum expected risk under a loss function $\mathcal{L}$ (e.g., negative BLEU score): $\hat{\mathbf{w}} = \operatorname{argmin}_{\mathbf{w} \in \mathcal{S}} \sum_{\mathbf{w}' \in \mathcal{S}} \frac{1}{|\mathcal{S}|}\mathcal{L}(\mathbf{w}, \mathbf{w}')$. We found that MBR decoding often returned high-quality outputs, since a low-quality sample would be dissimilar from the remaining samples and penalized by the loss function.
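The selection rule above can be sketched in a few lines of Python. The loss used here, one minus unigram F1 overlap, is an illustrative stand-in for negative BLEU, and the names `unigram_loss` and `mbr_select` are hypothetical.

```python
from collections import Counter

def unigram_loss(hyp, ref):
    """Toy loss: 1 - unigram F1 overlap (a stand-in for negative BLEU)."""
    h, r = Counter(hyp.split()), Counter(ref.split())
    overlap = sum((h & r).values())
    if overlap == 0:
        return 1.0
    precision = overlap / sum(h.values())
    recall = overlap / sum(r.values())
    return 1.0 - 2 * precision * recall / (precision + recall)

def mbr_select(samples, loss=unigram_loss):
    """Return the sample with the lowest average loss against all samples."""
    def expected_risk(w):
        return sum(loss(w, other) for other in samples) / len(samples)
    return min(samples, key=expected_risk)

samples = [
    "the cat sat on the mat",
    "the cat sat on a mat",
    "a dog barked loudly",
]
best = mbr_select(samples)
```

The outlier sample is dissimilar from the other two, so its average loss is high and it is never selected.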

6. Experimental Setup

Section Summary: Researchers trained a language model called Diffusion-LM on two datasets: E2E, which contains 50,000 labeled restaurant reviews, and ROCStories, a tougher set of 98,000 short stories about everyday events. They tested it on six control tasks to generate text with specific features, like matching certain meanings, parts of speech, sentence structures, lengths, or filling in gaps between story parts, using metrics for fluency and success rates. For comparison, they pitted it against methods like PPLM and FUDGE, which steer text generation without retraining the model, noting that their approach is faster in some cases.

With the above improvements on training (Section 4) and decoding (Section 5), we train Diffusion-LM for two language modeling tasks. We then apply the controllable generation method to $5$ classifier-guided control tasks, and apply MBR decoding to a classifier-free control task (i.e. infilling).

6.1 Datasets and Hyperparameters

We train Diffusion-LM on two datasets: E2E [37] and ROCStories [38]. The E2E dataset consists of 50K restaurant reviews labeled by 8 fields including food type, price, and customer rating. The ROCStories dataset consists of 98K five-sentence stories, capturing a rich set of causal and temporal commonsense relations between daily events. This dataset is more challenging to model than E2E, because the stories contain a larger vocabulary of 11K words and more diverse semantic content.

Our Diffusion-LM is based on the Transformer [28] architecture with $80$M parameters, a sequence length of $n=64$, $T=2000$ diffusion steps, and a square-root noise schedule (see Appendix A for details). We treat the embedding dimension as a hyperparameter, setting $d=16$ for E2E and $d=128$ for ROCStories. See Appendix B for hyperparameter details. At decoding time, we downsample to 200 diffusion steps for E2E and maintain 2000 steps for ROCStories. Decoding Diffusion-LM for 200 steps is still 7x slower than decoding autoregressive LMs. For controllable generation, our method based on Diffusion-LM is 1.5x slower than FUDGE but 60x faster than PPLM.

6.2 Control tasks

\begin{tabular}{lp{13cm}}

\toprule

input (Semantic Content) & food : Japanese\\

output text & Browns Cambridge is good for Japanese food and also children friendly near The Sorrento . \\

\midrule

input (Parts-of-speech) & PROPN AUX DET ADJ NOUN NOUN VERB ADP DET NOUN ADP DET NOUN PUNCT\\

output text & Zizzi is a local coffee shop located on the outskirts of the city . \\

\midrule

input (Syntax Tree) & (TOP (S (NP (*) (*) (*)) (VP (*) (NP (NP (*) (*))))))\\

output text & The Twenty Two has great food \\

\midrule

input (Syntax Spans) & (7, 10, VP) \\

output text & Wildwood pub serves multicultural dishes and is ranked 3 stars\\

\midrule

input (Length) & 14 \\

output text & Browns Cambridge offers Japanese food located near The Sorrento in the city centre . \\

\midrule

input (left context) & My dog loved tennis balls.\\

input (right context) & My dog had stolen every one and put it under there.\\

output text & One day, I found all of my lost tennis balls underneath the bed. \\

\bottomrule

\end{tabular}

We consider $6$ control tasks shown in Table 1: the first 4 tasks rely on a classifier, and the last 2 tasks are classifier free[^8]. For each control task (e.g. semantic content), we sample $200$ control targets $\mathbf{c}$ (e.g., rating=5 star) from the validation splits, and we generate $50$ samples for each control target. To evaluate the fluency of the generated text, we follow the prior works [8, 6] and feed the generated text to a teacher LM (i.e., a carefully fine-tuned GPT-2 model) and report the perplexity of generated text under the teacher LM. We call this metric lm-score (denoted as lm): a lower lm-score indicates better sample quality. [^9] We define success metrics for each control task as follows:

[^8]: Length is classifier-free for our Diffusion-LM based methods, but other methods still require a classifier.

[^9]: Prior works [8, 6] use GPT [39] as the teacher LM, whereas we use a fine-tuned GPT-2 model because our base autoregressive LM and Diffusion-LM both generate UNK tokens, which do not exist in the pretrained vocabulary of GPT.

Semantic Content. Given a field (e.g., rating) and value (e.g., 5 star), generate a sentence that covers field=value, and report the success rate by exact match of 'value'.

Parts-of-speech. Given a sequence of parts-of-speech (POS) tags (e.g., Pronoun Verb Determiner Noun), generate a sequence of words of the same length whose POS tags (under an oracle POS tagger) match the target (e.g., I ate an apple). We quantify success via word-level exact match.

Syntax Tree. Given a target syntactic parse tree (see Figure 1), generate text whose syntactic parse matches the given parse. To evaluate the success, we parse the generated text by an off-the-shelf parser [40], and report F1 scores.

Syntax Spans. Given a target (span, syntactic category) pair, generate text whose parse tree over span $[i, j]$ matches the target syntactic category (e.g., prepositional phrase). We quantify success via the fraction of spans that match exactly.

Length. Given a target length $10, \dots, 40$, generate a sequence with a length within $\pm 2$ of the target. In the case of Diffusion-LM, we treat this as a classifier-free control task.

Infilling. Given a left context ($O_1$) and a right context ($O_2$) from the aNLG dataset [41], the goal is to generate a sentence that logically connects $O_1$ and $O_2$. For evaluation, we report both automatic and human evaluation from the Genie leaderboard [42].
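Two of the success metrics above are simple enough to sketch directly. The snippet below is an illustrative reading of the Length and Parts-of-speech criteria, assuming whitespace tokenization and POS tags that have already been produced by a tagger; the function names and the treatment of length mismatches are assumptions, not the evaluation code used in the experiments.

```python
def length_success(text, target_length, tolerance=2):
    """True if the whitespace-token count is within +/- tolerance of the target."""
    return abs(len(text.split()) - target_length) <= tolerance

def pos_word_accuracy(predicted_tags, target_tags):
    """Fraction of positions whose predicted POS tag equals the target tag."""
    if len(predicted_tags) != len(target_tags):
        return 0.0  # assumption: a length mismatch counts as a complete failure
    matches = sum(p == t for p, t in zip(predicted_tags, target_tags))
    return matches / len(target_tags)

sentence = ("Browns Cambridge offers Japanese food located near "
            "The Sorrento in the city centre .")
ok = length_success(sentence, 14)
acc = pos_word_accuracy(["PRON", "VERB", "DET", "NOUN"],
                        ["PRON", "VERB", "DET", "ADJ"])
```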

6.3 Classifier-Guided Control Baselines

For the first 5 control tasks, we compare our method with PPLM, FUDGE, and a fine-tuning oracle. Both PPLM and FUDGE are plug-and-play controllable generation approaches based on an autoregressive LM, which we train from scratch using the GPT-2 small architecture [1].

PPLM [6]. This method runs gradient ascent on the LM activations to increase the classifier probabilities and language model probabilities, and has been successful on simple attribute control. We apply PPLM to control semantic content, but not the remaining 4 tasks which require positional information, as PPLM's classifier lacks positional information.

FUDGE [8]. For each control task, FUDGE requires a future discriminator that takes in a prefix sequence and predicts whether the complete sequence would satisfy the constraint. At decoding time, FUDGE reweights the LM prediction by the discriminator scores.

FT. For each control task, we fine-tune GPT-2 on (control, text) pairs, yielding an oracle conditional language model that is not plug-and-play. We report both the sampling (with temperature 1.0) and beam search (with beam size 4) outputs of the fine-tuned models, denoted as FT-sample and FT-search.
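To make the FUDGE baseline concrete, the following sketch shows one decoding step in which next-token LM probabilities are reweighted by discriminator scores and renormalized. It assumes a plain product of the two terms and omits FUDGE's trade-off parameter and top-K pruning; the dictionaries and the name `fudge_reweight` are hypothetical.

```python
def fudge_reweight(lm_probs, discriminator_probs):
    """Combine next-token LM probabilities with per-token discriminator scores.

    lm_probs[tok]            ~ p(tok | prefix) from the base LM
    discriminator_probs[tok] ~ p(constraint satisfied | prefix + tok)
    Returns the renormalized product over the candidate tokens.
    """
    scores = {tok: lm_probs[tok] * discriminator_probs[tok] for tok in lm_probs}
    total = sum(scores.values())
    return {tok: s / total for tok, s in scores.items()}

reweighted = fudge_reweight({"pub": 0.6, "cafe": 0.4},
                            {"pub": 0.1, "cafe": 0.9})
```

Because the discriminator is queried once per candidate token at every position, a single low score early in the prefix affects every continuation.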

6.4 Infilling Baselines

We compare to 3 specialized baseline methods developed in past work for the infilling task.

DELOREAN [22]. This method continuously relaxes the output space of a left-to-right autoregressive LM, and iteratively performs gradient updates on the continuous space to enforce fluent connection to the right contexts. This yields a continuous vector which is rounded back to text.

COLD [23]. COLD specifies an energy-based model that includes fluency (from left-to-right and right-to-left LMs) and coherence constraints (from lexical overlap). It samples continuous vectors from this energy-based model and rounds them to text.

AR-infilling. We train an autoregressive LM from scratch to perform the sentence infilling task [21]. Similar to training Diffusion-LM, we train on the ROCStories dataset, but pre-process it by reordering sentences from $(O_1, O_\text{middle}, O_2)$ to $(O_1, O_2, O_\text{middle})$. At evaluation time, we feed in $O_1, O_2$, and the model generates the middle sentence.

7. Main Results

Section Summary: Researchers trained a model called Diffusion-LM on two datasets and found that, while it doesn't predict text sequences as accurately as traditional models (based on a measure called negative log-likelihood), larger models and datasets help narrow the gap. However, when it comes to generating text with specific controls—like including certain meanings, word types, sentence structures, or exact lengths—Diffusion-LM performs much better than competing methods and even surpasses finely tuned models in challenging tasks such as shaping syntax trees and word spans. This success stems from the model's ability to plan ahead and adjust globally, allowing it to correct errors and produce fluent, on-target results, as shown in example comparisons where other approaches fail after initial mistakes.

We train Diffusion-LMs on the E2E and ROCStories datasets. In terms of negative log-likelihood (NLL, lower is better), we find that the variational upper bound of Diffusion-LM NLL [^10] underperforms the equivalent autoregressive Transformer model (2.28 vs. 1.77 for E2E, 3.88 vs. 3.05 for ROCStories), although scaling up model and dataset size partially bridges the gap (3.88 $\rightarrow$ 3.10 on ROCStories). Our best log-likelihoods required several modifications from Section 4; we explain these and give detailed log-likelihood results in Appendix F. Despite worse likelihoods, controllable generation based on our Diffusion-LM results in significantly better outputs than systems based on autoregressive LMs, as we will show in Section 7.1, Section 7.2, and Section 7.3.

[^10]: Exact log-likelihoods are intractable for Diffusion-LM, so we report the variational bound $\mathcal{L}^\text{e2e}_{\text{vlb}}$, which upper-bounds the NLL (equivalently, it gives a lower bound on the log-likelihood).

7.1 Classifier-Guided Controllable Text Generation Results

\begin{tabular}{lcc|cc|cc|cc|cc}

\toprule

& \multicolumn{2}{c|}{Semantic Content} & \multicolumn{2}{c|}{Parts-of-speech} & \multicolumn{2}{c|}{Syntax Tree} & \multicolumn{2}{c|}{Syntax Spans} & \multicolumn{2}{c}{Length} \\

& ctrl $\uparrow$ & lm $\downarrow$ & ctrl $\uparrow$ & lm $\downarrow$ & ctrl $\uparrow$ & lm $\downarrow$ & ctrl $\uparrow$ & lm $\downarrow$ & ctrl $\uparrow$ & lm $\downarrow$ \\ \hline

PPLM & 9.9 & 5.32 & - & - & - & - & - & - & - & - \\

FUDGE & 69.9 & 2.83 & 27.0 & 7.96 & 17.9 & \textbf{3.39} & 54.2 & 4.03 & 46.9 & 3.11 \\

Diffusion-LM & \textbf{81.2} & \textbf{2.55} &\textbf{90.0} & \textbf{5.16 } & \textbf{86.0} & 3.71 & \textbf{93.8} & \textbf{2.53} & \textbf{99.9} & \textbf{2.16} \\

\midrule

FT-sample & 72.5 & 2.87 & 89.5 & 4.72 & 64.8 & 5.72 & 26.3 & 2.88 & 98.1 & 3.84\\

FT-search & 89.9 & 1.78 & 93.0 & 3.31 & 76.4 & 3.24 & 54.4 & 2.19 & 100.0 & 1.83\\

\bottomrule

\end{tabular}

As shown in Table 2, Diffusion-LM achieves high success and fluency across all classifier-guided control tasks. It significantly outperforms the PPLM and FUDGE baselines across all 5 tasks. Surprisingly, our method outperforms the fine-tuning oracle on controlling syntactic parse trees and spans, while achieving similar performance on the remaining 3 tasks.

Controlling syntactic parse trees and spans are challenging tasks for fine-tuning, because conditioning on the parse tree requires reasoning about the nested structure of the parse tree, and conditioning on spans requires lookahead planning to ensure the right constituent appears at the target position.

We observe that PPLM fails in semantic content controls and conjecture that this is because PPLM is designed to control coarse-grained attributes, and may not be useful for more targeted tasks such as enforcing that a restaurant review contains a reference to Starbucks.

FUDGE performs well on semantic content control but does not perform well on the remaining four tasks. Controlling a structured output (Parts-of-speech and Syntax Tree) is hard for FUDGE because making one mistake anywhere in the prefix makes the discriminator assign low probabilities to all continuations. In other control tasks that require planning (Length and Syntax Spans), the future discriminator is difficult to train, as it must implicitly perform lookahead planning.

The non-autoregressive nature of our Diffusion-LM allows it to easily solve all the tasks that require precise future planning (Syntax Spans and Length). We believe that it works well for complex controls that involve global structures (Parts-of-speech, Syntax Tree) because the coarse-to-fine representations allow the classifier to exert control on the entire sequence (near $t=T$) as well as on individual tokens (near $t=0$).

Qualitative Results.

Table 3 shows samples of Syntax Tree control. Our method and fine-tuning both provide fluent sentences that mostly satisfy controls, whereas FUDGE deviates from the constraints after the first few words. One key difference between our method and fine-tuning is that Diffusion-LM is able to correct for a failed span and have suffix spans match the target. In the first example, the generated span ("Family friendly Indian food") is wrong because it contains 1 more word than the target. Fortunately, this error doesn't propagate to later spans, since Diffusion-LM adjusts by dropping the conjunction. Analogously, in the second example, the FT model generates a failed span ("The Mill") that contains 1 fewer word. However, the FT model fails to adjust in the suffix, leading to many misaligned errors in the suffix.

\begin{tabular}{lp{14cm}}

\toprule

Syntactic Parse & (S (S (NP *) (VP * (NP (NP * *) (VP * \textbf{(NP (ADJP * *) *)})))) * (S (NP * * *) (VP * (ADJP (ADJP *)))))\\

\midrule

FUDGE & Zizzi is a cheap restaurant . \textbf{[incomplete]} \\

Diffusion-LM & Zizzi is a pub providing \textbf{family friendly Indian food} Its customer rating is low \\

FT & Cocum is a Pub serving \textbf{moderately priced meals} and the customer rating is high \\

\toprule

Syntactic Parse & (S (S (VP * (PP * (NP * *)))) * \textbf{(NP * * *)} (VP * (NP (NP * *) (SBAR (WHNP *) (S (VP * (NP * *)))))) *) \\

\midrule

FUDGE & In the city near The Portland Arms is a coffee and fast food place named The Cricketers which is not family - friendly with a customer rating of 5 out of 5 . \\

Diffusion-LM & Located on the riverside, \textbf{The Rice Boat} is a restaurant that serves Indian food . \\

FT & Located near The Sorrento, \textbf{The Mill} is a pub that serves Indian cuisine. \\

\bottomrule

\end{tabular}

7.2 Composition of Controls

\begin{tabular}{lccc|ccc}

\toprule

& \multicolumn{3}{c|}{Semantic Content + Syntax Tree} & \multicolumn{3}{c}{Semantic Content + Parts-of-speech } \\

& semantic ctrl $\uparrow$ & syntax ctrl $\uparrow$ & lm $\downarrow$ & semantic ctrl $\uparrow$ & POS ctrl $\uparrow$ & lm $\downarrow$ \\ \hline

FUDGE & 61.7 & 15.4 & 3.52 & 64.5 & 24.1 & 3.52\\

Diffusion-LM & \textbf{69.8} & \textbf{74.8} & 5.92 & \textbf{63.7} & \textbf{69.1} & 3.46\\

\midrule

FT-PoE & 61.7 & 29.2 & \textbf{2.77} & 29.4 & 10.5 & \textbf{2.97} \\

\bottomrule

\end{tabular}

One unique capability of plug-and-play controllable generation is its modularity. Given classifiers for multiple independent tasks, gradient guided control makes it simple to generate from the intersection of multiple controls by taking gradients on the sum of the classifier log-probabilities.
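Since the gradient of a sum is the sum of the gradients, composing controls only requires adding up the per-classifier gradients before the latent update. The sketch below illustrates this with two toy quadratic log-probabilities standing in for trained classifiers; `combined_control_grad` and `quadratic_grad` are hypothetical names.

```python
def quadratic_grad(target):
    """Gradient of the toy log-prob -0.5 * ||x - target||^2 (hypothetical classifier)."""
    return lambda x: [-(xi - ti) for xi, ti in zip(x, target)]

def combined_control_grad(x, classifier_grads):
    """Gradient of the sum of classifier log-probabilities at latent x.

    Each element of classifier_grads maps x to that classifier's gradient.
    """
    total = [0.0] * len(x)
    for grad_fn in classifier_grads:
        for i, g in enumerate(grad_fn(x)):
            total[i] += g
    return total

grad = combined_control_grad(
    [0.0, 0.0],
    [quadratic_grad([1.0, 0.0]), quadratic_grad([0.0, 2.0])],
)
```

Adding a third control in this setup means appending one more gradient function; no classifier needs to be retrained.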

We evaluate this setting on the combination of Semantic Content + Syntax Tree control and Semantic Content + Parts-of-speech control. As shown in Table 4, our Diffusion-LM achieves a high success rate for both components, whereas FUDGE gives up on the more global syntactic control. This is expected because FUDGE fails to control syntax on its own.

Fine-tuned models are good at POS and semantic content control individually but do not compose these two controls well by product of experts (PoE), leading to a large drop in success rates for both constraints.

7.3 Infilling Results

\begin{tabular}{l|cccc|cccc}

\toprule

& \multicolumn{4}{c|}{Automatic Eval} & \multicolumn{4}{c}{Human Eval} \\

& BLEU-4 $\uparrow$ & ROUGE-L $\uparrow$ & CIDEr $\uparrow$ & BERTScore $\uparrow$ & \\ \hline

Left-only & 0.9 & 16.3 & 3.5 & 38.5 & n/a\\

DELOREAN & 1.6 & 19.1 & 7.9 & 41.7 & n/a\\

COLD & 1.8 & 19.5 & 10.7 & 42.7 & n/a\\

Diffusion & \textbf{7.1} & \textbf{28.3} & \textbf{30.7} & \textbf{89.0} & $\textbf{0.37}^{+0.03}_{-0.02}$ \\

\midrule

AR & 6.7 & 27.0 & 26.9 & \textbf{89.0} & $\textbf{0.39}^{+0.02}_{-0.03}$ \\

\bottomrule

\end{tabular}

As shown in Table 5, our Diffusion-LM significantly outperforms continuous relaxation based methods for infilling (COLD and DELOREAN). Moreover, our method achieves comparable performance to fine-tuning a specialized model for this task: it has slightly better automatic evaluation scores, and the human evaluation found no statistically significant improvement for either method. These results suggest that Diffusion-LM can solve many types of controllable generation tasks that depend on generation order or lexical constraints (such as infilling) without specialized training.

7.4 Ablation Studies

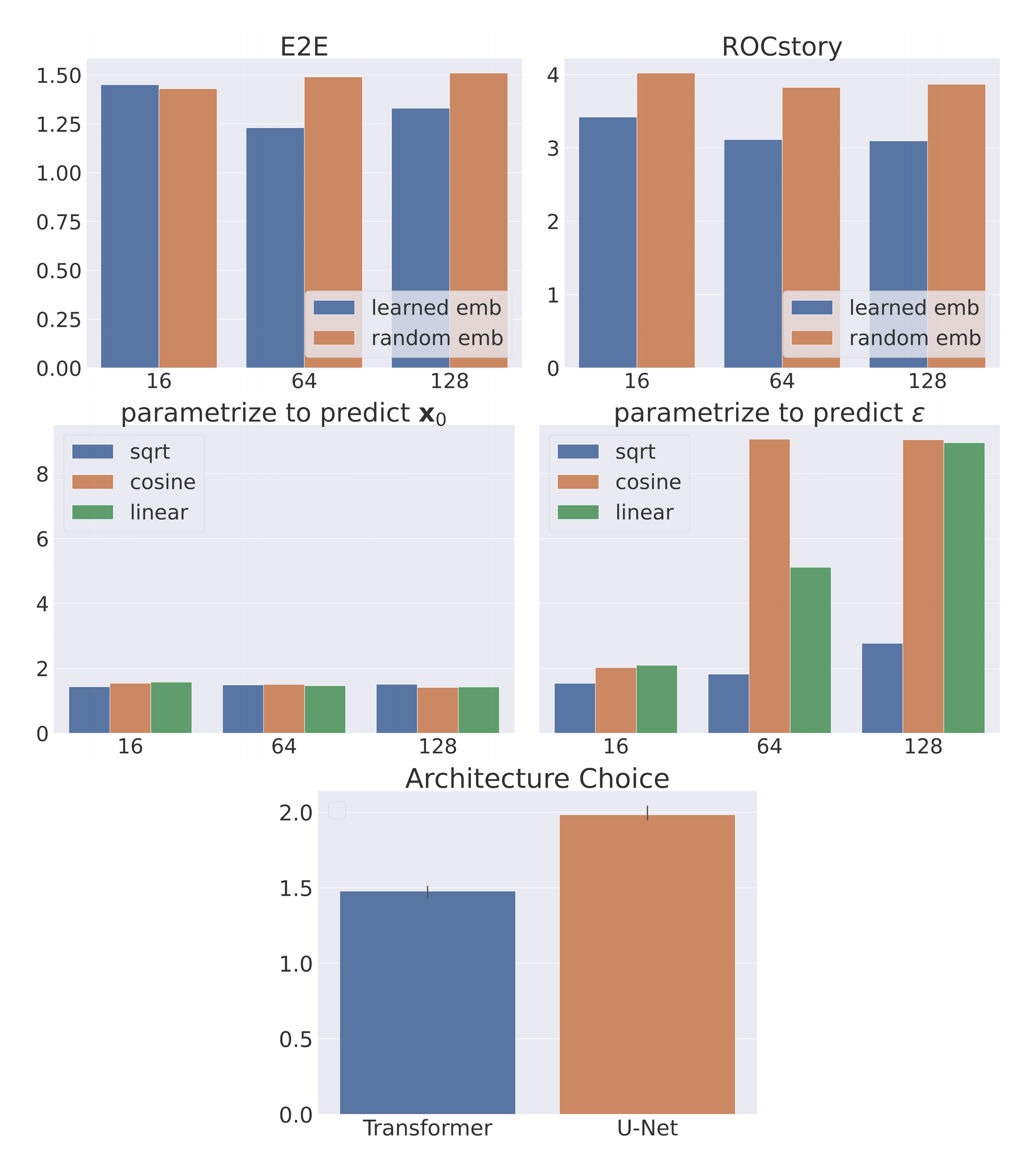

Figure 4: We measure the impact of our proposed design choices through lm-score. We find that both learned embeddings and reparametrization substantially improve sample quality.

We verify the importance of our proposed design choices in Section 4 through two ablation studies. We measure the sample quality of Diffusion-LM using the lm-score (Section 6.2) on 500 samples.

Learned vs. Random Embeddings (Section 4.1). Learned embeddings outperform random embeddings on ROCStories, which is a harder language modeling task. The same trend holds for the E2E dataset but with a smaller margin.

Objective Parametrization (Section 4.2). We propose to let the diffusion model predict $\mathbf{x}_{0}$ directly. Here, we compare this with the standard parametrization in image generation, which parametrizes by the noise term $\epsilon$. Figure 4 (right) shows that parametrizing by $\mathbf{x}_{0}$ consistently attains good performance across dimensions, whereas parametrizing by $\epsilon$ works fine for small dimensions, but quickly collapses for larger dimensions.
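The two parametrizations carry the same information under the standard forward process $\mathbf{x}_{t} = \sqrt{\bar{\alpha}_t}\,\mathbf{x}_{0} + \sqrt{1-\bar{\alpha}_t}\,\epsilon$, which we assume here. The sketch below converts between a predicted $\epsilon$ and the implied $\mathbf{x}_{0}$; the function names are hypothetical and the vectors are plain Python lists for illustration.

```python
import math

def x0_from_eps(x_t, eps, alpha_bar):
    """Recover x_0 implied by a noise prediction eps at noise level alpha_bar."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(xt - b * e) / a for xt, e in zip(x_t, eps)]

def eps_from_x0(x_t, x0, alpha_bar):
    """Recover the noise implied by an x_0 prediction at noise level alpha_bar."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(xt - a * x) / b for xt, x in zip(x_t, x0)]

# Round trip on a toy example with alpha_bar = 0.64.
x0_true, eps_true, alpha_bar = [0.5, -1.0], [0.3, 0.2], 0.64
x_t = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
       for x, e in zip(x0_true, eps_true)]
x0_rec = x0_from_eps(x_t, eps_true, alpha_bar)
eps_rec = eps_from_x0(x_t, x0_true, alpha_bar)
```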

8. Conclusion and Limitations

Section Summary: Diffusion-LM is a new type of language model that uses continuous diffusion processes to allow precise control over text generation, performing well in six challenging tasks by roughly doubling the success rates of earlier methods and matching approaches that need extra training. The researchers are enthusiastic about how it breaks away from the usual step-by-step text creation style in AI. However, it has downsides like higher uncertainty in predictions, much slower text output, and longer training times, though they expect improvements through future research to make it practical for large-scale use.

We proposed Diffusion-LM, a novel and controllable language model based on continuous diffusions, which enables new forms of complex fine-grained control tasks. We demonstrate Diffusion-LM’s success in 6 fine-grained control tasks: our method almost doubles the control success rate of prior methods and is competitive with baseline fine-tuning methods that require additional training.

We find the complex controls enabled by Diffusion-LM to be compelling, and we are excited by how Diffusion-LM is a substantial departure from the current paradigm of discrete autoregressive generation. As with any new technology, there are drawbacks to the Diffusion-LMs that we constructed: (1) they have higher perplexity; (2) decoding is substantially slower; and (3) training converges more slowly. We believe that with more follow-up work and optimization, many of these issues can be addressed, and this approach will turn out to be a compelling way to do controllable generation at scale.

Acknowledgments and Disclosure of Funding

We thank Yang Song, Jason Eisner, Tianyi Zhang, Rohan Taori, Xuechen Li, Niladri Chatterji, and the members of the p-lambda group for early discussions and feedback. We gratefully acknowledge the support of a PECASE award. Xiang Lisa Li is supported by a Stanford Graduate Fellowship.

Appendix

Section Summary: The appendix discusses improvements to diffusion models for text, introducing a custom "sqrt" noise schedule that starts with higher noise levels to better handle the discrete nature of words, unlike standard schedules that underperform early on. It also outlines key hyperparameters, such as using 2000 diffusion steps, a BERT-base architecture, and specific settings for training on datasets like E2E and ROCStories, along with details for controllable text generation using methods like PPLM and FUDGE. Finally, it addresses the slow decoding process of diffusion models, which requires thousands of steps compared to faster autoregressive approaches, and explores speed-ups like step skipping that work for simpler tasks but degrade quality on more complex ones.

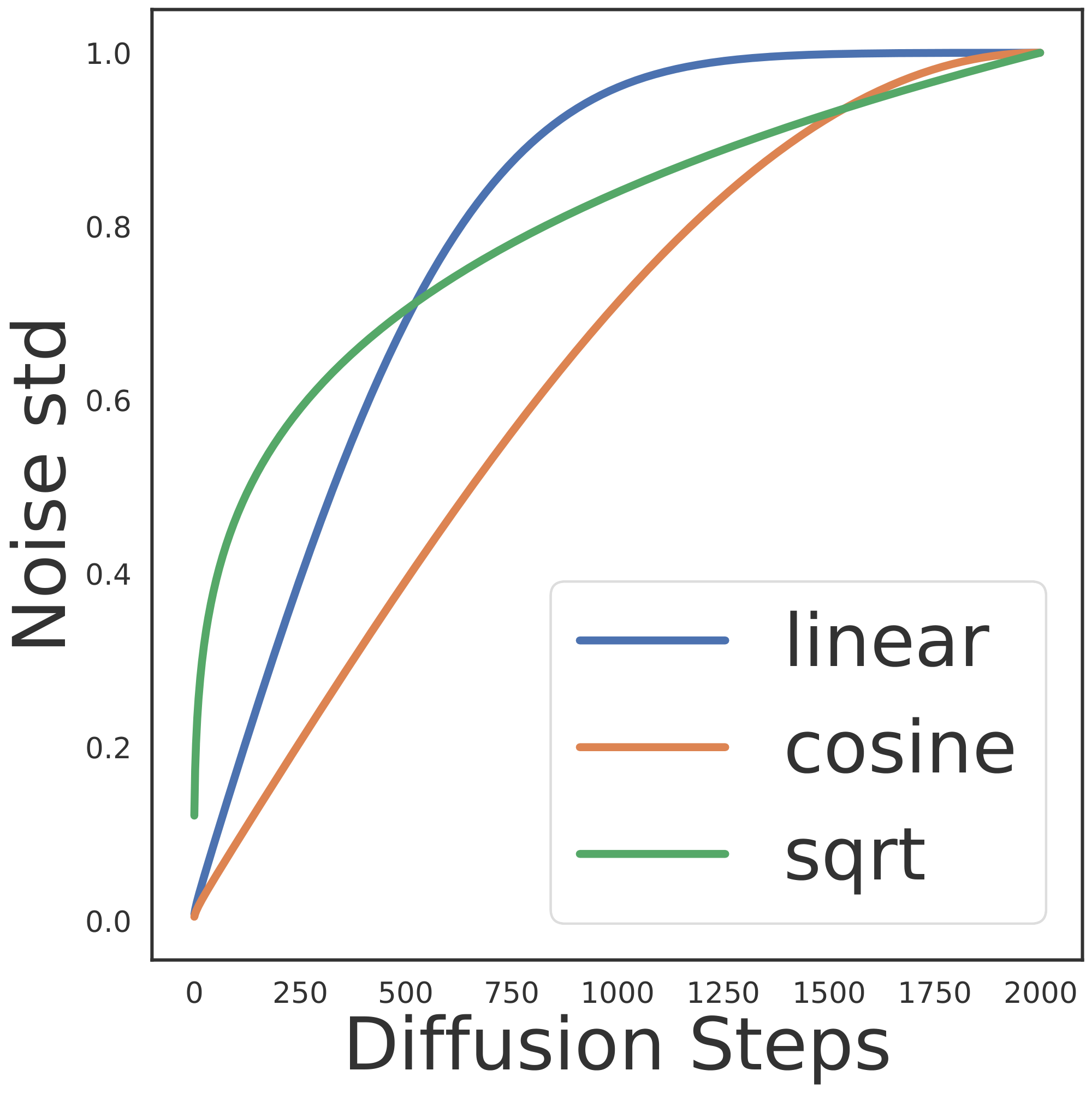

A. Diffusion Noise Schedule

Because a diffusion model shares parameters for all diffusion steps, the noise schedule (parametrized by $\bar{\alpha}_{1:T}$) is an important hyperparameter that determines how much weight we assign to each denoising problem. We find that standard noise schedules for continuous diffusions are not robust for text data. We hypothesize that the discrete nature of text and the rounding step make the model insensitive to noise near $t=0$. Concretely, adding a small amount of Gaussian noise to a word embedding is unlikely to change its nearest neighbor in the embedding space, making denoising an easy task near $t=0$.

To address this, we introduce a new sqrt noise schedule that is better suited for text, shown in Figure 5 and defined by $\bar{\alpha}_t = 1-\sqrt{t/T + s}$, where $s$ is a small constant that corresponds to the starting noise level[^11]. Compared to standard linear and cosine schedules, our sqrt schedule starts with a higher noise level and increases noise rapidly for the first 50 steps. It then slows down the injection of noise to avoid spending too many steps on the high-noise problems, which may be too difficult to solve well.

[^11]: We set $s=$ 1e-4, and $T=2000$, which sets the initial standard deviation to $0.1$.
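The schedule and the footnote's arithmetic can be checked with a short sketch. It assumes the usual convention that the noise standard deviation at step $t$ is $\sqrt{1-\bar{\alpha}_t}$, and it clamps $\bar{\alpha}_t$ at zero near $t=T$, which is a precaution added here rather than something stated in the text.

```python
import math

def sqrt_alpha_bar(t, T=2000, s=1e-4):
    """alpha_bar_t = 1 - sqrt(t / T + s), clamped at 0 (clamping is an assumption)."""
    return max(0.0, 1.0 - math.sqrt(t / T + s))

def noise_std(t, T=2000, s=1e-4):
    """Standard deviation of the noise at step t, assuming variance 1 - alpha_bar_t."""
    return math.sqrt(1.0 - sqrt_alpha_bar(t, T, s))

initial_std = noise_std(0)     # about 0.1 for s = 1e-4
std_after_50 = noise_std(50)   # noise grows quickly over the first 50 steps
```

At $t=0$ the variance is $\sqrt{s} = 0.01$, so the standard deviation is $0.1$, matching the value quoted in the footnote.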

B. Hyperparameters

Diffusion-LM hyperparameters.

The hyperparameters that are specific to Diffusion-LM include the number of diffusion steps, the architecture of the Diffusion-LM, the embedding dimension, and the noise schedule. We set the number of diffusion steps to $2000$, the architecture to BERT-base [43], and the sequence length to $64$. For the embedding dimension, we search over $d \in \{16, 64, 128, 256\}$ and select $d=16$ for the E2E dataset and $d=128$ for ROCStories. For the noise schedule, we design the sqrt schedule (Appendix A), which is more robust to different parametrizations and embedding dimensions, as shown in Appendix H. However, once we picked the $\mathbf{x}_{0}$-parametrization (Section 4.2), the advantage of the sqrt schedule is no longer salient.

Training hyperparameters.

We train Diffusion-LMs using the AdamW optimizer with a linearly decaying learning rate starting at 1e-4, dropout of 0.1, and a batch size of 64. The total number of training iterations is 200K for the E2E dataset and 800K for the ROCStories dataset. Our Diffusion-LMs are trained on a single GPU: NVIDIA RTX A5000, NVIDIA GeForce RTX 3090, or NVIDIA A100. It takes approximately 5 hours to train for 200K iterations on a single A100 GPU.

To stabilize training under the $\mathcal{L}_\text{vlb}^{\text{e2e}}$ objective, we find that we need to set gradient clipping to 1.0 and apply importance sampling to reweight each term in $\mathcal{L}_\text{vlb}$ [15]. Neither trick is necessary for the $\mathcal{L}_\text{simple}^{\text{e2e}}$ objective.

Controllable Generation hyperparameters.

To achieve controllable generation, we run gradient updates on the continuous latents of Diffusion-LM. We use the AdaGrad optimizer [35] to update the latent variables, and we tune the learning rate $\text{lr} \in \{0.05, 0.1, 0.15, 0.2\}$ and the trade-off parameter $\lambda \in \{0.1, 0.01, 0.001, 0.0005\}$. Different plug-and-play controllable generation approaches trade off between fluency and control by tuning different hyperparameters: PPLM uses the number of gradient updates per token, denoted as $k$, and we tune $k \in \{10, 30\}$. FUDGE uses the trade-off parameter $\lambda_{\text{FUDGE}}$, and we tune $\lambda_{\text{FUDGE}} \in \{16, 8, 4, 2\}$. Table 6 contains the selected hyperparameters for each control task. Both PPLM and FUDGE have additional hyperparameters, and we follow the instructions from the original papers to set them. For PPLM, we set the learning rate to 0.04 and the KL-scale to 0.01. For FUDGE, we set the precondition top-K to 200 and the post top-K to 10.

\begin{tabular}{lcc|cc|cc|cc|cc}

\toprule

& \multicolumn{2}{c|}{Semantic} & \multicolumn{2}{c|}{Parts-of-speech} & \multicolumn{2}{c|}{Syntax Tree} & \multicolumn{2}{c|}{Syntax Spans} & \multicolumn{2}{c}{Length} \\

& tradeoff & lr & tradeoff & lr & tradeoff & lr & tradeoff & lr & tradeoff & lr \\ \hline

PPLM & 30 & 0.04 & - & - & - & - & - & - & - & - \\

FUDGE & 8.0 & - &20.0 & - & 20.0 & - & 20.0 & - & 2.0 & - \\

Diffusion-LM & 0.01 & 0.1 & 0.0005 & 0.05 & 0.0005 & 0.2 & 0.1 & 0.15 & 0.01 & 0.1 \\

\bottomrule

\end{tabular}

C. Decoding Speed

Sampling from Diffusion-LMs requires iterating through the 2000 diffusion steps, yielding $O(2000)$ calls to the model $f_\theta$. In contrast, sampling from autoregressive LMs takes $O(n)$ calls, where $n$ is the sequence length. Therefore, decoding Diffusion-LM is slower than decoding autoregressive LMs in short and medium-length sequence regimes. Concretely, it takes around 1 minute to decode 50 sequences of length 64.

To speed up decoding, we tried skipping steps in the generative diffusion process, downsampling 2000 steps to 200 steps. Concretely, we set $T=200$ and downsample the noise schedule $\bar{\alpha}_{t} = \bar{\alpha}_{10t}$, which is equivalent to setting each unit transition as the transition $\mathbf{x}_{t} \rightarrow \mathbf{x}_{t+10}$. We decode Diffusion-LM using this new noise schedule and discretization. We find that this naive approach doesn't hurt sample quality for simple language modeling tasks like E2E, but it hurts sample quality for harder language modeling tasks like ROCStories.
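As an illustration of the downsampling, the sketch below builds the 2000-step sqrt schedule from Appendix A and keeps every tenth entry, so that step $t$ of the short schedule reuses the noise level of step $10t$ of the original. The helper names are hypothetical, and clamping $\bar{\alpha}_t$ at zero is an assumption made here.

```python
import math

def sqrt_schedule(T=2000, s=1e-4):
    """alpha_bar_t = 1 - sqrt(t / T + s) for t = 0..T, clamped at 0 (an assumption)."""
    return [max(0.0, 1.0 - math.sqrt(t / T + s)) for t in range(T + 1)]

def downsample_schedule(alpha_bar, factor=10):
    """New step t reuses the noise level of original step factor * t."""
    return alpha_bar[::factor]

full = sqrt_schedule()              # indices 0..2000
short = downsample_schedule(full)   # indices 0..200
```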

For plug-and-play controllable generation tasks, extant approaches are even slower. PPLM takes around 80 minutes to generate 50 samples (without batching), because it needs to run 30 gradient updates for each token. FUDGE takes 50 seconds to generate 50 samples (with batching), because it needs to call the lightweight classifier for each partial sequence, requiring 200 classifier calls for each token and yielding $200 \times$ sequence length calls. We can batch the classifier calls, but this sometimes limits batching across samples due to limited GPU memory. Our Diffusion-LM takes around 80 seconds to generate 50 samples (with batching). Our method downsamples the number of diffusion steps to 200 and takes 3 classifier calls per diffusion step, yielding 600 model calls in total.

D. Classifiers for Classifier-Guided Controls

Semantic Content. We train an autoregressive LM (GPT-2 small architecture) to predict the (field, value) pair conditioned on text. To parametrize $\log p(\mathbf{c} \mid \mathbf{x}_{t})$, we compute the logprob of "value" per token.

Parts-of-speech. The classifier is parametrized by a parts-of-speech tagger, which estimates the probability of the target POS sequence conditioned on the latent variables. This tagger uses a BERT-base architecture: the input is the concatenated word embeddings, and the output is a softmax distribution over all POS tags for each input word. $\log p(\mathbf{c} \mid \mathbf{x}_{t})$ is the sum of POS log-probs for each word in the sequence.

Syntax Tree. We train a Transformer-based constituency parser [40]. Our parser makes locally normalized predictions for each span, predicting either "not a constituent" or a label for the constituent (e.g., Noun Phrase). $\log p(\mathbf{c} \mid \mathbf{x}_{t})$ is the sum of log-probs for each labeled and non-constituency span in the sequence.

Syntax Span. We use the same parser trained for the syntax tree. $\log p(\mathbf{c} \mid \mathbf{x}_{t})$ is the log-probability that the target span is annotated with the target label.
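For the Parts-of-speech classifier, the quantity $\log p(\mathbf{c} \mid \mathbf{x}_{t})$ is a sum of per-word log-probabilities. The sketch below computes it from per-word tag logits; the logits would come from the tagger in practice, and here they are hypothetical hand-written values.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over a list of logits."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return [l - log_z for l in logits]

def pos_sequence_logprob(per_word_logits, target_tag_ids):
    """Sum over words of the log-probability assigned to the target tag."""
    return sum(log_softmax(logits)[tag]
               for logits, tag in zip(per_word_logits, target_tag_ids))

# Two words, two possible tags, uniform logits: each word contributes log(0.5).
score = pos_sequence_logprob([[0.0, 0.0], [0.0, 0.0]], [0, 1])
```

The Syntax Tree classifier has the same additive form, with spans taking the place of words.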

E. End-to-end Objective Derivations

For continuous diffusion models (Section 3.3), $\mathcal{L}_\text{simple}$ is derived from the canonical objective $\mathcal{L}_\text{vlb}$ by reweighting each term. The first $T$ terms in $\mathcal{L}_\text{vlb}$ are all KL divergences between two Gaussian distributions, which have a closed-form solution. Take the $t$-th term for example:

$ \mathop{\mathbb{E}}_{q(\mathbf{x}_{1:T} | \mathbf{x}_{0})} \left[\log \frac{q(\mathbf{x}_{t-1} | \mathbf{x}_{0}, \mathbf{x}_{t})} {p_\theta(\mathbf{x}_{t-1} | \mathbf{x}_{t}) }\right] = \mathop{\mathbb{E}}_{q(\mathbf{x}_{1:T} | \mathbf{x}_{0})} \left[\frac{1}{2\sigma_t^2} || \mu_\theta(\mathbf{x}_{t}, t) - \hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0}) ||^2\right] + C,\tag{3} $

where $C$ is a constant, $\hat{\mu}$ is the mean of the posterior $q(\mathbf{x}_{t-1} | \mathbf{x}_{0}, \mathbf{x}_{t})$, and $\mu_\theta$ is the mean of $p_\theta(\mathbf{x}_{t-1} \mid \mathbf{x}_{t})$ predicted by the diffusion model. Intuitively, this simplification matches the predicted mean of $\mathbf{x}_{t-1}$ to its true posterior mean. The simplification involves removing the constant $C$ and the scaling factor $\frac{1}{2\sigma_t^2}$, yielding one term in $\mathcal{L}_\text{simple}$: $\mathop{\mathbb{E}}_{ q(\mathbf{x}_{1:T} | \mathbf{x}_{0})} \left[|| \mu_\theta(\mathbf{x}_{t}, t) - \hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0}) ||^2\right]$.
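For reference, the closed form in question is the standard KL divergence between two isotropic Gaussians in $d$ dimensions. The symbols $\mu_1, \mu_2, s_1, s_2$ are introduced here only for illustration; in the setting above, $\mu_1$ plays the role of $\hat{\mu}$, $\mu_2$ the role of $\mu_\theta$, and $s_2$ the role of $\sigma_t$.

$ \mathrm{KL}\left(\mathcal{N}(\mu_1, s_1^2 I) \,\|\, \mathcal{N}(\mu_2, s_2^2 I)\right) = \frac{1}{2 s_2^2} || \mu_1 - \mu_2 ||^2 + \underbrace{d \log \frac{s_2}{s_1} + \frac{d\, s_1^2}{2 s_2^2} - \frac{d}{2}}_{\text{independent of } \mu_1, \mu_2} $

The first term is the squared-error term with its $\frac{1}{2 s_2^2}$ scaling, and the remaining terms involve only the variances, so they can be absorbed into a constant provided the variances do not depend on $\theta$.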

To apply continuous diffusion to model discrete text, we design Diffusion-LM (Section 4.1) and propose a new end-to-end training objective (Equation (2)) that learns the diffusion model and the embedding parameters jointly. $\mathcal{L}_\text{vlb}^{\text{e2e}}$ can be written out as

$ \begin{align*} \mathcal{L}^\text{e2e}_{\text{vlb}} (\mathbf{w}) &= \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0} | \mathbf{w})}\left[\mathcal{L}_{\text{vlb}} (\mathbf{x}_{0}) + \log q_\phi(\mathbf{x}_{0} | \mathbf{w}) - \log p_\theta(\mathbf{w} | \mathbf{x}_{0})\right] \\ &= \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} \left[\underbrace{\log \frac{q(\mathbf{x}_{T} | \mathbf{x}_{0})}{p_\theta(\mathbf{x}_{T})}}_{L_T} + \sum_{t=2}^T \underbrace{\log \frac{q(\mathbf{x}_{t-1} | \mathbf{x}_{0}, \mathbf{x}_{t})} {p_\theta(\mathbf{x}_{t-1} | \mathbf{x}_{t}) }}_{L_{t-1}} + \underbrace{\log \frac{q_\phi(\mathbf{x}_{0} | \mathbf{w})}{p_\theta(\mathbf{x}_{0} | \mathbf{x}_{1})}}_{L_{0}} - \underbrace{\log p_\theta(\mathbf{w} | \mathbf{x}_{0})}_{L_\text{round}}\right] \end{align*} $

We apply the same simplification which transforms $\mathcal{L}_\text{vlb} \rightarrow \mathcal{L}_\text{simple}$ to transform $\mathcal{L}^\text{e2e}_{\text{vlb}} \rightarrow \mathcal{L}^\text{e2e}_\text{simple}$:

$ \begin{align*} \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} [L_T] & \rightarrow \mathbb{E}[||\mathop{\mathbb{E}}_{\mathbf{x}_{T}\sim q}[\mathbf{x}_{T}| \mathbf{x}_{0}] - 0 ||^2] = \mathbb{E}[||\hat{\mu}(\mathbf{x}_{T}; \mathbf{x}_{0}) ||^2] \\ \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} [L_{t-1}] & \rightarrow \mathbb{E}[|| \mathop{\mathbb{E}}_{\mathbf{x}_{t-1}\sim q}[\mathbf{x}_{t-1}| \mathbf{x}_{0}, \mathbf{x}_{t}] - \mathop{\mathbb{E}}_{\mathbf{x}_{t-1} \sim p_\theta} [\mathbf{x}_{t-1} | \mathbf{x}_{t}] ||^2] =\mathbb{E}[||\hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0})-\mu_\theta(\mathbf{x}_{t}, t) ||^2] \\ \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} [L_{0}] & \rightarrow \mathbb{E}[|| \mathop{\mathbb{E}}_{\mathbf{x}_{0}\sim q_\phi} [\mathbf{x}_{0} \mid \mathbf{w}] - \mathop{\mathbb{E}}_{\mathbf{x}_{0} \sim p_\theta} [\mathbf{x}_{0} \mid \mathbf{x}_{1}] ||^2] = \mathbb{E}[|| \textsc{Emb}(\mathbf{w})-\mu_\theta(\mathbf{x}_{1}, 1) ||^2] \end{align*} $

It's worth noting that the first term is constant if the noise schedule satisfies $\bar{\alpha}_T = 0$, which guarantees that $\mathbf{x}_{T}$ is pure Gaussian noise. In contrast, if the noise schedule does not go all the way to pure Gaussian noise at $\mathbf{x}_{T}$, we need to include this regularization term to prevent the embeddings from learning overly large norms. Embeddings with large norms are a degenerate solution: they make it impossible to sample from $p(\mathbf{x}_{T})$ accurately, even though they make all the other denoising transitions easily predictable.
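To see why the first term behaves this way, recall that under the standard Gaussian forward process (assumed here, in line with the $\bar{\alpha}$ notation used in this appendix) the marginal of $\mathbf{x}_{T}$ given $\mathbf{x}_{0}$ has a mean that is a scaled copy of $\mathbf{x}_{0}$:

$ q(\mathbf{x}_{T} \mid \mathbf{x}_{0}) = \mathcal{N}\left(\mathbf{x}_{T}; \sqrt{\bar{\alpha}_T}\, \mathbf{x}_{0}, (1 - \bar{\alpha}_T) I\right) \quad \Longrightarrow \quad ||\hat{\mu}(\mathbf{x}_{T}; \mathbf{x}_{0})||^2 = \bar{\alpha}_T\, ||\mathbf{x}_{0}||^2 $

When $\bar{\alpha}_T = 0$ the term is zero for every embedding; otherwise it penalizes the squared norm of $\mathbf{x}_{0}$ in proportion to $\bar{\alpha}_T$.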

Combining these terms yields $\mathcal{L}^\text{e2e}_\text{simple}$:

$ \begin{align*} \mathcal{L}^\text{e2e}_\text{simple} (\mathbf{w}) & = \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} \left[||\hat{\mu}(\mathbf{x}_{T}; \mathbf{x}_{0})||^2 + \sum_{t=2}^T [||\hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0})-\mu_\theta(\mathbf{x}_{t}, t) ||^2] \right] \\ & \quad \quad \quad \; + \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:1} | \mathbf{w})} \left[|| \textsc{Emb}(\mathbf{w}) - \mu_\theta(\mathbf{x}_{1}, 1) ||^2 - \log p_\theta(\mathbf{w} | \mathbf{x}_{0})\right]. \end{align*} $

Intuitively, we learn a Transformer model that takes as input $(\mathbf{x}_{t}, t) \in (\mathbb{R}^{nd}, \mathbb{R})$, and the goal is to predict the distribution of $\mathbf{x}_{t-1} \in \mathbb{R}^{nd}$. It's worth noting that this Transformer model is shared across all the diffusion steps $t = 1\dots T$. As we demonstrated in the derivation of $\mathcal{L}^\text{e2e}_\text{simple}$, the most natural choice is to directly parametrize the neural network to predict the mean of $\mathbf{x}_{t-1} \mid \mathbf{x}_{t}$; we call this the $\mu_\theta$-parametrization.

There are other parametrizations that are equivalent to the $\mu_\theta$-parametrization up to a scaling constant. For example, in Section 4.2, we can train the Transformer model to directly predict $\mathbf{x}_{0}$ via $f_\theta(\mathbf{x}_{t}, t)$, and use the tractable Gaussian posterior $q(\mathbf{x}_{t-1} \mid \mathbf{x}_{0}, \mathbf{x}_{t})$ to compute the mean of $\mathbf{x}_{t-1}$, which has a closed form solution, conditioned on predicted $\mathbf{x}_{0}$ and observed $\mathbf{x}_{t}$: $ \frac{\sqrt{\bar{\alpha}_{t-1}} \beta_t}{1- \bar{\alpha}_t}\mathbf{x}_{0} + \frac{\sqrt{\alpha_t} (1- \bar{\alpha}_{t-1})}{1- \bar{\alpha}_t} \mathbf{x}_{t}$. The squared-error term then becomes:

$ \begin{align*} & ||\hat{\mu}(\mathbf{x}_{t}, \mathbf{x}_{0})-\mu_\theta(\mathbf{x}_{t}, t) ||^2 \\ = & \left|\left|\left(\frac{\sqrt{\bar{\alpha}_{t-1}} \beta_t}{1- \bar{\alpha}_t}\mathbf{x}_{0} + \frac{\sqrt{\alpha_t} (1- \bar{\alpha}_{t-1})}{1- \bar{\alpha}_t} \mathbf{x}_{t}\right) - \left(\frac{\sqrt{\bar{\alpha}_{t-1}} \beta_t}{1- \bar{\alpha}_t}f_\theta(\mathbf{x}_{t}, t) + \frac{\sqrt{\alpha_t} (1- \bar{\alpha}_{t-1})}{1- \bar{\alpha}_t} \mathbf{x}_{t}\right)\right|\right|^2 \\ = & \left|\left|\frac{\sqrt{\bar{\alpha}_{t-1}} \beta_t}{1- \bar{\alpha}_t}(\mathbf{x}_{0} - f_\theta(\mathbf{x}_{t}, t))\right|\right|^2 \\ \propto & ||\mathbf{x}_{0} - f_\theta(\mathbf{x}_{t}, t)||^2 \end{align*} $
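The proportionality can be checked numerically. The sketch below plugs made-up schedule values and random vectors into the posterior-mean formula and compares both sides; it assumes the usual convention $\beta_t = 1 - \alpha_t$ and $\bar{\alpha}_t = \alpha_t \bar{\alpha}_{t-1}$, and the random vector `x0_pred` merely stands in for $f_\theta(\mathbf{x}_{t}, t)$.

```python
import math
import random

def posterior_mean(x0, xt, alpha_t, abar_t, abar_prev):
    """Mean of q(x_{t-1} | x_0, x_t) and the coefficient multiplying x_0."""
    beta_t = 1.0 - alpha_t  # assumed convention: beta_t = 1 - alpha_t
    c0 = math.sqrt(abar_prev) * beta_t / (1.0 - abar_t)
    ct = math.sqrt(alpha_t) * (1.0 - abar_prev) / (1.0 - abar_t)
    return [c0 * a + ct * b for a, b in zip(x0, xt)], c0

def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

rng = random.Random(0)
alpha_t, abar_prev = 0.98, 0.60      # made-up schedule values
abar_t = alpha_t * abar_prev         # cumulative product up to step t
x0 = [rng.gauss(0, 1) for _ in range(8)]
xt = [rng.gauss(0, 1) for _ in range(8)]
x0_pred = [rng.gauss(0, 1) for _ in range(8)]  # stand-in for f_theta(x_t, t)

mu_hat, c0 = posterior_mean(x0, xt, alpha_t, abar_t, abar_prev)
mu_theta, _ = posterior_mean(x0_pred, xt, alpha_t, abar_t, abar_prev)

lhs = sq_dist(mu_hat, mu_theta)         # mean-matching error
rhs = c0 ** 2 * sq_dist(x0, x0_pred)    # scaled x_0-prediction error
```

The $\mathbf{x}_{t}$ contributions cancel exactly, so `lhs` and `rhs` agree up to floating-point error, and the scaling factor depends only on the timestep.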

These two parametrizations differ by a constant scaling, and we apply the $\mathbf{x}_{0}$-parametrization to all terms in $\mathcal{L}^\text{e2e}_\text{simple}$ to reduce rounding errors as discussed in Section 4.2:

$ \begin{align*} \mathcal{L}^\text{e2e}_{\mathbf{x}_{0}\text{-simple}} (\mathbf{w}) & = \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:T} | \mathbf{w})} \left[||\hat{\mu}(\mathbf{x}_{T}; \mathbf{x}_{0})||^2 + \sum_{t=2}^T [||\mathbf{x}_{0}- f_\theta(\mathbf{x}_{t}, t) ||^2] \right] \\ & \quad \quad \quad \; + \mathop{\mathbb{E}}_{q_\phi(\mathbf{x}_{0:1} | \mathbf{w})} \left[|| \textsc{Emb}(\mathbf{w}) - f_\theta(\mathbf{x}_{1}, 1) ||^2 - \log p_\theta(\mathbf{w} | \mathbf{x}_{0})\right]. \end{align*} $
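A one-sample Monte Carlo estimate of this objective might look like the sketch below. Several simplifications are assumptions made for brevity and are not claimed to match the released code: the "sequence" is a single token, the rounding distribution uses softmax over dot-product logits, one timestep is drawn uniformly and scaled by $T$ to stand in for the sum, and `f_theta` is an identity placeholder rather than a Transformer.

```python
import math
import random

def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

def rounding_nll(x0, token, emb_table):
    """-log p(w | x_0), with softmax over dot-product logits (assumed form)."""
    logits = [sum(a * b for a, b in zip(x0, e)) for e in emb_table]
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[token]

def e2e_x0_simple_sample(token, emb_table, abar, f_theta, sigma0, rng):
    """One-sample estimate of the x_0-parametrized loss for one token.

    abar[t] is the cumulative signal level at step t, for t = 0..T, with
    abar[0] = 1. A single step t is drawn and its term is scaled by T.
    """
    T = len(abar) - 1
    emb = emb_table[token]
    x0 = [e + sigma0 * rng.gauss(0, 1) for e in emb]      # x_0 ~ q_phi(x_0 | w)
    t = rng.randint(1, T)                                 # inclusive on both ends
    xt = [math.sqrt(abar[t]) * a + math.sqrt(1.0 - abar[t]) * rng.gauss(0, 1)
          for a in x0]                                    # x_t ~ q(x_t | x_0)
    target = emb if t == 1 else x0                        # the t = 1 term uses Emb(w)
    denoise = T * sq_dist(target, f_theta(xt, t))
    prior = abar[T] * sq_dist(x0, [0.0] * len(x0))        # squared norm of mean of x_T
    return prior + denoise + rounding_nll(x0, token, emb_table)

rng = random.Random(0)
emb_table = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(5)]  # 5 toy tokens, d = 4
T = 10
abar = [1.0 - math.sqrt(t / T) for t in range(T + 1)]  # toy schedule with abar[T] = 0
loss = e2e_x0_simple_sample(2, emb_table, abar, lambda xt, t: xt, 0.1, rng)
```

All three pieces are non-negative, so the estimate is non-negative as well; with the toy schedule the prior term is exactly zero because `abar[T]` is zero.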

To generate samples from a Diffusion-LM with $\mathbf{x}_{0}$-parametrization, at each diffusion step, the model estimates $\mathbf{x}_{0}$ via $f_\theta (\mathbf{x}_{t}, t)$, and then we sample $\mathbf{x}_{t-1}$ from $q(\mathbf{x}_{t-1} \mid f_\theta (\mathbf{x}_{t}, t), \mathbf{x}_{t})$, which is fed as input to the next diffusion step.
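The sampling procedure just described can be sketched as a short loop. This is a minimal illustration under stated assumptions: the posterior variance $\frac{1-\bar{\alpha}_{t-1}}{1-\bar{\alpha}_t}\beta_t$ is the standard Gaussian-posterior value and is not quoted in this appendix, the schedule is a toy one, and `f_theta` is any callable returning an estimate of $\mathbf{x}_{0}$.

```python
import math
import random

def sample_x0_param(f_theta, abar, dim, rng):
    """Ancestral sampling with x_0-parametrization (sketch).

    abar[t] is the cumulative signal level at step t, for t = 0..T, with
    abar[0] = 1. f_theta(x_t, t) returns the model's estimate of x_0.
    """
    T = len(abar) - 1
    x = [rng.gauss(0, 1) for _ in range(dim)]  # x_T ~ N(0, I)
    for t in range(T, 0, -1):
        x0_hat = f_theta(x, t)
        alpha_t = abar[t] / abar[t - 1]
        beta_t = 1.0 - alpha_t
        # Coefficients of the posterior mean of q(x_{t-1} | x_0, x_t).
        c0 = math.sqrt(abar[t - 1]) * beta_t / (1.0 - abar[t])
        ct = math.sqrt(alpha_t) * (1.0 - abar[t - 1]) / (1.0 - abar[t])
        # Standard posterior variance (assumed, see the lead-in).
        var = (1.0 - abar[t - 1]) / (1.0 - abar[t]) * beta_t
        x = [c0 * a + ct * b + math.sqrt(var) * rng.gauss(0, 1)
             for a, b in zip(x0_hat, x)]
    return x

T = 20
abar = [1.0 - math.sqrt(t / T) for t in range(T + 1)]  # toy schedule
target = [0.5, -1.0, 2.0]
# Placeholder model that always predicts the same x_0.
sample = sample_x0_param(lambda x, t: target, abar, len(target), random.Random(0))
```

At $t = 1$ the posterior variance is zero and the coefficient on $\mathbf{x}_{t}$ vanishes, so the final output equals the model's last estimate of $\mathbf{x}_{0}$; with the constant placeholder model, `sample` therefore matches `target`.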

F. Log-Likelihood Models and Results

To investigate Diffusion-LM's log-likelihood performance, we make several departures from the training procedure of Section 4. Ultimately the log-likelihood improvements described in this section did not translate into better generation quality in our experiments and therefore we focus on the original method in the rest of the paper. Our likelihood models are trained as follows:

- Instead of training a diffusion model on sequences of low-dimensional token embeddings, we train a model directly on sequences of one-hot token vectors.

- Following the setup of [44], we train a continuous-time diffusion model against the log-likelihood bound and learn the noise schedule simultaneously with the rest of the model to minimize the loss variance.

- Because our model predicts sequences of one-hot vectors, we use a softmax nonlinearity at its output and replace all squared-error terms in the loss function with cross-entropy terms. This choice of surrogate loss led to better optimization, even though we evaluate against the original loss with squared-error terms.

- The model applies the following transformation to its inputs before any Transformer layers: $x := \mathrm{softmax}(\alpha(t) x + \beta(t))$ where $\alpha(t) \in \mathbb{R}$ and $\beta(t) \in \mathbb{R}^v$ are learned functions of the diffusion timestep $t$ parameterized by MLPs ($v$ is the vocabulary size).

- At inference time, we omit the rounding procedure in Section 4.2.
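The input transformation in the list above can be illustrated for a single position as follows. In the paper $\alpha(t)$ and $\beta(t)$ are produced by learned MLPs; in this sketch they are simply passed in as numbers, and the function name `input_transform` is hypothetical.

```python
import math

def input_transform(x, alpha_t, beta_t):
    """softmax(alpha(t) * x + beta(t)) for one position.

    x:       noisy vector of length v (vocabulary size)
    alpha_t: scalar value of alpha(t)
    beta_t:  length-v vector value of beta(t)
    """
    z = [alpha_t * xi + bi for xi, bi in zip(x, beta_t)]
    m = max(z)  # subtract the max for numerical stability
    exps = [math.exp(zi - m) for zi in z]
    s = sum(exps)
    return [e / s for e in exps]

# Toy call with a 3-word vocabulary and made-up parameter values.
probs = input_transform([0.9, -0.2, 0.1], alpha_t=3.0, beta_t=[0.0, 0.0, 0.0])
```

The output lies on the probability simplex, so the Transformer layers see a normalized distribution over the vocabulary instead of a raw noisy one-hot vector.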

For exact model architecture and training hyperparameter details, please refer to our released code.

We train these diffusion models, as well as baseline autoregressive Transformers, on E2E and ROCStories and report log-likelihoods in Table 7. We train two sizes of Transformers: "small" models with roughly 100M parameters and "medium" models with roughly 300M parameters. Both E2E and ROCStories are small enough datasets that all of our models reach their minimum test loss early in training (and overfit after that). To additionally compare model performance in a large-dataset regime, we also present "ROCStories (+GPT-J)" experiments in which we generate 8M examples of synthetic ROCStories training data by finetuning GPT-J ([45]) on the original ROCStories data, pretrain our models on the synthetic dataset, and then finetune and evaluate them on the original ROCStories data.

:Table 7: Log-likelihood results (nats per token)

| Dataset | Small AR | Small Diffusion | Medium Diffusion |
|---|---|---|---|
| E2E | 1.77 | 2.28 | - |
| ROCStories | 3.05 | 3.88 | - |
| ROCStories (+GPT-J) | 2.41 | 3.59 | 3.10 |

G. Qualitative Examples

We show randomly sampled outputs of Diffusion-LM both for unconditional generation and for the $5$ control tasks. Table 8 shows the unconditional generation results. Table 9, Table 10, Table 12, and Table 3 show the qualitative samples from span control, POS control, semantic content control, and syntax tree control, respectively. Table 11 shows the results of length control.

H. Additional Ablation Studies

In addition to the 2 ablation studies in Section 7.4, we provide more ablation results in Figure 6 about architecture choices and noise schedule.

Learned vs. Random Embeddings (Section 4.1). Learned embeddings outperform random embeddings on both ROCStories and the E2E dataset by xx percent and xx percent respectively, as shown in the first row of Figure 6.

Noise Schedule (Appendix A). We compare the sqrt schedule with the cosine [15] and linear [9] schedules proposed for image modeling. The middle row of Figure 6 demonstrates that the sqrt schedule attains consistently good and stable performance across all dimensions and parametrization choices. While the sqrt schedule is less important with $\mathbf{x}_{0}$-parametrization, we see that it provides a substantially more robust noise schedule under alternative parametrizations such as $\epsilon$.

Transformer vs. U-Net. The U-Net architecture in [9] utilizes 2D-convolutional layers. We imitate that architecture, except that we change the 2D convolutions to 1D convolutions, which are suitable for text data. Figure 6 (last row) shows that the Transformer architecture outperforms U-Net.

I. Societal Impacts

On the one hand, having strong controllability in language models will help with mitigating toxicity, making the language models more reliable to deploy. Additionally, carefully controlling the model to be more truthful could reduce the inaccurate information it generates. On the other hand, however, one could also imagine more powerful targeted disinformation (e.g., narrative wedging) derived from the fine-grained controllability.

To this end, it might be worth considering generation methods that can watermark the generated outputs without affecting their fluency. This type of watermarking could also be framed as a controllable generation problem, with distinguishability and fluency as the constraints.

\begin{tabular}{lp{13cm}}

\toprule

\parbox[t]{2mm}{\multirow{5}{*}{\rotatebox[origin=c]{90}{ROCStories+Aug}}} & Matt was at the store . He was looking at a new toothbrush . He found the perfect one . When he got home, he bought it . It was bright and he loved it . \\\\

& I and my friend were hungry . We were looking for some to eat . We went to the grocery store . We bought some snacks . We decided to pick up some snacks . \\\\

& I was at the store . I had no money to buy milk . I decided to use the restroom . I went to the register . I was late to work . \\\\

& The man wanted to lose weight . He did n't know how to do weight . He decided to start walking . He ate healthy and ate less . He lost ten pounds in three months . \\\\

& I went to the aquarium . I wanted to feed something . I ordered a fish . When it arrived I had to find something . I was disappointed .\\

\toprule

\toprule

\parbox[t]{2mm}{\multirow{5}{*}{\rotatebox[origin=c]{90}{ROCStories}}} & Tom was planning a trip to California . He had fun in the new apartment . He was driving, until it began to rain . Unfortunately, he was soaked . Tom stayed in the rain at the beach . \\\\

& Carrie wanted a new dress . She did not have enough money . She went to the bank to get one, but saw the missed . Finally, she decided to call her mom . She could not wait to see her new dress . \\\\

& Tina went to her first football game . She was excited about it . When she got into the car she realized she forgot her hand . She ended up getting too late . Tina had to start crying . \\\\

& Michael was at the park . Suddenly he found a stray cat . He decided to keep the cat . He went to his parents and demanded a leg . His parents gave him medicine to get it safe . \\\\

& Tim was eating out with friends . They were out of service . Tim decided to have a pizza sandwich . Tim searched for several hours . He was able to find it within minutes . \\

\toprule

\toprule

\parbox[t]{2mm}{\multirow{5}{*}{\rotatebox[origin=l]{90}{E2E}}}

& The Waterman is an expensive pub that serves Japanese food . It is located in Riverside and has a low customer rating . \\\\

& A high priced pub in the city centre is The Olive Grove . It is a family friendly pub serving French food . \\\\

& The Rice Boat offers moderate priced Chinese food with a customer rating of 3 out of 5 . It is near Express by Holiday Inn . \\\\

& There is a fast food restaurant, The Phoenix, in the city centre . It has a price range of more than £ 30 and the customer ratings are low .\\\\