Stable Video Diffusion: Scaling Latent Video Diffusion Models to Large Datasets

Andreas Blattmann${}^*$, Tim Dockhorn${}^*$, Sumith Kulal${}^*$, Daniel Mendelevitch,

Maciej Kilian, Dominik Lorenz, Yam Levi, Zion English, Vikram Voleti,

Adam Letts, Varun Jampani, Robin Rombach

Stability AI

${}^*$ Equal contributions.

Abstract

We present Stable Video Diffusion — a latent video diffusion model for high-resolution, state-of-the-art text-to-video and image-to-video generation. Recently, latent diffusion models trained for 2D image synthesis have been turned into generative video models by inserting temporal layers and finetuning them on small, high-quality video datasets. However, training methods in the literature vary widely, and the field has yet to agree on a unified strategy for curating video data. In this paper, we identify and evaluate three different stages for successful training of video LDMs: text-to-image pretraining, video pretraining, and high-quality video finetuning. Furthermore, we demonstrate the necessity of a well-curated pretraining dataset for generating high-quality videos and present a systematic curation process to train a strong base model, including captioning and filtering strategies. We then explore the impact of finetuning our base model on high-quality data and train a text-to-video model that is competitive with closed-source video generation. We also show that our base model provides a powerful motion representation for downstream tasks such as image-to-video generation and adaptability to camera motion-specific LoRA modules. Finally, we demonstrate that our model provides a strong multi-view 3D-prior and can serve as a base to finetune a multi-view diffusion model that jointly generates multiple views of objects in a feedforward fashion, outperforming image-based methods at a fraction of their compute budget. We release code and model weights at https://github.com/Stability-AI/generative-models.

Executive Summary: Generating high-quality videos from text descriptions or single images is a growing need in media, entertainment, and design, where current AI tools often produce inconsistent or low-fidelity results due to poor handling of motion and data quality. Recent advances in image generation using diffusion models have inspired video extensions, but these lack standardized approaches to training data curation, leading to suboptimal performance on large-scale datasets. This matters now as video AI applications expand, demanding reliable, efficient models to compete with closed-source systems while enabling open research.

This document evaluates effective training strategies for latent video diffusion models, emphasizing data curation's role, and demonstrates scalable methods to build state-of-the-art text-to-video and image-to-video generators. It also explores the models' potential as priors for related tasks like multi-view 3D synthesis.

The authors developed a three-stage training process starting with pretraining on existing image models for strong visual foundations, followed by video pretraining on a large, curated dataset of short clips, and ending with finetuning on a small set of high-quality videos at higher resolutions. They curated an initial 580 million clip dataset—equivalent to 212 years of footage—into a refined 152 million clip version by filtering for motion (using optical flow to remove static scenes), aesthetics (via CLIP scores), text alignment (via synthetic captions and similarities), and minimal on-screen text (via OCR). This involved automated processing like cut detection and multi-method captioning from sources including CoCa and VideoBLIP models. Training used a fixed architecture based on Stable Diffusion 2.1 with added temporal layers, evaluated via human preference studies on 64 prompts and metrics like Fréchet Video Distance (FVD) on benchmarks such as UCF-101, covering data from recent years with samples in the millions to hundreds of millions.

Key findings show that curated pretraining data boosts model quality by 20-30% in human preferences for visual fidelity and prompt adherence compared to uncurated sets, with gains persisting after finetuning—even on 50 million samples, curated versions outperformed larger uncurated ones by similar margins. The resulting base model achieved an FVD of 242 on UCF-101 zero-shot text-to-video, about 30-50% better than prior open models like PYOCO (355) or Video LDM (551). Finetuned text-to-video and image-to-video models (generating 14-25 frames at 576x1024 resolution) were preferred over closed-source leaders like GEN-2 and Pika Labs in human evaluations for quality and consistency. Additionally, adapting the model for multi-view generation yielded top scores on metrics like PSNR (16.83 vs. 14.51-15.29 for Zero123XL and SyncDreamer) and LPIPS (0.14 vs. 0.18-0.20), using just 16 hours of training versus days for competitors.

These results mean video diffusion models can now generate realistic, motion-coherent short clips efficiently, cutting risks of artifacts from noisy data and enabling downstream applications like controlled camera motion via lightweight LoRA adapters or frame interpolation for smoother playback. Unlike expectations from image-only priors, the video-trained base provides a robust motion and 3D understanding, outperforming image-based methods in consistency at a fraction of compute—potentially accelerating 3D content creation while reducing training costs by 3-4x through curation. This shifts focus from architecture tweaks to data quality, impacting performance in policy-sensitive areas like misinformation by improving control and reliability.

Adopt the three-stage training with systematic curation (filtering 25-50% of data per criterion) for new video models to achieve competitive results; finetune the released base model for tasks like image-to-video or multi-view synthesis, prioritizing curated high-quality subsets for efficiency. For options, full finetuning offers broad motion control but higher compute, while LoRAs enable task-specific adaptations with minimal resources—trade-offs favor LoRAs for rapid prototyping. Next, pilot longer video cascades or distillation for faster inference, and gather more diverse real-world data to address underrepresented motions.

Limitations include focus on short clips (up to 25 frames, about 1 second), occasional low generated motion, and high inference demands (slow sampling, high VRAM), assuming Gaussian noise and no real-time constraints. Confidence is high in core findings, backed by consistent human preferences (Elo rankings) and metrics across scales, though caution applies to untested long-form or extreme-motion scenarios where more validation data would help.

1. Introduction

Section Summary: Recent advances in AI image generation have spurred progress in creating videos using similar techniques, but researchers have overlooked how choosing and preparing training data affects results, despite its proven importance in image models. This study explores data curation's role by fixing model designs and testing strategies like pretraining on vast low-resolution video datasets followed by fine-tuning on smaller, high-quality ones, revealing that careful data selection greatly boosts performance even after further refinements. Using this approach on a massive 600-million-video dataset, the authors train top-performing models for tasks like generating videos from text or images, enabling consistent multi-view object creation and precise motion control, which also aids 3D modeling challenges.

Driven by advances in generative image modeling with diffusion models ([1, 2, 3, 4]), there has been significant recent progress on generative video models both in research ([5, 6, 7, 8]) and real-world applications ([9, 10]) Broadly, these models are either trained from scratch ([11]) or finetuned (partially or fully) from pretrained image models with additional temporal layers inserted ([8, 12, 13, 6]). Training is often carried out on a mix of image and video datasets ([11]).

While research around improvements in video modeling has primarily focused on the exact arrangement of the spatial and temporal layers [13, 6, 11, 8], none of the aforementioned works investigate the influence of data selection. This is surprising, especially since the significant impact of the training data distribution on generative models is undisputed ([14, 15]). Moreover, for generative image modeling, it is known that pretraining on a large and diverse dataset and finetuning on a smaller but higher quality dataset significantly improves the performance [4, 14]. Since many previous approaches to video modeling have successfully drawn on techniques from the image domain [8, 5, 13], it is noteworthy that the effect of data and training strategies, i.e., the separation of video pretraining at lower resolutions and high-quality finetuning, has yet to be studied. This work directly addresses these previously uncharted territories.

We believe that the significant contribution of data selection is heavily underrepresented in today's video research landscape despite being well-recognized among practitioners when training video models at scale. Thus, in contrast to previous works, we draw on simple latent video diffusion baselines ([8]) for which we fix architecture and training scheme and assess the effect of data curation. To this end, we first identify three different video training stages that we find crucial for good performance: text-to-image pretraining, video pretraining on a large dataset at low resolution, and high-resolution video finetuning on a much smaller dataset with higher-quality videos. Borrowing from large-scale image model training ([16, 17, 14]), we introduce a systematic approach to curate video data at scale and present an empirical study on the effect of data curation during video pretraining. Our main findings imply that pretraining on well-curated datasets leads to significant performance improvements that persist after high-quality finetuning.

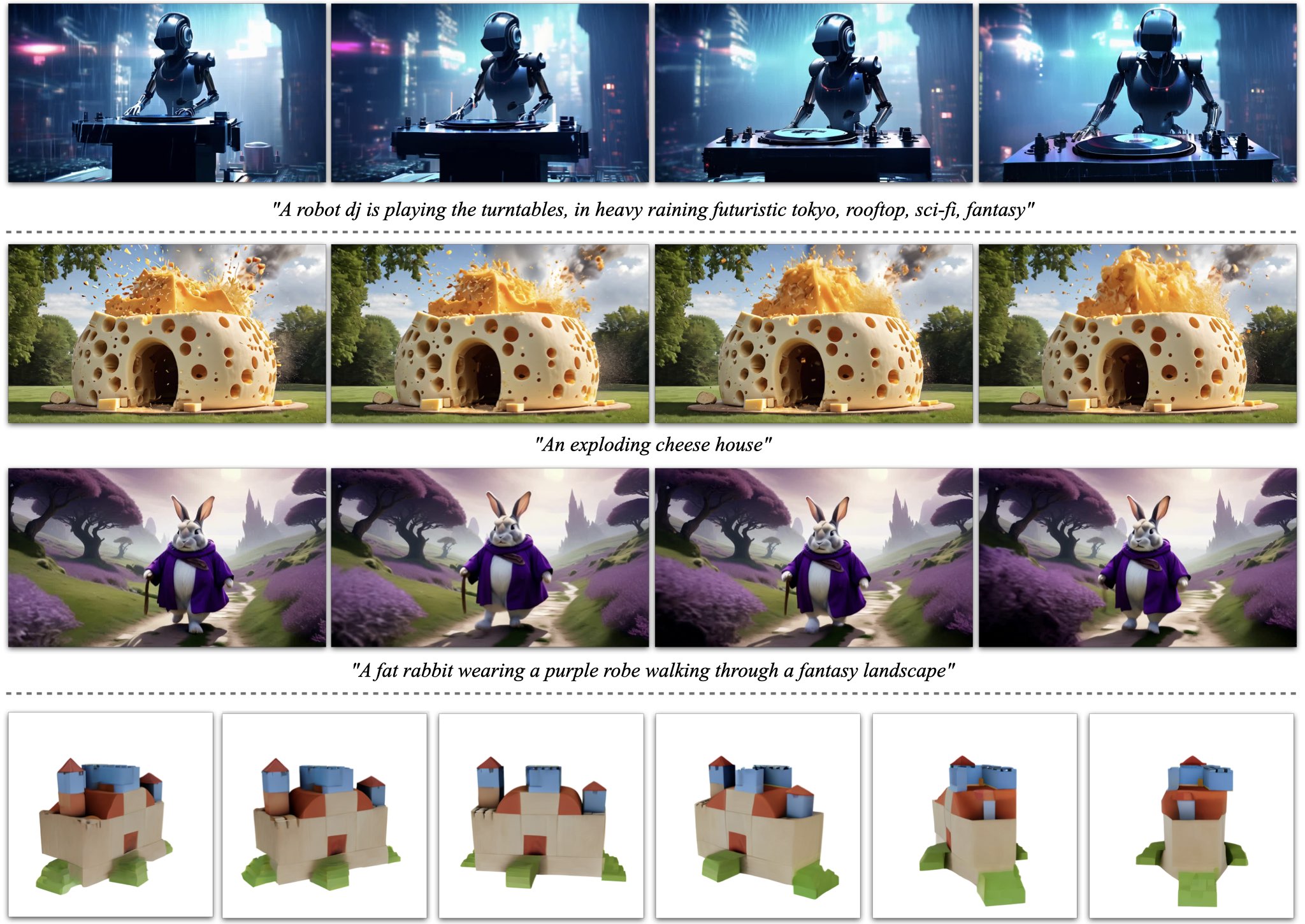

A general motion and multi-view prior

Drawing on these findings, we apply our proposed curation scheme to a large video dataset comprising roughly 600 million samples and train a strong pretrained text-to-video base model, which provides a general motion representation. We exploit this and finetune the base model on a smaller, high-quality dataset for high-resolution downstream tasks such as text-to-video (see Figure 1, top row) and image-to-video, where we predict a sequence of frames from a single conditioning image (see Figure 1, mid rows). Human preference studies reveal that the resulting model outperforms state-of-the-art image-to-video models.

Furthermore, we also demonstrate that our model provides a strong multi-view prior and can serve as a base to finetune a multi-view diffusion model that generates multiple consistent views of an object in a feedforward manner and outperforms specialized novel view synthesis methods such as Zero123XL ([18, 19]) and SyncDreamer ([20]). Finally, we demonstrate that our model allows for explicit motion control by specifically prompting the temporal layers with motion cues and also via training LoRA-modules [21, 12] on datasets resembling specific motions only, which can be efficiently plugged into the model.

To summarize, our core contributions are threefold: (i) We present a systematic data curation workflow to turn a large uncurated video collection into a quality dataset for generative video modeling. Using this workflow, we (ii) train state-of-the-art text-to-video and image-to-video models, outperforming all prior models. Finally, we (iii) probe the strong prior of motion and 3D understanding in our models by conducting domain-specific experiments. Specifically, we provide evidence that pretrained video diffusion models can be turned into strong multi-view generators, which may help overcome the data scarcity typically observed in the 3D domain [19].

2. Background

Section Summary: Recent video generation research mainly uses diffusion models, which create consistent sequences of frames from text or image prompts by iteratively refining noisy samples into clear outputs. This work builds on latent video diffusion models that operate in a compressed space for efficiency, adding temporal layers to pretrained image models and fine-tuning the entire system with text conditioning, frame rate adjustments, and a modified noise process to improve motion and high-resolution results. It also addresses gaps in video data preparation by systematically curating large datasets and proposing a three-stage training strategy, unlike the ad-hoc methods in prior studies that often relied on suboptimal or mixed image-video sources.

Most recent works on video generation rely on diffusion models ([22, 1, 23]) to jointly synthesize multiple consistent frames from text- or image-conditioning. Diffusion models implement an iterative refinement process by learning to gradually denoise a sample from a normal distribution and have been successfully applied to high-resolution text-to-image [2, 24, 4, 17, 14] and video synthesis [11, 6, 7, 8, 25].

In this work, we follow this paradigm and train a latent ([4, 26]) video diffusion model ([8, 27]) on our video dataset. We provide a brief overview of related works which utilize latent video diffusion models (Video-LDMs) in the following paragraph; a full discussion that includes approaches using GANs ([28, 29]) and autoregressive models ([13]) can be found in Appendix B. An introduction to diffusion models can be found in Appendix D.

Latent Video Diffusion Models

Video-LDMs ([30, 8, 31, 12, 32]) train the main generative model in a latent space of reduced computational complexity ([33, 4]). Most related works make use of a pretrained text-to-image model and insert temporal mixing layers of various forms ([34, 25, 8, 31, 12]) into the pretrained architecture. [25] additionally relies on temporally correlated noise to increase temporal consistency and ease the learning task. In this work, we follow the architecture proposed in [8] and insert temporal convolution and attention layers after every spatial convolution and attention layer. In contrast to works that only train temporal layers ([12, 8]) or are completely training-free ([35, 36]), we finetune the full model. For text-to-video synthesis in particular, most works directly condition the model on a text prompt ([8, 32]) or make use of an additional text-to-image prior [27, 6].

In our work, we follow the former approach and show that the resulting model is a strong general motion prior, which can easily be finetuned into an image-to-video or multi-view synthesis model. Additionally, we introduce micro-conditioning ([17]) on frame rate. We also employ the EDM-framework ([37]) and significantly shift the noise schedule towards higher noise values, which we find to be essential for high-resolution finetuning. See Section 4 for a detailed discussion of the latter.

Data Curation

Pretraining on large-scale datasets [38] is an essential ingredient for powerful models in several tasks such as discriminative text-image ([15, 16]) and language ([39, 40, 41]) modeling. By leveraging efficient language-image representations such as CLIP ([42, 16, 15]), data curation has similarly been successfully applied for generative image modeling ([38, 14, 17]). However, discussions on such data curation strategies have largely been missing in the video generation literature ([11, 43, 13, 6]), and processing and filtering strategies have been introduced in an ad-hoc manner. Among the publicly accessible video datasets, WebVid-10M ([44]) dataset has been a popular choice ([6, 8, 45]) despite being watermarked and suboptimal in size. Additionally, WebVid-10M is often used in combination with image data [38], to enable joint image-video training. However, this amplifies the difficulty of separating the effects of image and video data on the final model. To address these shortcomings, this work presents a systematic study of methods for video data curation and further introduces a general three-stage training strategy for generative video models, producing a state-of-the-art model.

3. Curating Data for HQ Video Synthesis

Section Summary: This section outlines a strategy for training advanced video generation models using large video datasets, divided into three stages: starting with pretraining on images, followed by broad video pretraining, and ending with fine-tuning on select high-quality videos. It details data preparation by collecting long videos, detecting and splitting them at scene cuts to create shorter clips, and annotating them with AI-generated captions, resulting in a massive dataset of over 580 million clips spanning 212 years of content. To improve model performance, the data is further filtered to remove static scenes, excessive text, and low-quality elements using tools like optical flow analysis and aesthetic scoring, with experiments showing that curated data and image pretraining lead to better video synthesis results.

In this section, we introduce a general strategy to train a state-of-the-art video diffusion model on large datasets of videos. To this end, we (i) introduce data processing and curation methods, for which we systematically analyze the impact on the quality of the final model in Section 3.3 and Section 3.4, and (ii), identify three different training regimes for generative video modeling. In particular, these regimes consist of

- Stage I: image pretraining, i.e a 2D text-to-image diffusion model [4, 17, 14].

- Stage II: video pretraining, which trains on large amounts of videos.

- Stage III: video finetuning, which refines the model on a small subset of high-quality videos at higher resolution.

We study the importance of each regime separately in Section 3.2, Section 3.3, and Section 3.4.

3.1 Data Processing and Annotation

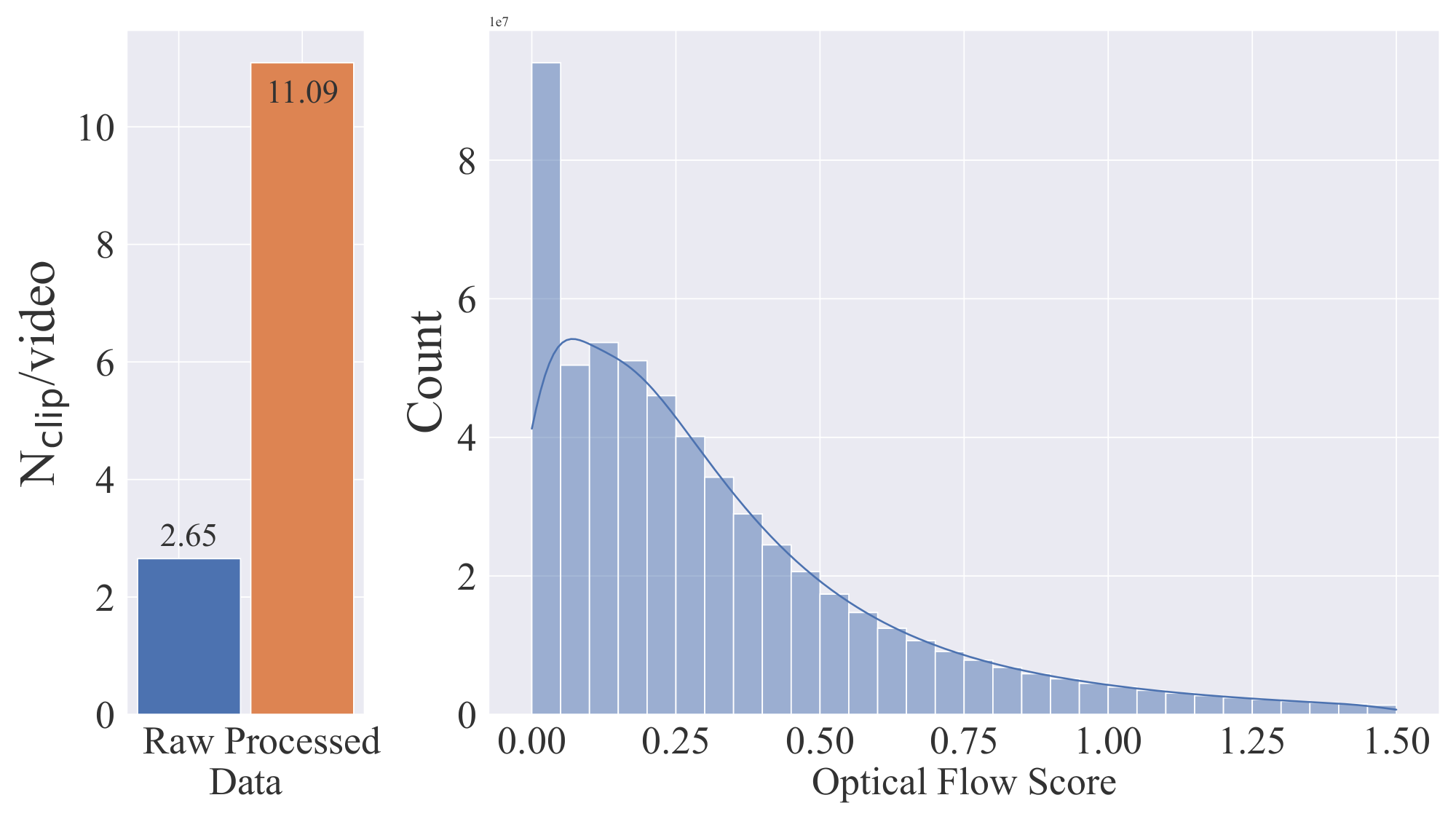

We collect an initial dataset of long videos which forms the base data for our video pretraining stage. To avoid cuts and fades leaking into synthesized videos, we apply a cut detection pipeline^1 in a cascaded manner at three different FPS levels. Figure 2, left, provides evidence for the need for cut detection: After applying our cut-detection pipeline, we obtain a significantly higher number ($\sim\hspace{-0.3em}4\times$) of clips, indicating that many video clips in the unprocessed dataset contain cuts beyond those obtained from metadata.

Next, we annotate each clip with three different synthetic captioning methods: First, we use the image captioner CoCa [46] to annotate the mid-frame of each clip and use V-BLIP ([47]) to obtain a video-based caption. Finally, we generate a third description of the clip via an LLM-based summarization of the first two captions.

The resulting initial dataset, which we dub Large Video Dataset (LVD), consists of 580M annotated video clip pairs, forming 212 years of content.

:Table 1: Comparison of our dataset before and after fitering with publicly available research datasets.

| LVD | LVD-F | LVD-10M | LVD-10M-F | WebVid | InternVid | |

|---|---|---|---|---|---|---|

| LVD | LVD-F | LVD-10M | LVD-10M-F | WebVid | InternVid | |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| #Clips | 577M | 152M | 9.8M | 2.3M | 10.7M | 234M |

| Clip Duration (s) | 11.58 | 10.53 | 12.11 | 10.99 | 18.0 | 11.7 |

| Total Duration (y) | 212.09 | 50.64 | 3.76 | 0.78 | 5.94 | 86.80 |

| Mean #Frames | 325 | 301 | 335 | 320 | - | - |

| Mean Clips/Video | 11.09 | 4.76 | 1.2 | 1.1 | 1.0 | 32.96 |

| Motion Annotations? | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ |

However, further investigation reveals that the resulting dataset contains examples that can be expected to degrade the performance of our final video model, such as clips with less motion, excessive text presence, or generally low aesthetic value. We therefore additionally annotate our dataset with dense optical flow ([48, 49]), which we calculate at 2 FPS and with which we filter out static scenes by removing any videos whose average optical flow magnitude is below a certain threshold. Indeed, when considering the motion distribution of LVD (see Figure 2, right) via optical flow scores, we identify a subset of close-to-static clips therein.

Moreover, we apply optical character recognition [50] to weed out clips containing large amounts of written text. Lastly, we annotate the first, middle, and last frames of each clip with CLIP ([16]) embeddings from which we calculate aesthetics scores ([38]) as well as text-image similarities. Statistics of our dataset, including the total size and average duration of clips, are provided in Table 1.

3.2 Stage I: Image Pretraining

We consider image pretraining as the first stage in our training pipeline. Thus, in line with concurrent work on video models ([6, 11, 8]), we ground our initial model on a pretrained image diffusion model - namely Stable Diffusion 2.1 ([4]) - to equip it with a strong visual representation.

To analyze the effects of image pretraining, we train and compare two identical video models as detailed in Appendix D on a 10M subset of LVD; one with and one without pretrained spatial weights. We compare these models using a human preference study (see Appendix E for details) in Figure 3a, which clearly shows that the image-pretrained model is preferred in both quality and prompt-following.

(a) Initializing spatial layers from pretrained images models greatly improves performance.

(b) Video data curation boosts performance after video pretraining.

Figure 3: Effects of image-only pretraining and data curation on video-pretraining on LVD-10M: A video model with spatial layers initialized from a pretrained image model clearly outperforms a similar one with randomly initialized spatial weights as shown in Figure 3a. Figure 3b emphasizes the importance of data curation for pretraining, since training on a curated subset of LVD-10M with the filtering threshold proposed in Section 3.3 improves upon training on the entire uncurated LVD-10M.

3.3 Stage II: Curating a Video Pretraining Dataset

A systematic approach to video data curation. For multimodal image modeling, data curation is a key element of many powerful discriminative ([16, 15]) and generative ([14, 51, 52]) models. However, since there are no equally powerful off-the-shelf representations available in the video domain to filter out unwanted examples, we rely on human preferences as a signal to create a suitable pretraining dataset. Specifically, we curate subsets of LVD using different methods described below and then consider the human-preference-based ranking of latent video diffusion models trained on these datasets.

(a) User preference for LVD-10M-F and WebVid [44].

(b) User preference for LVD-10M-F and InternVid [54].

(c) User preference at 50M samples scales.

(d) User preference on scaling datasets.

(e) Relative ELO progression over time during Stage III.

Figure 4: Summarized findings of Sections 3.3 and 3.4: Pretraining on curated datasets consistently boosts performance of generative video models during video pretraining at small (Figures 4a and 4b) and larger scales (Figures 4c and 4d). Remarkably, this performance improvement persists even after 50k steps of video finetuning on high quality data (Figure 4e).

More specifically, for each type of annotation introduced in Section 3.1 (i.e, CLIP scores, aesthetic scores, OCR detection rates, synthetic captions, optical flow scores), we start from an unfiltered, randomly sampled 9.8M-sized subset of LVD, LVD-10M, and systematically remove the bottom 12.5, 25 and 50% of examples. Note that for the synthetic captions, we cannot filter in this sense. Instead, we assess Elo rankings [53] for the different captioning methods from Section 3.1. To keep the number of total subsets tractable, we apply this scheme separately to each type of annotation. We train models with the same training hyperparameters on each of these filtered subsets and compare the results of all models within the same class of annotation with an Elo ranking [53] for human preference votes. Based on these votes, we consequently select the best-performing filtering threshold for each annotation type. The details of this study are presented and discussed in Appendix E. Applying this filtering approach to LVD results in a final pretraining dataset of 152M training examples, which we refer to as LVD-F, cf Table 1.

Curated training data improves performance. In this section, we demonstrate that the data curation approach described above improves the training of our video diffusion models. To show this, we apply the filtering strategy described above to LVD-10M and obtain a four times smaller subset, LVD-10M-F. Next, we use it to train a baseline model that follows our standard architecture and training schedule and evaluate the preference scores for visual quality and prompt-video alignment compared to a model trained on uncurated LVD-10M.

We visualize the results in Figure 3b, where we can see the benefits of filtering: In both categories, the model trained on the much smaller LVD-10M-F is preferred. To further show the efficacy of our curation approach, we compare the model trained on LVD-10M-F with similar video models trained on WebVid-10M ([44]), which is the most recognized research licensed dataset, and InternVid-10M ([54]), which is specifically filtered for high aesthetics. Although LVD-10M-F is also four times smaller than these datasets, the corresponding model is preferred by human evaluators in both spatiotemporal quality and prompt alignment as shown in Figure 4b and Figure 4b.

Data curation helps at scale. To verify that our data curation strategy from above also works on larger, more practically relevant datasets, we repeat the experiment above and train a video diffusion model on a filtered subset with 50M examples and a non-curated one of the same size. We conduct a human preference study and summarize the results of this study in Figure 4c, where we can see that the advantages of data curation also come into play with larger amounts of data. Finally, we show that dataset size is also a crucial factor when training on curated data in Figure 4d, where a model trained on 50M curated samples is superior to a model trained on LVD-10M-F for the same number of steps.

3.4 Stage III: High-Quality Finetuning

In the previous section, we demonstrated the beneficial effects of systematic data curation for video pretraining. However, since we are primarily interested in optimizing the performance after video finetuning, we now investigate how these differences after Stage II translate to the final performance after Stage III. Here, we draw on training techniques from latent image diffusion modeling ([17, 14]) and increase the resolution of the training examples. Moreover, we use a small finetuning dataset comprising 250K pre-captioned video clips of high visual fidelity.

To analyze the influence of video pretraining on this last stage, we finetune three identical models, which only differ in their initialization. We initialize the weights of the first with a pretrained image model and skip video pretraining, a common choice among many recent video modeling approaches ([8, 6]). The remaining two models are initialized with the weights of the latent video models from the previous section, specifically, the ones trained on 50M curated and uncurated video clips. We finetune all models for 50K steps and assess human preference rankings early during finetuning (10K steps) and at the end to measure how performance differences progress in the course of finetuning. We show the obtained results in Figure 4e, where we plot the Elo improvements of user preference relative to the model ranked last, which is the one initialized from an image model. Moreover, the finetuning resumed from curated pretrained weights ranks consistently higher than the one initialized from video weights after uncurated training.

Given these results, we conclude that i) the separation of video model training in video pretraining and video finetuning is beneficial for the final model performance after finetuning and that ii) video pretraining should ideally occur on a large scale, curated dataset, since performance differences after pretraining persist after finetuning.

4. Training Video Models at Scale

Section Summary: This section describes how researchers trained advanced video generation models on a massive scale, starting with a powerful base model adapted from Stable Diffusion and fine-tuned on large video datasets to handle high resolutions like 320x576 pixels. They then customized this base for specific tasks, such as creating videos from text descriptions, animating still images into videos, and interpolating between frames, while introducing techniques like adjustable guidance to reduce visual flaws and low-rank adaptations for controlling camera movements. The resulting models outperform competitors in quality and consistency, including zero-shot text-to-video tests and human preference evaluations, and even serve as a foundation for generating consistent multi-view 3D scenes.

In this section, we borrow takeaways from Section 3 and present results of training state-of-the-art video models at scale. We first use the optimal data strategy inferred from ablations to train a powerful base model at $320\times576$ in Appendix D.2. We then perform finetuning to yield several strong state-of-the-art models for different tasks such as text-to-video in Section 4.2, image-to-video in Section 4.3 and frame interpolation in Section 4.4. Finally, we demonstrate that our video-pretraining can serve as a strong implicit 3D prior, by tuning our image-to-video models on multi-view generation in Section 4.5 and outperform concurrent work, in particular Zero123XL [19, 18] and SyncDreamer [20] in terms of multi-view consistency.

4.1 Pretrained Base Model

\begin{tabular}{l c}

\toprule

\textbf{Method} & FVD ($\downarrow$) \\

\midrule

CogVideo (ZH) \citep{hong2022cogvideo} & 751.34 \\

CogVideo (EN) \citep{hong2022cogvideo} & 701.59 \\

Make-A-Video \citep{singer2022make} & 367.23 \\

Video LDM \citep{blattmann2023align} & 550.61 \\

MagicVideo \citep{zhou2022magicvideo} & 655.00 \\

PYOCO \citep{ge2023preserve} & 355.20 \\

\midrule

SVD (\emph{ours}) & \textbf{242.02} \\

\bottomrule

\end{tabular}

![**Figure 6:** Our 25 frame Image-to-Video model is preferred by human voters over GEN-2 [9] and PikaLabs [10].](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/bwv2eujm/sota_i2v_baselines.png)

As discussed in Section 3.2, our video model is based on Stable Diffusion 2.1 ([4]) (SD 2.1). Recent works ([55]) show that it is crucial to adopt the noise schedule when training image diffusion models, shifting towards more noise for higher-resolution images. As a first step, we finetune the fixed discrete noise schedule from our image model towards continuous noise ([23]) using the network preconditioning proposed in [37] for images of size $256\times384$. After inserting temporal layers, we then train the model on LVD-F on 14 frames at resolution $256\times384$. We use the standard EDM noise schedule ([37]) for 150k iterations and batch size 1536. Next, we finetune the model to generate 14 $320\times576$ frames for 100k iterations using batch size 768. We find that it is important to shift the noise schedule towards more noise for this training stage, confirming results by [55] for image models. For further training details, see Appendix D. We refer to this model as our base model which can be easily finetuned for a variety of tasks as we show in the following sections. The base model has learned a powerful motion representation, for example, it significantly outperforms all baselines for zero-shot text-to-video generation on UCF-101 ([56]) (Table 2). Evaluation details can be found in Appendix E.

4.2 High-Resolution Text-to-Video Model

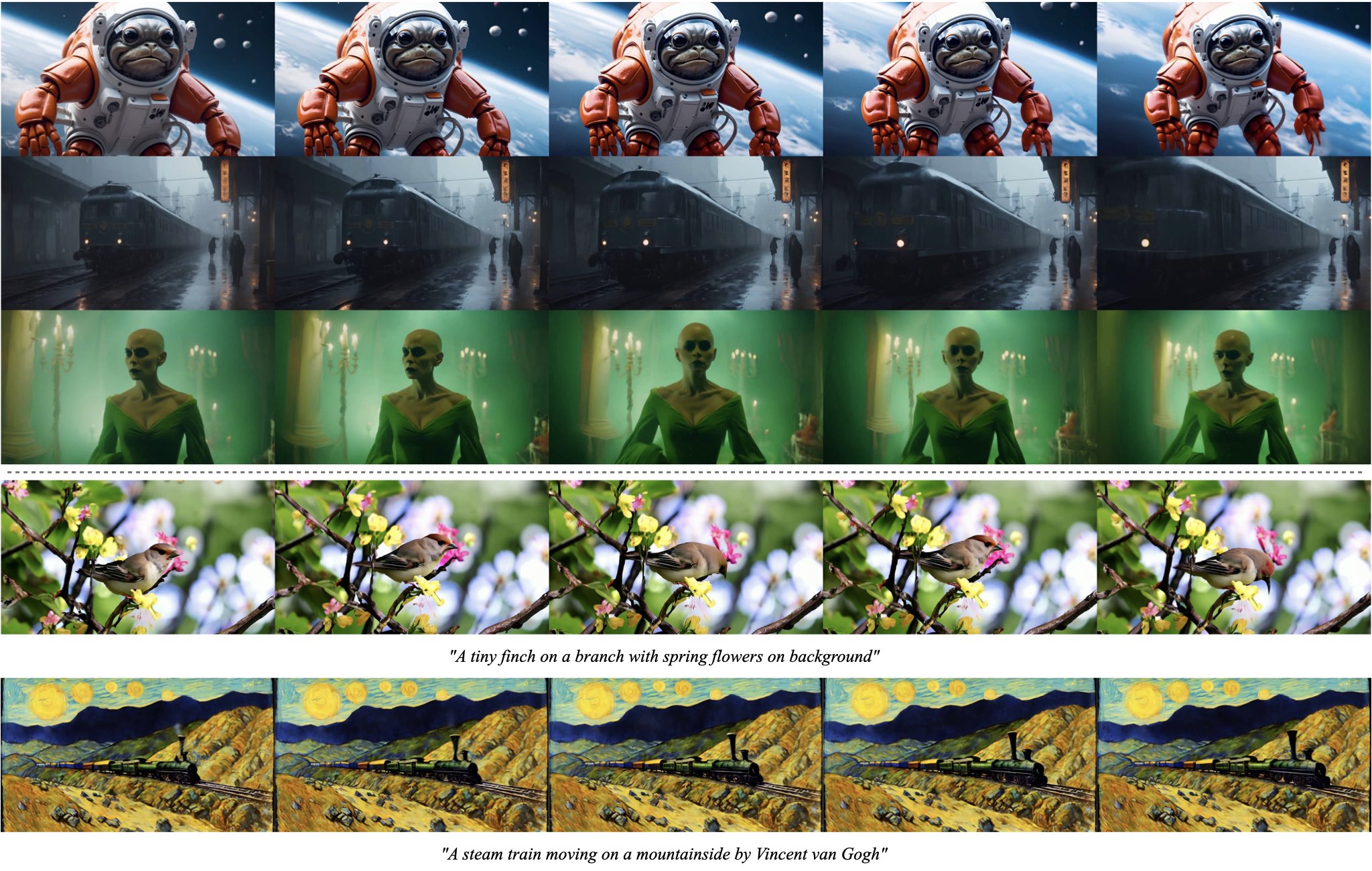

We finetune the base text-to-video model on a high-quality video dataset of $\sim$ 1M samples. Samples in the dataset generally contain lots of object motion, steady camera motion, and well-aligned captions, and are of high visual quality altogether. We finetune our base model for 50k iterations at resolution $576\times1024$ (again shifting the noise schedule towards more noise) using batch size 768. Samples in Figure 5, more can be found in Appendix E.

4.3 High Resolution Image-to-Video Model

Besides text-to-video, we finetune our base model for image-to-video generation, where the video model receives a still input image as a conditioning. Accordingly, we replace text embeddings that are fed into the base model with the CLIP image embedding of the conditioning. Additionally, we concatenate a noise-augmented ([57]) version of the conditioning frame channel-wise to the input of the UNet [58]. We do not use any masking techniques and simply copy the frame across the time axis. We finetune two models, one predicting 14 frames and another one predicting 25 frames; implementation and training details can be found in Appendix D. We occasionally found that standard vanilla classifier-free guidance ([59]) can lead to artifacts: too little guidance may result in inconsistency with the conditioning frame while too much guidance can result in oversaturation. Instead of using a constant guidance scale, we found it helpful to linearly increase the guidance scale across the frame axis (from small to high). Details can be found in Appendix D. Samples in Figure 5, more can be found in Appendix E.

In Figure 6 we compare our model with state-of-the-art, closed-source video generative models, in particular GEN-2 ([27, 9]) and PikaLabs ([10]), and show that our model is preferred in terms of visual quality by human voters. Details on the experiment, as well as many more image-to-video samples, can be found in Appendix E.

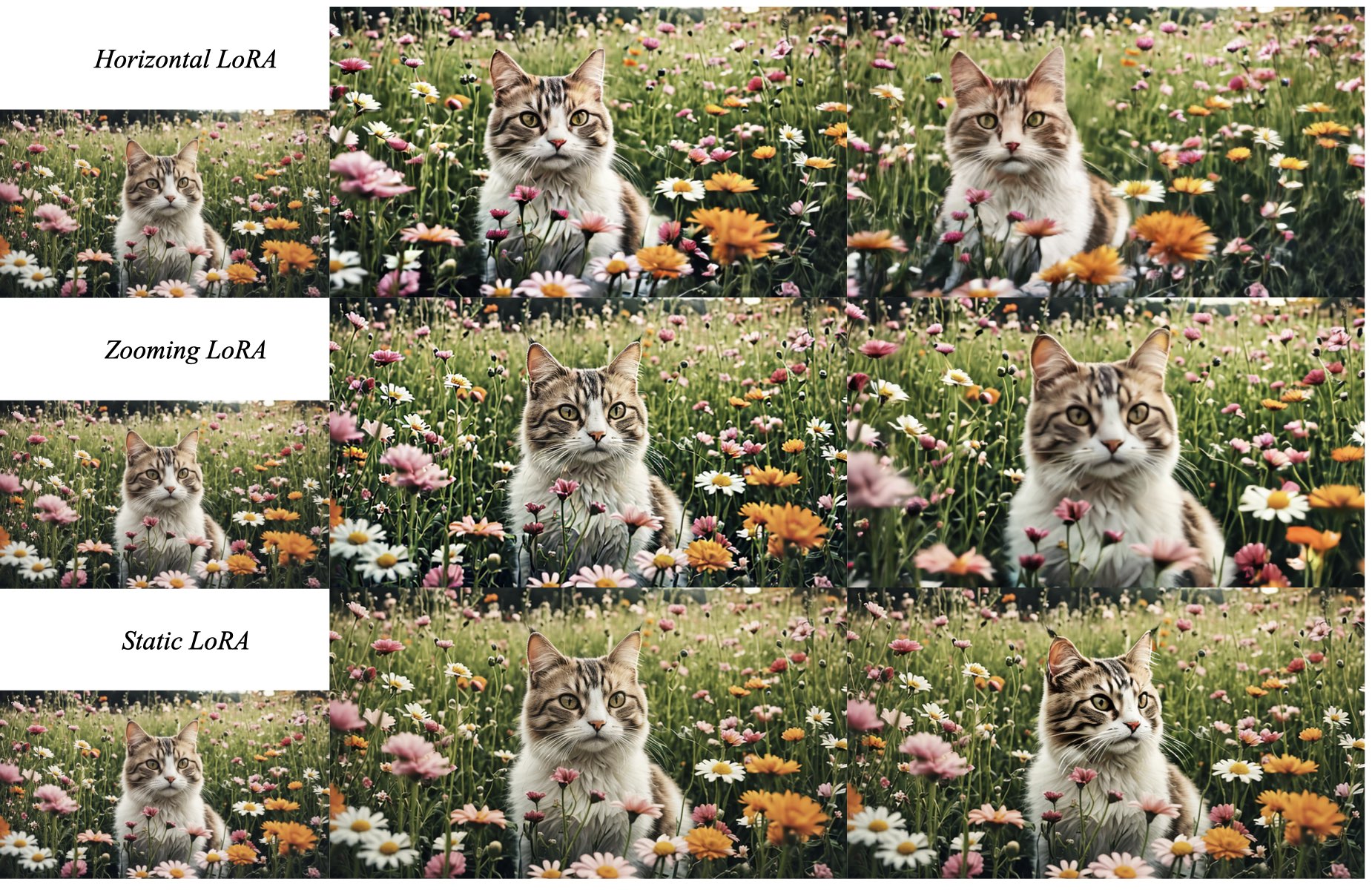

4.3.1 Camera Motion LoRA

To facilitate controlled camera motion in image-to-video generation, we train a variety of camera motion LoRAs within the temporal attention blocks of our model ([12]); see Appendix D for exact implementation details. We train these additional parameters on a small dataset with rich camera-motion metadata. In particular, we use three subsets of the data for which the camera motion is categorized as "horizontally moving", "zooming", and "static". In Figure 7 we show samples of the three models for identical conditioning frames; more samples can be found in Appendix E.

4.4 Frame Interpolation

To obtain smooth videos at high frame rates, we finetune our high-resolution text-to-video model into a frame interpolation model. We follow [8] and concatenate the left and right frames to the input of the UNet via masking. The model learns to predict three frames within the two conditioning frames, effectively increasing the frame rate by four. Surprisingly, we found that a very small number of iterations ($\approx 10k$) suffices to get a good model. Details and samples can be found in Appendix D and Appendix E, respectively.

4.5 Multi-View Generation

![**Figure 8:** Generated multi-view frames of a GSO test object using our SVD-MV model (i.e. SVD finetuned for Multi-View generation), SD2.1-MV [60], Scratch-MV, SyncDreamer [20], and Zero123XL [19].](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/bwv2eujm/video_to_3D_turtle6.jpg)

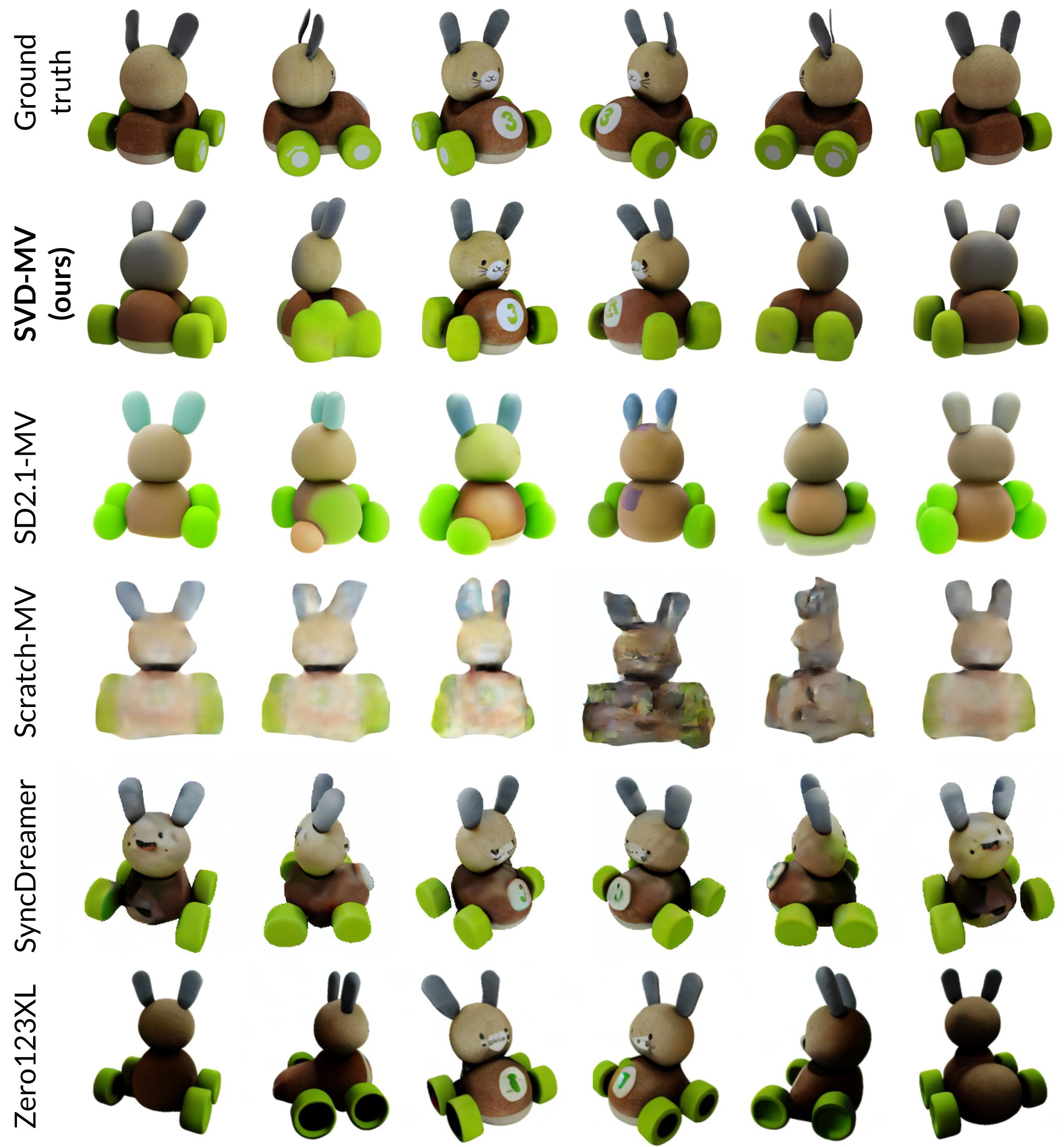

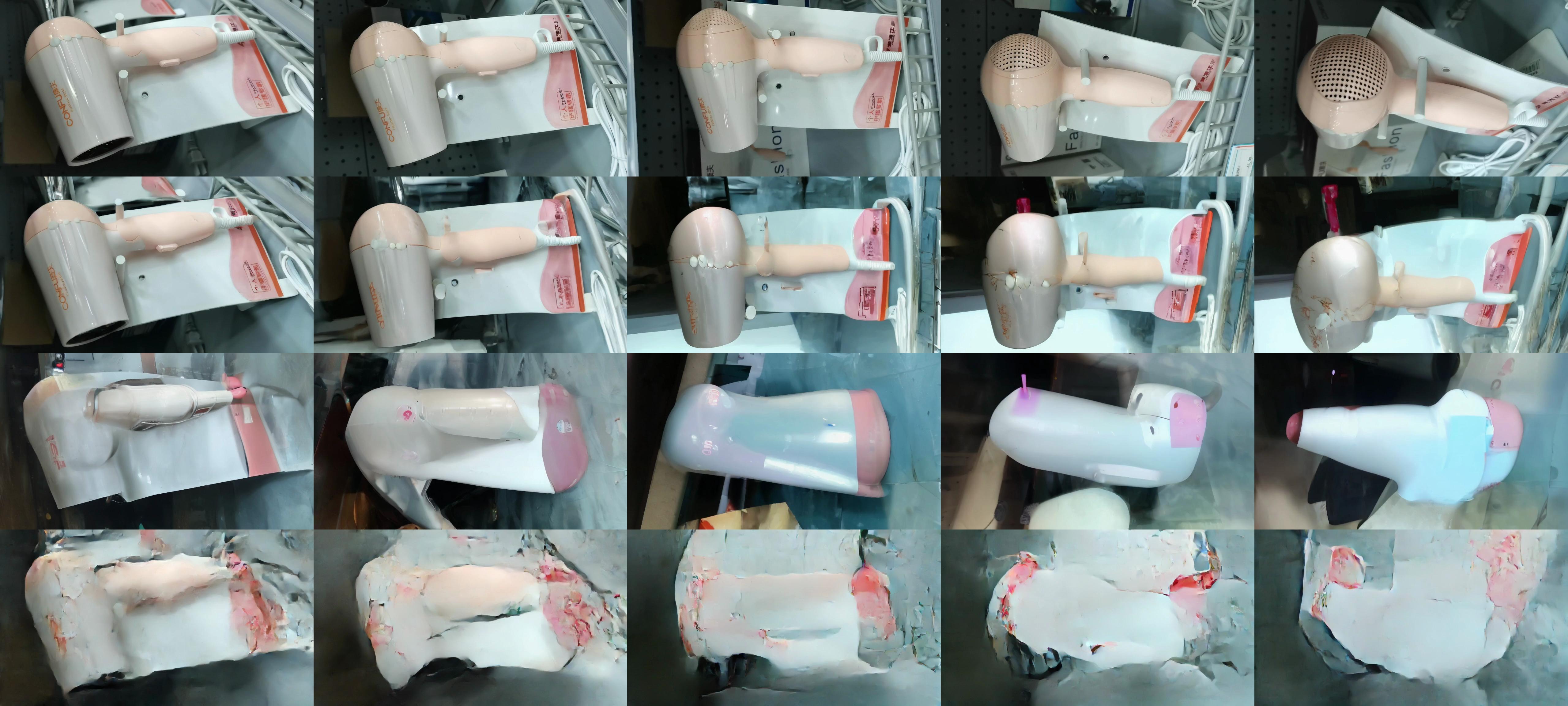

To obtain multiple novel views of an object simultaneously, we finetune our image-to-video SVD model on multi-view datasets [61, 19, 62].

Datasets. We finetuned our SVD model on two datasets, where the SVD model takes a single image and outputs a sequence of multi-view images: (i) A subset of Objaverse [61] consisting of 150K curated and CC-licensed synthetic 3D objects from the original dataset [61]. For each object, we rendered $360^\circ$ orbital videos of 21 frames with randomly sampled HDRI environment map and elevation angles between $[-5^\circ, 30^\circ]$. We evaluate the resulting models on an unseen test dataset consisting of 50 sampled objects from Google Scanned Objects (GSO) dataset [63]. and (ii) MVImgNet [62] consisting of casually captured multi-view videos of general household objects. We split the videos into $\sim$ 200K train and 900 test videos. We rotate the frames captured in portrait mode to landscape orientation.

The Objaverse-trained model is additionally conditioned on the elevation angle of the input image, and outputs orbital videos at that elevation angle. The MVImgNet-trained models are not conditioned on pose and can choose an arbitrary camera path in their generations. For details on the pose conditioning mechanism, see Appendix E.

Models. We refer to our finetuned Multi-View model as SVD-MV. We perform an ablation study on the importance of the video prior of SVD for multi-view generation. To this effect, we compare the results from SVD-MV i.e. from a video prior to those finetuned from an image prior i.e. the text-to-image model SD2.1 (SD2.1-MV), and that trained without a prior i.e. from random initialization (Scratch-MV). In addition, we compare with the current state-of-the-art multiview generation models of Zero123 [18], Zero123XL [19], and SyncDreamer [20].

Metrics. We use the standard metrics of Peak Signal-to-Noise Ratio (PSNR), LPIPS [64], and CLIP [16] Similarity scores (CLIP-S) between the corresponding pairs of ground truth and generated frames on 50 GSO test objects.

Training. We train all our models for 12k steps ($\sim$ 16 hours) with 8 80GB A100 GPUs using a total batch size of 16, with a learning rate of 1e-5.

Results. Figure 9(a) shows the average metrics on the GSO test dataset. The higher performance of SVD-MV compared to SD2.1-MV and Scratch-MV clearly demonstrates the advantage of the learned video prior in the SVD model for multi-view generation. In addition, as in the case of other models finetuned from SVD, we found that a very small number of iterations ($\approx 12k$) suffices to get a good model. Moreover, SVD-MV is competitive w.r.t state-of-the-art techniques with lesser training time (12 $k$ iterations in 16 hours), whereas existing models are typically trained for much longer (for example, SyncDreamer was trained for four days specifically on Objaverse). Figure 9(b) shows convergence of different finetuned models. After only 1k iterations, SVD-MV has much better CLIP-S and PSNR scores than its image-prior and no-prior counterparts.

Figure 8 shows a qualitative comparison of multi-view generation results on a GSO test object and Figure 10 on an MVImgNet test object. As can be seen, our generated frames are multi-view consistent and realistic. More details on the experiments, as well as more multi-view generation samples, can be found in Appendix E.

| Method | LPIPS $\downarrow$ | PSNR $\uparrow$ | CLIP-S $\uparrow$ |

|---|---|---|---|

| SyncDreamer [20] | 0.18 | 15.29 | 0.88 |

| Zero123 [18] | 0.18 | 14.87 | 0.87 |

| Zero123XL [19] | 0.20 | 14.51 | 0.87 |

| Scratch-MV | 0.22 | 14.20 | 0.76 |

| SD2.1-MV [60] | 0.18 | 15.06 | 0.83 |

| SVD-MV (ours) | 0.14 | 16.83 | 0.89 |

![**Figure 10:** Generated novel multi-view frames for MVImgNet dataset using our SVD-MV model, SD2.1-MV [60], Scratch-MV.](https://ittowtnkqtyixxjxrhou.supabase.co/storage/v1/object/public/public-images/bwv2eujm/MVI4.jpg)

5. Conclusion

Section Summary: Stable Video Diffusion is a new AI model that creates high-quality videos from text descriptions or starting images, using a carefully curated dataset from vast, messy video collections and a three-stage training process to boost performance. It serves as a strong foundation for fine-tuning tasks like controlling camera angles in videos or generating multiple views, achieving top results in 3D video synthesis while using far less computing power than before. The researchers hope these advances will inspire further progress in AI video generation, with more on impacts and limits in the appendix.

We present Stable Video Diffusion (SVD), a latent video diffusion model for high-resolution, state-of-the-art text-to-video and image-to-video synthesis. To construct its pretraining dataset, we conduct a systematic data selection and scaling study, and propose a method to curate vast amounts of video data and turn large and noisy video collection into suitable datasets for generative video models. Furthermore, we introduce three distinct stages of video model training which we separately analyze to assess their impact on the final model performance. Stable Video Diffusion provides a powerful video representation from which we finetune video models for state-of-the-art image-to-video synthesis and other highly relevant applications such as LoRAs for camera control. Finally we provide a pioneering study on multi-view finetuning of video diffusion models and show that SVD constitutes a strong 3D prior, which obtains state-of-the-art results in multi-view synthesis while using only a fraction of the compute of previous methods.

We hope these findings will be broadly useful in the generative video modeling literature. A discussion on our work's broader impact and limitations can be found in Appendix A.

Acknowledgements

Section Summary: The authors express special gratitude to Emad Mostaque for his outstanding support throughout the project. They also thank their colleagues Jonas Müller, Axel Sauer, Dustin Podell, and Rahim Entezari for their helpful discussions and feedback. Finally, appreciation is extended to Harry Saini and Richard Vencu for keeping the data and computing resources running smoothly and efficiently.

Special thanks to Emad Mostaque for his excellent support on this project. Many thanks go to our colleagues Jonas Müller, Axel Sauer, Dustin Podell and Rahim Entezari for fruitful discussions and comments. Finally, we thank Harry Saini and the one and only Richard Vencu for maintaining and optimizing our data and computing infrastructure.

Appendix

Section Summary: The appendix discusses the broader implications of generative video models, emphasizing their potential to transform media creation while stressing the need to mitigate risks like misinformation, harm, and biases through thorough safety evaluations before deployment. It highlights limitations of the approach, such as challenges in generating long videos efficiently, insufficient motion in outputs, and high computational demands, suggesting future improvements like cascaded generation or distillation techniques. Additionally, it reviews related research on video synthesis methods, from early low-resolution models to recent diffusion-based and autoregressive approaches using large datasets, and explores multi-view generation, arguing that video models offer advantages over image-based ones for consistent outputs, before detailing the data processing pipeline starting from raw video collections.

A. Broader Impact and Limitations

Broader Impact: Generative models for different modalities promise to revolutionize the landscape of media creation and use. While exploring their creative applications, reducing the potential to use them for creating misinformation and harm are crucial aspects before real-world deployment. Furthermore, risk analyses need to highlight and evaluate the differences between the various existing model types, such as interpolation, text-to-video, animation, and long-form generation. Before these models are used in practice, a thorough investigation of the models themselves, their intended uses, safety aspects, associated risks, and potential biases is essential.

Limitations: While our approach excels at short video generation, it comes with some fundamental shortcomings w.r.t. long video synthesis: Although a latent approach provides efficiency benefits, generating multiple keyframes at once is expensive both during training but also inference, and future work on long video synthesis should either try a cascade of very coarse frame generation or build dedicated tokenizers for video generation. Furthermore, videos generated with our approach sometimes suffer from too little generated motion. Lastly, video diffusion models are typically slow to sample and have high VRAM requirements, and our model is no exception. Diffusion distillation methods [65, 66, 11] are promising candidates for faster synthesis.

B. Related Work

Video Synthesis. Many approaches based on various models such as variational RNNs [67, 68, 69, 70, 71], normalizing flows ([72, 73]), autoregressive transformers [74, 75, 13, 76, 77, 78, 79], and GANs ([80, 81, 82, 83, 84, 85, 29, 86, 87, 88, 89, 90]) have tackled video synthesis. Most of these works, however, have generated videos either on low-resolution ([67, 68, 69, 70, 71, 72, 73, 80, 81, 82, 83, 84]) or on comparably small and noisy datasets [91, 56, 92] which were originally proposed to train discriminative models.

Driven by increasing amounts of available compute resources and datasets better suited for generative modeling such as WebVid-10M ([44]), more competitive approaches have been proposed recently, mainly based on well-scalable, explicit likelihood-based approaches such as diffusion [5, 6, 11] and autoregressive models ([43]). Motivated by a lack of available clean video data, all these approaches are leveraging joint image-video training ([6, 11, 45, 8]) and most methods are grounding their models on pretrained image models ([6, 45, 8]). Another commonality between these and most subsequent approaches to (text-to-)video synthesis [25, 32, 93] is the usage of dedicated expert models to generate the actual visual content at a coarse frame rate and to temporally upscale this low-fps video to temporally smooth final outputs at 24-32 fps ([6, 11, 8]). Similar to the image domain, diffusion-based approaches can be mainly separated into cascaded approaches ([11]) following ([57, 25]) and latent diffusion models ([8, 45, 94]) translating the approach of [4] to the video domain. While most of these works aim at learning general motion representation and are consequently trained on large and diverse datasets, another well-recognized branch of diffusion-based video synthesis tackles personalized video generation based on finetuning of pretrained text-to-image models on more narrow datasets tailored to a specific domain ([12]) or application, partly including non-deep motion priors ([94]). Finally, many recent works tackle the task of image-to-video synthesis, where the start frame is already given, and the model has to generate the consecutive frames [94, 32, 12]. Importantly, as shown in our work (see Figure 1) when combined with off-the-shelf text-to-image models, image-to-video models can be used to obtain a full text-(to-image)-to-video pipeline.

Multi-View Generation Motivated by their success in 2D image generation, diffusion models have also been used for multi-view generation. Early promising diffusion-based results ([95, 96, 97, 98, 99, 100]) have mainly been restricted by lacking availability of useful real-world multi-view training data. To address this, more recent works such as Zero-123 [18], MVDream [101], and SyncDreamer [20] propose techniques to adapt and finetune pretrained image generation models such as Stable Diffusion (SD) for multi-view generation, thereby leveraging image priors from SD. One issue with Zero-123 [18] is that the generated multi-views can be inconsistent with respect to each other as they are generated independently with pose-conditioning. Some follow-up works try to address this view-consistency problem by jointly synthesizing the multi-view images. MVDream [101] proposes to jointly generate four views of an object using a shared attention module across images. SyncDreamer [20] proposes to estimate a 3D voxel structure in parallel to the multi-view image diffusion process to maintain consistency across the generated views.

Despite rapid progress in multi-view generation research, these approaches rely on single image generation models such as SD. We believe that our video generative model is a better candidate for the multi-view generation as multi-view images form a specific form of video where the camera is moving around an object. As a result, it is much easier to adapt a video-generative model for multi-view generation compared to adapting an image-generative model. In addition, the temporal attention layers in our video model naturally assist in the generation of consistent multi-views of an object without needing any explicit 3D structures like in [20].

C. Data Processing

In this section, we provide more details about our processing pipeline including their outputs on a few public video examples for demonstration purposes.

Motivation

We start from a large collection of raw video data which is not useful for generative text-video (pre)training ([102, 54]) because of the following adverse properties: First, in contrast to discriminative approaches to video modeling, generative video models are sensitive to motion inconsistencies such as cuts of which usually many are contained in raw and unprocessed video data, cf Figure 2, left. Moreover, our initial data collection is biased towards still videos as indicated by the peak at zero motion in Figure 2, right. Since generative models trained on this data would obviously learn to generate videos containing cuts and still scenes, this emphasizes the need for cut detection and motion annotations to ensure temporal quality. Another critical ingredient for training generative text-video models are captions - ideally more than one per video ([103]) - which are well-aligned with the video content. The last essential component for generative video training which we are considering here is the high visual quality of the training examples.

The design of our processing pipeline addresses the above points. Thus, to ensure temporal quality, we detect cuts with a cascaded approach directly after download, clip the videos accordingly, and estimate optical flow for each resulting video clip. After that, we apply three synthetic captioners to every clip and further extract frame-level CLIP similarities to all of these text prompts to be able to filter out outliers. Finally, visual quality at the frame level is assessed by using a CLIP-embeddings-based aesthetics score ([38]). We describe each step in more detail in what follows.

Cascaded Cut Detection.

Similar to previous work ([54]), we use PySceneDetect ^2 to detect cuts in our base video clips. However, as qualitatively shown in Figure 11 we observe many fade-ins and fade-outs between consecutive scenes, which are not detected when running the cut detector at a unique threshold and only native fps. Thus, in contrast to previous work, we apply a cascade of 3 cut detectors which are operating at different frame rates and different thresholds to detect both sudden changes and slow ones such as fades.

Keyframe-Aware Clipping.

We clip the videos using FFMPEG ([104]) directly after cut detection by extracting the timestamps of the keyframes in the source videos and snapping detected cuts onto the closest keyframe timestamp, which does not cross the detected cut. This allows us to quickly extract clips without cuts via seeking and isn't prohibitively slow at scale like inserting new keyframes in each video.

Optical Flow.

As motivated in Section 3.1 and Figure 2 it is crucial to provide means for filtering out static scenes. To enable this, we extract dense optical flow maps at 2fps using the OpenCV ([49]) implementation of the Farnebäck algorithm ([48]). To further keep storage size tractable we spatially downscale the flow maps such that the shortest side is at 16px resolution. By averaging these maps over time and spatial coordinates, we further obtain a global motion score for each clip, which we use to filter out static scenes by using a threshold for the minimum required motion, which is chosen as detailed on Appendix E.2.2. Since this only yields rough approximate, for the final Stage III finetuning, we compute more accurate dense optical flow maps using RAFT [105] at $800 \times 450$ resolution. The motion scores are then computed similarly. Since the high-quality finetuning data is relatively much smaller than the pretraining dataset, this makes the RAFT-based flow computation tractable.

Synthetic Captioning.

At a million-sample scale, it is not feasible to hand-annotate data points with prompts. Hence we resort to synthetic captioning to extract captions. However in light of recent insights on the importance of caption diversity ([103]) and taking potential failure cases of these synthetic captioning models into consideration, we extract three captions per clip by using i) the image-only captioning model CoCa ([106]), which describes spatial aspects well, ii) - to also capture temporal aspects - the video-captioner VideoBLIP ([47]) and iii) to combine these two captions and like that, overcome potential flaws in each of them, a lightweight LLM. Examples of the resulting captions are shown in Figure 13.

Caption similarities and Aesthetics.

Extracting CLIP ([16]) image and text representations have proven to be very helpful for data curation in the image domain since computing the cosine similarity between the two allows for assessment of text-image alignment for a given example ([38]) and thus to filter out examples with erroneous captions. Moreover, it is possible to extract scores for visual aesthetics ([38]). Although CLIP is only able to process images, and this consequently is only possible on a single frame level we opt to extract both CLIP-based i) text-image similarities and ii) aesthetics scores of the first, center, and last frames of each video clip. As shown in Section 3.3 and Appendix E.2.2, using training text-video models on data curated by using these scores improves i) text following abilities and ii) visual quality of the generated samples compared to models trained on unfiltered data.

Text Detection.

In early experiments, we noticed that models trained on earlier versions of LVD-F obtained a tendency to generate videos with excessive amounts of written text depicted which is arguably not a desired feat for a text-to-video model. To this end, we applied the off-the-shelf text-detector CRAFT ([50]) to annotate the start, middle, and end frames of each clip in our dataset with bounding box information on all written text. Using this information, we filtered out all clips with a total area of detected bounding boxes larger than 7% to construct the final LVD-F.

D. Model and Implementation Details

D.1 Diffusion Models

In this section, we give a concise summary of DMs. We make use of the continuous-time DM framework ([23, 37]). Let $p_{\rm{data}}({\mathbf{x}}0)$ denote the data distribution and let $p({\mathbf{x}}; \sigma)$ be the distribution obtained by adding i.i.d. $\sigma^2$-variance Gaussian noise to the data. Note that or sufficiently large $\sigma{\mathrm{max}}$, $p({\mathbf{x}}; \sigma_{\mathrm{max}^2}) \approx {\mathcal{N}}\left(\bm{0}, \sigma_{\mathrm{max}^2}\right)$. DM uses this fact and, starting from high variance Gaussian noise ${\mathbf{x}}M \sim {\mathcal{N}}\left(\bm{0}, \sigma{\mathrm{max}^2}\right)$, sequentially denoise towards $\sigma_0=0$. In practice, this iterative refinement process can be implemented through the numerical simulation of the Probability Flow ordinary differential equation (ODE) ([23])

$ \begin{align} d {\mathbf{x}} = -\dot \sigma(t) \sigma(t) \nabla_{\mathbf{x}} \log p({\mathbf{x}}; \sigma(t)) , dt, \end{align}\tag{1} $

where $\nabla_{\mathbf{x}} \log p({\mathbf{x}}; \sigma)$ is the score function ([107]). DM training reduces to learning a model ${\bm{s}}{\bm{\theta}}({\mathbf{x}}; \sigma)$ for the score function $\nabla{\mathbf{x}} \log p({\mathbf{x}}; \sigma)$. The model can, for example, be parameterized as $\nabla_{\mathbf{x}} \log p({\mathbf{x}}; \sigma) \approx s_{\bm{\theta}}({\mathbf{x}}; \sigma) = (D_{\bm{\theta}}({\mathbf{x}}; \sigma) - {\mathbf{x}})/ \sigma^2$ ([37]), where $D_{\bm{\theta}}$ is a learnable denoiser that tries to predict the clean ${\mathbf{x}}0$. The denoiser $D{\bm{\theta}}$ is trained via denoising score matching (DSM)

$ \begin{align} \mathbb{E}{\substack{({\mathbf{x}}0, {\mathbf{c}}) \sim p{\rm{data}}({\mathbf{x}}0, {\mathbf{c}}), (\sigma, {\mathbf{n}}) \sim p(\sigma, {\mathbf{n}})}} \left[\lambda\sigma |D{\bm{\theta}}({\mathbf{x}}_0 + {\mathbf{n}}; \sigma, {\mathbf{c}}) - {\mathbf{x}}_0 |_2^2 \right], \end{align}\tag{2} $

where $p(\sigma, {\mathbf{n}}) = p(\sigma), {\mathcal{N}}\left({\mathbf{n}}; \bm{0}, \sigma^2\right)$, $p(\sigma)$ can be a probability distribution or density over noise levels $\sigma$. It is both possible to use a discrete set or a continuous range of noise levels. In this work, we use both options, which we further specify in Appendix D.2.

$\lambda_\sigma \colon \mathbb{R}+ \to \mathbb{R}+$ is a weighting function, and ${\mathbf{c}}$ is an arbitrary conditioning signal. In this work, we follow the EDM-preconditioning framework ([37]), parameterizing the learnable denoiser $D_{\bm{\theta}}$ as

$ \begin{align} D_{\bm{\theta}}({\mathbf{x}}; \sigma) = c_\mathrm{skip}(\sigma) {\mathbf{x}} + c_\mathrm{out}(\sigma) F_{\bm{\theta}}(c_\mathrm{in}(\sigma) {\mathbf{x}}; c_\mathrm{noise}(\sigma)), \end{align}\tag{3} $

where $F_{\bm{\theta}}$ is the network to be trained.

Classifier-free guidance. Classifier-free guidance ([108]) is a method used to guide the iterative refinement process of a DM towards a conditioning signal ${\mathbf{c}}$. The main idea is to mix the predictions of a conditional and an unconditional model

$ \begin{align} D^w({\mathbf{x}}; \sigma, {\mathbf{c}}) = w D({\mathbf{x}}; \sigma, {\mathbf{c}}) - (w - 1) D({\mathbf{x}}; \sigma), \end{align}\tag{4} $

where $w \geq 0$ is the guidance strength. The unconditional model can be trained jointly alongside the conditional model in a single network by randomly replacing the conditional signal ${\mathbf{c}}$ with a null embedding in Equation 2, e.g., 10% of the time ([108]). In this work, we use classifier-free guidance, for example, to guide video generation toward text conditioning.

D.2 Base Model Training and Architecture

As discussed in , we start the publicly available Stable Diffusion 2.1 ([4]) (SD 2.1) model. In the EDM-framework ([37]), SD 2.1 has the following preconditioning functions:

$ \begin{align} c_\mathrm{skip}^\mathrm{SD 2.1}(\sigma) &= 1, \ c_\mathrm{out}^\mathrm{SD 2.1}(\sigma) &= -\sigma, , \ c_\mathrm{in}^\mathrm{SD 2.1}(\sigma) &= \frac{1}{\sqrt{\sigma^2 + 1}}, , \ c_\mathrm{noise}^\mathrm{SD 2.1}(\sigma) &= \operatorname{arg, min}_{j \in {1 \dots 1000}} (\sigma - \sigma_j), , \ \end{align} $

where $\sigma_{j+1} > \sigma_j$. The distribution over noise levels $p(\sigma)$ used for the original SD 2.1. training is a uniform distribution over the 1000 discrete noise levels ${\sigma_j}{j \in {1 \dots 1000}}$. One issue with the training of SD 2.1 (and in particular its noise distribution $p(\sigma)$) is that even for the maximum discrete noise level $\sigma{1000}$ the signal-to-noise ratio ([109]) is still relatively high which results in issues when, for example, generating very dark images ([110, 111]). [111] proposed offset noise, a modification of the training objective in Equation 2 by making $p({\mathbf{n}} \mid \sigma)$ non-isotropic Gaussian. In this work, we instead opt to modify the preconditioning functions and distribution over training noise levels altogether.

Image model finetuning. We replace the above preconditioning functions with

$ \begin{align} c_\mathrm{skip}(\sigma) &= \left(\sigma^2 + 1 \right)^{-1}, , \ c_\mathrm{out}(\sigma) &= \frac{-\sigma}{\sqrt{\sigma^2 + 1}}, , \ c_\mathrm{in}(\sigma) &= \frac{1}{\sqrt{\sigma^2 + 1}}, , \ c_\mathrm{noise}(\sigma) &= 0.25 \log \sigma, \ \end{align} $

which can be recovered in the EDM framework ([37]) by setting $\sigma_\mathrm{data} = 1$); the preconditioning functions were originally proposed in ([66]). We also use the noise distribution and weighting function proposed in [37], namely $\log \sigma \sim {\mathcal{N}}(P_\mathrm{mean}, P_\mathrm{std}^2)$ and $\lambda(\sigma) = (1 + \sigma^2) \sigma^{-2}$, with $P_\mathrm{mean}=-1.2$ and $P_\mathrm{std}=1$. We then finetune the neural network backbone $F_{\bm{\theta}}$ of SD2.1 for 31k iterations using this setup. For the first 1k iterations, we freeze all parameters of $F_{\bm{\theta}}$ except for the time-embedding layer and train on SD2.1's original training resolution of $512 \times 512$. This allows the model to adapt to the new preconditioning functions without unnecessarily modifying the internal representations of $F_{\bm{\theta}}$ too much. Afterward, we train all layers of $F_{\bm{\theta}}$ for another 30k iterations on images of size $256 \times 384$, which is the resolution used in the initial stage of video pretraining.

Video pretraining. We use the resulting model as the image backbone of our video model. We then insert temporal convolution and attention layers. In particular, we follow the exact setup from ([8]), inserting a total of 656M new parameters into the UNet bumping its total size (spatial and temporal layers) to 1521M parameters. We then train the resulting UNet on 14 frames on resolution $256\times384$ for 150k iters using AdamW ([112]) with learning rate $10^{-4}$ and a batch size of 1536. We train the model for classifier-free guidance ([59]) and drop out the text-conditioning 15% of the time. Afterward, we increase the spatial resolution to $320 \times 576$ and train for an additional 100k iterations, using the same settings as for the lower-resolution training except for a reduced batch size of 768 and a shift of the noise distribution towards more noise, in particular, we increase $P_\mathrm{mean} = 0$. During training, the base model and the high-resolution Text/Image-to-Video models are all conditioned on the input video's frame rate and motion score. This allows us to vary the amount of motion in a generated video at inference time.

D.3 High-Resolution Text-to-Video Model

We finetune our base model on a high-quality dataset of $\sim$ 1M samples at resolution $576 \times 1024$. We train for $50k$ iterations at a batch size of 768, learning rate $3 \times 10^{-5}$, and set $P_\mathrm{mean} = 0.5$ and $P_\mathrm{std} = 1.4$. Additionally, we track an exponential moving average of the weights at a decay rate of 0.9999. The final checkpoint is chosen using a combination of visual inspection and human evaluation.

D.4 High-Resolution Image-to-Video Model

We can finetune our base text-to-video model for the image-to-video task. In particular, during training, we use one additional frame on which the model is conditioned. We do not use text-conditioning but rather replace text embeddings fed into the base model with the CLIP image embedding of the conditioning frame. Additionally, we concatenate a noise-augmented ([57]) version of the conditioning frame channel-wise to the input of the UNet [58]. In particular, we add a small amount of noise of strength $\log \sigma \sim {\mathcal{N}}(-3.0, 0.5^2)$ to the conditioning frame and then feed it through the standard SD 2.1 encoder. The mean of the encoder distribution is then concatenated to the input of the UNet (copied across the time axis). Initially, we finetune our base model for the image-to-video task on the base resolution ($320 \times 576$) for 50k iterations using a batch size of 768 and learning rate $3 \times 10^{-5}$. Since the conditioning signal is very strong, we again shift the noise distribution towards more noise, i.e., $P_\mathrm{mean} = 0.7$ and $P_\mathrm{std} = 1.6$. Afterwards, we fintune the base image-to-video model on a high-quality dataset of $\sim$ 1M samples at $576 \times 1024$ resolution. We train two versions: one to generate 14 frames and one to generate 25 frames. We train both models for $50k$ iterations at a batch size of 768, learning rate $3 \times 10^{-5}$, and set $P_\mathrm{mean} = 1.0$ and $P_\mathrm{std} = 1.6$. Additionally, we track an exponential moving average of the weights at a decay rate of 0.9999. The final checkpoints are chosen using a combination of visual inspection and human evaluation.

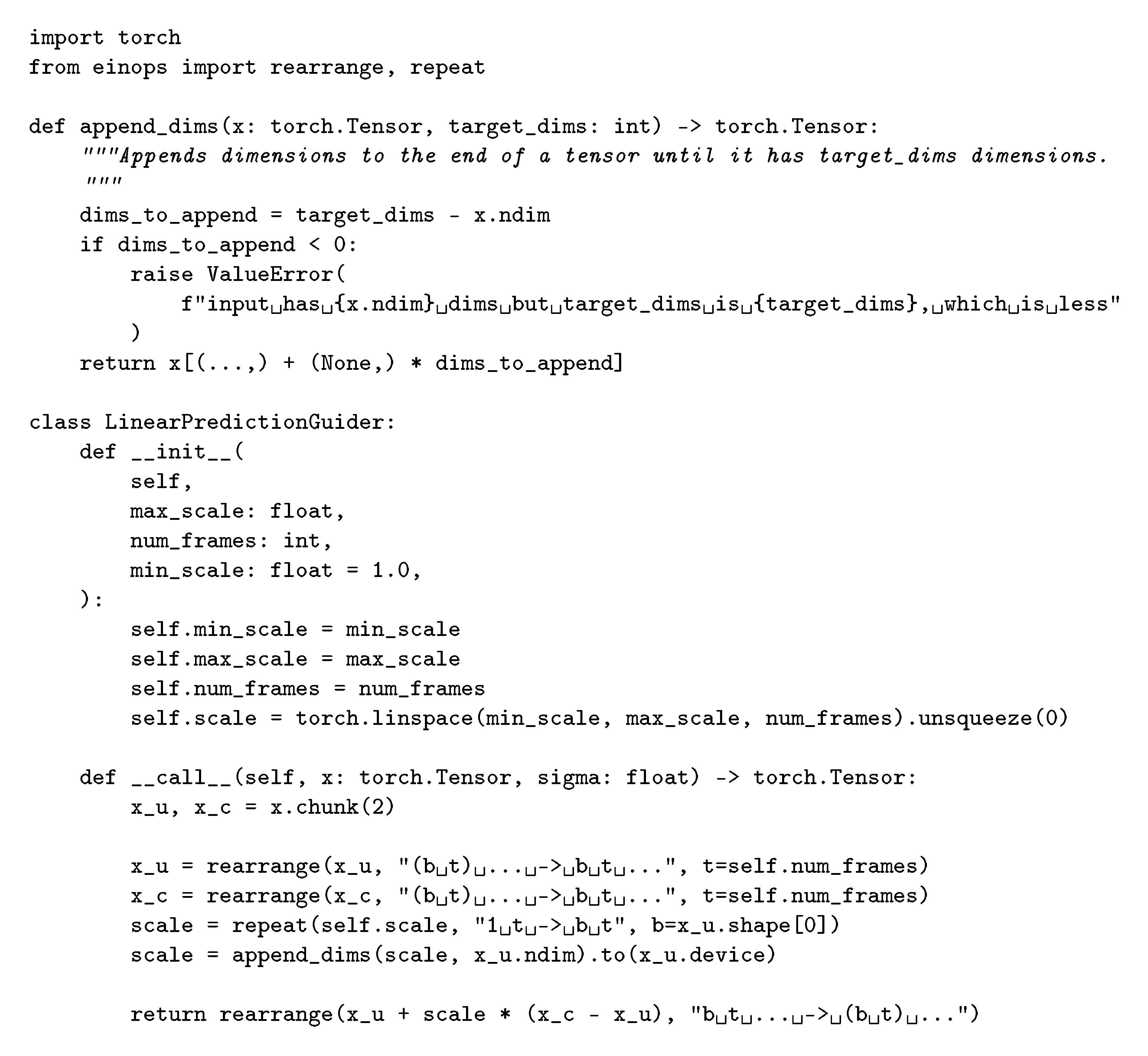

D.4.1 Linearly Increasing Guidance

We occasionally found that standard vanilla classifier-free guidance ([59]) (see Equation 4) can lead to artifacts: too little guidance may result in inconsistency with the conditioning frame while too much guidance can result in oversaturation. Instead of using a constant guidance scale, we found it helpful to linearly increase the guidance scale across the frame axis (from small to high). A PyTorch implementation of this novel technique can be found in Figure 15.

D.4.2 Camera Motion LoRA

To facilitate controlled camera motion in image-to-video generation, we train a variety of camera motion LoRAs within the temporal attention blocks of our model ([12]). In particular, we train low-rank matrices of rank 16 for 5k iterations. Additional samples can be found in Figure 20.

D.5 Interpolation Model Details

Similar to the text-to-video and image-to-video models, we finetune our interpolation model starting from the base text-to-video model, cf Appendix D.2. To enable interpolation, we reduce the number of output frames from 14 to 5, of which we use the first and last as conditioning frames, which we feed to the UNet ([58]) backbone of our model via the concat-conditioning-mechanism ([4]). To this end, we embed these frames into the latent space of our autoencoder, resulting in two image encodings $z_s, , z_e \in \mathbb{R}^{c \times h \times w}$, where $c=4, , h=52, , w=128$. To form a latent frame sequence that is of the same shape as the noise input of the UNet, i.e $\mathbb{R}^{5 \times c \times h \times w}$, we use a learned mask embedding $z_m \in\mathbb{R}^{c \times h \times w}$ and form a latent sequence $ \boldsymbol{z} = {z_s, z_m, z_m, z_m, z_e}\in \mathbb{R}^{5 \times c \times h \times w}$. We concatenate this sequence channel-wise with the noise input and additionally with a binary mask where 1 indicates the presence of a conditioning frame and 0 that of a mask embedding. The final input for the UNet is thus of shape $\left(5, 9, 52, 128 \right)$. In line with previous work ([11, 6, 8]), we use noise augmentation for the two conditioning frames, which we apply in the latent space. Moreover, we replace the CLIP text representation for the crossattention conditioning with the corresponding CLIP image representation of the start frame and end frame, which we concatenate to form a conditioning sequence of length 2.

We train the model on our high-quality dataset at spatial resolution $576 \times 1024$ using AdamW ([112]) with a learning rate of $10^{-4}$ in combination with exponential moving averaging at decay rate 0.9999 and use a shifted noise schedule with $P_\mathrm{mean}=1$ and $P_\mathrm{std}=1.2$. Surprisingly, we find this model, which we train with a comparably small batch size of 256, to converge extremely fast and to yield consistent and smooth outputs after only 10k iterations. We take this as another evidence of the usefulness of the learned motion representation our base text-to-video model has learned.

D.6 Multi-view generation

We finetuned the high-resolution image-to-video model on our specific rendering of the Objaverse dataset. We render 21 frames per orbit of an object in the dataset at $576 \times 576$ resolution and finetune the 25-frame Image-to-Video model to generate these 21 frames. We feed one view of the object as the image condition. In addition, we feed the elevation of the camera as conditioning to the model. We first pass the elevation through a timestep embedding layer that embeds the sine and cosine of the elevation angle at various frequencies and concatenates them into a vector. This vector is finally concatenated to the overall vector condition of the UNet.

We trained for 12 $k$ iterations with a total batch size of 16 across 8 A100 GPUs of 80GB VRAM at a learning rate of $1 \times 10^{-5}$.

E. Experiment Details

E.1 Details on Human Preference Assessment

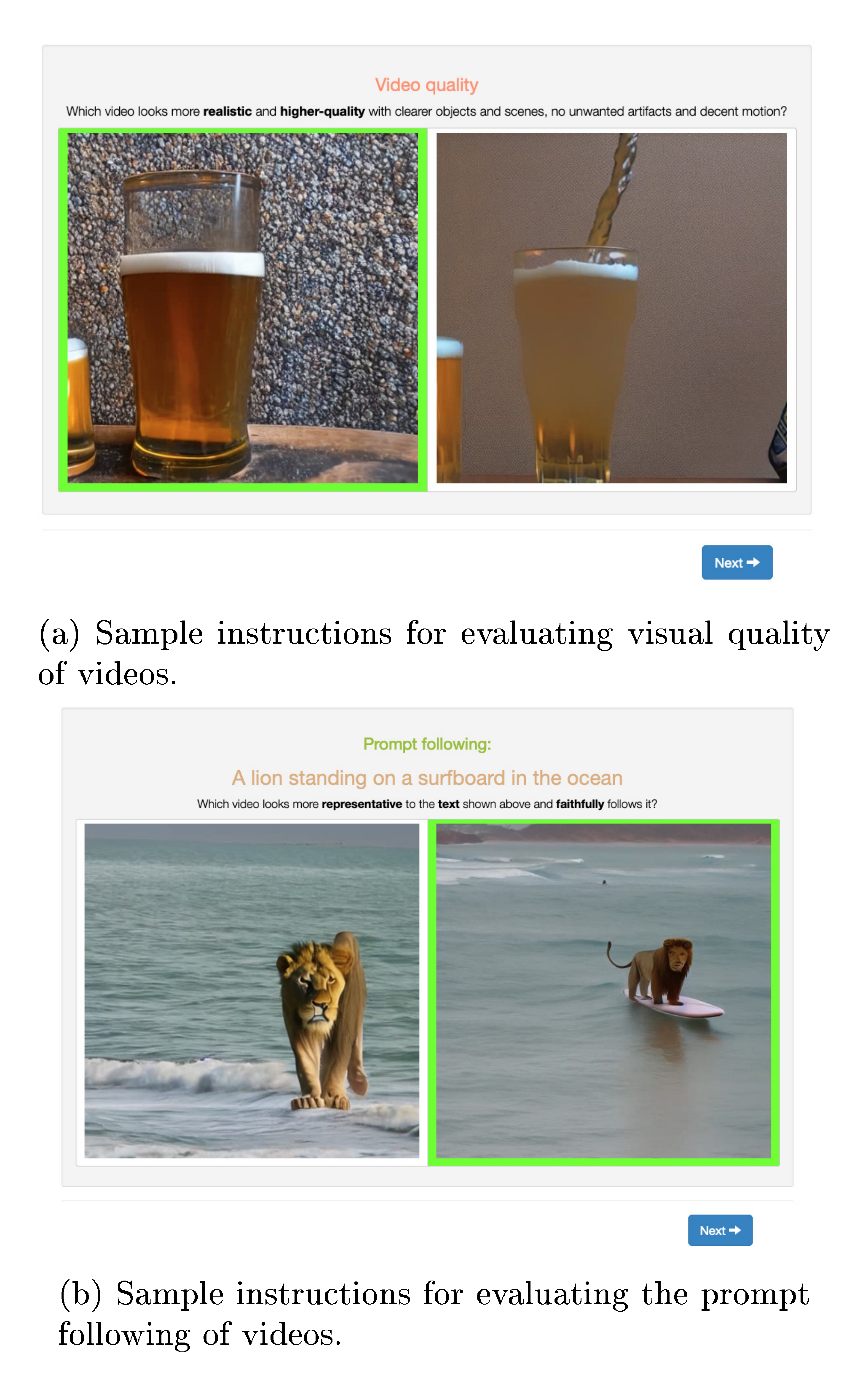

For most of the evaluation conducted in this paper, we employ human evaluation as we observed it to contain the most reliable signal. For text-to-video tasks and all ablations conducted for the base model, we generate video samples from a list of 64 test prompts. We then employ human annotators to collect preference data on two axes: i) visual quality and ii) prompt following. More details on how the study was conducted Appendix E.1.1 and the rankings computed Appendix E.1.2 are listed below.

E.1.1 Experimental Setup

Given all models in one ablation axis (e.g four models of varying aesthetic or motion scores), we compare each prompt for each pair of models (1v1). For every such comparison, we collect on average three votes per task from different annotators, i.e., three each for visual quality and prompt following, respectively. Performing a complete assessment between all pair-wise comparisons gives us robust and reliable signals on model performance trends and the effect of varying thresholds. Sample interfaces that the annotators interact with are shown in Figure 16. The order of prompts and the order between models are fully randomized. Frequent attention checks are in place to ensure data quality.

E.1.2 Elo Score Calculation

To calculate rankings when comparing more than two models based on 1v1 comparisons as outlined in Appendix E.1.1, we use Elo Scores (higher-is-better) ([53]), which were originally proposed as a scoring method for chess players but have more recently also been applied to compare instruction-tuned generative LLMs [113, 114]. For a set of competing players with initial ratings $R_{\text{init}}$ participating in a series of zero-sum games, the Elo rating system updates the ratings of the two players involved in a particular game based on the expected and actual outcome of that game. Before the game with two players with ratings $R_1$ and $R_2$, the expected outcome for the two players is calculated as

$ \begin{align} E_1 = \frac{1}{1 + 10^{\frac{R_2 - R_1}{400}}} , , \ E_2 = \frac{1}{1 + 10^{\frac{R_1 - R_2}{400}}} , . \end{align}\tag{5} $

After observing the result of the game, the ratings $R_i$ are updated via the rule

$ \begin{align} R^{'}_{i} = R_i + K \cdot \left(S_i - E_i \right), \quad i \in {1, 2} \end{align}\tag{6} $

where $S_i$ indicates the outcome of the match for player $i$. In our case, we have $S_i=1$ if player $i$ wins and $S_i = 0$ if player $i$ loses. The constant $K$ can be seen as weight emphasizing more recent games. We choose $K=1$ and bootstrap the final Elo ranking for a given series of comparisons based on 1000 individual Elo ranking calculations in a randomly shuffled order. Before comparing the models, we choose the start rating for every model as $R_{\text{init}} = 1000$.

E.2 Details on Experiments from Section 3

E.2.1 Architectural Details

Architecturally, all models trained for the presented analysis in Section 3 are identical. To insert create a temporal UNet ([58]) based on an existing spatial model, we follow [8] and add temporal convolution and (cross-)attention layers after each corresponding spatial layer. As a base 2D-UNet, we use the architecture from Stable Diffusion 2.1, whose weights we further use to initialize the spatial layers for all runs except the second one presented in Figure 3a, where we intentionally skip this initialization to create a baseline for demonstrating the effect of image-pretraining. Unlike [8], we train all layers, including the spatial ones, and do not freeze the spatial layers after initialization. All models are trained with the AdamW ([112]) optimizer with a learning rate of $1.e-4$ and a batch size of $256$. Moreover, in contrast to our models from Section 4, we do not translate the noise process to continuous time but use the standard linear schedule used in Stable Diffusion 2.1, including offset noise ([111]), in combination with the v-parameterization ([108]). We omit the text-conditioning in 10% of the cases to enable classifier-free guidance ([108]) during inference. To generate samples for the evaluations, we use 50 steps of the deterministic DDIM sampler ([115]) with a classifier guidance scale of 12 for all models.

E.2.2 Calibrating Filtering Thresholds

Here, we present the outcomes of our study on filtering thresholds presented in Section 3.3. As stated there, we conduct experiments for the optimal filtering threshold for each type of annotation while not filtering for any other types. The only difference here is our assessment of the most suitable captioning method, where we simply compare all used captioning methods. We train each model on videos consisting of 8 frames at resolution $256 \times 256$ for exactly 40k steps with a batch size of 256, roughly corresponding to 10M training examples seen during training. For evaluation, we create samples based on 64 pre-selected prompts for each model and conduct a human preference study as detailed in Appendix E.1. Figure 17 shows the ranking results of these human preference studies for each annotation axis for spatiotemporal sample quality and prompt following. Additionally, we show an averaged 'aggregated' score.

For captioning, we see that - surprisingly - the captions generated by the simple clip-based image captioning method CoCa of [46] clearly have the most beneficial influence on the model. However, since recent research recommends using more than one caption per training example, we sample one of the three distinct captions during training. We nonetheless reflect the outcome of this experiment by shifting the captioning sampling distribution towards CoCa captions by using $p_{\text{CoCa}} = 0.5; , p_{\text{V-BLIP}} = 0.25; , p_{\text{LLM}} = 0.25; , $.

For motion filtering, we choose to filter out 25% of the most static examples. However, the aggregated preference score of the model trained with this filtering method does not rank as high in human preference as the non-filtered score. The rationale behind this is that non-filtered ranks best primarily because it ranks best in the category prompt following' which is less important than the 'quality' category when assessing the effect of motion filtering. Thus, we choose the 25% threshold, as mentioned above, since it achieves both competitive performances in prompt following' and 'quality'.

For aesthetics filtering, where, as for motion thresholding, the 'quality' category is more important than the `prompt following'-category, we choose to filter out the 25 % with the lowest aesthetics score, while for CLIP-score thresholding we omit even 50% since the model trained with the corresponding threshold is performing best. Finally, we filter out the 25% of samples with the largest text area covering the videos since it ranks highest both in the 'quality' category and on average.

Using these filtering methods, we reduce the size of LVD by more than a factor of 3, cf Table 1, but obtain a much cleaner dataset as shown in Section 3. For the remaining experiments in Section 3.3, we use the identical architecture and hyperparameters as stated above. We only vary the dataset as detailed in Section 3.3.

E.2.3 Finetuning Experiments

For the finetuning experiments shown in Section 3.4, we again follow the architecture, training hyperparameters, and sampling procedure stated at the beginning of this section. The only notable differences are the exchange of the dataset and the increase in resolution from the pretraining resolution $256 \times 256$ to $512 \times 512$ while still generating videos consisting of 8 frames. We train all models presented in this section for 50k steps.

E.3 Human Eval vs SOTA

For comparison of our image-to-video model with state-of-the-art models like Gen-2 [9] and Pika [10], we randomly choose 64 conditioning images generated from a $1024 \times 576$ finetune of SDXL [17]. We employ the same framework as in Appendix E.1.1 to evaluate and compare the visual quality generated samples with other models.

For Gen-2, we sample the image-to-video model from the web UI. We fixed the same seed of 23, used the default motion value of 5 (on a scale of 10), and turned on the "Interpolate" and "Remove watermark" features. This results in 4-second samples at $1408 \times 768$. We then resize the shorter side to yield $1056 \times 576$ and perform a center-crop to match our resolution of $1024 \times 576$. For our model, we sample our 25-frame image-to-video finetune to give 28 frames and also interpolate using our interpolation model to yield samples of 3.89 seconds at 28 FPS. We crop the Gen-2 samples to 3.89 seconds to avoid biasing the annotators.

For Pika, we sample the image-to-video model from the Discord bot. We fixed the same seed of 23, used the motion value of 2 (on a scale of 0-4), and specified a 16:9 aspect ratio. This results in 3-second samples at $1024 \times 576$, which matches our resolution. For our model, we sample our 25-frame image-to-video finetune to give 28 frames and also interpolate using our interpolation model to yield samples of 3.89 seconds at 28 FPS. We crop our samples to 3 seconds to match Pika and avoid biasing the annotators. Since Pika samples have a small "Pika Labs" watermark in the bottom right, we pad that region with black pixels for both Pika and our samples to also avoid bias.

E.4 UCF101 FVD

This section describes the zero-shot UCF101 FVD computation of our base text-to-video model. The UCF101 dataset ([56]) consists of 13, 320 video clips, which are classified into 101 action categories. All videos are of frame rate 25 FPS and resolution $240\times320$. To compute FVD, we generate 13, 320 videos (16 frames at 25 FPS, classifier-free guidance with scale $w=7$) using the same distribution of action categories, that is, for example, 140 videos of "TableTennisShot", 105 videos of "PlayingPiano", etc. We condition the model directly on the action category ("TableTennisShot", "PlayingPiano", etc.) and do not use any text modification. Our samples are generated at our model's native resolution $320 \times 576$ (16 frames), and we downsample to $240 \times 432$ using bilinear interpolation with antialiasing, followed by a center crop to $240 \times 320$. We extract features using a pretrained I3D action classification model ([91]), in particular we are using a torchscript[^3] provided by [29].

[^3]: https://www.dropbox.com/s/ge9e5ujwgetktms/i3d_torchscript.pt with keyword arguments rescale=True, resize=True, return_features=True.

E.5 Additional Samples